Essence

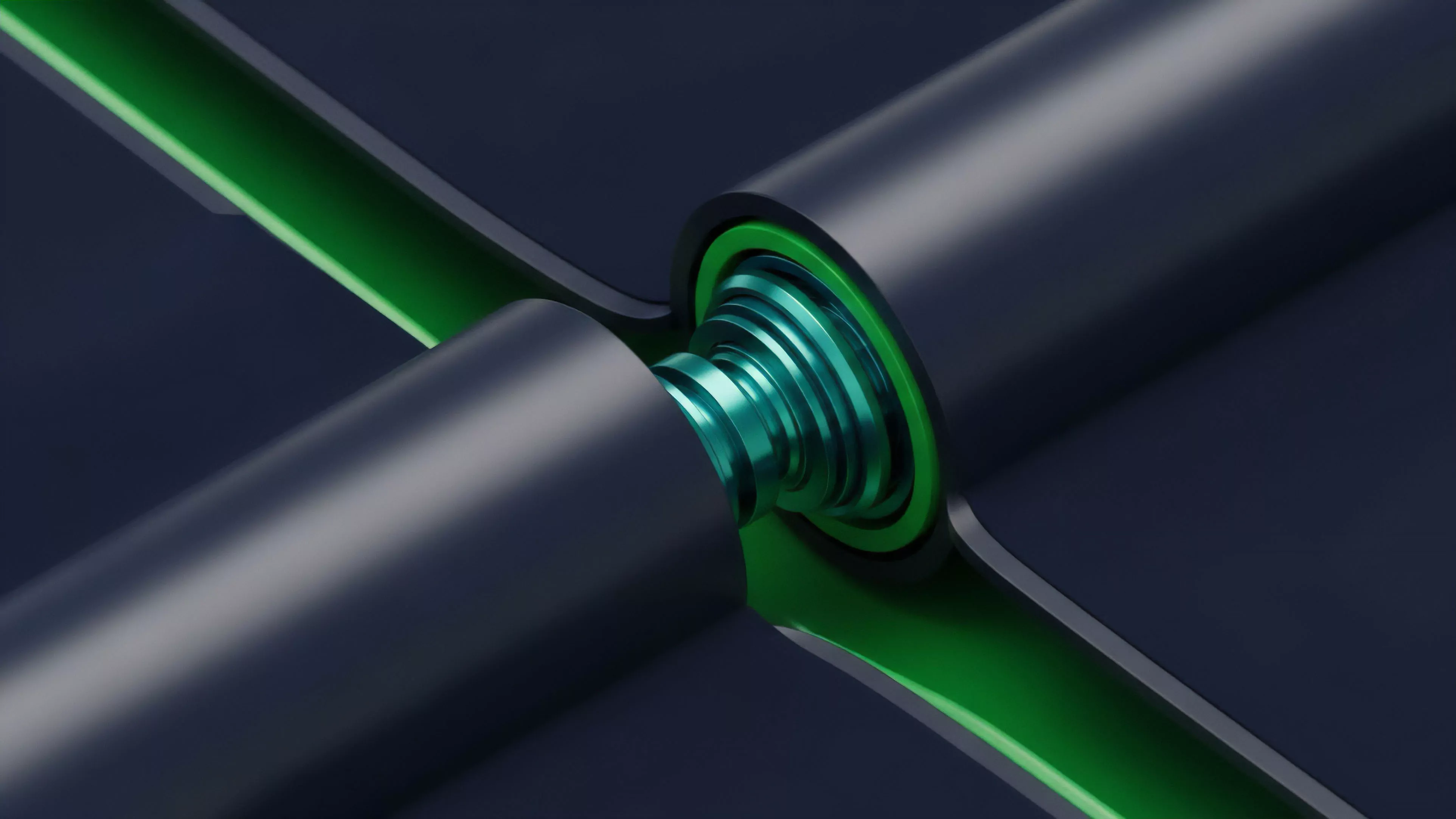

Real-Time Calculations represent the computational backbone of decentralized derivative markets, facilitating the instantaneous translation of raw market data into actionable financial metrics. These operations serve as the primary mechanism for determining margin requirements, mark-to-market valuations, and risk sensitivity parameters without the latency inherent in traditional clearinghouse architectures. The core utility of these systems lies in their ability to maintain systemic equilibrium.

By continuously processing order flow, volatility surfaces, and collateral price feeds, they ensure that the protocol remains solvent under adversarial market conditions. The integrity of the entire decentralized financial structure depends upon the precision and speed of these engines.

Real-Time Calculations function as the instantaneous arbiter of solvency and risk in decentralized derivative protocols.

These systems must resolve complex mathematical models, often involving non-linear pricing functions, within the constraints of blockchain block times or sub-second off-chain sequencer environments. Failure to execute these calculations quickly enough leads to stale pricing, inefficient capital allocation, and, in the worst case, cascading liquidations.

Origin

The genesis of Real-Time Calculations stems from the limitations of traditional finance, where settlement cycles and batch processing introduce significant temporal risk. Early decentralized protocols adopted simple automated market maker models, but the transition toward sophisticated options and perpetual futures necessitated a shift toward continuous, state-dependent computation.

Development emerged from the intersection of distributed systems engineering and quantitative finance. Architects sought to replicate the efficiency of centralized high-frequency trading engines while adhering to the transparency and permissionless nature of blockchain technology. This drive resulted in the creation of specialized margin engines and oracle-linked computation modules.

- Protocol Architecture: Initial designs prioritized state simplicity to minimize gas consumption, leading to rudimentary, periodic re-calculations of portfolio risk.

- Quantitative Requirements: The introduction of options and other non-linear crypto derivatives forced a departure from basic arithmetic toward the implementation of pricing models such as Black-Scholes directly within smart contracts.

- Adversarial Adaptation: Market participants quickly exploited latency gaps, necessitating the evolution of these systems toward sub-block execution to protect protocol integrity.

The shift from periodic updates to continuous, Real-Time Calculations mirrors the evolution of digital asset markets themselves, moving away from slow, manual reconciliation toward fully automated, high-velocity financial environments.

Theory

The theoretical framework for Real-Time Calculations rests upon the synchronization of volatile input data with static pricing models. This requires a robust pipeline capable of handling high-throughput telemetry from multiple sources while ensuring that the resulting outputs remain consistent across all participants.

Mathematical Modeling

Pricing engines must account for the unique characteristics of crypto assets, specifically high realized volatility and discontinuous price movements. The model must compute the following components continuously:

| Parameter | Functional Role |
| --- | --- |
| Mark Price | Determines liquidation thresholds and unrealized PnL |
| Implied Volatility | Updates option premiums and risk sensitivities |
| Maintenance Margin | Triggers automated position closure when account equity falls below the required minimum |
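
The relationship between mark price, unrealized PnL, and maintenance margin can be sketched in a few lines. This is a minimal illustration under stated assumptions, not any protocol's actual margin engine: the `Position` type, the function names, and the 5% maintenance rate are all hypothetical, and real engines typically use fixed-point arithmetic rather than floats.

```python
from dataclasses import dataclass


@dataclass
class Position:
    size: float         # signed quantity: positive = long, negative = short
    entry_price: float  # average entry price
    collateral: float   # margin posted, in quote currency


def unrealized_pnl(pos: Position, mark_price: float) -> float:
    """Mark-to-market profit or loss at the current mark price."""
    return pos.size * (mark_price - pos.entry_price)


def maintenance_margin(pos: Position, mark_price: float, mm_rate: float) -> float:
    """Minimum equity required to keep the position open (assumed linear in notional)."""
    return abs(pos.size) * mark_price * mm_rate


def is_liquidatable(pos: Position, mark_price: float, mm_rate: float = 0.05) -> bool:
    """True when equity (collateral + unrealized PnL) is below maintenance margin."""
    equity = pos.collateral + unrealized_pnl(pos, mark_price)
    return equity < maintenance_margin(pos, mark_price, mm_rate)


# Re-evaluate the same position as the mark price moves against it.
pos = Position(size=2.0, entry_price=100.0, collateral=30.0)
for mark in (100.0, 90.0, 89.0):
    print(mark, unrealized_pnl(pos, mark), is_liquidatable(pos, mark))
```

Because every quantity here is a function of the mark price, each new price update requires the whole check to be re-run for every open position, which is where the throughput pressure described above comes from.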

The accuracy of derivative pricing relies on the seamless integration of continuous volatility feeds into non-linear mathematical models.
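
As one concrete instance of a non-linear model driven by a volatility input, the sketch below prices a European call with the standard Black-Scholes formula and returns two risk sensitivities (delta and vega). It is illustrative only: the function name `bs_call` and its parameter names are assumptions, and production engines may use different models or adjustments for crypto-specific behavior such as price jumps.

```python
import math


def _norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def _norm_pdf(x: float) -> float:
    """Standard normal probability density function."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def bs_call(spot: float, strike: float, vol: float, t: float, r: float = 0.0):
    """Black-Scholes European call: returns (price, delta, vega).

    vol is annualized implied volatility, t is time to expiry in years,
    r is the continuously compounded risk-free rate.
    """
    if spot <= 0 or strike <= 0 or vol <= 0 or t <= 0:
        raise ValueError("spot, strike, vol and t must all be positive")
    sqrt_t = math.sqrt(t)
    d1 = (math.log(spot / strike) + (r + 0.5 * vol * vol) * t) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    price = spot * _norm_cdf(d1) - strike * math.exp(-r * t) * _norm_cdf(d2)
    delta = _norm_cdf(d1)                  # sensitivity to the spot price
    vega = spot * _norm_pdf(d1) * sqrt_t   # sensitivity to a unit change in vol
    return price, delta, vega


# Re-price the same option as the implied volatility feed updates.
for iv in (0.5, 0.8, 1.2):
    print(iv, bs_call(spot=100.0, strike=100.0, vol=iv, t=30 / 365))
```

Each tick of the volatility feed changes the premium and both sensitivities at once, so stale volatility inputs propagate directly into stale margin requirements.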

Systemic Feedback Loops

The interplay between Real-Time Calculations and user behavior creates dynamic feedback loops. As calculations adjust margin requirements, they influence the incentives for traders to add or remove liquidity. This interaction defines the market microstructure, where the computational speed of the protocol dictates the effectiveness of arbitrage and the depth of the order book.

Approach

Current implementation strategies focus on balancing computational overhead with the necessity for extreme precision.

Architects employ diverse techniques to ensure that Real-Time Calculations remain performant even during periods of intense market stress.

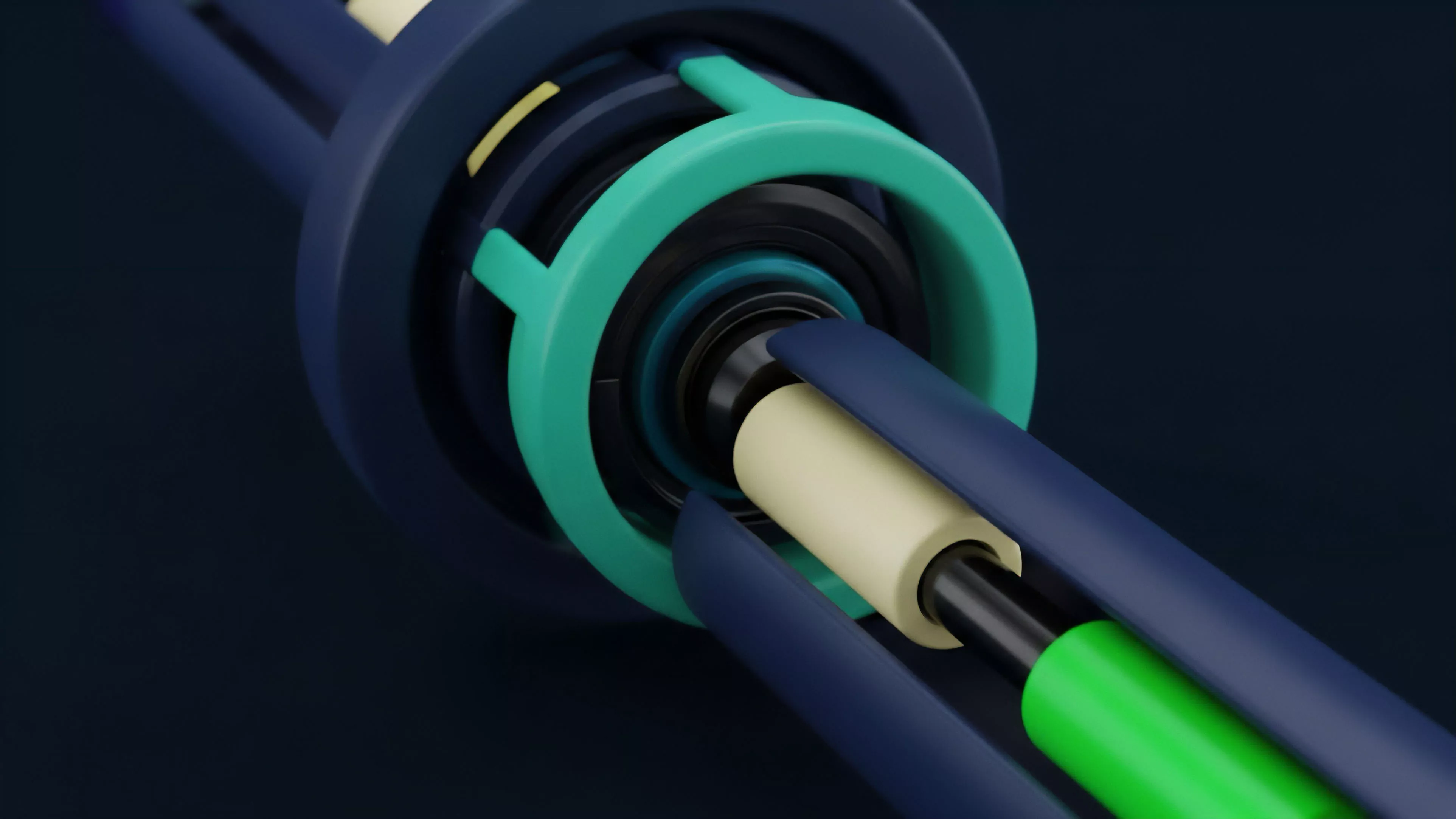

- Off-chain Sequencers: Many protocols shift intensive computation to high-performance off-chain environments, using zero-knowledge proofs to anchor the results back to the blockchain.

- Optimistic Computation: Systems perform rapid calculations assuming validity, allowing for challenges and subsequent corrections if errors occur, which significantly lowers latency.

- Oracle Aggregation: Utilizing multiple decentralized data sources makes the input data for Real-Time Calculations more resistant to manipulation, including price distortions driven by flash loans.
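
The oracle aggregation idea from the list above can be sketched as a median over fresh quotes. This is a simplified illustration, not a description of any specific oracle network: the `Quote` type, the five-second freshness window, and the three-source minimum are hypothetical choices.

```python
import statistics
from dataclasses import dataclass


@dataclass(frozen=True)
class Quote:
    source: str       # hypothetical feed identifier
    price: float
    timestamp: float  # unix seconds at which the quote was observed


def aggregate_price(quotes, now: float, max_age: float = 5.0, min_sources: int = 3) -> float:
    """Median of all positive quotes no older than max_age seconds.

    Raises ValueError when too few fresh sources remain, so callers can
    halt pricing instead of acting on stale or thin data.
    """
    fresh = [
        q.price
        for q in quotes
        if q.price > 0 and 0 <= now - q.timestamp <= max_age
    ]
    if len(fresh) < min_sources:
        raise ValueError("not enough fresh oracle quotes")
    return statistics.median(fresh)


quotes = [
    Quote("feed-a", 100.0, 10.0),
    Quote("feed-b", 101.0, 10.0),
    Quote("feed-c", 5000.0, 10.0),  # manipulated outlier
    Quote("feed-d", 99.0, 1.0),     # stale, ignored
]
print(aggregate_price(quotes, now=11.0))
```

A median tolerates a minority of corrupted sources, which a simple mean does not; it offers no protection if a majority of feeds are compromised at once.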

Decentralized systems mitigate latency through the strategic distribution of computational tasks across off-chain and on-chain layers.
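
The optimistic pattern, in which a result is accepted immediately and can be corrected during a challenge window, can be sketched as follows. This is a toy, single-process model under stated assumptions: the `Claim` and `OptimisticEngine` names are hypothetical, and real systems add bonds, dispute games, and on-chain verification that are omitted here.

```python
from dataclasses import dataclass
from typing import Callable, Tuple


@dataclass
class Claim:
    inputs: Tuple[float, ...]
    result: float          # value posted by the off-chain computer
    posted_at: float       # time the claim was posted
    challenged: bool = False


class OptimisticEngine:
    def __init__(self, compute: Callable[..., float], window: float, tol: float = 1e-9):
        self.compute = compute  # reference computation anyone can re-run
        self.window = window    # length of the challenge period
        self.tol = tol          # tolerance when comparing results

    def post(self, inputs: Tuple[float, ...], result: float, now: float) -> Claim:
        """Accept a result immediately, without verifying it."""
        return Claim(inputs=inputs, result=result, posted_at=now)

    def challenge(self, claim: Claim, now: float) -> bool:
        """Recompute within the window; correct the claim if it was wrong."""
        if now - claim.posted_at > self.window:
            return False  # challenge period has expired
        expected = self.compute(*claim.inputs)
        if abs(expected - claim.result) <= self.tol:
            return False  # claim was correct, challenge fails
        claim.result = expected
        claim.challenged = True
        return True

    def is_final(self, claim: Claim, now: float) -> bool:
        """A claim is final once its challenge window has passed."""
        return now - claim.posted_at > self.window


engine = OptimisticEngine(lambda size, price: size * price, window=10.0)
claim = engine.post((2.0, 100.0), result=150.0, now=0.0)  # incorrect notional
print(engine.challenge(claim, now=5.0), claim.result, engine.is_final(claim, now=11.0))
```

The latency gain comes from `post` doing no verification; the cost is that results are only provisional until the window closes.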

Engineers must account for the reality that the underlying blockchain environment is inherently adversarial. Every calculation represents a potential point of failure; therefore, the approach prioritizes defensive programming and modular design. The objective is to maintain a state of continuous readiness, where every trade is evaluated against the current market reality before settlement occurs.

Evolution

The trajectory of Real-Time Calculations has moved from simple, reactive state updates toward sophisticated, predictive risk management systems.

Early iterations were static, relying on infrequent updates that exposed the protocol to significant market risk during periods of high volatility. Modern systems incorporate statistical methods that attempt to anticipate market shifts rather than merely react to them. This evolution is driven by the necessity for capital efficiency, as users demand higher leverage and tighter spreads.

The transition toward high-frequency, on-chain derivatives is a direct result of these improvements in computational throughput.
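
One simple example of a statistical method that lets margin requirements respond to changing conditions is an exponentially weighted moving average (EWMA) of squared returns. The sketch below is an assumption-laden illustration, not a method attributed to any particular protocol: the decay factor of 0.94, the multiplier `k`, and the 5% base rate are hypothetical parameters.

```python
import math


def ewma_volatility(prices, lam: float = 0.94) -> float:
    """Per-period volatility from an EWMA of squared log returns."""
    if len(prices) < 2:
        raise ValueError("need at least two prices")
    returns = [math.log(b / a) for a, b in zip(prices, prices[1:])]
    variance = returns[0] ** 2  # seed with the first squared return
    for r in returns[1:]:
        variance = lam * variance + (1.0 - lam) * r * r
    return math.sqrt(variance)


def dynamic_margin_rate(prices, base_rate: float = 0.05, k: float = 3.0,
                        lam: float = 0.94) -> float:
    """Margin rate that never drops below base_rate and rises with volatility."""
    return max(base_rate, k * ewma_volatility(prices, lam))


calm = [100.0, 100.1, 100.0, 100.1, 100.0]
stressed = [100.0, 104.0, 98.0, 105.0, 97.0]
print(dynamic_margin_rate(calm), dynamic_margin_rate(stressed))
```

Raising margin as estimated volatility rises trades some capital efficiency for a larger buffer before liquidation, which is the tension between leverage and safety described above.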

Structural Shifts

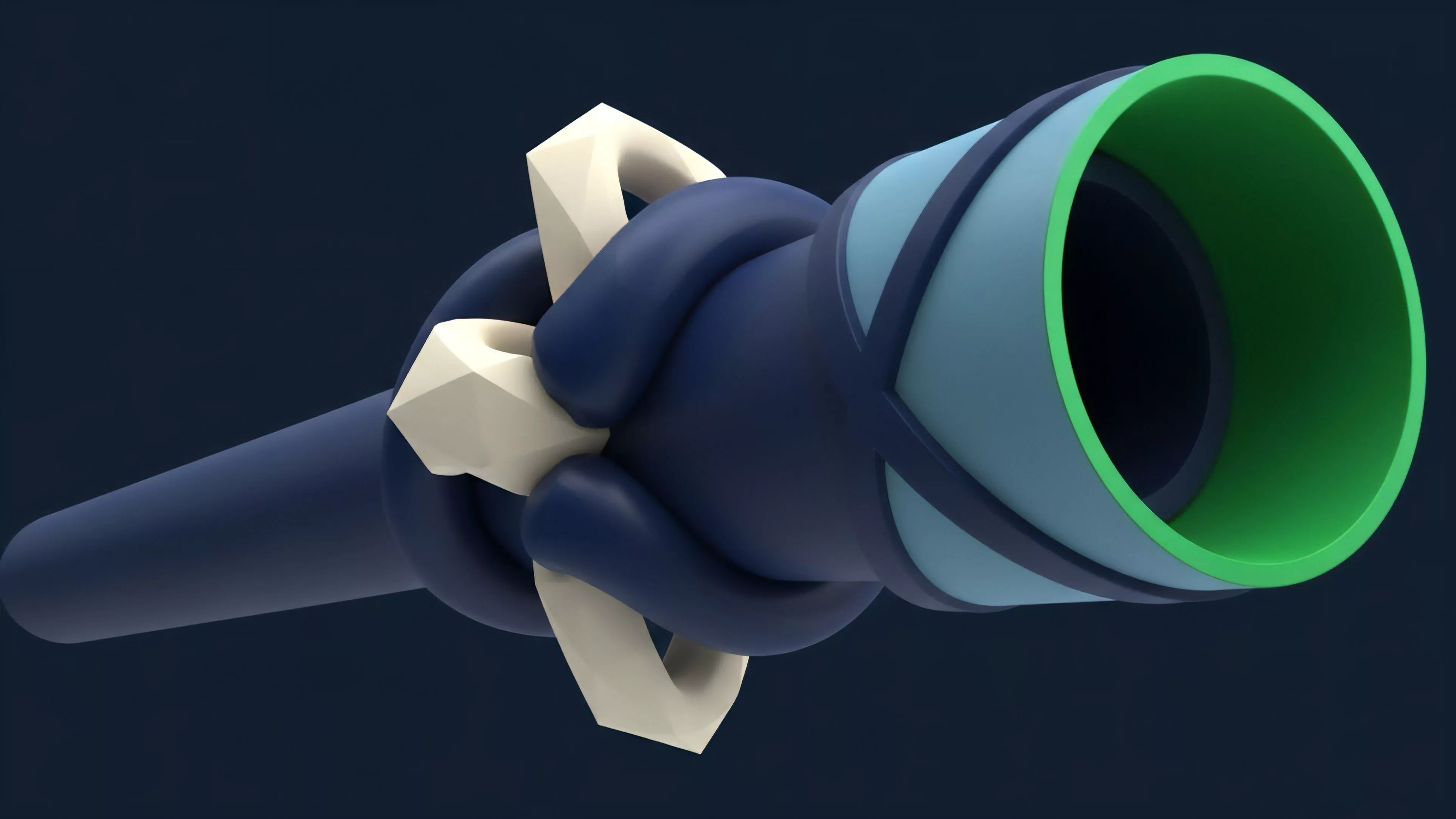

The shift from monolithic smart contracts to modular, composable architectures has enabled more granular control over Real-Time Calculations. Protocols now delegate specific tasks to specialized sub-contracts or external computation providers, reducing the risk of a single point of failure within the core engine. This mirrors the transition in traditional systems from mainframe computing to distributed cloud architectures, but the stakes are higher because deployed smart contract logic is difficult to change and its execution cannot be reversed.

This progress necessitates a constant reassessment of the trade-offs between speed, decentralization, and security. Protocols that prioritize speed often sacrifice some degree of decentralization, while those that emphasize absolute security face significant latency challenges. The ongoing search for the optimal balance remains the defining challenge for system architects.

Horizon

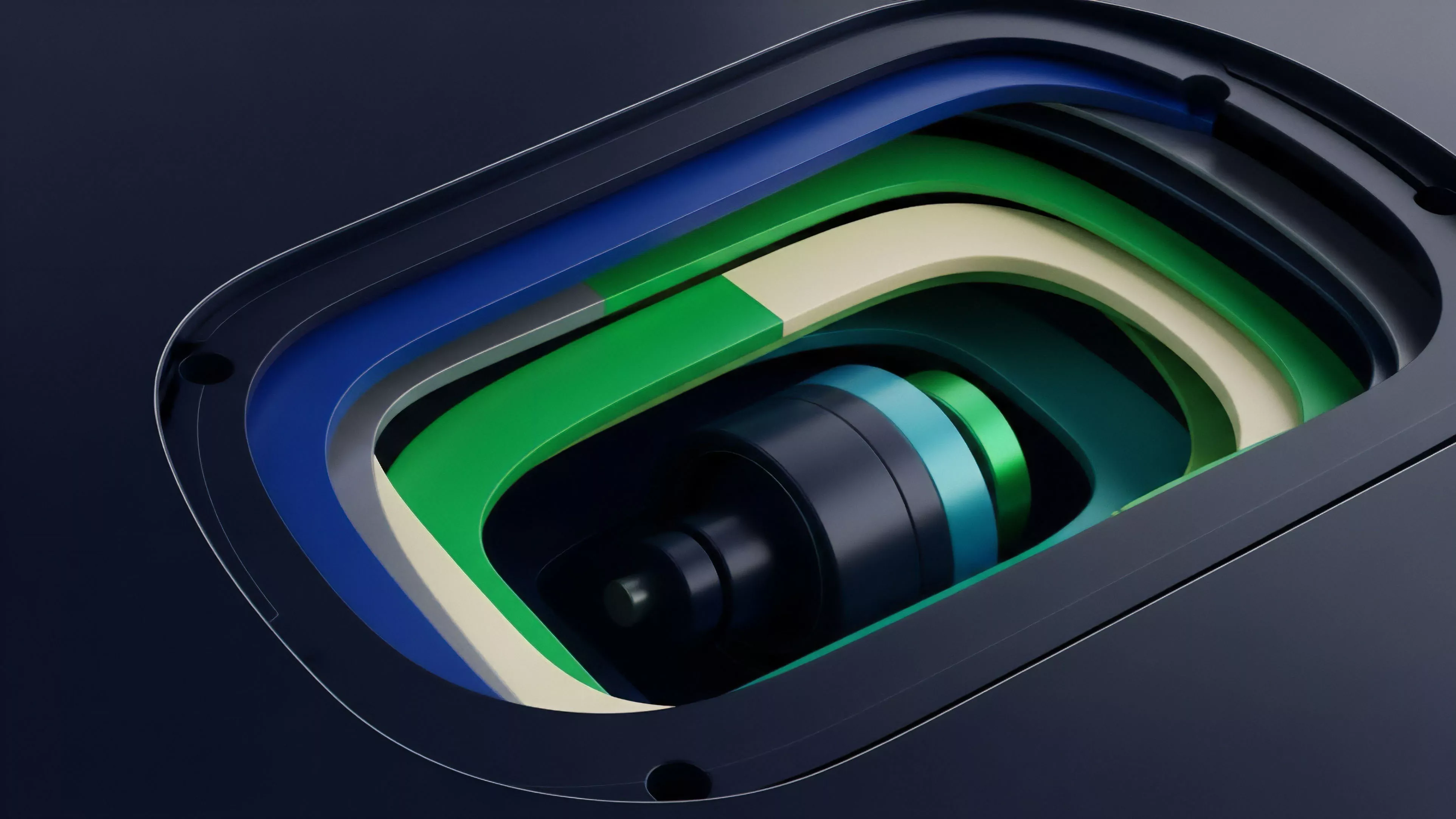

The future of Real-Time Calculations lies in the integration of hardware-accelerated computation and advanced cryptographic primitives.

As the demand for complex, cross-margin derivative products grows, the underlying systems must achieve performance levels that rival centralized exchanges. Future developments will likely center on:

- Trusted Execution Environments: Integrating hardware-isolated enclaves to perform sensitive calculations off-chain while maintaining verifiable integrity.

- Predictive Risk Engines: Moving beyond reactive thresholds to proactive, machine-learning-based models that adjust margin requirements based on projected market conditions.

- Cross-Protocol Synchronization: Enabling real-time risk assessment across multiple chains, allowing for a unified view of a user’s collateral and exposure.

Advanced hardware integration and predictive modeling represent the next frontier for high-velocity decentralized derivative protocols.

The ultimate goal is a financial system where Real-Time Calculations are invisible to the user, yet robust enough to withstand any market condition. The success of this vision depends on the ability of architects to solve the fundamental problem of trustless, high-performance computation in an adversarial digital environment.