Essence

The Greeks Streaming Architecture (GSA) is the computational backbone that translates the theoretical risk sensitivities of options (the Greeks) into actionable, near-instantaneous data streams for automated risk management and market making. This architecture acknowledges that in decentralized finance, where collateral is programmatic and liquidations are irreversible, the latency of risk assessment is a systemic vulnerability. The GSA is a complex system designed to maintain a perpetually current view of the Implied Volatility Surface (IVS) and, consequently, the risk profile of every participant.

The speed of options risk management determines the solvency of the entire protocol; a delayed Delta calculation is a silent liability waiting for the next volatility spike.

The functional requirement is simple: a change in the underlying asset’s price, or the passage of time, must immediately update the margin requirements and liquidation thresholds for all positions. This demands a computational throughput far exceeding that of simple spot trading. GSA moves beyond periodic batch processing, a relic of traditional finance, to a continuous computation model, recognizing that the crypto options market operates under a state of perpetual, adversarial stress.

This constant recalculation is not an operational luxury; it is the fundamental mechanism for systemic stability, particularly for margin engines that rely on precise, real-time Delta values to determine collateral adequacy.

Systemic Function and Solvency

The architecture’s core task is to prevent the protocol from inheriting counterparty risk. This is achieved by feeding the constantly updated Greeks directly into two critical systems. The first is the Liquidation Engine, which uses the calculated risk (often represented by a combination of Delta and Gamma exposure) to check collateral health against a predefined threshold.
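A minimal sketch of such a health check follows, assuming the Greeks arrive already aggregated per account. It estimates the worst-case loss under a symmetric price shock with the second-order (delta-gamma) Taylor approximation and compares it with posted collateral. The function names, the 10% shock, and the `maintenance` buffer are hypothetical illustrations, not parameters of any specific protocol.

```python
def delta_gamma_loss(delta: float, gamma: float, spot: float, shock: float) -> float:
    """Worst-case loss (as a positive number) over a +/- `shock` fractional move in spot.

    Uses the second-order Taylor approximation dV ~= delta * dS + 0.5 * gamma * dS**2.
    """
    worst = 0.0
    for sign in (1.0, -1.0):
        ds = sign * shock * spot
        pnl = delta * ds + 0.5 * gamma * ds * ds
        worst = min(worst, pnl)
    return -worst


def is_liquidatable(collateral: float, delta: float, gamma: float, spot: float,
                    shock: float = 0.10, maintenance: float = 0.0) -> bool:
    """Hypothetical rule: liquidate if collateral cannot absorb the stressed loss plus a buffer."""
    return collateral < delta_gamma_loss(delta, gamma, spot, shock) + maintenance
```

For a book short ten calls with Delta 0.5 and Gamma 0.02 each (net Delta -5, net Gamma -0.2) at a spot of 100, the stressed loss is 60, so 50 units of collateral fail the check while 100 pass. The Gamma term is what pushes the loss from 50 to 60: for a short-option book, Delta alone understates the damage of a large move.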

The second is the automated Hedging Bot layer, typically run by market makers or the protocol’s own treasury, which uses the streaming Delta data to maintain a near-neutral exposure to the underlying asset. A failure in the GSA means a stale risk view, which, during a market dislocation, leads to cascading liquidations or, worse, protocol insolvency.
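The hedging loop can be sketched as follows, assuming the GSA streams the book's net Delta in units of the underlying. The no-trade `band` is a hypothetical parameter that keeps the bot from paying fees on every small fluctuation; real hedgers tune it against transaction costs and Gamma.

```python
def hedge_order(book_delta: float, hedge_units: float, band: float) -> float:
    """Signed quantity of the underlying to trade (positive = buy) to re-neutralize Delta."""
    net = book_delta + hedge_units
    if abs(net) <= band:
        return 0.0  # inside the no-trade band: skip fees for small adjustments
    return -net  # trade the full residual back to zero net Delta


def run_hedger(delta_stream, band: float) -> list[float]:
    """Replay a stream of book-Delta updates and return the hedge trades issued."""
    hedge_units = 0.0
    trades = []
    for book_delta in delta_stream:
        qty = hedge_order(book_delta, hedge_units, band)
        if qty != 0.0:
            hedge_units += qty
            trades.append(qty)
    return trades
```

With a band of 0.5, the stream -4.0, -4.3, -6.0 produces two trades: buy 4.0 units on the first update, skip the second (the residual of about -0.3 is inside the band), then buy 2.0 more.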

Origin

The genesis of Greeks Streaming Architecture lies in the historical inadequacy of end-of-day or even hourly batch calculations that defined early electronic trading.

In traditional, regulated markets, risk systems often relied on overnight processes, which were tolerable because centralized clearing houses absorbed some of the gap risk. The transition to decentralized, 24/7 crypto markets, and particularly the advent of options with no centralized guarantor, rendered this latency unacceptable. The problem intensified with the rise of Perpetual Futures, which, despite not being options, introduced continuous funding and margining and set a new, higher standard for real-time risk.

The Shift from Batch to Stream

Early crypto options platforms, seeking to replicate the Black-Scholes-Merton (BSM) framework, initially adopted simplified, low-frequency calculations. This created significant, exploitable arbitrage windows: sophisticated actors could observe a market move and trade against a price the protocol had not yet updated, exploiting the lag in its risk system. The true origin of GSA is a direct response to this adversarial environment, a computational arms race in which the protocol had to match the speed of the most advanced market-making algorithms.

The architecture was born from the necessity to collapse the time interval between a market event and the corresponding update to the collateral requirement to a sub-second timeframe.

The move to streaming risk metrics was a forced evolution, a defensive measure against the speed and ruthlessness of automated arbitrageurs operating in a 24/7 global market.

This realization, that risk management had to operate at the frequency of Market Microstructure itself, forced a fundamental re-architecture. The old model assumed a stable, liquid market; the new model, GSA, assumes a state of constant, volatile flux and requires the risk engine to be a real-time, active participant in the price discovery process, not a passive, lagging observer.

Theory

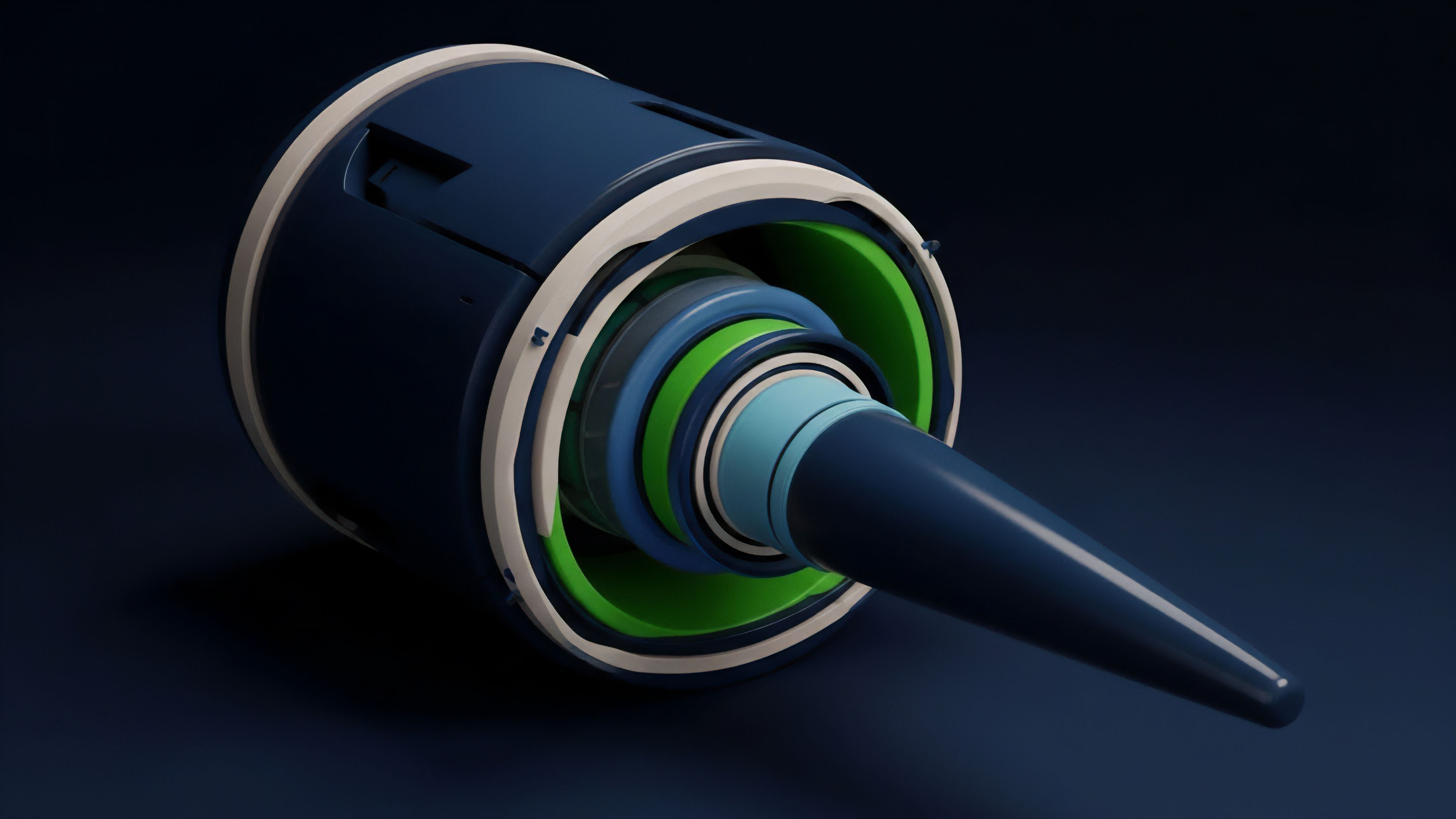

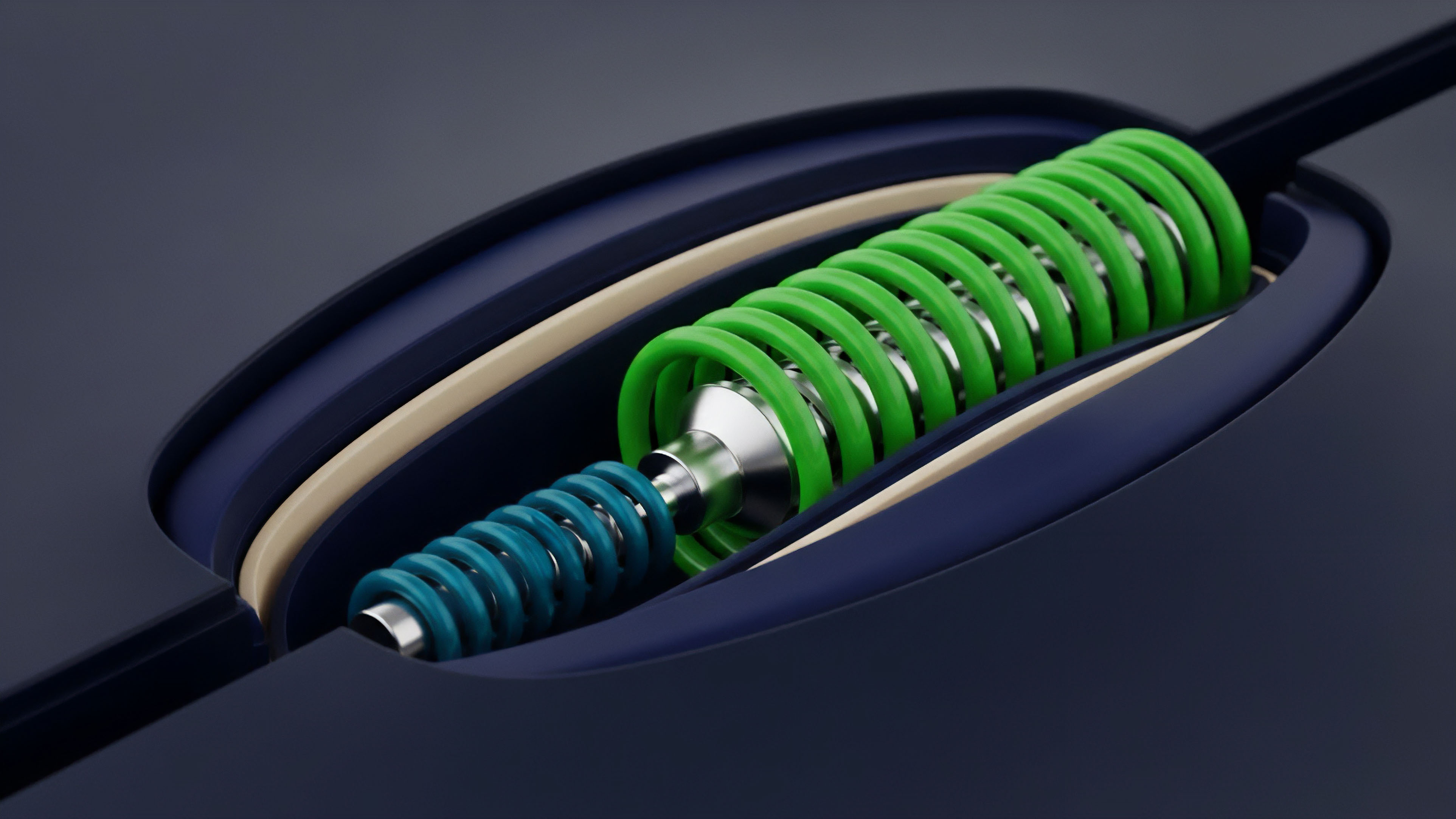

The theoretical challenge of Greeks Streaming Architecture is the continuous reconciliation of a constantly evolving, stochastic market object (the implied volatility surface) with the discrete, deterministic nature of a blockchain ledger.

The core mathematical issue is the calculation of Delta and Gamma, the first and second derivatives of the option price with respect to the underlying asset’s price, at a speed that outpaces the movement of the underlying. The computational load is immense: it scales with the number of strikes and expiries that define the surface and again with the number of active positions that must be revalued against it. Consider a typical options vault with thousands of open contracts: for each one, the GSA must calculate the five standard Greeks (Delta, Gamma, Theta for time decay, Vega for volatility sensitivity, and Rho for interest-rate sensitivity) plus the option price itself.
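A minimal sketch of the closed-form route for a single European call on a non-dividend-paying underlying is shown below, using only the Python standard library. Theta is expressed per year; the function name and dictionary layout are illustrative, and a production engine would vectorize this across every contract on the surface.

```python
import math


def _norm_cdf(x: float) -> float:
    """Standard normal CDF via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def _norm_pdf(x: float) -> float:
    """Standard normal density."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def bsm_call(spot: float, strike: float, t: float, rate: float, vol: float) -> dict:
    """Closed-form BSM price and Greeks for a European call on a non-dividend-paying asset.

    t is time to expiry in years; rate and vol are annualized (continuous compounding).
    Theta is per year of calendar time; divide by 365 for a per-day figure in a 24/7 market.
    """
    sqrt_t = math.sqrt(t)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol * vol) * t) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    disc = math.exp(-rate * t)
    pdf_d1 = _norm_pdf(d1)
    return {
        "price": spot * _norm_cdf(d1) - strike * disc * _norm_cdf(d2),
        "delta": _norm_cdf(d1),
        "gamma": pdf_d1 / (spot * vol * sqrt_t),
        "theta": -spot * pdf_d1 * vol / (2.0 * sqrt_t) - rate * strike * disc * _norm_cdf(d2),
        "vega": spot * pdf_d1 * sqrt_t,
        "rho": strike * t * disc * _norm_cdf(d2),
    }
```

For example, `bsm_call(100.0, 100.0, 1.0, 0.0, 0.2)` gives a price of about 7.97 and a Delta of about 0.540. Every Greek here is a handful of elementary operations, which is what makes BSM viable for the latency-critical stream.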

This is a high-dimensional computation problem under a hard latency constraint. The model must not simply output a number; it must output a stream of numbers whose consistency and coherence are maintained across every block confirmation. The system is predicated on the assumption that, while the true volatility is unobservable, the Implied Volatility Surface derived from current market prices is the most accurate proxy for the market’s collective risk assessment.

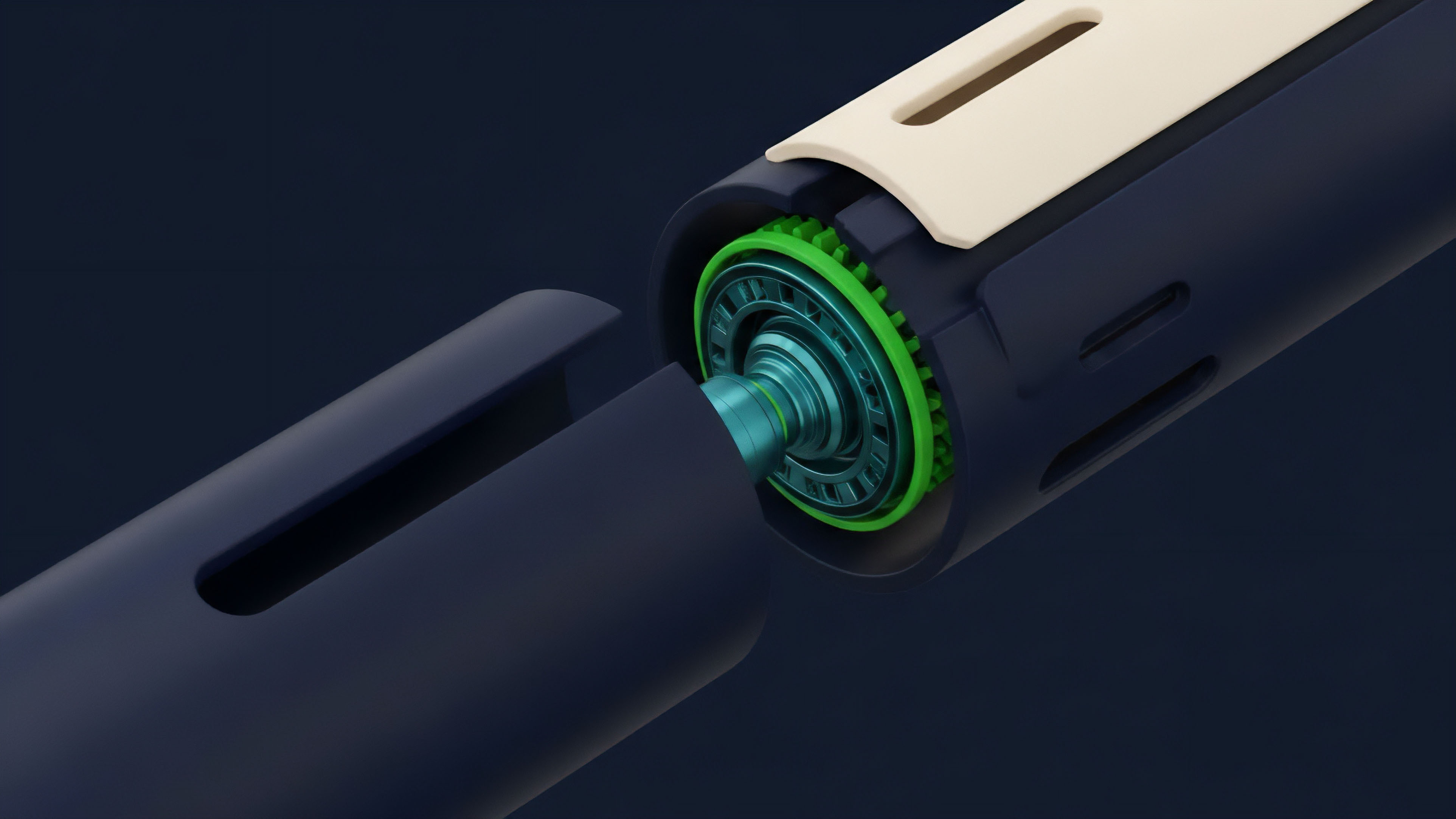

The architecture’s true complexity lies in its ability to manage the Vega risk, which is the sensitivity to changes in that very surface. When the surface shifts ⎊ a common event in crypto ⎊ the entire risk profile of the protocol changes instantly, demanding a complete re-evaluation of all positions. This necessitates sophisticated caching, parallel processing, and often, the use of hardware acceleration or specialized off-chain calculation environments to prevent the computational cost from exceeding the economic value of the trade itself.
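One simple form of the caching mentioned above, shown as a sketch: Greeks depend on the contract and the market snapshot, not on who holds the position, so per-contract results can be memoized and scaled by position size. The names below are hypothetical, Delta is the BSM call Delta, and `functools.lru_cache` stands in for whatever cache a real engine would use.

```python
import math
from functools import lru_cache


@lru_cache(maxsize=65_536)
def contract_delta(strike: float, t: float, spot: float, vol: float, rate: float) -> float:
    """BSM call Delta for one contract at one market snapshot (memoized)."""
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol * vol) * t) / (vol * math.sqrt(t))
    return 0.5 * (1.0 + math.erf(d1 / math.sqrt(2.0)))


def portfolio_delta(positions, spot: float, vols: dict, rate: float) -> float:
    """Net Delta of (strike, t, quantity) positions; vols maps (strike, t) to implied vol.

    Positions that share a contract reuse the cached per-contract Delta, so the
    pricing work scales with distinct contracts rather than with holders.
    """
    total = 0.0
    for strike, t, qty in positions:
        total += qty * contract_delta(strike, t, spot, vols[(strike, t)], rate)
    return total
```

Because spot and volatility are part of the cache key, a new tick naturally produces new entries and stale ones age out of the LRU; the saving comes from the many positions that share a contract within one snapshot.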

The architecture, at its heart, is a real-time numerical solver for the partial differential equations that govern option pricing, a solver that must operate not on a local server but across a distributed, asynchronous network. The precision of the GSA, therefore, becomes a direct function of its computational capacity to minimize the error between the theoretical price and the market price, particularly during high-stress periods. This is where the pricing model becomes truly elegant, and dangerous if ignored.
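For the BSM case (European option, constant rate $r$ and volatility $\sigma$, no dividends), the governing equation and its restatement in terms of the Greeks, with $\Theta = \partial V/\partial t$, $\Delta = \partial V/\partial S$ and $\Gamma = \partial^2 V/\partial S^2$, are:

$$
\frac{\partial V}{\partial t} + \frac{1}{2}\sigma^{2}S^{2}\frac{\partial^{2} V}{\partial S^{2}} + rS\frac{\partial V}{\partial S} - rV = 0
\quad\Longleftrightarrow\quad
\Theta + \frac{1}{2}\sigma^{2}S^{2}\,\Gamma + rS\,\Delta = rV
$$

For a delta-hedged book with $r$ close to zero this reduces to $\Theta \approx -\frac{1}{2}\sigma^{2}S^{2}\Gamma$, so a stale Gamma reading is also a stale estimate of how fast the book earns or pays time value.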

Approach

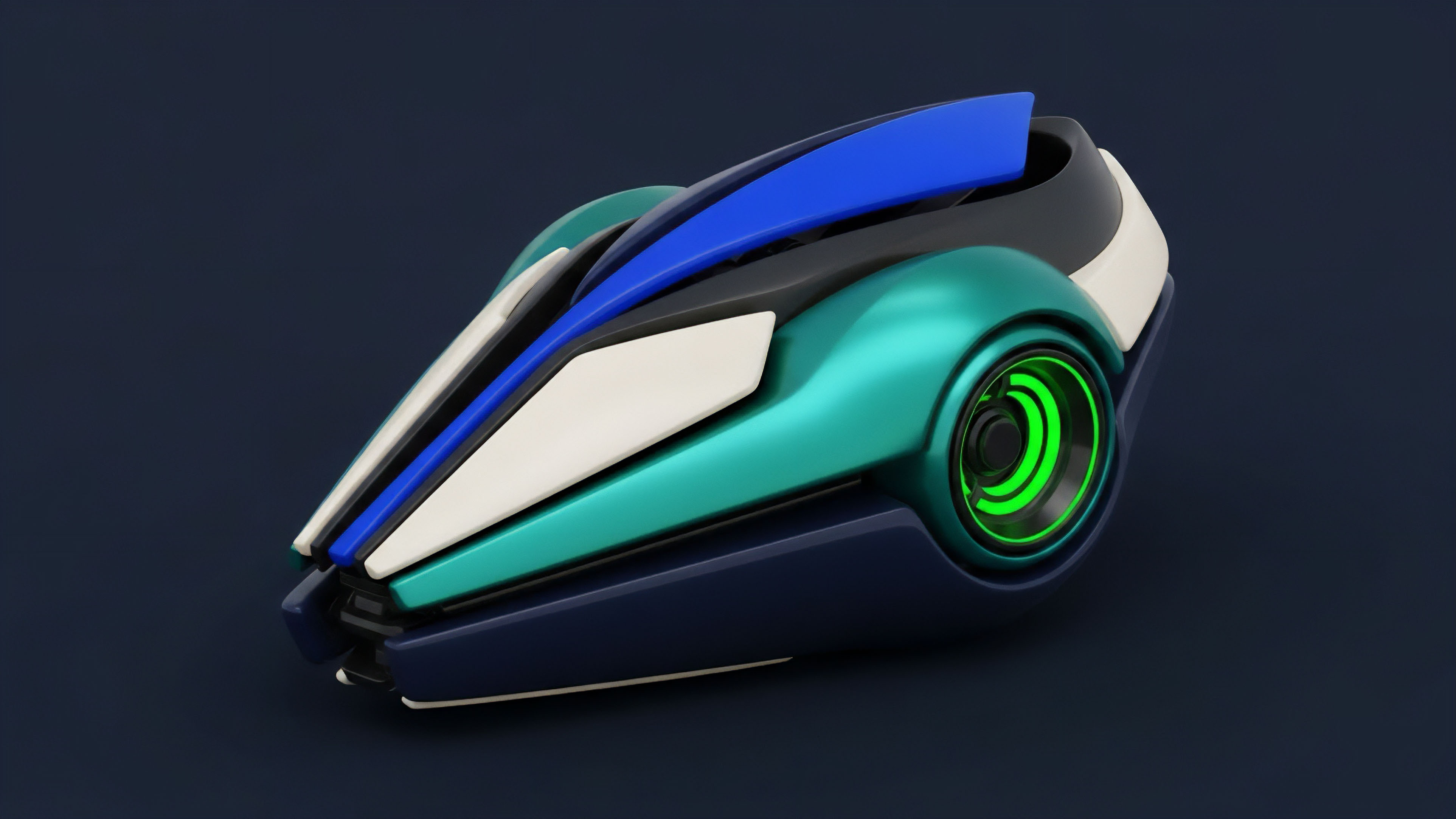

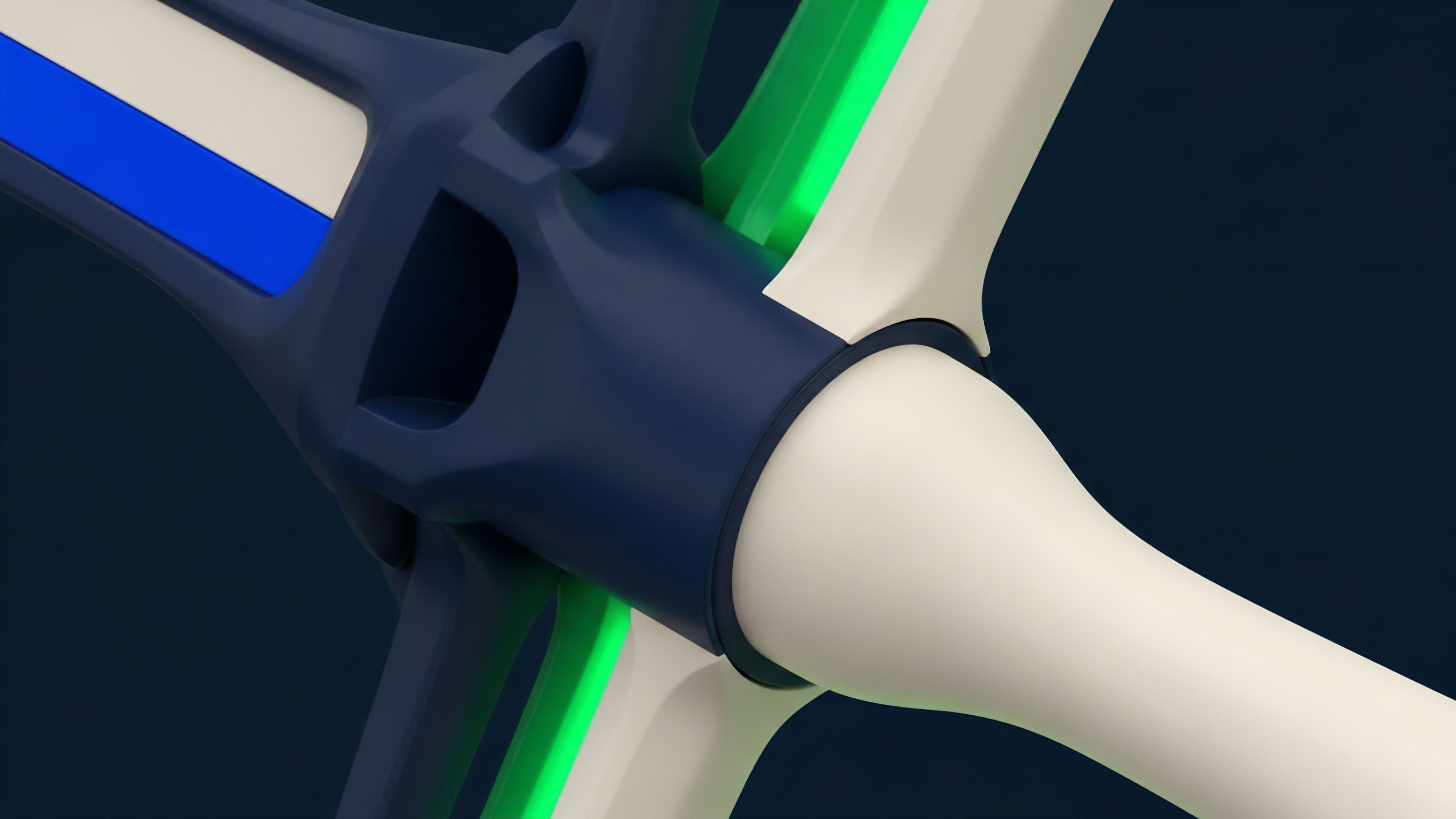

The implementation of a functional Greeks Streaming Architecture requires a hybrid approach, leveraging the computational efficiency of off-chain systems while maintaining the trustless settlement of the on-chain environment. The primary components are the Data Oracle, the Calculation Engine, and the Risk Feed Distributor.

Data Oracle and Surface Construction

The GSA begins with the real-time aggregation of market data to construct the Implied Volatility Surface. This is not a trivial task; it requires normalizing data across fragmented liquidity pools and often necessitates proprietary filtering to discard malicious or stale quotes.

- Data Normalization: Raw market data, including bids, asks, and trade history from all relevant venues, must be standardized into a unified format.

- Surface Fitting: A mathematical model, often a parametric form such as SVI (Stochastic Volatility Inspired) or SABR, is fit to the normalized data to create a smooth, continuous surface, allowing for the interpolation of Implied Volatility for any strike and expiry.

- Filtering for Anomalies: Automated processes must identify and discard quotes that fall outside a statistical tolerance band around a robust reference such as the median quote, preventing market manipulation from corrupting the risk model.
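The filtering and surface steps can be sketched as follows. The filter uses the median and the median absolute deviation (MAD), which a handful of manipulated quotes cannot drag around the way they can a mean and standard deviation; the cutoff `k` is a hypothetical choice. For the surface, the sketch evaluates one expiry slice of the raw SVI parametrization, a widely used form for implied total variance; calibrating its five parameters to the filtered quotes (typically by least squares) is omitted, and the parameter values in the usage example are illustrative.

```python
import math
import statistics


def filter_quotes(ivs: list[float], k: float = 5.0) -> list[float]:
    """Keep implied-vol quotes within k scaled MADs of the median.

    1.4826 * MAD approximates one standard deviation for normally distributed data.
    """
    med = statistics.median(ivs)
    mad = statistics.median([abs(x - med) for x in ivs])
    if mad == 0.0:
        return [x for x in ivs if x == med]  # degenerate case: most quotes identical
    band = k * 1.4826 * mad
    return [x for x in ivs if abs(x - med) <= band]


def svi_total_variance(k: float, a: float, b: float, rho: float, m: float, sigma: float) -> float:
    """Raw SVI: total implied variance w(k) at log-moneyness k = ln(K / F)."""
    return a + b * (rho * (k - m) + math.sqrt((k - m) ** 2 + sigma ** 2))


def svi_implied_vol(strike: float, forward: float, t: float, params: tuple) -> float:
    """Implied volatility for any strike on one expiry slice: sqrt(w(k) / t)."""
    w = svi_total_variance(math.log(strike / forward), *params)
    if w <= 0.0:
        raise ValueError("parameters produce non-positive total variance")
    return math.sqrt(w / t)
```

For example, `filter_quotes([0.80, 0.82, 0.79, 0.81, 2.50])` discards the 2.50 quote, and `svi_implied_vol(100.0, 100.0, 0.5, (0.04, 0.1, -0.3, 0.0, 0.1))` returns an at-the-money volatility of about 0.316.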

Calculation Engine Design

The core of the GSA is the calculation engine, which is typically an off-chain, high-performance computing cluster. This engine processes the IVS and underlying price to calculate the Greeks for every position.

| Metric | Black-Scholes-Merton (BSM) | Monte Carlo Simulation | Finite Difference Method |
|---|---|---|---|
| Speed/Latency | Extremely Fast (Closed-form) | Slow (High Computational Cost) | Moderate (Iterative) |
| Applicability | European, Non-exotic | American, Path-dependent | American, Exotic (High precision) |
| GSA Use Case | Primary Delta/Gamma Stream | Risk Scenario Testing | Model Calibration/Verification |
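The verification role in the table's last row can be illustrated with the simplest finite-difference technique, bump-and-reprice: perturb spot up and down, reprice with the same model, and compare the central-difference Delta and Gamma with the closed-form values. This is a lighter-weight relative of the PDE grid solvers the table refers to, not a substitute for them; the relative bump size is a hypothetical choice that trades truncation error against floating-point cancellation.

```python
import math


def _norm_cdf(x: float) -> float:
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def _d1(spot, strike, t, rate, vol):
    return (math.log(spot / strike) + (rate + 0.5 * vol * vol) * t) / (vol * math.sqrt(t))


def call_price(spot, strike, t, rate, vol):
    """Closed-form BSM price of a European call (no dividends)."""
    d1 = _d1(spot, strike, t, rate, vol)
    d2 = d1 - vol * math.sqrt(t)
    return spot * _norm_cdf(d1) - strike * math.exp(-rate * t) * _norm_cdf(d2)


def analytic_delta_gamma(spot, strike, t, rate, vol):
    """Closed-form BSM call Delta and Gamma."""
    d1 = _d1(spot, strike, t, rate, vol)
    pdf = math.exp(-0.5 * d1 * d1) / math.sqrt(2.0 * math.pi)
    return _norm_cdf(d1), pdf / (spot * vol * math.sqrt(t))


def bumped_delta_gamma(spot, strike, t, rate, vol, rel_bump=1e-3):
    """Central differences: Delta = (V+ - V-) / 2h, Gamma = (V+ - 2V + V-) / h^2."""
    h = spot * rel_bump
    up = call_price(spot + h, strike, t, rate, vol)
    mid = call_price(spot, strike, t, rate, vol)
    down = call_price(spot - h, strike, t, rate, vol)
    return (up - down) / (2.0 * h), (up - 2.0 * mid + down) / (h * h)
```

A disagreement between the two paths beyond a small tolerance points either to a bug in the fast path or to inputs where the closed form becomes numerically fragile, such as expiries very close to zero.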

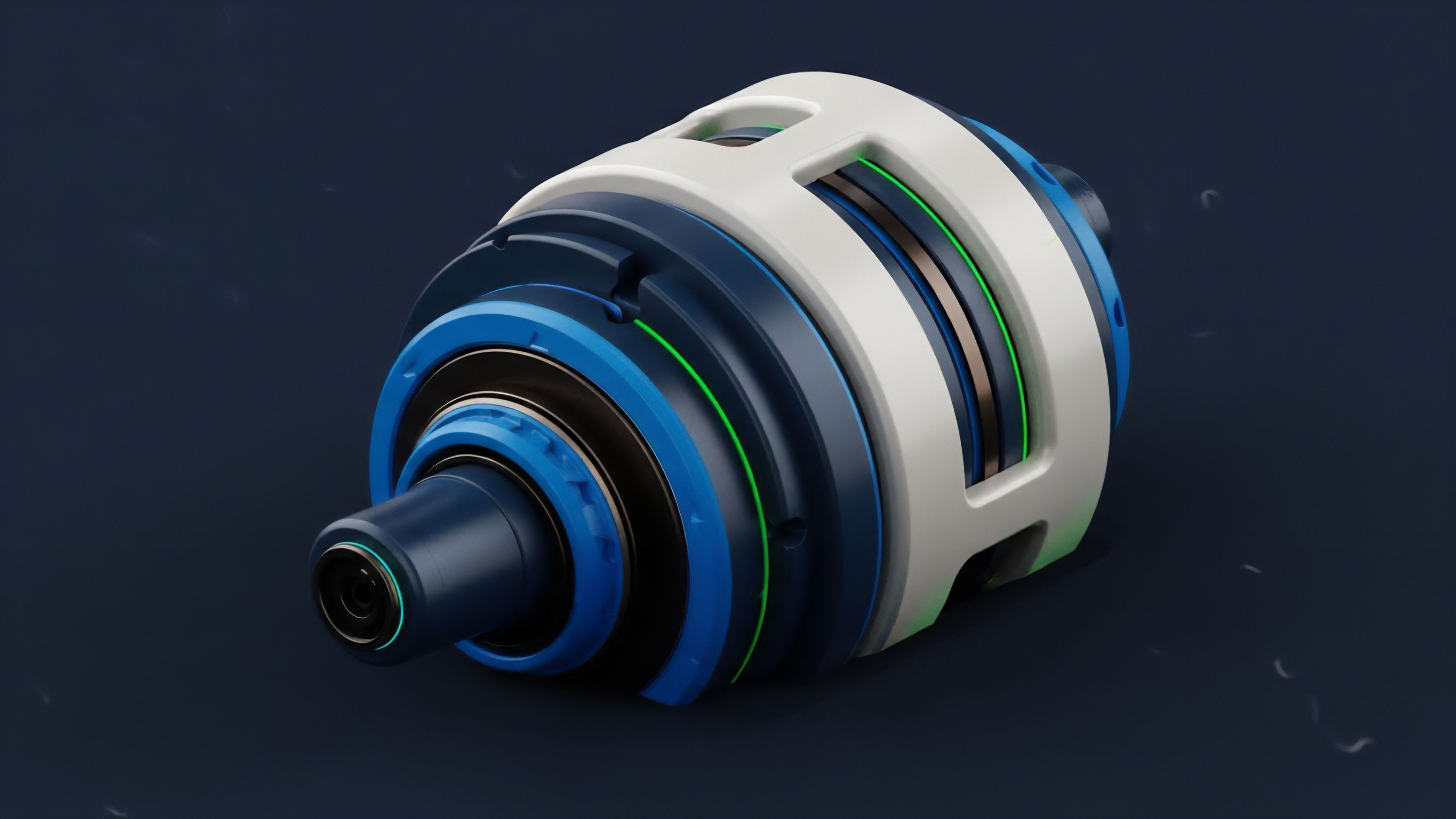

The most practical approach for real-time streaming is to use the BSM model for its closed-form solutions for Delta and Gamma, reserving more computationally intensive methods for periodic recalibration and stress testing. The output of this engine, the streaming Greeks, is then packaged and signed as a verifiable off-chain data feed.
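Packaging and signing can be sketched with the standard library. The batch is serialized deterministically (sorted keys, no whitespace) so every party hashes identical bytes, then tagged with HMAC-SHA256. HMAC is a stand-in chosen to keep the example self-contained: it requires the verifier to hold the same secret key, whereas an on-chain consumer would normally check a public-key signature (for example, ECDSA over secp256k1, which Ethereum contracts can verify with `ecrecover`). The field names and the contract label are hypothetical.

```python
import hashlib
import hmac
import json


def pack_feed(greeks: dict, block_height: int, key: bytes) -> dict:
    """Serialize one batch of Greeks deterministically and attach an HMAC-SHA256 tag."""
    payload = json.dumps({"block": block_height, "greeks": greeks},
                         sort_keys=True, separators=(",", ":"))
    tag = hmac.new(key, payload.encode(), hashlib.sha256).hexdigest()
    return {"payload": payload, "tag": tag}


def verify_feed(message: dict, key: bytes) -> bool:
    """Recompute the tag and compare in constant time."""
    expected = hmac.new(key, message["payload"].encode(), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, message["tag"])
```

Any change to the payload, such as an altered Delta, makes `verify_feed` return `False`, as does verifying with the wrong key.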

The Calculation Engine is the protocol’s central nervous system, translating market chaos into the precise language of derivatives risk.

Risk Feed Distribution

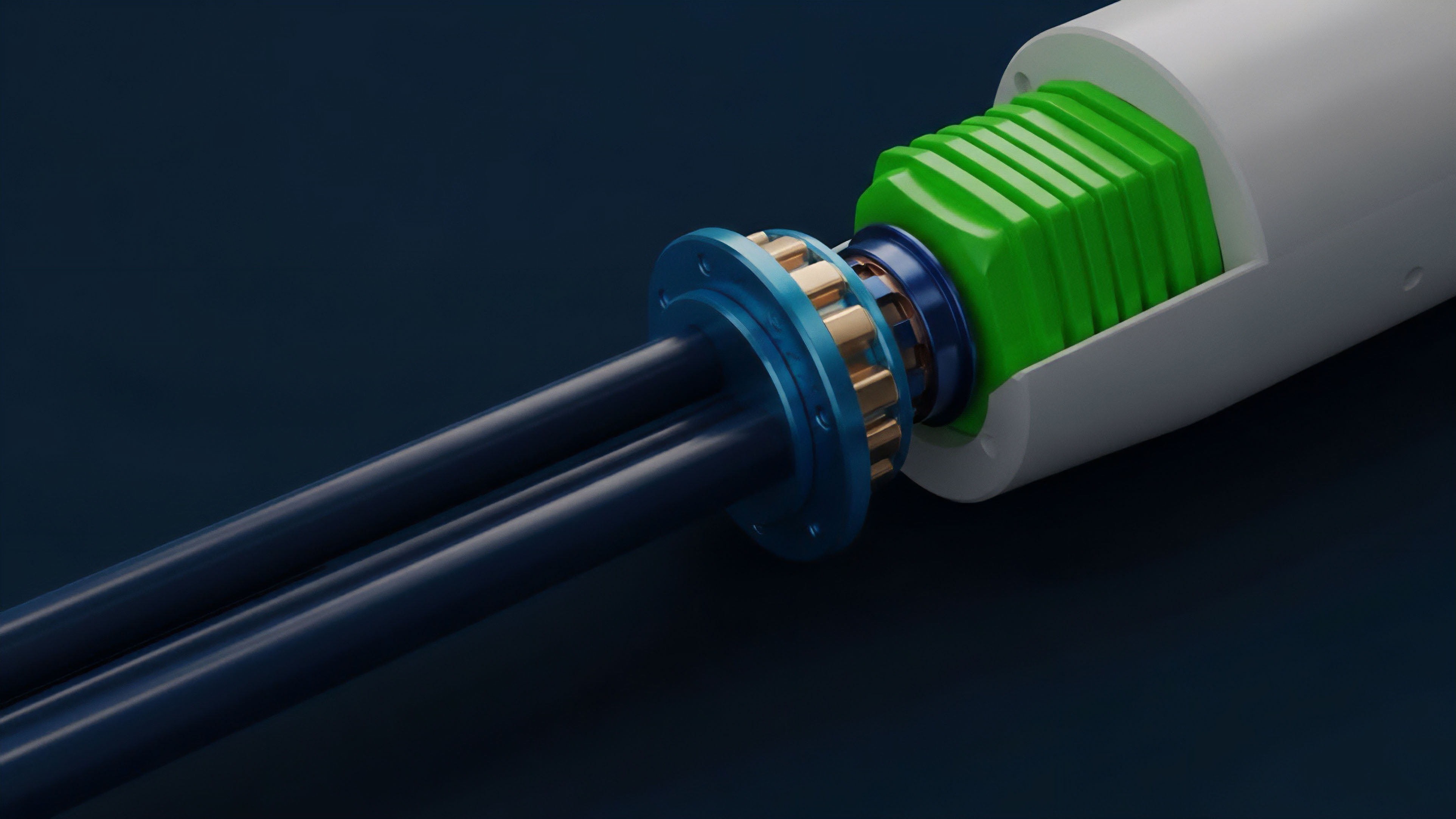

The calculated Greeks are delivered to the on-chain Liquidation Engine via a low-latency oracle solution. This feed must be cryptographically secured to prevent tampering and batched efficiently to minimize gas costs. The system must operate under a Protocol Physics constraint: the time taken for the oracle update must be less than the time it takes for a market move to breach a significant number of margin requirements.

Evolution

The evolution of Greeks Streaming Architecture has been a progression from a purely centralized, off-chain service, where trust in the exchange’s internal calculation was mandatory, to increasingly verifiable and decentralized systems. The first generation relied on the exchange’s word; the current generation is attempting to build trust through cryptographic proof and transparent methodology.

Hybrid Verification and On-Chain Constraints

The primary development has been the introduction of hybrid verification models. Protocols now often publish the mathematical methodology and the specific parameters used in their GSA, allowing sophisticated users to audit the risk framework. This move is driven by the realization that a black-box risk engine is an unacceptable single point of failure in a permissionless environment.

Our inability to respect the skew in real time was the critical flaw in the initial models; the assumption of constant volatility was always a lie. The current GSA attempts to solve this by externalizing the Implied Volatility Surface itself as a verifiable data product.

Challenges in Decentralized GSA

The move toward fully decentralized GSA is fraught with technical and economic hurdles.

- Gas Cost Prohibitions: Executing complex, iterative option pricing algorithms on-chain remains prohibitively expensive, making fully on-chain GSA economically infeasible for high-frequency updates.

- Oracle Latency and Security: Relying on external oracles to feed the Greeks introduces the security risk of the oracle itself, a necessary trade-off for computational speed.

- Model Consistency: Ensuring that all market participants, from the protocol to the liquidators, are using the exact same, cryptographically proven GSA output at the exact same block height is a massive synchronization problem.

- Adversarial Model Attacks: Sophisticated actors can attempt to manipulate the inputs (e.g., flash loan attacks on the underlying asset price) to force a miscalculation in the GSA, triggering profitable liquidations.
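One common family of defenses against the input-manipulation attack in the last item is to feed the GSA a robust spot price rather than a single venue's last trade. The sketch below takes the median across independent venues and refuses to update when that median strays too far from a time-weighted average price (TWAP). The function names and the 5% deviation threshold are hypothetical; production oracles combine these ideas in many different ways.

```python
import statistics


def twap(samples: list[tuple[float, float]], now: float) -> float:
    """Time-weighted average price; each (timestamp, price) sample holds until the next."""
    ends = [t for t, _ in samples[1:]] + [now]
    weighted = sum(p * (end - t) for (t, p), end in zip(samples, ends))
    return weighted / (now - samples[0][0])


def accept_spot(venue_prices: list[float], reference_twap: float, max_dev: float = 0.05):
    """Median across venues, or None if it deviates from the TWAP by more than max_dev."""
    spot = statistics.median(venue_prices)
    if abs(spot / reference_twap - 1.0) > max_dev:
        return None  # keep the previous risk state instead of acting on a suspect price
    return spot
```

For a price of 100 held for 59 seconds followed by a one-second spike to 300, `twap([(0, 100.0), (50, 100.0), (59, 300.0)], 60)` is about 103.3, so a manipulated spot of 300 is rejected, while a single outlier venue is simply outvoted by the median.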

GSA’s true battle is not against market volatility, but against the computational limits of trustless settlement.

The architectural shift is one of pushing computation off-chain while keeping the verification of the result on-chain, using techniques such as optimistic (fraud-proof) verification, in which challengers dispute an incorrect result by re-executing it, or zero-knowledge proofs, which assert the correctness of the Greeks calculation without anyone re-executing it and can do so without revealing the entire proprietary model.
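The optimistic side of this design can be sketched as a publish-then-challenge check, which also touches the synchronization problem raised under Model Consistency. The publisher commits to a SHA-256 digest of the Greeks, quantized to fixed point and tagged with a block height; a challenger recomputes from the same inputs and disputes on any mismatch. The 8-decimal scale, the byte encoding, and the function names are hypothetical. Quantizing after a floating-point computation only reduces cross-machine disagreement (two results can still straddle a rounding boundary), so a real system would likely compute in fixed point end to end.

```python
import hashlib

SCALE = 10**8  # hypothetical fixed-point precision: 8 decimal places


def to_fixed(x: float) -> int:
    """Quantize a float to a fixed-point integer."""
    return round(x * SCALE)


def commitment(block_height: int, greeks: dict[str, float]) -> str:
    """SHA-256 digest over the block height and fixed-point Greeks, in sorted key order."""
    h = hashlib.sha256()
    h.update(block_height.to_bytes(8, "big"))
    for name in sorted(greeks):
        encoded = name.encode()
        h.update(len(encoded).to_bytes(4, "big"))  # length prefix avoids ambiguous concatenation
        h.update(encoded)
        h.update(to_fixed(greeks[name]).to_bytes(16, "big", signed=True))
    return h.hexdigest()


def should_challenge(published: str, block_height: int, recomputed: dict[str, float]) -> bool:
    """Return True if the challenger's recomputation disagrees with the published digest."""
    return commitment(block_height, recomputed) != published
```

Binding the block height into the digest means a result computed against the wrong block is disputed just like a wrong number.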

Horizon

The future of Greeks Streaming Architecture is defined by the quest for verifiable, low-latency computation that can support cross-chain risk aggregation. We are moving toward a financial operating system where the risk of a position on one chain is instantly reflected in the margin requirements on another.

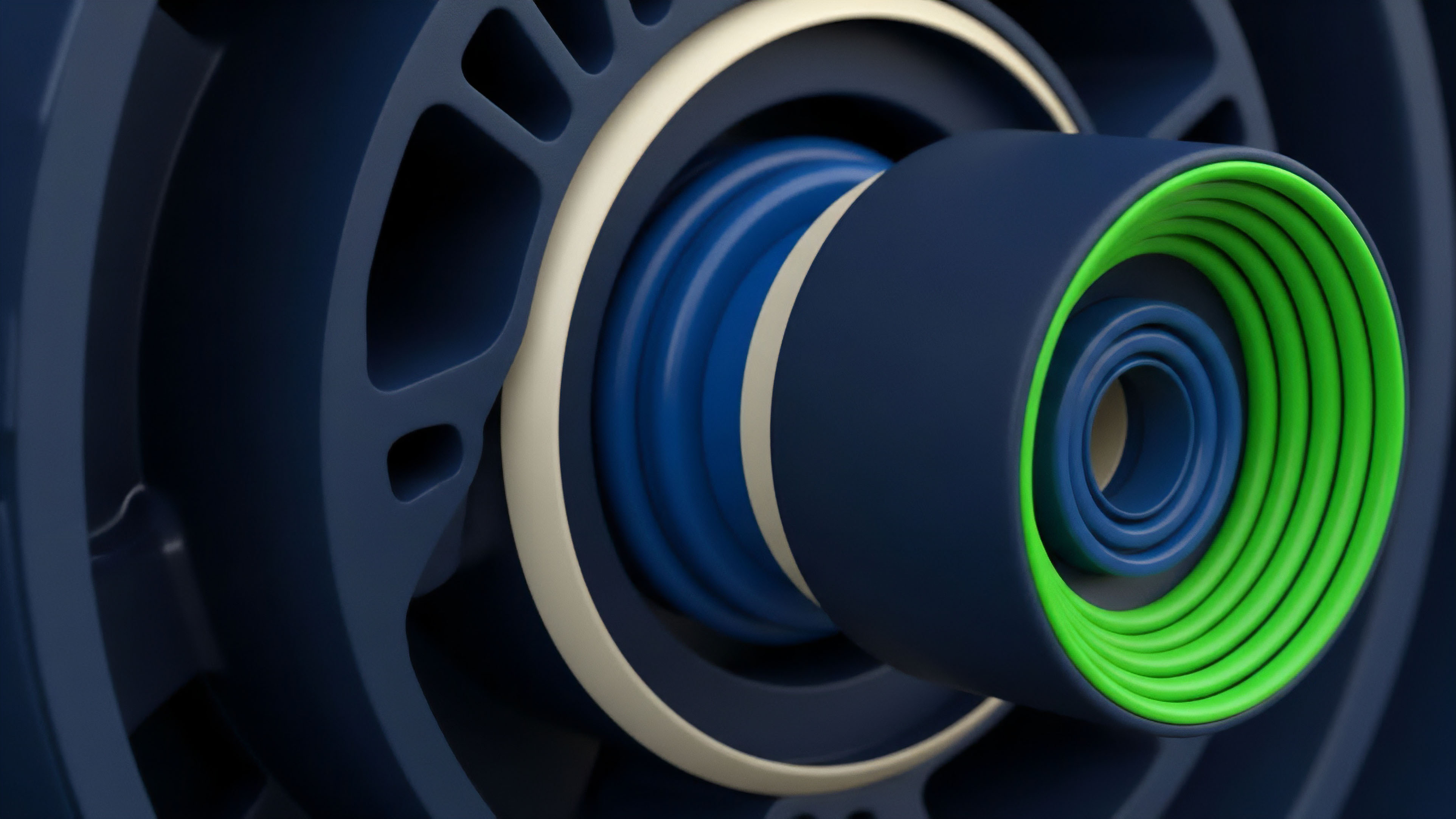

Zero-Knowledge Risk Proofs

The most promising development is the use of Zero-Knowledge (ZK) Proofs to verify the GSA calculation. This would allow a centralized entity, such as a market maker or a protocol, to calculate the entire suite of Greeks off-chain, generate a ZK-SNARK proving that the calculation was performed correctly according to the publicly auditable pricing model, and submit only the proof and the resulting Greeks on-chain. This preserves most of the computational speed of the centralized system (proof generation adds its own overhead) while achieving the trustless verification of a decentralized one.

Next-Generation GSA Components

The final form of GSA will look less like a single server and more like a distributed computational graph.

- Verifiable Volatility Oracles: Dedicated oracle networks that do not just report the underlying price, but report a verifiable, ZK-proven Implied Volatility Surface itself.

- Cross-Chain Margin Engines: Protocols that can accept a GSA stream from a remote chain to calculate a unified margin requirement for a user’s entire portfolio, regardless of asset location.

- Hardware Acceleration for Gamma: Specialized hardware (e.g., FPGAs) to calculate second-order Greeks such as Gamma and Vanna at microsecond latency, giving the hedging layer a decisive speed advantage over adversarial trading bots.
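The netting benefit behind a cross-chain margin engine can be shown with a small sketch: exposures to the same underlying on different chains are summed before a stressed-loss margin rule is applied, instead of being margined chain by chain. The delta-gamma rule, the 10% shock, and the names are hypothetical, and the sketch ignores the hard parts of the real problem: consistent pricing across chains, message latency, and bridge risk.

```python
from collections import defaultdict


def margin_requirement(dollar_delta: float, dollar_gamma: float, shock: float) -> float:
    """Delta-gamma stressed loss for a +/- `shock` fractional move (hypothetical rule).

    With dollar_delta = delta * spot and dollar_gamma = gamma * spot**2, the P&L
    for a fractional move x is dollar_delta * x + 0.5 * dollar_gamma * x**2.
    """
    losses = [-(dollar_delta * x + 0.5 * dollar_gamma * x * x) for x in (shock, -shock)]
    return max(0.0, *losses)


def unified_vs_siloed(exposures, shock: float = 0.10) -> tuple[float, float]:
    """exposures: iterable of (chain, dollar_delta, dollar_gamma) for one user and underlying."""
    per_chain = defaultdict(lambda: [0.0, 0.0])
    for chain, dd, dg in exposures:
        per_chain[chain][0] += dd
        per_chain[chain][1] += dg
    siloed = sum(margin_requirement(dd, dg, shock) for dd, dg in per_chain.values())
    total_dd = sum(v[0] for v in per_chain.values())
    total_dg = sum(v[1] for v in per_chain.values())
    return margin_requirement(total_dd, total_dg, shock), siloed
```

A user with a dollar Delta of +10,000 on one chain and -9,000 on another would post 1,900 under siloed margining but only 100 under the unified rule at a 10% shock.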

This technological trajectory suggests a future where the latency of risk calculation approaches the theoretical minimum, making the crypto options market fundamentally more resilient than its legacy counterparts. The systems architect’s goal is to remove the temporal gap between market event and risk reaction entirely, ensuring that the solvency of the protocol is never a function of computational lag.
