Essence

Quantitative trading methods in crypto options are the systematic application of mathematical models and algorithmic execution to generate alpha or manage risk in decentralized derivative markets. They replace discretionary judgment with statistical frameworks that use price data, order flow, and volatility surfaces to identify inefficiencies. The usual objective is to neutralize directional exposure while extracting value from mispriced volatility or from structural imbalances in automated market-making protocols.

Quantitative trading methods deploy mathematical models to automate the capture of statistical edges within decentralized derivative markets.

These systems decompose asset price movements into measurable variables, targeting in particular the non-linear relationship between the underlying spot price and the value of the derivative contract. Built on data-driven feedback loops, these strategies serve as mechanical infrastructure for price discovery, supplying liquidity across fragmented decentralized exchanges while staying within pre-defined risk parameters.

Origin

The roots of these methods lie in the adaptation of classical Black-Scholes pricing and delta-hedging frameworks to the unique technical constraints of blockchain-based settlement. Early participants recognized that the transparency of public ledgers allowed for unprecedented analysis of order flow, leading to the development of latency-sensitive arbitrage bots.

These initial iterations prioritized speed and basic price parity across exchanges.

Foundational models evolved from traditional derivative pricing theory to accommodate the unique latency and settlement constraints of blockchain environments.

As decentralized finance protocols matured, the focus shifted toward more complex strategies involving automated liquidity provisioning. The transition from simple arbitrage to sophisticated yield-generation models mirrors the historical progression of traditional finance, albeit accelerated by the programmable nature of smart contracts. Developers began integrating game-theoretic incentives into protocol design, ensuring that quantitative participants were compensated for maintaining market equilibrium.

Theory

The theoretical framework rests on the rigorous analysis of Volatility Skew and Term Structure, where mathematical models quantify the market’s expectation of future price action.

Participants calculate the Greeks (Delta, Gamma, Vega, and Theta) to measure sensitivity to underlying movements and time decay. These metrics allow traders to construct delta-neutral portfolios, isolating specific risk factors while hedging away unwanted directional exposure.

| Metric | Functional Role |
| --- | --- |
| Delta | Measures directional sensitivity |
| Gamma | Quantifies rate of change in delta |
| Vega | Assesses volatility exposure |
| Theta | Calculates time decay impact |
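As an illustration of how these sensitivities might be computed, the sketch below evaluates the four Greeks for a European call under the classical Black-Scholes model and sizes a spot hedge that brings net delta to zero. The function names (`call_greeks`, `hedge_quantity`), the constant-volatility assumption, and the default zero interest rate are illustrative choices, not something prescribed by any particular protocol.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def norm_pdf(x: float) -> float:
    """Standard normal probability density function."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def call_greeks(spot: float, strike: float, vol: float,
                t_years: float, rate: float = 0.0) -> dict:
    """Black-Scholes Greeks for a European call (assumed model).

    vol is annualized volatility as a decimal, t_years is time to expiry
    in years. Vega is per 1.00 change in vol, theta is per year.
    """
    sqrt_t = math.sqrt(t_years)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * t_years) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    delta = norm_cdf(d1)
    gamma = norm_pdf(d1) / (spot * vol * sqrt_t)
    vega = spot * norm_pdf(d1) * sqrt_t
    theta = (-spot * norm_pdf(d1) * vol / (2.0 * sqrt_t)
             - rate * strike * math.exp(-rate * t_years) * norm_cdf(d2))
    return {"delta": delta, "gamma": gamma, "vega": vega, "theta": theta}


def hedge_quantity(option_qty: float, delta: float) -> float:
    """Units of the underlying to hold so that net delta is zero."""
    return -option_qty * delta


# Hypothetical inputs: long 10 at-the-money calls, 30 days to expiry, 80% vol.
greeks = call_greeks(spot=2000.0, strike=2000.0, vol=0.8, t_years=30 / 365)
spot_hedge = hedge_quantity(option_qty=10.0, delta=greeks["delta"])
```

A negative `spot_hedge` means selling the underlying against the long calls. Because gamma is non-zero, the hedge would need to be recomputed as the spot price moves.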

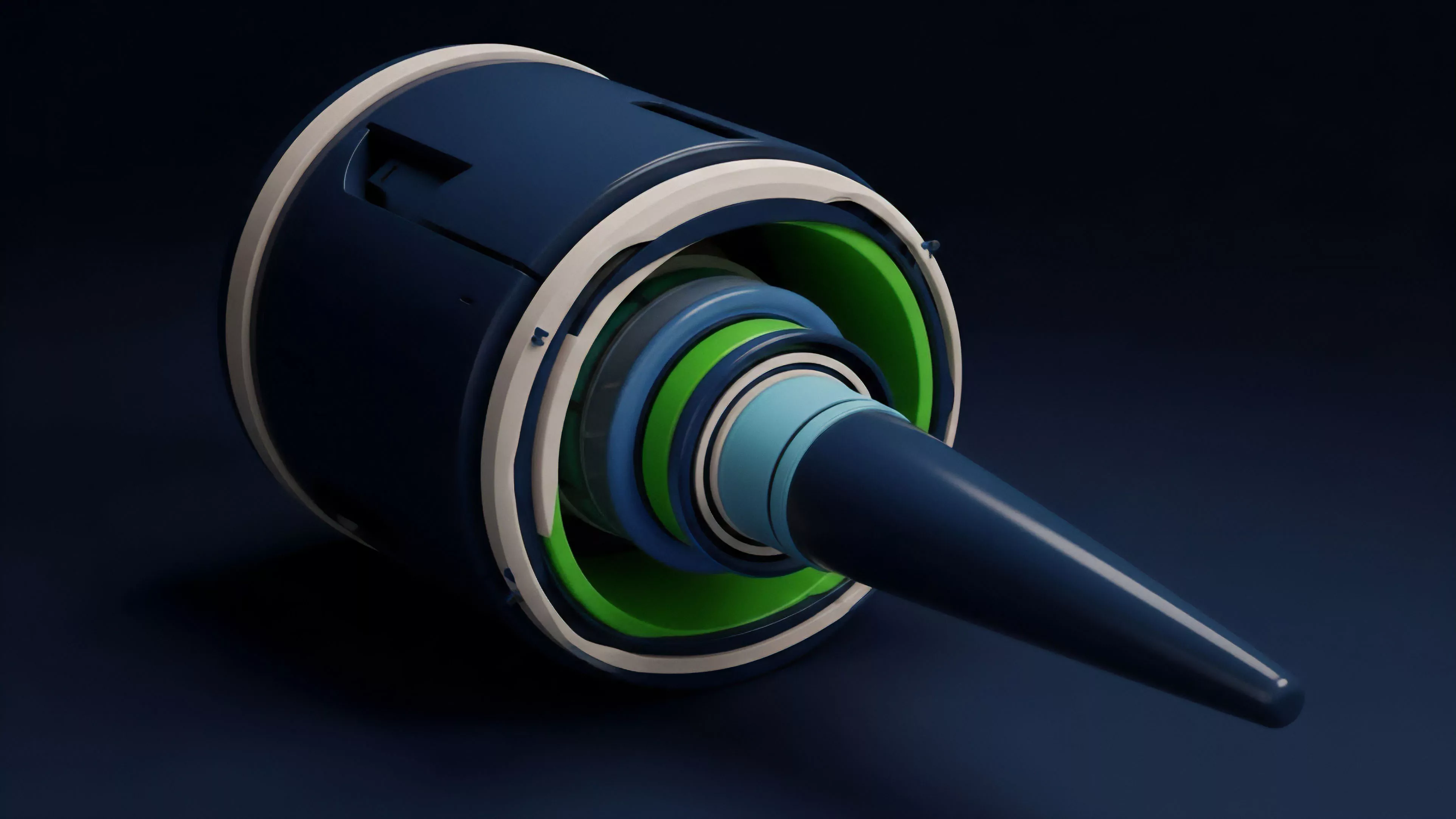

The architecture of these strategies requires a deep understanding of Protocol Physics, specifically how liquidation engines and collateral requirements impact market depth during periods of high volatility. In these adversarial environments, the primary challenge involves managing systemic risk, as the interconnection between liquidity providers and decentralized lending protocols creates pathways for rapid contagion.

- Automated Market Making functions by maintaining a constant-product invariant (x · y = k) or another pricing curve to facilitate continuous trade execution.

- Statistical Arbitrage exploits temporary price deviations between correlated assets or across multiple trading venues.

- Volatility Arbitrage captures the spread between implied volatility and realized volatility through delta-neutral positioning.
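The volatility arbitrage item above can be made concrete with a minimal sketch that annualizes realized volatility from a price series and compares it with a quoted implied volatility. The names `realized_vol` and `vol_spread`, the use of close-to-close log returns, and the 365-period annualization (crypto markets trade every day) are assumptions for illustration.

```python
import math
from typing import Sequence


def realized_vol(prices: Sequence[float], periods_per_year: int = 365) -> float:
    """Annualized realized volatility from close-to-close log returns."""
    log_returns = [math.log(b / a) for a, b in zip(prices, prices[1:])]
    n = len(log_returns)
    if n < 2:
        raise ValueError("need at least three prices")
    mean = sum(log_returns) / n
    variance = sum((r - mean) ** 2 for r in log_returns) / (n - 1)
    return math.sqrt(variance * periods_per_year)


def vol_spread(implied_vol: float, prices: Sequence[float],
               periods_per_year: int = 365) -> float:
    """Implied minus realized volatility.

    A positive spread suggests options are priced richer than recent
    realized movement; a negative spread suggests the opposite.
    """
    return implied_vol - realized_vol(prices, periods_per_year)


# Hypothetical daily closes and a hypothetical 60% implied volatility quote.
closes = [2000.0, 2040.0, 1990.0, 2015.0, 2060.0, 2030.0]
spread = vol_spread(implied_vol=0.60, prices=closes)
```

A spread like this is only a signal. Capturing it in practice requires holding the option position delta-neutral so that the profit and loss depends on volatility rather than direction.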

Market participants often grapple with the limitations of these models when confronted with black swan events. The mathematical elegance of a pricing formula collapses when liquidity vanishes from the order book, forcing a transition from theoretical modeling to survival-focused risk management.

Approach

Current implementations ingest high-frequency data from on-chain sources and off-chain order books to refine pricing engines in real time. Traders use specialized infrastructure to minimize execution latency so that their models stay synchronized with the rapid pace of decentralized markets.

This requires a synthesis of software engineering and financial engineering, where smart contract efficiency directly dictates the profitability of a strategy.

Systemic resilience requires the integration of real-time on-chain data with high-frequency execution engines to maintain competitive pricing.

Risk management remains the most critical component, involving the dynamic adjustment of collateral and the implementation of circuit breakers within the execution code. Participants must anticipate the second-order effects of their own trading activity, as large positions can trigger automated liquidations that exacerbate price volatility and impact the overall stability of the protocol.
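A circuit breaker of the kind described here could look roughly like the following sketch, which halts trading once drawdown from peak equity or absolute net delta exceeds a configured limit. The class name `CircuitBreaker`, its fields, and the latching behavior (it stays tripped until manually reset) are hypothetical design choices, not a reference to any specific trading system.

```python
from dataclasses import dataclass


@dataclass
class CircuitBreaker:
    """Latching risk check for an execution loop (illustrative only)."""
    max_drawdown: float      # e.g. 0.10 for a 10% drop from peak equity
    max_abs_delta: float     # largest tolerated absolute net delta
    peak_equity: float = 0.0
    tripped: bool = False

    def check(self, equity: float, net_delta: float) -> bool:
        """Update state and return True if trading may continue."""
        self.peak_equity = max(self.peak_equity, equity)
        if self.peak_equity > 0:
            drawdown = 1.0 - equity / self.peak_equity
        else:
            drawdown = 0.0
        if drawdown > self.max_drawdown or abs(net_delta) > self.max_abs_delta:
            self.tripped = True  # stays tripped until reset() is called
        return not self.tripped

    def reset(self) -> None:
        """Manually re-enable trading after review."""
        self.tripped = False


# Hypothetical limits: 10% drawdown, net delta within +/- 5 units.
breaker = CircuitBreaker(max_drawdown=0.10, max_abs_delta=5.0)
can_trade = breaker.check(equity=100_000.0, net_delta=1.2)
```

Latching is a deliberate choice in this sketch: once a limit is breached, a recovery in equity alone does not resume trading, which avoids the strategy re-entering during the kind of volatile conditions that caused the breach.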

Evolution

The transition from centralized exchange-based strategies to decentralized protocols has forced a re-evaluation of market structure. Earlier approaches relied on off-chain matching engines, whereas current systems utilize Automated Market Makers that rely on liquidity pools rather than order books.

This shift has democratized access to derivatives but increased the complexity of managing slippage and impermanent loss.
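Both costs can be quantified for the simplest case, a two-asset constant-product pool (x · y = k). The sketch below is a minimal illustration under that assumption; the function names and the 0.3% default fee are illustrative, and other pool designs (concentrated or weighted liquidity) follow different formulas.

```python
import math


def swap_output(reserve_in: float, reserve_out: float,
                amount_in: float, fee: float = 0.003) -> float:
    """Tokens received from a constant-product pool for amount_in.

    The fee is taken from the input amount (assumed 0.3% by default).
    """
    amount_in_after_fee = amount_in * (1.0 - fee)
    return reserve_out * amount_in_after_fee / (reserve_in + amount_in_after_fee)


def slippage(reserve_in: float, reserve_out: float,
             amount_in: float, fee: float = 0.0) -> float:
    """Fractional shortfall of the execution price versus the pool's spot price."""
    spot_price = reserve_out / reserve_in
    exec_price = swap_output(reserve_in, reserve_out, amount_in, fee) / amount_in
    return 1.0 - exec_price / spot_price


def impermanent_loss(price_ratio: float) -> float:
    """Value of a 50/50 constant-product LP position relative to holding.

    price_ratio is the new price divided by the price at deposit.
    The result is zero when the price is unchanged and negative otherwise
    (fees earned are ignored).
    """
    return 2.0 * math.sqrt(price_ratio) / (1.0 + price_ratio) - 1.0


# Hypothetical pool with 1,000 units on each side; trade 100 units in, no fee.
trade_slippage = slippage(1000.0, 1000.0, 100.0)  # about 9.1%
loss_if_price_quadruples = impermanent_loss(4.0)   # -0.2, i.e. 20% below holding
```

Slippage grows with trade size relative to pool reserves, while impermanent loss depends only on how far the price has moved from the deposit price, which is why the two are usually managed separately.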

- Order Flow Analysis has evolved from simple trade monitoring to complex mempool observation for front-running protection.

- Liquidity Provision now incorporates active management of price ranges to maximize fee accrual.

- Cross-Protocol Arbitrage requires sophisticated smart contract routing to capture spreads across disparate liquidity sources.
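Whether a cross-venue spread is worth routing depends on what remains after trading fees and transaction costs. A minimal sketch of that check follows, assuming a proportional fee charged on both legs and a fixed gas cost expressed in the quote currency; the function name and parameters are hypothetical.

```python
def arbitrage_profit(buy_price: float, sell_price: float, size: float,
                     fee_rate: float, gas_cost: float) -> float:
    """Net profit from buying on one venue and selling on another.

    fee_rate is a proportional fee applied to the notional of each leg;
    gas_cost is the total fixed transaction cost in the quote currency.
    Slippage and execution risk are ignored in this sketch.
    """
    gross = (sell_price - buy_price) * size
    fees = (buy_price + sell_price) * size * fee_rate
    return gross - fees - gas_cost


# Hypothetical quotes: buy at 100.0, sell at 101.0, 10 units, 0.1% fee, 2.0 gas.
net = arbitrage_profit(buy_price=100.0, sell_price=101.0, size=10.0,
                       fee_rate=0.001, gas_cost=2.0)
should_execute = net > 0
```

In this example a 1% gross spread survives costs, whereas a 0.1% spread with the same fees and gas would not, which is why routing logic typically evaluates net rather than gross spreads.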

Technological advancements in zero-knowledge proofs and layer-two scaling solutions are now enabling higher throughput for these strategies. This evolution allows for more complex derivative products, such as exotic options and interest rate swaps, to be implemented on-chain, effectively bridging the gap between traditional institutional instruments and decentralized capabilities.

Horizon

The trajectory points toward the full integration of autonomous agents capable of managing entire portfolio lifecycles without human intervention. These systems will likely utilize advanced machine learning to predict volatility regimes and adjust risk parameters dynamically, potentially outperforming traditional static models.

The primary challenge remains the development of robust oracle systems that provide reliable, tamper-proof data to these autonomous engines.

Future market architectures will prioritize autonomous portfolio management agents that dynamically adjust risk in response to evolving volatility regimes.

Regulatory frameworks will also play a decisive role, as jurisdictions begin to codify the status of decentralized derivatives. Successful strategies will require a modular design, allowing for rapid adaptation to changing compliance requirements without compromising the integrity of the underlying protocol. The ultimate goal is a resilient financial infrastructure that provides transparent, efficient, and permissionless access to sophisticated hedging tools for all participants.