Essence

Quantitative Pricing Models serve as the mathematical bedrock for valuing derivatives within decentralized financial environments. These frameworks translate abstract market expectations into actionable price discovery, converting volatility, time decay, and underlying asset dynamics into precise financial outputs. By distilling complex stochastic processes into computable values, these models provide the necessary infrastructure for participants to hedge risk and speculate with institutional rigor.

Quantitative pricing models function as the mathematical translation layer that converts market sentiment and volatility into standardized derivative values.

The core utility resides in establishing a fair value for options contracts, enabling efficient capital allocation. When market participants engage with decentralized exchanges, they rely on these computational structures to navigate the inherent opacity of blockchain-based liquidity. These models mitigate the information asymmetry that often plagues nascent markets, fostering an environment where price discovery operates on verifiable, algorithmic logic rather than speculative guesswork.

Origin

The lineage of these models traces back to classical financial theory, specifically the seminal Black-Scholes-Merton framework.

While originally designed for traditional equity markets, these foundations underwent significant modification to accommodate the unique properties of digital assets. The transition from centralized exchange environments to permissionless protocols necessitated a shift in how market makers approach risk, liquidity, and settlement.

- Black-Scholes foundations provide the initial mathematical architecture for calculating European-style option premiums based on underlying price and volatility.

- Binomial Option Pricing models offer a discrete-time approach, useful for handling American-style exercise features often found in complex DeFi structures.

- Stochastic Volatility Models incorporate the reality that volatility itself changes over time, a requirement for accurate crypto derivative pricing.
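To make the first two entries in the list above concrete, the following is a minimal sketch rather than a production pricing engine. It assumes a constant risk-free rate, constant volatility, and no dividends or funding costs. The function names (`black_scholes_price`, `binomial_price`) and the Cox-Ross-Rubinstein tree parameterization are illustrative choices, not taken from any particular protocol.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def black_scholes_price(spot, strike, rate, vol, expiry, kind="call"):
    """Closed-form price of a European option (no dividends, constant vol)."""
    sqrt_t = math.sqrt(expiry)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * expiry) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    discounted_strike = strike * math.exp(-rate * expiry)
    if kind == "call":
        return spot * norm_cdf(d1) - discounted_strike * norm_cdf(d2)
    return discounted_strike * norm_cdf(-d2) - spot * norm_cdf(-d1)


def binomial_price(spot, strike, rate, vol, expiry, steps=200,
                   kind="put", american=True):
    """Cox-Ross-Rubinstein tree; optionally checks early exercise at each node."""
    dt = expiry / steps
    up = math.exp(vol * math.sqrt(dt))
    down = 1.0 / up
    disc = math.exp(-rate * dt)
    p_up = (math.exp(rate * dt) - down) / (up - down)  # risk-neutral probability
    sign = 1.0 if kind == "call" else -1.0

    def payoff(step, ups):
        price = spot * up ** ups * down ** (step - ups)
        return max(sign * (price - strike), 0.0)

    # Option values at expiry, indexed by the number of up moves.
    values = [payoff(steps, j) for j in range(steps + 1)]
    # Roll back through the tree one time step at a time.
    for step in range(steps - 1, -1, -1):
        for j in range(step + 1):
            value = disc * (p_up * values[j + 1] + (1.0 - p_up) * values[j])
            if american:
                value = max(value, payoff(step, j))
            values[j] = value
    return values[0]


if __name__ == "__main__":
    # Hypothetical inputs: spot 2000, strike 2000, 3% rate, 80% vol, 3 months.
    print(black_scholes_price(2000, 2000, 0.03, 0.8, 0.25, "put"))
    print(binomial_price(2000, 2000, 0.03, 0.8, 0.25, kind="put", american=True))
```

With `american=False` the tree converges toward the closed-form value as `steps` grows, while the American put is worth at least as much as its European counterpart because early exercise is an added right.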

Early adoption within the space mirrored traditional finance, yet the rapid emergence of automated market makers and on-chain order books forced a departure from legacy assumptions. The necessity for real-time settlement and the avoidance of human-mediated clearinghouses drove the development of specialized, protocol-native pricing engines.

Theory

Mathematical modeling in this context requires managing high-frequency data streams and protocol-specific risks. The Greeks (delta, gamma, theta, vega, and rho) remain the primary tools for sensitivity analysis.

In a decentralized setting, these metrics must account for smart contract risk, liquidity fragmentation, and the absence of a central clearinghouse.

| Metric | Financial Function | Systemic Implication |
|---|---|---|
| Delta | Price sensitivity | Determines directional hedging requirements |
| Gamma | Delta acceleration | Quantifies the speed of necessary rebalancing |
| Theta | Time decay | Drives the profitability of option sellers |
| Vega | Volatility sensitivity | Measures exposure to market regime shifts |
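The sensitivities in the table can be computed in closed form under Black-Scholes assumptions. The sketch below does so for a European call, assuming constant rate and volatility and no dividends. The function name `call_greeks` is hypothetical, and the decentralized-specific adjustments discussed above (smart contract risk, liquidity fragmentation) are deliberately not modeled.

```python
import math


def norm_pdf(x: float) -> float:
    """Standard normal probability density function."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def call_greeks(spot, strike, rate, vol, expiry):
    """Black-Scholes Greeks for a European call (annualized units)."""
    sqrt_t = math.sqrt(expiry)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * expiry) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    discount = math.exp(-rate * expiry)
    return {
        # Change in option value per unit change in spot.
        "delta": norm_cdf(d1),
        # Change in delta per unit change in spot.
        "gamma": norm_pdf(d1) / (spot * vol * sqrt_t),
        # Change in value per year of elapsed time (negative for a long call).
        "theta": -spot * norm_pdf(d1) * vol / (2.0 * sqrt_t)
                 - rate * strike * discount * norm_cdf(d2),
        # Change in value per 1.00 (100 percentage points) change in volatility.
        "vega": spot * norm_pdf(d1) * sqrt_t,
        # Change in value per 1.00 change in the interest rate.
        "rho": strike * expiry * discount * norm_cdf(d2),
    }


if __name__ == "__main__":
    # Hypothetical inputs: at-the-money call, 80% vol, 3 months to expiry.
    for name, value in call_greeks(2000, 2000, 0.03, 0.8, 0.25).items():
        print(f"{name}: {value:.6f}")
```

Theta and vega are often quoted per day and per volatility point; dividing theta by 365 and vega by 100 gives those conventions.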

The accuracy of a pricing model depends entirely on its ability to internalize the specific risks associated with decentralized settlement and collateral management.

Beyond standard metrics, Local Volatility and Implied Volatility Surfaces provide deeper insight into market expectations. By observing the smile or skew in options pricing, participants infer the probability the market assigns to extreme tail events. The surface is elegant as a model output and dangerous to ignore: it acts as a diagnostic tool for systemic stress, revealing how participants price the risk of black swan events in a 24/7, high-leverage environment.
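One point on an implied volatility surface is obtained by inverting a pricing formula against a quoted premium. The sketch below uses bisection on the Black-Scholes call price, which works because the call price is increasing in volatility. The function names, the search bounds, and the quotes in the usage section are assumptions for illustration; the quotes are synthetic, generated from made-up volatilities rather than market data.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_call(spot, strike, rate, vol, expiry):
    """Black-Scholes price of a European call (no dividends)."""
    sqrt_t = math.sqrt(expiry)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * expiry) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    return spot * norm_cdf(d1) - strike * math.exp(-rate * expiry) * norm_cdf(d2)


def implied_vol(price, spot, strike, rate, expiry,
                lo=1e-4, hi=5.0, tol=1e-8, max_iter=200):
    """Solve bs_call(vol) == price for vol by bisection on [lo, hi]."""
    if not bs_call(spot, strike, rate, lo, expiry) <= price <= bs_call(spot, strike, rate, hi, expiry):
        raise ValueError("price is outside the range reachable on [lo, hi]")
    for _ in range(max_iter):
        mid = 0.5 * (lo + hi)
        if bs_call(spot, strike, rate, mid, expiry) > price:
            hi = mid  # model price too high, so volatility must be lower
        else:
            lo = mid
        if hi - lo < tol:
            break
    return 0.5 * (lo + hi)


if __name__ == "__main__":
    spot, rate, expiry = 2000.0, 0.03, 0.25
    # Synthetic skewed quotes: made-up volatilities per strike, not market data.
    assumed_vols = {1600.0: 0.95, 2000.0: 0.80, 2400.0: 0.85}
    for strike, vol in assumed_vols.items():
        quote = bs_call(spot, strike, rate, vol, expiry)
        recovered = implied_vol(quote, spot, strike, rate, expiry)
        print(f"strike {strike:.0f}: implied vol {recovered:.4f}")
```

Repeating the inversion across strikes and expiries yields the surface; higher implied volatility at low strikes than at the money is the skew the paragraph above refers to.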

Approach

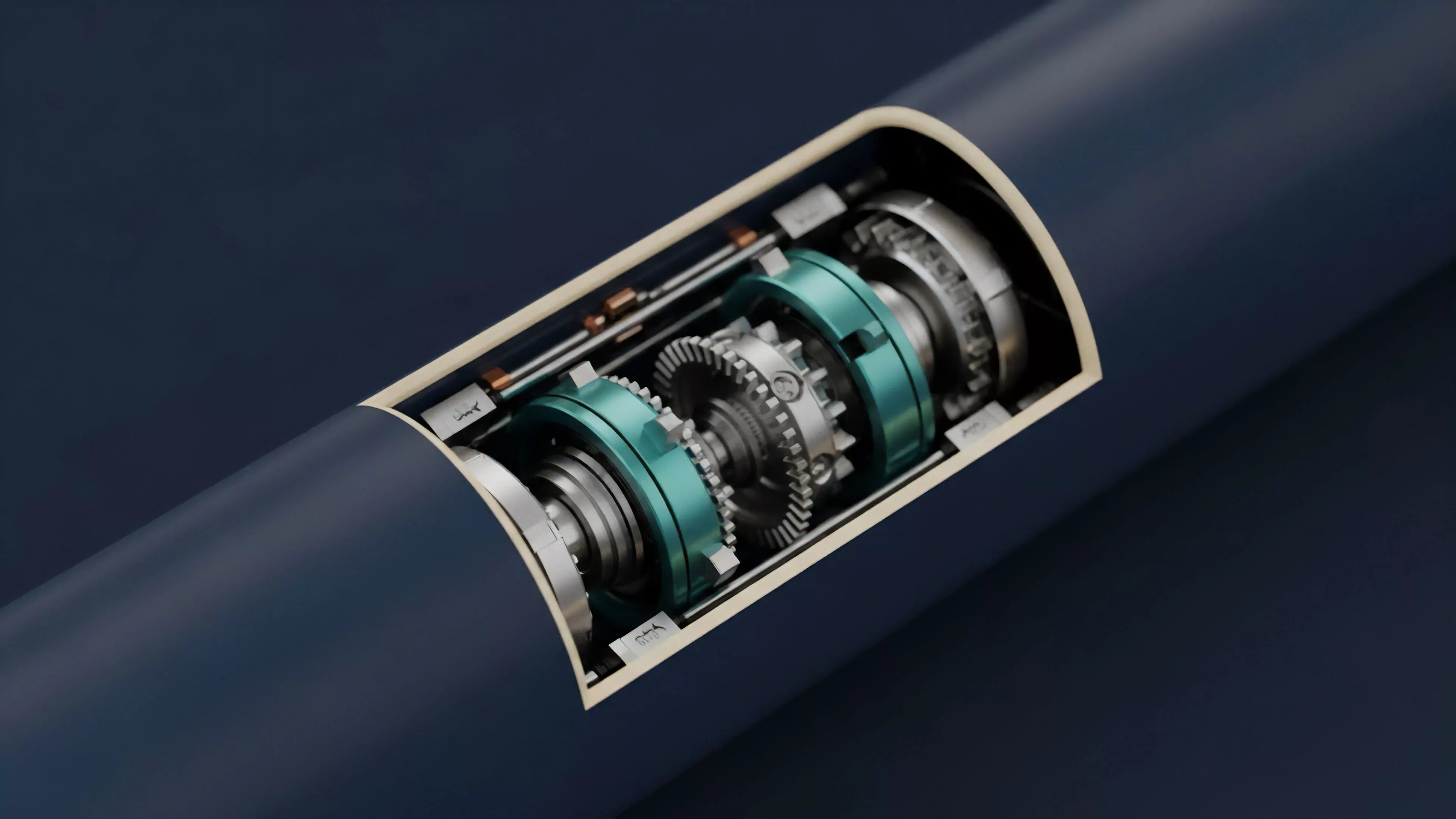

Current methodologies focus on integrating off-chain computational efficiency with on-chain settlement transparency.

Market makers now utilize Hybrid Architectures, where complex pricing calculations occur off-chain to minimize latency, while the resulting trade execution and collateral movement are recorded on the blockchain. This separation allows for high-frequency updates to the pricing model without congesting the underlying network.

- Automated Market Makers utilize constant function algorithms to ensure liquidity availability without traditional order books.

- Oracles provide the external data feeds necessary for pricing models to track underlying asset movements accurately.

- Liquidation Engines act as the final backstop, ensuring that pricing models remain anchored to reality through forced collateral adjustment.
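Two of the components listed above can be sketched in a few lines. The example assumes the constant-product rule (x · y = k), which is only one of several constant function designs, and a simple collateral-ratio test for liquidation. The function names, the 0.3% fee, and the 1.2 maintenance ratio are hypothetical parameters, not values from any specific protocol.

```python
def constant_product_swap(reserve_in: float, reserve_out: float,
                          amount_in: float, fee: float = 0.003) -> float:
    """Amount of the output asset received from an x * y = k pool.

    The fee is taken from the input amount before the invariant is applied.
    """
    effective_in = amount_in * (1.0 - fee)
    return reserve_out * effective_in / (reserve_in + effective_in)


def is_liquidatable(collateral_units: float, oracle_price: float,
                    debt_value: float, maintenance_ratio: float = 1.2) -> bool:
    """True when oracle-valued collateral falls below the required ratio of debt."""
    collateral_value = collateral_units * oracle_price
    return collateral_value < debt_value * maintenance_ratio


if __name__ == "__main__":
    # Hypothetical pool: 1,000 units of asset A against 2,000,000 units of asset B.
    received = constant_product_swap(1_000.0, 2_000_000.0, 10.0)
    print(f"received: {received:.2f}, average price: {received / 10.0:.2f}")

    # Hypothetical position: 1 unit of collateral backing 1,800 of debt.
    print(is_liquidatable(1.0, 2_000.0, 1_800.0))
```

The average execution price is worse than the pool's marginal price of 2,000 because the trade itself moves the reserves. The liquidation check depends entirely on the oracle price it is given, which is why feed accuracy matters to the whole stack.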

One might observe that the shift toward modular infrastructure reflects a broader trend in financial engineering, where the separation of execution from settlement mirrors the evolution of global banking systems during the late twentieth century. This structural evolution allows for more resilient protocol designs that survive even during periods of extreme market volatility or network congestion.

Evolution

The trajectory of these models has moved from simple replication of traditional instruments toward the creation of entirely new, protocol-native derivatives. The early reliance on centralized exchange data has been superseded by decentralized, multi-source price feeds.

This change reduces dependency on single points of failure, though it introduces new complexities regarding data latency and manipulation.

Evolution in derivative pricing models is characterized by the transition from static, legacy frameworks toward adaptive, protocol-aware systems.

Recent developments emphasize Cross-Chain Pricing, where derivative value must account for liquidity across multiple, often disconnected, blockchain environments. This requires models to incorporate not just asset price, but the cost and risk of bridging capital. The maturity of these models directly correlates with the ability of decentralized protocols to attract institutional liquidity, as sophisticated capital requires precise, transparent, and verifiable risk quantification.

Horizon

The future of quantitative pricing lies in the application of Machine Learning and Real-Time Analytics to optimize liquidity provision and risk management.

As protocols gain complexity, models will likely evolve to include endogenous risk factors, such as governance-driven changes to collateral requirements or protocol-level upgrades that alter economic parameters.

- Predictive Analytics will enable dynamic adjustment of margin requirements based on real-time volatility forecasting.

- Decentralized Clearinghouses will replace current ad-hoc collateral models, providing standardized risk assessment across the entire DeFi space.

- Protocol Physics integration will allow pricing models to account for network-level congestion and gas price fluctuations directly in the derivative premium.
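As a rough illustration of the first item in the list above, margin can be tied to a volatility forecast. The sketch below uses an exponentially weighted moving average (EWMA) of squared returns as the forecast, which is a simple stand-in for the more elaborate predictive models the text anticipates. The function names, the decay factor of 0.94, the 5% floor, and the multiplier of 3 are all assumptions chosen for illustration.

```python
import math
from typing import Sequence


def ewma_volatility(returns: Sequence[float], decay: float = 0.94) -> float:
    """Per-period volatility forecast from an EWMA of squared returns."""
    if not returns:
        raise ValueError("at least one return is required")
    variance = returns[0] ** 2
    for r in returns[1:]:
        variance = decay * variance + (1.0 - decay) * r * r
    return math.sqrt(variance)


def margin_requirement(notional: float, returns: Sequence[float],
                       base_rate: float = 0.05, vol_multiplier: float = 3.0,
                       decay: float = 0.94) -> float:
    """Margin as a fraction of notional: a floor, raised when forecast vol is high."""
    forecast = ewma_volatility(returns, decay)
    rate = max(base_rate, vol_multiplier * forecast)
    return notional * min(rate, 1.0)  # never require more than the full notional


if __name__ == "__main__":
    calm = [0.001] * 20                 # hypothetical quiet period
    stressed = [0.001] * 19 + [0.10]    # same history ending with a 10% move
    print(margin_requirement(1_000.0, calm))
    print(margin_requirement(1_000.0, stressed))
```

A single large move raises the requirement immediately and then decays out of the forecast over later periods, which is the dynamic behaviour the list item describes.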

The ultimate goal is a self-regulating market where pricing models automatically adjust to the systemic risk profile of the entire ecosystem. This transition marks the move from reactive financial engineering to proactive, system-wide stability, creating a landscape where decentralized derivatives provide the same level of utility as their traditional counterparts, but with superior transparency and accessibility.