Essence

Quantitative Finance Verification functions as the rigorous, algorithmic audit layer for derivative pricing models and risk parameters within decentralized venues. It addresses the systemic requirement to validate that mathematical assumptions ⎊ such as implied volatility surfaces, Greeks, and collateralization ratios ⎊ align with actual on-chain market behavior. This process ensures that smart contracts governing options and structured products execute according to established financial engineering standards, rather than relying on unverified off-chain inputs or flawed heuristic assumptions.

Quantitative Finance Verification acts as the mathematical bridge between theoretical pricing models and the reality of decentralized settlement.

The core utility resides in its ability to enforce consistency across automated margin engines. By verifying the integrity of the data feeds and the mathematical correctness of the liquidation logic, it minimizes the probability of protocol-wide insolvency events caused by mispriced assets or latency in price discovery mechanisms. This verification framework moves beyond simple code audits, targeting the underlying financial logic that governs the lifecycle of complex derivatives.

Origin

The necessity for Quantitative Finance Verification emerged from the systemic failures observed in early decentralized derivative protocols.

These platforms often imported traditional finance pricing models without adjusting for the distinct microstructure of crypto assets, such as non-linear liquidation penalties, fragmented liquidity, and high-frequency volatility clusters. Initial iterations of decentralized options relied on optimistic assumptions that broke under high market stress, leading to cascading liquidations and severe capital erosion.

- Financial Discontinuity: Black-Scholes assumes continuous price paths, so direct implementations failed to account for the discontinuous price jumps common in digital asset markets.

- Model Incompatibility: Standard pricing frameworks lacked mechanisms to process the specific risk-weighted collateral requirements inherent in trustless environments.

- Latency Exploitation: Early protocols were susceptible to oracle manipulation, where discrepancies between internal model prices and external spot prices created arbitrage opportunities that drained liquidity pools.

As decentralized finance matured, the focus shifted from simple smart contract security to the validation of economic models. Developers recognized that code correctness did not guarantee financial stability. This realization catalyzed the development of specialized verification frameworks designed to stress-test derivative protocols against extreme market conditions, ensuring that margin requirements remain sufficient even during sharp volatility spikes.

Theory

The theoretical framework of Quantitative Finance Verification rests on the intersection of stochastic calculus, game theory, and distributed systems architecture.

It assumes that market participants are rational actors operating within an adversarial environment where information asymmetry is the primary source of alpha. Verification protocols must therefore treat every pricing parameter as a potential attack vector, subjecting them to continuous, real-time validation against objective market data.

Mathematical Modeling

Pricing models must be subjected to formal verification to ensure they do not produce irrational outcomes, such as negative option prices or prices that admit static arbitrage. This involves checking the consistency of Greeks ⎊ specifically Delta, Gamma, and Vega ⎊ to ensure they accurately reflect the sensitivity of the derivative’s value to changes in the underlying asset price and volatility. Any deviation between the model output and the realized market sensitivity signals a model failure that the verification layer must flag.
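One way to make the Greek consistency check concrete is to compare the Greeks a pricing model reports against finite-difference estimates taken from the same price function. The sketch below assumes a plain Black-Scholes European call purely for illustration; the function names, bump sizes, and tolerance are hypothetical choices, not part of any specific protocol.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def norm_pdf(x: float) -> float:
    """Standard normal probability density function."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def _d1(s: float, k: float, t: float, r: float, sigma: float) -> float:
    return (math.log(s / k) + (r + 0.5 * sigma ** 2) * t) / (sigma * math.sqrt(t))


def bs_call(s: float, k: float, t: float, r: float, sigma: float) -> float:
    """Black-Scholes price of a European call (illustrative model only)."""
    d1 = _d1(s, k, t, r, sigma)
    d2 = d1 - sigma * math.sqrt(t)
    return s * norm_cdf(d1) - k * math.exp(-r * t) * norm_cdf(d2)


def analytic_greeks(s: float, k: float, t: float, r: float, sigma: float) -> dict:
    """Closed-form Delta, Gamma, and Vega of the call."""
    d1 = _d1(s, k, t, r, sigma)
    return {
        "delta": norm_cdf(d1),
        "gamma": norm_pdf(d1) / (s * sigma * math.sqrt(t)),
        "vega": s * norm_pdf(d1) * math.sqrt(t),
    }


def finite_difference_greeks(s, k, t, r, sigma, rel_bump=1e-3, vol_bump=1e-4) -> dict:
    """Central-difference estimates of the same Greeks from the price function."""
    ds = s * rel_bump
    up = bs_call(s + ds, k, t, r, sigma)
    mid = bs_call(s, k, t, r, sigma)
    down = bs_call(s - ds, k, t, r, sigma)
    vol_up = bs_call(s, k, t, r, sigma + vol_bump)
    vol_down = bs_call(s, k, t, r, sigma - vol_bump)
    return {
        "delta": (up - down) / (2 * ds),
        "gamma": (up - 2 * mid + down) / ds ** 2,
        "vega": (vol_up - vol_down) / (2 * vol_bump),
    }


def greeks_consistent(reported: dict, s, k, t, r, sigma, rel_tol=1e-3) -> bool:
    """True if every reported Greek matches its finite-difference estimate."""
    reference = finite_difference_greeks(s, k, t, r, sigma)
    return all(
        math.isclose(reported[name], reference[name], rel_tol=rel_tol, abs_tol=1e-8)
        for name in reference
    )
```

A verifier built along these lines would reject a reported Delta that has drifted from the price function, while accepting the closed-form values. Comparing against realized market sensitivity, as the text describes, would additionally need observed price data, which this sketch does not model.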

Adversarial Feedback Loops

The system architecture incorporates adversarial agents that continuously test the protocol’s liquidity and solvency limits. These agents attempt to force the system into states where the collateralization ratio falls below critical thresholds, thereby identifying vulnerabilities in the margin engine before they can be exploited by malicious actors.
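A very reduced version of such an adversarial agent is a search over price shocks that reports the first shock at which any position falls below the minimum collateralization ratio. The `Position` type, the basis-point shock grid, and the threshold below are assumptions made for this sketch; a real agent would also model liquidity, slippage, and liquidation mechanics.

```python
from dataclasses import dataclass
from typing import List, Optional, Sequence, Tuple


@dataclass(frozen=True)
class Position:
    """Hypothetical position: collateral held in the risky asset, debt in a stable unit."""
    collateral_units: float
    debt: float  # must be positive


def collateral_ratio(position: Position, price: float) -> float:
    """Collateral value divided by debt at the given asset price."""
    return position.collateral_units * price / position.debt


def first_breach(
    positions: Sequence[Position],
    spot: float,
    min_ratio: float,
    max_shock_bps: int = 9000,
    step_bps: int = 100,
) -> Optional[Tuple[int, List[int]]]:
    """Scan downward price shocks in basis points, smallest first.

    Returns (shock_bps, indices of breached positions) for the first shock
    that pushes any position below min_ratio, or None if no scanned shock does.
    """
    for shock_bps in range(0, max_shock_bps + 1, step_bps):
        price = spot * (10_000 - shock_bps) / 10_000
        breached = [
            i for i, p in enumerate(positions)
            if collateral_ratio(p, price) < min_ratio
        ]
        if breached:
            return shock_bps, breached
    return None
```

The returned shock size gives a rough measure of how much headroom the margin engine has before the weakest position becomes liquidatable.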

| Parameter | Verification Metric | Systemic Risk Impact |
| --- | --- | --- |
| Implied Volatility | Surface Consistency | High |
| Delta Neutrality | Hedge Accuracy | Moderate |
| Liquidation Threshold | Collateral Adequacy | Critical |
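As an illustration of the surface consistency metric in the table, the sketch below checks one expiry slice of call prices for two standard static-arbitrage conditions: prices must not increase with strike, and they must be convex in strike. The function name and tolerance are hypothetical, and a full surface check would also cover calendar arbitrage across expiries, which is omitted here.

```python
from typing import Sequence


def slice_is_arbitrage_free(
    strikes: Sequence[float],
    call_prices: Sequence[float],
    tol: float = 1e-9,
) -> bool:
    """Check monotonicity and convexity of call prices across strikes.

    strikes must be strictly increasing and aligned with call_prices.
    """
    n = len(strikes)
    if n != len(call_prices) or n < 3:
        raise ValueError("need at least three aligned strikes and prices")
    for i in range(n - 1):
        if strikes[i + 1] <= strikes[i]:
            raise ValueError("strikes must be strictly increasing")
        # A call with a higher strike can never be worth more.
        if call_prices[i + 1] > call_prices[i] + tol:
            return False
    for i in range(1, n - 1):
        left_slope = (call_prices[i] - call_prices[i - 1]) / (strikes[i] - strikes[i - 1])
        right_slope = (call_prices[i + 1] - call_prices[i]) / (strikes[i + 1] - strikes[i])
        # Convexity: slopes must be non-decreasing (no butterfly arbitrage).
        if right_slope < left_slope - tol:
            return False
    return True
```

In practice the call prices would be derived from the implied volatility surface under verification; a slice that fails either test implies quotes that could be arbitraged with a vertical or butterfly spread.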

Quantitative Finance Verification employs adversarial modeling to stress-test the structural integrity of margin engines against extreme market anomalies.

Approach

Current practices involve the integration of decentralized oracle networks and on-chain computational engines that perform real-time verification of derivative contract states. This approach requires that every transaction ⎊ whether opening a position, adjusting a hedge, or triggering a liquidation ⎊ undergoes a verification step where the protocol calculates the expected value based on current market inputs and compares it against the requested action.
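The per-transaction verification step can be pictured as a gate that compares the requested price against the model's expected value and rejects requests that deviate too far. The sketch below is a minimal off-chain illustration; `TradeRequest`, `verify_request`, and the 2% deviation limit are assumptions made for the example, not an actual protocol interface.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TradeRequest:
    """Hypothetical request to trade a derivative at a stated price."""
    quantity: float      # number of contracts, positive for a buy
    limit_price: float   # price per contract requested by the user


def verify_request(
    request: TradeRequest,
    model_price: float,
    max_deviation: float = 0.02,
) -> bool:
    """Accept only if the requested price is within max_deviation of the model price.

    model_price is the expected value computed from current market inputs;
    max_deviation is a fraction of that price (0.02 means 2%).
    """
    if model_price <= 0:
        raise ValueError("model price must be positive")
    deviation = abs(request.limit_price - model_price) / model_price
    return deviation <= max_deviation
```

The same pattern extends to hedge adjustments and liquidations by swapping the compared quantity, for example a requested liquidation price against the verified mark price.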

- Automated Margin Auditing: Real-time calculation of portfolio risk, ensuring that the Value at Risk remains within protocol-defined boundaries at all times.

- Oracle Integrity Validation: Cross-referencing multiple decentralized price sources to prevent oracle manipulation from impacting the derivative’s settlement value.

- Stress Testing: Simulating high-volatility scenarios to verify that the protocol’s liquidation mechanisms are capable of maintaining solvency during rapid price declines.
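The oracle cross-referencing item in the list above could be sketched as a median aggregation with outlier rejection. The source names, the 1% deviation limit, and the three-source quorum are hypothetical parameters chosen for illustration.

```python
from statistics import median
from typing import Mapping


def aggregate_price(
    quotes: Mapping[str, float],
    max_deviation: float = 0.01,
    min_sources: int = 3,
) -> float:
    """Return a settlement price from several independent oracle quotes.

    Quotes further than max_deviation (as a fraction) from the median are
    discarded. A ValueError is raised if fewer than min_sources remain.
    """
    if len(quotes) < min_sources:
        raise ValueError("not enough price sources")
    mid = median(quotes.values())
    accepted = [p for p in quotes.values() if abs(p - mid) / mid <= max_deviation]
    if len(accepted) < min_sources:
        raise ValueError("price sources disagree beyond tolerance")
    return median(accepted)
```

With four quotes where one source reports a price far from the others, the outlier is dropped and the median of the remaining three is used. If too many sources disagree, the function refuses to produce a price rather than settle on a suspect value.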

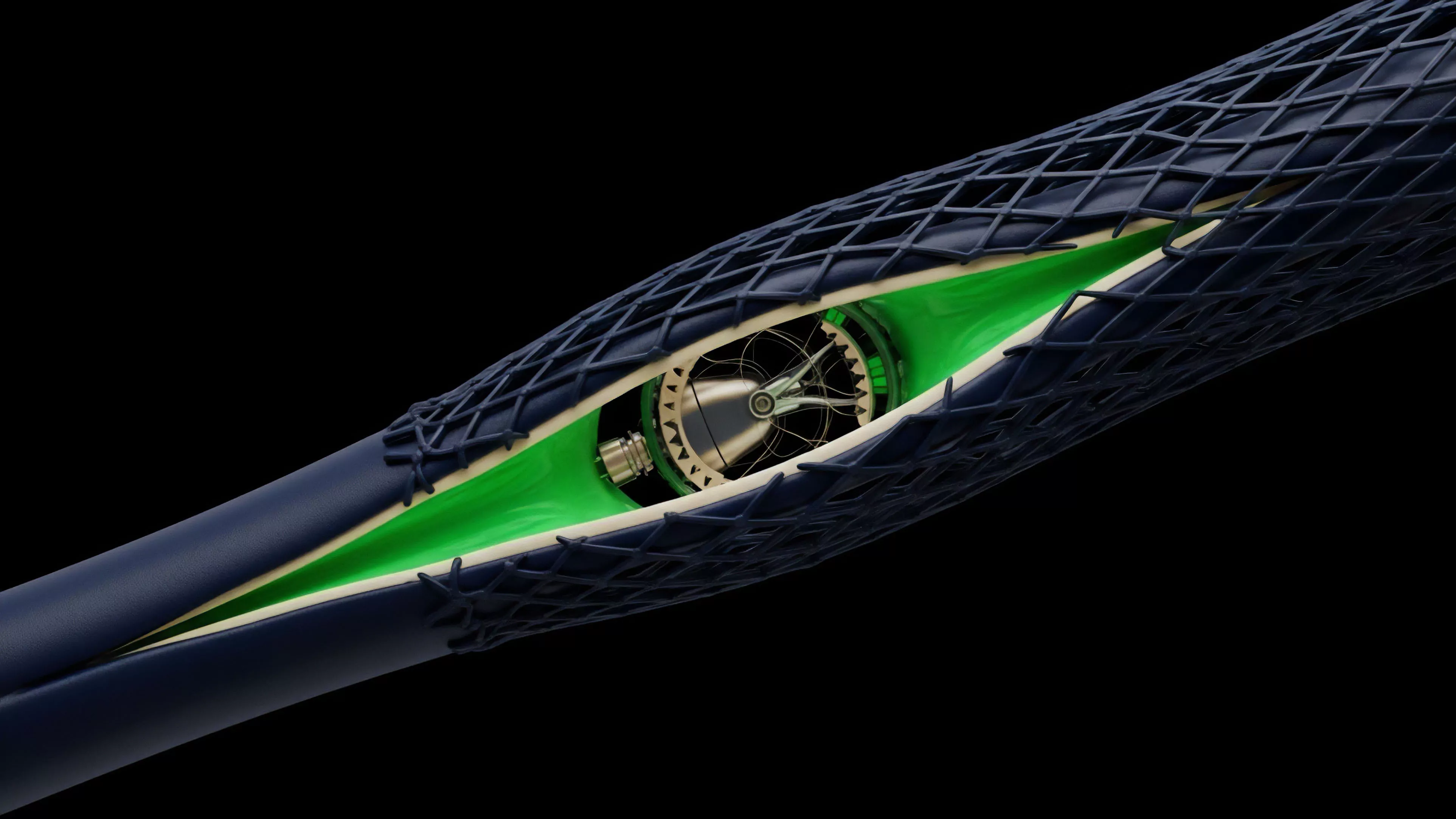

The shift toward modular verification architectures allows protocols to swap pricing engines as market conditions change, providing greater flexibility without compromising security. This methodology acknowledges that market microstructure is not static; it evolves as participants adjust their strategies to new incentive structures. Consequently, the verification process must be adaptive, incorporating new data points to refine its risk assessment algorithms.
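A modular arrangement of this kind can be sketched as a small interface that every pricing engine implements, with a swap that is only allowed when the candidate engine passes basic pricing invariants. Everything named below (`PricingEngine`, `VerifiedPricer`, the toy engines) is hypothetical, and the lower bound used assumes non-negative rates and no dividends.

```python
from typing import Protocol, Sequence


class PricingEngine(Protocol):
    """Hypothetical interface: any engine able to price a European call."""

    def call_price(self, spot: float, strike: float) -> float: ...


class IntrinsicValueEngine:
    """Toy engine that prices a call at intrinsic value only."""

    def call_price(self, spot: float, strike: float) -> float:
        return max(spot - strike, 0.0)


class BrokenEngine:
    """Deliberately faulty engine, used to show a rejected swap."""

    def call_price(self, spot: float, strike: float) -> float:
        return spot - strike  # goes negative when strike > spot


def passes_invariants(engine: PricingEngine, spot: float, strikes: Sequence[float]) -> bool:
    """A call must be worth at least intrinsic value and at most the spot price."""
    for strike in strikes:
        price = engine.call_price(spot, strike)
        if price < max(spot - strike, 0.0) or price > spot:
            return False
    return True


class VerifiedPricer:
    """Holds the active engine and only swaps in candidates that pass the checks."""

    def __init__(self, engine: PricingEngine) -> None:
        self._engine = engine

    def swap_engine(self, candidate: PricingEngine, spot: float, strikes: Sequence[float]) -> None:
        if not passes_invariants(candidate, spot, strikes):
            raise ValueError("candidate engine violates pricing bounds")
        self._engine = candidate

    def call_price(self, spot: float, strike: float) -> float:
        return self._engine.call_price(spot, strike)
```

Keeping the invariant check separate from the engines means the verification logic stays fixed while pricing models are replaced around it.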

Evolution

The progression of Quantitative Finance Verification mirrors the broader development of sophisticated financial infrastructure within decentralized markets.

Early versions were limited to basic checks on collateral levels, but the current state involves comprehensive, multi-dimensional validation of entire portfolios. This transformation was driven by the introduction of cross-margining and portfolio-based risk management, which significantly increased the complexity of the underlying financial models. One might consider how the shift from single-asset collateral to multi-asset pools mimics the transition from primitive bartering systems to modern, ledger-based credit economies.

This progression necessitates a more robust verification layer, as the interconnectedness of assets introduces new contagion risks that were previously absent.

The evolution of Quantitative Finance Verification reflects the transition from simplistic collateral checks to sophisticated, multi-dimensional risk assessment frameworks.

Future iterations will likely use zero-knowledge proofs so that complex pricing calculations can run off-chain while their correctness is verified on-chain, maintaining the transparency and trustlessness of on-chain settlement. This would allow protocols to handle significantly higher volumes of derivative trades without incurring the high gas costs associated with fully on-chain computation. The focus is shifting toward scalability and the reduction of latency, ensuring that verification can keep pace with the high-frequency nature of modern crypto markets.

Horizon

The future of Quantitative Finance Verification lies in the development of autonomous, self-healing risk management systems. These systems will not only verify the accuracy of pricing models but will also automatically adjust risk parameters, such as margin requirements and liquidation penalties, in response to shifting market conditions. This creates a closed-loop system where the protocol actively manages its own systemic risk, reducing the need for human intervention or centralized governance.

Further, the integration of machine learning into the verification layer will allow for the detection of anomalous trading patterns that may indicate impending market manipulation or liquidity crunches. By identifying these patterns before they manifest as systemic failures, protocols can preemptively tighten risk constraints, thereby enhancing the overall stability of the decentralized financial landscape.

The ultimate objective is to create financial instruments that are mathematically provable and inherently resilient, regardless of the underlying market environment.
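The closed-loop idea of automatically adjusting risk parameters can be illustrated with a simple rule that scales the margin requirement with realized volatility and clamps it between a floor and a cap. This is a sketch under stated assumptions: the scaling rule, the parameter values, and the daily annualization factor are all hypothetical, and no machine learning component is modeled.

```python
import math
from typing import Sequence


def realized_volatility(prices: Sequence[float], periods_per_year: int = 365) -> float:
    """Annualized sample standard deviation of log returns."""
    if len(prices) < 3:
        raise ValueError("need at least three prices")
    returns = [math.log(b / a) for a, b in zip(prices, prices[1:])]
    mean = sum(returns) / len(returns)
    variance = sum((r - mean) ** 2 for r in returns) / (len(returns) - 1)
    return math.sqrt(variance * periods_per_year)


def adjusted_margin(
    prices: Sequence[float],
    base_margin: float = 0.10,
    target_vol: float = 0.60,
    floor: float = 0.05,
    cap: float = 0.50,
) -> float:
    """Scale the margin requirement in proportion to realized volatility.

    At target_vol the requirement equals base_margin; the result is always
    clamped to the range [floor, cap].
    """
    raw = base_margin * realized_volatility(prices) / target_vol
    return min(cap, max(floor, raw))
```

A quiet price series leaves the requirement at the floor, while a turbulent one drives it to the cap. Where governance sets the floor and cap, the protocol adjusts itself only within bounds that humans have approved.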