Essence

Quantitative analysis techniques in crypto options apply mathematical modeling and statistical methods to the pricing, risk management, and strategic deployment of decentralized financial instruments. These methods convert the noisy, stochastic data of blockchain order books and decentralized liquidity pools into probabilistic frameworks that can be acted on. By prioritizing computational precision over heuristic intuition, they allow participants to map the interdependencies between underlying asset volatility, time decay, and protocol-specific mechanics.

Quantitative analysis transforms decentralized market uncertainty into structured probabilistic exposure through mathematical modeling.

The primary objective is the systematic decomposition of derivative payoffs into measurable components. Practitioners use these models to establish theoretical fair value, calibrate delta-neutral strategies, and quantify tail-risk exposure within adversarial environments. Because settlement relies on deterministic code execution, these frameworks must account for the discrete nature of smart contract interactions and the latency profiles of different blockchain consensus mechanisms.

Origin

The genesis of these techniques draws directly from classical derivatives theory, adapted for the high-frequency, non-custodial landscape of digital assets.

Early implementations sought to replicate the Black-Scholes-Merton paradigm within automated market makers and decentralized exchanges. This transition required adjusting traditional assumptions regarding continuous trading, as blockchain finality introduces distinct temporal constraints and gas-cost friction into the pricing of options.

- Black-Scholes-Merton provided the foundational differential equations for pricing European-style options.

- Market Microstructure research introduced the necessity of modeling order flow and liquidity provision mechanisms.

- Protocol Physics emerged from the need to model how on-chain liquidation thresholds affect derivative pricing.
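
The Black-Scholes-Merton starting point named in the list above can be sketched in a few lines. This is a minimal illustration of the classical closed-form price for a European option on a non-dividend-paying asset, under the continuous-trading assumptions that on-chain implementations have to relax. The function names `norm_cdf` and `bsm_price` are hypothetical and are not taken from any particular protocol.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bsm_price(spot: float, strike: float, rate: float, vol: float,
              t: float, kind: str = "call") -> float:
    """Classical BSM price of a European option (no dividends).

    vol and rate are annualized; t is time to expiry in years.
    """
    if t <= 0 or vol <= 0:
        # Degenerate case: fall back to intrinsic value.
        intrinsic = spot - strike if kind == "call" else strike - spot
        return max(intrinsic, 0.0)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * t) / (vol * math.sqrt(t))
    d2 = d1 - vol * math.sqrt(t)
    if kind == "call":
        return spot * norm_cdf(d1) - strike * math.exp(-rate * t) * norm_cdf(d2)
    return strike * math.exp(-rate * t) * norm_cdf(-d2) - spot * norm_cdf(-d1)
```

A quick sanity check is put-call parity: for the same inputs, `bsm_price(..., "call") - bsm_price(..., "put")` should equal `spot - strike * exp(-rate * t)`.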

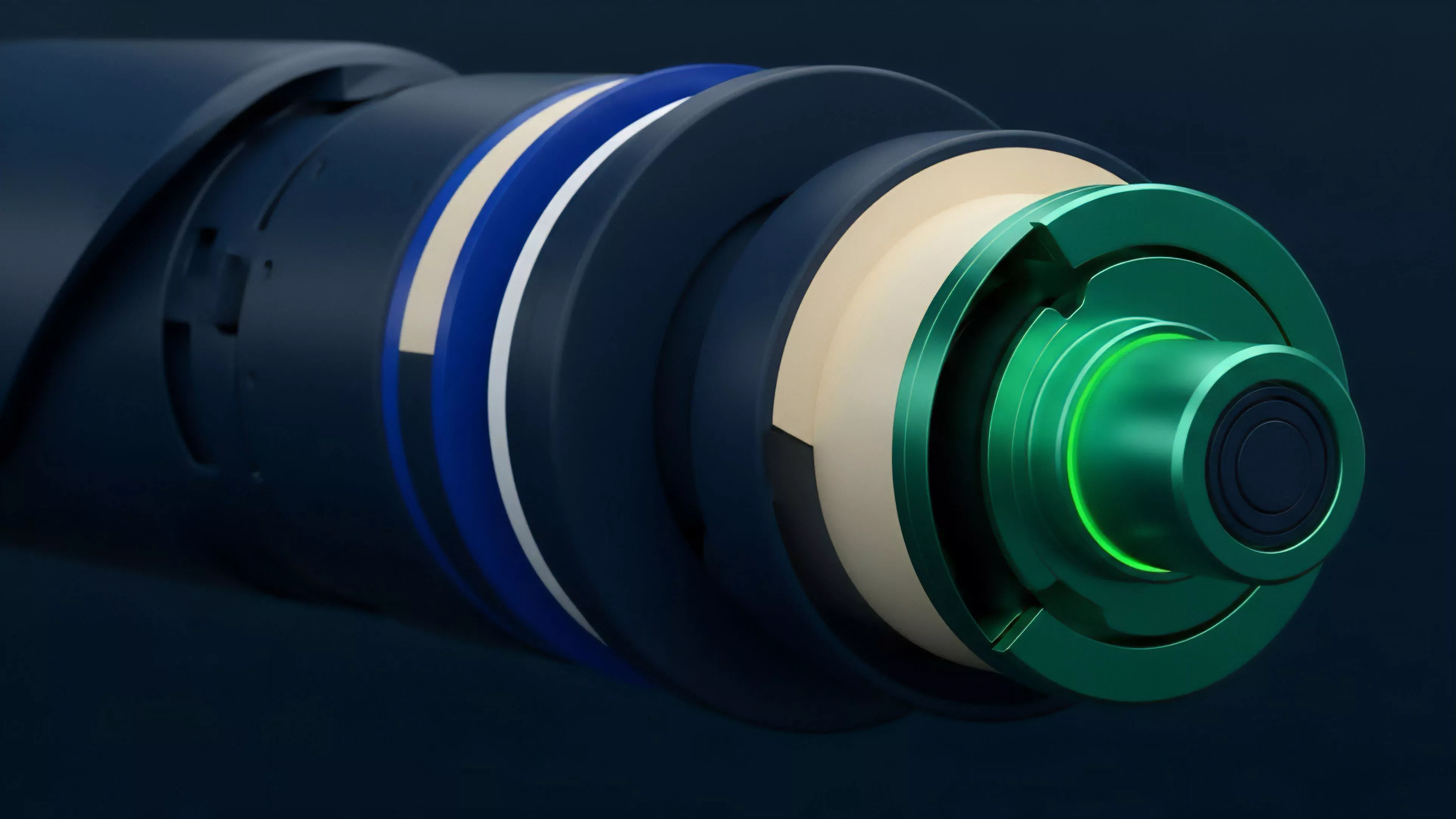

These methods matured as developers integrated off-chain oracle data with on-chain execution logic. The move away from centralized clearinghouses toward trustless, protocol-governed margin engines forced a redesign of risk sensitivity analysis. Current models incorporate these architectural realities, ensuring that the mathematical representation of an option reflects the actual constraints of the underlying decentralized protocol.

Theory

The theoretical framework rests on the rigorous calculation of Greeks (delta, gamma, theta, vega, and rho) to isolate specific dimensions of market risk.

In decentralized markets, these sensitivities must incorporate the distinctive volatility signatures of digital assets, whose return distributions frequently exhibit higher kurtosis and fatter tails than those of traditional equities.
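
As a rough illustration of how tail heaviness can be measured, the sketch below computes the sample excess kurtosis of log returns. A normal distribution has excess kurtosis of zero, and positive values indicate fatter tails. The helper names are hypothetical, and the population (biased) moment estimator is used for simplicity.

```python
import math


def log_returns(prices):
    """Log returns between consecutive prices."""
    return [math.log(b / a) for a, b in zip(prices, prices[1:])]


def excess_kurtosis(xs):
    """Sample excess kurtosis using population moments: m4 / m2^2 - 3."""
    n = len(xs)
    mean = sum(xs) / n
    m2 = sum((x - mean) ** 2 for x in xs) / n
    m4 = sum((x - mean) ** 4 for x in xs) / n
    return m4 / m2 ** 2 - 3.0
```

Applied to a price series, `excess_kurtosis(log_returns(prices))` gives a single number that can be tracked over time or compared across assets.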

| Metric | Systemic Focus | Derivative Impact |
|---|---|---|
| Delta | Directional Exposure | Hedge ratio calibration |
| Gamma | Convexity Risk | Rate of change for delta |
| Vega | Volatility Sensitivity | Implied volatility surface shifts |
| Theta | Time Decay | Option value erosion |
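
For a concrete reference point, the sensitivities in the table have closed forms under the classical Black-Scholes-Merton model. The sketch below computes them for a European call on a non-dividend-paying asset. It is a textbook baseline rather than a protocol-specific implementation, and the function name `bsm_call_greeks` is hypothetical.

```python
import math


def _pdf(x: float) -> float:
    """Standard normal density."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def _cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bsm_call_greeks(spot: float, strike: float, rate: float,
                    vol: float, t: float) -> dict:
    """Closed-form BSM Greeks for a European call (requires t > 0, vol > 0)."""
    sqrt_t = math.sqrt(t)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * t) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    discount = math.exp(-rate * t)
    return {
        "delta": _cdf(d1),                           # per unit move in spot
        "gamma": _pdf(d1) / (spot * vol * sqrt_t),   # change in delta per unit spot
        "vega": spot * _pdf(d1) * sqrt_t,            # per 1.00 (100 points) of vol
        "theta": (-spot * _pdf(d1) * vol / (2.0 * sqrt_t)
                  - rate * strike * discount * _cdf(d2)),  # per year
        "rho": strike * t * discount * _cdf(d2),     # per 1.00 change in rate
    }
```

Vega and rho are quoted here per unit change in volatility and rate. Dividing by 100 gives the more common per-percentage-point figures, and dividing theta by 365 gives a per-day decay.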

The mathematical architecture often employs Monte Carlo simulations to model path-dependent outcomes in protocols where collateral can be liquidated based on price deviations. This involves analyzing the interaction between user behavior, incentive structures, and protocol-level security. The systemic stability of these derivatives depends on the precision of these models, as flawed assumptions regarding correlation or liquidity can trigger cascading liquidations.
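
A minimal sketch of such a simulation follows. It assumes geometric Brownian motion sampled at discrete steps (standing in for block intervals) and a simplified rule where the position is liquidated and pays nothing if the price touches a threshold at any step. The liquidation rule, the function name, and all parameters are illustrative assumptions, not the mechanics of any specific protocol.

```python
import math
import random


def mc_call_with_liquidation(spot, strike, rate, vol, t, liq_price,
                             steps=64, n_paths=20000, seed=7):
    """Monte Carlo price of a call that is wiped out on liquidation.

    Returns (discounted price estimate, fraction of paths liquidated).
    """
    rng = random.Random(seed)
    dt = t / steps
    drift = (rate - 0.5 * vol ** 2) * dt
    shock = vol * math.sqrt(dt)
    payoff_sum = 0.0
    liquidated = 0
    for _ in range(n_paths):
        s = spot
        alive = True
        for _ in range(steps):
            s *= math.exp(drift + shock * rng.gauss(0.0, 1.0))
            # Keep drawing after a breach so runs with the same seed
            # see identical price paths regardless of liq_price.
            if s <= liq_price:
                alive = False
        if alive:
            payoff_sum += max(s - strike, 0.0)
        else:
            liquidated += 1
    price = math.exp(-rate * t) * payoff_sum / n_paths
    return price, liquidated / n_paths
```

Setting `liq_price=0.0` disables liquidation, so the estimate should approach the plain Black-Scholes-Merton call value. Raising the threshold shows how much value the liquidation rule removes and how often it fires.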

The accuracy of quantitative models hinges on the precise calibration of volatility surfaces against the realities of on-chain liquidity.
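
The basic building block of volatility surface calibration is inverting a pricing model to recover the implied volatility from an observed option price. The sketch below does this by bisection against the classical Black-Scholes-Merton call formula. The function names and the search bounds are assumptions chosen for illustration, and a production calibration would repeat this across strikes and expiries and filter out illiquid quotes.

```python
import math


def _cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bsm_call(spot, strike, rate, vol, t):
    """Classical BSM European call price (no dividends)."""
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * t) / (vol * math.sqrt(t))
    d2 = d1 - vol * math.sqrt(t)
    return spot * _cdf(d1) - strike * math.exp(-rate * t) * _cdf(d2)


def implied_vol(price, spot, strike, rate, t,
                lo=1e-4, hi=5.0, tol=1e-8, max_iter=200):
    """Solve bsm_call(vol) = price for vol by bisection on [lo, hi]."""
    if not bsm_call(spot, strike, rate, lo, t) <= price <= bsm_call(spot, strike, rate, hi, t):
        raise ValueError("price is outside the range attainable on [lo, hi]")
    for _ in range(max_iter):
        mid = 0.5 * (lo + hi)
        # The call price increases with vol, so the root can be bracketed.
        if bsm_call(spot, strike, rate, mid, t) > price:
            hi = mid
        else:
            lo = mid
        if hi - lo < tol:
            break
    return 0.5 * (lo + hi)
```

Bisection is slower than Newton's method but does not depend on vega, which makes it more forgiving for deep out-of-the-money quotes where vega is close to zero.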

The intersection of game theory and quantitative finance becomes evident when analyzing how market participants respond to arbitrage opportunities created by model mispricing. In this sense, the market itself functions as a distributed computing engine that constantly tests the robustness of the pricing models its participants deploy.

Approach

Modern implementation focuses on the integration of real-time on-chain data streams with sophisticated risk-assessment engines. Analysts utilize high-frequency data from decentralized exchanges to monitor order book depth and slippage, which directly influence the cost of delta hedging.
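
To make the link between pool depth and hedging cost concrete, the sketch below estimates the slippage of buying the hedge asset from a liquidity pool. It assumes a constant-product pool (reserves satisfying x * y = k) with a proportional fee on the input amount, which is one common design and not necessarily the venue a given strategy trades on. The function names and the 0.3% default fee are illustrative.

```python
def quote_in_for_base_out(base_reserve: float, quote_reserve: float,
                          base_out: float, fee: float = 0.003) -> float:
    """Quote tokens required to withdraw base_out from a constant-product pool."""
    if not 0.0 < base_out < base_reserve:
        raise ValueError("base_out must be positive and below the pool reserve")
    # From (x - dx) * (y + dy) = x * y, the effective input is dy = y * dx / (x - dx).
    effective_in = quote_reserve * base_out / (base_reserve - base_out)
    # The fee is taken from the input, so the trader pays effective_in / (1 - fee).
    return effective_in / (1.0 - fee)


def hedge_slippage(base_reserve: float, quote_reserve: float,
                   base_out: float, fee: float = 0.003) -> float:
    """Relative premium of the average execution price over the pool mid price."""
    mid_price = quote_reserve / base_reserve
    paid = quote_in_for_base_out(base_reserve, quote_reserve, base_out, fee)
    return paid / base_out / mid_price - 1.0
```

For a pool holding 1,000 units of the base asset, buying 10 units with the fee set to zero costs about 1.01% more than the mid price, and the premium grows nonlinearly as the trade size approaches the reserve.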

The current methodology emphasizes the automation of these processes through smart contracts that manage margin requirements and execute liquidations without human intervention.

- Data Ingestion involves capturing raw event logs from decentralized protocols and oracle feeds.

- Model Calibration adjusts pricing parameters based on current implied volatility and skew data.

- Execution Logic maps the calculated hedges to on-chain liquidity pools while minimizing gas expenditure.
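
The execution step above can be sketched as a simple rule that weighs the size of a hedge adjustment against its gas cost. The example assumes a delta for the option book is already available from the calibration step, and it skips the rebalance when gas would consume more than a chosen fraction of the trade's notional. The function name `hedge_order`, its parameters, and the threshold are hypothetical.

```python
def hedge_order(option_delta: float, contracts: float, hedge_units: float,
                spot: float, gas_cost: float, max_gas_ratio: float = 0.001) -> float:
    """Units of the underlying to trade (negative means sell) to flatten net delta.

    Returns 0.0 when the gas cost is too large relative to the trade notional.
    gas_cost is expressed in the same quote currency as spot.
    """
    net_delta = option_delta * contracts + hedge_units
    trade = -net_delta
    notional = abs(trade) * spot
    if notional == 0.0 or gas_cost / notional > max_gas_ratio:
        return 0.0
    return trade
```

A threshold of this kind creates a no-trade band around delta neutrality: small drifts are tolerated, and the hedge is adjusted only once the exposure is large enough to justify the transaction cost.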

Strategies now frequently account for regulatory arbitrage, where protocol architecture is designed to function within diverse jurisdictional constraints while maintaining capital efficiency. This requires a synthesis of legal analysis and quantitative engineering, ensuring that the technical implementation remains resilient to both code-based exploits and shifting regulatory environments.

Evolution

The trajectory of these techniques tracks the shift from simple, centralized trading venues to complex, composable decentralized protocols. Initially, models merely replicated traditional financial instruments.

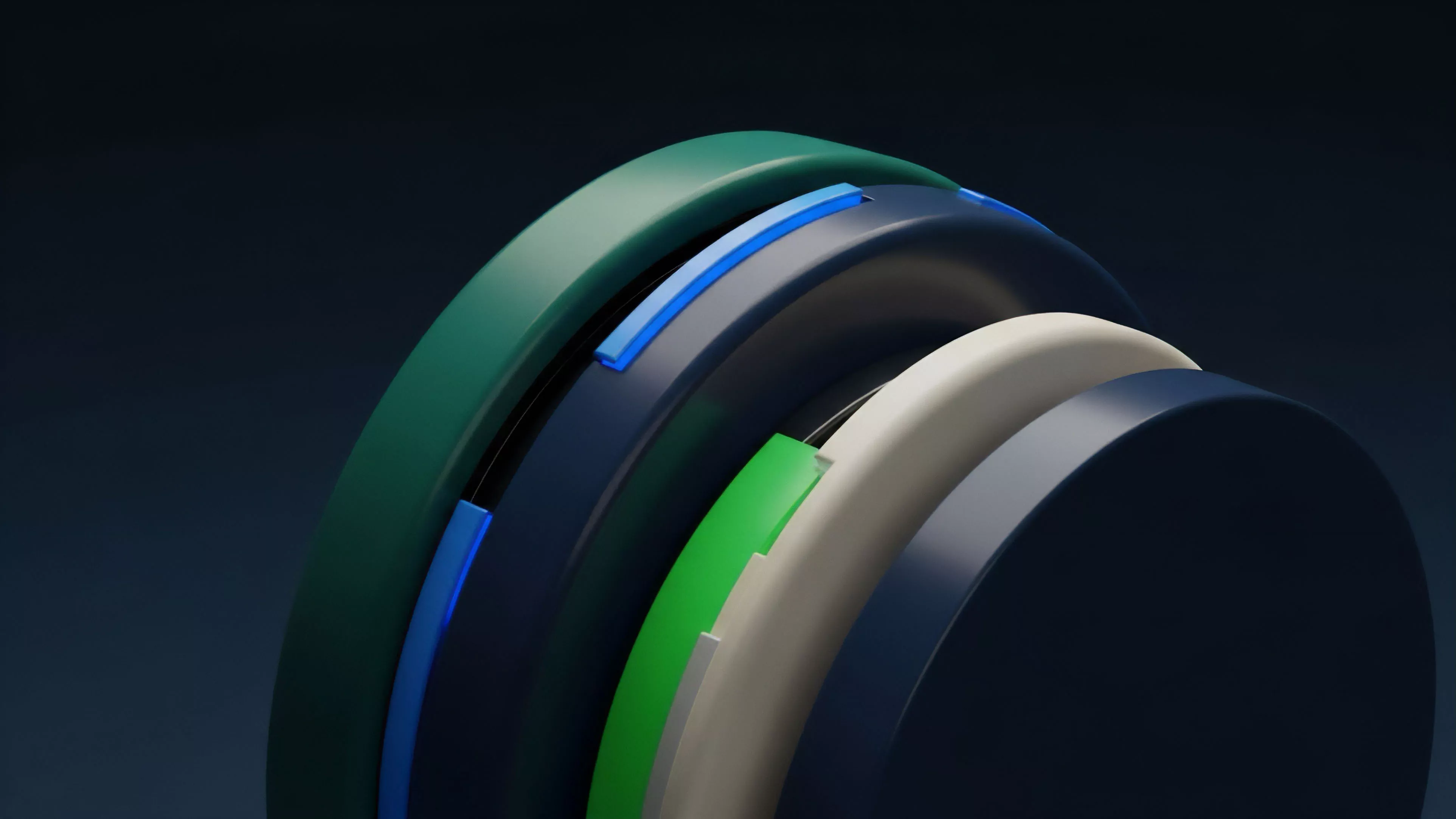

The current state demands an architecture that accounts for the composability of DeFi, where a single derivative position might rely on multiple underlying protocols for collateral, yield, and liquidity.

Evolution in derivative architecture demands that models account for the systemic risks inherent in cross-protocol composability.

Future development points toward the integration of advanced machine learning techniques to forecast volatility regimes more accurately than static models allow. This shift reflects the increasing sophistication of market participants who are moving beyond standard option pricing to develop bespoke, synthetic instruments that offer tailored risk-return profiles. The focus is no longer on individual assets but on the management of systemic risk across the entire decentralized landscape.

Horizon

The next phase involves the maturation of decentralized volatility trading, moving toward more efficient, protocol-native derivative markets.

We expect the rise of cross-chain margin engines that allow for unified risk management across heterogeneous blockchain environments. This will necessitate a new generation of quantitative tools capable of pricing assets while accounting for the inherent latency and security risks of cross-chain messaging protocols.

| Future Driver | Strategic Implication |
|---|---|
| Cross-chain liquidity | Unified margin management |
| Algorithmic volatility | Dynamic risk adjustment |
| Institutional participation | Increased model standardization |

The ultimate goal remains the construction of a self-sustaining, resilient financial system where risk is priced accurately and transparently by code rather than intermediaries. The quantitative techniques described here will serve as the core logic for this transition, enabling the development of markets that are both highly efficient and fundamentally more robust than their centralized predecessors.