Essence

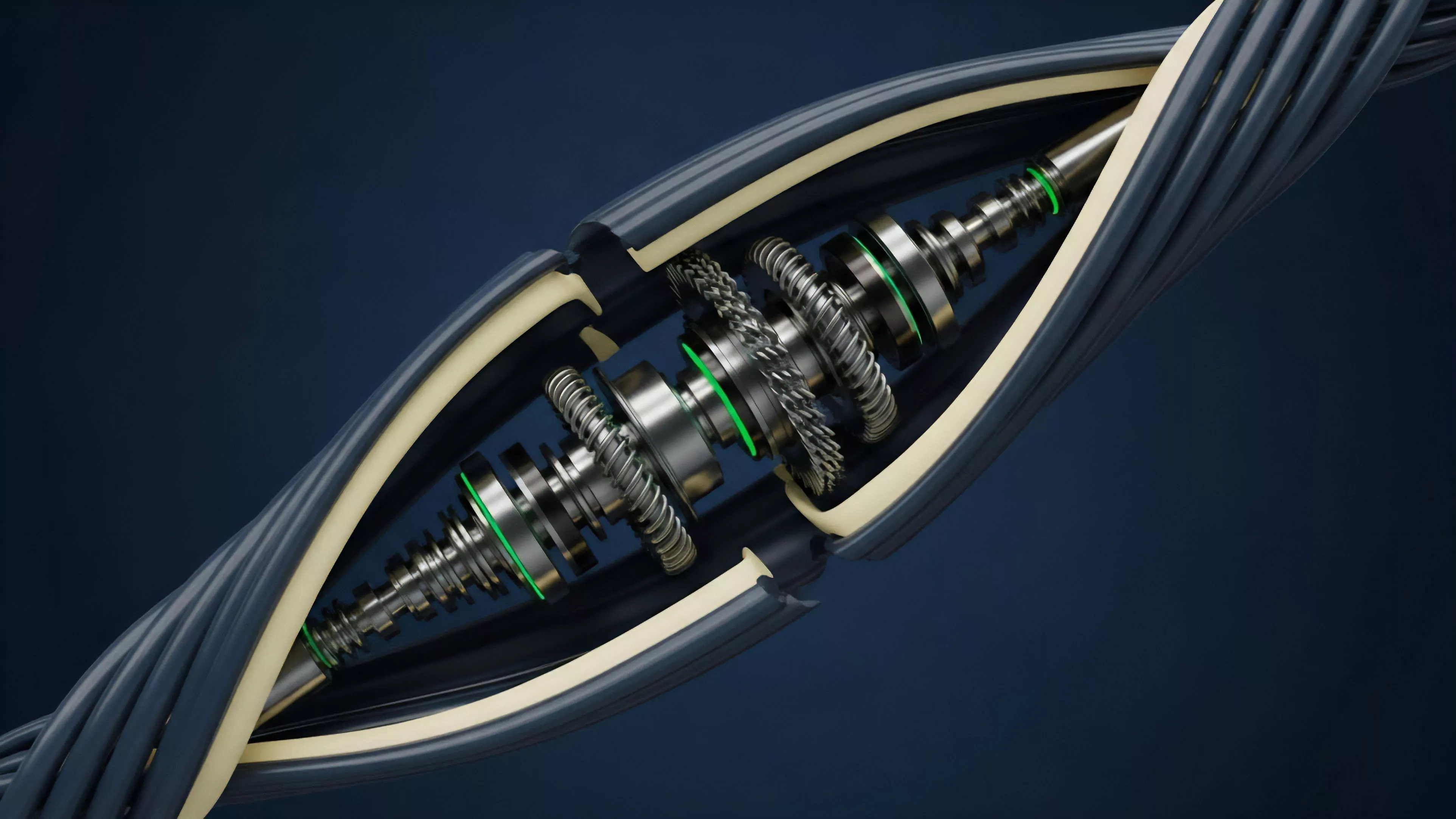

Protocol Performance Optimization is the architectural discipline of refining decentralized financial infrastructure to maximize throughput, minimize latency, and reduce the systemic cost of capital. It covers the deliberate calibration of smart contract execution paths, consensus overhead, and state storage efficiency so that derivative markets maintain integrity under extreme load.

Protocol Performance Optimization serves as the mechanical foundation for maintaining price discovery efficiency and systemic stability in decentralized derivative markets.

This practice moves beyond simple code efficiency, addressing the fundamental trade-offs between decentralization, security, and financial speed. When protocols execute complex derivative settlements, the underlying infrastructure must process concurrent state updates without creating bottlenecks that distort market microstructure or encourage predatory extraction by automated agents.

Origin

The requirement for Protocol Performance Optimization originated from the observed limitations of early automated market makers and collateralized debt positions. Initial designs struggled with high gas costs and significant latency during periods of extreme market volatility, which frequently led to stale pricing and cascading liquidation failures.

- Systemic Fragility: Early architectures demonstrated that unoptimized state transitions created artificial liquidity droughts during rapid market moves.

- Computational Overhead: Initial consensus mechanisms prioritized validator decentralization over the high-frequency settlement demands required for robust options pricing.

- Financial Contagion: Flaws in performance led to price divergence between on-chain assets and external benchmarks, necessitating more sophisticated execution environments.

These early challenges highlighted that financial protocols must prioritize the synchronization of state updates with market reality. Designers recognized that without granular control over computational resources, decentralized derivatives could not compete with traditional centralized exchanges in terms of capital efficiency and risk management.

Theory

The theoretical framework for Protocol Performance Optimization relies on balancing the computational cost of cryptographic verification against the necessity for low-latency financial settlement. Advanced protocols utilize modular execution layers to decouple consensus from transaction processing, allowing for high-throughput state updates.

| Parameter | Unoptimized Protocol | Optimized Protocol |
| --- | --- | --- |
| State Access | Synchronous/Linear | Asynchronous/Parallel |
| Execution Cost | Variable/High | Predictable/Low |
| Latency | Block-time dependent | Sub-second/Off-chain |
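
The contrast between linear and parallel state access can be illustrated with a small scheduler. The following is a minimal sketch, assuming each transaction declares up front the set of state keys it touches; the function name `schedule_parallel` and the key names are hypothetical, not taken from any particular protocol. Transactions with disjoint key sets share a batch and could run concurrently, while a transaction that conflicts with an earlier one is placed in a later batch so the original ordering is preserved.

```python
from typing import List, Set, Tuple


def schedule_parallel(txs: List[Tuple[str, Set[str]]]) -> List[List[str]]:
    """Group transactions into ordered batches with no shared state keys.

    txs is a list of (tx_id, keys_touched). Transactions inside one batch
    touch disjoint keys; batches themselves must run one after another.
    """
    batches: List[Tuple[List[str], Set[str]]] = []
    for tx_id, keys in txs:
        # Find the latest batch that conflicts with this transaction.
        target = 0
        for i, (_, used_keys) in enumerate(batches):
            if used_keys & keys:
                target = i + 1
        # Open a new batch if the transaction must run after all existing ones.
        if target == len(batches):
            batches.append(([], set()))
        batches[target][0].append(tx_id)
        batches[target][1].update(keys)
    return [ids for ids, _ in batches]


# Hypothetical workload: t3 touches "alice", so it must wait for t1.
example = [
    ("t1", {"alice", "pool"}),
    ("t2", {"bob", "vault"}),
    ("t3", {"alice"}),
    ("t4", {"carol"}),
]
plan = schedule_parallel(example)
```

In this sketch, four transactions collapse into two sequential steps. Real systems that discover conflicts at run time instead of relying on declared key sets need a different mechanism, such as speculative execution with re-execution on conflict.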

Quantitative models in this domain focus on the option Greeks and on liquidation thresholds. If the protocol cannot compute the delta or gamma of an option position within the required timeframe, the risk engine works from stale values, exposing the entire liquidity pool to toxic flow.
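
To make the latency concern concrete, the sketch below computes delta and gamma for a European call and estimates how far delta drifts when the spot price moves before the risk engine updates. It assumes the Black-Scholes model, which the text does not name; a given protocol may use a different pricing model. The function names are illustrative.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def norm_pdf(x: float) -> float:
    """Standard normal probability density function."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def call_delta_gamma(spot: float, strike: float, vol: float,
                     years: float, rate: float = 0.0) -> tuple:
    """Black-Scholes delta and gamma of a European call (assumed model)."""
    if min(spot, strike, vol, years) <= 0:
        raise ValueError("spot, strike, vol and years must be positive")
    sqrt_t = math.sqrt(years)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * years) / (vol * sqrt_t)
    delta = norm_cdf(d1)
    gamma = norm_pdf(d1) / (spot * vol * sqrt_t)
    return delta, gamma


def stale_delta_error(gamma: float, spot_move: float) -> float:
    """First-order estimate of delta drift if spot moves before a recompute."""
    return gamma * spot_move


# At-the-money call, 20% volatility, one year to expiry, zero rate.
delta, gamma = call_delta_gamma(spot=100.0, strike=100.0, vol=0.2, years=1.0)
drift = stale_delta_error(gamma, spot_move=5.0)
```

The drift estimate is only a first-order approximation. It shows why high-gamma positions are the most sensitive to delays in the risk engine.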

Effective performance architecture aligns the speed of state validation with the volatility of the underlying asset to prevent model-driven liquidation failures.

In practice, this means minimizing the number of state reads required for a single trade. By using optimized data structures such as Merkle trees or specialized key-value stores, developers reduce the load on the underlying consensus layer, enabling complex derivative instruments to function within the constraints of distributed ledgers.
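
As an illustration of how a Merkle tree cuts state reads, the sketch below commits a set of position records to a single root hash and then checks one record against that root using a short proof. This is a minimal, unhardened example: it assumes SHA-256, duplicates the last node on odd-sized levels, and omits the leaf/internal-node domain separation a production design would need. The record encoding (`pos:1`, and so on) is hypothetical.

```python
import hashlib
from typing import List, Tuple


def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def build_levels(leaves: List[bytes]) -> List[List[bytes]]:
    """Return every tree level, from hashed leaves up to the root."""
    levels = [[h(leaf) for leaf in leaves]]
    while len(levels[-1]) > 1:
        cur = levels[-1]
        if len(cur) % 2 == 1:
            cur = cur + [cur[-1]]  # pad odd levels by duplicating the last node
            levels[-1] = cur
        levels.append([h(cur[i] + cur[i + 1]) for i in range(0, len(cur), 2)])
    return levels


def make_proof(levels: List[List[bytes]], index: int) -> List[Tuple[bytes, bool]]:
    """Collect (sibling_hash, sibling_is_left) pairs from leaf to root."""
    proof = []
    for level in levels[:-1]:
        sibling = index ^ 1
        proof.append((level[sibling], sibling < index))
        index //= 2
    return proof


def verify(leaf: bytes, proof: List[Tuple[bytes, bool]], root: bytes) -> bool:
    """Recompute the root from one leaf and its proof."""
    node = h(leaf)
    for sibling, sibling_is_left in proof:
        node = h(sibling + node) if sibling_is_left else h(node + sibling)
    return node == root


positions = [b"pos:1", b"pos:2", b"pos:3"]
levels = build_levels(positions)
root = levels[-1][0]
proof = make_proof(levels, 2)
ok = verify(positions[2], proof, root)
```

A verifier that stores only `root` needs one stored value plus a proof whose length grows with the logarithm of the number of records, rather than reading every record.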

Approach

Modern approaches to Protocol Performance Optimization involve a multi-layered strategy that spans from low-level smart contract bytecode minimization to high-level network topology adjustments. Engineers focus on minimizing the number of operations performed within the critical path of a transaction.

- Bytecode Stripping: Removing redundant execution paths to decrease gas consumption and improve transaction inclusion rates.

- State Batching: Consolidating multiple derivative settlements into a single state update to reduce the burden on validator nodes.

- Optimistic Execution: Assuming validity of transactions and only reverting upon challenge, which significantly lowers the latency for standard option interactions.
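
The state batching item can be sketched as a netting step: many individual settlements are reduced to one balance change per account before anything is written. This is an illustrative model only. The tuple layout `(payer, payee, amount)`, the integer balances, and the all-or-nothing validation rule are assumptions, and an on-chain implementation would differ in how it stores and authorizes these updates.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

Settlement = Tuple[str, str, int]  # (payer, payee, amount), hypothetical layout


def net_settlements(settlements: Iterable[Settlement]) -> Dict[str, int]:
    """Collapse individual settlements into one net delta per account."""
    deltas: Dict[str, int] = defaultdict(int)
    for payer, payee, amount in settlements:
        if amount < 0:
            raise ValueError("amount must be non-negative")
        deltas[payer] -= amount
        deltas[payee] += amount
    # Accounts whose flows cancel out need no state write at all.
    return {acct: d for acct, d in deltas.items() if d != 0}


def apply_batch(balances: Dict[str, int], settlements: Iterable[Settlement]) -> int:
    """Apply netted deltas atomically; return the number of state writes."""
    deltas = net_settlements(settlements)
    # Validate everything first so a failing batch changes nothing.
    for acct, d in deltas.items():
        if balances.get(acct, 0) + d < 0:
            raise ValueError(f"insufficient balance for {acct}")
    for acct, d in deltas.items():
        balances[acct] = balances.get(acct, 0) + d
    return len(deltas)


balances = {"alice": 100, "bob": 50, "carol": 0}
batch = [("alice", "bob", 30), ("bob", "carol", 30), ("carol", "alice", 10)]
writes = apply_batch(balances, batch)
```

Here three settlements touch six balance entries if applied one by one, but netting reduces the work to two writes because bob's inflow and outflow cancel. Because only net positions are checked, an account may be temporarily short within the batch, which is a design choice a real risk engine would need to evaluate.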

The current state of the art involves the deployment of specialized virtual machines tailored for high-frequency financial tasks. By restricting the computational scope to specific financial primitives, these systems achieve performance metrics that were previously impossible on general-purpose blockchains.

Evolution

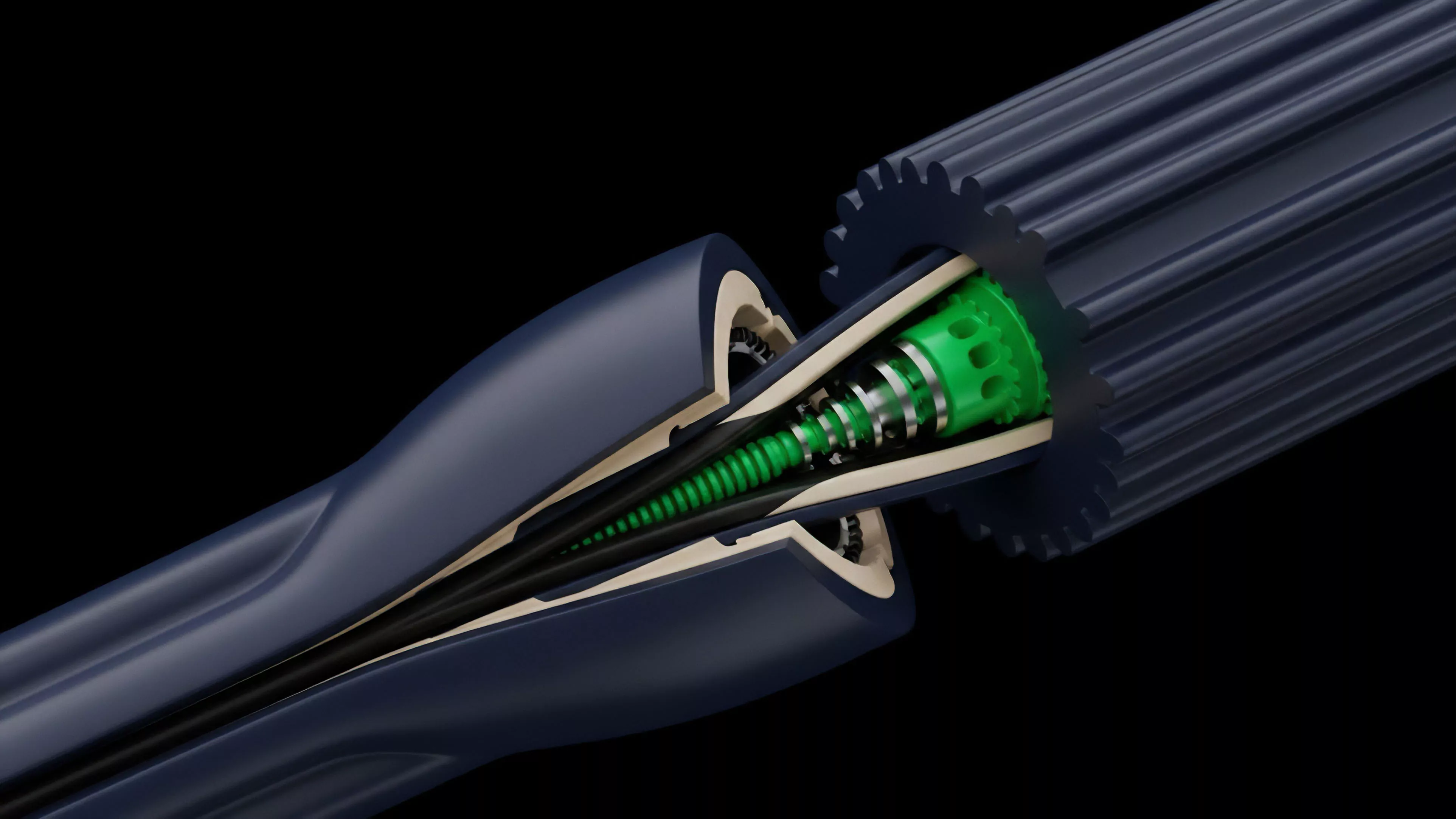

The transition from monolithic architectures to modular, roll-up-centric designs marks the most significant shift in Protocol Performance Optimization. Initially, all activity occurred on the main consensus layer, which imposed severe limits on throughput and financial complexity.

The move toward off-chain execution environments allowed for the separation of settlement and computation. This shift enabled the creation of sophisticated order books and high-frequency option platforms that leverage the security of the base layer while benefiting from the speed of specialized execution layers.

Systemic evolution prioritizes the off-loading of intensive derivative calculations to specialized environments while maintaining immutable settlement on the base layer.

Market participants now demand sub-millisecond execution for complex multi-leg option strategies. This requirement forced developers to rethink how protocols consume external data feeds, leading to the adoption of decentralized oracle networks that deliver high-fidelity pricing data without adding significant delay to the settlement pipeline.

Horizon

Future developments in Protocol Performance Optimization will likely focus on hardware-accelerated consensus and zero-knowledge proofs for private, high-speed derivative settlement. The integration of specialized hardware at the validator level would allow complex mathematical models to be verified without the traditional latency penalties.

One testable hypothesis concerns the impact of parallelized state execution on the volatility of liquidity provider returns. If parallelization reduces latency, the frequency of adverse selection should decrease, potentially narrowing bid-ask spreads across decentralized derivative venues.

One way to pursue this is a modular architecture specification for a high-frequency options protocol that uses zero-knowledge proofs to verify risk parameters computed off-chain. The specification would define the interfaces through which liquidity providers submit risk-adjusted collateral commitments, which the protocol's consensus mechanism then verifies without requiring a full state update for every individual trade.

What paradox emerges when the pursuit of absolute performance centralizes the validation process, introducing new systemic risks that may outweigh the gains in execution speed?