Essence

Protocol consensus mechanisms are the distributed governance and state-synchronization engines that validate transactions within decentralized networks. They dictate how independent nodes agree on the ordering and validity of ledger entries without relying on a centralized clearinghouse or authoritative intermediary.

Consensus mechanisms provide the technical ruleset that ensures financial finality and state integrity across decentralized distributed ledgers.

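At their most fundamental level, these protocols address the Byzantine Generals Problem: they let honest nodes agree on a single ledger state even when some participants are faulty or malicious. Attackers cannot subvert the network or double-spend assets as long as they control less than a protocol-specific share of the voting resource, whether that is hash power or stake. The mechanism also dictates the distribution of power, the speed of settlement, and the economic cost of network participation, which directly influences the liquidity and volatility characteristics of the underlying financial assets.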

- Proof of Work utilizes computational energy to provide probabilistic security through irreversible resource expenditure.

- Proof of Stake relies on economic collateral to align validator incentives with long-term network health and security.

- Delegated Proof of Stake introduces representative governance structures to enhance transaction throughput at the cost of increased centralization.

Origin

The genesis of these protocols lies in the requirement for trustless, peer-to-peer value transfer. Early digital cash experiments struggled with the double-spending problem until the introduction of Nakamoto consensus, which linked cryptographic hashing to physical energy expenditure. This innovation transformed the ledger from a static database into an immutable, adversarial-resistant financial record.

The transition from centralized clearing to cryptographic consensus shifted the burden of security from human institutions to mathematical proofs.

Historically, the evolution began with simple hash-based puzzles designed to throttle spam, eventually maturing into the complex, game-theoretic structures seen today. These early iterations demonstrated that decentralized networks could achieve state consistency even when participants remained anonymous and uncoordinated. This breakthrough allowed for the creation of programmable money where the settlement engine is embedded directly into the protocol rules.

| Mechanism | Primary Security Driver | Historical Context |
|---|---|---|
| Proof of Work | Energy Expenditure | Bitcoin Whitepaper |
| Proof of Stake | Capital Collateral | Peercoin Implementation |
| Practical Byzantine Fault Tolerance | Quorum Agreement | Academic Research |

Theory

The theoretical framework rests on balancing safety (honest nodes never finalize conflicting states) and liveness (the network keeps making progress), a tension closely related to the consistency and availability trade-off described by the CAP theorem. Protocol designers must also navigate the trade-offs between latency, throughput, and decentralization. A robust consensus mechanism aligns the incentives of validators with the stability of the system, typically using block rewards to reward honest participation and slashing conditions to penalize adversarial behavior.
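The incentive alignment described above can be illustrated with a small sketch. It assumes a simplified Proof of Stake model in which the proposer is drawn with probability proportional to stake, rewards are added to stake, and slashing removes a fixed fraction. The `Validator` class and the `select_proposer`, `reward`, and `slash` helpers are hypothetical names for illustration, not any protocol's actual API.

```python
import random
from dataclasses import dataclass


@dataclass
class Validator:
    name: str
    stake: float


def select_proposer(validators: list[Validator], seed: int) -> Validator:
    """Pick a proposer with probability proportional to stake.

    A seeded generator stands in for the shared randomness that a real
    protocol would derive on-chain.
    """
    rng = random.Random(seed)
    weights = [v.stake for v in validators]
    return rng.choices(validators, weights=weights, k=1)[0]


def reward(validator: Validator, amount: float) -> None:
    """Honest participation grows stake, and with it future selection odds."""
    validator.stake += amount


def slash(validator: Validator, fraction: float) -> float:
    """Burn a fraction of stake for provable misbehavior; return the penalty."""
    penalty = validator.stake * fraction
    validator.stake -= penalty
    return penalty


if __name__ == "__main__":
    vals = [Validator("a", 60.0), Validator("b", 30.0), Validator("c", 10.0)]
    proposer = select_proposer(vals, seed=1)
    reward(proposer, 1.0)
    print(proposer.name, slash(vals[2], 0.5), vals[2].stake)
```

Because both selection odds and penalties scale with stake, the cost of misbehaving rises with the influence a validator holds, which is the alignment the paragraph describes.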

Consensus protocols act as the ultimate margin engine by defining the latency and reliability of transaction settlement in volatile markets.

In the context of derivative systems, the choice of consensus mechanism directly impacts the reliability of oracle data feeds and the execution speed of smart contract liquidations. A slow or congested consensus layer introduces systemic latency, which creates opportunities for front-running and arbitrage that can drain liquidity from decentralized options markets. The game theory behind validator selection determines the threshold for censorship resistance and the cost to attack the network, both of which are critical variables for institutional risk modeling.

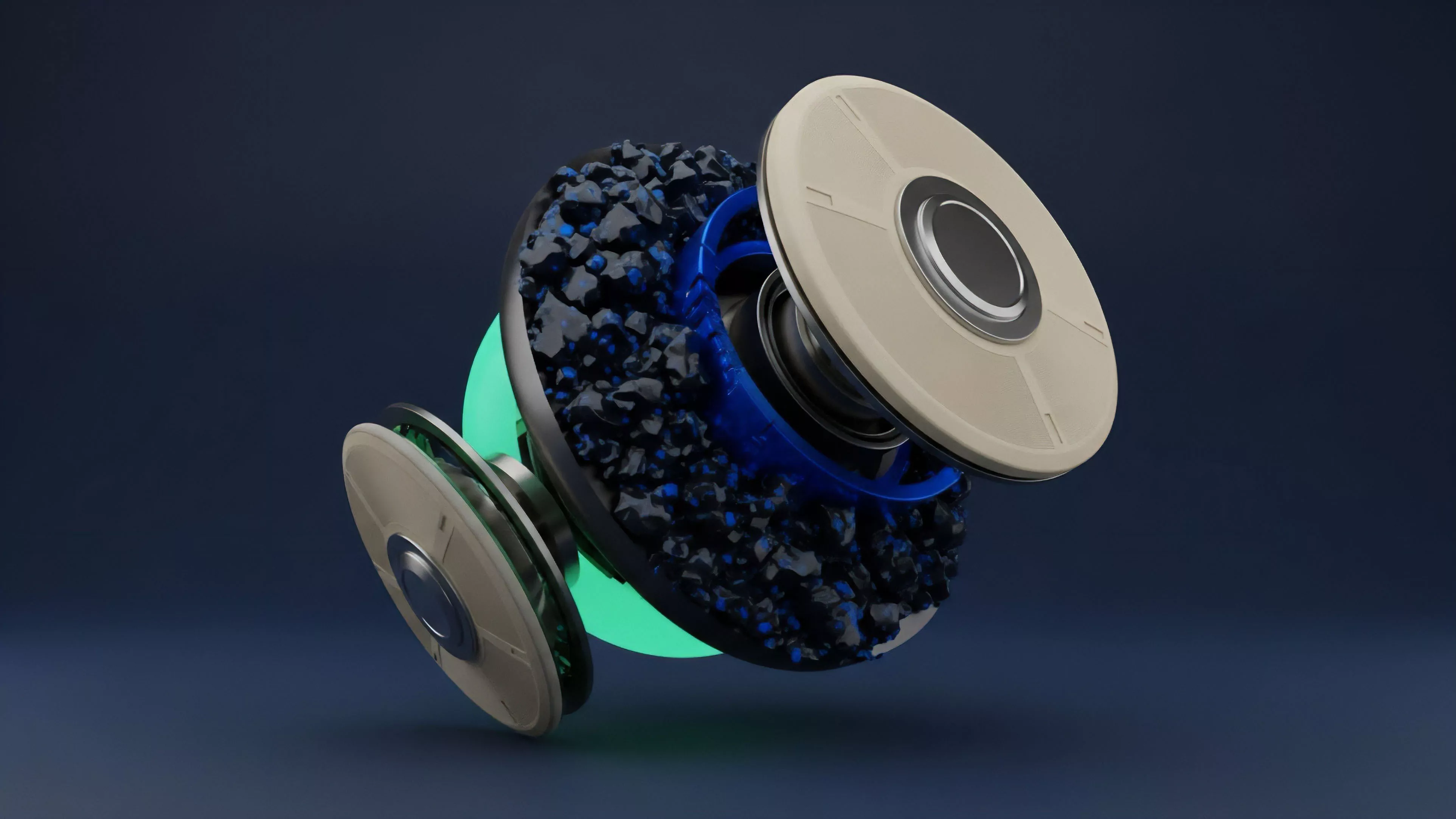

The internal architecture of these systems often involves a multi-stage process:

- Proposal, where a designated validator suggests the next block of transactions.

- Verification, where participating nodes check the proposed state against existing protocol rules.

- Finalization, where the network reaches a deterministic agreement, rendering the block's transactions immutable.
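The three stages above can be sketched as a single voting round. This is a simplified illustration that assumes a BFT-style rule in which a block finalizes once strictly more than two thirds of validators approve it. The `Block` type, the validity check, and the voter callables are all hypothetical, and real protocols add multiple voting phases, signatures, and timeouts.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Block:
    height: int
    parent_height: int
    transactions: tuple


def quorum(n: int) -> int:
    """Smallest vote count strictly greater than two thirds of n validators."""
    return (2 * n) // 3 + 1


def is_valid(block: Block, chain_height: int) -> bool:
    """Verification: the proposal must extend the current chain tip by one."""
    return block.parent_height == chain_height and block.height == chain_height + 1


Voter = Callable[[Block, int], bool]


def run_round(proposal: Block, chain_height: int, voters: list[Voter]) -> bool:
    """Collect votes on a proposal and report whether it finalizes."""
    votes = sum(1 for vote in voters if vote(proposal, chain_height))
    return votes >= quorum(len(voters))


if __name__ == "__main__":
    honest: Voter = is_valid
    silent: Voter = lambda block, height: False  # a faulty node that never votes
    proposal = Block(height=8, parent_height=7, transactions=("tx1", "tx2"))
    print(run_round(proposal, 7, [honest, honest, honest, silent]))
```

With four validators the quorum is three, so the round still finalizes with one faulty node, which matches the usual tolerance of fewer than one third faulty participants.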

Mathematics often feels like a cold, distant language, yet it is the only medium capable of translating human desire for autonomy into a functional, machine-enforced reality. The intersection of stochastic processes and validator behavior provides the true insight into network health.

Approach

Modern implementations favor hybrid models that combine high-speed consensus with rigorous security audits. The current focus centers on maximizing throughput without sacrificing the decentralization required for censorship resistance.

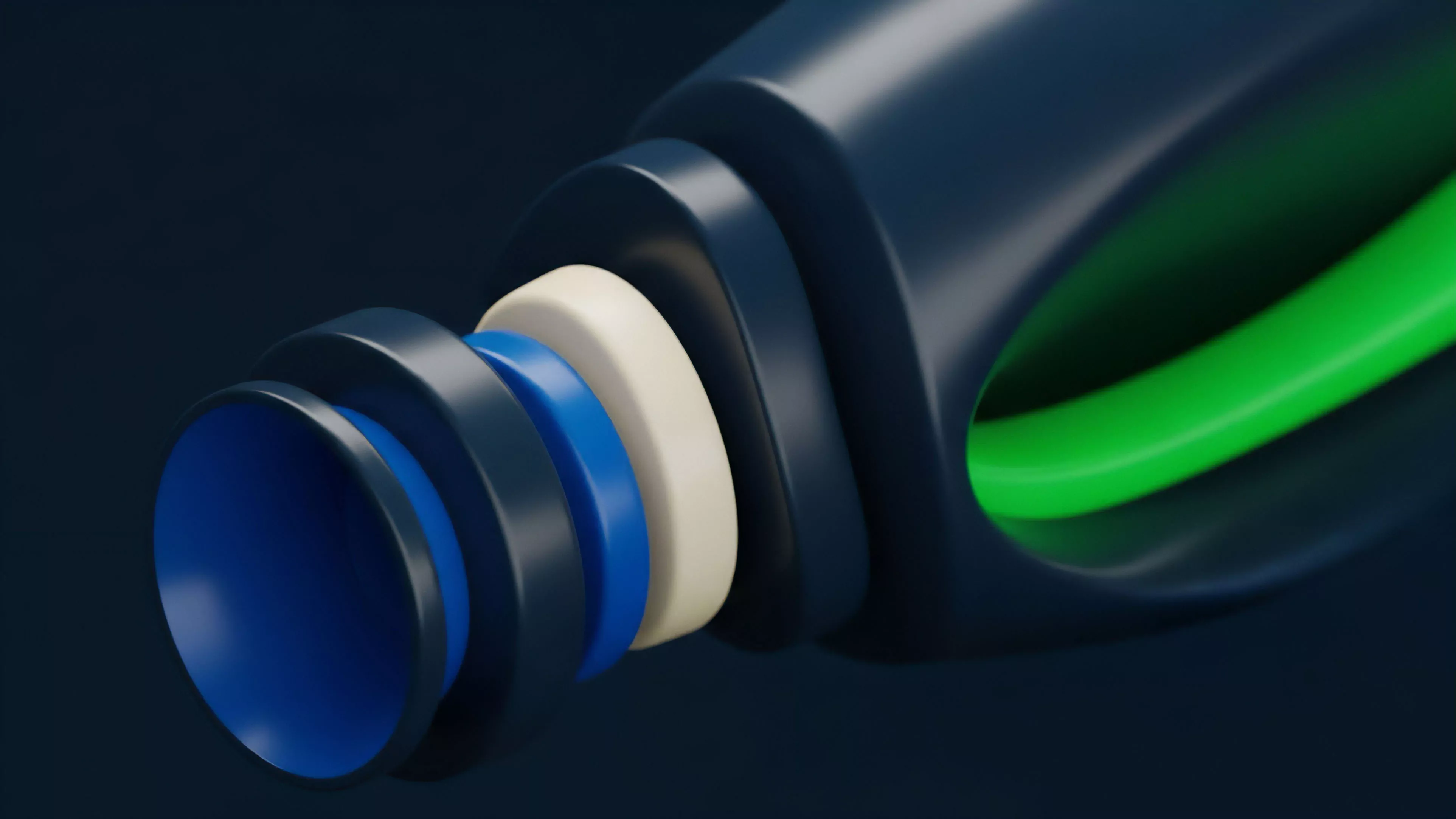

Financial protocols now utilize modular architectures where consensus is decoupled from execution, allowing for specialized scaling solutions that maintain the integrity of the underlying settlement layer.

Modular consensus architectures allow protocols to optimize for specific financial use cases without compromising base layer security.

Liquidity providers and market makers must account for the specific consensus properties of the chain they operate on, as these dictate the cost of capital and the risk of liquidation delays. Advanced systems utilize zero-knowledge proofs to verify consensus states efficiently, significantly reducing the data overhead for light clients. This optimization path is essential for creating the low-latency environments required for sophisticated derivative instruments to compete with centralized exchanges.

| Metric | Impact on Derivatives |
|---|---|
| Settlement Finality | Determines margin call execution speed |
| Validator Dispersion | Influences systemic risk and censorship resistance |
| Gas Costs | Dictates the feasibility of complex option strategies |
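To show how settlement finality translates into a waiting time, the sketch below applies the attacker catch-up calculation from the Bitcoin whitepaper. It covers only the probabilistic finality of Proof of Work chains. The risk tolerance, the attacker's hash share `q`, and the block interval are inputs a risk desk would have to choose, and the function names are illustrative.

```python
from math import exp, factorial


def attacker_success_probability(q: float, z: int) -> float:
    """Probability that an attacker with hash share q overtakes z confirmations.

    Follows the Poisson approximation in the Bitcoin whitepaper.
    """
    if not 0 <= q < 0.5:
        raise ValueError("q must be in [0, 0.5); a majority attacker always catches up")
    p = 1.0 - q
    lam = z * (q / p)
    total = 1.0
    for k in range(z + 1):
        poisson = exp(-lam) * lam ** k / factorial(k)
        total -= poisson * (1.0 - (q / p) ** (z - k))
    return total


def confirmations_needed(q: float, max_risk: float) -> int:
    """Smallest confirmation count whose reversal risk is at most max_risk."""
    if max_risk <= 0:
        raise ValueError("max_risk must be positive")
    z = 0
    while attacker_success_probability(q, z) > max_risk:
        z += 1
    return z


def expected_settlement_delay(q: float, max_risk: float, block_interval_s: float) -> float:
    """Expected seconds before a transaction is treated as settled."""
    return confirmations_needed(q, max_risk) * block_interval_s


if __name__ == "__main__":
    print(confirmations_needed(0.10, 0.001))
    print(expected_settlement_delay(0.10, 0.001, 600.0))
```

Under these assumptions, a 10% attacker and a 0.1% risk tolerance call for five confirmations, so a chain with ten-minute blocks would leave a margin call unsettled for roughly fifty minutes. That is the kind of delay the table's first row refers to.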

Evolution

The progression of these systems has moved from monolithic chains to complex, interconnected networks of specialized zones. Early systems prioritized simplicity and raw security, whereas contemporary designs emphasize composability and cross-chain interoperability. This shift reflects a maturing market that demands higher efficiency and the ability to move assets seamlessly between different execution environments without losing the guarantee of consensus finality.

The evolution toward modularity signifies the maturation of decentralized infrastructure into a tiered financial operating system.

As the industry moves forward, the focus shifts toward mitigating systemic risks associated with cross-chain bridges and interoperability protocols. The integration of liquid staking derivatives has changed the incentive landscape, as validators now leverage their staked capital to participate in additional yield-generating activities. This development creates a complex web of interconnected leverage that requires new frameworks for monitoring contagion risk and managing cross-protocol collateral requirements.

Horizon

Future development will likely prioritize the creation of application-specific consensus engines that can dynamically adjust parameters based on real-time market volatility.

These adaptive systems will allow for granular control over block times and validation thresholds, enabling the creation of high-frequency decentralized trading environments. The ultimate objective is to provide institutional-grade performance while maintaining the permissionless, trust-minimized architecture that defines the sector.

Adaptive consensus mechanisms will eventually allow for the dynamic scaling of network resources during periods of extreme market stress.

Research into cryptographic primitives like threshold signatures and multi-party computation will further decentralize the validator set, reducing the reliance on single-entity node operators. As these technologies mature, the barrier between centralized and decentralized finance will continue to erode, with the consensus layer serving as the transparent, auditable foundation for a global, permissionless derivatives market.