Essence

Proof of Computation functions as a verifiable cryptographic commitment to the integrity of off-chain execution. It serves as the bridge between deterministic protocol logic and the intensive resource requirements of complex financial modeling. By generating succinct, immutable evidence that a specific computation occurred according to defined parameters, this mechanism allows decentralized systems to outsource heavy lifting while maintaining trustless settlement.

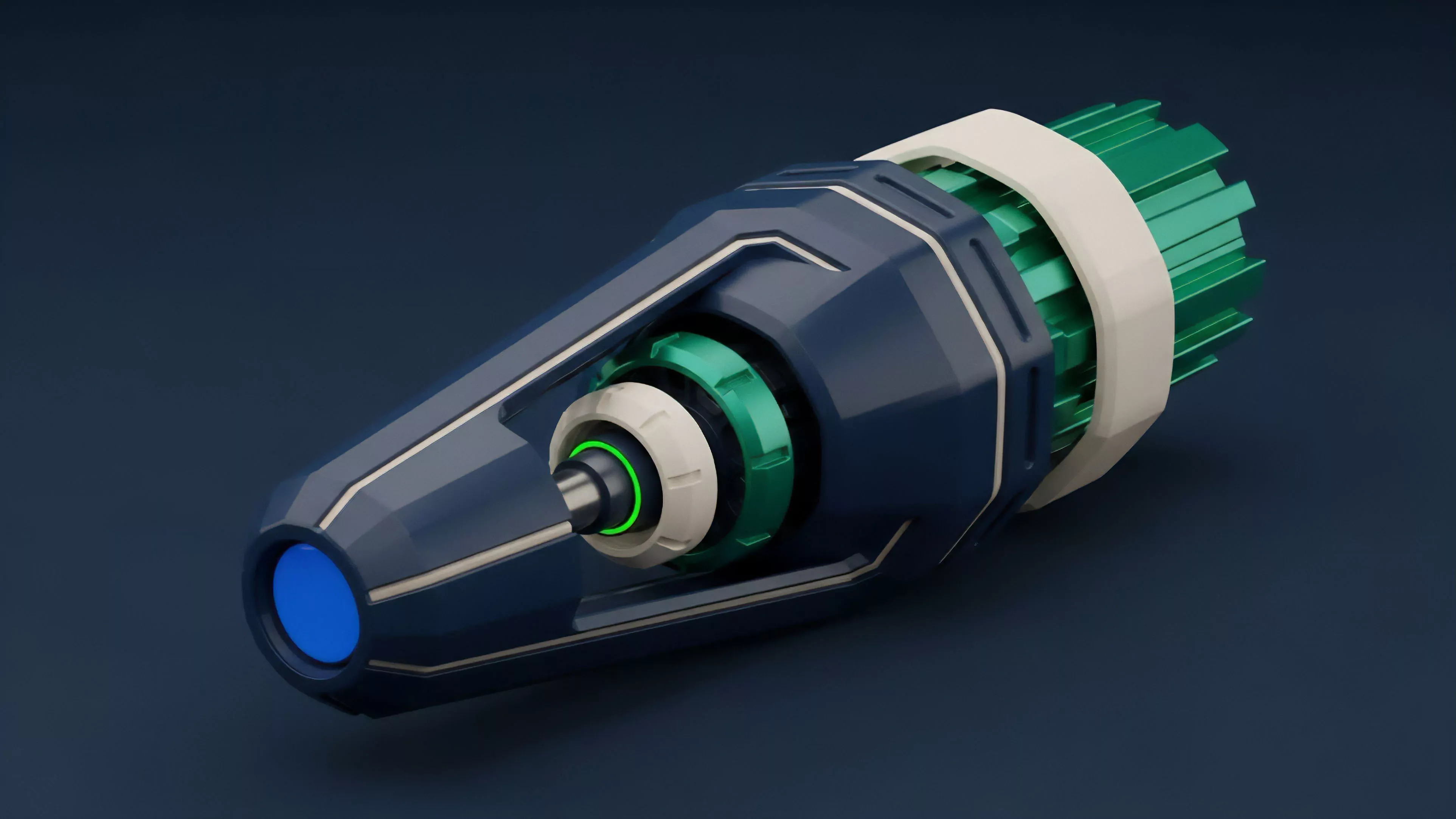

Proof of Computation validates off-chain execution through cryptographic evidence to ensure deterministic outcomes within decentralized financial protocols.

This concept shifts the burden of proof: instead of every network participant re-executing a computation, participants check a proof that the computation was performed correctly. Financial protocols use these proofs to integrate derivative pricing models and risk management engines that exceed the constraints of on-chain execution environments. Relying on this mechanism turns the blockchain from a mere ledger into a settlement layer for high-frequency, complex financial activity.

Origin

The architectural roots trace back to the intersection of zero-knowledge cryptography and distributed systems engineering.

Early iterations focused on scaling throughput by offloading state transitions, yet the financial implications remained secondary to performance optimization. The shift occurred when market participants recognized that decentralized derivative markets required computational intensity that existing consensus mechanisms could not sustain without significant latency.

- Zero-Knowledge Succinct Non-Interactive Arguments of Knowledge (zk-SNARKs) provide the foundational mathematical architecture for compact, verifiable proofs over execution traces.

- Verifiable Delay Functions introduce temporal constraints that can help mitigate front-running and support the chronological integrity of computational submissions.

- Optimistic Execution Models offer an alternative pathway where computation is assumed correct unless challenged by a fraud-proof mechanism.
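The optimistic pathway in the last item can be illustrated with a toy model. This is a minimal sketch, not any specific protocol's design: the `Claim` type, the `CHALLENGE_WINDOW` value, and the block-height clock are all hypothetical, and a challenger here simply re-executes the computation to test the claim.

```python
from dataclasses import dataclass
from typing import Callable

CHALLENGE_WINDOW = 10  # blocks; illustrative value, not taken from a real protocol


@dataclass
class Claim:
    inputs: tuple
    claimed_output: int
    submitted_at: int        # block height at submission (hypothetical clock)
    challenged: bool = False


def challenge(claim: Claim, recompute: Callable[..., int], now: int) -> bool:
    """Re-execute the computation; return True if the claim is shown to be wrong."""
    if now > claim.submitted_at + CHALLENGE_WINDOW:
        raise ValueError("challenge window closed")
    fraud = recompute(*claim.inputs) != claim.claimed_output
    claim.challenged = claim.challenged or fraud
    return fraud


def is_final(claim: Claim, now: int) -> bool:
    """A claim is final once the window has passed without a successful challenge."""
    return not claim.challenged and now > claim.submitted_at + CHALLENGE_WINDOW
```

The design trade-off is visible in `is_final`: submission is cheap, but settlement cannot be treated as final until the challenge window has elapsed.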

This evolution was driven by the necessity to replicate the speed and complexity of centralized clearinghouses within an environment that mandates transparency and decentralization. The transition from simple balance transfers to programmable financial instruments demanded a shift toward verifiable off-chain processing to prevent protocol stagnation.

Theory

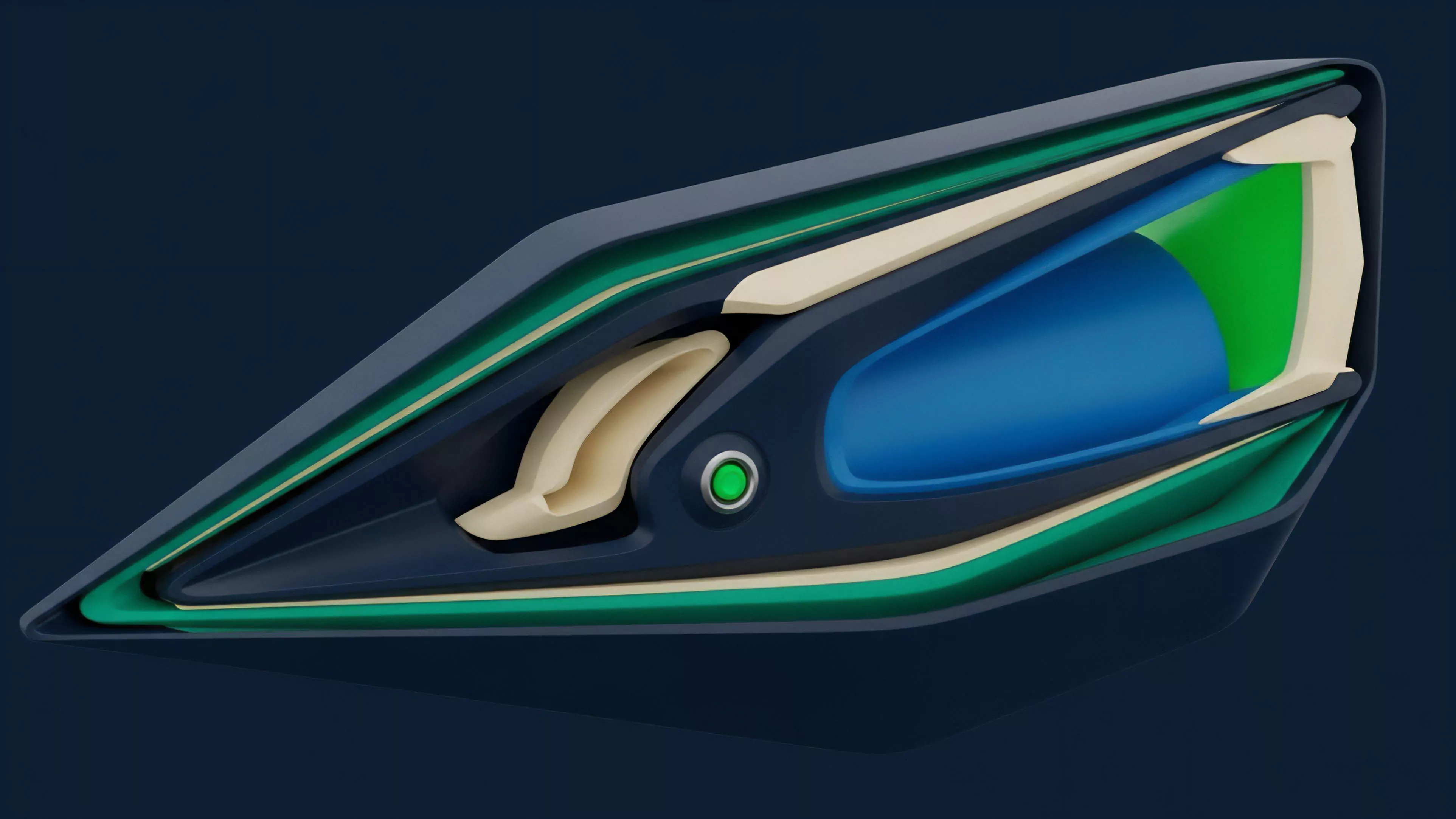

The theoretical framework relies on the separation of execution from consensus. By isolating the computational workload, protocols can leverage specialized hardware or parallelized off-chain agents to perform complex derivative pricing, such as Black-Scholes simulations or dynamic margin calculations, without taxing the base layer.

The resulting proof acts as a compressed audit trail, which the main chain verifies with minimal computational cost.
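To make the split concrete, here is a minimal sketch of the off-chain half: a Black-Scholes call price computed off-chain and bound to its inputs by a hash. The function names and the JSON encoding are assumptions for illustration. A SHA-256 commitment is only a stand-in: it binds the result to the inputs but does not prove the computation was done correctly, which is the job a succinct proof system would perform in a real deployment.

```python
import hashlib
import json
from math import erf, exp, log, sqrt


def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + erf(x / sqrt(2.0)))


def bs_call(spot: float, strike: float, rate: float, vol: float, t: float) -> float:
    """Black-Scholes price of a European call (no dividends)."""
    d1 = (log(spot / strike) + (rate + 0.5 * vol ** 2) * t) / (vol * sqrt(t))
    d2 = d1 - vol * sqrt(t)
    return spot * norm_cdf(d1) - strike * exp(-rate * t) * norm_cdf(d2)


def commit(inputs: dict, output: float) -> str:
    """Hash commitment over inputs and output (placeholder for a real proof)."""
    payload = json.dumps({"inputs": inputs, "output": round(output, 8)}, sort_keys=True)
    return hashlib.sha256(payload.encode()).hexdigest()


inputs = {"spot": 100.0, "strike": 100.0, "rate": 0.05, "vol": 0.2, "t": 1.0}
price = bs_call(**inputs)          # roughly 10.45 for these textbook inputs
commitment = commit(inputs, price)  # 64-character hex digest
```

Only the small commitment (or, in practice, the proof) needs to reach the base layer; the pricing work itself stays off-chain.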

Decoupling execution from consensus allows protocols to scale financial complexity while maintaining the integrity of on-chain settlement.

Quantitative modeling within this structure necessitates rigorous attention to the interaction between the proof generation latency and the market’s volatility. The systemic risk resides in the potential for proof generation delays to induce liquidity gaps during high-volatility events. My concern centers on how these lag times affect margin calls; if the computation for a liquidation threshold lags behind real-time market price movements, the protocol risks insolvency due to outdated risk data.
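One rough way to size this exposure is to estimate how far the price could move while a proof is being generated, and to pad the margin requirement by that amount. The sketch below assumes square-root-of-time volatility scaling (a simple diffusion model, which understates jumps) and uses hypothetical names and a hypothetical 3-sigma buffer; it is an illustration of the concern, not a calibrated risk model.

```python
from math import sqrt

SECONDS_PER_YEAR = 365 * 24 * 60 * 60


def stale_move(annual_vol: float, latency_s: float, z: float = 3.0) -> float:
    """Approximate z-sigma fractional price move over the proof latency window.

    Assumes volatility scales with the square root of elapsed time.
    """
    return z * annual_vol * sqrt(latency_s / SECONDS_PER_YEAR)


def buffered_margin(base_margin: float, annual_vol: float, latency_s: float) -> float:
    """Maintenance margin padded to absorb price drift during proof generation."""
    return base_margin + stale_move(annual_vol, latency_s)


# With 80% annualized volatility and a 60-second proof time, the 3-sigma
# drift is about a third of one percent of the position's value.
drift = stale_move(0.8, 60.0)
```

Because the buffer grows with the square root of latency, cutting proof time by a factor of four only halves the required padding under this model.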

| Mechanism | Verification Cost | Computational Latency |
| --- | --- | --- |
| ZK-Proofs | Low | High |
| Fraud Proofs | Medium | Low |
| Trusted Execution Environments | Minimal | Minimal |

The mathematical rigor required for these proofs creates a new layer of systemic dependency. We are essentially betting that the cryptographic guarantees of the proof outweigh the risk that the off-chain execution environment itself is exploited.

Approach

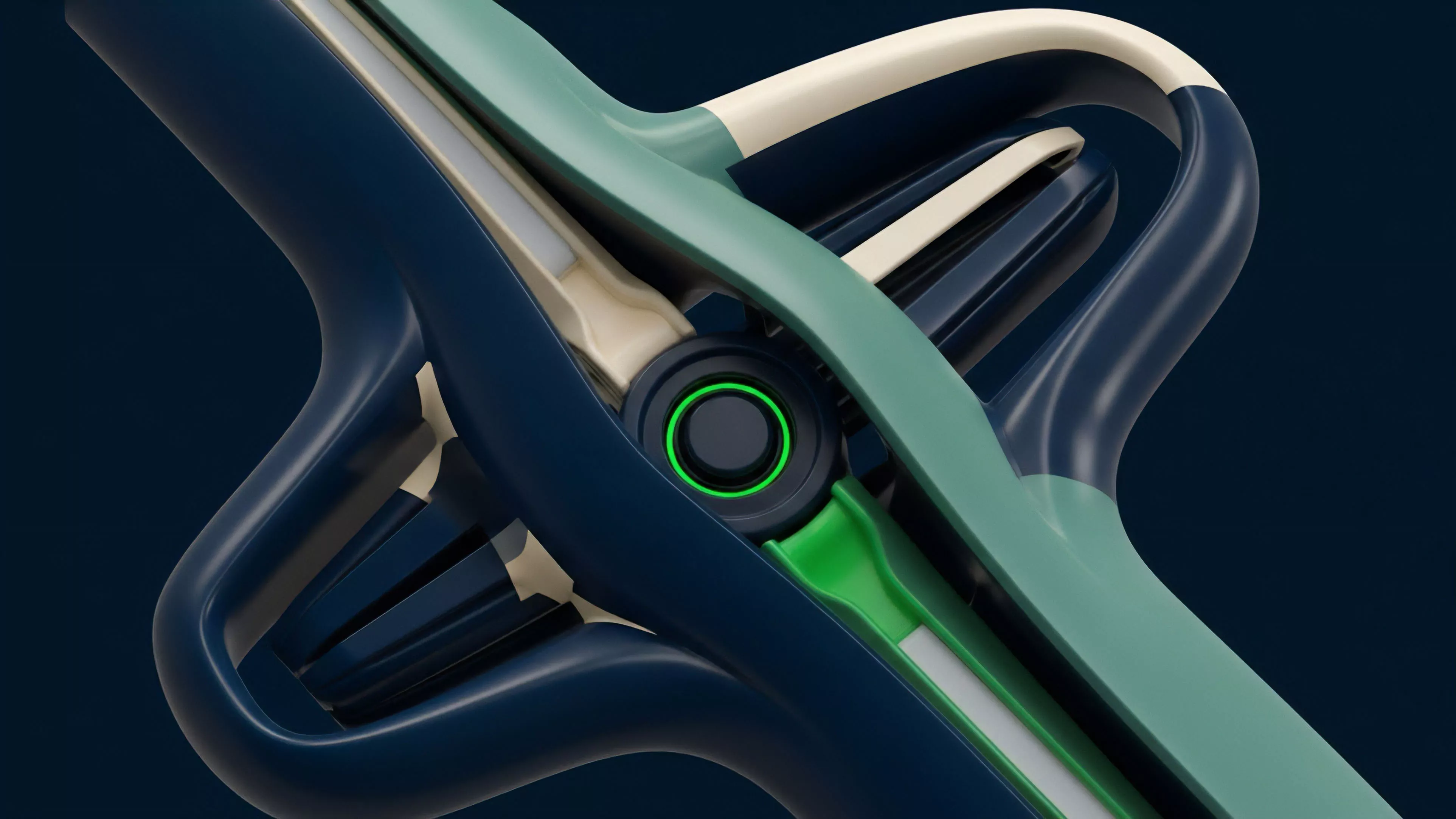

Current implementations favor hybrid models where specialized sequencers or decentralized oracle networks handle the heavy computation. These entities submit the results alongside cryptographic proofs to the smart contract, which validates the output before updating the state.

This architecture facilitates real-time derivative settlement, allowing for dynamic portfolio rebalancing and margin maintenance that would be otherwise impossible.
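The submit-then-validate flow can be sketched as a contract that refuses to touch its state unless verification passes. This is a minimal model under stated assumptions: `SettlementContract`, `Verifier`, and `toy_verify` are hypothetical names, and the hash check in `toy_verify` is a placeholder that offers no real guarantee about the computation. A production system would plug in an actual proof verifier.

```python
import hashlib
from dataclasses import dataclass, field
from typing import Callable, Dict

# (public_output, proof) -> is the proof valid for this output?
Verifier = Callable[[bytes, bytes], bool]


def toy_verify(output: bytes, proof: bytes) -> bool:
    """Placeholder check: accepts only if proof == SHA-256(output)."""
    return hashlib.sha256(output).digest() == proof


@dataclass
class SettlementContract:
    verify: Verifier
    state: Dict[str, bytes] = field(default_factory=dict)

    def submit(self, key: str, output: bytes, proof: bytes) -> bool:
        """Apply the off-chain result only if its proof verifies."""
        if not self.verify(output, proof):
            return False  # rejected: state is left untouched
        self.state[key] = output
        return True
```

Keeping the verifier as an injected callable mirrors the hybrid model described above: the settlement logic stays fixed while the proof system behind it can be swapped.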

- Decentralized Sequencer Networks aggregate and process order flow to ensure fair sequencing before generating the necessary computational proofs.

- Hardware Accelerated Provers utilize field-programmable gate arrays to reduce the time required for complex cryptographic proof generation.

- Modular Settlement Layers allow protocols to customize the verification parameters based on the specific risk profile of the derivative instrument.

This approach necessitates a high degree of transparency regarding the hardware and software stack of the provers. Market participants must assess the risk of prover collusion or failure, which could lead to temporary suspension of settlement or incorrect state updates. I find the current trend toward prover decentralization to be a positive development, as it mitigates the single-point-of-failure risks inherent in early, centralized implementations.

Evolution

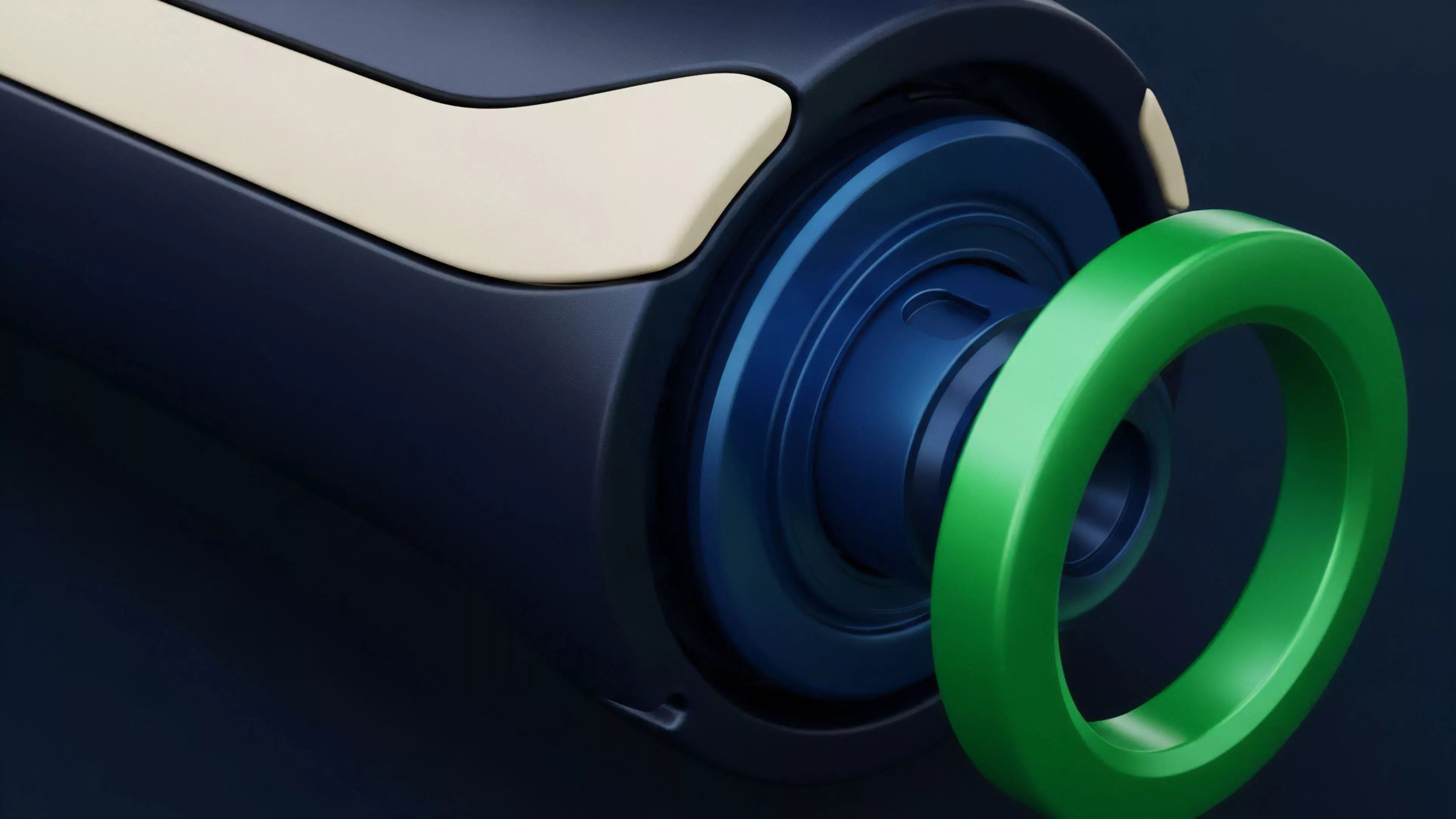

Architectures have moved from simple, monolithic execution toward highly specialized, modular designs.

Early designs suffered from severe throughput limitations, forcing developers to compromise on the sophistication of financial instruments. Today, we observe a maturation where the focus shifts toward optimizing the cost-to-verify ratio, enabling the deployment of institutional-grade derivative protocols that target sub-second finality.

Modular architectures enable specialized execution environments that support advanced financial engineering without compromising base-layer security.

The historical trajectory mirrors the development of traditional exchange technology, where the push for lower latency and higher complexity consistently drives architectural innovation. We are witnessing the maturation of the decentralized clearinghouse. This evolution is not linear; it is characterized by intense periods of experimentation followed by consolidation around proven cryptographic standards.

I am particularly struck by how the integration of recursive proof composition allows for the aggregation of multiple financial transactions into a single, succinct verification event.
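The shape of that aggregation can be illustrated with a pairwise hash tree, offered here only as a structural analogy. A Merkle-style fold commits to many items with one digest, but it does not prove that any of them is valid; recursive proof composition additionally verifies each child proof inside the parent. The function names below are hypothetical.

```python
import hashlib
from typing import List


def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def aggregate(leaves: List[bytes]) -> bytes:
    """Fold many per-transaction digests into a single 32-byte root."""
    if not leaves:
        raise ValueError("nothing to aggregate")
    level = [h(x) for x in leaves]
    while len(level) > 1:
        if len(level) % 2:
            level.append(level[-1])  # duplicate the last node on odd-sized levels
        level = [h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
    return level[0]
```

However many transactions go in, the verifier on the base layer handles one fixed-size value, which is the property that makes the recursive approach attractive for settlement.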

Horizon

The future lies in the complete abstraction of computational complexity from the user experience. We are moving toward environments where protocols automatically negotiate the optimal prover infrastructure based on the required speed, cost, and security of the specific derivative contract. This will allow for the seamless integration of cross-chain liquidity, where computational proofs verify state across disparate protocols without requiring trust in third-party bridges.
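A very simple version of such negotiation is a constrained selection: filter provers by the contract's latency and security requirements, then take the cheapest. The `ProverQuote` fields and the `select_prover` function are hypothetical, and a real prover market would presumably involve bidding, reputation, and penalties that this sketch leaves out.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class ProverQuote:
    name: str
    latency_s: float     # quoted time to produce a proof
    cost: float          # quoted fee, in arbitrary units
    security_bits: int   # claimed security level of the proof system


def select_prover(
    quotes: Iterable[ProverQuote],
    max_latency_s: float,
    min_security_bits: int,
) -> Optional[ProverQuote]:
    """Return the cheapest quote meeting both constraints, or None if none does."""
    eligible = [
        q for q in quotes
        if q.latency_s <= max_latency_s and q.security_bits >= min_security_bits
    ]
    return min(eligible, key=lambda q: q.cost, default=None)
```

A short-dated option might pass a tight `max_latency_s`, while a slow-moving collateral check could relax it and accept a cheaper quote.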

| Innovation Vector | Systemic Impact |
| --- | --- |
| Recursive Proof Composition | Scalable multi-protocol settlement |
| Hardware-Level ZK Integration | Near-instantaneous derivative pricing |
| Automated Prover Markets | Optimized cost for execution |

Strategic positioning requires recognizing that the ultimate value lies in the protocol’s ability to maintain liquidity under extreme market stress. As these systems become more efficient, the competition will shift from basic functionality to the precision of risk models and the robustness of the proof generation process. The survival of decentralized finance depends on our ability to build systems that remain deterministic even when the underlying markets are in total disarray.