Essence

Probabilistic Models in the context of crypto derivatives function as the mathematical architecture for quantifying uncertainty within decentralized environments. These frameworks transform raw market data into structured distributions, allowing participants to price risk where traditional assumptions of market continuity often fail. By mapping potential price paths against time-weighted volatility, these models provide the quantitative foundation for fair value determination and margin requirement calibration.

Probabilistic models serve as the mathematical scaffolding for pricing uncertainty and managing risk in decentralized derivative markets.

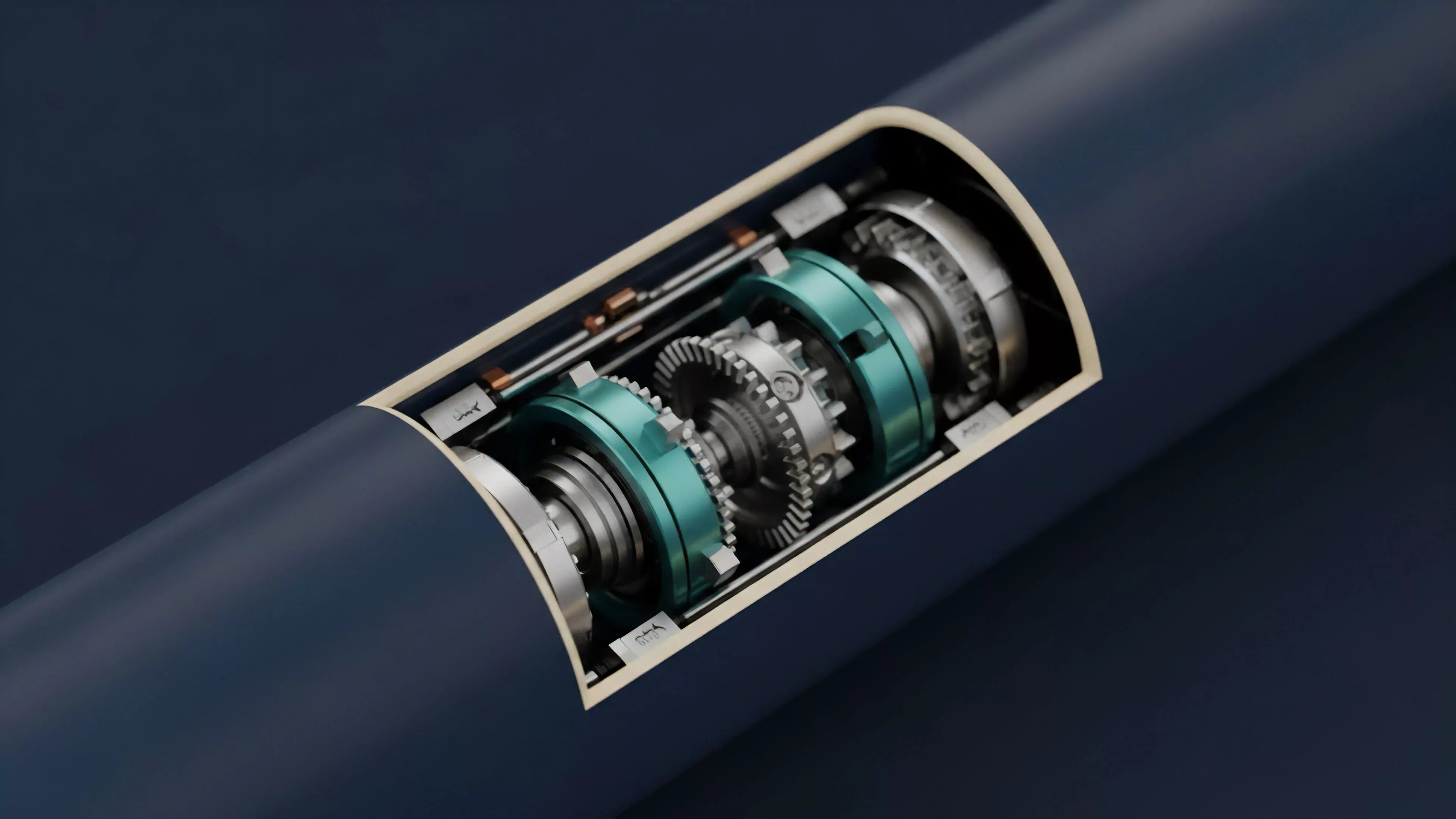

The systemic utility of these models extends beyond mere valuation. They act as the primary interface between stochastic calculus and smart contract execution. Liquidity providers and automated market makers use them to set the spread they quote, keeping capital productive while protecting the protocol from toxic flow, meaning orders from counterparties with better information about the next price move.

The core challenge involves calibrating these models to account for the extreme tail risks and rapid regime shifts common in digital asset markets.

Origin

The genesis of Probabilistic Models in digital finance traces back to the adaptation of classical quantitative finance frameworks, specifically the Black-Scholes-Merton model and its subsequent refinements for stochastic volatility. Early decentralized finance experiments attempted to port these legacy systems directly onto blockchain rails, yet quickly encountered the friction of high latency and the absence of reliable, high-frequency price oracles. This initial period highlighted the incompatibility of traditional continuous-time models with the discrete, block-based nature of decentralized settlement.

Historical adaptation of classical quantitative finance frameworks to decentralized environments necessitated significant architectural modifications to account for block-based settlement constraints.

The evolution shifted toward bespoke models designed specifically for the realities of on-chain liquidity. Developers recognized that the lack of central clearinghouses meant that models had to incorporate endogenous risk factors, such as liquidation cascades and protocol-specific governance vulnerabilities. This shift marked the transition from passive replication of legacy models to the creation of native, adaptive systems that treat network congestion and gas price volatility as integral components of the option pricing equation.

Theory

The theoretical structure of Probabilistic Models rests on the interaction between underlying asset price processes and the volatility surface.

In decentralized markets, this interaction is mediated by the specific consensus mechanism and the throughput constraints of the underlying blockchain.

Mathematical Foundations

- Stochastic Processes provide the foundational logic for modeling asset price movement. Geometric Brownian Motion serves as the continuous-path baseline, while Jump-Diffusion models add discrete jumps to capture the discontinuous nature of crypto price action.

- Volatility Surfaces represent the term structure and skew of implied volatility, allowing traders to observe how market participants price different strike prices and maturities.

- Greeks Analysis measures the sensitivity of an option value to its inputs: Delta and Gamma to the underlying price, Vega to implied volatility, and Theta to the passage of time. These sensitivities are critical for hedging strategies.
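
To make the Greeks concrete, here is a minimal sketch of the closed-form Black-Scholes sensitivities for a European call. It assumes constant volatility, a constant rate, and no dividends or funding payments, none of which hold exactly for crypto options. The function name `bs_call_greeks` and the example parameters are illustrative, not taken from any protocol.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def norm_pdf(x: float) -> float:
    """Standard normal probability density function."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def bs_call_greeks(spot: float, strike: float, rate: float,
                   vol: float, tau: float) -> dict:
    """Price and Greeks of a European call under Black-Scholes.

    vol is annualized volatility, tau is time to expiry in years.
    Assumption: constant vol and rate, no dividends.
    """
    sqrt_tau = math.sqrt(tau)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * tau) / (vol * sqrt_tau)
    d2 = d1 - vol * sqrt_tau
    discount = math.exp(-rate * tau)
    return {
        "price": spot * norm_cdf(d1) - strike * discount * norm_cdf(d2),
        "delta": norm_cdf(d1),                           # d price / d spot
        "gamma": norm_pdf(d1) / (spot * vol * sqrt_tau),  # d delta / d spot
        "vega": spot * norm_pdf(d1) * sqrt_tau,           # d price / d vol
        "theta": (-spot * norm_pdf(d1) * vol / (2.0 * sqrt_tau)
                  - rate * strike * discount * norm_cdf(d2)),  # per year
    }


if __name__ == "__main__":
    # Hypothetical at-the-money call: 80% annualized vol, three months out.
    for name, value in bs_call_greeks(100.0, 100.0, 0.0, 0.8, 0.25).items():
        print(f"{name}: {value:.4f}")
```

A hedger would typically offset Delta with the underlying and monitor Gamma and Vega to decide how often that hedge needs refreshing.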

Theoretical robustness depends on integrating stochastic price processes with protocol-specific constraints to accurately model market dynamics.

The model architecture often incorporates Bayesian inference to update probability distributions in real time as new trade data enters the mempool. This creates a feedback loop in which the model constantly recalibrates based on observed order flow. The difficulty is twofold: the model must remain performant within the execution limits of smart contracts, and it must avoid overfitting to noisy, high-frequency data.
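
One simple way to picture this recalibration loop is a conjugate Bayesian update of return variance. The sketch below assumes zero-mean, normally distributed returns and an inverse-gamma prior on the variance. Both are simplifying assumptions chosen because the update then has a closed form, and the class and function names are hypothetical.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class InvGammaBelief:
    """Inverse-gamma belief over the variance of zero-mean normal returns."""
    alpha: float  # shape
    beta: float   # scale

    def mean_variance(self) -> float:
        """Posterior mean of the variance (defined only for alpha > 1)."""
        if self.alpha <= 1.0:
            raise ValueError("mean is undefined for alpha <= 1")
        return self.beta / (self.alpha - 1.0)


def update(belief: InvGammaBelief, returns: Sequence[float]) -> InvGammaBelief:
    """Conjugate update: each observation adds 1/2 to the shape and
    half its squared value to the scale."""
    sum_sq = sum(r * r for r in returns)
    return InvGammaBelief(
        alpha=belief.alpha + 0.5 * len(returns),
        beta=belief.beta + 0.5 * sum_sq,
    )


if __name__ == "__main__":
    prior = InvGammaBelief(alpha=3.0, beta=0.002)
    posterior = update(prior, [0.05, -0.05, 0.10, -0.10])
    print(prior.mean_variance(), posterior.mean_variance())
```

Because the update only adds two running sums, it can be applied trade by trade or in batches with the same result, which is one reason conjugate forms are attractive when computation is constrained.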

| Model Component | Functional Objective |
| --- | --- |
| Distribution Fitting | Characterizing asset return probabilities |
| Parameter Estimation | Calibrating sensitivity to market shocks |
| Simulation Engine | Stress testing against tail-event scenarios |
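
As an illustration of the simulation engine row, the following sketch draws terminal log returns from a Merton-style jump-diffusion and compares its lower tail with a pure diffusion. The parameter values are invented for the example, the drift is set so the expected price equals the starting price, and the Poisson sampler uses Knuth's multiplication method, which is adequate only for small means.

```python
import math
import random
from typing import List


def sample_poisson(rng: random.Random, mean: float) -> int:
    """Knuth's method: count uniform draws until their product drops below e^-mean."""
    threshold = math.exp(-mean)
    count, product = 0, rng.random()
    while product > threshold:
        count += 1
        product *= rng.random()
    return count


def simulate_log_returns(n_paths: int, horizon_years: float, vol: float,
                         jump_rate: float, jump_mean: float, jump_std: float,
                         seed: int = 0) -> List[float]:
    """Terminal log returns: diffusion plus a Poisson number of normal jumps."""
    rng = random.Random(seed)
    # Compensator keeps the expected simple return at zero despite the jumps.
    k = math.exp(jump_mean + 0.5 * jump_std ** 2) - 1.0
    drift = (-0.5 * vol ** 2 - jump_rate * k) * horizon_years
    diffusion_std = vol * math.sqrt(horizon_years)
    results = []
    for _ in range(n_paths):
        n_jumps = sample_poisson(rng, jump_rate * horizon_years)
        jumps = sum(rng.gauss(jump_mean, jump_std) for _ in range(n_jumps))
        results.append(drift + diffusion_std * rng.gauss(0.0, 1.0) + jumps)
    return results


def lower_quantile(samples: List[float], q: float) -> float:
    """Empirical q-quantile (simple order-statistic estimate)."""
    ordered = sorted(samples)
    return ordered[int(q * len(ordered))]


if __name__ == "__main__":
    week = 7.0 / 365.0
    diffusion_only = simulate_log_returns(20000, week, 0.8, 0.0, 0.0, 0.0, seed=1)
    with_jumps = simulate_log_returns(20000, week, 0.8, 20.0, -0.08, 0.10, seed=1)
    print("1% quantile, diffusion only:", lower_quantile(diffusion_only, 0.01))
    print("1% quantile, with jumps:   ", lower_quantile(with_jumps, 0.01))
```

The gap between the two quantiles is the part of tail risk that a diffusion-only model would miss under these assumed parameters.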

Approach

Current implementations prioritize the synthesis of off-chain computation and on-chain verification. Market makers and protocol architects employ hybrid architectures to maintain precision without sacrificing speed. By offloading complex simulations to decentralized oracle networks or specialized computation layers, protocols achieve a balance between rigorous pricing and the necessity of rapid trade execution.

Modern implementation strategies leverage hybrid computation models to achieve the required balance between mathematical precision and execution speed.

The practical application focuses on managing the Liquidation Threshold. Models are configured to dynamically adjust collateral requirements based on the current volatility regime. If the model detects an increase in market stress, it preemptively tightens the margin constraints to mitigate systemic risk.

This approach reflects a shift from static, rule-based systems to intelligent, state-dependent frameworks that adapt to the adversarial nature of decentralized trading environments.
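
A state-dependent margin rule of this kind can be sketched with an exponentially weighted volatility estimate. The decay factor, confidence multiplier, floor, and cap below are placeholder values chosen for illustration and do not describe any specific protocol.

```python
import math
from typing import Sequence


def ewma_volatility(returns: Sequence[float], decay: float = 0.94) -> float:
    """Exponentially weighted volatility; recent returns count the most."""
    if not returns:
        raise ValueError("need at least one return")
    variance = returns[0] ** 2
    for r in returns[1:]:
        variance = decay * variance + (1.0 - decay) * r * r
    return math.sqrt(variance)


def margin_requirement(returns: Sequence[float], z_score: float = 2.33,
                       horizon_periods: float = 1.0, floor: float = 0.05,
                       cap: float = 1.0, decay: float = 0.94) -> float:
    """Margin as a fraction of notional: a volatility multiple, clamped
    between a floor and a cap (all parameters are assumptions)."""
    vol = ewma_volatility(returns, decay)
    raw = z_score * vol * math.sqrt(horizon_periods)
    return min(cap, max(floor, raw))


if __name__ == "__main__":
    calm = [0.01, -0.01] * 20
    stressed = calm + [0.08, -0.09, 0.10]
    print("calm regime margin:    ", margin_requirement(calm))
    print("stressed regime margin:", margin_requirement(stressed))
```

In the calm series the floor binds, while three large moves are enough to push the requirement well above it, which is the tightening behavior described above.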

Evolution

The trajectory of Probabilistic Models has moved from simple, monolithic structures to complex, modular systems capable of handling multi-asset portfolios. Initially, protocols treated each derivative instrument in isolation. Today, the focus is on cross-margining and portfolio-level risk assessment, where models evaluate the correlations between different assets to optimize capital efficiency.

Evolutionary trends favor modular, cross-margining architectures that optimize capital efficiency through holistic portfolio risk assessment.
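
The capital-efficiency argument can be shown with a small variance calculation. This sketch compares the sum of per-position margins with a single portfolio-level margin that accounts for correlation. It assumes returns are jointly normal and that margin is a fixed multiple of standard deviation. The exposures, volatilities, and correlation are hypothetical.

```python
import math
from typing import Sequence


def isolated_margin(exposures: Sequence[float], vols: Sequence[float],
                    z_score: float = 2.33) -> float:
    """Each position margined on its own, ignoring offsets."""
    return sum(z_score * abs(e) * v for e, v in zip(exposures, vols))


def portfolio_margin(exposures: Sequence[float], vols: Sequence[float],
                     corr: Sequence[Sequence[float]],
                     z_score: float = 2.33) -> float:
    """Margin on the net portfolio: z * sqrt(w' * Sigma * w)."""
    n = len(exposures)
    variance = 0.0
    for i in range(n):
        for j in range(n):
            variance += (exposures[i] * exposures[j]
                         * vols[i] * vols[j] * corr[i][j])
    return z_score * math.sqrt(max(variance, 0.0))


if __name__ == "__main__":
    # Signed notionals: long one asset, short a correlated one.
    exposures = [100.0, -80.0]
    vols = [0.04, 0.05]            # per-period volatilities (assumed)
    corr = [[1.0, 0.8], [0.8, 1.0]]
    print("isolated: ", isolated_margin(exposures, vols))
    print("portfolio:", portfolio_margin(exposures, vols, corr))
```

The saving depends entirely on the correlation estimate. If correlations move toward one in the wrong direction during stress, the portfolio figure converges back to the isolated one.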

This development has been driven by the need to survive cycles of extreme volatility. As market participants became more sophisticated, the models had to account for reflexivity, where the act of hedging itself impacts the underlying asset price. The industry is currently witnessing the integration of machine learning techniques into these models to better predict liquidity gaps and order flow toxicity, moving away from rigid parametric assumptions toward more flexible, data-driven frameworks.

- Modular Design allows protocols to swap pricing engines as market conditions change.

- Cross-Asset Correlation models have become standard for determining margin requirements in complex derivative portfolios.

- Automated Rebalancing mechanisms now utilize probabilistic outputs to trigger risk-mitigation trades before liquidation thresholds are breached.
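
The rebalancing trigger in the last item can be sketched as a probability check. The example below estimates the chance that the price ends a short horizon below the liquidation price under a driftless lognormal model, then compares it with a tolerance. It measures only the end-of-horizon price and ignores breaches that occur and reverse within the horizon. The threshold and function names are assumptions.

```python
import math
from statistics import NormalDist


def breach_probability(spot: float, liquidation_price: float,
                       vol: float, horizon_years: float) -> float:
    """P(price at horizon < liquidation price) for a driftless lognormal price.

    vol is annualized. This is a terminal probability, not a first-passage one.
    """
    std = vol * math.sqrt(horizon_years)
    z = (math.log(liquidation_price / spot) + 0.5 * std ** 2) / std
    return NormalDist().cdf(z)


def should_rebalance(spot: float, liquidation_price: float, vol: float,
                     horizon_years: float, tolerance: float = 0.01) -> bool:
    """Trigger a risk-reducing trade once breach probability exceeds tolerance."""
    return breach_probability(spot, liquidation_price, vol, horizon_years) > tolerance


if __name__ == "__main__":
    one_day = 1.0 / 365.0
    for spot in (100.0, 90.0, 82.0):
        p = breach_probability(spot, 80.0, 0.8, one_day)
        print(spot, f"{p:.6f}", should_rebalance(spot, 80.0, 0.8, one_day))
```

Acting on a probability rather than on the threshold itself gives the position time to de-risk while liquidity is still available.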

Horizon

The future of Probabilistic Models lies in the intersection of zero-knowledge cryptography and high-fidelity risk modeling. As computational overhead for cryptographic proofs decreases, we will see the emergence of fully on-chain, privacy-preserving risk engines. These systems will allow institutional-grade risk assessment over private position data while the results remain verifiable by the protocol’s consensus layer.

Future advancements will likely focus on the integration of zero-knowledge proofs to enable private, high-fidelity risk modeling within decentralized protocols.

The ultimate objective is the creation of a unified, interoperable risk standard for decentralized derivatives. By standardizing how probability distributions are calculated and reported, the ecosystem will gain a shared language for quantifying systemic risk. This will allow for the development of automated, cross-protocol insurance mechanisms, fundamentally changing how capital is allocated and protected in decentralized finance.

| Future Focus Area | Expected Impact |
| --- | --- |
| Zero-Knowledge Risk Proofs | Enhanced privacy with verifiable safety |
| Cross-Protocol Risk Standards | Reduced contagion through shared metrics |
| AI-Driven Adaptive Models | Faster response to regime shifts |