Essence

Privacy Preserving Machine Learning trains and runs models on encrypted or obfuscated datasets, so that sensitive inputs remain hidden from the entity performing the computation. This framework addresses the fundamental tension between data utility and data confidentiality in decentralized financial environments. By leveraging cryptographic primitives, institutions can execute complex predictive models, such as risk scoring or volatility forecasting, without exposing the underlying raw data points to potential adversaries or centralized intermediaries.

Privacy Preserving Machine Learning enables model training on encrypted data to maintain confidentiality while extracting actionable financial insights.

The systemic relevance of this technology lies in its ability to unlock siloed data for market participants. Traditional order flow data and user behavior metrics often remain locked behind regulatory or competitive walls. Through secure computation, these entities contribute to aggregate intelligence without sacrificing proprietary advantage.

The result is a more robust information environment where the collective knowledge of the market grows while individual data sovereignty remains intact.

Origin

The architectural roots of Privacy Preserving Machine Learning trace back to theoretical breakthroughs in Homomorphic Encryption and Secure Multi-Party Computation. Before these techniques, data generally had to be decrypted before it could be processed, a constraint that stifled collaborative research and automated financial decision-making. Researchers recognized that if data could remain encrypted while undergoing mathematical transformations, data processing could shift from reliance on centralized trust to guarantees grounded in cryptographic assumptions.

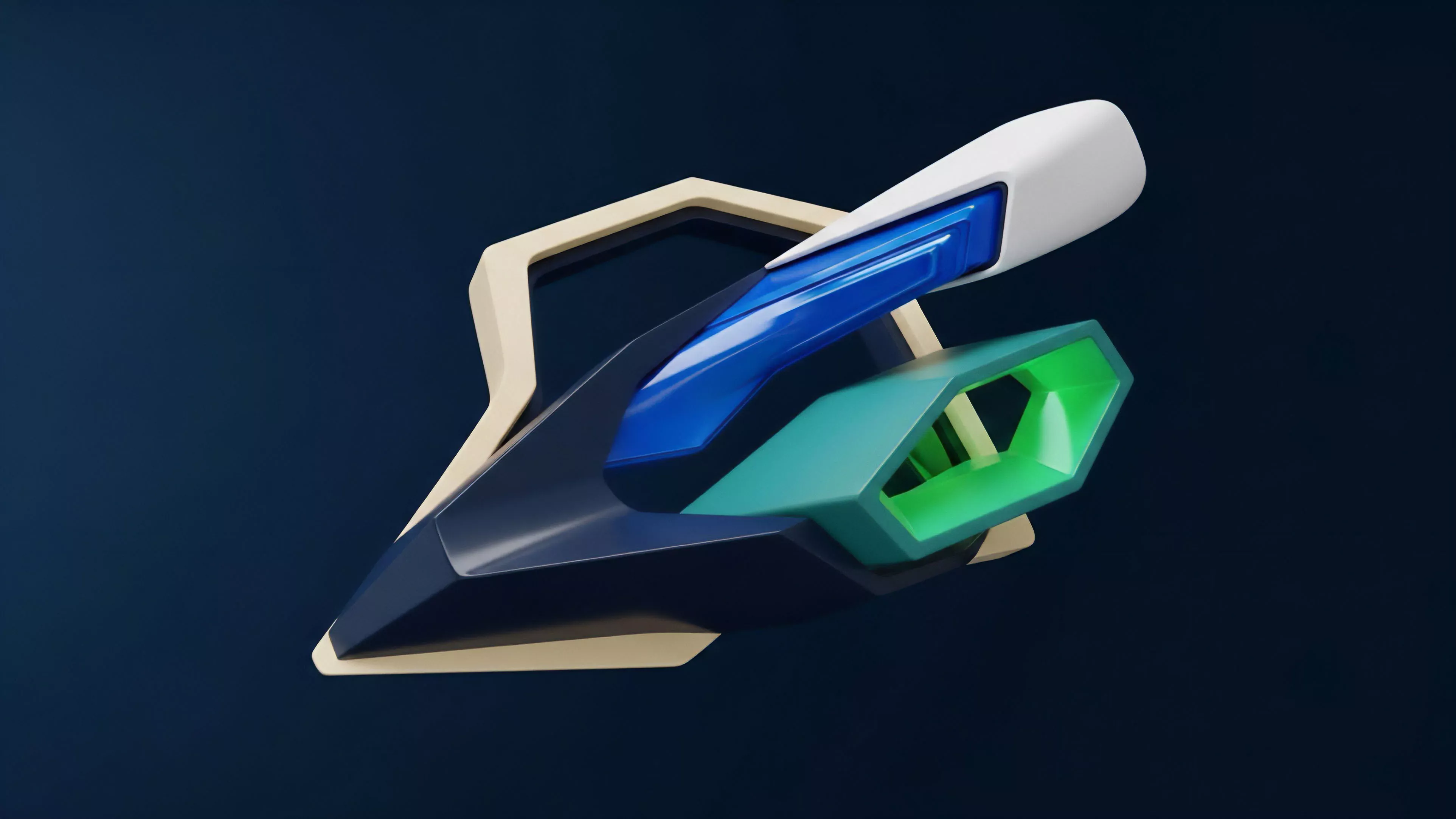

The evolution of these techniques moved from abstract academic concepts into the practical domain through the development of Zero-Knowledge Proofs and Trusted Execution Environments. As blockchain networks sought to provide institutional-grade financial instruments, the requirement for privacy in automated strategy execution became a primary constraint. This drove the integration of these cryptographic methods into decentralized protocols, allowing for the creation of privacy-first margin engines and predictive market makers that function without revealing private position data.

Theory

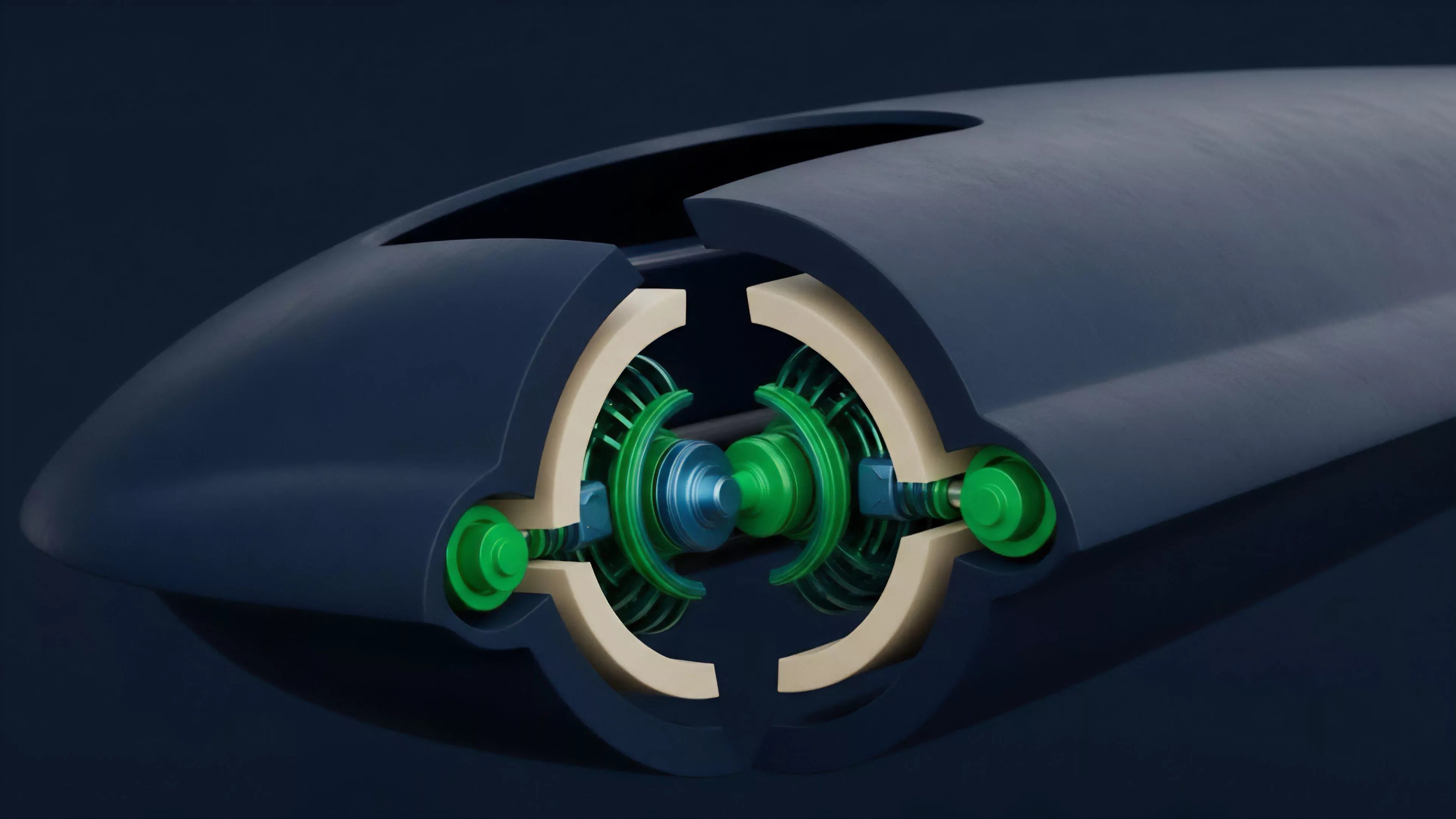

The structure of Privacy Preserving Machine Learning relies on a multi-layered cryptographic stack that ensures both data integrity and model accuracy.

At the base, Homomorphic Encryption allows for arithmetic operations on ciphertexts, producing an encrypted result that, when decrypted, matches the output of operations performed on plaintext. This creates a powerful mechanism for secure data aggregation in decentralized markets.
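As a minimal sketch of computing on ciphertexts, the block below implements a toy version of the Paillier cryptosystem, which is additively homomorphic only (it supports adding encrypted values, not arbitrary arithmetic, so it is not fully homomorphic). The tiny primes, the function names, and the "exposure" values are assumptions chosen for illustration; real deployments use vetted libraries and keys of 2048 bits or more.

```python
import math
import secrets


def keygen(p, q):
    """Build a toy Paillier key pair from two distinct primes (primality is assumed, not checked)."""
    n = p * q
    lam = (p - 1) * (q - 1) // math.gcd(p - 1, q - 1)  # lcm(p - 1, q - 1)
    # With generator g = n + 1, L(g^lam mod n^2) equals lam mod n,
    # so the decryption constant mu is the inverse of lam modulo n.
    mu = pow(lam, -1, n)  # modular inverse, Python 3.8+
    return n, (lam, mu)


def encrypt(n, m):
    """Encrypt an integer 0 <= m < n under public key n (generator g = n + 1)."""
    n_sq = n * n
    while True:
        r = secrets.randbelow(n - 1) + 1  # random r in [1, n - 1]
        if math.gcd(r, n) == 1:
            break
    return (pow(n + 1, m, n_sq) * pow(r, n, n_sq)) % n_sq


def decrypt(n, private_key, c):
    """Recover the plaintext from ciphertext c."""
    lam, mu = private_key
    l_value = (pow(c, lam, n * n) - 1) // n  # L(x) = (x - 1) / n
    return (l_value * mu) % n


def add_encrypted(n, c1, c2):
    """Multiplying ciphertexts adds the underlying plaintexts (mod n)."""
    return (c1 * c2) % (n * n)


# Hypothetical usage: two parties encrypt exposures, a third party adds them blind.
n, private_key = keygen(1009, 1013)  # toy primes, far too small for real use
exposure_a = encrypt(n, 1200)
exposure_b = encrypt(n, 345)
encrypted_total = add_encrypted(n, exposure_a, exposure_b)
print(decrypt(n, private_key, encrypted_total))  # 1545
```

The party calling `add_encrypted` never holds the private key, yet the key holder decrypts the correct sum, which is the property the paragraph above describes.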

- Secure Multi-Party Computation facilitates joint computation where participants compute a function over their inputs while keeping those inputs private.

- Federated Learning distributes the training process across decentralized nodes, sharing only model weight updates rather than raw data.

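- Differential Privacy adds calibrated random noise to query results, model updates, or the data itself, bounding how much any single record can influence the output and so limiting the re-identification of individual data points within an aggregate model.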
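The first two items in the list can be combined in one sketch: each node additively secret-shares its model weight update across several aggregation servers, so no single server sees an individual update, and only the averaged update is reconstructed. The field modulus, the fixed-point scale, the three-server setup, and all function names are illustrative assumptions; the sketch also assumes honest-but-curious servers that do not collude.

```python
import secrets

PRIME = 2**61 - 1  # field modulus for shares (a Mersenne prime), assumed for illustration
SCALE = 10**6      # fixed-point scale used to encode float updates as integers


def encode(x):
    """Map a float to a field element; negatives wrap around the modulus."""
    return round(x * SCALE) % PRIME


def decode(v):
    """Map a field element back to a float, treating the upper half as negative."""
    if v > PRIME // 2:
        v -= PRIME
    return v / SCALE


def share(value, n_parties):
    """Split a field element into n additive shares that sum to it mod PRIME."""
    shares = [secrets.randbelow(PRIME) for _ in range(n_parties - 1)]
    shares.append((value - sum(shares)) % PRIME)
    return shares


def secure_average(updates, n_servers=3):
    """Average per-node weight updates without any server seeing a raw update."""
    dim = len(updates[0])
    # server_totals[s][j]: running sum of the shares server s received for weight j
    server_totals = [[0] * dim for _ in range(n_servers)]
    for update in updates:
        for j, x in enumerate(update):
            for s, piece in enumerate(share(encode(x), n_servers)):
                server_totals[s][j] = (server_totals[s][j] + piece) % PRIME
    # Combining the per-server totals reveals only the sum over all nodes.
    averaged = []
    for j in range(dim):
        total = sum(server_totals[s][j] for s in range(n_servers)) % PRIME
        averaged.append(decode(total) / len(updates))
    return averaged


# Hypothetical usage: three nodes contribute two-dimensional weight updates.
node_updates = [[0.10, -0.20], [0.30, 0.40], [-0.10, 0.10]]
print(secure_average(node_updates))  # approximately [0.1, 0.1]
```

Each individual share is uniformly random on its own, so a server learns nothing from the shares it holds unless all servers pool them.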

Mathematical noise and encrypted computations form the structural foundation that prevents unauthorized access to sensitive input variables.
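To make the role of noise concrete, here is a minimal sketch of the Laplace mechanism applied to a bounded mean. The clipping bounds, the `epsilon` values, and the function name are assumptions for illustration, and the sensitivity calculation assumes the number of records is public. Naive floating-point sampling like this is known to be unsafe for production use; hardened libraries handle that.

```python
import random


def private_mean(values, lower, upper, epsilon, rng=None):
    """Return the clipped mean of `values` plus Laplace noise scaled to epsilon."""
    rng = rng or random.Random()
    # Clip each record so one record can move the mean only by a bounded amount.
    clipped = [min(max(v, lower), upper) for v in values]
    true_mean = sum(clipped) / len(clipped)
    # Replacing one record changes the mean by at most (upper - lower) / n.
    sensitivity = (upper - lower) / len(clipped)
    scale = sensitivity / epsilon
    # The difference of two i.i.d. exponential draws is Laplace(0, scale).
    noise = rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)
    return true_mean + noise


# Hypothetical usage: position sizes clipped to [0, 10]; smaller epsilon means more noise.
positions = [1.0, 2.0, 3.0, 100.0]
print(private_mean(positions, lower=0.0, upper=10.0, epsilon=0.5))
```

The trade-off is explicit in `scale`: a tighter privacy budget (smaller `epsilon`) or fewer records both increase the noise added to the released statistic.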

The mathematical complexity of these systems introduces significant latency, which dictates the current boundaries of implementation. In high-frequency trading scenarios, the computational overhead of Fully Homomorphic Encryption remains a hurdle. Consequently, architects often employ hybrid approaches, utilizing Trusted Execution Environments for speed while maintaining cryptographic verification for integrity.

This balancing act defines the current frontier of secure financial engineering.

Approach

Current implementation strategies prioritize modularity and compatibility with existing blockchain infrastructures. Developers deploy Privacy Preserving Machine Learning pipelines through decentralized oracle networks that serve as the secure computation layer. By offloading the training of risk models to these specialized environments, protocols maintain the transparency of the blockchain for settlement while preserving the confidentiality of the inputs used for pricing derivatives.

| Methodology | Computational Cost | Privacy Guarantee |
| --- | --- | --- |
| Homomorphic Encryption | Very High | Mathematical |
| Multi-Party Computation | Moderate | Game-Theoretic |
| Trusted Execution | Low | Hardware-Based |

The operational focus centers on the integration of these models into automated market maker architectures. By utilizing Privacy Preserving Machine Learning, liquidity providers adjust their spreads based on private order flow information without revealing the specific size or direction of their trades to the public mempool. This reduces the risk of front-running and improves the overall efficiency of price discovery in decentralized venues.

Evolution

The transition of Privacy Preserving Machine Learning from theoretical research to production-ready protocol architecture mirrors the broader maturation of decentralized finance.

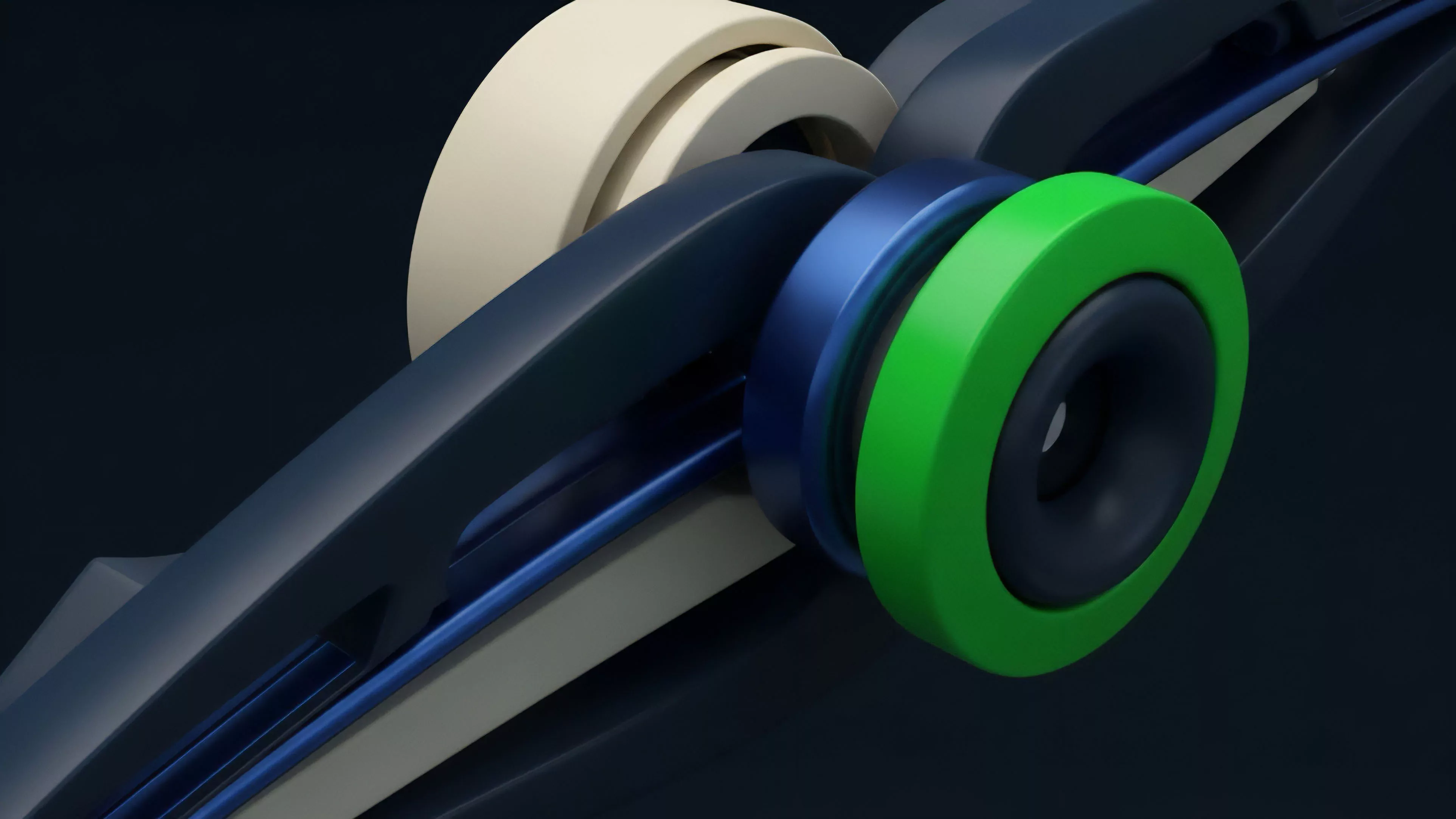

Early iterations struggled with significant performance bottlenecks, limiting their utility to low-frequency tasks. The current generation of protocols has eased these constraints through specialized cryptographic hardware and optimized circuit design, although the overhead of fully homomorphic schemes still limits latency-sensitive workloads. The industry has shifted from attempting to encrypt everything to a more surgical application of privacy primitives.

This refinement allows for faster execution while maintaining the necessary security guarantees. The integration of Zero-Knowledge Machine Learning now allows for the verification of model output integrity, ensuring that the model has not been tampered with by the entity performing the computation. This verification layer adds a critical component to the trustless nature of decentralized systems.
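The integrity idea can be illustrated with a much simpler stand-in: a salted hash commitment to the model weights, checked by re-running the model. This is not a zero-knowledge proof, because the verifier here sees the weights and repeats the computation; a Zero-Knowledge Machine Learning system would replace the reveal and re-execution with a succinct proof. The linear model and all names are hypothetical.

```python
import hashlib
import hmac
import json


def commit_model(weights, salt):
    """Publish this digest before inference to bind the operator to one set of weights."""
    payload = json.dumps(weights).encode("utf-8")
    return hashlib.sha256(salt + payload).hexdigest()


def predict(weights, features):
    """Toy linear model standing in for the real risk model."""
    return sum(w * x for w, x in zip(weights, features))


def verify_output(commitment, weights, salt, features, claimed_output):
    """Check that the revealed weights match the commitment and reproduce the claimed output."""
    recomputed = commit_model(weights, salt)
    if not hmac.compare_digest(recomputed, commitment):
        return False  # weights differ from the ones committed to
    return predict(weights, features) == claimed_output


# Hypothetical usage: the operator commits first, then later justifies an output.
weights = [2, -1, 3]
salt = b"demo-salt"
commitment = commit_model(weights, salt)
output = predict(weights, [1, 4, 2])
print(verify_output(commitment, weights, salt, [1, 4, 2], output))  # True
```

Swapping in different weights after the commitment is published changes the digest, so the check fails; that is the tamper-evidence property, without the privacy that a real proof system adds.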

Refined cryptographic circuit design enables the verification of model integrity while significantly reducing computational latency for financial applications.

Consider the parallel with the development of early packet-switched networks, where the fundamental challenge was ensuring data reached its destination intact; here, the challenge is ensuring the computation itself remains untampered and private. As these technologies scale, the focus moves toward the standardization of privacy-preserving model architectures, allowing for interoperability between different decentralized liquidity pools and risk management engines.

Horizon

Future developments in Privacy Preserving Machine Learning will likely center on the reduction of computational costs through hardware-accelerated cryptographic primitives. The next phase of development involves the deployment of specialized application-specific integrated circuits designed solely for Zero-Knowledge operations and Homomorphic Encryption.

This hardware-software co-design could reduce latency to the point where real-time, privacy-preserving risk management becomes a practical default for decentralized derivative platforms.

- Cross-Protocol Intelligence will allow liquidity providers to share risk data across different chains without compromising individual user privacy.

- Autonomous Portfolio Management will leverage these models to execute complex, privacy-guaranteed rebalancing strategies on behalf of institutional users.

- Decentralized Model Auditing will provide a trustless framework for verifying the fairness and bias-resistance of financial algorithms.

The ultimate trajectory leads toward a financial system where algorithmic decision-making is ubiquitous yet entirely confidential. This creates an environment where the competitive advantage shifts from the possession of data to the sophistication of the models themselves. The systemic risk associated with centralized data repositories will diminish, replaced by a distributed architecture where the intelligence is public but the inputs remain strictly private.