Essence

Price Feed Optimization represents the structural engineering of data ingestion and processing mechanisms designed to ensure that decentralized derivative protocols operate on accurate, low-latency, and manipulation-resistant valuation signals. It functions as the heartbeat of any automated margin engine, dictating the precision of liquidation thresholds and the reliability of settlement prices. Without robust mechanisms to distill fragmented, noisy, and adversarial exchange data into a singular, reliable index, the entire premise of trustless financial exposure collapses under the weight of oracle latency or localized price spikes.

Price Feed Optimization is the systemic calibration of data ingestion to produce reliable valuation signals for decentralized derivative settlement.

The primary challenge lies in the adversarial nature of digital asset markets, where liquidity is fragmented across disparate venues. Price Feed Optimization methodologies must balance the requirement for rapid updates against the need for filtering outliers that could trigger cascading, erroneous liquidations. This necessitates a sophisticated architecture capable of weighting data sources based on real-time liquidity, historical reliability, and cryptographic proof of origin, rather than relying on simple arithmetic averages that fail during periods of high volatility.

Origin

The necessity for Price Feed Optimization emerged directly from the catastrophic failures of early decentralized lending and derivatives platforms.

Early designs relied on rudimentary single-source oracles or basic time-weighted averages, both of which proved highly susceptible to flash-loan-driven price manipulation. Attackers found that by temporarily skewing the price on a low-liquidity decentralized exchange, they could force liquidations of large collateralized positions and then capture the resulting spread or extract profit from the protocol’s insurance fund.

- Manipulation Resistance: The shift from centralized endpoints to decentralized oracle networks created the foundation for modern verification techniques.

- Latency Mitigation: Developers recognized that block-time constraints often led to stale pricing, necessitating off-chain computation before on-chain settlement.

- Liquidity Aggregation: The evolution of decentralized finance required protocols to synthesize data from multiple high-volume venues to establish a representative market price.

These early vulnerabilities forced the industry to move beyond naive data feeds. Architects began implementing multi-source aggregation, medianizer functions, and circuit breakers, marking the transition toward more rigorous, defensive data architectures. This era established the foundational understanding that price discovery in a decentralized environment is not a passive observation but an active, security-critical protocol design choice.
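The two defensive primitives named above, a medianizer and a circuit breaker, can be sketched in a few lines. This is a minimal illustration, not any specific protocol's implementation: the function names, the 10% `max_move` limit, and the sample quotes are all assumptions chosen for the example.

```python
from statistics import median


def medianize(quotes):
    """Return the median of the reported quotes (hypothetical input: list of floats)."""
    if not quotes:
        raise ValueError("no quotes supplied")
    return median(quotes)


def circuit_breaker(candidate, last_accepted, max_move=0.10):
    """Return True if the candidate moved at most max_move (a fraction) from the last accepted price."""
    if last_accepted <= 0:
        raise ValueError("last accepted price must be positive")
    move = abs(candidate - last_accepted) / last_accepted
    return move <= max_move


# Five hypothetical venues; one has been skewed far below the others.
quotes = [100.2, 99.8, 100.0, 55.0, 100.1]
price = medianize(quotes)

# The median ignores the skewed venue, so the update passes the breaker.
accepted = circuit_breaker(price, last_accepted=99.5)

# Using the skewed quote directly would trip the breaker instead.
rejected = not circuit_breaker(55.0, last_accepted=99.5)
```

The median tolerates a minority of corrupted sources, while the breaker bounds how far a single update can move the price even if the median itself is compromised.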

Theory

The theoretical framework of Price Feed Optimization is rooted in quantitative finance and distributed systems engineering.

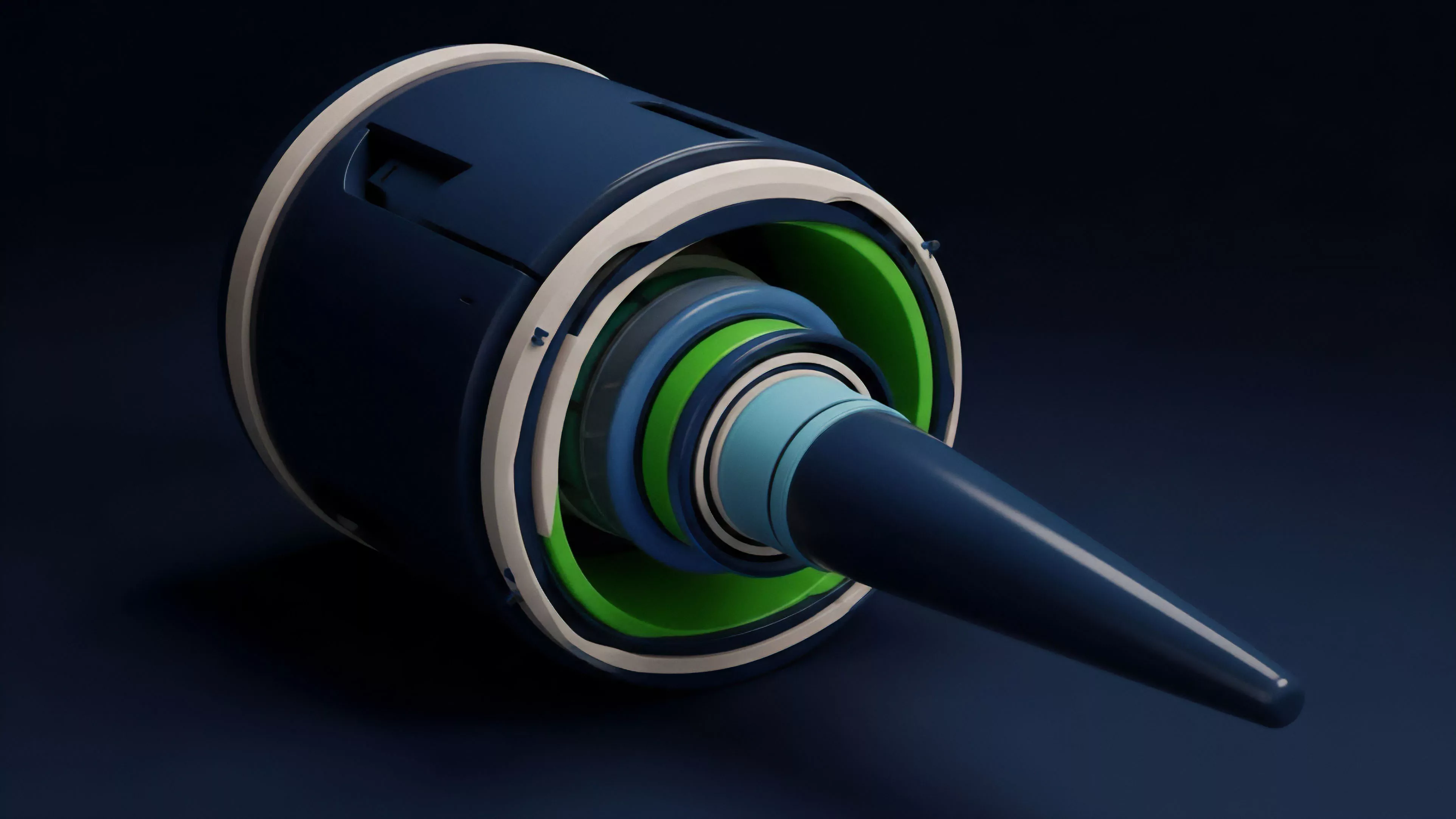

It requires the continuous minimization of the delta between the reported on-chain price and the true, global market price, subject to constraints on bandwidth, gas costs, and computational overhead. The core objective is to maximize the Signal-to-Noise Ratio (SNR) of incoming data streams while minimizing the probability of a system-wide failure caused by a malicious or malfunctioning data provider.
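One way to write this objective down is as a constrained minimization. The notation here is an assumption introduced for illustration and does not come from a specific protocol: $\theta$ stands for the tunable feed parameters, $p_t(\theta)$ for the reported on-chain price, $p_t^{*}$ for the unobservable global market price, and each $C$ and $B$ pair for a resource cost and its budget.

```latex
\min_{\theta} \; \mathbb{E}\left[\, \left| p_t(\theta) - p_t^{*} \right| \,\right]
\quad \text{subject to} \quad
C_{\text{bandwidth}}(\theta) \le B_{\text{bandwidth}}, \qquad
C_{\text{gas}}(\theta) \le B_{\text{gas}}, \qquad
C_{\text{compute}}(\theta) \le B_{\text{compute}}
```

Because $p_t^{*}$ cannot be observed directly, any practical feed can only approximate this target through aggregation across venues.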

Optimal price feeds function by dynamically weighting diverse liquidity sources to maintain a robust and manipulation-resistant valuation index.

Mathematically, this involves the deployment of weighted moving averages or Bayesian estimation models that adjust the influence of each exchange based on its recent contribution to price discovery. The system must account for:

| Metric | Functional Significance |
|---|---|
| Latency | Minimizing the time gap between off-chain events and on-chain settlement. |
| Liquidity Weighting | Prioritizing high-volume venues to prevent small-trade price impact. |
| Deviation Thresholds | Filtering extreme outliers to prevent erroneous liquidation triggers. |
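Liquidity weighting and deviation thresholds can be combined in a single aggregation step. The sketch below is a simplified assumption of how such a step might look: `weighted_index`, the 2% `max_deviation`, and the sample quotes are hypothetical, and the liquidity figures are placeholders for whatever depth measure a protocol actually uses.

```python
from statistics import median


def weighted_index(quotes, max_deviation=0.02):
    """Liquidity-weighted price from (price, liquidity) pairs.

    Quotes further than max_deviation (a fraction) from the cross-venue
    median are discarded before weighting.
    """
    if not quotes:
        raise ValueError("no quotes supplied")
    mid = median(price for price, _ in quotes)
    kept = [
        (price, liquidity)
        for price, liquidity in quotes
        if liquidity > 0 and abs(price - mid) / mid <= max_deviation
    ]
    total_liquidity = sum(liquidity for _, liquidity in kept)
    if total_liquidity == 0:
        raise ValueError("no usable quotes after filtering")
    return sum(price * liquidity for price, liquidity in kept) / total_liquidity


# Hypothetical venues: three deep books and one thin, off-market venue.
quotes = [(100.0, 500.0), (101.0, 300.0), (99.0, 200.0), (120.0, 5.0)]
index = weighted_index(quotes)  # the 120.0 quote is filtered out
```

Filtering against the median first means a thin venue cannot shift the reference point used to judge the other quotes.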

The physics of these systems often requires a trade-off between absolute accuracy and systemic safety. A highly reactive feed tracks the market more precisely but is prone to triggering liquidations during transient volatility. Conversely, an overly smoothed feed protects against flash crashes but exposes the protocol to insolvency when the smoothed price lags a genuine market move, because undercollateralized positions are then liquidated too late.
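The trade-off can be made concrete with an exponential moving average, used here only as a stand-in for whatever smoothing a given feed applies. The `alpha` values and the two price paths are assumptions chosen to illustrate the behavior.

```python
def ema(prices, alpha):
    """Exponential moving average; alpha near 1 is reactive, alpha near 0 is smooth."""
    if not 0 < alpha <= 1:
        raise ValueError("alpha must be in (0, 1]")
    if not prices:
        raise ValueError("no prices supplied")
    level = prices[0]
    smoothed = []
    for price in prices:
        level = alpha * price + (1 - alpha) * level
        smoothed.append(level)
    return smoothed


# Hypothetical paths: a one-tick spike, and a lasting 20% drop.
spike = [100.0, 100.0, 130.0, 100.0, 100.0]
shift = [100.0, 100.0, 80.0, 80.0, 80.0]

reactive_spike = ema(spike, alpha=0.9)  # follows the spike almost fully
smooth_spike = ema(spike, alpha=0.1)    # barely reacts to the spike

reactive_shift = ema(shift, alpha=0.9)  # converges quickly to the new level
smooth_shift = ema(shift, alpha=0.1)    # still far above 80 after three ticks
```

The reactive setting would risk liquidations on the spike path, while the smooth setting would keep reporting a stale price on the shift path, which is the insolvency risk described above.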

The architect’s role is to tune these parameters to align with the specific risk tolerance of the derivative instrument.

Approach

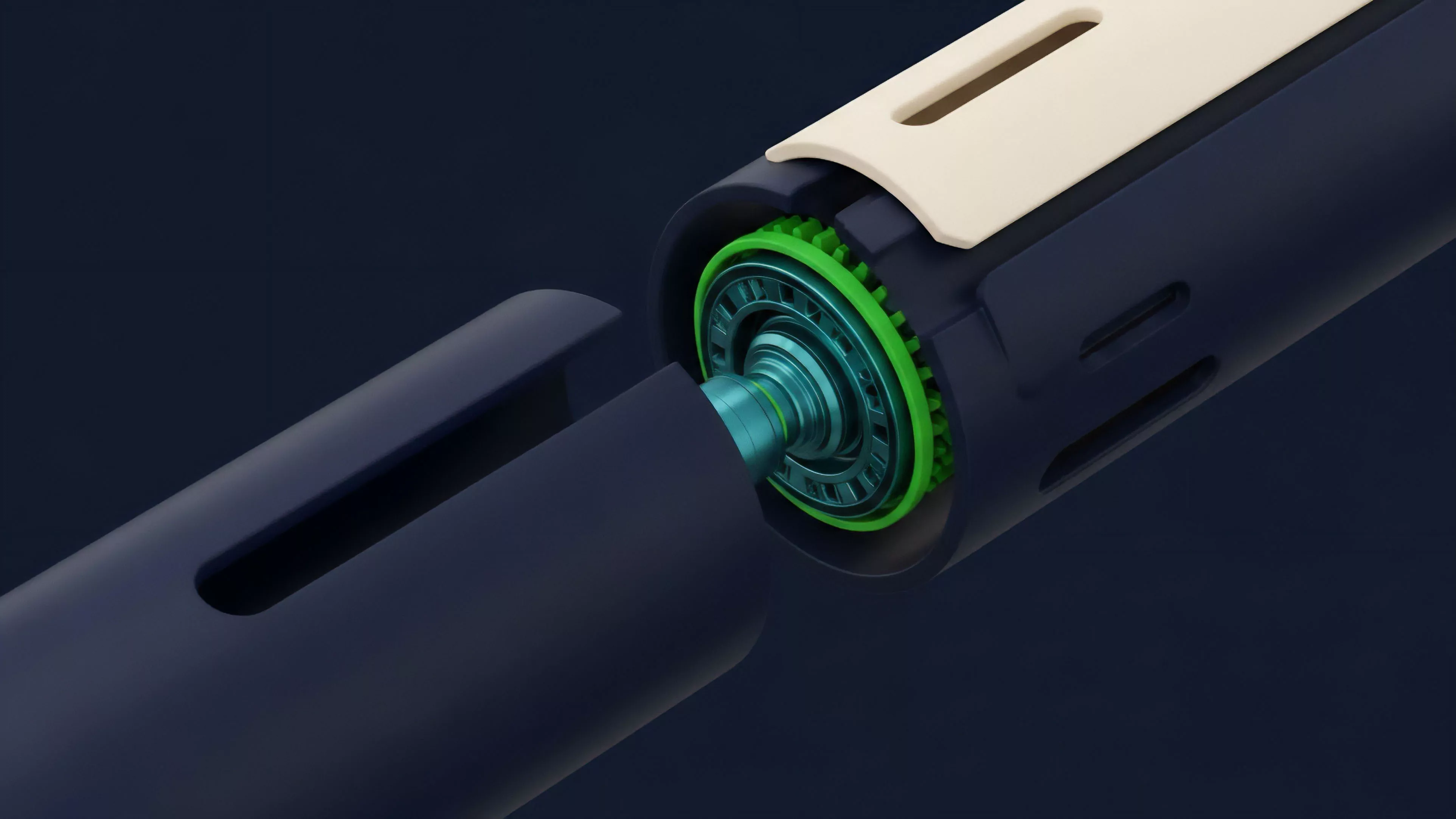

Current implementations of Price Feed Optimization rely on a hybrid architecture combining off-chain data aggregation with on-chain verification. Providers utilize off-chain nodes to query dozens of exchanges, calculate the aggregate price, and sign the result cryptographically. This data is then transmitted to the protocol, where it is verified against a pre-set list of trusted nodes or a decentralized validator set before being accepted by the smart contract.
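The sign-then-verify flow can be sketched as follows. One assumption needs flagging: production oracles use asymmetric signatures so that the verifying contract holds only public keys, whereas this sketch uses HMAC from the Python standard library purely as a stand-in. The node registry, message layout, and quorum of two are likewise hypothetical.

```python
import hashlib
import hmac
from statistics import median

# Hypothetical registry of trusted nodes. A real deployment would store
# public keys here; shared secrets are used only because HMAC is a stand-in.
TRUSTED_NODES = {"node-a": b"secret-a", "node-b": b"secret-b", "node-c": b"secret-c"}


def _message(node_id, price, timestamp):
    return f"{node_id}|{price:.8f}|{timestamp}".encode()


def sign_report(node_id, secret, price, timestamp):
    """Off-chain step: a node signs the aggregate price it computed."""
    tag = hmac.new(secret, _message(node_id, price, timestamp), hashlib.sha256).hexdigest()
    return {"node": node_id, "price": price, "timestamp": timestamp, "tag": tag}


def verify_report(report):
    """Verification step: accept only reports from known nodes with a valid tag."""
    secret = TRUSTED_NODES.get(report["node"])
    if secret is None:
        return False
    message = _message(report["node"], report["price"], report["timestamp"])
    expected = hmac.new(secret, message, hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, report["tag"])


def accept_price(reports, quorum=2):
    """Return the median of verified prices, or None if the quorum is not met."""
    valid = {}
    for report in reports:
        if verify_report(report):
            valid[report["node"]] = report["price"]  # one vote per node
    if len(valid) < quorum:
        return None
    return median(valid.values())


reports = [
    sign_report("node-a", TRUSTED_NODES["node-a"], 100.0, 1700000000),
    sign_report("node-b", TRUSTED_NODES["node-b"], 100.2, 1700000000),
]
tampered = sign_report("node-c", TRUSTED_NODES["node-c"], 100.1, 1700000000)
tampered["price"] = 50.0  # altered after signing, so the tag no longer matches
reports.append(tampered)

price = accept_price(reports, quorum=2)  # only node-a and node-b count
```

Keying the accepted prices by node identifier prevents a single node from meeting the quorum by submitting several reports.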

- Decentralized Oracle Networks: These utilize consensus mechanisms to validate pricing data, ensuring no single entity can influence the feed.

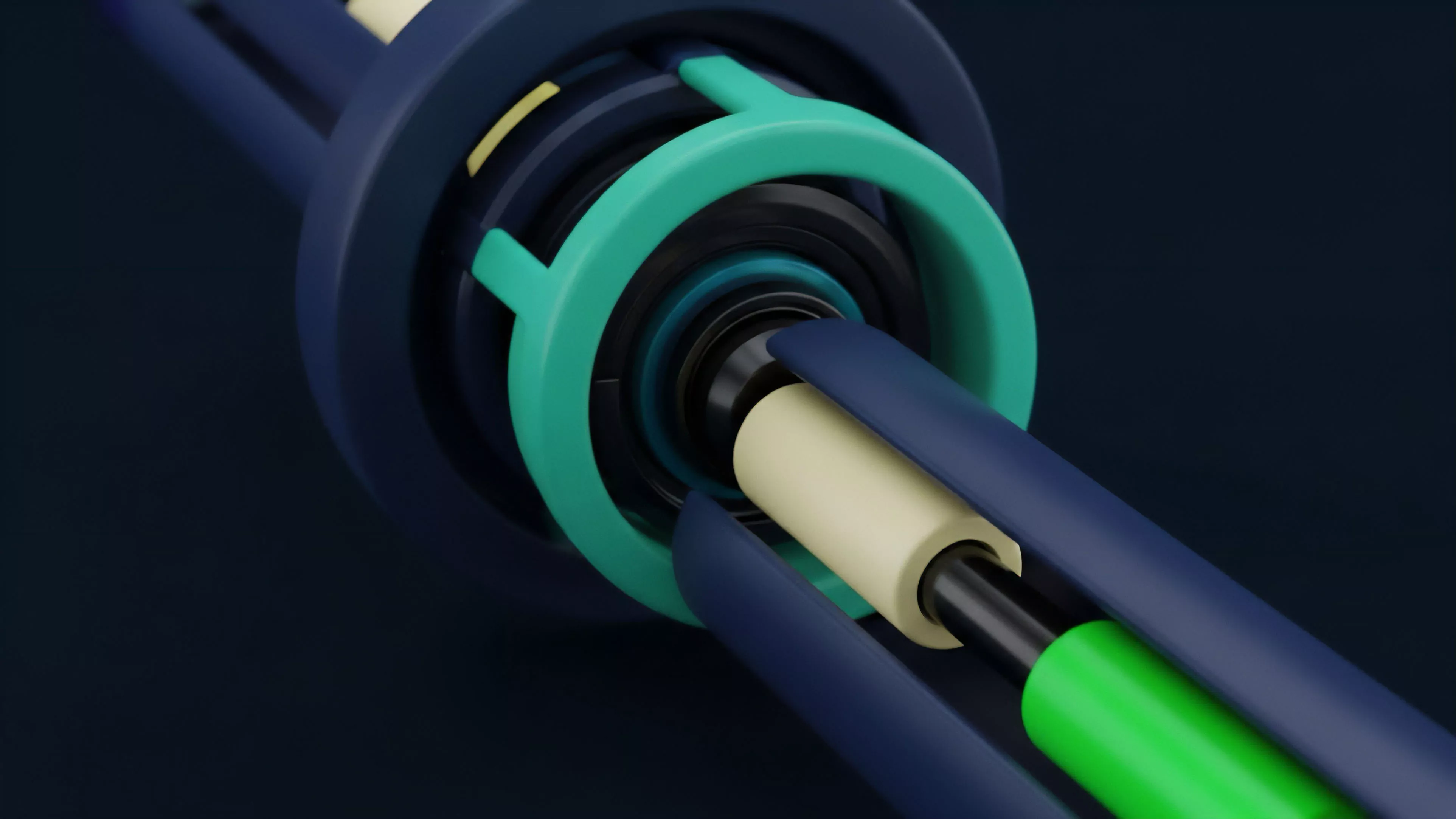

- Time-Weighted Average Price: TWAP mechanisms provide a smoothed price over a specific duration, effectively mitigating the impact of momentary market manipulation.

- Volume-Weighted Average Price: VWAP incorporates trading volume, ensuring that larger, more significant trades exert greater influence on the final index.
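The two averaging schemes in the list above reduce to short formulas. The sketch below assumes prices are observed as `(timestamp, price)` pairs and trades as `(price, volume)` pairs; the function names and sample data are illustrative only.

```python
def twap(observations, end_time):
    """Time-weighted average price.

    observations: (timestamp, price) pairs sorted by time. Each price is
    assumed to hold until the next observation, or until end_time for the last.
    """
    if not observations:
        raise ValueError("no observations supplied")
    duration = end_time - observations[0][0]
    if duration <= 0:
        raise ValueError("end_time must be after the first observation")
    weighted_sum = 0.0
    for i, (timestamp, price) in enumerate(observations):
        next_time = observations[i + 1][0] if i + 1 < len(observations) else end_time
        weighted_sum += price * (next_time - timestamp)
    return weighted_sum / duration


def vwap(trades):
    """Volume-weighted average price from (price, volume) pairs."""
    total_volume = sum(volume for _, volume in trades)
    if total_volume <= 0:
        raise ValueError("total volume must be positive")
    return sum(price * volume for price, volume in trades) / total_volume


# A 50% spike lasting 5 of 120 seconds moves the TWAP by roughly 2%.
observations = [(0, 100.0), (50, 100.0), (55, 150.0), (60, 100.0)]
twap_price = twap(observations, end_time=120)

# A tiny trade at an extreme price barely moves the VWAP.
trades = [(100.0, 10.0), (101.0, 30.0), (150.0, 0.1)]
vwap_price = vwap(trades)
```

In both cases the manipulation cost scales with what the attacker must sustain: time at the skewed price for TWAP, and traded volume at the skewed price for VWAP.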

The shift toward modular data layers allows protocols to customize their feed logic without re-engineering the core settlement contract. Some systems now incorporate real-time volatility monitoring to dynamically adjust the update frequency; when market volatility exceeds a defined threshold, the system automatically increases the sampling rate to ensure the derivative contract remains tightly coupled with the underlying asset’s price action. This is where the pricing model becomes truly elegant, and dangerous if ignored.
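A volatility-triggered sampling rule of this kind can be sketched as below. The volatility measure (standard deviation of log returns), the 0.5% threshold, and the 60-second and 5-second intervals are all assumptions for illustration; a real system would calibrate them per asset.

```python
import math
from statistics import pstdev


def realized_volatility(prices):
    """Standard deviation of log returns over the supplied price window."""
    if len(prices) < 3:
        return 0.0  # too few points to estimate dispersion
    returns = [math.log(later / earlier) for earlier, later in zip(prices, prices[1:])]
    return pstdev(returns)


def update_interval(prices, vol_threshold=0.005, calm_interval=60.0, stressed_interval=5.0):
    """Return the seconds to wait before the next update, shorter when volatility is high."""
    if realized_volatility(prices) > vol_threshold:
        return stressed_interval
    return calm_interval


# Hypothetical recent price windows.
calm = [100.0, 100.1, 100.0, 100.05, 100.1]
stressed = [100.0, 103.0, 98.0, 104.0, 97.0]

calm_wait = update_interval(calm)          # long interval in quiet markets
stressed_wait = update_interval(stressed)  # short interval in turbulent markets
```

A faster sampling rate raises update costs exactly when the network is likely to be congested, so the stressed interval is a budget decision as much as a risk decision.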

Sometimes I think we treat these mathematical models as objective truths, when in reality, they are merely stylized representations of human greed and fear interacting at light speed. The structural integrity of the feed is the only thing standing between an orderly market and a system-wide collapse.

Evolution

The trajectory of Price Feed Optimization has moved from simple, centralized data ingestion toward highly resilient, multi-layered, and cryptographically verifiable pipelines. Early systems suffered from extreme fragility, as they depended on a limited set of external APIs that could be throttled or manipulated.

The development of decentralized oracle networks marked a significant milestone by bringing consensus among independent nodes into the data ingestion process.

| Generation | Mechanism | Primary Limitation |
|---|---|---|
| First | Single-Source APIs | Single point of failure and manipulation. |
| Second | Medianizer Aggregation | Susceptible to collusion among few providers. |
| Third | Consensus-Based Networks | High latency and significant operational complexity. |

Modern systems now integrate advanced filtering techniques, such as standard deviation-based outlier detection, to ensure that anomalous data points do not propagate into the protocol. Furthermore, the rise of zero-knowledge proofs is enabling the verification of off-chain computations directly on-chain, drastically reducing the trust requirements for third-party data providers. This evolution reflects a broader movement toward building protocols that are not only transparent but also inherently resistant to the adversarial conditions that characterize decentralized finance.
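Standard deviation-based outlier detection can be sketched as a z-score filter. One design choice here is an assumption rather than a standard: each value is scored against the mean and standard deviation of the other values, because with only a handful of sources a single extreme quote inflates the overall standard deviation enough to hide itself. The `z_max` and `min_sigma` parameters and the sample data are hypothetical.

```python
from statistics import mean, pstdev


def filter_outliers(values, z_max=3.0, min_sigma=1e-9):
    """Keep values whose z-score, measured against the other values, is at most z_max."""
    values = list(values)
    if len(values) < 3:
        return values  # too few points to judge dispersion
    kept = []
    for i, value in enumerate(values):
        others = values[:i] + values[i + 1:]
        center = mean(others)
        # Floor the deviation so identical quotes do not cause division by zero.
        sigma = max(pstdev(others), min_sigma)
        if abs(value - center) / sigma <= z_max:
            kept.append(value)
    return kept


# Hypothetical quotes from five providers; one is anomalous.
quotes = [100.0, 100.2, 99.9, 100.1, 112.0]
clean = filter_outliers(quotes)  # the 112.0 quote is dropped
```

Only the surviving values would then be passed on to the aggregation step, so an anomalous data point never reaches the protocol.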

Horizon

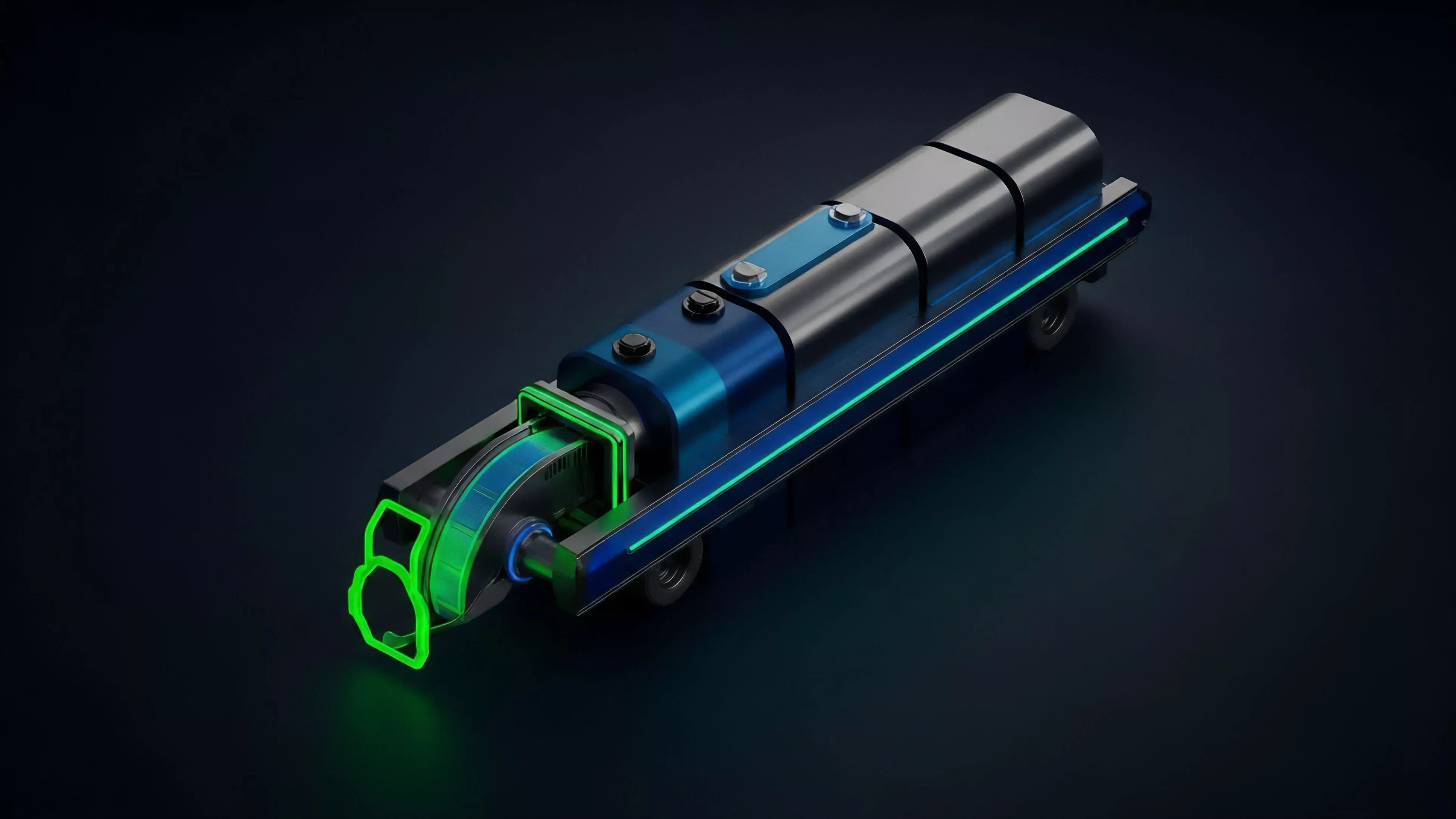

Future developments in Price Feed Optimization will focus on increasing data granularity and reducing the cost of high-frequency updates.

The integration of cross-chain liquidity aggregation will allow protocols to derive prices from the global state of the entire crypto asset landscape, rather than being limited to the liquidity present on a single chain. This will be critical for the growth of cross-chain derivative instruments that require unified pricing across fragmented ecosystems.

Future oracle designs will prioritize real-time risk assessment, automatically adjusting data weightings based on the specific liquidity conditions of the underlying asset.

We are also witnessing the emergence of intent-based pricing, where the feed itself is optimized not just for accuracy, but for the specific requirements of the derivative contract, such as minimizing slippage for large-scale liquidations. The ultimate goal is the creation of a self-correcting data architecture that can adapt to changing market regimes without human intervention. As these systems become more autonomous, the reliance on human-defined parameters will decrease, replaced by algorithmic adjustments that prioritize the long-term solvency of the protocol over short-term accuracy.