Essence

Price Feed Decentralization represents the architectural transition from centralized data ingestion to trust-minimized, consensus-driven oracle networks for financial derivatives. This shift replaces single-point-of-failure providers with distributed nodes that aggregate market data to produce a single, tamper-resistant reference price for settlement engines. The systemic importance lies in decoupling derivative contracts from the operational integrity of any single entity, thereby anchoring market integrity in cryptographic proofs and game-theoretic incentive structures.

Decentralized price feeds provide the foundational truth required for trustless settlement in high-leverage derivative markets.

These systems function by soliciting price observations from multiple independent data providers, which are then processed through aggregation algorithms to generate a canonical value. By distributing the responsibility of reporting, these networks mitigate the risk of price manipulation or data withholding, which are critical vulnerabilities in automated liquidation engines.

Origin

The necessity for Price Feed Decentralization emerged directly from the fragility observed in early decentralized finance applications, where reliance on centralized APIs led to catastrophic failures. Developers recognized that if an oracle could be compromised, the entire collateralization mechanism of a lending protocol or options vault would collapse, leading to mass liquidations and insolvency.

- Systemic Fragility: Early protocols used single-source data feeds, creating targets for adversarial actors.

- Manipulation Vectors: Centralized feeds were susceptible to local exchange outages or malicious data injection.

- Trust Minimization: The movement toward on-chain verification sought to remove human intermediaries from the critical path of financial settlement.

This evolution was driven by the realization that market participants require immutable and verifiable data to engage in long-term financial commitments. The move toward decentralized solutions mirrors the broader transition of the entire digital asset space from custodial reliance to non-custodial ownership.

Theory

The mechanics of Price Feed Decentralization rely on the convergence of distributed systems engineering and game theory. At its core, the protocol must ensure that the aggregated price accurately reflects global market conditions while remaining resistant to Byzantine faults.

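The mathematical framework typically involves median-based aggregation, which limits the influence of outliers: as long as fewer than half of the reporting nodes are faulty or malicious, the median is bounded by values that honest nodes reported, so no individual node can skew the final result.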
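As a minimal sketch of median aggregation, the snippet below takes one round of price observations and returns the median. The function name `aggregate_price` and the sample values are illustrative assumptions, not part of any specific oracle protocol.

```python
from statistics import median


def aggregate_price(reports: list[float]) -> float:
    """Return the median of the price observations submitted in one round."""
    if not reports:
        raise ValueError("at least one price report is required")
    return median(reports)


# Four nodes report values near 100; one faulty node reports an extreme value.
# An arithmetic mean of these reports would be pulled above 1000.
round_reports = [99.8, 100.1, 100.0, 100.2, 5000.0]
print(aggregate_price(round_reports))  # 100.1
```

The extreme report changes which honest value sits in the middle, but it cannot move the result outside the range of honest reports.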

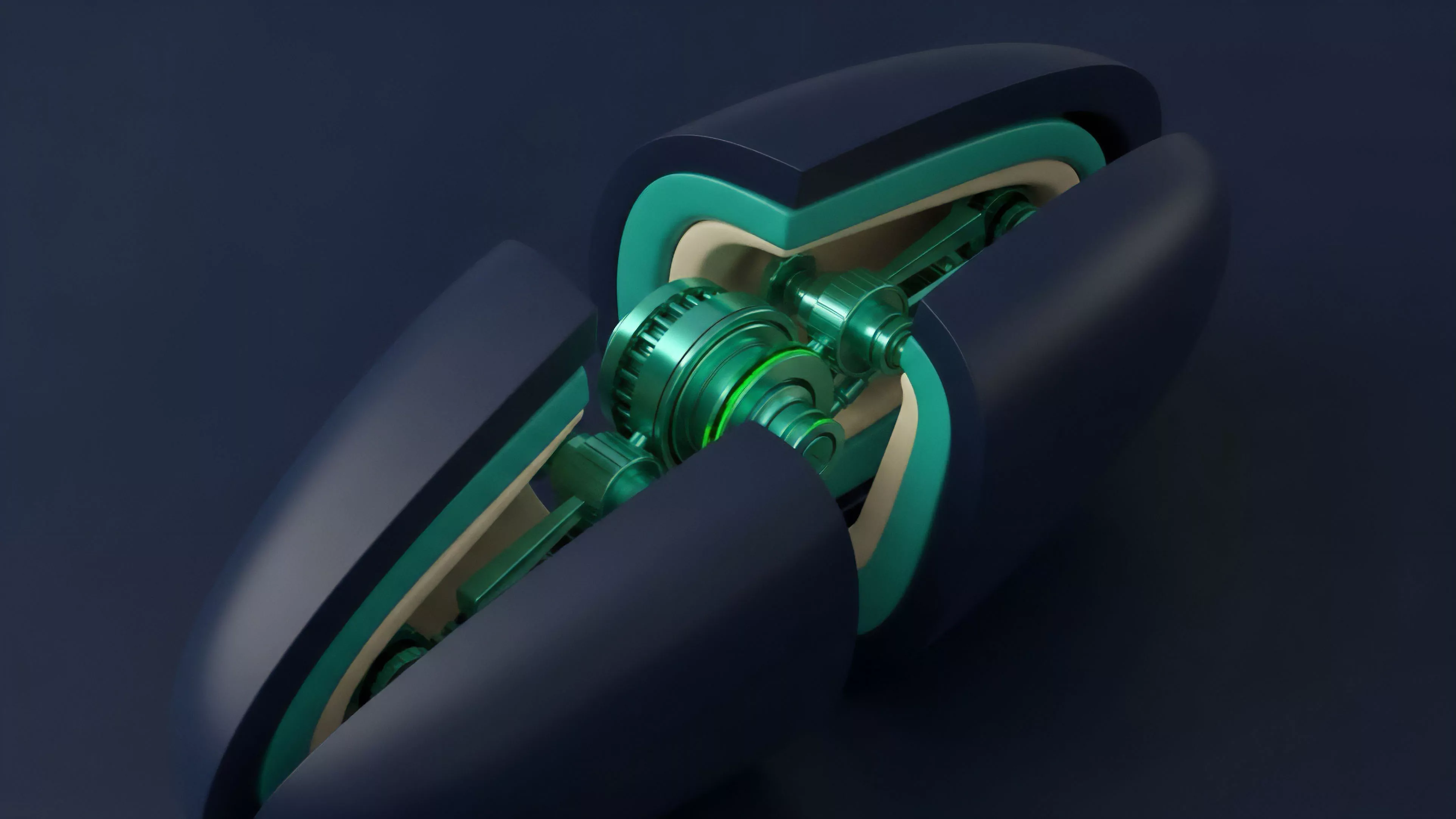

Consensus Architecture

The consensus mechanism functions as a distributed truth-seeking algorithm. Nodes are incentivized to provide accurate data through staking mechanisms, where dishonest reporting leads to slashing of capital. The design goal is an adversarial environment in which the cost of attacking the feed exceeds the potential gain from manipulating the derivative contract.

Robust decentralized feeds utilize median-based aggregation to filter adversarial data inputs and ensure settlement accuracy.

Risk Sensitivity

The design must account for the latency between off-chain price discovery and on-chain settlement. If the update frequency is too low, the protocol risks exposure to arbitrageurs who can trade against stale prices. If the frequency is too high, the system incurs prohibitive gas costs.

Optimizing this balance requires a deep understanding of market volatility and the specific liquidity profile of the underlying assets.
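One way to express this trade-off is an update rule that writes on-chain only when the price has moved by more than a deviation threshold, or when a maximum interval (a heartbeat) has elapsed. The sketch below assumes that rule; the function name, the 0.5% threshold and the one-hour heartbeat are hypothetical values chosen for illustration.

```python
def should_update(
    last_price: float,
    new_price: float,
    seconds_since_update: float,
    deviation_threshold: float = 0.005,  # assumed: 0.5% relative move
    heartbeat_seconds: float = 3600.0,   # assumed: at most one hour between updates
) -> bool:
    """Decide whether a new on-chain update is worth its gas cost."""
    if last_price <= 0:
        raise ValueError("last_price must be positive")
    deviation = abs(new_price - last_price) / last_price
    moved_enough = deviation >= deviation_threshold
    too_stale = seconds_since_update >= heartbeat_seconds
    return moved_enough or too_stale


print(should_update(100.0, 100.2, 60.0))    # False: small move, recent update
print(should_update(100.0, 101.0, 60.0))    # True: 1% move exceeds the threshold
print(should_update(100.0, 100.0, 3600.0))  # True: heartbeat elapsed
```

A tighter threshold reduces exposure to stale prices at the cost of more frequent writes; a volatile or thinly traded asset would likely need different values than a liquid one.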

| Parameter | Centralized Oracle | Decentralized Oracle |
| --- | --- | --- |
| Fault Tolerance | Low | High |
| Attack Vector | Entity Compromise | Collusion or Sybil |
| Trust Required for Settlement | High | Minimal |

Approach

Current implementations use staking and reputation systems to maintain data integrity. Nodes, often professional validators, continuously monitor liquidity across various exchanges to calculate volume-weighted averages. Weighting each venue by traded volume goes beyond a simple arithmetic mean: a thinly traded venue contributes less to the result, so the reported price better reflects where liquidity actually sits.
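The volume-weighted average mentioned above can be sketched as follows. The `(price, volume)` tuple layout and the function name are assumptions for illustration; a real node would also need to handle venue outages and stale quotes.

```python
def volume_weighted_price(quotes: list[tuple[float, float]]) -> float:
    """Average prices across venues, weighting each by its traded volume.

    Each quote is a (price, volume) pair for one venue.
    """
    total_volume = sum(volume for _, volume in quotes)
    if total_volume <= 0:
        raise ValueError("total volume must be positive")
    return sum(price * volume for price, volume in quotes) / total_volume


# Venue A trades 10 units at 100.0; venue B trades 30 units at 102.0.
print(volume_weighted_price([(100.0, 10.0), (102.0, 30.0)]))  # 101.5
```

A plain mean of the two prices would give 101.0; the weighted result sits closer to the venue with more volume.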

Incentive Alignment

The economic design ensures that validators are rewarded for honesty and penalized for malicious activity. This alignment is managed through tokenomics that link the oracle protocol’s native asset to the security of the data provided. If a validator reports a price that diverges significantly from the consensus without a clear market reason, they face immediate financial consequences.
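A deviation-based penalty of this kind might look like the sketch below. It assumes the consensus value is the median of the round, and it uses a hypothetical 2% tolerance and 10% slash fraction. It does not model the "clear market reason" exception, which would require a dispute or review process outside the scope of this example.

```python
from statistics import median


def settle_round(
    reports: dict[str, float],
    stakes: dict[str, float],
    max_deviation: float = 0.02,   # assumed tolerance around consensus
    slash_fraction: float = 0.10,  # assumed share of stake removed
) -> tuple[float, dict[str, float]]:
    """Return the consensus price and the stakes after slashing outliers."""
    consensus = median(reports.values())
    updated = dict(stakes)  # copy so the caller's balances are untouched
    for node, price in reports.items():
        if abs(price - consensus) / consensus > max_deviation:
            updated[node] = stakes[node] * (1 - slash_fraction)
    return consensus, updated


reports = {"node_a": 100.0, "node_b": 100.5, "node_c": 120.0}
stakes = {"node_a": 1000.0, "node_b": 1000.0, "node_c": 1000.0}
consensus, new_stakes = settle_round(reports, stakes)
print(consensus)   # 100.5
print(new_stakes)  # node_c loses 10% of its stake; the others are unchanged
```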

Validator staking and slashing mechanisms enforce data accuracy by making dishonesty economically irrational.

Operational Realities

Managing these systems involves constant monitoring of network health and potential exploits. Protocol architects must account for the fact that validators may attempt to collude. Therefore, the network design often includes randomized node selection or committee-based reporting to ensure that no single cluster of nodes can control the final price output.
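Randomized committee selection can be illustrated with a seeded sample over the node set. In this sketch the per-round seed is simply passed in as bytes; a production network would need a seed that participants cannot predict or bias, which is assumed here and not implemented. The function and node names are hypothetical.

```python
import hashlib
import random


def select_committee(nodes: list[str], committee_size: int, round_seed: bytes) -> list[str]:
    """Pick a reporting committee deterministically from a per-round seed."""
    if committee_size > len(nodes):
        raise ValueError("committee cannot be larger than the node set")
    # Hash the seed so any byte string maps to a uniform-looking integer.
    seed = int.from_bytes(hashlib.sha256(round_seed).digest(), "big")
    rng = random.Random(seed)
    # Sort first so every participant derives the same committee from the same seed.
    return rng.sample(sorted(nodes), committee_size)


nodes = [f"node_{i}" for i in range(10)]
committee = select_committee(nodes, 4, b"round-42")
print(committee)
```

Because the committee changes with each seed, a colluding cluster cannot know in advance whether it will hold a majority of any given reporting round.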

Evolution

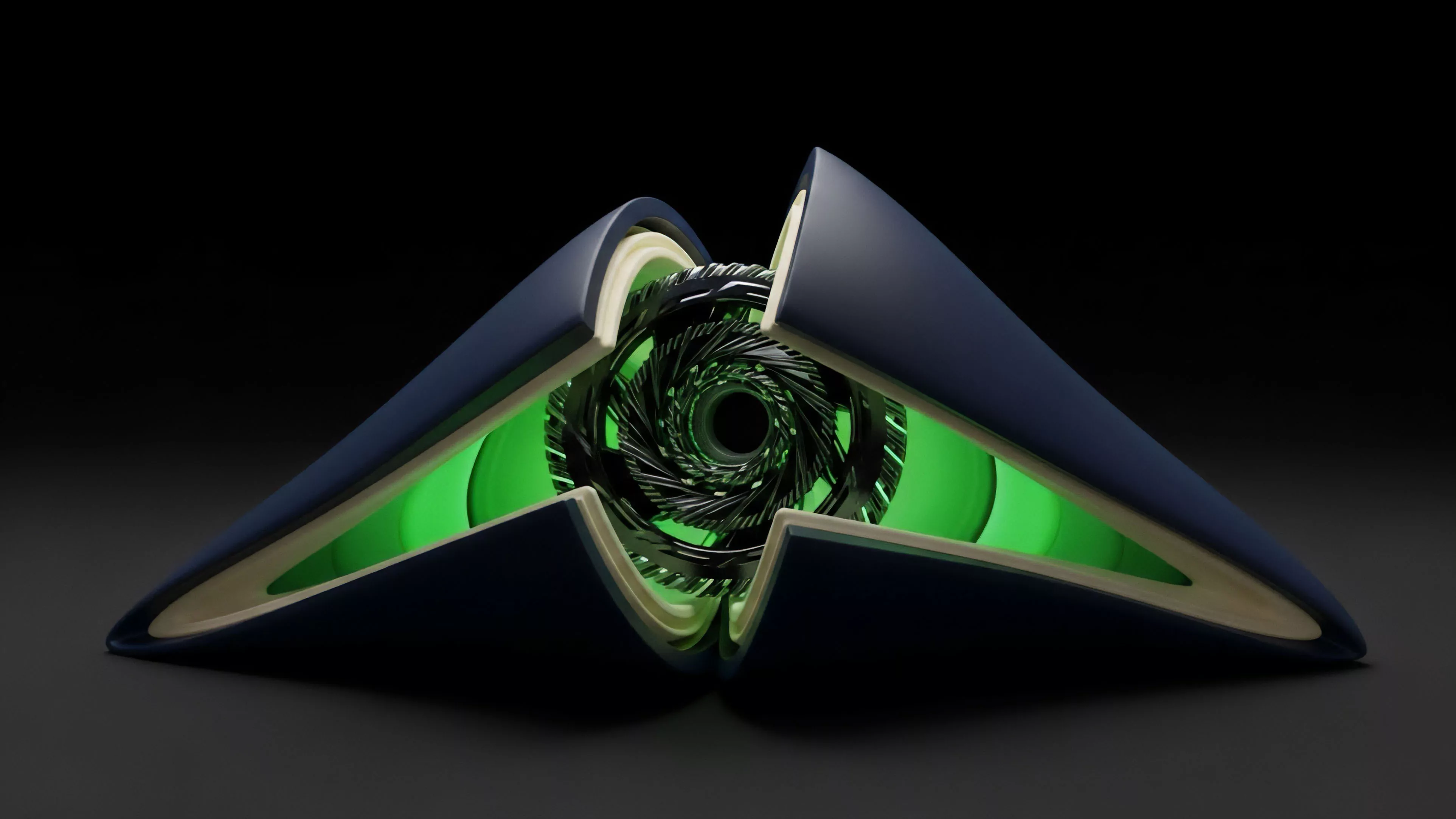

The architecture has matured from simple, single-asset reporting to complex, multi-layered data verification systems.

Initially, protocols relied on basic push-based models where data was periodically updated on-chain. This was inefficient and exposed users to significant front-running risks during periods of high market volatility.

- Push Models: Early systems relied on periodic, scheduled updates which often lagged behind rapid price movements.

- Pull Models: Newer architectures allow users to request data on-demand, reducing unnecessary gas consumption and improving data freshness.

- Zero-Knowledge Integration: Recent advancements incorporate cryptographic proofs to verify the source and integrity of data without revealing sensitive information.
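In a pull model, the consumer is responsible for checking that the report it fetched is fresh enough to act on. The sketch below shows only that freshness check; the `PriceReport` type, the field names and the five-second limit are assumptions, and signature or proof verification is deliberately left out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PriceReport:
    price: float
    observed_at: float  # Unix timestamp of the off-chain observation


def accept_report(report: PriceReport, now: float, max_age_seconds: float = 5.0) -> bool:
    """Accept a pulled report only if it is neither stale nor from the future."""
    age = now - report.observed_at
    return 0 <= age <= max_age_seconds


report = PriceReport(price=100.0, observed_at=1_000.0)
print(accept_report(report, now=1_003.0))  # True: three seconds old
print(accept_report(report, now=1_010.0))  # False: ten seconds old
```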

The evolution reflects a broader trend of moving intelligence to the edges of the network. While the early days were defined by basic functionality, current systems are built to handle the intense pressures of high-frequency trading and complex option strategies, where even a millisecond of latency can be the difference between a successful hedge and a total loss. Sometimes the most effective security measure is not adding more complexity, but rather reducing the number of moving parts that can fail simultaneously.

The industry has shifted from focusing solely on the price to focusing on the provenance and speed of the data.

Horizon

Future developments in Price Feed Decentralization will focus on high-fidelity data feeds that can support increasingly complex financial instruments. As options markets grow, the demand for reliable volatility data and Greeks will force oracle networks to evolve into more comprehensive data providers. We will likely see a move toward cross-chain, permissionless data streams that are native to the underlying execution layer.

The Synthesis of Divergence

The divergence between protocols that prioritize speed and those that prioritize maximum decentralization will define the next phase of market competition. Protocols that successfully bridge this gap, maintaining high-speed updates without compromising the integrity of the consensus mechanism, will become the standard infrastructure for decentralized derivatives.

The Novel Conjecture

I hypothesize that the next generation of oracle networks will transition from simple price reporting to providing real-time, on-chain risk assessments that dynamically adjust margin requirements based on global liquidity conditions. This would transform oracles from passive data providers into active risk-management agents.

The Instrument of Agency

A technical specification for a dynamic margin-adjustment module would integrate oracle data directly into the protocol’s margin engine, automatically scaling leverage limits as market volatility crosses pre-defined thresholds. This mechanism would provide an automated, algorithmic defense against the systemic risks inherent in high-leverage derivative trading. What happens to the integrity of decentralized markets when the oracle network itself becomes the primary source of liquidity, rather than just a reporter of it?
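A threshold-based margin module of the kind described above could be sketched as follows. Every number here is a hypothetical placeholder: the volatility tiers, the leverage caps and the assumption that the oracle reports annualized volatility as a decimal fraction.

```python
# Assumed tiers: (volatility ceiling, maximum leverage allowed below that ceiling).
LEVERAGE_TIERS = [(0.40, 20.0), (0.80, 10.0), (1.20, 5.0)]
MIN_LEVERAGE = 2.0  # assumed cap once volatility exceeds every tier


def max_leverage(annualized_volatility: float) -> float:
    """Map oracle-reported volatility to the maximum leverage allowed."""
    if annualized_volatility < 0:
        raise ValueError("volatility cannot be negative")
    for ceiling, leverage in LEVERAGE_TIERS:
        if annualized_volatility < ceiling:
            return leverage
    return MIN_LEVERAGE


def initial_margin(notional: float, annualized_volatility: float) -> float:
    """Collateral required to open a position of the given notional size."""
    return notional / max_leverage(annualized_volatility)


print(max_leverage(0.30))              # 20.0
print(max_leverage(0.80))              # 5.0
print(initial_margin(10_000.0, 0.50))  # 1000.0
```

Leverage steps down as volatility crosses each ceiling, so margin requirements tighten automatically without a governance action.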