Essence

Price Feed Data Validation underpins the architectural integrity of decentralized derivative protocols. It serves as the authoritative verification layer that ensures external asset valuations align with on-chain settlement logic. Without this mechanism, any gap between market reality and protocol state becomes an immediate attack surface.

Price Feed Data Validation ensures decentralized protocols maintain accurate collateralization by reconciling external market data with internal settlement logic.

This process necessitates the ingestion, sanitization, and consensus-based filtering of price data from disparate venues. The objective centers on producing a single, tamper-resistant reference price that governs margin requirements, liquidation triggers, and option payoff functions.

Origin

Price Feed Data Validation is necessary because blockchains cannot natively access external data. Early decentralized finance experiments relied on centralized, single-source oracles, which frequently failed under price manipulation or technical downtime.

- Single-Point Vulnerability: Reliance on a lone data source allows adversarial actors to skew reported prices for localized profit.

- Latency Mismatches: Discrepancies between high-frequency exchange feeds and slower blockchain confirmation times lead to stale price reporting.

- Consensus Fragmentation: The lack of a standardized validation protocol resulted in disparate, incompatible price representations across different decentralized platforms.

These historical failures highlighted that secure financial derivatives demand a decentralized, multi-source validation architecture to withstand systemic attacks.

Theory

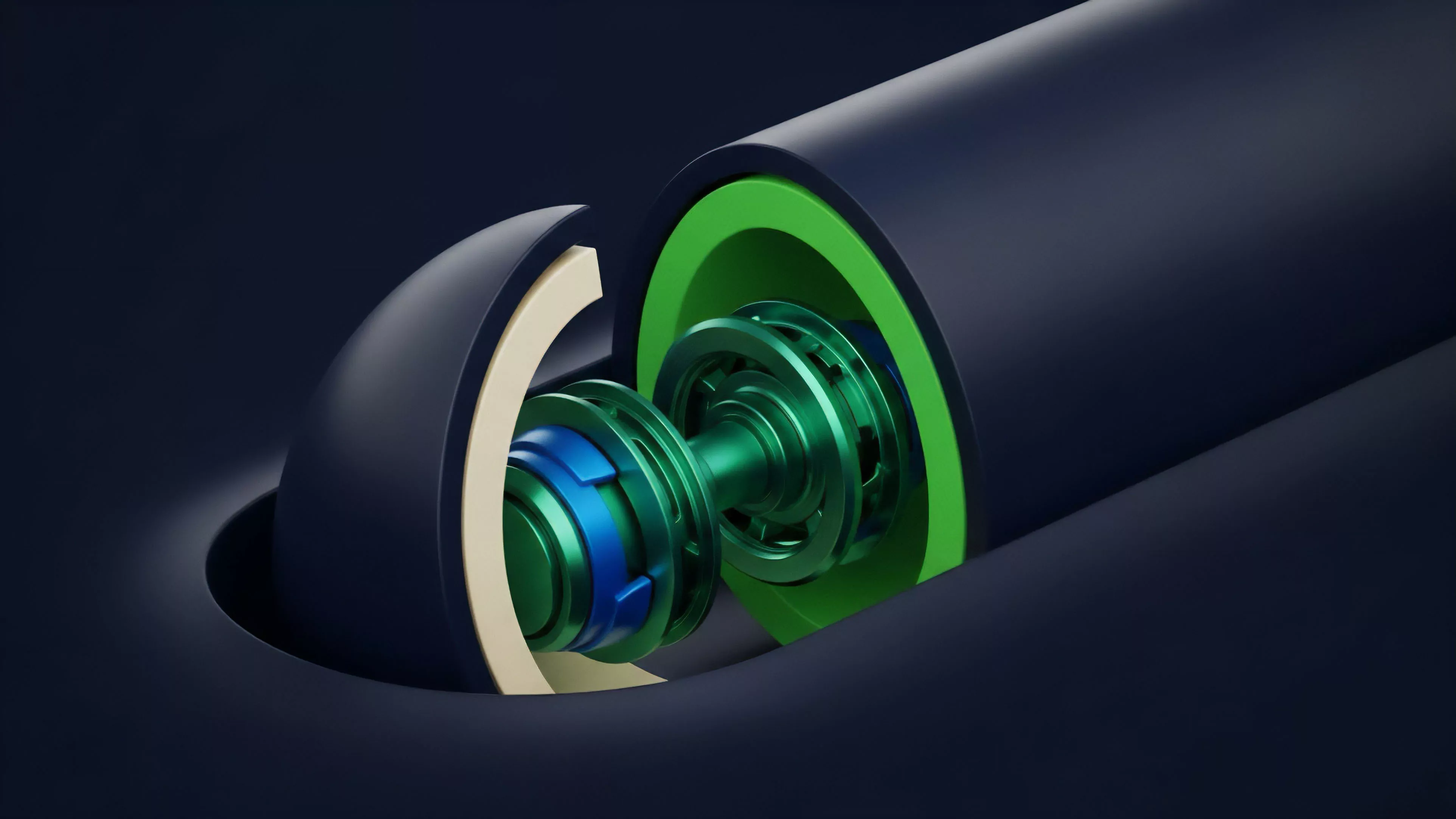

The mechanical foundation of Price Feed Data Validation rests upon the aggregation of weighted inputs and the application of statistical filters to discard outliers. This structure mitigates the impact of anomalous volatility or malicious data injection from any single node.

Aggregation Mechanics

The protocol collects quotes from multiple independent data providers and computes either a median or a volume-weighted average price. Both approaches limit the influence of extreme deviations on individual exchanges, such as flash crashes or fat-finger errors. The median is the more robust of the two, since moving it requires corrupting roughly half of the inputs.
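A minimal Python sketch of the two aggregation rules, assuming each provider reports a last-trade price and a traded volume. The `Quote` schema and the function names are hypothetical, not any specific oracle's API:

```python
from statistics import median
from typing import NamedTuple


class Quote(NamedTuple):
    """One provider's report (hypothetical schema): last price and traded volume."""
    price: float
    volume: float


def median_price(quotes: list[Quote]) -> float:
    """Central reported price; an outlier's magnitude cannot drag it."""
    if not quotes:
        raise ValueError("no quotes to aggregate")
    return median(q.price for q in quotes)


def volume_weighted_price(quotes: list[Quote]) -> float:
    """Average price weighted by each venue's reported volume."""
    total_volume = sum(q.volume for q in quotes)
    if total_volume <= 0:
        raise ValueError("total volume must be positive")
    return sum(q.price * q.volume for q in quotes) / total_volume


quotes = [
    Quote(price=100.0, volume=500.0),
    Quote(price=101.0, volume=300.0),
    Quote(price=99.5, volume=200.0),
    Quote(price=250.0, volume=1.0),  # fat-finger print on a thin venue
]
print(median_price(quotes))                     # 100.5
print(round(volume_weighted_price(quotes), 2))  # 100.35
```

A plain arithmetic mean of the same four quotes would be 137.625. The median is unaffected by how far the outlier sits, while volume weighting discounts it because the venue is thin.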

Statistical Filtering

Advanced validation models incorporate variance analysis and time-weighted thresholds to detect anomalous behavior. When a reported price deviates from the reference by more than a predefined number of standard deviations, the system rejects that input or triggers a circuit breaker.
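One way to express the standard-deviation filter, as a sketch: each new report is compared with a window of recently accepted aggregate prices rather than with the other current reports, because with only a handful of inputs a single large outlier inflates the sample standard deviation enough to hide itself. The history window, `k`, the floor, and `min_valid` are illustrative assumptions:

```python
from statistics import mean, pstdev


class CircuitBreakerTripped(Exception):
    """Raised when too few reports survive filtering to publish a price."""


def filter_reports(reports: list[float], history: list[float], k: float = 3.0,
                   floor_pct: float = 0.001, min_valid: int = 3) -> list[float]:
    """Drop reports more than k standard deviations from the recent reference.

    `history` is a window of previously accepted aggregate prices (assumption).
    The band never shrinks below `floor_pct` of the reference, so a perfectly
    flat history does not reject every new report.
    """
    if len(history) < 2:
        raise ValueError("need at least two historical prices")
    reference = mean(history)
    band = max(k * pstdev(history), floor_pct * reference)
    accepted = [p for p in reports if abs(p - reference) <= band]
    if len(accepted) < min_valid:
        # Too many inputs disagree with recent history: halt instead of guessing.
        raise CircuitBreakerTripped(
            f"only {len(accepted)} of {len(reports)} reports accepted")
    return accepted


history = [100.0, 100.5, 99.8, 100.2, 100.1]
print(filter_reports([100.3, 99.9, 100.4, 112.0], history))  # [100.3, 99.9, 100.4]
```

Measuring spread on price levels is a simplification; a production design would more likely measure volatility on returns over a time-weighted window.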

| Mechanism | Function | Risk Mitigation |
|---|---|---|
| Median Aggregation | Selects central value | Prevents outlier manipulation |
| Volume Weighting | Prioritizes liquid venues | Reduces noise from thin markets |
| Deviation Thresholds | Rejects extreme spikes | Stops flash crash propagation |

Validation protocols utilize statistical filtering and multi-source aggregation to construct a robust, tamper-resistant price signal for derivatives.

Data processing often requires reconciling order books from disparate exchanges whose liquidity depth varies significantly. The protocol must also balance the need for high-frequency updates against the gas cost of on-chain computation.
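The trade-off between update frequency and gas cost is commonly handled with a deviation threshold plus a heartbeat (Chainlink Data Feeds, for example, document both parameters). The sketch below is a hypothetical off-chain reporter rule; the function name and default values are illustrative:

```python
def should_push_update(last_price: float, new_price: float,
                       last_update_ts: int, now_ts: int,
                       deviation_bps: float = 50, heartbeat_s: int = 3_600) -> bool:
    """Return True when a reporter should pay gas to post `new_price` on-chain.

    Posts when the price has moved at least `deviation_bps` basis points from
    the last on-chain value, or when `heartbeat_s` seconds have elapsed anyway.
    """
    if last_price <= 0:
        return True  # no usable on-chain value yet, so always post
    moved_bps = abs(new_price - last_price) / last_price * 10_000
    heartbeat_due = now_ts - last_update_ts >= heartbeat_s
    return moved_bps >= deviation_bps or heartbeat_due


print(should_push_update(100.0, 100.3, last_update_ts=0, now_ts=60))     # False: 30 bps, heartbeat not due
print(should_push_update(100.0, 100.6, last_update_ts=0, now_ts=60))     # True: 60 bps move
print(should_push_update(100.0, 100.1, last_update_ts=0, now_ts=3_600))  # True: heartbeat elapsed
```

Raising `deviation_bps` or `heartbeat_s` saves gas at the cost of a wider gap between the market price and the on-chain price.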

Approach

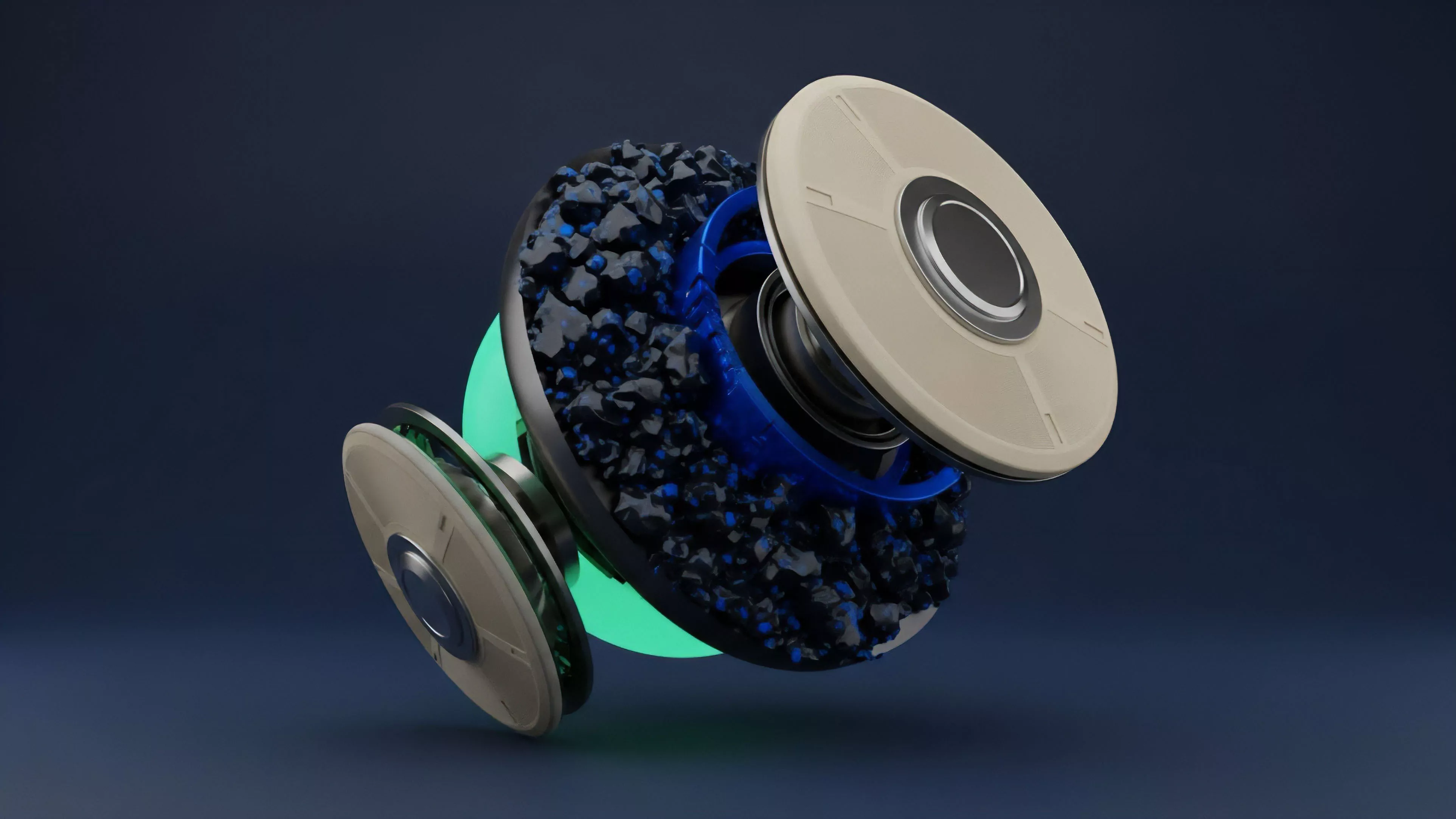

Current strategies for Price Feed Data Validation emphasize decentralized oracle networks and cryptographically secured data streams. These systems move beyond simple averaging to incorporate complex reputation-based weightings for individual data providers.

- Reputation Scoring: Nodes providing consistent, accurate data receive higher weights, while those with frequent deviations face stake slashing.

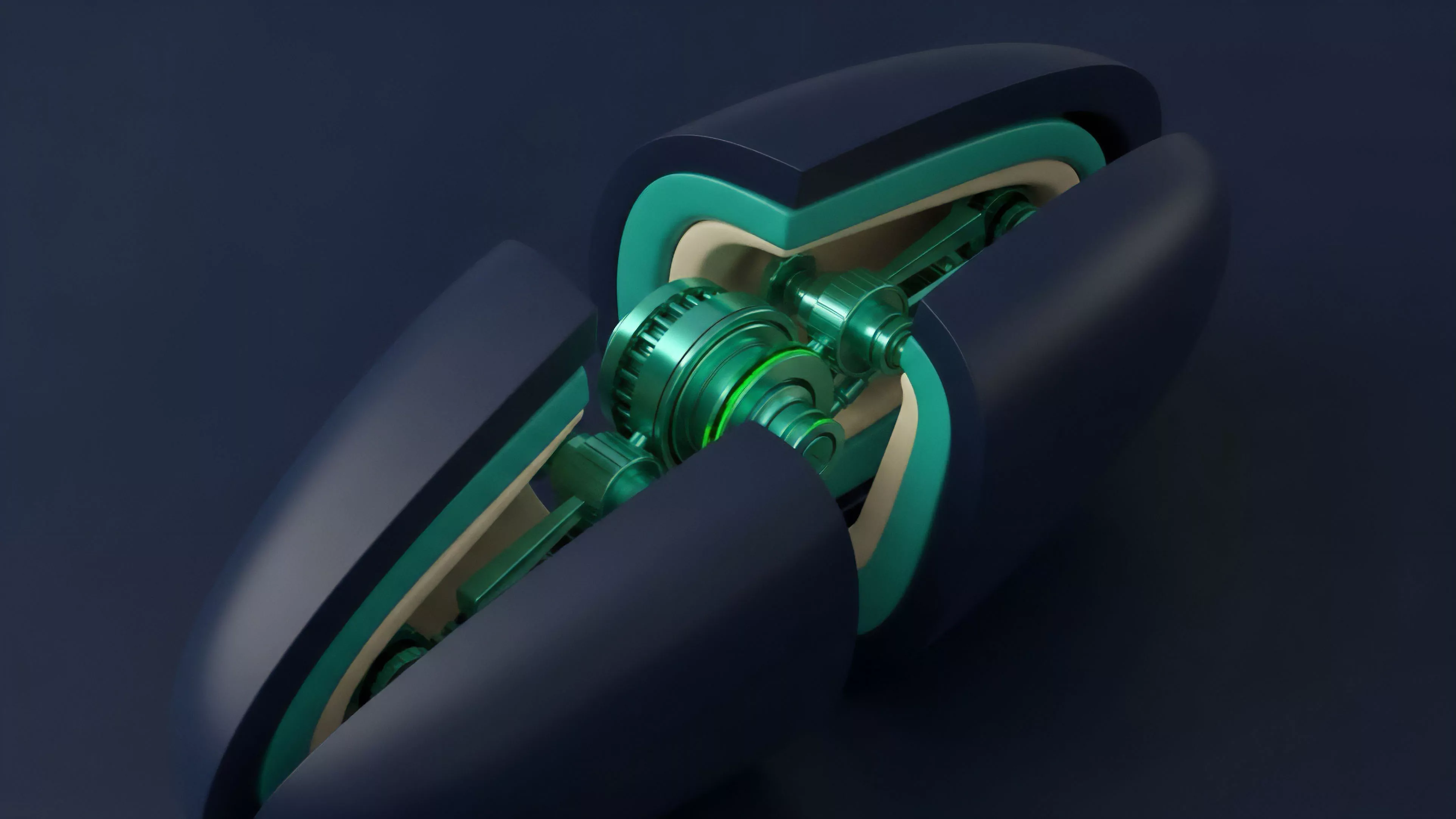

- Cryptographic Proofs: Utilization of zero-knowledge proofs allows providers to verify the authenticity of exchange data without revealing proprietary order flow information.

- Multi-Chain Synchronization: Validation layers ensure that price consistency exists across fragmented liquidity environments, reducing arbitrage opportunities between protocols.
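Reputation weighting with slashing can be sketched under assumed rules: the consensus price is a reputation-weighted median, nodes within `tolerance` of it gain reputation, and nodes outside it lose reputation and a fraction of their stake. The `Node` fields and the reward, penalty, and slash sizes are hypothetical:

```python
from dataclasses import dataclass


@dataclass
class Node:
    name: str
    reputation: float  # hypothetical score, kept within [0.01, 1.0]
    stake: float       # tokens at risk of slashing


def weighted_median(values: list[float], weights: list[float]) -> float:
    """Return the first sorted value at which cumulative weight reaches half the total."""
    pairs = sorted(zip(values, weights))
    half = sum(weights) / 2
    cumulative = 0.0
    for value, weight in pairs:
        cumulative += weight
        if cumulative >= half:
            return value
    raise ValueError("need at least one report")


def settle_round(nodes: list[Node], reports: dict[str, float],
                 tolerance: float = 0.01, reward: float = 0.05,
                 penalty: float = 0.2, slash_fraction: float = 0.1) -> float:
    """Aggregate one round of reports, then update reputation and stake."""
    consensus = weighted_median([reports[n.name] for n in nodes],
                                [n.reputation for n in nodes])
    for node in nodes:
        relative_error = abs(reports[node.name] - consensus) / consensus
        if relative_error <= tolerance:
            node.reputation = min(1.0, node.reputation + reward)
        else:
            node.reputation = max(0.01, node.reputation - penalty)
            node.stake -= node.stake * slash_fraction  # slash part of the stake
    return consensus


nodes = [Node("a", 0.9, 100.0), Node("b", 0.8, 100.0), Node("c", 0.5, 100.0)]
price = settle_round(nodes, {"a": 100.0, "b": 100.4, "c": 130.0})
print(price)                                # 100.4
print(nodes[2].reputation, nodes[2].stake)  # node c is penalized and slashed
```

Because reputation feeds back into the next round's weights, a node that keeps deviating loses both influence and capital.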

Sophisticated validation architectures employ node reputation systems and cryptographic proofs to maintain price accuracy within adversarial market conditions.

The strategic challenge lies in minimizing the latency between an exchange price movement and the corresponding on-chain update. As market volatility increases, the window for potential exploitation widens, forcing developers to increase update frequency without compromising the rigor of the validation process.
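On the consuming side, a common safeguard is to refuse prices whose last update is older than a maximum age. A minimal sketch follows; `PriceUpdate`, `max_age_s`, and the error types are illustrative names, and a protocol could pass a smaller `max_age_s` during volatile periods to narrow the exploitation window:

```python
import time
from dataclasses import dataclass
from typing import Optional


class StalePriceError(Exception):
    """Raised when the latest oracle value is too old to settle against."""


@dataclass(frozen=True)
class PriceUpdate:
    price: float
    updated_at: float  # Unix seconds at which the oracle value was written


def read_fresh_price(update: PriceUpdate, max_age_s: float,
                     now: Optional[float] = None) -> float:
    """Return the price only if it is positive and recent enough to use."""
    now = time.time() if now is None else now
    age = now - update.updated_at
    if age < 0:
        raise ValueError("update timestamp is in the future")
    if age > max_age_s:
        raise StalePriceError(f"price is {age:.0f}s old; limit is {max_age_s:.0f}s")
    if update.price <= 0:
        raise ValueError("non-positive price")
    return update.price


latest = PriceUpdate(price=100.0, updated_at=1_000.0)
print(read_fresh_price(latest, max_age_s=60, now=1_030.0))  # 100.0
```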

Evolution

The transition from rudimentary data feeds to sophisticated validation protocols reflects the maturation of decentralized derivatives. Early iterations prioritized simplicity, whereas modern systems treat data integrity as a first-class security parameter.

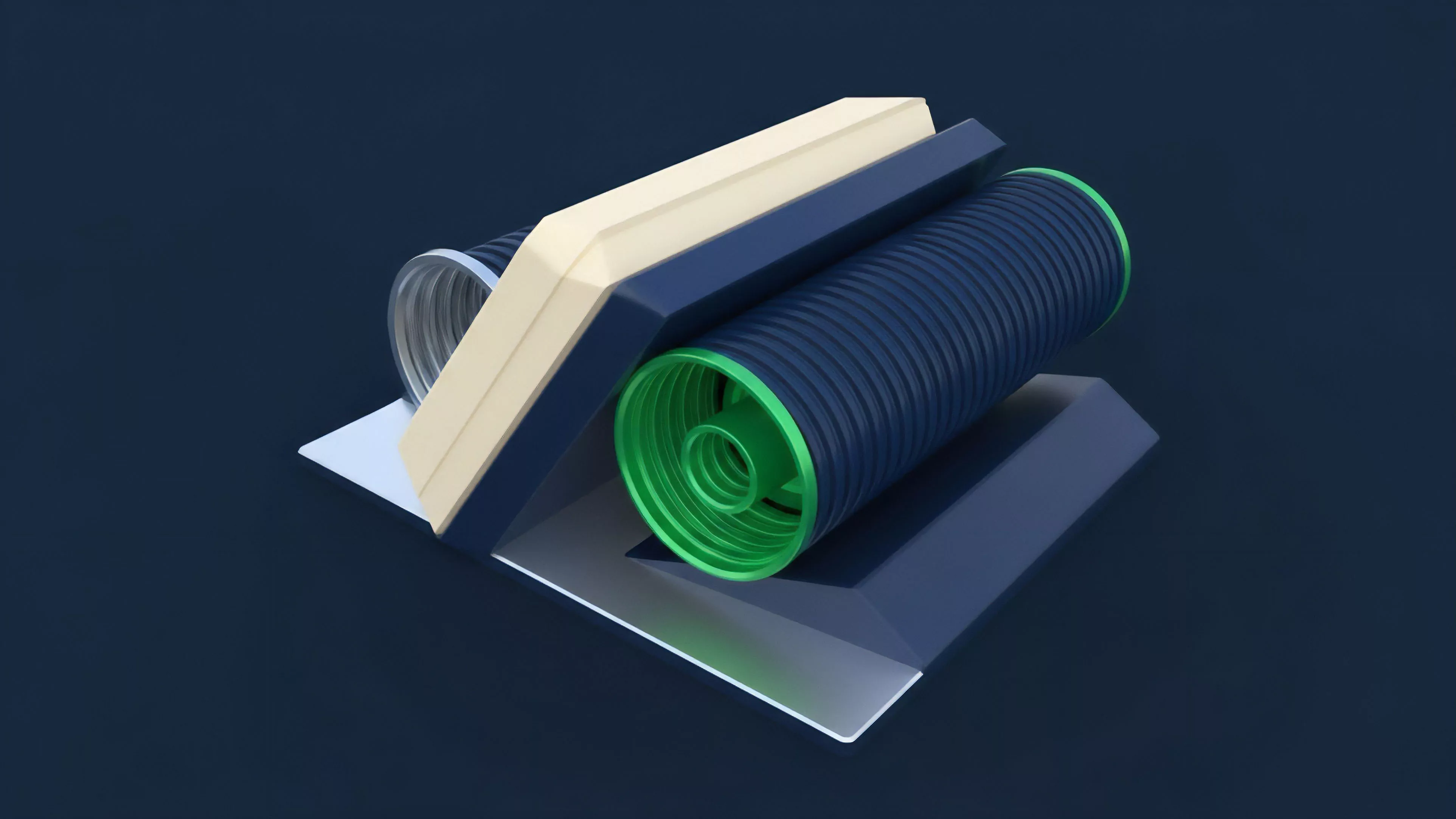

The trajectory shows a shift toward modularity, where protocols plug into different validation services according to their specific risk tolerance. This flexibility allows customized trade-offs among speed, cost, and security. The shift mirrors the evolution of physical infrastructure, where centralized hubs gave way to decentralized, resilient grids that resist cascading failures.

The industry now recognizes that the quality of the price signal directly dictates the viability of the entire financial instrument.

Horizon

The future of Price Feed Data Validation moves toward predictive validation, where protocols incorporate machine learning to anticipate and filter malicious data before it enters the consensus layer. This proactive stance shifts the defensive posture from reactive filtering to active threat detection.

Predictive Modeling

Integration of real-time order flow analysis allows protocols to distinguish between legitimate market volatility and coordinated manipulation attempts.
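Full order-flow analysis is beyond a short example, but the underlying idea can be illustrated with a much simpler, hypothetical heuristic: a large move that appears on most venues at once looks like genuine volatility, while a move confined to one or two venues looks like localized manipulation. The function, thresholds, and labels are assumptions:

```python
def classify_move(prev: dict[str, float], curr: dict[str, float],
                  move_pct: float = 0.05, quorum: float = 0.6) -> str:
    """Label a price update as 'normal', 'market_wide', or 'suspect'.

    Hypothetical heuristic: a move of at least `move_pct` counts as corroborated
    when at least `quorum` of venues moved that far in the same direction.
    Prices are assumed positive.
    """
    changes = [(curr[v] - prev[v]) / prev[v] for v in prev if v in curr]
    ups = sum(1 for c in changes if c >= move_pct)
    downs = sum(1 for c in changes if c <= -move_pct)
    if ups == 0 and downs == 0:
        return "normal"
    share = max(ups, downs) / len(changes)
    return "market_wide" if share >= quorum else "suspect"


prev = {"a": 100.0, "b": 100.0, "c": 100.0, "d": 100.0, "e": 100.0}
crash = {"a": 92.0, "b": 93.0, "c": 91.0, "d": 92.5, "e": 99.8}
isolated = {"a": 100.1, "b": 99.9, "c": 80.0, "d": 100.0, "e": 100.2}
print(classify_move(prev, crash))     # market_wide
print(classify_move(prev, isolated))  # suspect
```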

Decentralized Governance

Governance models will increasingly allow participants to adjust validation parameters dynamically in response to changing market conditions.
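Dynamic adjustment is safer when each governance action is bounded. A minimal sketch, assuming a parameter such as a deviation threshold in basis points may only change within fixed limits and by a maximum step per proposal (`BoundedParam` and its fields are hypothetical):

```python
from dataclasses import dataclass


@dataclass
class BoundedParam:
    """A governance-adjustable parameter with hard limits and a per-change cap."""
    value: float
    min_value: float
    max_value: float
    max_step: float  # largest change a single accepted proposal may apply

    def apply_proposal(self, new_value: float) -> None:
        """Accept `new_value` only if it is in range and close to the current value."""
        if not self.min_value <= new_value <= self.max_value:
            raise ValueError(f"{new_value} outside [{self.min_value}, {self.max_value}]")
        step = abs(new_value - self.value)
        if step > self.max_step:
            raise ValueError(f"change of {step} exceeds max step {self.max_step}")
        self.value = new_value


deviation_bps = BoundedParam(value=50.0, min_value=10.0, max_value=200.0, max_step=25.0)
deviation_bps.apply_proposal(70.0)  # accepted: within bounds, step of 20
print(deviation_bps.value)          # 70.0
```

Capping the step size means a captured or mistaken vote can only move the parameter gradually, giving participants time to react.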

Cross-Protocol Standardization

Common standards for data reporting will reduce fragmentation, fostering a more resilient infrastructure for global decentralized finance.