Essence

Predictive Analytics Models in the context of crypto derivatives represent the quantitative infrastructure designed to forecast future price trajectories, volatility surfaces, and liquidity shifts within decentralized markets. These frameworks ingest high-frequency on-chain data, order book dynamics, and macro-financial indicators to reduce the inherent uncertainty surrounding digital asset exposure. At their core, these models function as probabilistic engines that translate raw market entropy into actionable risk metrics, enabling participants to anticipate liquidation cascades or capitalize on mispriced option premiums before the market reaches equilibrium.

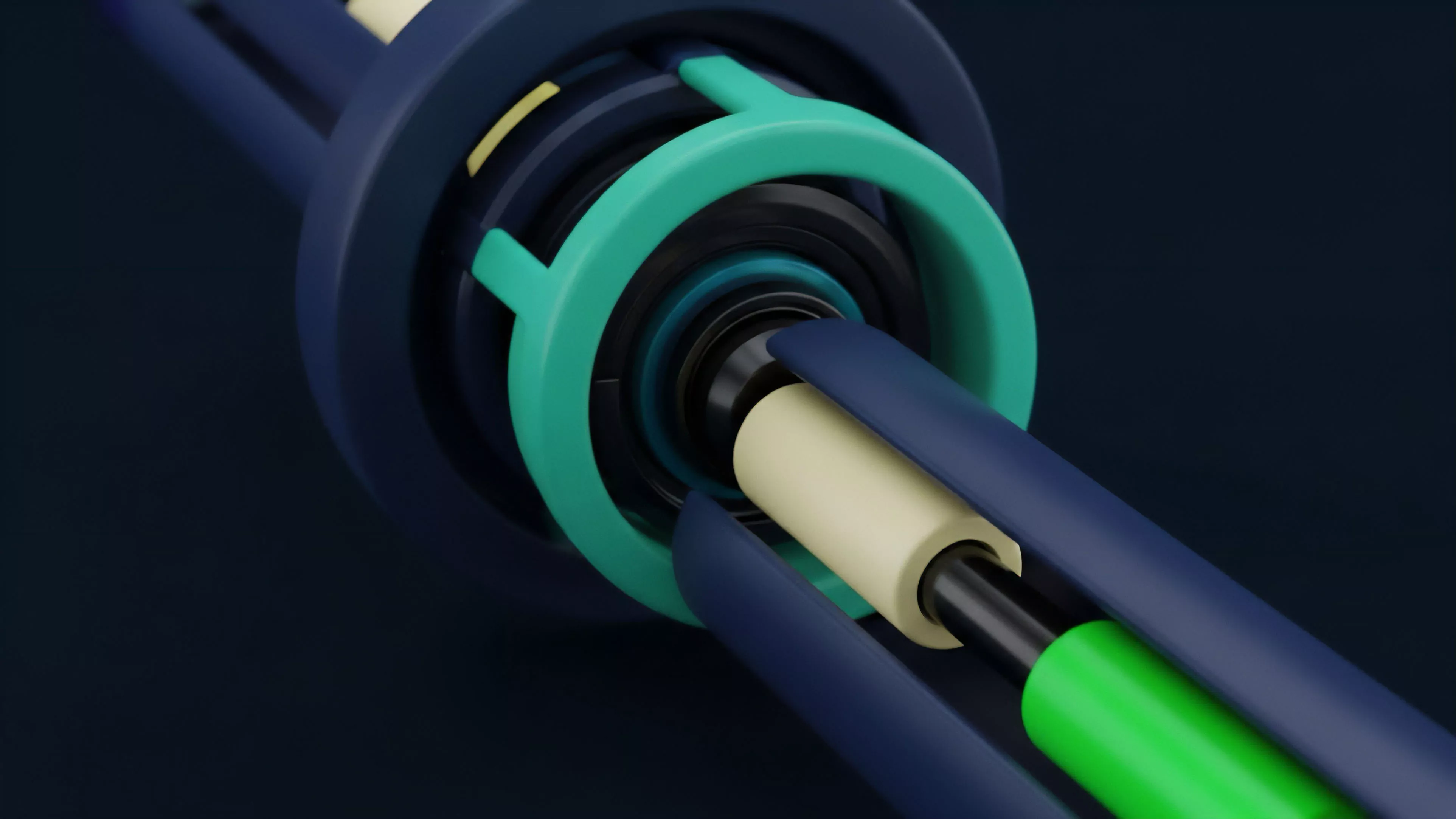

Predictive analytics models serve as the computational foundation for mapping future volatility and liquidity states in decentralized derivative markets.

The systemic relevance of these models extends beyond individual trading performance. They provide the mathematical scaffolding that automated market makers use to adjust pricing and that decentralized margin engines use to adjust collateral requirements in real time. When these models fail to capture non-linear asset behavior or ignore the impact of protocol-level governance shifts, the resulting information asymmetry accelerates systemic contagion.
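To make the idea of a model-driven collateral requirement concrete, here is a minimal Python sketch of a parametric margin rule in which required collateral scales with the model's volatility forecast. The function name, the normal-returns assumption, the 99% confidence level, and the 365-day annualization are illustrative assumptions; real margin engines use their own methodologies, and a normal quantile understates crypto tail risk.

```python
import math
from statistics import NormalDist


def initial_margin(notional: float, sigma_annual: float,
                   horizon_days: float, confidence: float = 0.99) -> float:
    """Hypothetical parametric margin rule: notional * z * sigma_horizon.

    Assumes normally distributed returns over the liquidation horizon and a
    365-day year (crypto venues trade every day).
    """
    z = NormalDist().inv_cdf(confidence)                      # one-sided normal quantile
    sigma_h = sigma_annual * math.sqrt(horizon_days / 365.0)  # scale vol to the horizon
    return notional * z * sigma_h


# When the volatility forecast rises, the required collateral rises proportionally.
calm = initial_margin(100_000, sigma_annual=0.50, horizon_days=1)
stressed = initial_margin(100_000, sigma_annual=1.20, horizon_days=1)
print(f"calm: {calm:,.2f}  stressed: {stressed:,.2f}")
```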

Participants who deploy sophisticated modeling techniques effectively turn market volatility into a structured input for risk management rather than a chaotic external force.

Origin

The genesis of these models traces back to the integration of traditional quantitative finance principles (specifically the Black-Scholes framework) into the permissionless, high-latency environments of early decentralized exchanges. Initial iterations relied on geometric Brownian motion with constant volatility, an assumption that proved inadequate for the heavy-tailed, reflexive nature of crypto asset price action. As decentralized finance matured, developers began incorporating elements from stochastic calculus and game theory to account for the unique liquidity constraints and the recursive incentives embedded in protocol tokenomics.
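For reference, the Black-Scholes price of a European call under geometric Brownian motion with constant volatility fits in a few lines of Python. This is the textbook formula, shown only to make the baseline assumption explicit: because a single `sigma` is used for every strike, the model implies a flat volatility smile, which heavy-tailed crypto markets contradict. The function name and example inputs are illustrative.

```python
import math
from statistics import NormalDist

N = NormalDist().cdf  # standard normal cumulative distribution function


def black_scholes_call(spot: float, strike: float, t_years: float,
                       rate: float, sigma: float) -> float:
    """European call price under Black-Scholes (GBM, constant sigma, no dividends)."""
    vol_sqrt_t = sigma * math.sqrt(t_years)
    d1 = (math.log(spot / strike) + (rate + 0.5 * sigma**2) * t_years) / vol_sqrt_t
    d2 = d1 - vol_sqrt_t
    return spot * N(d1) - strike * math.exp(-rate * t_years) * N(d2)


# Textbook check: S = K = 100, T = 1 year, r = 5%, sigma = 20% gives about 10.45.
print(round(black_scholes_call(100, 100, 1.0, 0.05, 0.20), 4))
```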

- Stochastic Volatility Models emerged to address the failure of constant-volatility assumptions, which cannot reproduce the volatility clustering and skew observed in high-frequency crypto markets.

- Machine Learning Regression was adopted to identify non-linear patterns within massive datasets of historical on-chain transaction logs.

- Game Theoretic Simulations were developed to model the adversarial behavior of participants during periods of extreme market stress.

This evolution was driven by the need to address failure modes unique to programmable money, such as flash loan attacks and rapid oracle manipulation. By borrowing from the rigorous mathematical history of legacy derivatives, these early builders constructed the first generation of predictive tools, transforming decentralized exchanges from simple swap venues into complex, derivative-heavy financial architectures.

Theory

The theoretical framework governing Predictive Analytics Models relies on the synthesis of market microstructure and quantitative finance. Unlike traditional equities, crypto assets exhibit reflexive properties where price movements directly influence the incentive structures of the underlying protocols.

Consequently, models must integrate Protocol Physics (the technical constraints of the underlying blockchain, such as block times, transaction fees, and oracle update frequency) to forecast settlement outcomes accurately. The following table delineates the primary variables influencing these models:

| Variable | Impact on Predictive Accuracy |
| --- | --- |
| Order Flow Imbalance | High predictive value for short-term directional movement. |
| Liquidation Thresholds | Critical for modeling tail risk and systemic cascade potential. |
| Implied Volatility Skew | Essential for pricing non-linear options and assessing sentiment. |
| Protocol TVL (Total Value Locked) Velocity | Indicator of capital efficiency and systemic fragility. |

Effective modeling requires the integration of protocol-specific constraints with traditional quantitative variables to account for reflexive market dynamics.
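Two of the variables in the table above can be computed directly from market and position data. The sketch below assumes a stream of top-of-book snapshots and computes a best-level order flow imbalance in the style of Cont, Kukanov and Stoikov, then a simplified liquidation price for an isolated-margin linear long perpetual. The data class, the function names, and the liquidation formula (which ignores fees, funding, and venue-specific maintenance-margin tiers) are illustrative assumptions, not any exchange's published rule.

```python
from dataclasses import dataclass


@dataclass
class TopOfBook:
    bid_px: float
    bid_sz: float
    ask_px: float
    ask_sz: float


def order_flow_imbalance(snapshots: list[TopOfBook]) -> float:
    """Sum best-level order flow imbalance over consecutive snapshots.
    Positive values indicate net buying pressure."""
    ofi = 0.0
    for prev, cur in zip(snapshots, snapshots[1:]):
        # Bid side: size at an equal-or-higher bid adds demand,
        # size that was resting at an equal-or-higher old bid is removed.
        if cur.bid_px >= prev.bid_px:
            ofi += cur.bid_sz
        if cur.bid_px <= prev.bid_px:
            ofi -= prev.bid_sz
        # Ask side: new supply counts against buyers, removed supply for them.
        if cur.ask_px <= prev.ask_px:
            ofi -= cur.ask_sz
        if cur.ask_px >= prev.ask_px:
            ofi += prev.ask_sz
    return ofi


def long_liquidation_price(entry: float, leverage: float, mmr: float) -> float:
    """Simplified liquidation price for an isolated linear long: solves
    qty*(P - entry) + entry*qty/leverage = mmr*P*qty for P (no fees/funding)."""
    return entry * (1.0 - 1.0 / leverage) / (1.0 - mmr)


book = [TopOfBook(100.0, 5.0, 101.0, 4.0), TopOfBook(100.0, 8.0, 101.0, 1.0)]
print(order_flow_imbalance(book))  # bids grew by 3 and asks shrank by 3 → 6.0
print(round(long_liquidation_price(100.0, leverage=10, mmr=0.005), 4))
```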

Mathematical rigor is required to maintain the stability of these systems. Models often employ GARCH (Generalized Autoregressive Conditional Heteroskedasticity) processes to forecast volatility clustering, combined with Monte Carlo simulations to stress-test portfolios against black-swan events. The persistent challenge is the adversarial environment in which these protocols operate.

Participants are not merely passive observers; they actively seek to exploit model blind spots, necessitating constant recalibration of the underlying algorithms to prevent the models from becoming obsolete or, worse, weaponized against the system itself.
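The GARCH-plus-Monte-Carlo workflow described above can be sketched with NumPy: a GARCH(1,1) recursion filters a return history into a one-step variance forecast, and simulated GARCH paths with Student-t shocks estimate a multi-day Value-at-Risk. The parameters `omega`, `alpha`, `beta`, the degrees of freedom `nu`, and the placeholder return series are hypothetical; in practice the parameters would be estimated by maximum likelihood on real data, which this sketch does not do.

```python
import numpy as np


def garch_filter(returns, omega: float, alpha: float, beta: float) -> float:
    """Run sigma2[t+1] = omega + alpha * r[t]**2 + beta * sigma2[t] over `returns`
    and return the variance forecast for the next period (needs alpha + beta < 1)."""
    sigma2 = omega / (1.0 - alpha - beta)  # start at the long-run variance
    for r in returns:
        sigma2 = omega + alpha * r**2 + beta * sigma2
    return sigma2


def mc_var(next_var: float, omega: float, alpha: float, beta: float,
           horizon: int, n_paths: int = 20_000, nu: float = 4.0,
           confidence: float = 0.99, seed: int = 0) -> float:
    """Monte Carlo VaR of the cumulative log return over `horizon` periods,
    simulating GARCH(1,1) paths driven by unit-variance Student-t shocks (nu > 2)."""
    rng = np.random.default_rng(seed)
    sigma2 = np.full(n_paths, next_var)
    cum = np.zeros(n_paths)
    t_scale = np.sqrt((nu - 2.0) / nu)  # Student-t(nu) has variance nu / (nu - 2)
    for _ in range(horizon):
        eps = np.sqrt(sigma2) * rng.standard_t(nu, size=n_paths) * t_scale
        cum += eps
        sigma2 = omega + alpha * eps**2 + beta * sigma2  # volatility clustering
    return float(-np.quantile(cum, 1.0 - confidence))  # loss as a positive number


omega, alpha, beta = 2e-5, 0.10, 0.85  # hypothetical daily parameters, not fitted
history = np.random.default_rng(1).normal(0.0, 0.03, size=500)  # placeholder data
v_next = garch_filter(history, omega, alpha, beta)
print(f"1-day vol forecast: {np.sqrt(v_next):.4f}")
print(f"10-day 99% VaR: {mc_var(v_next, omega, alpha, beta, horizon=10):.4f}")
```

Student-t shocks are used instead of Gaussian ones so that the simulated paths carry some of the heavy tails the section emphasizes.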

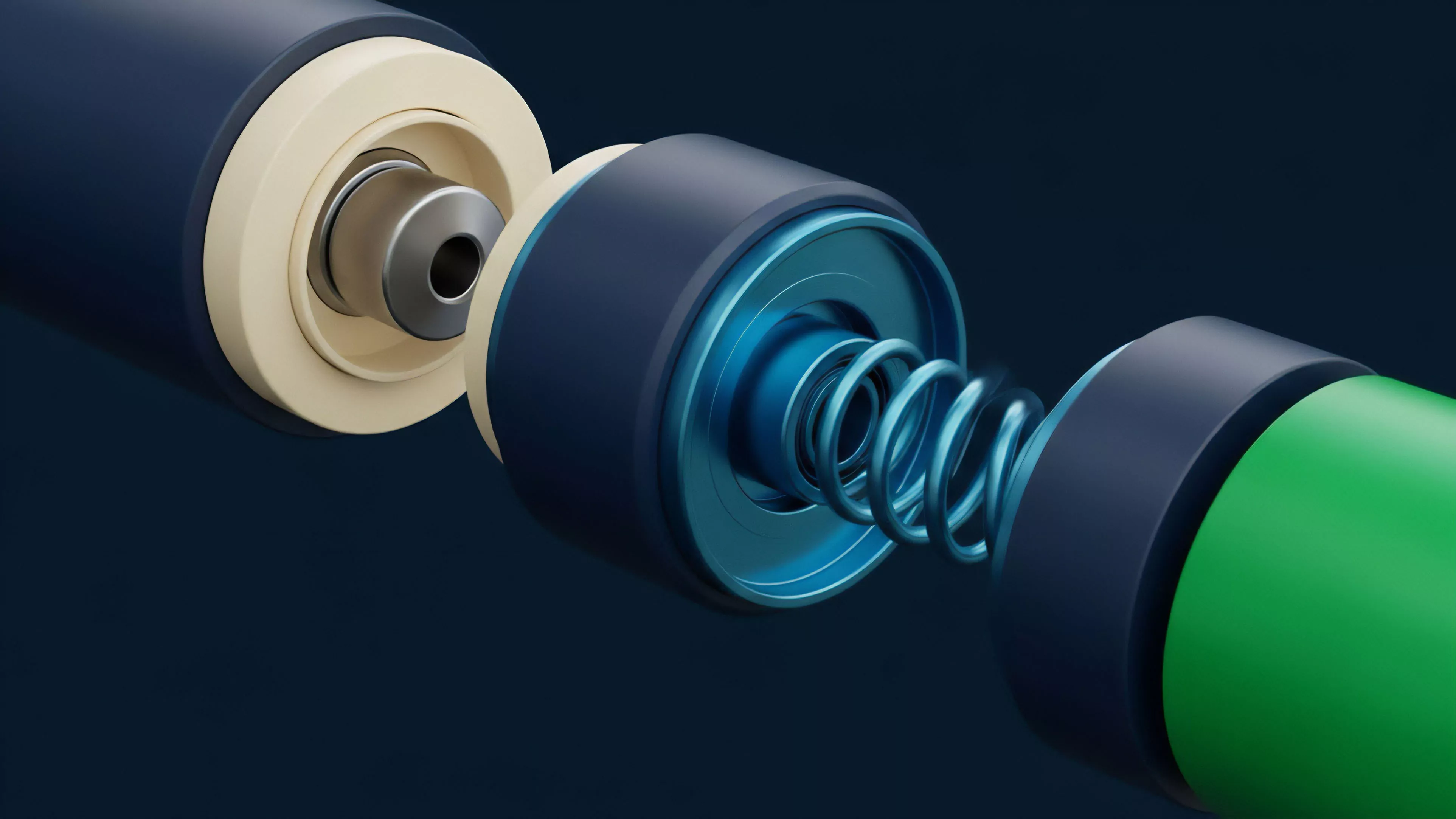

Approach

Current practitioners deploy Predictive Analytics Models through a layered stack that combines off-chain computational power with on-chain verification. The prevailing strategy involves building proprietary pipelines that ingest real-time WebSocket data from major exchanges, normalize this data against network congestion metrics, and feed the result into ensemble learning architectures. These architectures weigh multiple signals, ranging from whale wallet movements to funding rate divergence, to produce a consolidated probability distribution for asset prices.
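One common way to merge several model outputs into a single probability distribution is a linear opinion pool, i.e. a weighted mixture of the individual forecasts. The sketch below assumes each signal model emits a Gaussian forecast `(mu, sigma)` for the next-period log return; the model labels, weights, and numbers are hypothetical, and real ensembles may use other combination rules such as stacking or logarithmic pooling.

```python
from statistics import NormalDist


def pool_forecasts(forecasts, weights):
    """Linear opinion pool of Gaussian forecasts [(mu, sigma), ...].

    Returns the mixture mean, the mixture standard deviation, and its CDF."""
    total = sum(weights)
    w = [x / total for x in weights]  # normalise weights so they sum to 1
    mean = sum(wi * mu for wi, (mu, _) in zip(w, forecasts))
    second_moment = sum(wi * (sd**2 + mu**2) for wi, (mu, sd) in zip(w, forecasts))
    std = max(second_moment - mean**2, 0.0) ** 0.5  # guard tiny negative rounding

    def cdf(x: float) -> float:
        return sum(wi * NormalDist(mu, sd).cdf(x) for wi, (mu, sd) in zip(w, forecasts))

    return mean, std, cdf


# Hypothetical per-signal forecasts of the next-day log return.
forecasts = [(0.002, 0.020),   # e.g. a funding-rate divergence model
             (-0.001, 0.015),  # e.g. an order-flow model
             (0.000, 0.030)]   # e.g. a whale-wallet flow model
mean, std, cdf = pool_forecasts(forecasts, weights=[0.5, 0.3, 0.2])
print(f"mean={mean:.4f} std={std:.4f} P(log return < -0.05)={cdf(-0.05):.4f}")
```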

- Data Normalization ensures that raw signals from fragmented liquidity sources are comparable and ready for analysis.

- Signal Synthesis involves weighting inputs based on their historical predictive power and current market regime.

- Strategy Execution translates the model output into automated order placement, hedging, or collateral rebalancing.
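The three stages above can be sketched in plain Python: each raw signal history is z-scored so heterogeneous sources share a scale, the latest normalized readings are weighted by a crude measure of historical predictive power (hit rate in excess of 50%), and the composite score is mapped to an order intent. The signal names, hit rates, and the ±1 threshold are hypothetical, and the sketch ignores regime detection, which a production system would layer on top.

```python
import statistics


def zscore(values: list[float]) -> list[float]:
    """Data normalization: rescale a raw signal history to zero mean, unit variance."""
    mu = statistics.fmean(values)
    sd = statistics.pstdev(values)
    return [(v - mu) / sd if sd > 0 else 0.0 for v in values]


def synthesize(latest_z: dict[str, float], hit_rates: dict[str, float]) -> float:
    """Signal synthesis: weight each normalized signal by its edge over a coin flip."""
    edges = {k: max(hit_rates[k] - 0.5, 0.0) for k in latest_z}
    total = sum(edges.values())
    if total == 0:
        return 0.0  # no signal has shown predictive power
    return sum(edges[k] / total * latest_z[k] for k in latest_z)


def decide(score: float, threshold: float = 1.0) -> str:
    """Strategy execution: translate the composite score into an order intent."""
    if score > threshold:
        return "buy"
    if score < -threshold:
        return "sell"
    return "hold"


# Hypothetical raw histories, assumed already oriented so that higher = bullish.
funding_divergence = [0.010, 0.012, 0.009, 0.011, 0.030]
whale_flow = [120.0, 80.0, 95.0, 110.0, 400.0]
latest = {"funding": zscore(funding_divergence)[-1], "whale": zscore(whale_flow)[-1]}
score = synthesize(latest, hit_rates={"funding": 0.56, "whale": 0.53})
print(round(score, 3), decide(score))
```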

This approach is characterized by an obsession with latency and data fidelity. In a system where execution speed can be the difference between profit and a liquidated position, the infrastructure supporting the model is as significant as the mathematics behind it. The goal is continuous adaptation: the model learns from every trade and adjusts its parameters to the evolving behavior of market participants, so the strategy stays resilient as liquidity conditions shift.
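One classical way to make a model learn from every trade is recursive least squares (RLS) with a forgetting factor, which updates a linear predictor after each observation and discounts older data so the weights can follow regime changes. This is a swapped-in textbook technique offered as an illustration, not a description of any particular pipeline; the feature vector, the forgetting factor `lam`, and the initialization constant `delta` are assumptions.

```python
import numpy as np


class RecursiveLeastSquares:
    """Online linear predictor y ~ w . x, updated after every observation.

    A forgetting factor lam < 1 down-weights old observations geometrically,
    so the weights can track shifts in market behavior."""

    def __init__(self, n_features: int, lam: float = 0.99, delta: float = 100.0):
        self.w = np.zeros(n_features)
        self.P = np.eye(n_features) * delta  # inverse of the weighted input covariance
        self.lam = lam

    def predict(self, x) -> float:
        return float(self.w @ np.asarray(x, dtype=float))

    def update(self, x, y: float) -> float:
        """Incorporate one (features, outcome) pair; return the a-priori error."""
        x = np.asarray(x, dtype=float)
        Px = self.P @ x
        gain = Px / (self.lam + x @ Px)
        err = y - self.w @ x
        self.w = self.w + gain * err
        self.P = (self.P - np.outer(gain, Px)) / self.lam
        return float(err)


# Usage on synthetic data: the true relationship changes halfway through.
rng = np.random.default_rng(0)
model = RecursiveLeastSquares(n_features=2)
for true_w in ([0.5, -0.2], [-0.3, 0.4]):
    for _ in range(1000):
        x = rng.normal(size=2)
        model.update(x, float(np.dot(true_w, x)))
    print(np.round(model.w, 3))  # weights re-converge to each regime
```

A smaller `lam` adapts faster after a regime change but makes the weights noisier when the data are noisy; that trade-off is the design choice this parameter controls.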

Evolution

The trajectory of these models has shifted from simple trend-following heuristics toward complex, multi-agent systems.

Early tools focused on basic moving averages and Relative Strength Index (RSI) indicators, which were easily gamed by sophisticated market makers. Today, the focus has moved to Bayesian Inference and Reinforcement Learning, allowing models to update their beliefs about the market state in real time as each new block is produced. The rise of decentralized autonomous organizations (DAOs) has further forced these models to account for governance risk, where a single protocol vote can fundamentally alter the value accrual mechanics of an entire derivative product.
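A minimal illustration of updating beliefs as each block arrives is a Gaussian belief about a latent quantity (here, per-block drift) that is widened slightly between blocks and then updated with the new observation. Together these two steps form a one-dimensional local-level Kalman filter, a standard conjugate Bayesian model; the class name, the noise variances, and the per-block observations are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GaussianBelief:
    """Belief N(mean, var) about a latent quantity such as per-block drift."""
    mean: float
    var: float

    def propagate(self, process_var: float) -> "GaussianBelief":
        """Predict step: widen the belief so it can track a drifting state."""
        return GaussianBelief(self.mean, self.var + process_var)

    def update(self, obs: float, obs_var: float) -> "GaussianBelief":
        """Conjugate Normal-Normal update given an observation with known noise."""
        precision = 1.0 / self.var + 1.0 / obs_var
        mean = (self.mean / self.var + obs / obs_var) / precision
        return GaussianBelief(mean, 1.0 / precision)


# Hypothetical per-block return observations arriving one block at a time.
belief = GaussianBelief(mean=0.0, var=1e-6)
for block_return in [0.0004, 0.0007, -0.0002, 0.0009]:
    belief = belief.propagate(process_var=1e-8).update(block_return, obs_var=1e-6)
    print(f"posterior drift {belief.mean:.6f} +/- {belief.var ** 0.5:.6f}")
```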

The transition from static indicators to adaptive agent-based systems marks the current frontier in derivative risk management and price discovery.

The systemic impact of this evolution is the professionalization of decentralized market participants. As the sophistication of predictive tools increases, so does the efficiency of price discovery. However, this progress introduces a paradox: as more participants use similar models to anticipate market moves, the market becomes prone to synchronized reflexive behaviors, potentially increasing the frequency and severity of localized flash crashes.
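The crowding effect can be illustrated with a deliberately simple toy model: an exogenous shock hits the price, every agent whose trigger is breached sells once, and each forced sale moves the price down by a fixed impact. When agents run similar models their triggers cluster, and the same shock propagates much further. All parameters (20 agents, a 2.5-point shock, 0.3 points of impact per sale) are hypothetical and uncalibrated.

```python
def cascade(price: float, shock: float, triggers: list[float], impact: float) -> float:
    """Toy reflexive sell-off: apply `shock`, then let every agent whose trigger
    is breached sell once, moving the price down by `impact` per sale, until no
    new trigger fires. Returns the final price."""
    price -= shock
    fired = [False] * len(triggers)
    changed = True
    while changed:
        changed = False
        for i, trigger in enumerate(triggers):
            if not fired[i] and price <= trigger:
                fired[i] = True
                price -= impact
                changed = True
    return price


crowded = [97.0 + 0.05 * i for i in range(20)]   # similar models, clustered triggers
dispersed = [80.0 + 1.0 * i for i in range(20)]  # diverse models, spread-out triggers
print(cascade(100.0, 2.5, crowded, 0.3))    # every trigger fires: about 91.5
print(cascade(100.0, 2.5, dispersed, 0.3))  # only three fire: about 96.6
```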

Understanding the limitations of one’s own model is now as vital as the accuracy of its output.

Horizon

The future of Predictive Analytics Models lies in the convergence of Zero-Knowledge Proofs and decentralized computation, enabling complex, private models to run off-chain while their outputs are verified on-chain. This advancement will allow for the development of trustless, verifiable risk-scoring systems that do not require exposing proprietary trading strategies. Furthermore, the integration of cross-chain liquidity analytics will provide a holistic view of derivative markets, allowing models to predict contagion propagation across different ecosystems before it reaches the broader financial network.

- On-chain Model Verification will permit trustless auditing of predictive performance.

- Cross-Protocol Liquidity Mapping will reveal systemic interdependencies previously obscured by fragmentation.

- Autonomous Risk Management Agents will perform real-time portfolio optimization without human intervention.

As these technologies mature, the barrier between traditional quantitative research and decentralized protocol development will continue to dissolve. The ultimate success of these models will be measured by their ability to provide stability in an inherently volatile environment, effectively turning the chaos of decentralized finance into a predictable and manageable system for all participants.