Essence

The integration of predictive analytics into crypto options architecture represents a necessary evolution from simple statistical inference to complex systems modeling. The goal shifts from forecasting a single asset’s price to predicting the behavior of the entire interconnected network. In traditional finance, predictive analytics primarily addresses volatility forecasting and directional price movements.

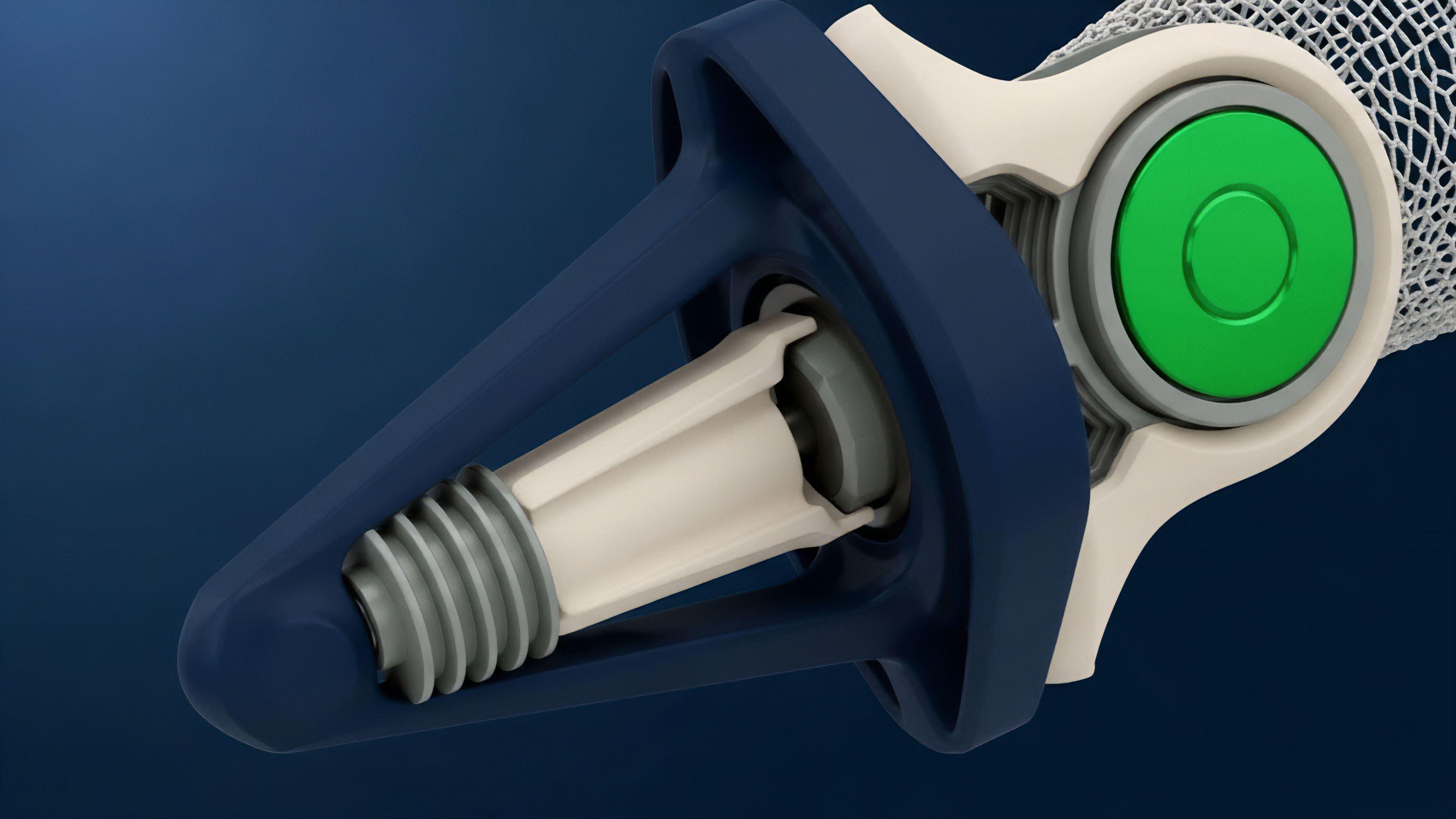

In the decentralized context, this function expands to include the prediction of systemic risk propagation, smart contract state changes, and the dynamic response of liquidity pools to external shocks. A predictive model in this domain must account for both market microstructure data and the “protocol physics” governing the underlying decentralized application.

This approach requires moving beyond standard time-series analysis. The data-generating process in decentralized finance (DeFi) is non-stationary and highly reflexive: participants act on predictions, and their actions change the very system state being predicted. Integrating predictive analytics means modeling these feedback loops, which yields insight into potential liquidation cascades, capital efficiency, and the stability of collateralized debt positions.

Modeling these feedback loops turns options pricing from a purely mathematical exercise into a game-theoretic problem, in which a model must predict how different market participants will react to specific on-chain events.

Predictive analytics integration in crypto options is the process of synthesizing market microstructure data and protocol physics to forecast systemic risk propagation and network state changes.

Origin

The genesis of predictive analytics in crypto options traces back to the limitations exposed during periods of extreme market stress. Early crypto derivatives platforms, both centralized and decentralized, relied on models adapted from traditional finance. These models, often Black-Scholes pricing or GARCH-family volatility models, proved brittle when faced with the unique characteristics of digital assets.

Pronounced volatility clustering, thin liquidity during tail events, and the absence of a true risk-free rate in many protocols made these legacy approaches insufficient for accurate risk management.

The critical turning point came with the rise of on-chain derivatives protocols and automated market makers (AMMs). These new architectures introduced a wealth of publicly verifiable data, but also new failure modes. Unlike centralized exchanges, where data access is privileged, on-chain protocols expose every transaction, liquidation, and protocol parameter change to public view.

The challenge became how to process this new, high-dimensional data set to predict outcomes like impermanent loss for liquidity providers or the likelihood of collateral default. This necessitated the development of new models specifically designed to interpret the unique physics of decentralized protocols.
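Impermanent loss, named above as one of the outcomes these models try to predict, has a closed form for the simplest pool design. The sketch below assumes a 50/50 constant-product pool (x * y = k, as in Uniswap v2-style AMMs) and ignores trading fees; concentrated-liquidity pools need a different formula.

```python
import math


def impermanent_loss(price_ratio: float) -> float:
    """Impermanent loss for a 50/50 constant-product (x * y = k) pool.

    price_ratio: price at withdrawal divided by price at deposit (> 0).
    Returns LP position value relative to simply holding, minus 1, so
    0.0 means no loss and -0.05 means 5% worse than holding.
    Trading fees are ignored (an assumption of this sketch).
    """
    if price_ratio <= 0:
        raise ValueError("price_ratio must be positive")
    return 2 * math.sqrt(price_ratio) / (1 + price_ratio) - 1


# The loss is symmetric in log-price: a 2x rise and a 50% fall cost the same.
for r in (0.5, 1.0, 2.0, 4.0):
    print(f"price ratio {r:>4}: IL = {impermanent_loss(r):.2%}")
```

Because the formula depends only on the price ratio, a predictive model for LP risk reduces to forecasting the distribution of that ratio over the holding period.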

Theory

The theoretical foundation for predictive analytics in crypto options centers on modeling non-stationarity and high-dimensionality. Traditional quantitative models assume certain statistical properties of price movements that do not hold true in crypto markets. The market structure is constantly evolving, driven by new protocol deployments, tokenomics changes, and regulatory shifts.

This necessitates a move toward machine learning models that can dynamically adapt to changing data distributions.

The core challenge lies in data source integration. A robust predictive framework for crypto options must synthesize data from disparate sources to create a complete picture of risk. This requires a shift from relying solely on price history to incorporating data on network health and protocol state.

Data Source Stratification

- On-Chain Data: This includes transaction volume, gas fees, smart contract interactions, and the real-time state of collateral pools. Analyzing this data provides a view of actual user behavior and capital flows.

- Market Microstructure Data: Order book depth, bid-ask spreads, and order flow imbalance on centralized exchanges (CEX) and decentralized exchanges (DEX). This data provides insights into immediate supply and demand dynamics.

- Tokenomics Data: Changes in token distribution, vesting schedules, and governance proposals that affect future supply and demand.
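As a minimal sketch of how the three strata might be combined into one model input, the snapshot below holds a few fields from each source and flattens them into features. All field names are hypothetical, and the depth-based imbalance is only a simple proxy for order flow imbalance (a trade-flow measure would need executed trades, not resting depth).

```python
from dataclasses import dataclass


@dataclass
class MarketSnapshot:
    """One observation across the three data strata (all field names hypothetical)."""
    # On-chain
    gas_price_gwei: float
    pool_collateral_usd: float
    pool_debt_usd: float
    # Market microstructure (top of book, one venue)
    best_bid: float
    best_ask: float
    bid_depth: float
    ask_depth: float
    # Tokenomics
    circulating_supply: float
    unlocking_next_30d: float


def to_features(s: MarketSnapshot) -> dict:
    """Flatten a snapshot into model-ready features."""
    mid = (s.best_bid + s.best_ask) / 2
    depth = s.bid_depth + s.ask_depth
    return {
        "gas_price_gwei": s.gas_price_gwei,
        # Pool-level collateralization; inf when the pool has no debt.
        "collateral_ratio": (s.pool_collateral_usd / s.pool_debt_usd
                             if s.pool_debt_usd else float("inf")),
        # Depth imbalance in [-1, 1]: a simple proxy for order flow imbalance.
        "book_imbalance": (s.bid_depth - s.ask_depth) / depth if depth else 0.0,
        "spread_bps": (s.best_ask - s.best_bid) / mid * 1e4,
        # Share of supply unlocking soon: a rough future sell-pressure signal.
        "unlock_share_30d": s.unlocking_next_30d / s.circulating_supply,
    }


snap = MarketSnapshot(25.0, 1.5e9, 1.0e9, 99.9, 100.1, 120.0, 80.0, 1e9, 2e7)
print(to_features(snap))
```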

Model Selection and Application

The selection of models for predictive analytics depends on the specific risk being addressed. For short-term volatility forecasting, high-frequency data and deep learning models (such as LSTMs) are often employed to capture patterns in order flow. For longer-term systemic risk assessment, models must incorporate game-theoretic elements.

This involves predicting how liquidity providers and arbitrageurs will react to changes in protocol parameters.

The fundamental theoretical shift is from traditional time-series models to dynamic, multivariate machine learning frameworks capable of modeling the non-stationary and reflexive nature of decentralized markets.

A critical theoretical component is volatility skew: the variation of implied volatility across strikes for a given expiry. In traditional options, skew reflects market sentiment about tail risk. In crypto, skew is often more pronounced and tied directly to on-chain events, such as upcoming liquidations or major protocol upgrades.

Predictive models must accurately capture this skew to avoid mispricing options and exposing market makers to significant tail risk.
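One simple way to turn skew into a model input is to compare implied volatility at an out-of-the-money put strike with an equally out-of-the-money call strike. The sketch below assumes a single-expiry smile quoted as (strike/spot, implied vol) pairs and interpolates linearly between quotes; production systems often fit a smooth parameterization and use delta-based measures such as the 25-delta risk reversal instead.

```python
from bisect import bisect_left


def interp_iv(smile: list, moneyness: float) -> float:
    """Linearly interpolate implied vol at `moneyness` (strike / spot).

    smile: (moneyness, implied_vol) pairs sorted by moneyness.
    """
    xs = [m for m, _ in smile]
    if not xs[0] <= moneyness <= xs[-1]:
        raise ValueError("moneyness outside the quoted range")
    i = bisect_left(xs, moneyness)
    if xs[i] == moneyness:
        return smile[i][1]
    (x0, y0), (x1, y1) = smile[i - 1], smile[i]
    return y0 + (y1 - y0) * (moneyness - x0) / (x1 - x0)


def put_call_skew(smile: list, otm: float = 0.10) -> float:
    """IV at a put strike `otm` below spot minus IV at a call strike `otm` above.

    Positive values mean downside protection is priced richer than upside.
    """
    return interp_iv(smile, 1 - otm) - interp_iv(smile, 1 + otm)


# Hypothetical smile with richer downside vol.
smile = [(0.8, 0.95), (0.9, 0.80), (1.0, 0.70), (1.1, 0.72), (1.2, 0.78)]
print(f"10% OTM put-call skew: {put_call_skew(smile):.3f}")
```

Tracking this number over time, rather than a single at-the-money volatility, lets a model notice when the market starts paying up for crash protection ahead of an on-chain event.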

Approach

The practical implementation of predictive analytics integration requires a structured approach to risk management and pricing. This involves building a system that processes real-time data, generates risk signals, and automatically adjusts strategies. The first step involves creating a robust data pipeline capable of handling high-frequency on-chain data and market data feeds.

This pipeline must clean and normalize the data to remove noise and ensure consistency across different protocols.
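The cleaning and normalization step can be sketched with two small helpers: one converts raw integer token amounts into token units using each token's decimals, so that a 6-decimal and an 18-decimal token become comparable, and one produces a streaming, clipped z-score so that signals from different protocols share a common scale. The window length and clip level are hypothetical defaults.

```python
import statistics
from collections import deque


def normalize_amount(raw: int, decimals: int) -> float:
    """Convert an integer on-chain amount to token units (e.g. decimals=6 or 18)."""
    return raw / 10 ** decimals


class RollingZScore:
    """Streaming z-score over the last `window` values, clipped to +/- `clip`."""

    def __init__(self, window: int = 100, clip: float = 5.0):
        self.values = deque(maxlen=window)
        self.clip = clip

    def update(self, x: float) -> float:
        self.values.append(x)
        if len(self.values) < 2:
            return 0.0  # not enough history yet
        mean = statistics.fmean(self.values)
        sd = statistics.pstdev(self.values)
        if sd == 0:
            return 0.0  # flat series: no deviation to report
        z = (x - mean) / sd
        return max(-self.clip, min(self.clip, z))


# The same 1.5-token amount on a 6-decimal and an 18-decimal token.
print(normalize_amount(1_500_000, 6), normalize_amount(1_500_000_000_000_000_000, 18))

# A gas-price spike relative to recent history.
gas_z = RollingZScore(window=50, clip=4.0)
for gwei in (20, 22, 21, 19, 180):
    print(round(gas_z.update(gwei), 2))
```

Clipping keeps one extreme reading from dominating downstream models while still flagging it at the maximum score.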

Once the data pipeline is established, a multi-model approach is typically employed. No single model provides a complete view of risk. Instead, a combination of models provides different signals that are aggregated into a single risk score or pricing adjustment.

For example, a GARCH model might forecast short-term volatility, while a separate model monitors on-chain collateral health to predict systemic risk.
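A minimal sketch of this two-model setup: a GARCH(1,1) recursion produces a one-step-ahead variance forecast, a hypothetical collateral-stress function scores pool health, and a weighted sum combines the two into a single risk score. The GARCH parameters are assumed to have been estimated elsewhere (for example by maximum likelihood), and the scoring functions and weights are illustrative choices, not a standard.

```python
import math


def garch11_forecast(returns, omega, alpha, beta):
    """One-step-ahead variance from GARCH(1,1):
    sigma2[t+1] = omega + alpha * r[t]**2 + beta * sigma2[t].
    `returns` are assumed demeaned; parameters are assumed pre-estimated."""
    if alpha < 0 or beta < 0 or alpha + beta >= 1:
        raise ValueError("need alpha, beta >= 0 and alpha + beta < 1")
    var = omega / (1 - alpha - beta)  # initialize at the long-run variance
    for r in returns:
        var = omega + alpha * r * r + beta * var
    return var


def collateral_stress(collateral_ratio, liquidation_ratio):
    """Hypothetical score: 0 at or above 2x the liquidation ratio, 1 at or below it."""
    buffer = (collateral_ratio - liquidation_ratio) / liquidation_ratio
    return max(0.0, min(1.0, 1.0 - buffer))


def risk_score(vol_forecast, vol_reference, stress, w_vol=0.5, w_stress=0.5):
    """Weighted blend (in [0, 1] with the default weights).
    The volatility signal saturates once the forecast reaches 2x the reference."""
    vol_signal = min(1.0, vol_forecast / (2 * vol_reference))
    return w_vol * vol_signal + w_stress * stress


daily_returns = [0.01, -0.03, 0.02]
var_next = garch11_forecast(daily_returns, omega=1e-5, alpha=0.10, beta=0.85)
score = risk_score(math.sqrt(var_next), vol_reference=0.03,
                   stress=collateral_stress(1.5, 1.2))
print(f"next-day vol {math.sqrt(var_next):.4f}, risk score {score:.3f}")
```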

Risk Management Framework Components

A successful implementation requires a clear understanding of the specific risks inherent in crypto options. These risks go beyond simple price movement and include technical and systemic factors.

- Liquidation Risk Forecasting: Predicting the probability and magnitude of liquidation cascades by analyzing collateralization ratios across a protocol’s user base.

- Implied Volatility Surface Dynamics: Adjusting the implied volatility surface in real time based on order flow imbalance and on-chain activity.

- Smart Contract Vulnerability Prediction: Identifying patterns in code changes or governance proposals that could introduce new technical risks.
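Liquidation risk forecasting, the first item above, can be prototyped as an iterative stress test: apply a price shock, liquidate every position whose debt exceeds its borrowing limit, let the forced collateral sales move the price, and repeat until no new liquidations occur. The sketch assumes a single collateral asset, a fixed liquidation threshold per position, and a linear price impact per unit sold; all three are simplifying assumptions, and real protocols add liquidation bonuses, partial liquidations, and venue-specific slippage.

```python
from dataclasses import dataclass


@dataclass
class Position:
    collateral: float            # units of the collateral asset
    debt: float                  # debt denominated in USD
    liq_threshold: float = 0.8   # liquidatable when debt > collateral value * threshold


def simulate_cascade(positions, price, shock, impact_per_unit):
    """Return (final_price, positions_liquidated, debt_liquidated_usd)."""
    price *= 1.0 - shock  # initial exogenous shock, e.g. 0.07 for -7%
    alive = list(positions)
    n_liquidated, debt_liquidated = 0, 0.0
    while True:
        to_liquidate, survivors = [], []
        for p in alive:
            liquidatable = p.debt > p.collateral * price * p.liq_threshold
            (to_liquidate if liquidatable else survivors).append(p)
        if not to_liquidate:
            return price, n_liquidated, debt_liquidated
        n_liquidated += len(to_liquidate)
        debt_liquidated += sum(p.debt for p in to_liquidate)
        # Forced sales push the price down, which may trigger the next round.
        sold = sum(p.collateral for p in to_liquidate)
        price = max(0.0, price - impact_per_unit * sold)
        alive = survivors


book = [Position(10, 15_000), Position(10, 14_000), Position(10, 10_000)]
for shock in (0.05, 0.07):
    print(shock, simulate_cascade(book, price=2_000, shock=shock, impact_per_unit=15))
```

In this toy book a 5% shock liquidates nothing, while a 7% shock liquidates the first position and the resulting sale drags the price far enough to liquidate the second: the nonlinearity that makes cascade forecasting distinct from a simple price forecast.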

The practical approach to pricing options uses predictive models to adjust the inputs of standard pricing formulas. Instead of historical volatility, predictive analytics supplies a forward-looking volatility forecast, so the resulting price reflects expected future volatility rather than past realized volatility.
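As a minimal illustration, the Black-Scholes call price below is evaluated once with a trailing historical volatility and once with a forward-looking forecast; only the volatility input changes. Black-Scholes stands in here for the standard pricing formula, and the spot, strike, rate, and both volatility figures are hypothetical.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal CDF via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_call(spot, strike, t_years, rate, vol):
    """Black-Scholes price of a European call (no dividends)."""
    if t_years <= 0:
        return max(0.0, spot - strike)
    sd = vol * math.sqrt(t_years)
    if sd == 0:
        # Zero volatility: the payoff is deterministic.
        return max(0.0, spot - strike * math.exp(-rate * t_years))
    d1 = (math.log(spot / strike) + rate * t_years) / sd + 0.5 * sd
    d2 = d1 - sd
    return spot * norm_cdf(d1) - strike * math.exp(-rate * t_years) * norm_cdf(d2)


spot, strike, t, r = 60_000, 65_000, 30 / 365, 0.04
historical_vol = 0.55  # hypothetical trailing realized volatility
forecast_vol = 0.75    # hypothetical model forecast ahead of a known event
for label, vol in (("historical", historical_vol), ("forecast", forecast_vol)):
    print(f"{label:>10} vol {vol:.0%}: call = {bs_call(spot, strike, t, r, vol):,.2f}")
```

Keeping the pricing formula fixed and swapping only the volatility input makes it easy to attribute any price change to the forecast itself.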

Effective implementation requires a multi-model approach that combines traditional volatility forecasting with real-time on-chain data analysis to predict systemic risks and adjust option pricing dynamically.

Evolution

The evolution of predictive analytics in crypto options has mirrored the increasing complexity of the underlying protocols themselves. Early models focused on simple time-series analysis, treating crypto assets as isolated financial instruments. This approach quickly proved inadequate during periods of high leverage and interconnectedness.

The first major evolutionary leap was the integration of market microstructure data from centralized exchanges, allowing for more accurate short-term volatility forecasts by analyzing order flow dynamics.

The second, and more significant, evolutionary step involved incorporating “protocol physics” into the models. This shift was driven by the realization that on-chain events, such as liquidations and changes in collateral requirements, create feedback loops specific to decentralized systems. Models evolved from simple statistical forecasting to complex simulations of how market participants behave under various stress scenarios.

This transition required a deeper understanding of game theory and behavioral economics.

This evolution led to the development of sophisticated risk dashboards and automated risk engines. These tools move beyond simple data presentation to actively recommend changes in protocol parameters or adjust risk exposure in real time. The focus shifted from passively observing the market to actively managing systemic risk through data-driven intervention.

Horizon

Looking forward, predictive analytics in crypto options points toward full integration into automated risk governance. The next generation of protocols will not rely on human operators to adjust risk parameters; instead, predictive models will feed directly into autonomous risk engines. These engines will dynamically adjust collateral requirements, liquidation thresholds, and funding rates based on real-time predictions of market stress and liquidity depth.
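A minimal sketch of how a volatility prediction could feed such an engine: the forecast is translated into a target collateral ratio that survives a k-sigma adverse move over the liquidation horizon, and the live parameter moves toward that target in bounded steps so that a single bad forecast cannot swing it abruptly. The k-sigma rule, square-root-of-time scaling, one-day horizon, step limit, and floor/cap bounds are all hypothetical design choices.

```python
import math


def required_collateral_ratio(predicted_vol_annual, horizon_days=1.0, k_sigma=3.0):
    """Collateral/debt ratio that still covers the debt after a k-sigma
    adverse price move over the horizon (square-root-of-time scaling)."""
    move = k_sigma * predicted_vol_annual * math.sqrt(horizon_days / 365.0)
    if move >= 1.0:
        raise ValueError("predicted move too large for a collateralized position")
    return 1.0 / (1.0 - move)


def next_parameter(current, target, max_step=0.05, floor=1.1, cap=3.0):
    """Move toward `target` by at most `max_step` per update, within [floor, cap]."""
    step = max(-max_step, min(max_step, target - current))
    return max(floor, min(cap, current + step))


current = 1.20
for vol in (0.6, 1.2, 1.2):  # successive annualized volatility forecasts
    target = required_collateral_ratio(vol)
    current = next_parameter(current, target)
    print(f"forecast vol {vol:.0%}: target {target:.3f}, set to {current:.3f}")
```

The rate limit is one way to blunt the manipulation risk raised later in this section: an adversary who briefly distorts the model's inputs can move the parameter only a small amount per update.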

This future state represents a move toward “predictive governance.” A decentralized autonomous organization (DAO) will use predictive models to make decisions about protocol upgrades or treasury management. For example, a model might predict the impact of a new collateral asset on systemic risk, and the DAO would then vote on whether to approve the asset based on the model’s output. This creates a more resilient and self-optimizing financial system.

A key area of development will be the integration of predictive analytics with decentralized insurance and hedging mechanisms. By accurately forecasting systemic risk, protocols can price insurance policies more effectively and create dynamic hedging strategies that automatically adjust based on predicted volatility spikes. This transforms risk management from a reactive measure into a proactive, automated process.
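To make volatility-aware dynamic hedging concrete, the sketch below recomputes the Black-Scholes delta of a short call position under the model's predicted volatility and reports how much of the underlying to buy or sell to stay delta-neutral. It assumes European calls and Black-Scholes dynamics, ignores transaction costs and funding, and uses a hypothetical rebalancing tolerance.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal CDF via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def call_delta(spot, strike, t_years, rate, vol):
    """Black-Scholes delta of a European call (requires t_years > 0, vol > 0)."""
    sd = vol * math.sqrt(t_years)
    d1 = (math.log(spot / strike) + rate * t_years) / sd + 0.5 * sd
    return norm_cdf(d1)


def rehedge_trade(short_calls, spot, strike, t_years, rate, predicted_vol,
                  current_hedge, tolerance=0.05):
    """Units of the underlying to buy (+) or sell (-) so the hedge matches the
    delta of `short_calls` written calls under `predicted_vol`.
    Returns 0.0 when the gap is within `tolerance` units (hypothetical band)."""
    target = short_calls * call_delta(spot, strike, t_years, rate, predicted_vol)
    gap = target - current_hedge
    return gap if abs(gap) > tolerance else 0.0


# 10 short out-of-the-money calls hedged at 50% vol; the model now predicts 90%.
hedge = 10 * call_delta(100, 120, 0.25, 0.0, 0.5)
print(f"buy {rehedge_trade(10, 100, 120, 0.25, 0.0, 0.9, hedge):.3f} units")
```

For out-of-the-money calls, a predicted volatility spike raises delta, so the hedge is topped up before the move rather than after it.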

| Model Input Category | Traditional Finance Approach | Decentralized Finance Integration |
|---|---|---|
| Volatility Data | Historical price movements, implied volatility from CBOE. | Real-time on-chain transaction data, liquidity pool depth, order flow imbalance across CEX/DEX. |
| Risk-Free Rate | Treasury bond yield. | Lending protocol interest rates, stablecoin yield curves, or a risk-free rate derived from protocol mechanics. |
| Systemic Risk Factors | Macroeconomic indicators, credit default swaps. | Collateralization ratios, liquidation thresholds, protocol governance actions, and inter-protocol dependencies. |
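For the Risk-Free Rate row, one hypothetical way to derive a rate input from protocol data is to take the median stablecoin supply APY across several lending venues and convert it to the continuously compounded rate that Black-Scholes-style formulas expect, using r = ln(1 + APY). The venue rates below are made up, and treating any lending yield as risk-free is itself an assumption, given the absence of a truly risk-free rate noted earlier.

```python
import math
import statistics


def continuous_rate(apy: float) -> float:
    """Continuously compounded rate equivalent to an annual effective yield."""
    return math.log1p(apy)


def proxy_risk_free_rate(stablecoin_apys: list) -> float:
    """Median stablecoin supply APY across venues, as a continuous rate.
    The median is a hypothetical choice that damps a single outlier venue."""
    if not stablecoin_apys:
        raise ValueError("need at least one venue rate")
    return continuous_rate(statistics.median(stablecoin_apys))


venue_apys = [0.041, 0.038, 0.095, 0.044]  # hypothetical; one venue is an outlier
print(f"{proxy_risk_free_rate(venue_apys):.4%}")
```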

The ultimate goal is to create a closed-loop system where predictive analytics provides the intelligence necessary for a protocol to maintain stability and capital efficiency autonomously. This requires addressing the challenges of data privacy, model interpretability, and the potential for new forms of manipulation where adversaries attempt to “game” the predictive model itself.
