Probabilistic Loss Boundary

The abrupt liquidation of highly leveraged positions during market dislocations underscores the requirement for a rigorous Portfolio VaR Calculation. This metric functions as a statistical threshold: the loss that a portfolio is not expected to exceed, at a chosen confidence level, over a defined time horizon. A one-day 99% VaR of $1 million, for example, means that losses should exceed $1 million on roughly one day in a hundred. In decentralized finance, where volatility is the primary state rather than an anomaly, this calculation provides the mathematical floor for capital adequacy and solvency.

It transforms raw market data into a single, actionable number that represents the catastrophic edge of a trading strategy.

VaR represents the loss that a portfolio is not expected to exceed over a specific time horizon at a predefined confidence level.

Systemic stability in crypto derivatives markets relies on the ability of participants to quantify their aggregate exposure across multiple underlyings. Unlike isolated position monitoring, a Portfolio VaR Calculation accounts for the correlations between assets, identifying when a diversified set of holdings might simultaneously fail. This systemic perspective is vital for automated margin engines that must execute liquidations before a participant’s equity becomes negative.

The calculation acts as a governor on leverage, ensuring that the velocity of market moves does not outpace the protocol’s ability to remain solvent. The architecture of a decentralized option vault or a perpetual futures exchange depends on these boundaries to set collateral requirements. Without a robust Portfolio VaR Calculation, protocols risk either over-collateralization, which stifles capital efficiency, or under-collateralization, which invites insolvency during tail events.

By anchoring risk management in probabilistic outcomes, the system moves away from arbitrary leverage limits toward a model-driven environment where risk is priced and managed with precision.

Historical Genesis

The transition from the “4:15 Report” at J.P. Morgan in the 1990s to the 24/7 liquidity of digital assets represents a massive shift in risk management speed. Traditional finance developed these metrics to provide executives with a daily snapshot of exposure, yet the crypto environment demands continuous, real-time updates. The Portfolio VaR Calculation emerged in the digital asset space as a response to the limitations of simple delta-based limits, which failed to account for the rapid correlation spikes observed during the 2020 and 2021 market cycles.

- Risk managers demanded a single metric to synthesize aggregate market exposure across disparate blockchains.

- The shift from asset-specific limits to aggregate capital requirements necessitated a more sophisticated statistical tool.

- The Basel Accords established the regulatory precedent for standardized risk reporting that crypto protocols now emulate.

- Automated liquidators required a mathematical trigger to maintain protocol health during flash crashes.

Price moves in crypto markets exceed four standard deviations far more often than a normal distribution allows, making Gaussian models inherently dangerous.

Early implementations of Portfolio VaR Calculation in crypto were often rudimentary, relying on simple historical lookbacks that ignored the unique “fat-tail” distribution of token returns. As the market matured, the introduction of sophisticated option Greeks and cross-margin systems forced a transition toward more complex methodologies. This development was driven by the realization that in an adversarial, code-governed environment, the inability to accurately predict loss thresholds leads to immediate and irreversible capital depletion.

Mathematical Logic

The mathematical foundation of a Portfolio VaR Calculation typically rests on three primary methodologies: the Variance-Covariance method, Historical Simulation, and Monte Carlo Simulation.

The Variance-Covariance approach assumes that asset returns follow a multivariate normal distribution, allowing for a closed-form solution that is computationally efficient. However, this method often underestimates risk in crypto because it fails to capture leptokurtosis, the tendency for extreme events to occur more frequently than a normal distribution predicts. Historical Simulation avoids distributional assumptions by using actual past price movements to project potential future losses, though it is limited by the quality and relevance of the lookback period.
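The closed-form calculation described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a production risk engine: the function name `parametric_var`, the position sizes, the daily volatilities, and the 0.8 correlation are all hypothetical, and returns are assumed to be zero-mean and jointly normal.

```python
from math import sqrt
from statistics import NormalDist

def parametric_var(values, vols, corr, confidence=0.99, horizon_days=1):
    """Variance-covariance VaR from position values, daily vols, and a correlation matrix."""
    n = len(values)
    # Portfolio variance in currency terms: sum over i, j of v_i * v_j * s_i * s_j * rho_ij
    variance = sum(
        values[i] * values[j] * vols[i] * vols[j] * corr[i][j]
        for i in range(n)
        for j in range(n)
    )
    z = NormalDist().inv_cdf(confidence)      # about 2.33 at 99% confidence
    return z * sqrt(variance * horizon_days)  # square-root-of-time scaling (an assumption)

# Hypothetical two-asset book: USD exposure to BTC and ETH.
values = [600_000.0, 400_000.0]
vols = [0.04, 0.05]                           # assumed daily return volatilities
corr = [[1.0, 0.8], [0.8, 1.0]]               # assumed correlation matrix

var_99 = parametric_var(values, vols, corr)
undiversified = parametric_var(values, vols, [[1.0, 1.0], [1.0, 1.0]])
print(f"1-day 99% VaR: {var_99:,.0f} USD (undiversified: {undiversified:,.0f} USD)")
```

Because the normal quantile understates fat tails, a figure produced this way is best read as a lower bound on the risk rather than a conservative estimate.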

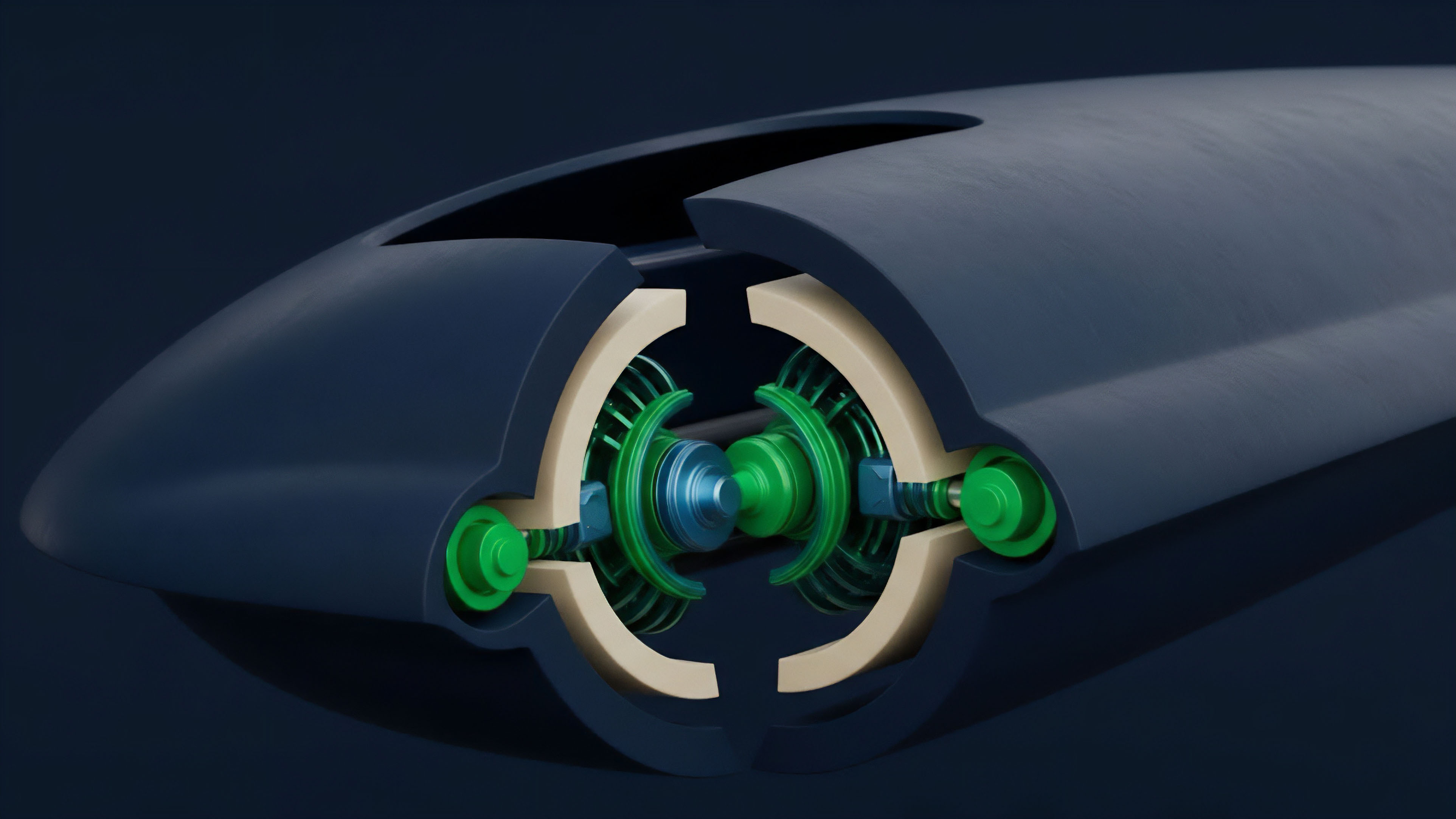

Monte Carlo Simulation represents the most robust, albeit computationally expensive, method, generating thousands of random price paths based on specified volatility and correlation parameters to map the entire distribution of potential outcomes. For an options portfolio, the Portfolio VaR Calculation must also incorporate non-linear risk factors, specifically Gamma, which describes how the portfolio's Delta changes as the underlying price moves, and Vega, which measures its sensitivity to shifts in implied volatility. The Delta-Gamma approximation is often used to provide a more accurate loss estimate than a simple linear model, accounting for the curvature of the option's price relative to the underlying asset.
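To make the Delta-Gamma idea concrete, the sketch below simulates one-day price changes and revalues a position with the second-order expansion P&L ≈ Delta × ΔS + ½ × Gamma × ΔS². The function name `delta_gamma_mc_var`, the Greeks, the spot price, and the volatility are hypothetical, and the shocks are drawn from a normal distribution purely for brevity; a fat-tailed generator could be substituted.

```python
import random

def delta_gamma_mc_var(delta, gamma, spot, daily_vol,
                       confidence=0.99, n_paths=20_000, seed=7):
    """Monte Carlo VaR of a position revalued with the Delta-Gamma approximation."""
    rng = random.Random(seed)                          # fixed seed for reproducibility
    losses = []
    for _ in range(n_paths):
        d_s = spot * daily_vol * rng.gauss(0.0, 1.0)   # simulated one-day price change
        pnl = delta * d_s + 0.5 * gamma * d_s ** 2     # second-order Taylor expansion
        losses.append(-pnl)                            # store losses as positive numbers
    losses.sort()
    index = min(int(confidence * n_paths), n_paths - 1)
    return losses[index]                               # empirical loss quantile

# Hypothetical position: long 5 deltas, with and without short gamma.
linear_var = delta_gamma_mc_var(delta=5.0, gamma=0.0, spot=60_000.0, daily_vol=0.04)
short_gamma_var = delta_gamma_mc_var(delta=5.0, gamma=-0.001, spot=60_000.0, daily_vol=0.04)
print(f"linear VaR: {linear_var:,.0f}  delta-gamma VaR: {short_gamma_var:,.0f}")
```

With negative Gamma the curvature term adds to the loss on every path, so the Delta-Gamma figure comes out above the linear one; a Delta-only model would understate the risk of a short-options book.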

This complexity is necessary because the risk of an options portfolio is not static; it evolves as the underlying price approaches the strike, creating a dynamic risk profile that requires constant recalibration. In this context, the covariance matrix, which holds the variance of each asset and the covariance between every asset pair, is the most sensitive input. Correlations that look stable in calm markets tend to converge toward one during crises, erasing the diversification the model assumed, and this breakdown is a primary driver of systemic failure.

| Methodology | Data Requirement | Computation Speed | Tail Risk Capture |
|---|---|---|---|
| Variance-Covariance | Low | High | Poor |
| Historical Simulation | Medium | Medium | Moderate |
| Monte Carlo | High | Low | Excellent |

Dynamic hedging requires real-time updates to the covariance matrix to prevent catastrophic liquidation cascades.

Methodological Execution

Implementing a Portfolio VaR Calculation in a production environment involves a sequence of rigorous steps to ensure the output is both accurate and timely. The process begins with data ingestion, where real-time price feeds and volatility surfaces are pulled from decentralized oracles or centralized exchange APIs. This data is then used to calculate the Greeks for every position in the portfolio, providing a granular view of sensitivity.

- Aggregate the delta-equivalent exposure of the entire portfolio to establish a baseline sensitivity.

- Apply the current covariance matrix to the position vectors to determine the joint distribution of returns.

- Calculate the loss threshold at the ninety-ninth percentile of that distribution, the level that losses should exceed only about one period in a hundred.

- Backtest the model against historical data to verify that the number of “VaR breaks” matches the expected frequency.
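The percentile and backtesting steps above can be sketched with a rolling Historical Simulation. Everything here is illustrative: the helper names `historical_var` and `count_var_breaks`, the 250-day window, and the synthetic normally distributed P&L series are assumptions, and a real backtest would use the portfolio's actual daily P&L.

```python
import random

def historical_var(pnl_history, confidence=0.99):
    """Loss at the given percentile of an empirical P&L sample (positive = loss)."""
    losses = sorted(-p for p in pnl_history)
    index = min(int(confidence * len(losses)), len(losses) - 1)
    return losses[index]

def count_var_breaks(pnl_series, window=250, confidence=0.99):
    """Roll the VaR estimate forward and count days whose loss exceeded it."""
    breaks = 0
    tested = 0
    for t in range(window, len(pnl_series)):
        var_t = historical_var(pnl_series[t - window:t], confidence)  # uses only past data
        tested += 1
        if -pnl_series[t] > var_t:
            breaks += 1
    return breaks, tested

rng = random.Random(42)
pnl = [rng.gauss(0.0, 10_000.0) for _ in range(1_250)]   # synthetic daily P&L in USD
breaks, tested = count_var_breaks(pnl)
print(f"{breaks} VaR breaks in {tested} days; about {0.01 * tested:.0f} expected at 99%")
```

A break count far above the expected frequency suggests the model understates risk, while a count far below it suggests collateral requirements are tighter than the confidence level implies.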

The sensitivity of the Portfolio VaR Calculation to its inputs is a primary concern for the systems architect. Small changes in the correlation between Bitcoin and Ethereum can lead to significant shifts in the required collateral for a cross-asset portfolio. To manage this, risk engines often apply a “stress-test” overlay, where the calculation is rerun under extreme scenarios, such as a 50% market drop or a 300% spike in implied volatility.
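The correlation sensitivity and the stress-test overlay can be illustrated by rerunning a simple two-asset parametric VaR under alternative inputs. The position sizes, volatilities, scenario names, and the helper `two_asset_var` are hypothetical. The "+300%" scenario is modeled as a fourfold multiplier on return volatility, which is a simplification of an implied-volatility shock.

```python
from math import sqrt
from statistics import NormalDist

Z_99 = NormalDist().inv_cdf(0.99)

def two_asset_var(v_btc, v_eth, vol_btc, vol_eth, rho):
    """99% one-day parametric VaR for two positions; negative values are shorts."""
    a = v_btc * vol_btc
    b = v_eth * vol_eth
    return Z_99 * sqrt(a * a + b * b + 2.0 * rho * a * b)

# Hypothetical hedged book: long 600k USD of BTC against short 400k USD of ETH.
book = dict(v_btc=600_000.0, v_eth=-400_000.0)
vol_btc, vol_eth = 0.04, 0.05                     # assumed daily volatilities

scenarios = {
    "base (rho = 0.9)": dict(rho=0.9, vol_mult=1.0),
    "correlation slips to 0.8": dict(rho=0.8, vol_mult=1.0),
    "hedge decouples (rho = 0.0)": dict(rho=0.0, vol_mult=1.0),
    "volatility +300%": dict(rho=0.9, vol_mult=4.0),
}

results = {
    name: two_asset_var(book["v_btc"], book["v_eth"],
                        vol_btc * s["vol_mult"], vol_eth * s["vol_mult"], s["rho"])
    for name, s in scenarios.items()
}
for name, value in results.items():
    print(f"{name:30s} VaR = {value:>10,.0f} USD")
```

In this hedged book a drop in correlation from 0.9 to 0.8 raises VaR by roughly a third, because the short leg offsets less of the long leg; an engine sizing collateral from the base figure alone would be caught short.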

| Risk Factor | Impact on VaR | Mitigation Strategy |
|---|---|---|
| Delta | Linear Price Sensitivity | Spot Hedging |
| Gamma | Non-linear Acceleration | Option Rebalancing |
| Vega | Volatility Sensitivity | Calendar Spreads |
| Correlation | Diversification Decay | Cross-Asset Hedges |

Structural Metamorphosis

The shift from static, periodic risk assessments to dynamic, programmatic risk management defines the current state of Portfolio VaR Calculation. Traditional models were designed for human intervention, but the speed of decentralized markets has necessitated the automation of the entire risk loop. This metamorphosis has led to the adoption of Conditional VaR, also known as Expected Shortfall, which measures the average loss in the tail beyond the VaR threshold.

This provides a more comprehensive view of the “worst-case” scenario, addressing the primary criticism that standard VaR ignores the magnitude of losses once the threshold is breached. The failure of several major protocols during recent volatility events can be traced back to flawed Portfolio VaR Calculation logic that assumed liquidity would remain constant. In reality, liquidity vanishes exactly when the model predicts the highest risk, creating a feedback loop where liquidations drive prices lower, further increasing the VaR and triggering more liquidations.
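The difference between VaR and Expected Shortfall is easy to see on an empirical sample. The sketch below is a minimal illustration: the function name `var_and_es` and the synthetic fat-tailed P&L (a mix of calm and turbulent days) are assumptions, not data from any protocol.

```python
import random

def var_and_es(pnl_samples, confidence=0.99):
    """Return (VaR, Expected Shortfall) from a sample of P&L outcomes."""
    losses = sorted(-p for p in pnl_samples)          # positive numbers are losses
    index = min(int(confidence * len(losses)), len(losses) - 1)
    var = losses[index]                               # threshold loss at the quantile
    tail = losses[index:]                             # outcomes at or beyond the threshold
    es = sum(tail) / len(tail)                        # average loss in the tail
    return var, es

rng = random.Random(11)
# Synthetic fat-tailed P&L: 95% calm days, 5% turbulent days with 6x the volatility.
pnl = [
    rng.gauss(0.0, 60_000.0) if rng.random() < 0.05 else rng.gauss(0.0, 10_000.0)
    for _ in range(50_000)
]
var_99, es_99 = var_and_es(pnl)
print(f"99% VaR: {var_99:,.0f} USD   99% ES: {es_99:,.0f} USD")
```

Two portfolios can share the same VaR while one has a far heavier tail; Expected Shortfall separates them because it averages over everything beyond the threshold.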

Modern systems now incorporate liquidity-adjusted VaR, which adds a penalty for large positions that cannot be exited without significant market impact. This adaptation ensures that the risk metric reflects the actual cost of closing a position in a distressed market.
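One simple way to express such a penalty is to add an estimated exit cost to the base VaR. The sketch below assumes a half-spread charge plus a square-root market-impact heuristic; the function name `liquidity_adjusted_var`, the spread, the impact coefficient, and the volume figures are hypothetical placeholders rather than calibrated values.

```python
from math import sqrt

def liquidity_adjusted_var(base_var, position_usd, daily_volume_usd,
                           half_spread=0.0005, impact_coeff=0.1, daily_vol=0.04):
    """Base VaR plus an estimated cost of exiting the position in the market."""
    size = abs(position_usd)
    participation = size / daily_volume_usd                   # share of daily volume
    impact = impact_coeff * daily_vol * sqrt(participation)   # square-root impact heuristic
    exit_cost = size * (half_spread + impact)                 # spread cost plus impact cost
    return base_var + exit_cost

# Same market, two position sizes (hypothetical numbers).
small = liquidity_adjusted_var(10_000.0, 100_000.0, 50_000_000.0)
large = liquidity_adjusted_var(1_000_000.0, 10_000_000.0, 50_000_000.0)

small_penalty = (small - 10_000.0) / 100_000.0
large_penalty = (large - 1_000_000.0) / 10_000_000.0
print(f"penalty per USD of position: small {small_penalty:.4%}, large {large_penalty:.4%}")
```

The penalty per unit of exposure grows with position size relative to traded volume, which is the behavior the liquidity adjustment is meant to capture. In a distressed market the volume input would itself shrink, widening the gap further.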

Future Risk Frontiers

The next phase of risk management will see the Portfolio VaR Calculation integrated directly into smart contract logic, enabling autonomous, self-healing financial systems. We are moving toward a world where the risk engine is not an external observer but a central component of the protocol’s state machine.

Machine learning models will likely replace static covariance matrices, using neural networks to predict correlation shifts before they occur, allowing the Portfolio VaR Calculation to become a predictive rather than a reactive tool.

Future risk systems will operate as autonomous agents executing liquidations before insolvency becomes systemic.

This evolution will also involve the integration of cross-chain data, where a Portfolio VaR Calculation can account for assets held across multiple disparate networks. As the financial stack becomes more fragmented, the ability to synthesize risk into a single metric will be the deciding factor in which protocols attract deep, institutional liquidity. The ultimate goal is a transparent, verifiable risk layer that allows for maximum capital efficiency while maintaining a quantified, auditable safety margin against systemic collapse.
