Essence

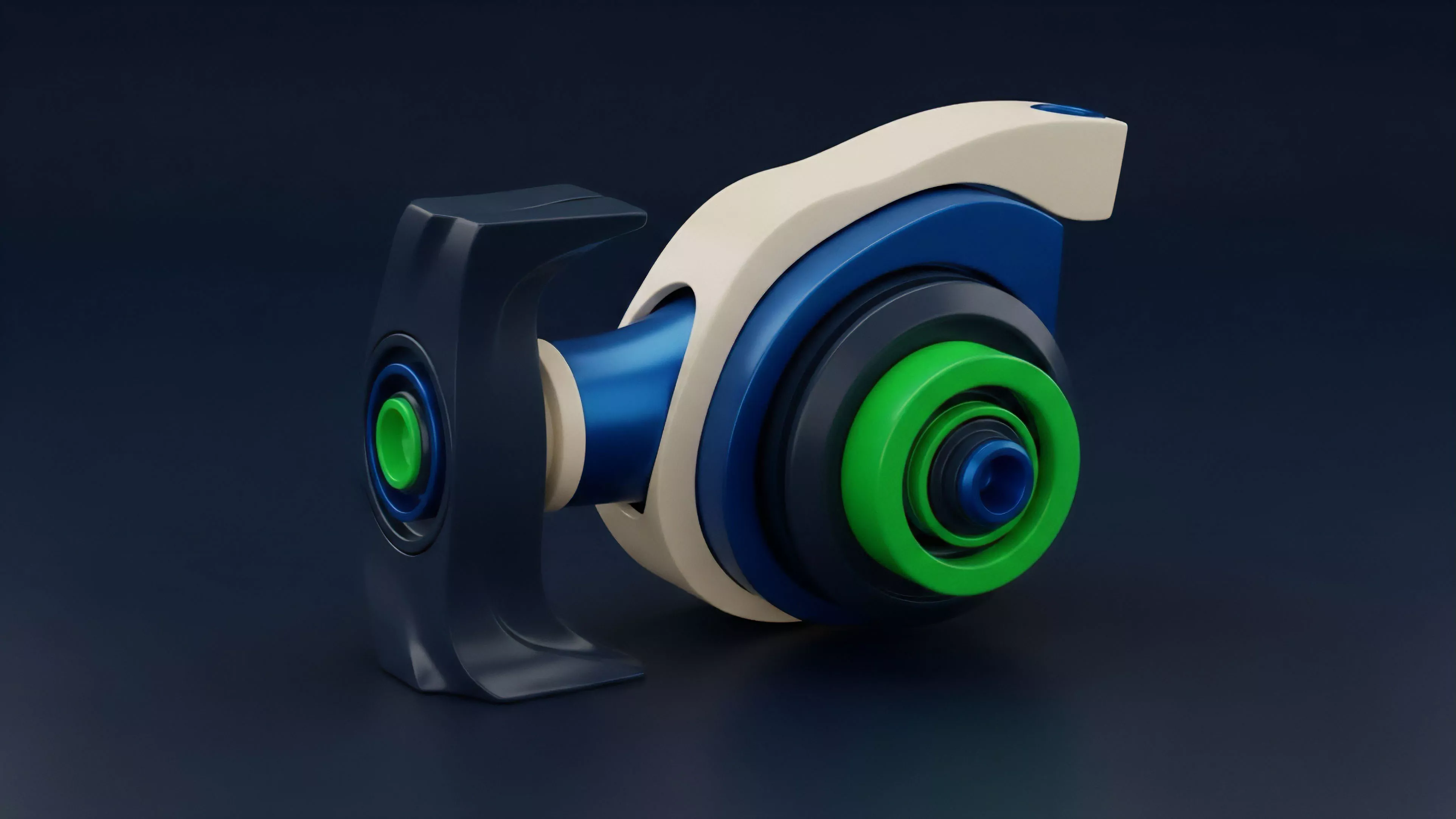

A Piecewise Non Linear Function in decentralized finance represents a segmented mathematical structure where the payoff or pricing behavior changes at predefined thresholds. Unlike global models that attempt to fit a single curve across all market conditions, these functions partition the state space into distinct intervals. Within each interval, the logic remains consistent, but the transition between segments introduces discrete shifts in risk profiles or asset valuation.

This architecture allows protocols to encode complex conditional logic directly into automated market makers or derivative margin engines.

The function partitions market state space into discrete segments to enforce specific conditional behaviors within decentralized financial protocols.

This modularity facilitates precise control over liquidity provisioning and risk mitigation. When a protocol designer defines a Piecewise Non Linear Function, they effectively map market variables (such as volatility, collateralization ratios, or order size) to specific, localized response curves. The systemic value lies in the ability to handle non-linear market events, such as flash crashes or liquidity droughts, by triggering structural changes in the governing algorithm exactly when predefined boundaries are breached.
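As a minimal sketch of this mapping, the Python below selects one local curve per interval of a single market variable. The class name `PiecewiseFunction`, the `fee_curve` example, and all numeric values are hypothetical and chosen only for illustration; they do not describe any particular protocol.

```python
from bisect import bisect_right
from typing import Callable, Sequence


class PiecewiseFunction:
    """Evaluate one of several local curves, chosen by the interval x falls in."""

    def __init__(self, knots: Sequence[float],
                 segments: Sequence[Callable[[float], float]]):
        # n knots split the real line into n + 1 intervals, one curve per interval.
        if len(segments) != len(knots) + 1:
            raise ValueError("need exactly len(knots) + 1 segments")
        if list(knots) != sorted(knots):
            raise ValueError("knots must be sorted in ascending order")
        self.knots = list(knots)
        self.segments = list(segments)

    def segment_index(self, x: float) -> int:
        # bisect_right assigns a value equal to a knot to the segment on its right.
        return bisect_right(self.knots, x)

    def __call__(self, x: float) -> float:
        return self.segments[self.segment_index(x)](x)


# Hypothetical fee curve keyed on collateralization ratio r (illustrative numbers).
fee_curve = PiecewiseFunction(
    knots=[1.2, 2.0],
    segments=[
        lambda r: 0.05 + 0.50 * (1.2 - r) ** 2,    # r < 1.2: steep quadratic penalty
        lambda r: 0.01 + 0.0625 * (2.0 - r) ** 2,  # 1.2 <= r < 2.0: gentle quadratic
        lambda r: 0.01,                            # r >= 2.0: flat floor
    ],
)
```

In this sketch the coefficients are picked so that adjacent segments agree in value at each knot, although their slopes differ at `r = 1.2`. Calling `fee_curve(1.0)` evaluates the first segment and gives roughly 0.07, while any ratio at or above 2.0 returns the flat 0.01 floor.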

Origin

The implementation of segmented logic in digital assets stems from the limitations of continuous constant product formulas.

Early automated liquidity providers struggled with capital efficiency because they applied uniform pricing across all price levels, leading to significant slippage for large trades. Developers sought to refine these mechanisms by incorporating segmented curves that approximate deeper liquidity near the current spot price while tapering exposure during extreme deviations.
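The slippage problem can be made concrete with a short calculation. The sketch below assumes a fee-free constant product pool (x * y = k); the function names and reserve sizes are hypothetical.

```python
def constant_product_out(x_reserve: float, y_reserve: float, dx: float) -> float:
    """Amount of Y received for dx of X under x * y = k, ignoring fees."""
    return y_reserve * dx / (x_reserve + dx)


def price_impact(x_reserve: float, y_reserve: float, dx: float) -> float:
    """Fractional shortfall of the executed price relative to the spot price."""
    spot = y_reserve / x_reserve
    executed = constant_product_out(x_reserve, y_reserve, dx) / dx
    return 1.0 - executed / spot


small = price_impact(1_000.0, 1_000.0, 10.0)    # trade is 1% of the X reserve
large = price_impact(1_000.0, 1_000.0, 100.0)   # trade is 10% of the X reserve
```

Under these assumptions the impact simplifies to dx / (x + dx), so the 1% trade loses about 0.99% to slippage and the 10% trade loses about 9.1%. Concentrating liquidity near spot is one way to reduce this cost for the same capital.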

- Segmented Liquidity Provisioning: Borrowed from traditional order book depth strategies to optimize capital utilization within automated protocols.

- Threshold-Based Risk Controls: Derived from circuit breaker mechanisms in legacy exchange infrastructure to manage sudden volatility spikes.

- Conditional Payoff Architectures: Inspired by barrier options where specific payout conditions activate only upon crossing predefined asset price levels.

This transition toward segmented design mirrors the evolution of algorithmic trading, where the goal shifted from simple price matching to managing the geometry of the order flow. By moving away from monolithic curves, protocol architects gained the ability to calibrate liquidity density based on observed volatility regimes, establishing a foundation for more robust decentralized derivative pricing.

Theory

The core mathematical challenge involves ensuring continuity and differentiability at the boundaries between segments to prevent arbitrage opportunities or unintended protocol drain. A Piecewise Non Linear Function requires rigorous calibration of the join points, often referred to as knots, where the transition occurs.

If the slope of the function changes abruptly without proper smoothing, the protocol risks creating localized price distortions that predatory actors can exploit.

| Component | Functional Role |
| --- | --- |
| Knot Placement | Defines the boundaries where market logic shifts |
| Local Curvature | Determines slippage and sensitivity within a segment |
| Boundary Continuity | Ensures arbitrage-free transitions between logic segments |

Quantitative analysts view these structures as localized approximations of a complex, global utility function. By utilizing Piecewise Non Linear Function logic, developers decompose a high-dimensional risk problem into manageable, lower-dimensional segments. The mathematical rigor resides in the management of the derivatives at the knots. Maintaining C1 continuity, where both the function's value and its first derivative match across adjacent segments, is the standard for preventing instantaneous jumps in marginal price that would otherwise destabilize the protocol's margin engine.
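One way to test a candidate knot is to compare values and one-sided slopes numerically. The following is a rough sketch, not a formal verification method. The helper `check_knot`, the step size `h`, and the tolerance `tol` are all assumptions chosen for illustration.

```python
from typing import Callable, Tuple


def check_knot(left: Callable[[float], float],
               right: Callable[[float], float],
               knot: float,
               h: float = 1e-6,
               tol: float = 1e-4) -> Tuple[float, float, bool, bool]:
    """Return (value_gap, slope_gap, is_c0, is_c1) for two segments at a knot."""
    value_gap = abs(left(knot) - right(knot))
    # One-sided finite differences: each segment is probed only on its own side.
    left_slope = (left(knot) - left(knot - h)) / h
    right_slope = (right(knot + h) - right(knot)) / h
    slope_gap = abs(left_slope - right_slope)
    is_c0 = value_gap <= tol
    is_c1 = is_c0 and slope_gap <= tol
    return value_gap, slope_gap, is_c0, is_c1


# Left segment x^2 joined at x = 1 to its tangent line 2x - 1: values and slopes match.
smooth = check_knot(lambda x: x ** 2, lambda x: 2 * x - 1, knot=1.0)

# Same left segment joined to 3x - 2: values match, but the slope jumps from 2 to 3.
kinked = check_knot(lambda x: x ** 2, lambda x: 3 * x - 2, knot=1.0)
```

Here `smooth` reports both C0 and C1 continuity, while `kinked` passes the value check but fails the slope check. If the curve's slope is read as a marginal price, that second case corresponds to a price jump at the knot.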

Mathematical continuity at segment boundaries is the primary defense against predatory arbitrage and localized liquidity depletion.

Market microstructure analysis reveals that these segments act as synthetic stabilizers. When market participants push prices toward a knot, the changing slope of the function alters the marginal cost of liquidity, effectively providing a feedback loop that dampens or amplifies price movement based on the intended protocol design.

Approach

Current implementation strategies focus on maximizing capital efficiency while minimizing systemic risk. Developers deploy these functions within concentrated liquidity pools and structured derivative vaults to ensure that capital is only active within the price ranges where it is most needed.

This shift necessitates real-time monitoring of volatility clusters, as the static definition of segments can become obsolete during regime shifts.

- Dynamic Knot Adjustment: Protocols now programmatically shift segment boundaries based on rolling volatility windows to maintain optimal liquidity density.

- Risk-Adjusted Margin Scaling: Margin requirements for derivative positions scale according to the segment the underlying asset currently occupies.

- Automated Rebalancing: Smart contracts detect when liquidity concentration in a specific segment falls below a threshold and redistribute assets to adjacent segments.
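The first two strategies above can be sketched together: knots are placed at volatility multiples around spot, and the margin rate is looked up by segment. Every name here (`rolling_volatility`, `knots_around`, `margin_rate`) and every number is a hypothetical illustration, and the volatility estimator is a deliberately simple assumption.

```python
from bisect import bisect_right
from statistics import pstdev
from typing import List, Sequence


def rolling_volatility(prices: Sequence[float], window: int) -> float:
    """Population standard deviation of the last `window` simple returns."""
    recent = prices[-(window + 1):]
    returns = [b / a - 1.0 for a, b in zip(recent, recent[1:])]
    return pstdev(returns)


def knots_around(spot: float, vol: float,
                 multiples: Sequence[float] = (1.0, 2.0)) -> List[float]:
    """Place symmetric knots at spot * (1 -/+ m * vol) for each multiple m."""
    lower = [spot * (1.0 - m * vol) for m in reversed(multiples)]
    upper = [spot * (1.0 + m * vol) for m in multiples]
    return lower + upper  # ascending as long as vol > 0 and multiples ascend


def margin_rate(price: float, knots: Sequence[float],
                rates: Sequence[float]) -> float:
    """Look up the margin rate of the segment that contains `price`."""
    return rates[bisect_right(knots, price)]


# Four knots give five segments; margin rises toward the outer segments.
rates = [0.25, 0.15, 0.10, 0.15, 0.25]

prices = [100.0, 102.0, 99.0, 101.0, 100.0]
vol = rolling_volatility(prices, window=4)
live_knots = knots_around(prices[-1], vol)
current_rate = margin_rate(prices[-1], live_knots, rates)  # spot sits in the middle band
```

Recomputing `live_knots` on each new price observation widens the bands when volatility rises and narrows them when it falls. A real deployment would also need to decide how often to update and how to handle positions that change segment purely because the knots moved.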

My assessment of current market participants indicates a growing reliance on these segmented models to navigate high-volatility environments. The challenge remains the computational cost of updating these functions on-chain, leading many protocols to adopt off-chain calculation paths that verify results via zero-knowledge proofs. This hybrid approach balances the need for high-frequency adjustments with the requirement for trustless settlement.

Evolution

The transition from primitive constant product models to sophisticated, multi-segmented architectures marks a maturation of decentralized market design.

Early iterations relied on rigid, hard-coded segments that could not adapt to rapid shifts in market structure. Modern iterations utilize modular, upgradable contract designs that allow governance to update the parameters of the Piecewise Non Linear Function in response to changing systemic conditions. The development trajectory moves toward fully autonomous, machine-learning-informed segment adjustment.

Instead of relying on manual governance votes to change the knots of a function, protocols now integrate oracles that feed real-time market data into the function’s parameters. This removes the latency of human intervention, allowing the protocol to react to flash crashes as quickly as oracle updates and block confirmation times permit, rather than on the timescale of a governance vote.

Autonomous segment adjustment represents the current frontier in protocol design, replacing static logic with real-time market responsiveness.

One might observe that the shift toward these complex geometries mirrors the transition from Newtonian mechanics to quantum field theory; we are moving from simple, deterministic price paths to probabilistic, state-dependent curves. This evolution is driven by the necessity of survival in an adversarial environment where any inefficiency in the pricing function is identified and extracted by automated agents within seconds.

Horizon

Future developments will likely focus on the integration of cross-protocol segmented functions, where the liquidity density of one platform influences the pricing logic of another. This interconnectedness could create a unified, decentralized derivative landscape where Piecewise Non Linear Function parameters are shared across protocols to optimize global capital efficiency.

The ultimate goal is a self-optimizing market structure that requires minimal intervention to remain liquid and stable.

| Development Stage | Expected Impact |
| --- | --- |
| Cross-Protocol Calibration | Increased liquidity efficiency across the entire stack |
| Predictive Knot Shifting | Anticipatory liquidity deployment before volatility spikes |
| Hardware-Accelerated Computation | Reduced latency in complex segment calculation |

The potential for systemic risk remains high, particularly if multiple protocols synchronize their segment transitions simultaneously, leading to correlated liquidity withdrawal. The next generation of designers must account for this inter-protocol contagion, potentially building in staggered or randomized segment updates to break the correlation. The path forward demands a synthesis of advanced quantitative modeling and robust, adversarial-aware smart contract architecture.
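The idea of staggered or randomized updates can be illustrated with a small scheduling sketch. It assumes each protocol adds a bounded pseudo-random offset to a shared update cadence. The function `jittered_update_block`, its parameters, and the protocol names are hypothetical. The seeded generator only makes the example reproducible; a production design would need a randomness source suited to an adversarial on-chain setting.

```python
import random


def jittered_update_block(base_block: int, interval: int, max_jitter: int,
                          protocol_id: str, epoch: int) -> int:
    """Scheduled block for an epoch plus a per-protocol offset in [0, max_jitter]."""
    # Seeding with protocol and epoch keeps the sketch deterministic and repeatable.
    rng = random.Random(f"{protocol_id}:{epoch}")
    offset = rng.randrange(max_jitter + 1)
    return base_block + epoch * interval + offset


# Three hypothetical protocols sharing a 100-block cadence with up to 20 blocks of jitter.
schedule = {
    name: jittered_update_block(1_000_000, 100, 20, name, epoch=3)
    for name in ("protocol-a", "protocol-b", "protocol-c")
}
```

Each protocol still updates once per epoch, but the updates are spread across a 21-block window instead of landing in the same block. Offsets can still coincide by chance, so jitter reduces rather than eliminates synchronized transitions.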