Essence

Parameter optimization is the systematic calibration of the variables that govern a financial model's behavior. Within decentralized option markets, this means adjusting inputs such as implied volatility surfaces, skew coefficients, and decay functions so that theoretical prices align with observed order flow. The objective is to minimize the discrepancy between model valuations and real-time execution costs, so that liquidity providers can keep their risk exposure efficient.

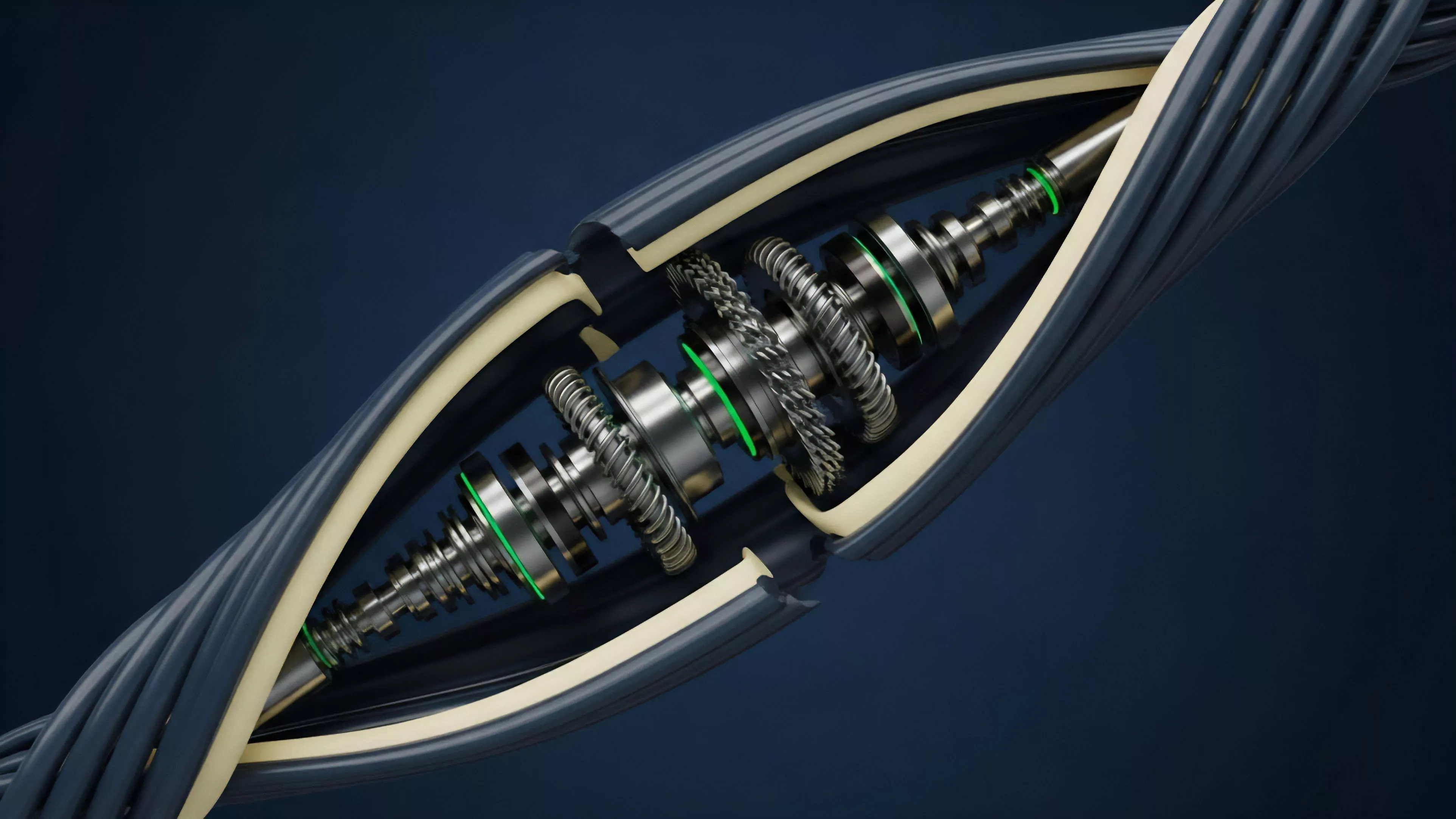

Parameter optimization functions as the mechanical bridge between abstract pricing models and the chaotic reality of decentralized liquidity provision.

This practice addresses the inherent limitations of standard models like Black-Scholes when applied to assets exhibiting high tail risk and discontinuous price action. By treating model parameters as dynamic rather than static, market makers improve their ability to quote tighter spreads and manage inventory risk across fragmented on-chain venues. The system gains stability when these parameters react proportionally to shifts in underlying market microstructure.

Origin

The necessity for these techniques stems from the early adaptation of traditional equity derivatives models to the idiosyncratic volatility profiles of digital assets.

Early decentralized finance protocols utilized static parameterization, which frequently led to adverse selection and catastrophic depletion of liquidity pools during periods of market stress. Practitioners recognized that the rapid evolution of crypto-native liquidity cycles required a departure from fixed-input assumptions.

Historical failures in early decentralized margin engines underscore the lethal danger of relying on outdated volatility assumptions.

This shift mirrors the historical transition from static floor-based trading to algorithmic high-frequency market making in traditional finance. As on-chain order books matured, the focus moved toward automated adjustment mechanisms that could interpret fee structures, collateralization ratios, and time-decay components in real time. The architecture evolved from rigid, governance-set variables toward autonomous, data-driven feedback loops that reflect current market conditions.

Theory

Optimization theory in this domain relies on the interaction between risk-neutral pricing frameworks and stochastic processes.

Market makers define a loss function, often based on the difference between the model-predicted price and the actual execution price, and then apply gradient-based or heuristic methods to update model inputs. The primary challenge is that sensitivities such as delta, gamma, and vega respond non-linearly to those inputs, and the effect is hardest to manage in environments where liquidity is scarce.
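The loss-minimization loop described above can be sketched in a few lines. This is a minimal illustration, not a production calibrator: it assumes a single flat volatility, European calls priced with Black-Scholes, and a Gauss-Newton update as one possible gradient-based method. The names `bs_call`, `bs_vega`, and `calibrate_sigma`, and the clamp bounds, are hypothetical choices made for the example.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def norm_pdf(x: float) -> float:
    """Standard normal density."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def _d1(spot: float, strike: float, expiry: float, rate: float, sigma: float) -> float:
    vol_sqrt_t = sigma * math.sqrt(expiry)
    return (math.log(spot / strike) + (rate + 0.5 * sigma**2) * expiry) / vol_sqrt_t


def bs_call(spot: float, strike: float, expiry: float, rate: float, sigma: float) -> float:
    """Black-Scholes price of a European call (expiry in years)."""
    d1 = _d1(spot, strike, expiry, rate, sigma)
    d2 = d1 - sigma * math.sqrt(expiry)
    return spot * norm_cdf(d1) - strike * math.exp(-rate * expiry) * norm_cdf(d2)


def bs_vega(spot: float, strike: float, expiry: float, rate: float, sigma: float) -> float:
    """Derivative of the call price with respect to sigma."""
    return spot * norm_pdf(_d1(spot, strike, expiry, rate, sigma)) * math.sqrt(expiry)


def calibrate_sigma(quotes, spot: float, rate: float, sigma: float = 0.5, iterations: int = 20) -> float:
    """Fit one volatility to (strike, expiry, observed_price) quotes.

    Each iteration takes a Gauss-Newton step on the sum of squared
    pricing errors, then clamps sigma to an assumed range [0.01, 5.0].
    """
    for _ in range(iterations):
        numerator = 0.0
        denominator = 0.0
        for strike, expiry, observed in quotes:
            residual = bs_call(spot, strike, expiry, rate, sigma) - observed
            vega = bs_vega(spot, strike, expiry, rate, sigma)
            numerator += residual * vega
            denominator += vega * vega
        if denominator == 0.0:
            break  # no sensitivity left to exploit; keep the current estimate
        sigma = min(5.0, max(0.01, sigma - numerator / denominator))
    return sigma
```

In practice the loss would be built from execution prices rather than synthetic quotes, and a full surface has many parameters rather than one, but the structure (residual, sensitivity, update) stays the same.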

- Implied Volatility Surface maps each strike price and expiration date to the volatility implied by option prices, summarizing the market's expectation of future price variance.

- Skew and Kurtosis Adjustment accounts for the higher probability of extreme price movements in crypto assets compared to traditional equities.

- Liquidity Decay Functions model the reduction in execution quality as trade sizes increase relative to the available pool depth.
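As a rough illustration of the last item, a liquidity decay function can be written as a price impact that grows with trade size relative to pool depth. The power-law form below, and the parameters `coeff` and `exponent`, are assumptions chosen for the sketch; they are exactly the kind of inputs a calibration process would tune, not values taken from any specific protocol.

```python
def execution_price(mid: float, size: float, depth: float,
                    coeff: float = 0.1, exponent: float = 0.5) -> float:
    """Estimated buy-side execution price under an assumed power-law impact.

    impact = coeff * (size / depth) ** exponent, applied as a
    proportional markup on the mid price.
    """
    if depth <= 0:
        raise ValueError("depth must be positive")
    if size < 0:
        raise ValueError("size must be non-negative")
    impact = coeff * (size / depth) ** exponent
    return mid * (1.0 + impact)
```

With the default parameters, a trade of a quarter of the pool depth is quoted 5% above mid, and execution quality keeps degrading as the ratio grows.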

This theoretical framework assumes that market participants act strategically to extract value from mispriced options. Therefore, the optimization process must incorporate adversarial considerations, ensuring that the model does not become predictable or susceptible to front-running. The math governing these adjustments often draws from control theory, treating the market as a system that requires constant damping to prevent runaway oscillations.

| Parameter Type | Systemic Function | Risk Sensitivity |
| --- | --- | --- |
| Volatility Surface | Pricing Accuracy | High |
| Collateral Haircut | Solvency Protection | Extreme |
| Fee Decay | Incentive Alignment | Moderate |

Approach

Modern practitioners use automated agents to recalibrate pricing engines continuously. These agents ingest real-time trade data and order book depth to update the underlying distribution assumptions. Rather than relying on human intervention, these systems employ machine learning models to identify regime shifts, such as sudden transitions from low to high volatility, and adjust parameters accordingly.
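To make the idea of a regime shift concrete, the sketch below flags a transition when recent realized volatility jumps relative to a preceding baseline window. It is deliberately a simple threshold heuristic standing in for the machine learning models mentioned above, not an example of one; the function name `detect_regime`, the window lengths, and the threshold are all assumptions.

```python
import math
from statistics import pstdev


def log_returns(prices):
    """Log returns between consecutive prices."""
    return [math.log(b / a) for a, b in zip(prices, prices[1:])]


def detect_regime(prices, short: int = 5, long: int = 20, threshold: float = 2.0) -> str:
    """Return "high" when recent volatility exceeds `threshold` times the baseline.

    The baseline window covers the `long` returns that precede the most
    recent `short` returns, so a fresh shock does not inflate its own baseline.
    """
    returns = log_returns(prices)
    if len(returns) < short + long:
        raise ValueError("not enough price history")
    recent_vol = pstdev(returns[-short:])
    baseline_vol = pstdev(returns[-(short + long):-short])
    if baseline_vol == 0.0:
        return "high" if recent_vol > 0.0 else "low"
    return "high" if recent_vol / baseline_vol > threshold else "low"
```

A calibration agent could use such a flag to switch between parameter sets, for example widening quoted spreads when the regime turns "high".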

Automated calibration agents mitigate the latency inherent in manual governance updates during rapid market corrections.

Execution of these techniques requires a deep understanding of the underlying blockchain’s block time and finality constraints. Optimization parameters must account for the delay between price discovery and trade settlement, as this window exposes the liquidity provider to significant delta drift. Systems are architected to prioritize capital efficiency while maintaining a safety buffer against extreme tail events, often through dynamic re-balancing of margin requirements.
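The exposure created by the settlement window can be approximated with a back-of-the-envelope calculation. The sketch below assumes a driftless diffusion for the underlying and a first-order approximation in which delta changes by gamma times the spot move; `delta_drift_bound`, the confidence multiplier `z`, and the example inputs are hypothetical.

```python
import math

SECONDS_PER_YEAR = 365 * 24 * 3600


def delta_drift_bound(spot: float, gamma: float, annual_vol: float,
                      block_time_s: float, confirmations: int, z: float = 3.0) -> float:
    """Approximate worst-case change in delta while a trade awaits finality.

    The spot move is taken as z standard deviations over the settlement
    window (block time multiplied by required confirmations).
    """
    window_s = block_time_s * confirmations
    spot_move = z * annual_vol * spot * math.sqrt(window_s / SECONDS_PER_YEAR)
    return abs(gamma) * spot_move
```

Because the bound scales with the square root of the window, quadrupling the number of confirmations doubles the estimated drift, which gives one simple way to size the safety buffer per chain.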

- Data Ingestion processes raw trade logs and order book snapshots from decentralized exchanges to feed the optimization engine.

- Model Validation involves backtesting adjusted parameters against historical crash data to ensure survival under stress.

- Parameter Smoothing prevents excessive volatility in quoted prices by applying filters to incoming data signals.
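Parameter smoothing, the last step above, can be as simple as an exponential moving average with a cap on how far a parameter may move per update. This is one common filter among many, shown here as an assumption rather than the method any particular protocol uses; `alpha` and `max_step` are hypothetical tuning values.

```python
def smooth_parameter(previous: float, observed: float,
                     alpha: float = 0.2, max_step: float = 0.05) -> float:
    """Move `previous` toward `observed` with damping and a per-update cap.

    alpha sets how much of the gap is closed per update; max_step limits
    the absolute change so a single outlier cannot swing quoted prices.
    """
    proposed_step = alpha * (observed - previous)
    capped_step = max(-max_step, min(max_step, proposed_step))
    return previous + capped_step
```

For example, with a current volatility input of 0.50, a new reading of 0.60 moves the parameter to 0.52, while an outlier reading of 2.00 moves it only to 0.55.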

Evolution

The transition from manual governance-driven parameter changes to autonomous, protocol-level optimization marks a significant advancement in decentralized derivatives. Early versions suffered from the inability to respond to high-frequency shocks, whereas current iterations leverage oracle data and on-chain analytics to predict volatility clusters before they fully manifest. This progress has shifted the burden of risk management from the individual participant to the protocol’s mathematical architecture.

The evolution of these systems reflects a broader trend toward algorithmic self-regulation in decentralized markets. One might observe that this mirrors the biological homeostasis found in complex organisms, where internal conditions remain stable despite external environmental changes. As these protocols continue to iterate, the reliance on human-set parameters will likely decrease, replaced by fully endogenous systems that derive their optimization logic from the collective behavior of participants.

Horizon

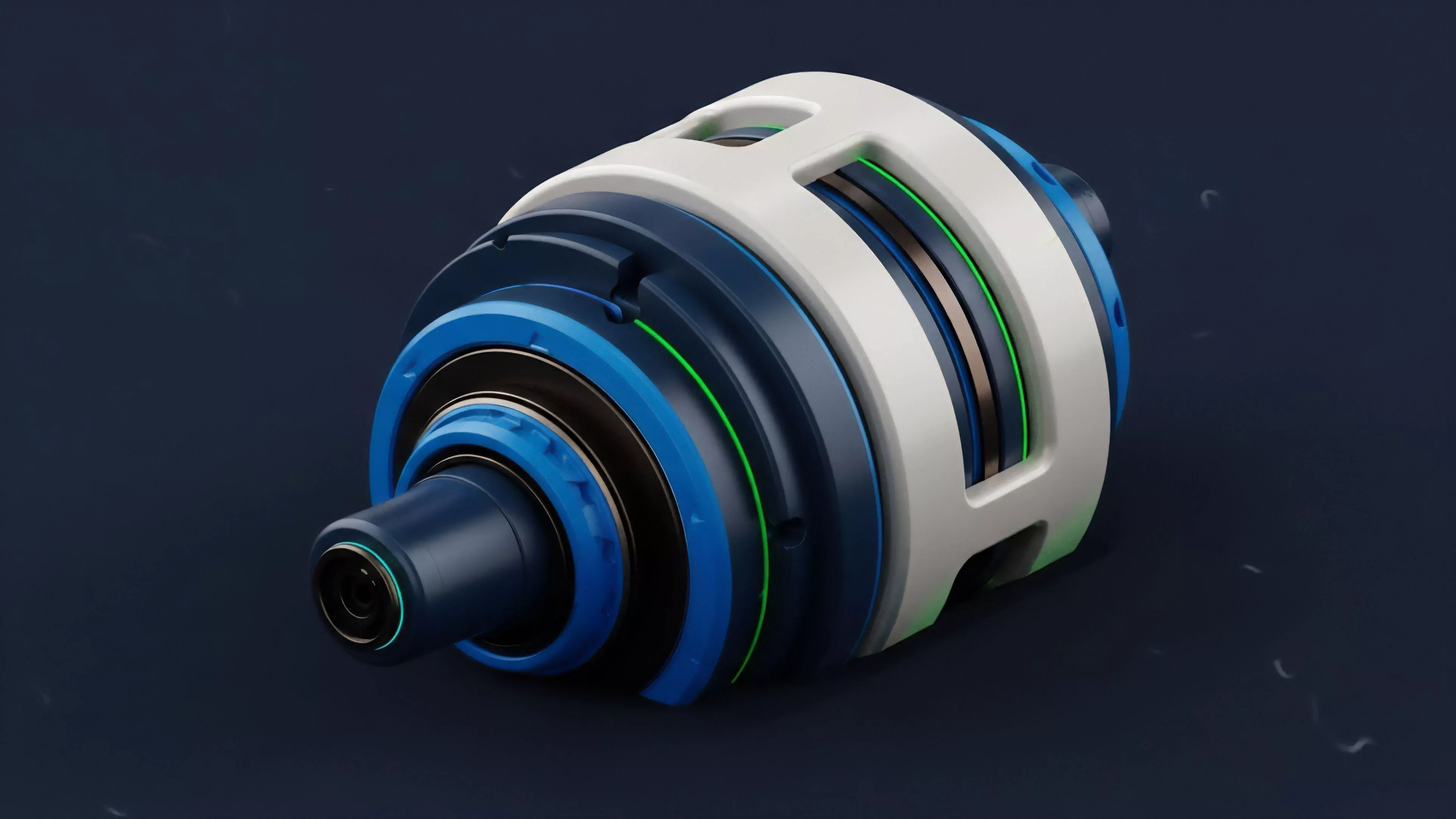

Future developments will focus on cross-protocol parameter synchronization, where liquidity providers share optimization signals to create a unified view of global volatility.

This integration will reduce the fragmentation of pricing across different chains and platforms. Furthermore, the incorporation of advanced cryptographic techniques like zero-knowledge proofs will allow protocols to optimize parameters based on private, off-chain order flow without compromising user anonymity.

| Future Focus | Primary Objective | Technological Enabler |
| --- | --- | --- |
| Cross-Chain Sync | Unified Pricing | Interoperability Protocols |
| Privacy-Preserving Data | Secure Optimization | Zero-Knowledge Proofs |
| Predictive Regimes | Proactive Hedging | Reinforcement Learning |

The ultimate goal involves the creation of self-healing financial systems capable of maintaining liquidity during systemic crises without external bailouts. These systems will likely become the foundational layer for all decentralized risk transfer, providing the stability required for institutional adoption of crypto-native derivative instruments.