Essence

Parallel Transaction Processing represents the architectural departure from strictly linear, sequential block validation toward concurrent execution models. By decoupling the consensus layer from the state transition logic, protocols enable multiple, non-conflicting transactions to be processed simultaneously. This shift directly addresses the primary bottleneck in decentralized ledger performance, where throughput is traditionally constrained by the requirement for global ordering.

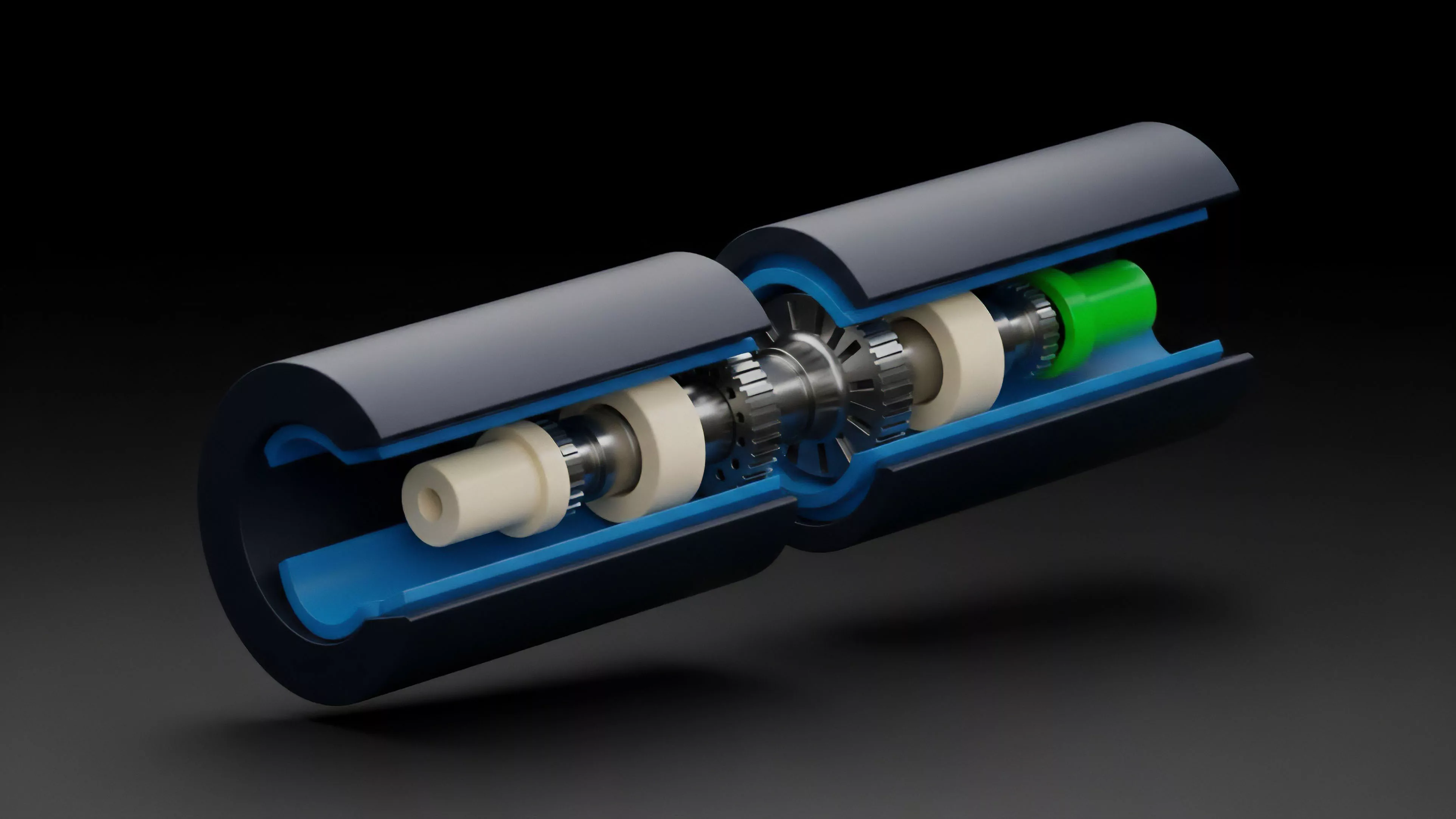

Parallel transaction processing shifts network capacity from serial bottlenecking to concurrent execution architectures.

The fundamental utility of this mechanism lies in its ability to maximize hardware utilization and throughput without sacrificing decentralization. By employing sharding or multi-threaded virtual machines, these systems categorize transactions into independent sets that operate on distinct state partitions. This minimizes contention and significantly reduces the latency experienced by users in high-frequency trading environments or complex decentralized finance applications.

Origin

The genesis of Parallel Transaction Processing stems from the limitations observed in early-generation smart contract platforms.

Developers recognized that the single-threaded nature of the Ethereum Virtual Machine created a global state contention problem. As network activity grew, the sequential processing of transactions forced users into a competitive bidding war for block space, driving fees to unsustainable levels.

- Sequential Execution: The traditional model where transactions are processed one after another, ensuring a deterministic global state but imposing a strict upper limit on throughput.

- State Contention: The phenomenon where multiple transactions attempt to access or modify the same smart contract storage simultaneously, necessitating serial processing to prevent data corruption.

- Scalability Trilemma: The foundational tension between security, decentralization, and scalability that necessitated research into concurrent processing techniques.

Research into database management systems provided the blueprint for these advancements. Engineers adapted concepts from multi-version concurrency control and optimistic execution to the blockchain environment. This evolution was driven by the urgent need to support institutional-grade volume, where latency is synonymous with capital inefficiency and slippage.

Theory

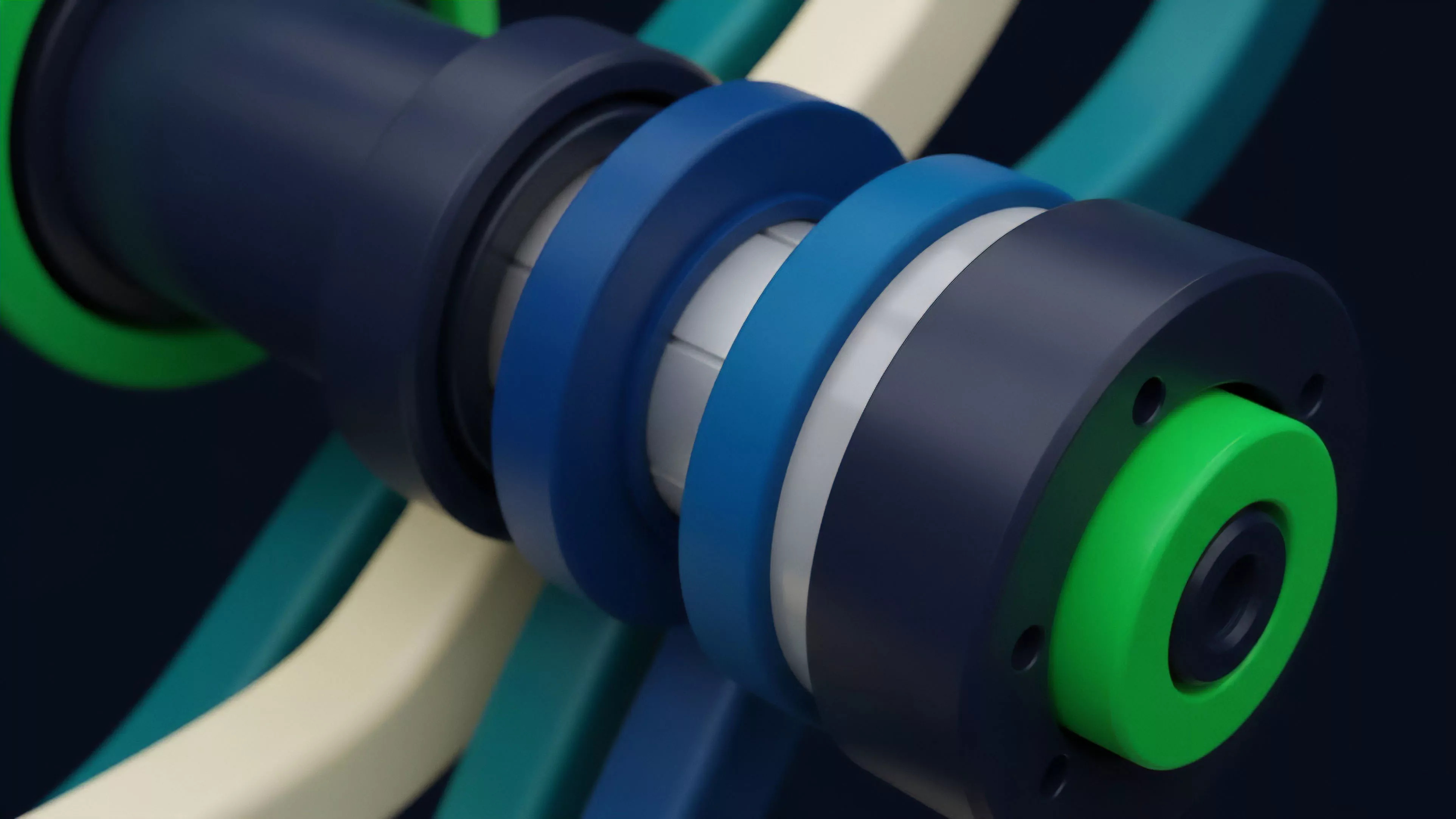

The theoretical framework of Parallel Transaction Processing relies on identifying dependency graphs within transaction batches.

If two transactions do not share common state inputs or outputs, they are commutative and can be executed in any order, or simultaneously, without violating the integrity of the ledger.
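The independence test above can be sketched in a few lines. This is a minimal illustration, not any particular protocol's rule: the `Tx` type, the `conflicts` helper, and the account names are hypothetical. It also relaxes the statement slightly by assuming that two transactions which only read the same key still commute.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tx:
    """Hypothetical transaction described only by the state keys it touches."""
    name: str
    reads: frozenset = frozenset()
    writes: frozenset = frozenset()


def conflicts(a: Tx, b: Tx) -> bool:
    """Return True if a and b cannot be reordered or run simultaneously."""
    return bool(
        a.writes & b.writes    # both write the same key
        or a.writes & b.reads  # a writes a key that b reads
        or a.reads & b.writes  # b writes a key that a reads
    )


t1 = Tx("alice_pays_bob", frozenset({"alice"}), frozenset({"alice", "bob"}))
t2 = Tx("carol_pays_dave", frozenset({"carol"}), frozenset({"carol", "dave"}))
t3 = Tx("bob_pays_erin", frozenset({"bob"}), frozenset({"bob", "erin"}))

print(conflicts(t1, t2))  # False: disjoint state, safe to run in parallel
print(conflicts(t1, t3))  # True: both touch "bob", order matters
```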

| Architecture | Mechanism | Primary Benefit |
| --- | --- | --- |
| Optimistic Concurrency | Speculative execution assuming no conflicts | Reduced latency in low-contention environments |
| State Partitioning | Sharding state into independent buckets | Horizontal scalability of transaction throughput |
| Multi-Threaded VM | Concurrent access to state via locks | Efficient utilization of multi-core hardware |

The key quantity here is the probability of state collision. As the cost of conflict resolution approaches the time saved through parallelism, returns diminish; once it exceeds that saving, parallel execution is a net loss. Consequently, protocol designers must implement sophisticated scheduling algorithms to group transactions efficiently.
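To make the trade-off concrete, here is a toy model under loudly stated assumptions: every transaction touches exactly one key chosen uniformly at random (a birthday-problem setting), and each conflicting pair costs one fixed retry. The function names and the cost model are illustrative, not taken from any real protocol.

```python
from math import comb, prod


def collision_probability(n_txs: int, n_keys: int) -> float:
    """Chance that at least two of n_txs transactions hit the same key."""
    if n_txs > n_keys:
        return 1.0
    return 1.0 - prod(1 - i / n_keys for i in range(n_txs))


def expected_conflicting_pairs(n_txs: int, n_keys: int) -> float:
    """Expected number of transaction pairs that share a key."""
    return comb(n_txs, 2) / n_keys


def parallel_pays_off(n_txs, n_keys, workers, exec_cost, retry_cost) -> bool:
    """Compare serial time with parallel time plus expected retry overhead."""
    serial = n_txs * exec_cost
    retries = expected_conflicting_pairs(n_txs, n_keys) * retry_cost
    parallel = n_txs * exec_cost / workers + retries
    return parallel < serial


# Low contention: 100 transactions spread over 10,000 keys.
print(parallel_pays_off(100, 10_000, workers=8, exec_cost=1.0, retry_cost=2.0))
# High contention: the same 100 transactions fighting over 10 keys.
print(parallel_pays_off(100, 10, workers=8, exec_cost=1.0, retry_cost=2.0))
```

In this model the first call favors parallel execution and the second does not, because the expected retry overhead grows with the number of colliding pairs while the parallel saving stays fixed.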

Concurrent execution relies on the identification of non-overlapping state dependencies to ensure deterministic outcomes.

The system operates under the constant pressure of adversarial agents attempting to trigger re-orgs or state inconsistencies. Security hinges on the robustness of the locking mechanisms that govern state access. If these mechanisms fail, the protocol risks double-spending or unauthorized state transitions, rendering the performance gains irrelevant.

Approach

Current implementations of Parallel Transaction Processing utilize various strategies to achieve high-performance settlement.

Many modern Layer 1 and Layer 2 solutions employ an account-based model where transactions specify their accessed state keys upfront. This allows the protocol to pre-sort and batch these transactions into parallel threads.
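A rough sketch of how declared access lists could drive batching follows. The `Tx` tuple, the `schedule` function, and the greedy placement rule are assumptions chosen for illustration; production schedulers are considerably more involved.

```python
from typing import NamedTuple


class Tx(NamedTuple):
    name: str
    reads: frozenset   # keys the transaction declares it will read
    writes: frozenset  # keys the transaction declares it will write


def conflicts(a: Tx, b: Tx) -> bool:
    # Any overlap that involves at least one write forces an ordering.
    return bool(a.writes & (b.reads | b.writes) or a.reads & b.writes)


def schedule(txs: list[Tx]) -> list[list[Tx]]:
    """Greedily place each transaction right after the last batch it conflicts with."""
    batches: list[list[Tx]] = []
    for tx in txs:
        slot = 0
        for i, batch in enumerate(batches):
            if any(conflicts(tx, other) for other in batch):
                slot = i + 1  # must run after this batch to keep block order
        if slot == len(batches):
            batches.append([])
        batches[slot].append(tx)
    return batches


def transfer(name: str, src: str, dst: str) -> Tx:
    # A transfer both reads and writes the two balances it touches.
    return Tx(name, frozenset({src, dst}), frozenset({src, dst}))


pending = [
    transfer("t1", "alice", "bob"),
    transfer("t2", "carol", "dave"),
    transfer("t3", "bob", "erin"),    # touches "bob", so it must follow t1
    transfer("t4", "frank", "gina"),
]

for i, batch in enumerate(schedule(pending)):
    print(i, [tx.name for tx in batch])
```

Transactions within one batch are pairwise conflict-free, so each batch could be handed to a pool of worker threads, with batches themselves run one after another.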

- Dependency Mapping: Protocols scan incoming transaction pools to create directed acyclic graphs that identify which operations can safely run in parallel.

- Conflict Detection: Real-time validation checks ensure that parallel threads do not overwrite shared state, rolling back any transactions that violate these constraints.

- Hardware Acceleration: Advanced virtual machines utilize specialized instruction sets to handle concurrent state reads and writes at the CPU level.
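The conflict detection and rollback step can be illustrated with a simplified optimistic executor. Everything here is a hedged sketch: `RecordingView`, `execute`, `run_optimistic`, and `transfer` are hypothetical names, balances default to zero, and a Python thread pool merely stands in for a virtual machine's worker threads.

```python
from concurrent.futures import ThreadPoolExecutor


class RecordingView:
    """Wraps a state snapshot and records which keys a transaction reads and writes."""

    def __init__(self, base):
        self.base, self.reads, self.writes = base, set(), {}

    def get(self, key):
        if key in self.writes:       # read-your-own-write, not an external read
            return self.writes[key]
        self.reads.add(key)
        return self.base.get(key, 0)

    def set(self, key, value):
        self.writes[key] = value


def execute(tx, base):
    view = RecordingView(base)
    tx(view)
    return view.reads, view.writes


def transfer(src, dst, amount):
    def tx(view):
        balance = view.get(src)
        if balance >= amount:
            view.set(src, balance - amount)
            view.set(dst, view.get(dst) + amount)
    tx.__name__ = f"{src}->{dst}"
    return tx


def run_optimistic(state, txs):
    snapshot = dict(state)
    # Phase 1: speculate every transaction against the same snapshot, in parallel.
    with ThreadPoolExecutor() as pool:
        speculative = list(pool.map(lambda tx: execute(tx, snapshot), txs))
    # Phase 2: validate in block order; discard and re-run any stale result.
    committed, dirty, retried = dict(state), set(), []
    for tx, (reads, writes) in zip(txs, speculative):
        if reads & dirty:            # read a key an earlier transaction changed
            reads, writes = execute(tx, committed)
            retried.append(tx.__name__)
        committed.update(writes)
        dirty |= writes.keys()
    return committed, retried


state = {"alice": 10, "carol": 5}
txs = [transfer("alice", "bob", 10), transfer("carol", "dave", 5), transfer("bob", "erin", 10)]
final, retried = run_optimistic(state, txs)
print(final)    # same balances as running the three transfers one by one
print(retried)  # only the transfer that depended on an earlier write
```

Because validation happens in block order and stale results are re-executed against committed state, the outcome matches serial execution; only the speculative phase benefits from parallelism.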

This is where the pricing model becomes truly elegant, and dangerous if ignored. When traders execute strategies across these parallelized environments, the underlying execution speed can fundamentally alter the effectiveness of arbitrage. High-frequency bots now optimize for the specific concurrency models of each chain, creating a new dimension of technical competition.

Evolution

The path from early, monolithic chains to current high-throughput architectures has been defined by a relentless drive for efficiency.

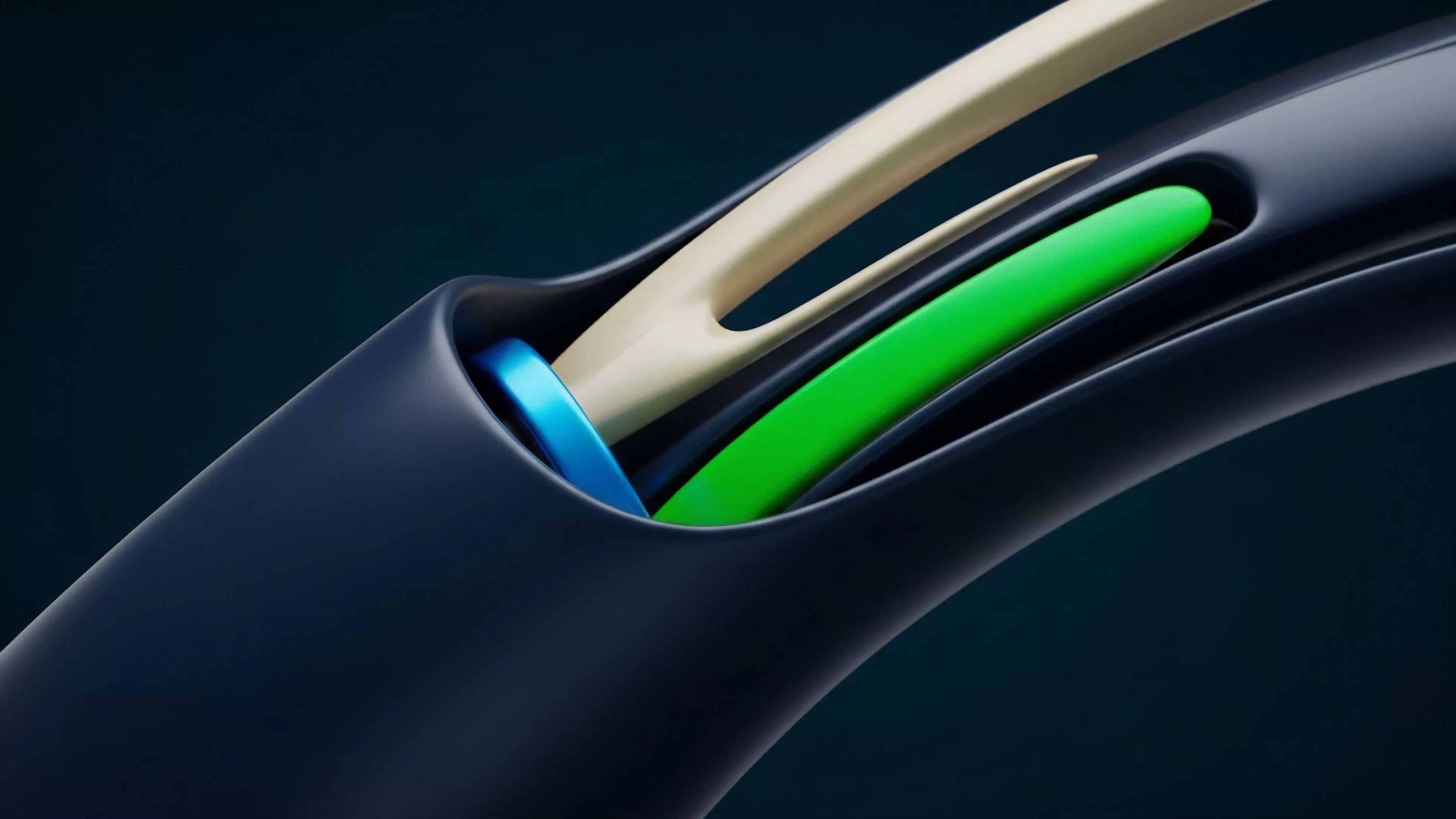

Initially, developers attempted to optimize sequential execution through more efficient code compilers. This reached a point of diminishing returns, shifting focus toward structural redesigns. The industry moved from simple serial processing to modular designs where the execution environment is decoupled from the data availability layer.

This allows for specialized, high-performance execution shards that handle massive volumes of transactions while maintaining the security of the root chain. The industry appears to be moving toward a reality where the bottleneck is no longer protocol execution but network propagation delay, which is ultimately bounded by the speed of light.

Modular execution layers enable horizontal scaling by offloading computation from the primary consensus mechanism.

This transition has not been without cost. Increased architectural complexity introduces new vectors for smart contract security risks. As we distribute the state, the surface area for exploits grows, necessitating more rigorous formal verification of the concurrency control logic.

Horizon

Future developments in Parallel Transaction Processing will likely focus on asynchronous execution and zero-knowledge proof integration.

As we move toward a multi-chain future, the ability to maintain consistent state across parallelized, interoperable environments will be the definitive challenge.

| Future Trend | Implication for Derivatives | Risk Factor |
| --- | --- | --- |
| Asynchronous State | Faster cross-chain margin settlement | Increased complexity in liquidation logic |
| ZK-Parallelization | Privacy-preserving concurrent execution | High computational overhead for proofs |
| Hardware-Level Sharding | Native speed for high-frequency trading | Centralization of high-performance nodes |

The ultimate goal is a system that scales linearly with the number of participating nodes. This would permit the migration of entire traditional financial exchanges onto decentralized rails, providing transparency and settlement finality at speeds previously reserved for centralized dark pools. Our inability to respect the inherent trade-offs in these designs is the critical flaw in our current models. How will the interaction between hardware-level concurrency and cryptographic proofs redefine the limits of decentralized market efficiency?