Essence

Out of Sample Testing functions as the definitive mechanism for validating the predictive integrity of financial models by subjecting them to data entirely absent from the initial calibration phase. It serves as the primary firewall against the tendency of quantitative strategies to overfit historical noise, ensuring that a system captures genuine market signals rather than mere statistical artifacts of past volatility.

Out of Sample Testing acts as a rigorous barrier against deploying models whose apparent edge exists only in historical data.

The core utility lies in simulating real-world uncertainty: a strategy must prove its robustness under conditions it has never encountered. In crypto derivatives, where liquidity profiles and volatility regimes shift rapidly, this testing protocol becomes essential for distinguishing genuine edge from transient curve-fitting.

Origin

The conceptual framework for Out of Sample Testing emerged from the broader discipline of econometrics, specifically designed to address the inherent limitations of regression analysis. Statisticians recognized that a model achieving a perfect fit on training data often failed spectacularly when applied to subsequent observations. This discrepancy, known as the overfitting problem, necessitated a methodology that split available data into distinct segments: one for model training and one for independent verification.
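The sketch below shows this split in minimal form. It assumes the observations are already in time order. The function name and the optional `gap` parameter are illustrative and do not come from any particular library. Unlike the random shuffling common in cross-sectional statistics, a financial time series must be cut chronologically. Otherwise information from the future leaks into the training segment.

```python
from typing import Sequence, Tuple, TypeVar

T = TypeVar("T")


def chronological_split(
    data: Sequence[T], train_frac: float = 0.7, gap: int = 0
) -> Tuple[Sequence[T], Sequence[T]]:
    """Split time-ordered observations into in-sample and out-of-sample segments.

    The data is never shuffled: every out-of-sample observation comes strictly
    after every in-sample one. `gap` (hypothetical parameter) discards that many
    observations at the boundary so overlapping features or labels cannot leak
    from the training segment into the verification segment.
    """
    if not 0.0 < train_frac < 1.0:
        raise ValueError("train_frac must be in (0, 1)")
    if gap < 0:
        raise ValueError("gap must be non-negative")
    cut = int(len(data) * train_frac)
    train = data[:cut]           # used for calibration only
    test = data[cut + gap:]      # held back for independent verification
    if len(train) == 0 or len(test) == 0:
        raise ValueError("split leaves an empty segment")
    return train, test


train, test = chronological_split(list(range(100)), train_frac=0.8, gap=5)
```

With 100 observations, `train_frac=0.8` and `gap=5`, the first 80 points form the in-sample segment. The out-of-sample segment starts at index 85 and holds the remaining 15 points.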

In the evolution of algorithmic trading, this approach moved from academic statistics into the bedrock of quantitative finance. Practitioners realized that market environments are non-stationary: patterns that appear statistically significant during a specific bull cycle often dissolve as market microstructure changes. Consequently, sequestering data became the industry standard for risk management. It provides a standardized way to evaluate whether a trading strategy has genuine predictive power or is merely a byproduct of arbitrary parameter selection.

Theory

The mathematical foundation of Out of Sample Testing rests on the bias-variance tradeoff. As model complexity increases to capture more historical nuance, bias falls. Bias is the systematic error of an overly simple model. Variance rises at the same time. Variance is the sensitivity of the fitted model to small fluctuations in the training data, and as it grows the model begins to fit noise. In-sample error therefore keeps falling as complexity grows, while error on unseen data eventually rises. By reserving a portion of the dataset, architects can observe whether the model's performance remains consistent across different temporal slices of market activity.
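The tradeoff can be illustrated with a toy experiment. This is a sketch under stated assumptions, not a market model:

- A sine wave stands in for the genuine signal.
- Gaussian noise with standard deviation 0.3 stands in for market noise.
- Polynomial degree stands in for model complexity.

Each model is fit on 12 training points and scored on 200 fresh points drawn from the same process.

```python
import numpy as np
from numpy.polynomial import Polynomial


def true_signal(x: np.ndarray) -> np.ndarray:
    """Stand-in for a genuine, repeatable pattern."""
    return np.sin(2 * np.pi * x)


def in_vs_out_of_sample_mse(degrees, n_train=12, n_test=200, noise=0.3, seed=0):
    """Fit polynomials of increasing degree and score each on independent data.

    Returns {degree: (in_sample_mse, out_of_sample_mse)}.
    """
    rng = np.random.default_rng(seed)
    x_train = np.sort(rng.uniform(0.0, 1.0, n_train))
    y_train = true_signal(x_train) + rng.normal(0.0, noise, n_train)
    x_test = rng.uniform(0.0, 1.0, n_test)
    y_test = true_signal(x_test) + rng.normal(0.0, noise, n_test)

    results = {}
    for d in degrees:
        # Least-squares fit; Polynomial.fit rescales x internally for numerical stability.
        model = Polynomial.fit(x_train, y_train, d)
        mse_in = float(np.mean((model(x_train) - y_train) ** 2))
        mse_out = float(np.mean((model(x_test) - y_test) ** 2))
        results[d] = (mse_in, mse_out)
    return results


for degree, (mse_in, mse_out) in in_vs_out_of_sample_mse([1, 3, 9]).items():
    print(f"degree {degree}: in-sample MSE {mse_in:.3f}, out-of-sample MSE {mse_out:.3f}")
```

In-sample error cannot increase with degree here, because each polynomial family contains the smaller ones. With only 12 training points, the degree-9 fit should still score far worse on the fresh data than on the data it was fit to. That gap is exactly what out-of-sample testing is designed to expose.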

| Testing Phase | Data Purpose | Primary Objective |
|---|---|---|
| In Sample | Parameter Optimization | Maximize explanatory power |
| Out of Sample | Performance Validation | Verify predictive robustness |

This process is frequently structured using a Walk Forward Optimization technique. Rather than a static split, the model undergoes continuous testing where the training window slides forward in time. This methodology ensures that the strategy remains adaptive to changing market physics while maintaining the discipline of independent verification.

The logic follows a cyclical path:

- Training establishes the initial set of rules or coefficients based on a defined temporal window.

- Validation tests those rules against the subsequent period, generating a performance metric independent of the training data.

- Adjustment allows for the incorporation of new data, resetting the training window to capture the most recent market regime.
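The training, validation and adjustment cycle can be sketched as a rolling-window loop. This is a minimal illustration:

- `walk_forward_windows`, `walk_forward_scores` and the `fit`/`score` callables are hypothetical names.
- A fixed-length rolling window is only one design choice. An expanding window that keeps all past data is a common alternative.

```python
from typing import Callable, Iterator, List, Optional, Sequence, Tuple


def walk_forward_windows(
    n: int, train_size: int, test_size: int, step: Optional[int] = None
) -> Iterator[Tuple[range, range]]:
    """Yield (train, test) index ranges that roll forward through time.

    Each test window starts immediately after its training window, so every
    score is computed on observations the model did not see while fitting.
    By default the windows advance by test_size, so test windows never overlap.
    """
    step = test_size if step is None else step
    if min(train_size, test_size, step) <= 0:
        raise ValueError("window sizes and step must be positive")
    start = 0
    while start + train_size + test_size <= n:
        train = range(start, start + train_size)
        test = range(start + train_size, start + train_size + test_size)
        yield train, test
        start += step  # adjustment: roll the training window forward


def walk_forward_scores(
    series: Sequence[float],
    train_size: int,
    test_size: int,
    fit: Callable[[Sequence[float]], object],
    score: Callable[[object, Sequence[float]], float],
) -> List[float]:
    """Refit on each training window and score on the period that follows it."""
    scores = []
    for train_idx, test_idx in walk_forward_windows(len(series), train_size, test_size):
        model = fit([series[i] for i in train_idx])                 # training
        scores.append(score(model, [series[i] for i in test_idx]))  # validation
    return scores


scores = walk_forward_scores(
    [float(i) for i in range(1, 11)],
    train_size=4,
    test_size=2,
    fit=lambda xs: sum(xs) / len(xs),                           # "model" = training mean
    score=lambda m, xs: sum(abs(x - m) for x in xs) / len(xs),  # mean absolute error
)
print(scores)  # → [3.0, 3.0, 3.0]
```

In this toy example, the "model" is just the mean of each training window. Each next-period error is therefore computed strictly out of sample.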

The validity of a trading model depends entirely on its performance when applied to data that played no role in its creation.

Approach

Modern implementation of Out of Sample Testing in crypto markets requires a sophisticated understanding of protocol-specific risks. Unlike traditional equities, crypto derivatives are influenced by on-chain events, such as smart contract upgrades or sudden changes in liquidation engine dynamics, which can render historical price patterns obsolete. Therefore, the approach must account for these exogenous shocks.

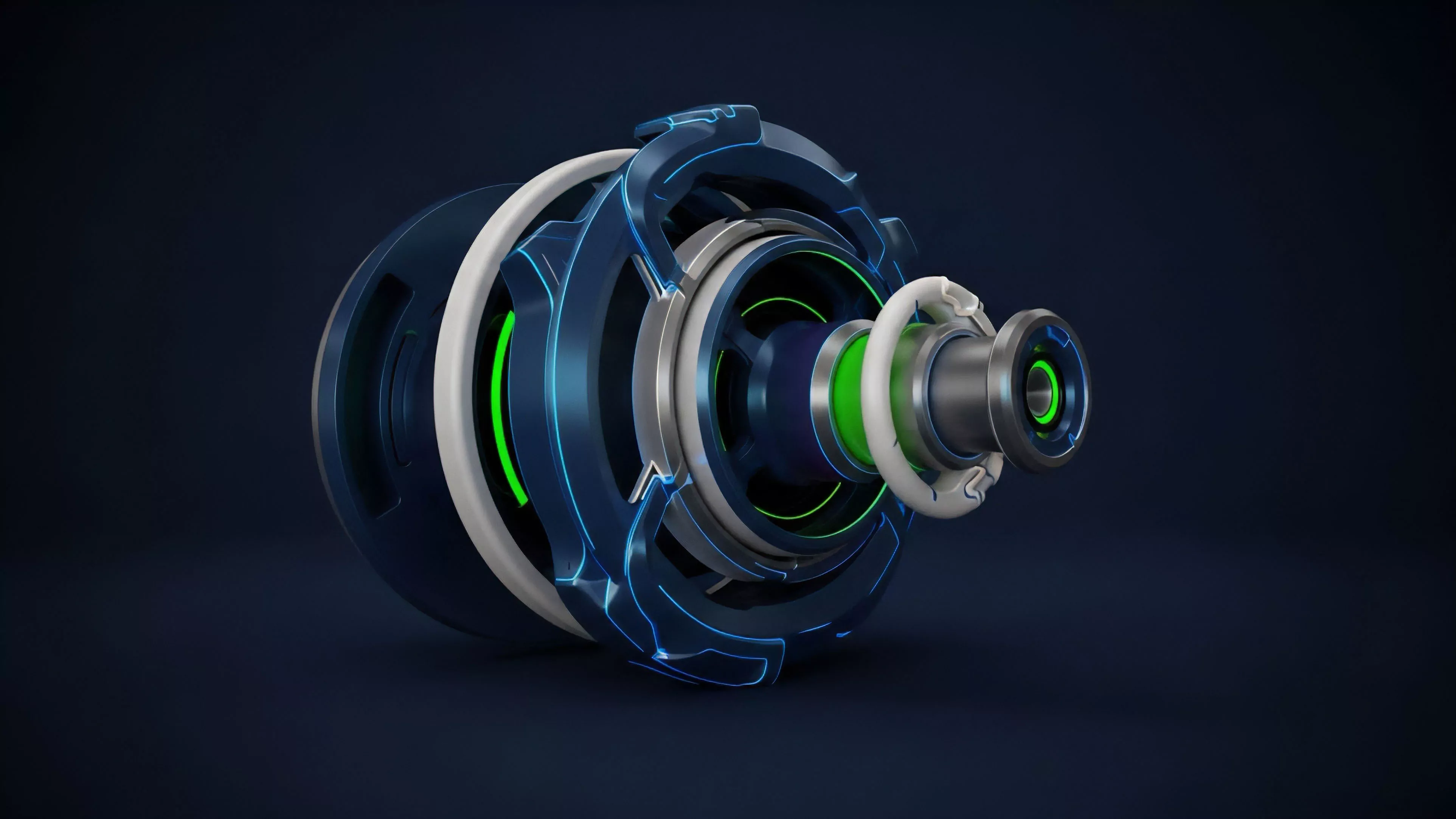

Architects now employ Monte Carlo simulations alongside traditional hold-out sets to stress-test strategies against thousands of potential future scenarios. This moves beyond simple historical backtesting by generating synthetic data paths that preserve the statistical properties of the original series while introducing random perturbations. This method effectively probes the limits of a strategy’s tolerance to extreme volatility or liquidity evaporation.
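One common way to build such synthetic paths is a moving-block bootstrap. Contiguous blocks of historical returns are resampled and stitched together, which preserves short-range dependence such as volatility clustering within each block. The sketch below makes these assumptions:

- The input is a one-dimensional array of per-period strategy returns.
- The block size and the drawdown metric are illustrative choices.

Parametric generators, such as fitted volatility models, are a common alternative.

```python
import numpy as np


def block_bootstrap_paths(returns, n_paths=1000, block_size=20, seed=None):
    """Generate synthetic return paths by resampling contiguous historical blocks.

    Dependence inside each block is preserved; treating successive blocks as
    independent is a simplifying assumption of the method.
    """
    r = np.asarray(returns, dtype=float)
    n = r.size
    if not 0 < block_size <= n:
        raise ValueError("block_size must be between 1 and len(returns)")
    rng = np.random.default_rng(seed)
    n_blocks = -(-n // block_size)  # ceiling division
    starts = rng.integers(0, n - block_size + 1, size=(n_paths, n_blocks))
    # idx[p, b, k] = start of block b in path p, offset by k
    idx = starts[:, :, None] + np.arange(block_size)[None, None, :]
    return r[idx].reshape(n_paths, -1)[:, :n]  # trim each path to original length


def max_drawdown(path_returns):
    """Largest peak-to-trough loss of compounded simple returns, as a fraction."""
    growth = np.cumprod(1.0 + np.asarray(path_returns, dtype=float), axis=-1)
    start = np.ones(growth.shape[:-1] + (1,))  # include the initial capital as a peak
    equity = np.concatenate([start, growth], axis=-1)
    peaks = np.maximum.accumulate(equity, axis=-1)
    return np.max(1.0 - equity / peaks, axis=-1)
```

A tail statistic such as `np.percentile(max_drawdown(block_bootstrap_paths(hist, 5000)), 99)` then summarizes how badly the strategy could fare across thousands of resampled histories. Here `hist` is the strategy's historical return array. This replaces reliance on the single history that actually occurred.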

| Risk Vector | Testing Methodology | Systemic Impact |
|---|---|---|
| Liquidity Shocks | Synthetic Path Generation | Evaluates slippage and execution decay |
| Consensus Failure | Scenario Stress Testing | Assesses margin engine stability |
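Scenario stress testing of this kind can be sketched for a single leveraged long position. Everything here is a simplifying assumption:

- The maintenance-margin rate is flat. Real venues typically use tiered schedules and their own mark-price rules.
- Price shocks are applied instantaneously.
- Slippage is a flat percentage standing in for a liquidity shock.
- A consensus failure is represented only by its possible price consequence: a gap move that cannot be met with fresh collateral.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Scenario:
    name: str
    price_shock: float  # relative move in mark price, e.g. -0.10 for a 10% drop
    slippage: float     # extra relative cost of unwinding into thin books


@dataclass(frozen=True)
class LongPosition:
    quantity: float
    entry_price: float
    collateral: float
    maintenance_margin_rate: float  # hypothetical flat rate


def stress_test(pos: LongPosition, scenarios: List[Scenario]) -> Dict[str, dict]:
    """Apply each scenario and report equity, liquidation status and exit value."""
    results = {}
    for s in scenarios:
        mark = pos.entry_price * (1.0 + s.price_shock)
        equity = pos.collateral + pos.quantity * (mark - pos.entry_price)
        requirement = pos.maintenance_margin_rate * pos.quantity * mark
        # Value actually realized if the position must be closed under slippage.
        exit_price = mark * (1.0 - s.slippage)
        realized = pos.collateral + pos.quantity * (exit_price - pos.entry_price)
        results[s.name] = {
            "equity": equity,
            "liquidated": equity < requirement,
            "realized_on_exit": realized,
        }
    return results


position = LongPosition(quantity=1.0, entry_price=10_000.0, collateral=1_000.0,
                        maintenance_margin_rate=0.005)
report = stress_test(position, [
    Scenario("drop_5", -0.05, 0.0),
    Scenario("drop_10", -0.10, 0.0),
    Scenario("drop_5_thin_book", -0.05, 0.02),
])
```

The example uses 1,000 of collateral backing one unit bought at 10,000 (10x leverage) and a 0.5% maintenance rate. The three scenarios play out as follows:

- A 5% drop leaves equity of 500, well above the requirement.
- A 10% drop wipes out the collateral and triggers liquidation.
- A 5% drop unwound with 2% slippage realizes only about 310.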

The integration of behavioral game theory also plays a role in contemporary testing. By modeling how adversarial agents might exploit specific order flow patterns, architects can adjust their models to survive not just random market noise, but deliberate attempts to manipulate price discovery mechanisms. This creates a defensive layer that standard statistical testing often misses.

Evolution

The methodology has transitioned from static, single-split validation to highly dynamic, continuous monitoring systems. Early quantitative efforts relied on simple splits, often leading to models that failed when market regimes shifted. The current state demands an iterative loop where Out of Sample Testing is not a terminal event, but a constant, automated background process.
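A one-sided CUSUM (Page's cumulative-sum change detector) illustrates out-of-sample testing running as a background process. It watches live returns for a sustained shortfall relative to the performance validated out of sample. This is a classical statistical stand-in, not a description of any specific production system. `expected_mean`, `slack` and `threshold` are hypothetical parameters that would need calibration, for example against the false-alarm rate on historical out-of-sample data.

```python
from typing import Iterable, Optional


def cusum_degradation_alarm(
    returns: Iterable[float],
    expected_mean: float,
    slack: float,
    threshold: float,
) -> Optional[int]:
    """One-sided CUSUM on live strategy returns.

    Accumulates how far each return falls below (expected_mean - slack); the
    statistic resets to zero whenever performance is adequate. Returns the index
    at which the cumulative shortfall first exceeds threshold, or None.
    """
    s = 0.0
    for t, r in enumerate(returns):
        s = max(0.0, s + (expected_mean - slack) - r)
        if s > threshold:
            return t
    return None


live = [0.001] * 50 + [-0.002] * 10  # healthy regime, then degradation
print(cusum_degradation_alarm(live, expected_mean=0.001, slack=0.0005, threshold=0.011))  # → 54
```

In the example, the first 50 periods match the expected return and never accumulate a shortfall. The alarm fires at index 54, the fifth period of the degraded regime.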

This evolution mirrors the shift from centralized exchanges to decentralized protocols, where transparency allows deeper inspection of order flow and participant behavior. We no longer treat the market as a black box; instead, we analyze the protocol's internal physics to inform the parameters of our tests. Mathematical rigor has increased as well. Modern architects incorporate higher-order Greeks and other non-linear risk sensitivities into their validation suites to account for the distinctive gamma and vega profiles of crypto options.
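The sketch below makes the gamma and vega point concrete. It computes Black-Scholes delta, gamma and vega for a European call. It then compares a second-order (delta-gamma-vega) P&L estimate with full repricing under a stress scenario. The assumptions are:

- A Black-Scholes setting with zero rates and no dividends.
- A payoff quoted in the same currency as the strike.
- Illustrative scenario values.

Some crypto options venues settle in the underlying coin, which changes the P&L arithmetic. In a validation run, a large gap between the estimate and full repricing signals that Greek-based risk summaries understate the position's non-linear exposure.

```python
import math


def _norm_pdf(x: float) -> float:
    """Standard normal density."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def _norm_cdf(x: float) -> float:
    """Standard normal CDF via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def _d1(spot, strike, t, vol, rate):
    return (math.log(spot / strike) + (rate + 0.5 * vol * vol) * t) / (vol * math.sqrt(t))


def bs_call(spot, strike, t, vol, rate=0.0):
    """Black-Scholes price of a European call (no dividends)."""
    d1 = _d1(spot, strike, t, vol, rate)
    d2 = d1 - vol * math.sqrt(t)
    return spot * _norm_cdf(d1) - strike * math.exp(-rate * t) * _norm_cdf(d2)


def bs_greeks(spot, strike, t, vol, rate=0.0):
    """Delta, gamma and vega (per 1.00 change in volatility) of the same call."""
    d1 = _d1(spot, strike, t, vol, rate)
    delta = _norm_cdf(d1)
    gamma = _norm_pdf(d1) / (spot * vol * math.sqrt(t))
    vega = spot * _norm_pdf(d1) * math.sqrt(t)
    return delta, gamma, vega


def second_order_pnl(delta, gamma, vega, d_spot, d_vol):
    """Taylor estimate of P&L: delta*dS + 0.5*gamma*dS^2 + vega*dVol."""
    return delta * d_spot + 0.5 * gamma * d_spot ** 2 + vega * d_vol


# Hypothetical stress: spot falls 30% while implied volatility jumps from 0.6 to 1.0.
spot, strike, t, vol = 100.0, 100.0, 0.25, 0.6
d_spot, d_vol = -30.0, 0.4
delta, gamma, vega = bs_greeks(spot, strike, t, vol)
approx = second_order_pnl(delta, gamma, vega, d_spot, d_vol)
full = bs_call(spot + d_spot, strike, t, vol + d_vol) - bs_call(spot, strike, t, vol)
print(f"delta-gamma-vega estimate: {approx:.2f}, full repricing: {full:.2f}")
```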

Dynamic validation protocols allow systems to adapt to shifting market regimes without sacrificing the necessity of independent testing.

One might observe that the shift toward automated, real-time testing is akin to the development of error-correcting codes in digital communications, where noise is not just filtered but systematically identified and mitigated through constant verification. This transition marks the maturation of crypto derivatives from experimental constructs into robust financial infrastructure capable of supporting large-scale institutional participation.

Horizon

Future iterations of Out of Sample Testing will increasingly rely on machine learning frameworks that can autonomously identify regime shifts and adjust testing parameters in real time. As decentralized markets grow more complex, the ability to foresee failure points will become a decisive competitive advantage. The focus is shifting toward predictive maintenance of trading systems, where the testing engine itself learns to anticipate when a model is beginning to degrade due to structural changes in the underlying asset or protocol.

This path leads to a future where systemic risk is managed through continuous, transparent, and algorithmic validation. By embedding these protocols directly into the architecture of decentralized derivatives, the industry can create self-healing systems that remain resilient even under unprecedented market stress. The ultimate goal is a state of perpetual, autonomous verification that continuously re-tests strategy viability as market conditions evolve.