Essence

Structural Alignment of Liquidity Data

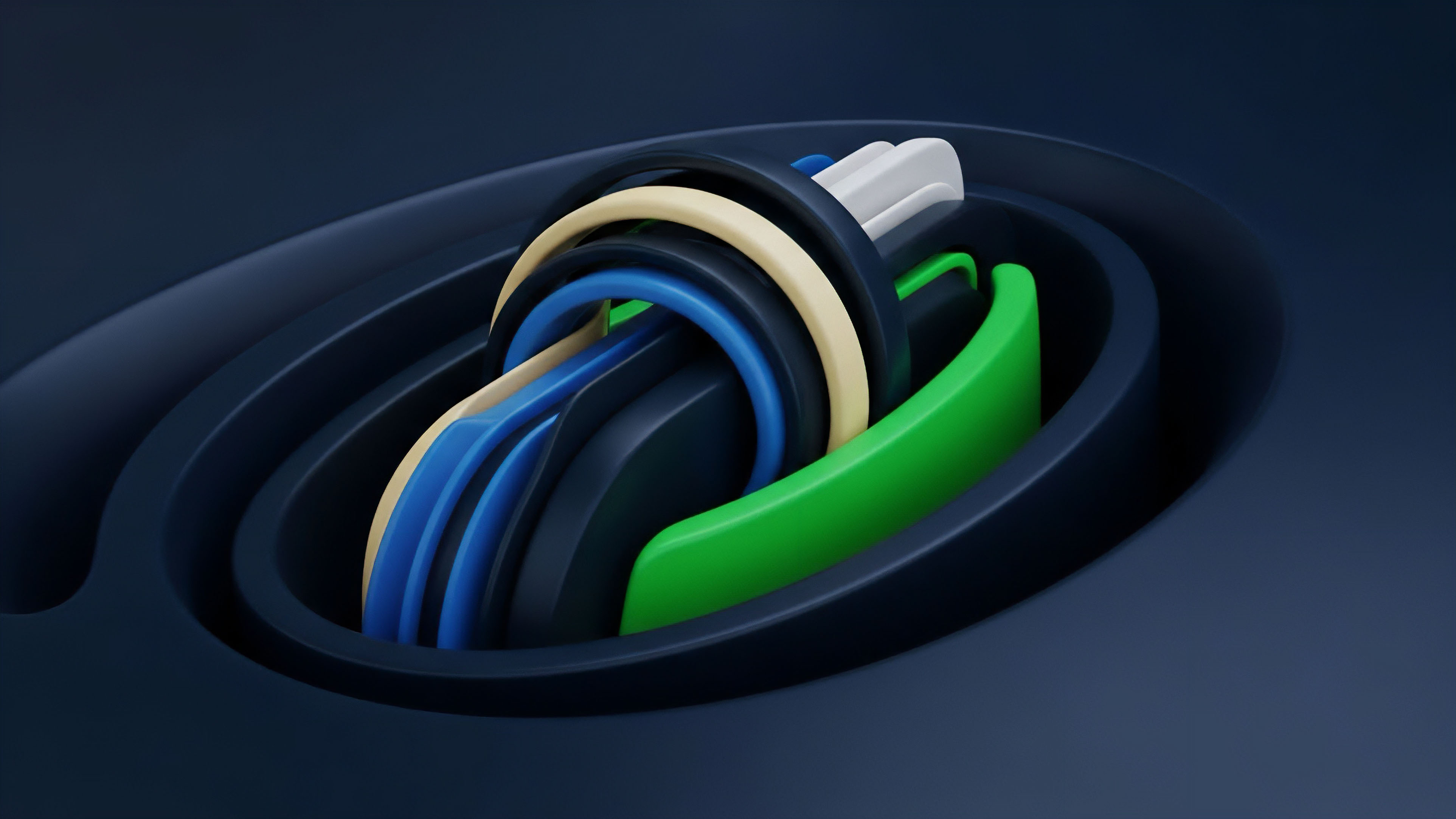

Liquid markets function through the continuous interaction of bids and asks, yet the digital asset landscape remains fragmented across dozens of venues. Order Book Normalization acts as the architectural layer that translates these heterogeneous data streams into a uniform, actionable format. This process removes the friction of disparate API schemas, varying tick sizes, and inconsistent asset naming conventions.

By establishing a single source of truth, participants can run sophisticated cross-exchange strategies without manually recalibrating them for every new liquidity source.

Order Book Normalization provides the structural basis for comparing liquidity depth and price discovery across fragmented global exchanges.

Operational Interoperability

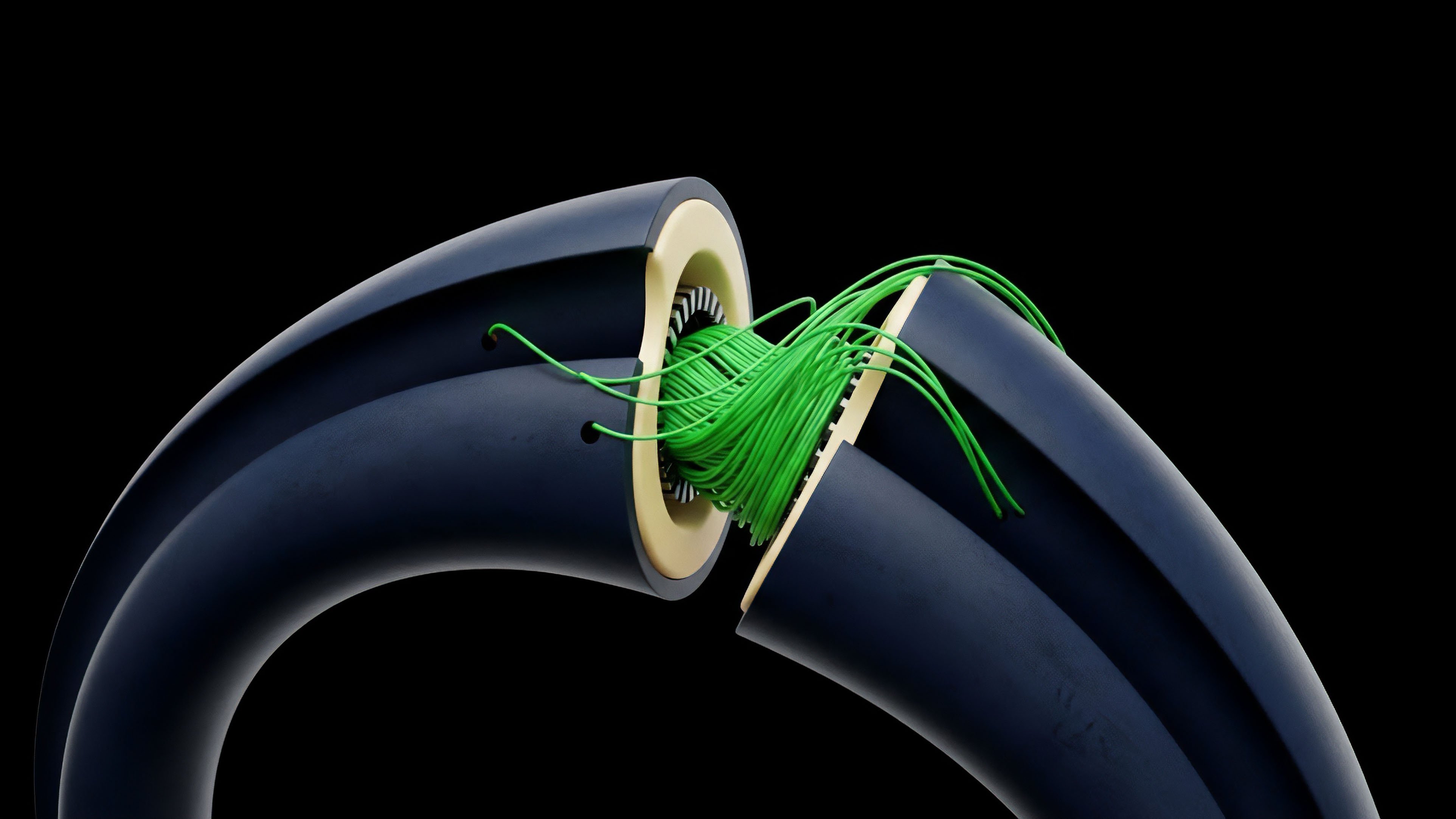

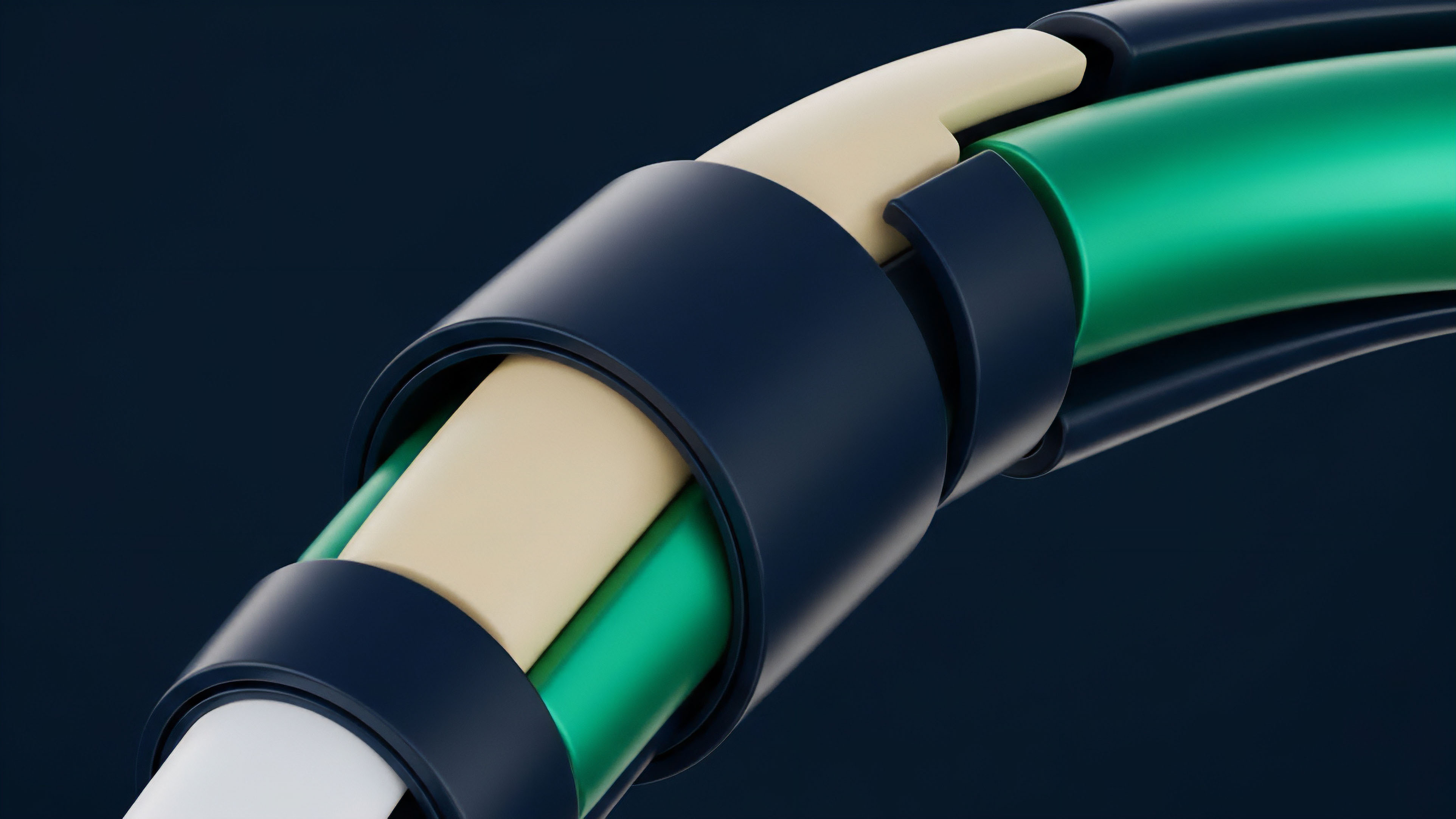

The technical requirement for this alignment stems from the lack of standardization in the crypto derivatives space. Each exchange employs unique methods for reporting order book depth, update frequencies, and error handling. Order Book Normalization solves this by mapping raw JSON responses or binary streams into a standardized internal model.

This model typically includes standardized fields for price, volume, side, and timestamp, so that a buy order on one venue is represented identically to a buy order on another and downstream code can process both without venue-specific logic.
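As a minimal sketch of such an internal model, the code below defines one normalized level type and two adapters that map differently shaped JSON payloads onto it, including a symbol mapping. The venue names (`venue_a`, `venue_b`), their JSON schemas, the symbol maps, and the canonical symbol `BTC-USD` are all hypothetical and do not describe any real exchange API.

```python
import json
from dataclasses import dataclass
from enum import Enum


class Side(Enum):
    BID = "bid"
    ASK = "ask"


@dataclass(frozen=True)
class NormalizedLevel:
    """One price level in the internal model: identical fields for every venue."""
    venue: str
    symbol: str   # canonical symbol, e.g. "BTC-USD"
    side: Side
    price: float  # in the canonical quote currency
    size: float   # in units of the base asset
    ts_ns: int    # event time, integer nanoseconds since the Unix epoch


# Hypothetical per-venue symbol maps (illustrative, not real exchange listings).
SYMBOL_MAP = {
    "venue_a": {"BTCUSD": "BTC-USD"},
    "venue_b": {"btc_usd": "BTC-USD"},
}


def parse_venue_a(raw: str) -> list[NormalizedLevel]:
    # Assumed schema: {"sym": ..., "t_ms": ..., "bids": [[px, qty], ...], "asks": [...]}
    msg = json.loads(raw)
    symbol = SYMBOL_MAP["venue_a"][msg["sym"]]
    ts_ns = int(msg["t_ms"]) * 1_000_000  # milliseconds -> nanoseconds
    levels = []
    for side, key in ((Side.BID, "bids"), (Side.ASK, "asks")):
        for px, qty in msg[key]:
            levels.append(NormalizedLevel("venue_a", symbol, side, float(px), float(qty), ts_ns))
    return levels


def parse_venue_b(raw: str) -> list[NormalizedLevel]:
    # Assumed schema: {"market": ..., "ts_us": ..., "levels": [{"s": "buy"|"sell", "p": "...", "q": "..."}]}
    msg = json.loads(raw)
    symbol = SYMBOL_MAP["venue_b"][msg["market"]]
    ts_ns = int(msg["ts_us"]) * 1_000  # microseconds -> nanoseconds
    side_map = {"buy": Side.BID, "sell": Side.ASK}
    return [
        NormalizedLevel("venue_b", symbol, side_map[lv["s"]], float(lv["p"]), float(lv["q"]), ts_ns)
        for lv in msg["levels"]
    ]
```

Once both feeds emit `NormalizedLevel` values, a strategy can compare them field by field without knowing which venue produced them.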

- Price Alignment: Converting all asset valuations into a common quote currency, usually USD or a specific stablecoin, to facilitate direct comparison.

- Quantity Scaling: Adjusting contract sizes, which differ across inverse, linear, and quanto contracts, into a unified base asset measurement.

- Timestamp Synchronization: Correcting for clock drift and different time formats to ensure an accurate sequence of events in high-frequency environments.
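The three transformations above can be sketched as small pure functions. This is an illustration under stated assumptions: the quote-to-USD rate and the per-venue clock offset are supplied by the caller (how they are estimated is out of scope), linear contract sizes are assumed to be denominated in the base asset, inverse contract sizes are assumed to be a quote-currency face value, and quanto contracts are deliberately left out because their base exposure depends on each venue's multiplier convention.

```python
from datetime import datetime, timedelta, timezone

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)


def to_common_quote(price: float, quote_to_usd: float) -> float:
    """Price alignment: restate a price quoted in, e.g., a stablecoin as USD."""
    return price * quote_to_usd


def contracts_to_base(contracts: float, kind: str, contract_size: float, price: float) -> float:
    """Quantity scaling: express a derivatives quantity in base-asset units.

    linear:  contract_size is in the base asset (e.g. 0.001 BTC per contract)
    inverse: contract_size is a quote-currency face value (e.g. 100 USD per contract)
    """
    if kind == "linear":
        return contracts * contract_size
    if kind == "inverse":
        return contracts * contract_size / price
    raise ValueError(f"unsupported contract kind: {kind}")  # quanto omitted in this sketch


def timestamp_to_ns(value, unit: str, clock_offset_ns: int = 0) -> int:
    """Timestamp synchronization: integer ns since epoch, minus an estimated venue clock offset.

    Numeric inputs are assumed to be integers in the given unit; "iso" accepts ISO 8601 strings.
    """
    scale = {"s": 1_000_000_000, "ms": 1_000_000, "us": 1_000, "ns": 1}
    if unit == "iso":
        dt = datetime.fromisoformat(value.replace("Z", "+00:00"))
        ns = (dt - EPOCH) // timedelta(microseconds=1) * 1_000  # datetime has µs precision
    else:
        ns = int(value) * scale[unit]
    return ns - clock_offset_ns
```

Keeping timestamps as integer nanoseconds rather than floats avoids rounding when events from different venues are interleaved by time.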

High-fidelity trading systems rely on this layer to maintain a real-time view of global supply and demand. Without Order Book Normalization, the risk of execution errors increases, because the system might misinterpret the depth or price of an instrument due to a formatting mismatch. This layer underpins much of modern algorithmic trading, allowing strategies to scale rapidly across the ecosystem.
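One way to picture that global view is a consolidated book: normalized levels from several venues merged into a single price ladder. The sketch below assumes the inputs are already in a common quote currency and base-asset size; the input layout and the `consolidate` name are hypothetical.

```python
from collections import defaultdict


def consolidate(books: dict[str, dict[str, list[tuple[float, float]]]], depth: int = 5):
    """Merge normalized per-venue books into one price-ordered view.

    books maps venue -> {"bids": [(price, size), ...], "asks": [...]}.
    Sizes resting at the same price on different venues are summed.
    """
    merged = {"bids": defaultdict(float), "asks": defaultdict(float)}
    for venue_book in books.values():
        for side in ("bids", "asks"):
            for price, size in venue_book.get(side, []):
                merged[side][price] += size
    bids = sorted(merged["bids"].items(), key=lambda lv: -lv[0])[:depth]  # best (highest) first
    asks = sorted(merged["asks"].items(), key=lambda lv: lv[0])[:depth]   # best (lowest) first
    return {"bids": bids, "asks": asks}
```

Summing by price discards which venue holds each unit of size; an order router would usually keep per-venue attribution alongside the aggregate.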

Origin

The Fragmentation Problem

The early years of crypto trading were defined by isolated islands of liquidity.

Exchanges operated as closed systems with proprietary technology stacks. As professional market makers and arbitrageurs entered the space, the need to interact with multiple venues simultaneously became a competitive necessity. Order Book Normalization emerged as a solution to the high overhead of maintaining custom integrations for every exchange.

The initial versions were simple scripts that polled REST endpoints, but the volatility of the market demanded more robust, event-driven architectures.

The shift from isolated exchange silos to a unified liquidity landscape necessitated a standard protocol for order book data processing.

Rise of Middleware and Aggregators

As the derivatives market matured, specialized firms began offering normalization as a service. These providers realized that the value was not just in the data itself, but in the reliability and speed of the transformation. Order Book Normalization moved from being a side-task of a trading bot to a dedicated component of the financial infrastructure.

This shift allowed smaller players to access the same level of data sophistication as large institutions, leveling the playing field for liquidity provision.

| Era | Data Method | Normalization Level |
|---|---|---|
| Early | Manual REST Polling | Basic Price/Size Mapping |
| Growth | WebSocket Streams | Real-time Field Standardization |
| Maturity | Low-latency Binary Feeds | Full Depth and Event-driven Sync |

The transition to decentralized exchanges (DEXs) added another layer of difficulty. On-chain order books and Automated Market Makers (AMMs) report data through smart contract events, which differ significantly from centralized exchange APIs. Order Book Normalization adapted to include these on-chain signals, creating a bridge between traditional order book dynamics and the unique properties of blockchain-based liquidity.
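To illustrate how AMM liquidity can be expressed as order book levels, the sketch below discretizes a constant-product pool (the x · y = k rule used by Uniswap v2-style pools) into synthetic bid and ask levels. It is a simplification under stated assumptions: swap fees, gas costs, and concentrated-liquidity ranges are ignored, and the step size and level count are arbitrary illustrative parameters.

```python
import math


def amm_to_levels(base_reserve: float, quote_reserve: float, step: float = 0.001, n_levels: int = 5):
    """Discretize a constant-product pool (x * y = k) into synthetic book levels.

    Moving the marginal price from p to p' changes the base reserve from
    sqrt(k / p) to sqrt(k / p'); that difference is the base size "resting"
    between the two prices. Fees and concentrated liquidity are ignored.
    """
    k = base_reserve * quote_reserve
    mid = quote_reserve / base_reserve  # marginal price of the base asset

    def base_at(p: float) -> float:
        return math.sqrt(k / p)

    asks, bids = [], []
    for i in range(1, n_levels + 1):
        # Ask side: buying base from the pool pushes the price up.
        lo, hi = mid * (1 + step) ** (i - 1), mid * (1 + step) ** i
        asks.append((hi, base_at(lo) - base_at(hi)))  # labeled at the worst (highest) price
        # Bid side: selling base into the pool pushes the price down.
        hi_b, lo_b = mid * (1 - step) ** (i - 1), mid * (1 - step) ** i
        bids.append((lo_b, base_at(lo_b) - base_at(hi_b)))  # labeled at the worst (lowest) price
    return {"mid": mid, "bids": bids, "asks": asks}
```

Labeling each level at its worst price is a conservative choice; a venue-neutral consumer then sees the pool as ordinary depth.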

Theory

Mathematical Transformation of Depth

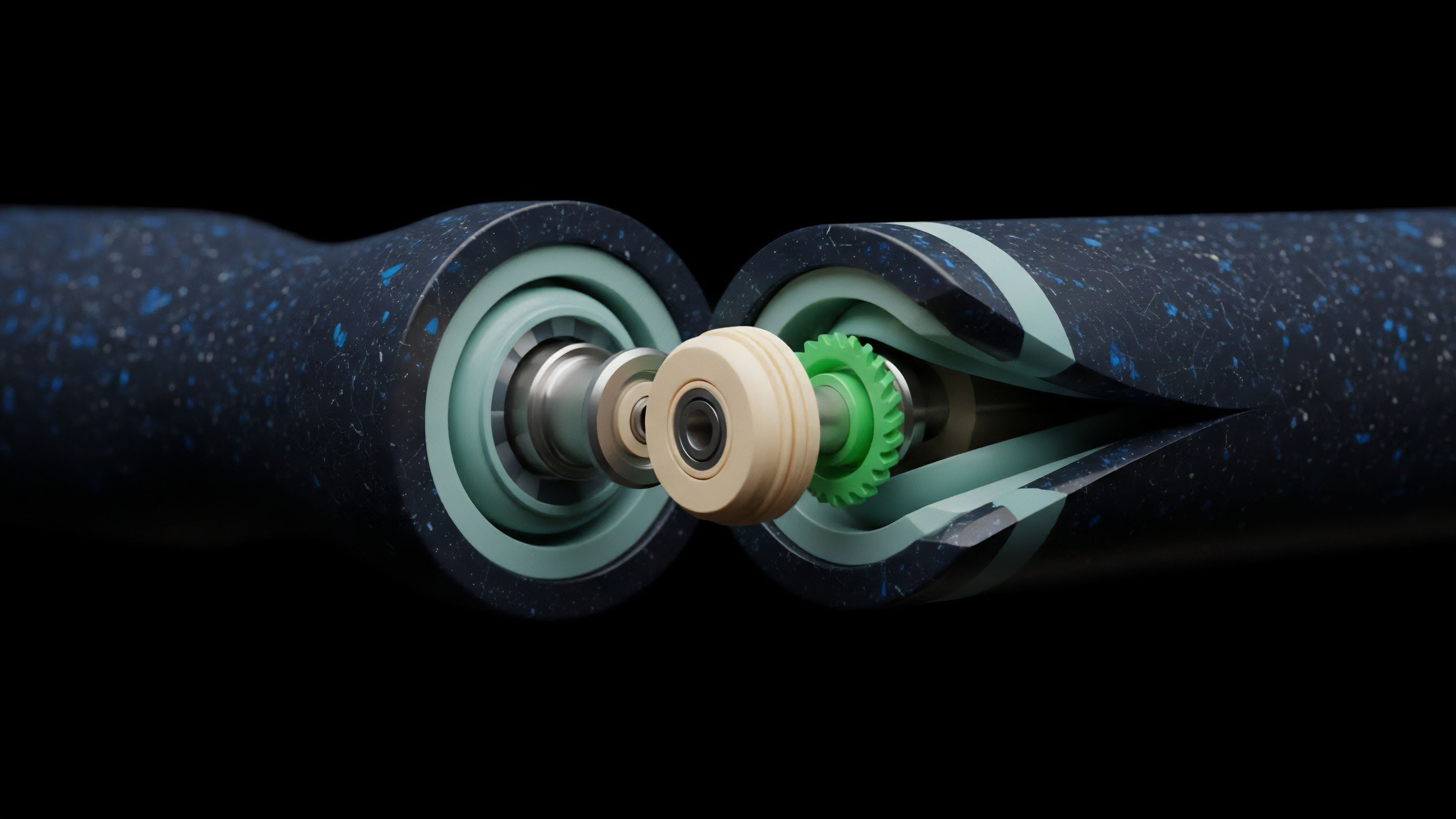

At its core, Order Book Normalization is a series of mathematical functions applied to raw data.

The goal is to preserve the information content of the data while changing its form. For options, this involves normalizing the Greeks and implied volatility alongside the standard price and size data. A normalized order book must account for the non-linear nature of option pricing, ensuring that a change in the underlying price is reflected accurately across all normalized instruments; for example, a premium quoted in units of the underlying changes in USD terms whenever the underlying moves, even if the quote itself does not.
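A minimal sketch of option quote normalization, assuming European calls, zero interest rate, no dividends, and the Black-Scholes model with the spot price as the underlying: premiums quoted in coin units (as some venues do) or in USD are first restated in USD and then inverted into implied volatility, which is directly comparable across venues. The function names and the "coin"/"usd" labels are hypothetical; a production system would also handle puts, forwards, and each venue's own pricing conventions.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal CDF via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_call(spot: float, strike: float, t: float, vol: float, rate: float = 0.0) -> float:
    """Black-Scholes price of a European call (no dividends)."""
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol**2) * t) / (vol * math.sqrt(t))
    d2 = d1 - vol * math.sqrt(t)
    return spot * norm_cdf(d1) - strike * math.exp(-rate * t) * norm_cdf(d2)


def implied_vol(price, spot, strike, t, rate=0.0, lo=1e-4, hi=5.0, tol=1e-8):
    """Invert bs_call by bisection; the call price increases monotonically with vol."""
    for _ in range(200):
        mid = 0.5 * (lo + hi)
        if bs_call(spot, strike, t, mid, rate) > price:
            hi = mid  # model price too high -> vol too high
        else:
            lo = mid
        if hi - lo < tol:
            break
    return 0.5 * (lo + hi)


def normalize_call_quote(premium: float, premium_ccy: str, spot: float, strike: float, t: float) -> dict:
    """Restate a call premium in USD, then express it as implied volatility."""
    usd = premium * spot if premium_ccy == "coin" else premium
    return {"premium_usd": usd, "iv": implied_vol(usd, spot, strike, t)}
```

Once implied volatility is known, Greeks can be computed from the same model, so they are normalized consistently with the price.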

Mathematical consistency in data transformation ensures that risk metrics remain accurate when aggregated from multiple disparate sources.

Data Integrity and Latency Trade-Offs

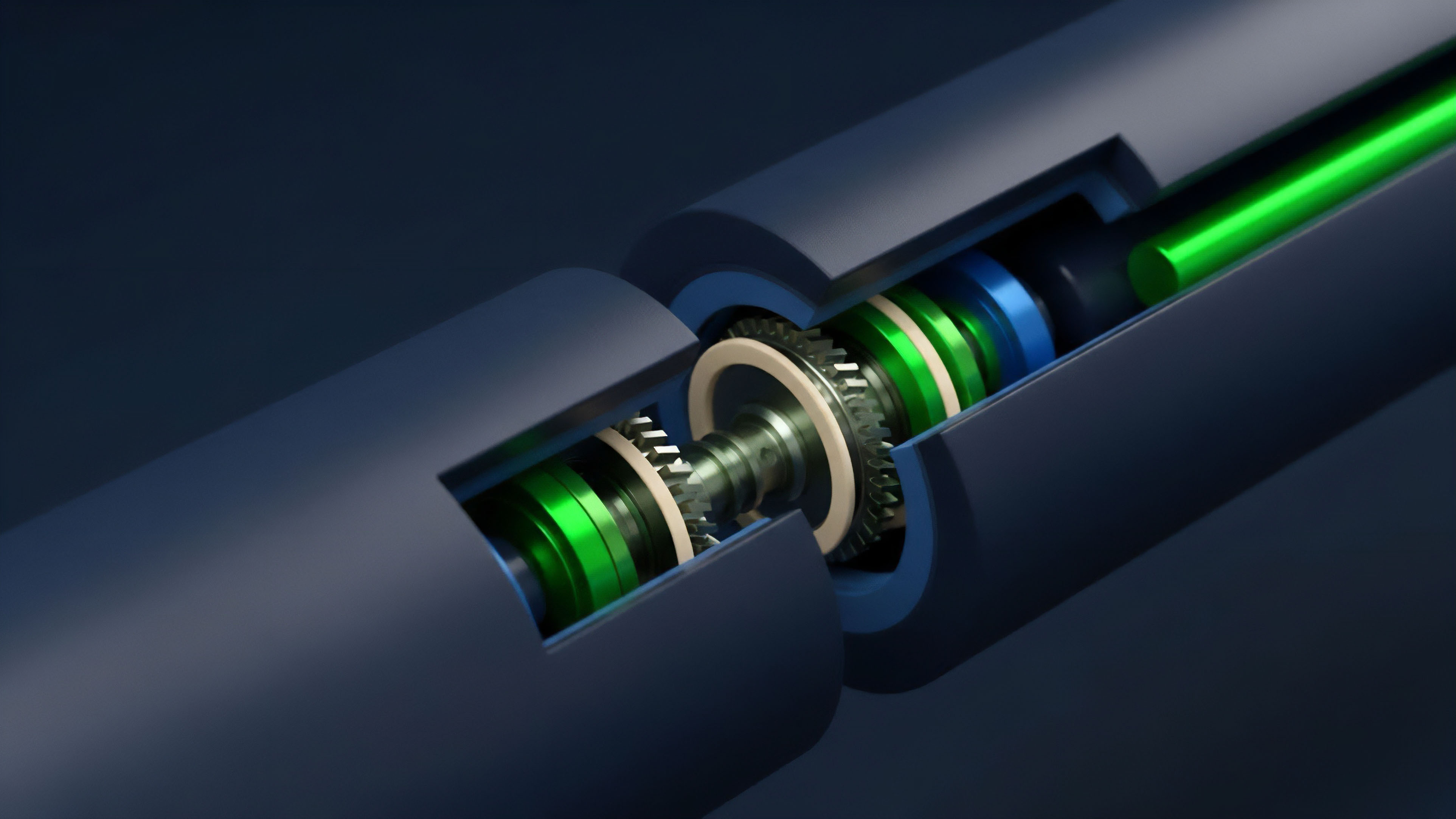

Every transformation step introduces a small amount of latency. The quantitative challenge is to minimize this delay while maximizing the accuracy of the data. Order Book Normalization engines often use compact binary serialization formats such as Protocol Buffers or FlatBuffers to move data through the pipeline.
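The idea behind compact binary formats can be shown with a fixed-width record. The sketch uses Python's standard `struct` module as a stand-in; it is not the Protocol Buffers or FlatBuffers API, and the field layout (and the integer `instrument_id` replacing a symbol string) is a hypothetical choice for illustration.

```python
import struct

# Hypothetical fixed wire layout for one normalized level, little-endian, no padding:
# u64 ts_ns | u32 instrument_id | u8 side (0 = bid, 1 = ask) | f64 price | f64 size
LEVEL = struct.Struct("<QIBdd")


def encode_level(ts_ns: int, instrument_id: int, side: int, price: float, size: float) -> bytes:
    """Pack one level into a 29-byte record."""
    return LEVEL.pack(ts_ns, instrument_id, side, price, size)


def decode_level(buf: bytes) -> tuple:
    """Unpack a record produced by encode_level."""
    return LEVEL.unpack(buf)
```

A fixed layout decodes without text parsing, which is where most of the latency saving over JSON comes from; real schema-based formats add field versioning and optional fields that this sketch does not reproduce.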

The underlying argument is that a more consistent data set allows for better predictive modeling, even at a slight latency cost, because the noise introduced by inconsistent data can outweigh the benefit of raw speed.

| Metric | Raw Data Property | Normalized Property |
|---|---|---|
| Tick Size | Variable per Exchange | Standardized Minimum Increment |
| Lot Size | Asset Specific | Base Asset Equivalent |
| Update Logic | Snapshot or Delta | Continuous Stream of Deltas |
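Turning "snapshot or delta" feeds into one continuous stream usually means keeping a local book: load a snapshot, then apply sequence-numbered deltas and re-snapshot on any gap. The sketch below assumes a hypothetical convention in which each delta carries the new absolute size at a price (zero deletes the level) and sequence numbers increase by one; real venues differ on both points. Venues that publish only snapshots can be fed into the same structure by diffing consecutive snapshots.

```python
class SequenceGap(Exception):
    """Raised when a delta arrives out of order; the book must be re-snapshotted."""


class LocalBook:
    def __init__(self):
        self.bids: dict[float, float] = {}
        self.asks: dict[float, float] = {}
        self.seq = None  # last applied sequence number

    def apply_snapshot(self, seq: int, bids, asks) -> None:
        self.bids = {p: q for p, q in bids if q > 0}
        self.asks = {p: q for p, q in asks if q > 0}
        self.seq = seq

    def apply_delta(self, seq: int, side: str, price: float, size: float) -> None:
        # Assumed convention: size is the new absolute size at `price`; 0 removes the level.
        if self.seq is None:
            raise SequenceGap("no snapshot yet")
        if seq <= self.seq:
            return  # duplicate or stale update, already reflected in the book
        if seq != self.seq + 1:
            raise SequenceGap(f"expected {self.seq + 1}, got {seq}")
        levels = self.bids if side == "bid" else self.asks
        if size == 0:
            levels.pop(price, None)
        else:
            levels[price] = size
        self.seq = seq

    def best_bid(self):
        return max(self.bids) if self.bids else None

    def best_ask(self):
        return min(self.asks) if self.asks else None
```

Raising on a gap rather than silently continuing is deliberate: a book with a missed update can show depth that no longer exists.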

Adversarial Data Environments

In a market where exchanges might experience downtime or provide stale data, the normalization engine must act as a filter. It identifies anomalies, such as a bid price higher than an ask price, whether within a single venue's book (a likely data error) or across venues (stale data or a genuine arbitrage opportunity), and flags them for the trading logic. This adversarial perspective treats the raw data as potentially untrustworthy, using Order Book Normalization as a validation layer that protects the participant's capital.

Approach

Building the Normalization Pipeline

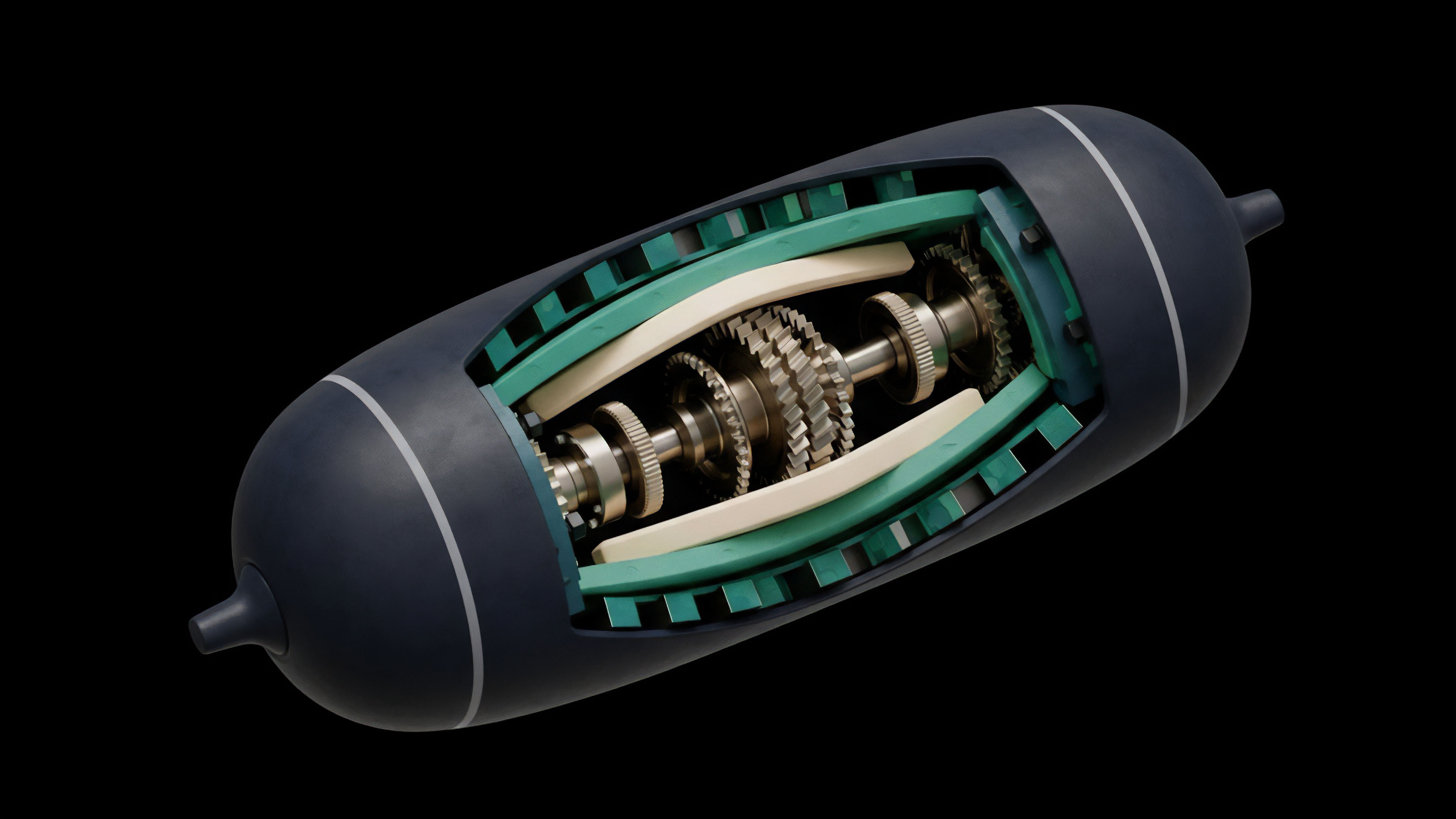

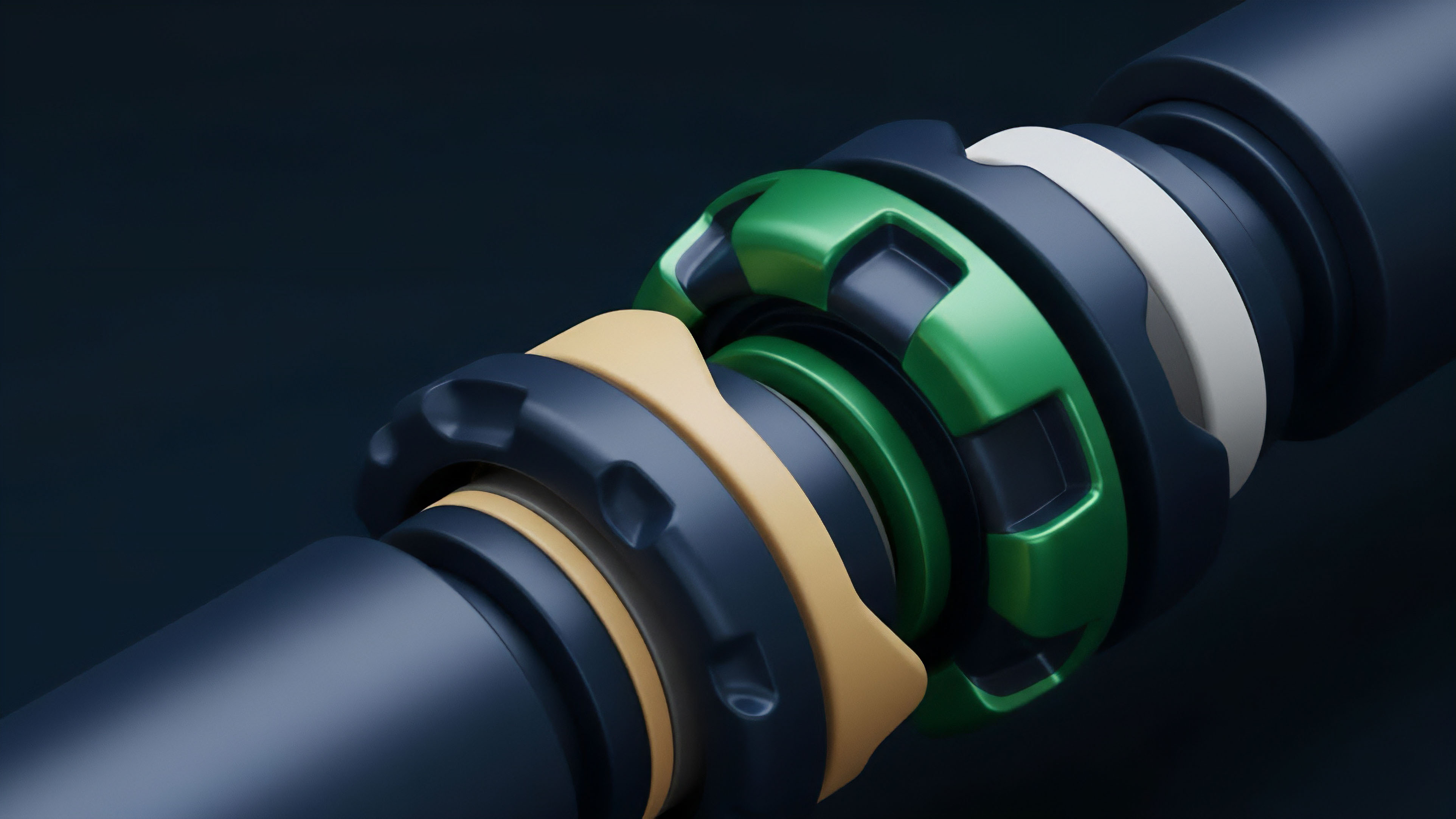

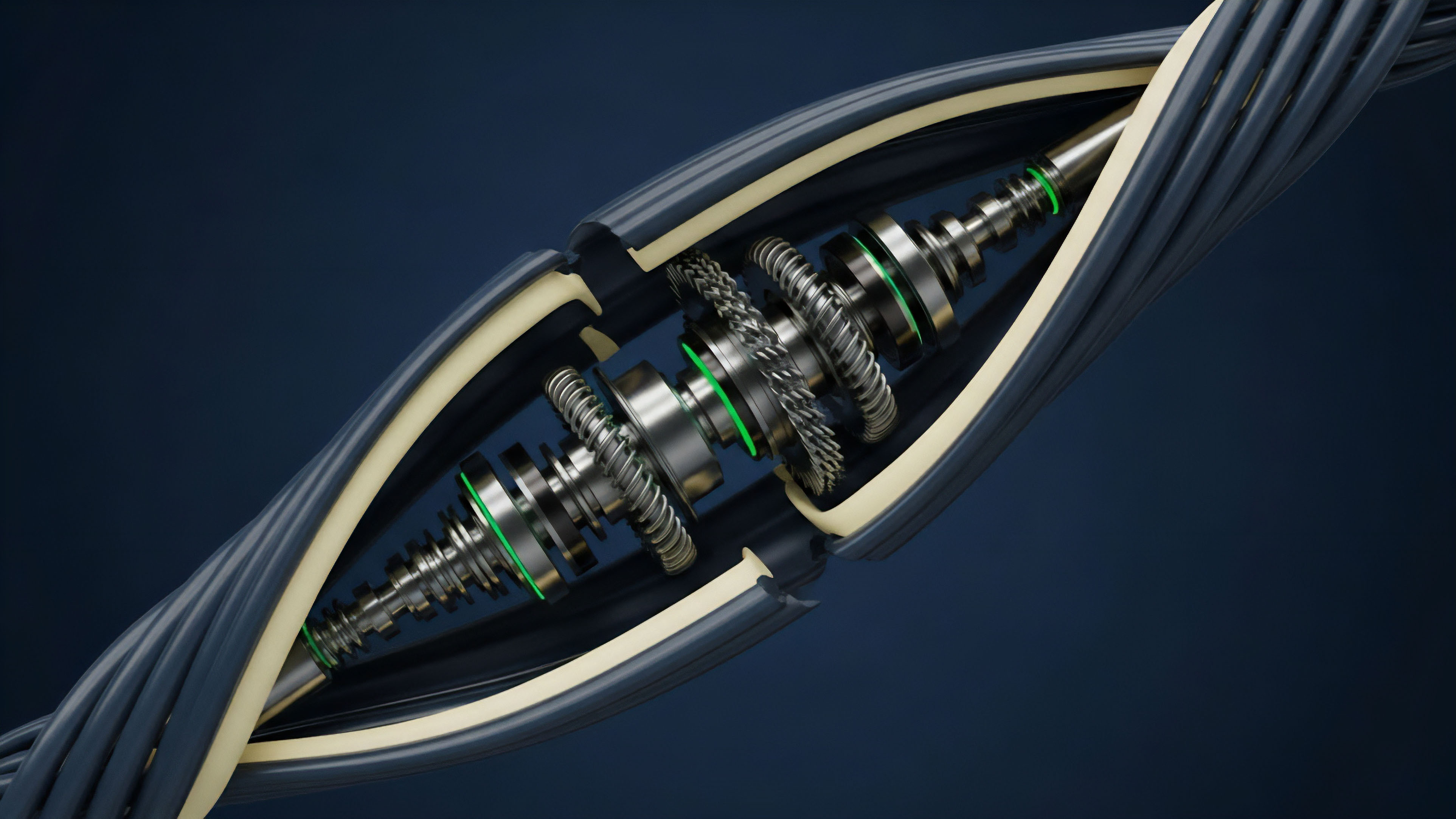

Modern implementations of Order Book Normalization utilize a microservices architecture.

A set of “adapters” connects to individual exchanges, consuming raw data and pushing it into a high-speed message bus. A central “normalizer” service then consumes these messages, applies the necessary transformations, and publishes the standardized data to the trading engines. This modularity allows for the quick addition of new exchanges without disrupting the existing system.

- Adapter Layer: Handles the specifics of each API, including authentication, rate limiting, and connection management.

- Transformation Layer: Executes the logic for price conversion, quantity scaling, and field mapping.

- Validation Layer: Checks for data sanity, ensuring that prices are within expected ranges and timestamps are sequential.
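The adapter, transformation, and validation layers can be sketched in a single process, with an `asyncio.Queue` standing in for the message bus. Everything here is hypothetical: the venue names, the replayed raw messages, and the per-venue mapping functions; a real adapter would hold the network connection, authentication, and rate limiting.

```python
import asyncio
import json

# Hypothetical raw feeds, each in its venue's own schema.
RAW_FEEDS = {
    "venue_a": ['{"sym": "BTCUSD", "bid": 100.0, "ask": 100.5}'],
    "venue_b": ['{"market": "btc_usd", "best": {"buy": "100.1", "sell": "100.4"}}'],
}

# Transformation layer: one small mapping function per venue schema.
TRANSFORMS = {
    "venue_a": lambda m: ("BTC-USD", float(m["bid"]), float(m["ask"])),
    "venue_b": lambda m: ("BTC-USD", float(m["best"]["buy"]), float(m["best"]["sell"])),
}


async def adapter(venue: str, bus: asyncio.Queue) -> None:
    """Adapter layer: here it only replays raw text onto the bus."""
    for raw in RAW_FEEDS[venue]:
        await bus.put((venue, raw))


def is_valid(bid: float, ask: float) -> bool:
    """Validation layer: minimal sanity check (positive, uncrossed prices)."""
    return 0 < bid < ask


async def normalizer(bus: asyncio.Queue, out: list) -> None:
    while True:
        venue, raw = await bus.get()
        try:
            symbol, bid, ask = TRANSFORMS[venue](json.loads(raw))
            if is_valid(bid, ask):
                out.append({"venue": venue, "symbol": symbol, "bid": bid, "ask": ask})
        except (KeyError, ValueError):
            pass  # a real system would log and alert on malformed messages
        finally:
            bus.task_done()


async def run_pipeline() -> list:
    bus: asyncio.Queue = asyncio.Queue()  # stands in for the message bus
    out: list = []
    worker = asyncio.create_task(normalizer(bus, out))
    await asyncio.gather(*(adapter(v, bus) for v in RAW_FEEDS))
    await bus.join()  # wait until every message has been processed
    worker.cancel()
    try:
        await worker
    except asyncio.CancelledError:
        pass
    return out
```

Adding a venue means adding one adapter feed and one transform entry; the normalizer and downstream consumers are unchanged, which is the modularity the architecture aims for.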

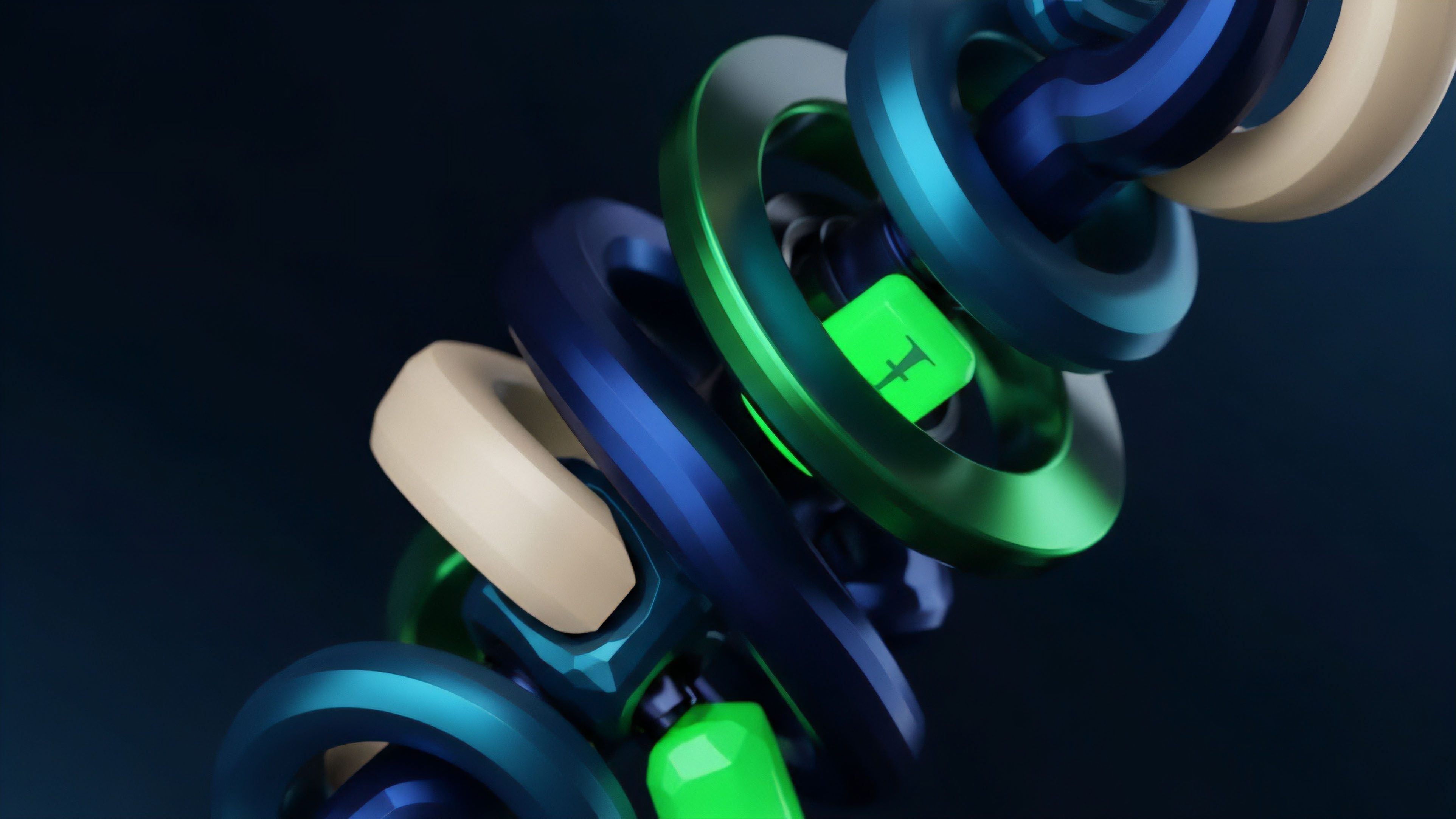

Handling High-Frequency Updates

The volume of data in crypto markets can be staggering, with some exchanges producing thousands of updates per second. Order Book Normalization must be capable of processing this load without falling behind the market. Techniques such as thread-per-core architectures and lock-free data structures are commonly used to maximize throughput.

By offloading the normalization to dedicated hardware or optimized software, the trading logic can focus entirely on execution and risk management.

Cross-Venue Arbitrage Execution

When the normalization engine detects a price discrepancy between two venues, it can flag a potential arbitrage opportunity. Because the data is already normalized, the execution engine can immediately calculate the potential profit and risk without further data processing. This speed is the primary advantage of a robust Order Book Normalization system, as it allows the participant to capture fleeting opportunities before the rest of the market reacts.
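With normalized inputs, the profit check reduces to a few lines. This sketch assumes both venues' tops of book are already in the same quote currency and base-asset size, and that taker fees are a fraction of notional; the `Top` type and fee values are hypothetical. It ignores transfer costs, inventory limits, latency, and partial fills.

```python
from dataclasses import dataclass


@dataclass
class Top:
    """Normalized top of book for one venue (common quote currency, base-asset size)."""
    venue: str
    bid: float
    bid_size: float
    ask: float
    ask_size: float
    taker_fee: float  # fraction of notional, e.g. 0.0005 = 5 bps (illustrative)


def arbitrage(buy: Top, sell: Top):
    """Buy at buy.ask and sell at sell.bid; return (size, net_pnl), or None if there is no edge."""
    size = min(buy.ask_size, sell.bid_size)
    cost = buy.ask * (1 + buy.taker_fee)         # quote spent per unit bought
    proceeds = sell.bid * (1 - sell.taker_fee)   # quote received per unit sold
    edge = proceeds - cost
    if edge <= 0 or size <= 0:
        return None
    return size, edge * size
```

For example, buying at 100.0 and selling at 100.5 with 5 bps fees on each leg leaves about 0.40 per unit, so a raw 0.5 gap shrinks by roughly a fifth once fees are counted.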

Evolution

From Centralized to Decentralized Liquidity

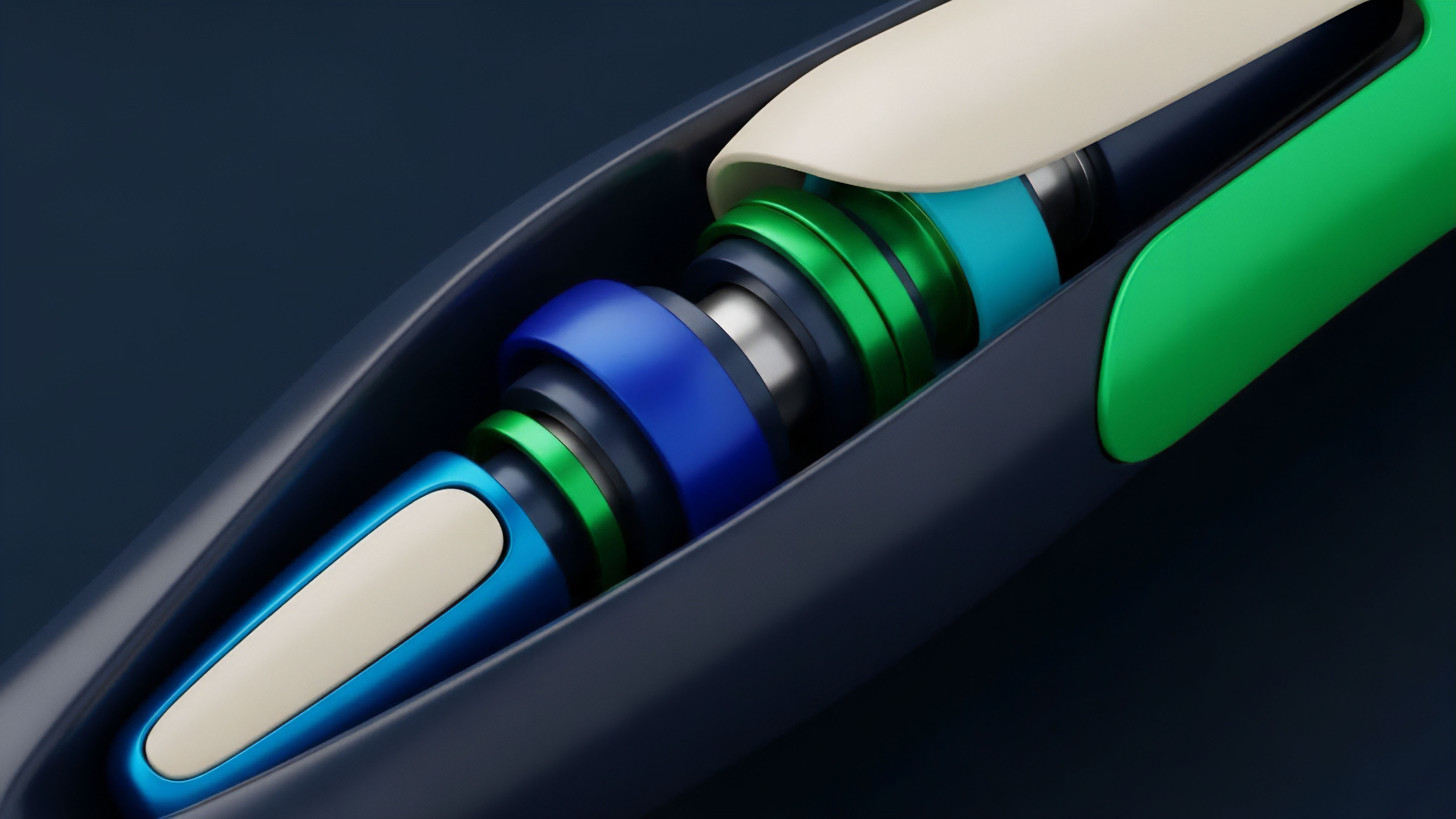

The most significant change in Order Book Normalization has been the inclusion of decentralized finance (DeFi) protocols.

Initially, these systems were too slow to be relevant for high-frequency trading. However, the emergence of high-performance blockchains and Layer 2 solutions has brought on-chain order books into the mainstream. Normalizing this data requires a deep understanding of blockchain state and event logs, which is a significant departure from traditional API-based normalization.

The integration of on-chain liquidity into normalized data streams represents the next phase of financial market transparency.

Machine Learning in Data Cleaning

Recent work has introduced machine learning models into the Order Book Normalization process. These models are trained to detect patterns of market manipulation or data errors that traditional rule-based systems might miss. By estimating the “true” state of the market from historical data across multiple venues, these systems aim to provide a more accurate view of liquidity than any single exchange feed.
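Learned detectors are usually compared against a simpler adaptive baseline. The sketch below is such a baseline, not a machine-learning model: it tracks an exponentially weighted mean and variance of one venue's deviation from a cross-venue reference price (in basis points) and flags observations far outside that learned range. The class name and the `alpha`, `z_max`, and `warmup` defaults are hypothetical.

```python
import math


class EwmaDeviationDetector:
    """Adaptive anomaly flag on a venue's deviation from a cross-venue reference price."""

    def __init__(self, alpha: float = 0.05, z_max: float = 4.0, warmup: int = 20):
        self.alpha, self.z_max, self.warmup = alpha, z_max, warmup
        self.mean, self.var, self.n = 0.0, 0.0, 0

    def update(self, venue_mid: float, reference_mid: float) -> bool:
        """Return True if this observation looks anomalous."""
        dev_bps = (venue_mid - reference_mid) / reference_mid * 1e4
        anomalous = False
        if self.n >= self.warmup and self.var > 0:
            z = (dev_bps - self.mean) / math.sqrt(self.var)
            anomalous = abs(z) > self.z_max
        if not anomalous:  # keep outliers out of the baseline statistics
            diff = dev_bps - self.mean
            self.mean += self.alpha * diff
            self.var = (1 - self.alpha) * (self.var + self.alpha * diff * diff)
            self.n += 1
        return anomalous
```

Unlike a fixed threshold, the band adapts to each venue's normal basis to the reference, which is the gap that learned models try to close further.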

| Feature | Legacy Normalization | Modern Normalization |
|---|---|---|
| Source Type | CEX Only | CEX, DEX, and AMM |
| Error Handling | Static Rules | ML-based Anomaly Detection |
| Latency | Millisecond Range | Microsecond Range |

The evolution of Order Book Normalization reflects the broader trend of the crypto market becoming more institutionalized. As the stakes get higher, tolerance for data errors shrinks. The systems that can provide the most accurate, timely, and comprehensive view of global liquidity will be the ones that survive and thrive in this adversarial environment.

Horizon

Zero-Knowledge Data Verification

The future of Order Book Normalization lies in the verifiable accuracy of the data.

Zero-knowledge proofs (ZKPs) could be used to prove that a normalized order book is an accurate representation of the raw data from the exchanges without revealing the proprietary logic used in the transformation. This would allow for a new level of trust in third-party data providers, as users could verify the integrity of the data they are purchasing.

AI-Driven Liquidity Synthesis

As AI becomes more integrated into financial systems, synthetic order books may emerge. These would not just normalize existing data but use generative models to predict where liquidity will appear. Normalized order book data would be the input for these models, providing the clean data needed for accurate predictions.

This would shift the focus from reacting to the current market state to anticipating future liquidity shifts.

Global Unified Liquidity Layer

The ultimate goal is a single, global, normalized view of all digital asset liquidity. This “unified layer” would eliminate the practical effects of fragmentation, treating the entire world’s liquidity as a single pool. Order Book Normalization is the technology that would make this possible, turning a chaotic mess of data into a structured and efficient financial system. This could lead to tighter spreads, better execution, and a more resilient market for all participants.

Glossary

Price Discovery Mechanism

Centralized Exchange Liquidity

Synthetic Order Books

Adversarial Market Modeling

Protocol Buffers

Bid-Ask Spread Normalization

Layer 2 Liquidity

Risk Management Systems

Market Depth Analysis