Essence of DepthMap Architectures

The class of tools known as Order Book Data Visualization Libraries, which we can refer to collectively as DepthMap Architectures, serves as the critical interface between raw Level 2 (L2) market microstructure and the cognitive processing of a derivatives trader. Its core function is the transformation of a high-velocity, discrete stream of price-level and volume updates into a continuous, probabilistic liquidity surface. This is a foundational element for options trading, where the speed of order book change directly impacts the implied volatility surface and, consequently, pricing models.

The visualization is not simply a display; it is a real-time risk filter, enabling the instantaneous identification of liquidity pools, price-level concentrations, and the tell-tale signs of algorithmic pressure.

Functional Requirement

The primary financial requirement is to model the Liquidity Horizon. This refers to the depth of capital available before a market order of a specific size will cause a significant price move, often quantified in terms of basis points of slippage. For crypto options, where underlying assets exhibit high volatility and order book fragmentation is common, the visualization must aggregate data from multiple venues, both centralized limit order books (CLOBs) and decentralized automated market makers (AMMs), to construct a unified view of available delta.
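To make the Liquidity Horizon concrete, the sketch below walks one side of a book and reports slippage in basis points relative to the best ask, then inverts the calculation to find the largest order that stays within a slippage budget. The function names (`slippage_bps`, `liquidity_horizon`) and the sample book are illustrative assumptions, not part of any specific library, and slippage is measured here as average fill price versus best ask, which is only one of several possible conventions.

```python
from typing import List, Tuple

Level = Tuple[float, float]  # (price, size), asks sorted by ascending price


def slippage_bps(asks: List[Level], order_size: float) -> float:
    """Slippage of a market buy versus the best ask, in basis points."""
    if not asks or order_size <= 0:
        raise ValueError("need a non-empty book and a positive order size")
    best_ask = asks[0][0]
    remaining, cost = order_size, 0.0
    for price, size in asks:
        fill = min(remaining, size)
        cost += fill * price
        remaining -= fill
        if remaining <= 0:
            break
    if remaining > 0:
        raise ValueError("order size exceeds displayed depth")
    vwap = cost / order_size  # volume-weighted average fill price
    return (vwap / best_ask - 1.0) * 10_000


def liquidity_horizon(asks: List[Level], max_bps: float) -> float:
    """Largest buy size whose average fill stays within max_bps of the best ask."""
    if not asks:
        return 0.0
    limit = asks[0][0] * (1.0 + max_bps / 10_000)  # highest acceptable VWAP
    filled, cost = 0.0, 0.0
    for price, size in asks:
        if price <= limit:
            take = size  # the whole level fits without breaching the limit
        else:
            # Solve (cost + q * price) / (filled + q) = limit for q.
            take = min(size, max(0.0, (limit * filled - cost) / (price - limit)))
        filled += take
        cost += take * price
        if take < size:
            break
    return filled


# Hypothetical ask side used only for illustration.
asks = [(100.0, 2.0), (100.5, 3.0), (101.0, 5.0)]
print(slippage_bps(asks, 5.0))        # slippage of a 5-unit market buy
print(liquidity_horizon(asks, 10.0))  # size available within a 10 bps budget
```

The same walk applies to the bid side for market sells, with prices sorted in descending order and the sign of the slippage reversed.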

A DepthMap Architecture translates high-frequency, discrete order book updates into a continuous, probabilistic liquidity surface essential for options risk management.

- Latency Sensitivity: Visualization must keep rendering latency within a single display frame, ideally below roughly 16 milliseconds (the frame interval of a 60 Hz display), so that the displayed depth stays current with the true market state.

- Aggregated View: It must synthesize L2 data from multiple derivative protocols, a necessary step given the current fragmentation across the decentralized finance (DeFi) landscape.

- Price-Volume Skew: The visual representation must accurately depict the imbalance between bid and ask volumes, which serves as a primary, real-time indicator of directional market pressure and potential short-term skew shifts.
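One common way to quantify the bid/ask imbalance described in the last item is a normalized volume ratio over the top few levels. The sketch below is a minimal version of that idea; the function name `book_imbalance`, the choice of five levels, and the sample book are assumptions for illustration.

```python
from typing import List, Tuple

Level = Tuple[float, float]  # (price, size), best price first


def book_imbalance(bids: List[Level], asks: List[Level], depth: int = 5) -> float:
    """Return (bid volume - ask volume) / (bid volume + ask volume) in [-1, 1].

    Positive values mean more resting volume on the bid (buy pressure),
    negative values mean more resting volume on the ask.
    """
    bid_vol = sum(size for _, size in bids[:depth])
    ask_vol = sum(size for _, size in asks[:depth])
    total = bid_vol + ask_vol
    if total == 0:
        return 0.0  # empty book: no directional signal
    return (bid_vol - ask_vol) / total


# Hypothetical top-of-book snapshot.
bids = [(99.5, 6.0), (99.0, 4.0)]
asks = [(100.0, 3.0), (100.5, 2.0)]
print(book_imbalance(bids, asks))
```

A renderer could map this value to a color ramp or a bar beside the ladder; how many levels to include is a tuning choice, since deeper levels are easier to spoof.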

Origin of Market Ladders

The conceptual origin of DepthMap Architectures traces back to the ‘ladder’ or ‘tape’ displays of traditional futures and equity markets. These early systems, which simply listed price levels and corresponding volumes, were the first attempts to visualize the latent demand and supply forces that drive price discovery. In the crypto space, this concept was reborn out of Market Microstructure necessity.

Early crypto exchanges, operating with lower liquidity and less regulatory oversight, often exhibited erratic price action where large orders could clear entire levels of the book.

From Tape Reading to Depth Charts

The initial iteration in digital asset trading was the simple price-volume bar chart, which quickly proved insufficient. The sheer volatility of crypto assets, particularly in the options domain, required a more dynamic representation. The transition was marked by two key technical challenges: first, managing the enormous volume of tick data generated by high-frequency trading (HFT) bots; and second, the need to filter out spoofing and other manipulative practices that distort the true liquidity profile.

The visualization evolved from a static snapshot into a time-series of liquidity, allowing traders to see the flow of orders, not just the current state. This shift acknowledged the adversarial nature of the market, where displayed liquidity is frequently illusory, a concept well understood from financial history that echoes the flash crashes and manipulative practices of centralized venues.

| Stage | Primary Venue | Data Challenge | Visualization Output |
|---|---|---|---|
| Traditional Ladder (Pre-2010) | Centralized Exchange (CME, NYSE) | Bandwidth/Transmission Speed | Static Price/Volume List |
| Crypto Depth Chart (2014-2018) | Early Crypto CLOBs | High-Volume Tick Data | Aggregated Price Curve |
| DepthMap Architecture (Post-2018) | Multi-Venue, Options Protocols | Fragmentation/Spoofing/Latency | Probabilistic Liquidity Surface |

Theory and Microstructure Invariance

The theoretical foundation of a robust visualization system lies in its ability to maintain Microstructure Invariance, the principle that the underlying market dynamics should be accurately represented regardless of the specific exchange’s tick size or message protocol. The system must operate on a unified data model that abstracts away the idiosyncrasies of the source. The core challenge here is the rigorous application of mathematical modeling to real-time data structures.

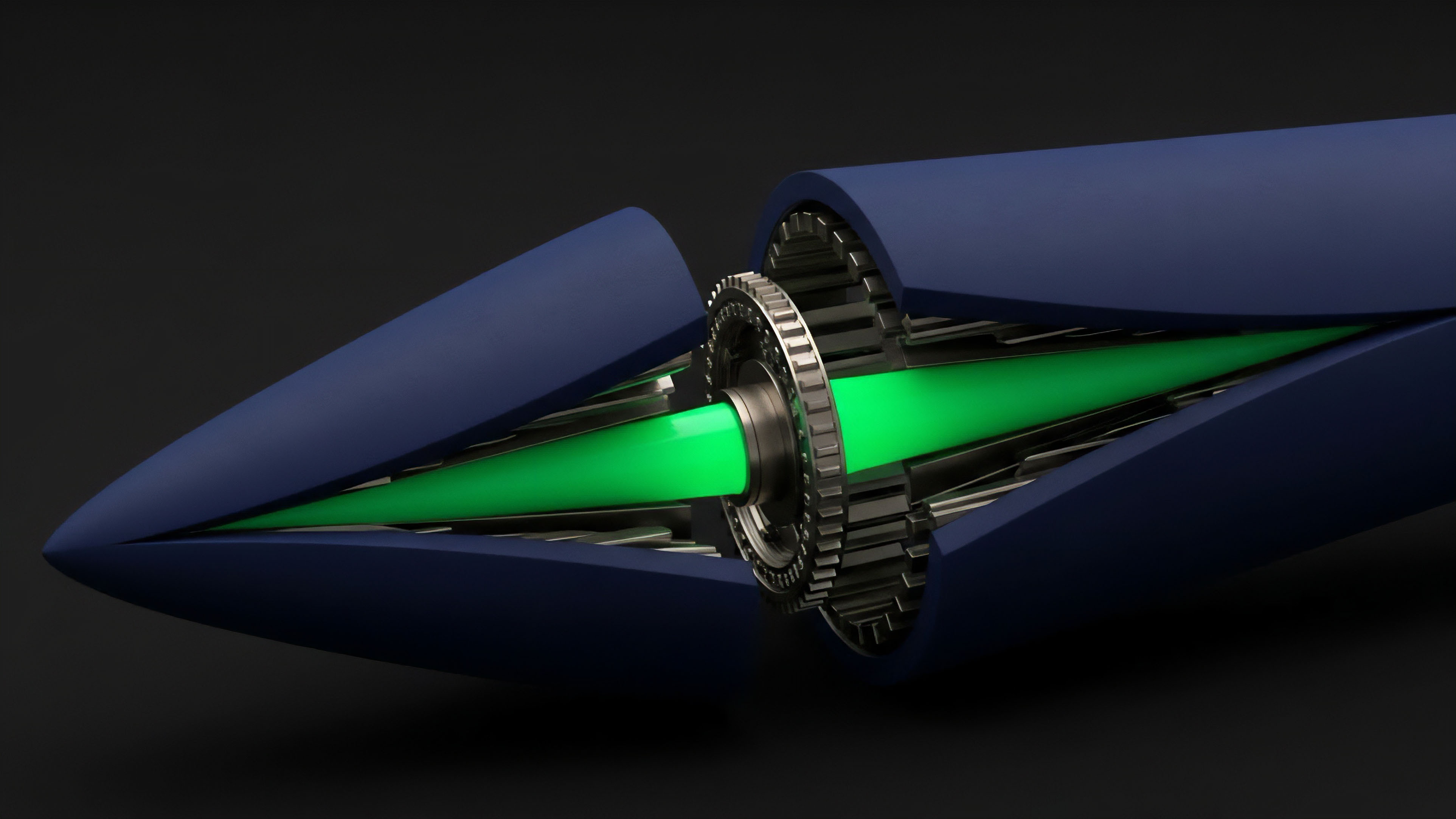

The visualization engine relies heavily on high-performance data structures like Red-Black Trees or Skip Lists to manage the bid and ask sides of the book, ensuring logarithmic time complexity for insertions, deletions, and updates (worst-case for red-black trees, expected for skip lists), which is vital for maintaining a real-time view in a high-update environment. This is where the pricing model becomes truly elegant, and dangerous if ignored. The visualization must not only show volume but also implicitly represent the cost of immediacy.
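The interface such a structure has to offer can be sketched in a few lines. Python's standard library has no red-black tree or skip list, so the example below swaps in a sorted list maintained with `bisect`: lookups are logarithmic, but inserting or removing a price level shifts list elements and is therefore linear in the number of levels. It illustrates the operations only, not the performance profile described above. The class name `BookSide` and the use of integer tick prices are assumptions.

```python
import bisect
from itertools import islice
from typing import Dict, List, Optional, Tuple


class BookSide:
    """One side of an order book keyed by integer price ticks.

    Stand-in for a balanced tree: a sorted list plus a dict of sizes.
    """

    def __init__(self, is_bid: bool) -> None:
        self.is_bid = is_bid
        self._prices: List[int] = []       # ascending order
        self._sizes: Dict[int, float] = {}  # price -> resting size

    def set_level(self, price: int, size: float) -> None:
        """Insert or update a level; a size of zero or less removes it."""
        if size <= 0:
            if price in self._sizes:
                del self._sizes[price]
                self._prices.pop(bisect.bisect_left(self._prices, price))
            return
        if price not in self._sizes:
            bisect.insort(self._prices, price)
        self._sizes[price] = size

    def best(self) -> Optional[Tuple[int, float]]:
        """Best bid is the highest price; best ask is the lowest."""
        if not self._prices:
            return None
        price = self._prices[-1] if self.is_bid else self._prices[0]
        return price, self._sizes[price]

    def levels(self, depth: int) -> List[Tuple[int, float]]:
        """Top `depth` levels, best price first."""
        ordered = reversed(self._prices) if self.is_bid else iter(self._prices)
        return [(p, self._sizes[p]) for p in islice(ordered, depth)]


bids = BookSide(is_bid=True)
bids.set_level(10000, 5.0)
bids.set_level(9990, 3.0)
bids.set_level(10010, 2.0)
bids.set_level(10010, 0.0)  # level cleared
print(bids.best(), bids.levels(2))
```

Keying levels by integer ticks rather than floating-point prices avoids equality problems when the same price arrives from different venues.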

A shallow book implies a high cost of immediacy, which translates directly into more expensive Gamma Risk for options market makers: when small moves in the underlying asset cause large changes in the option’s delta, every re-hedge must be executed against thin liquidity. The visual depth curve, therefore, becomes a proxy for the market’s collective estimate of volatility realized over the next few seconds. The architectural decision to use WebGL or specialized GPU-accelerated rendering pipelines is a direct acknowledgment of the physical limits of information transfer and human perception.

Our inability to respect the skew in this data is the critical flaw in many current trading models, leading to underestimation of liquidation cascades. The depth map is, in a way, a visual representation of the market’s Adversarial Game Theory: the displayed liquidity is a constant negotiation between genuine orders and manipulative attempts to induce price movement. The visualization tool must be architected to filter out the noise, revealing the true intent.

It’s a profound observation that the very structure of information flow dictates financial outcomes. The speed of light itself places an ultimate constraint on arbitrage, making local, low-latency data processing a persistent source of alpha.

Approach Data Pipeline and Rendering

The construction of a high-fidelity DepthMap Architecture requires a multi-stage, low-latency data pipeline. The process begins with ingestion and ends with GPU-accelerated rendering, where performance is paramount.

Data Ingestion and Aggregation

The first step involves establishing persistent, fault-tolerant connections to multiple exchange APIs, typically via WebSockets for real-time streaming of L2 updates. The key is the standardization of the disparate data formats.

- Protocol Normalization: Raw messages from different protocols (e.g., FIX, proprietary JSON) are converted into a single, canonical internal data structure, ensuring all price and volume fields are consistently typed and scaled.

- Delta Compression Handling: Most high-frequency exchanges transmit only the changes (deltas) to the book, rather than the full state. The visualization engine must accurately apply these deltas to its local copy of the order book, which requires precise sequencing and timestamping to detect out-of-order or missing updates and, when a gap cannot be filled, a resynchronization from a fresh snapshot.

- Latency Buffering: A minimal buffer is necessary to re-sequence data packets and check for missing updates, but this buffer must be kept to an absolute minimum, often measured in single-digit milliseconds, to prevent the visualization from lagging the true market state.
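The delta handling and buffering steps above can be sketched as a small local book that applies updates strictly in sequence order, parks early arrivals, and signals when a gap can no longer be bridged. Everything here is an assumption made for illustration: the `Delta` fields, contiguous integer sequence numbers, deltas that carry the new absolute size of a level, and a count-based buffer (`max_pending`) standing in for the millisecond time budget described above.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class Delta:
    seq: int      # venue sequence number, assumed contiguous
    side: str     # "bid" or "ask"
    price: int    # price in integer ticks
    size: float   # new absolute size at this level; 0 removes the level


class GapDetected(Exception):
    """Raised when missing updates cannot be bridged; caller should resnapshot."""


@dataclass
class LocalBook:
    max_pending: int = 32
    last_seq: int = 0
    bids: Dict[int, float] = field(default_factory=dict)
    asks: Dict[int, float] = field(default_factory=dict)
    pending: Dict[int, Delta] = field(default_factory=dict)

    def load_snapshot(self, seq: int, bids: Dict[int, float], asks: Dict[int, float]) -> None:
        """Replace local state with a full snapshot taken at sequence `seq`."""
        self.last_seq = seq
        self.bids, self.asks = dict(bids), dict(asks)
        # Keep only buffered deltas that are newer than the snapshot.
        self.pending = {s: d for s, d in self.pending.items() if s > seq}
        self._drain()

    def on_delta(self, delta: Delta) -> None:
        if delta.seq <= self.last_seq:
            return  # duplicate or stale update, already reflected in the book
        self.pending[delta.seq] = delta
        self._drain()
        if len(self.pending) > self.max_pending:
            raise GapDetected(f"missing update {self.last_seq + 1}")

    def _drain(self) -> None:
        """Apply buffered deltas for as long as the next sequence number is present."""
        while self.last_seq + 1 in self.pending:
            d = self.pending.pop(self.last_seq + 1)
            side = self.bids if d.side == "bid" else self.asks
            if d.size == 0:
                side.pop(d.price, None)
            else:
                side[d.price] = d.size
            self.last_seq = d.seq


book = LocalBook()
book.load_snapshot(10, bids={10000: 1.0}, asks={10010: 2.0})
book.on_delta(Delta(12, "ask", 10010, 0.0))  # arrives early, buffered
book.on_delta(Delta(11, "bid", 10000, 3.0))  # fills the gap, both are applied
print(book.last_seq, book.bids, book.asks)
```

On `GapDetected`, a caller would typically request a new snapshot and call `load_snapshot` again; the buffered deltas newer than that snapshot are then replayed automatically.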

Effective DepthMap visualization relies on robust delta compression handling and a canonical data model to harmonize disparate exchange protocols.
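A canonical data model of the kind described here might look like the following sketch. The two venue message layouts, their field names, the venue labels, and the fixed tick size are all hypothetical; real venues document their own schemas. Prices are parsed through `Decimal` and stored as integer ticks so that the same price from two venues compares equal.

```python
from dataclasses import dataclass
from decimal import Decimal

TICK = Decimal("0.01")  # assumed common tick size for this instrument


@dataclass(frozen=True)
class CanonicalUpdate:
    venue: str
    side: str         # "bid" or "ask"
    price_ticks: int  # price expressed in multiples of TICK
    size: Decimal
    seq: int


def to_ticks(price: str) -> int:
    """Convert a decimal price string into an integer number of ticks."""
    ticks = Decimal(price) / TICK
    if ticks != ticks.to_integral_value():
        raise ValueError(f"price {price} is not a multiple of the tick size")
    return int(ticks)


def normalize_venue_a(msg: dict) -> CanonicalUpdate:
    # Hypothetical compact format: {"s": "B", "p": "100.25", "q": "1.5", "u": 42}
    side = "bid" if msg["s"] == "B" else "ask"
    return CanonicalUpdate("venue_a", side, to_ticks(msg["p"]), Decimal(msg["q"]), int(msg["u"]))


def normalize_venue_b(msg: dict) -> CanonicalUpdate:
    # Hypothetical verbose format with numeric fields:
    # {"side": "sell", "price": 100.25, "amount": 1.5, "sequence": 42}
    side = "bid" if msg["side"] == "buy" else "ask"
    return CanonicalUpdate(
        "venue_b",
        side,
        to_ticks(str(msg["price"])),   # go through str to avoid binary float noise
        Decimal(str(msg["amount"])),
        int(msg["sequence"]),
    )


a = normalize_venue_a({"s": "B", "p": "100.25", "q": "1.5", "u": 42})
b = normalize_venue_b({"side": "buy", "price": 100.25, "amount": 1.5, "sequence": 7})
print(a.price_ticks == b.price_ticks, a.size == b.size)
```

Downstream components then only ever see `CanonicalUpdate`, which is what lets the book and the renderer stay independent of any single venue's protocol.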

Visualization Rendering

The final output requires highly performant rendering to handle tens of thousands of data points updating multiple times per second. Traditional HTML DOM or SVG rendering is insufficient.

GPU Accelerated Drawing

Modern DepthMap Architectures rely on technologies like WebGL or native Canvas APIs for rendering. This offloads most of the drawing work to the client’s Graphics Processing Unit (GPU), allowing for complex, smooth animations and updates without bogging down the main application thread. The visual elements (the depth curve, the price ladder, and the volume bars) are drawn directly as geometry, enabling real-time manipulation and complex overlays.

The rendering must be able to project multiple data dimensions onto a 2D or 3D surface: price (X-axis), volume (Y-axis), and often implied volatility or time-weighted volume (color or Z-axis).
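Before anything reaches the GPU, the book has to be turned into geometry. The sketch below builds the familiar stepped depth curve, with price on the X-axis and cumulative volume on the Y-axis, and packs it into a flat 32-bit float buffer of the kind a vertex buffer upload expects. The function names and the interleaved `x, y` layout are assumptions; the actual upload call depends on the rendering API in use and is not shown.

```python
from array import array
from typing import List, Tuple

Level = Tuple[float, float]   # (price, size)
Vertex = Tuple[float, float]  # (price, cumulative volume)


def depth_curve(levels: List[Level], is_bid: bool) -> List[Vertex]:
    """Stepped cumulative-depth polyline, starting at the best price.

    Bids are walked from the highest price down, asks from the lowest up.
    """
    ordered = sorted(levels, key=lambda level: level[0], reverse=is_bid)
    vertices: List[Vertex] = []
    cumulative = 0.0
    for price, size in ordered:
        vertices.append((price, cumulative))  # horizontal run reaching this price
        cumulative += size
        vertices.append((price, cumulative))  # vertical step for this level's size
    return vertices


def to_vertex_buffer(vertices: List[Vertex]) -> array:
    """Flatten vertices into an interleaved float32 array: x0, y0, x1, y1, ..."""
    return array("f", (coord for vertex in vertices for coord in vertex))


asks = [(101.0, 2.0), (100.0, 1.0)]
curve = depth_curve(asks, is_bid=False)
buffer = to_vertex_buffer(curve)
print(curve, len(buffer))
```

A third channel, such as implied volatility or time-weighted volume, could be appended per vertex and mapped to color or a Z coordinate in the shader.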

Evolution to Volatility Surfaces

The utility of visualization has rapidly progressed from a simple measure of liquidity to a diagnostic tool for volatility structure. As the crypto options market matured, participants recognized that the depth of the book is inextricably linked to the implied volatility (IV) surface.

From L2 Depth to IV Skew Visualization

The current evolution focuses on moving beyond the raw L2 data to visualize the synthetic liquidity derived from the options Greeks. The DepthMap now often incorporates an overlay that shows the distribution of open interest and volume across strikes, effectively creating a 3D visualization where the third dimension represents the Implied Volatility Skew at various maturities. This allows a strategist to immediately see where the market is pricing in tail risk, visible as the “smile” or “smirk” of the IV surface.

| Generation | Core Data Displayed | Primary Strategic Insight | Systemic Challenge Addressed |
|---|---|---|---|
| 1st Gen (Depth) | Price, Volume | Execution Slippage | Illusory Liquidity |
| 2nd Gen (Flow) | Price, Volume Delta, Time | Order Flow Direction | Algorithmic Spoofing |
| 3rd Gen (Surface) | Price, Volume, Implied Volatility | Skew/Kurtosis Risk | Underpricing of Tail Events |

The shift toward visualizing the Implied Volatility Skew directly on the DepthMap allows market makers to instantaneously quantify the market’s consensus on tail risk.

Trade-Offs in Decentralized Liquidity

The challenge in DeFi is that many options protocols rely on AMMs, not CLOBs. Visualizing AMM-based liquidity requires a different approach: mapping the slippage curve of the pool’s bonding function rather than discrete orders. The evolution of DepthMap Architectures now includes the ability to toggle between these two fundamentally different representations of capital deployment (discrete limit orders versus continuous pool slippage), a pragmatic response to the current fragmentation of derivative venues.

This requires the system to calculate the theoretical L2 equivalent of an AMM based on its current reserves and bonding curve parameters.
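As an illustration of that calculation, the sketch below derives a synthetic ask ladder from a constant-product pool (reserves satisfying x · y = k). This curve is an assumption chosen because its algebra is simple; options AMMs generally use other pricing functions, and fees are ignored. For each price level the code finds how much of the base asset can be bought before the pool's marginal price reaches that level, using the fact that the base reserve equals sqrt(k / price) when the marginal price is `price`.

```python
import math
from typing import List, Tuple


def synthetic_asks(
    base_reserve: float,
    quote_reserve: float,
    step_bps: float,
    n_levels: int,
) -> List[Tuple[float, float]]:
    """Ladder of (price, base size) levels equivalent to a constant-product pool.

    Level i holds the base amount purchasable while the marginal price moves
    from the previous level's price up to this level's price. Fees are ignored.
    """
    k = base_reserve * quote_reserve
    spot = quote_reserve / base_reserve
    levels: List[Tuple[float, float]] = []
    prev_base = base_reserve
    for i in range(1, n_levels + 1):
        price = spot * (1.0 + step_bps / 10_000) ** i
        base_at_price = math.sqrt(k / price)  # pool base reserve at this marginal price
        levels.append((price, prev_base - base_at_price))
        prev_base = base_at_price
    return levels


# Hypothetical pool: 100 units of base against 1,000,000 units of quote.
for price, size in synthetic_asks(100.0, 1_000_000.0, step_bps=100.0, n_levels=3):
    print(round(price, 2), round(size, 4))
```

The bid side follows the same logic with prices stepping below spot, where the level size is the base amount that can be sold into the pool. The resulting ladder can be fed to the same renderer used for CLOB data, which is what makes toggling between the two representations practical.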

Horizon Prescriptive Analytics

The future of DepthMap Architectures lies in moving toward prescriptive and predictive analytics, fundamentally changing the strategic landscape for crypto options. The next generation of tools will leverage advancements in Zero-Knowledge (ZK) technology and behavioral modeling to offer a level of transparency and foresight previously confined to the most sophisticated proprietary trading desks.

Integration of On-Chain Proofs

With the rise of ZK-rollups and verifiable computation, it becomes possible to prove the integrity of the Level 2 order book data stream without sacrificing privacy or performance. Future visualization tools will be able to verify that the displayed order book state is mathematically consistent with the underlying protocol’s state transition function. This is a profound leap in Smart Contract Security and market trust, allowing traders to rely on the visualization as an auditable truth rather than a feed susceptible to manipulation.

Behavioral Game Theory Overlays

The most compelling evolution is the integration of Behavioral Game Theory into the visualization layer. The DepthMap will move beyond displaying what is to displaying what is likely. This involves algorithms that analyze historical order book data to identify and project:

- Liquidation Clusters: Price levels where large, leveraged positions are statistically likely to be liquidated, which act as powerful magnets for price action.

- Iceberg Order Probabilities: Statistical models that estimate the total size of hidden liquidity based on the frequency and size of small, persistent order placements.

- Automated Market Maker (AMM) Feedback Loops: Visualizing the likely price impact of a large trade on a specific options AMM and the resulting instantaneous change in the pool’s implied volatility.
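The first of these overlays can be roughed out as follows. This is a deliberately simplified sketch, not a statistical model: it assumes a list of leveraged positions is available as input (in practice these would have to be estimated), treats each as an isolated-margin linear position, and uses the common approximation that liquidation occurs once the adverse move reaches 1/leverage minus a maintenance margin rate. The function names, the 0.5% default maintenance rate, and the sample positions are all hypothetical.

```python
import math
from collections import defaultdict
from typing import Dict, List, Tuple

# (entry price, leverage, position size, is_long)
Position = Tuple[float, float, float, bool]


def liquidation_price(entry: float, leverage: float, is_long: bool, mmr: float = 0.005) -> float:
    """Approximate liquidation price for an isolated-margin linear position.

    mmr is an assumed maintenance margin rate; funding and fees are ignored.
    """
    adverse_move = 1.0 / leverage - mmr
    return entry * (1.0 - adverse_move) if is_long else entry * (1.0 + adverse_move)


def liquidation_clusters(positions: List[Position], bucket: float) -> Dict[float, float]:
    """Sum position size per price bucket of estimated liquidation prices."""
    clusters: Dict[float, float] = defaultdict(float)
    for entry, leverage, size, is_long in positions:
        liq = liquidation_price(entry, leverage, is_long)
        clusters[math.floor(liq / bucket) * bucket] += size
    return dict(sorted(clusters.items()))


# Hypothetical positions for illustration only.
positions = [
    (100.0, 10.0, 2.0, True),
    (101.0, 10.0, 3.0, True),
    (100.0, 10.0, 1.0, False),
]
print(liquidation_clusters(positions, bucket=5.0))
```

Rendered as a heat band beside the depth curve, the bucket totals would show where forced selling or buying is likely to concentrate if price reaches those levels.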

This future state positions the DepthMap as the primary tool for navigating systemic risk, allowing the Derivative Systems Architect to see the interconnected leverage dynamics and potential contagion pathways across multiple protocols, transforming a data display into a survival mechanism. The ultimate goal is a visualization that, by exposing the market’s vulnerabilities, forces a more robust and resilient financial architecture.
