Essence

High-frequency market participants operate within a digital environment where information asymmetry dictates the boundary between profit and insolvency. Order Book Data Mining Tools represent the analytic infrastructure required to parse high-fidelity signals from the chaotic noise of algorithmic execution. These systems extract granular event data to reveal the distribution of liquidity across price levels, providing a transparent window into the structural health of the market.

By capturing every modification, cancellation, and execution within the Limit Order Book (LOB), these tools transform raw WebSocket streams into a structured record of intent. This capability allows for the identification of hidden patterns such as “spoofing” or “layering,” where participants place orders without the intention of execution to manipulate price perception. In the adversarial context of crypto derivatives, understanding the depth of the book at specific strike prices is a prerequisite for managing delta-neutral strategies.

Order Book Data Mining Tools provide the necessary transparency to identify the latent intent of market participants through the rigorous analysis of limit order book fluctuations.

The systemic relevance of these tools extends to the evaluation of market resiliency. During periods of extreme volatility, the thinning of the order book, often referred to as a liquidity vacuum, can lead to cascading liquidations. Order Book Data Mining Tools quantify these risks by measuring market depth: the volume required to move the price by a specific percentage.
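A minimal sketch of this depth measure follows. It assumes the book is held as lists of `(price, size)` tuples sorted best price first, and that depth is measured as a percentage band around the mid-price. The function names and the sample book are hypothetical.

```python
from typing import List, Tuple

Level = Tuple[float, float]  # (price, size), best price first


def mid_price(bids: List[Level], asks: List[Level]) -> float:
    """Midpoint between the best bid and the best ask."""
    return (bids[0][0] + asks[0][0]) / 2.0


def depth_within(bids: List[Level], asks: List[Level], pct: float) -> Tuple[float, float]:
    """Resting size within `pct` of the mid on each side (pct=0.01 means 1%).

    The bid figure is the volume a market sell could fill without executing
    more than pct below the mid; the ask figure mirrors it for market buys.
    """
    mid = mid_price(bids, asks)
    floor, ceiling = mid * (1 - pct), mid * (1 + pct)
    bid_depth = sum(size for price, size in bids if price >= floor)
    ask_depth = sum(size for price, size in asks if price <= ceiling)
    return bid_depth, ask_depth


bids = [(99.9, 2.0), (99.5, 5.0), (98.0, 10.0)]
asks = [(100.1, 1.5), (100.6, 4.0), (102.0, 8.0)]
print(depth_within(bids, asks, 0.01))  # → (7.0, 5.5)
```

A thin figure on one side is the quantitative signature of the liquidity vacuum described above: little volume is needed to push the price through the band.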

This data informs the calibration of margin engines and the setting of risk parameters within decentralized protocols, helping the system remain solvent under stress.

Origin

The genesis of high-resolution data extraction lies in the transition from floor-based trading to electronic matching engines. In traditional finance, access to the full depth of the book was a privileged commodity, often restricted to institutional entities via expensive proprietary feeds. The emergence of Bitcoin and subsequent decentralized exchanges shifted this dynamic, as the underlying architecture of blockchain technology and open APIs necessitated a more public approach to market data.

Early crypto market participants relied on basic REST API polling, which returned only periodic snapshots of the market. This method proved inadequate for the rapid price discovery cycles characteristic of digital assets. The need for continuous, low-latency updates led to the adoption of WebSocket connections, which stream changes to the LOB in real time.

As the market grew more complex with the introduction of perpetual swaps and multi-leg options, the need for specialized Order Book Data Mining Tools capable of handling massive data throughput became apparent.

The transition from static snapshots to real-time streaming protocols enabled the democratization of high-frequency market data across the decentralized financial ecosystem.

This evolution was further accelerated by the rise of quantitative hedge funds entering the crypto space. These entities brought sophisticated methodologies from equities and forex markets, demanding tools that could provide normalized data across multiple fragmented venues. The resulting architecture focuses on data integrity and chronological synchronization, allowing for the reconstruction of the market state at any given microsecond.

Theory

The theoretical framework of order book mining is rooted in market microstructure, the study of the mechanisms that facilitate asset exchange.

At the center of this study is the Limit Order Book, a continuous-time double auction where buy and sell orders are matched according to price-time priority. Order Book Data Mining Tools apply statistical models to this data to calculate the probability of informed trading, often using the Volume-Synchronized Probability of Informed Trading (VPIN) metric.
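The VPIN calculation can be sketched as follows. Trades are grouped into buckets of equal traded volume, and VPIN is the average absolute buy/sell imbalance over the most recent buckets, divided by bucket size. One simplification should be stated plainly: the published procedure estimates buy and sell volume with bulk volume classification, whereas this sketch assumes every trade has already been signed as buyer- or seller-initiated (for example, by a tick rule). All names here are hypothetical.

```python
from typing import List, Tuple

Trade = Tuple[float, int]  # (volume, side): +1 buyer-initiated, -1 seller-initiated


def volume_buckets(trades: List[Trade], bucket_size: float) -> List[Tuple[float, float]]:
    """Split a signed trade stream into equal-volume buckets of (buy_vol, sell_vol).

    A trade that straddles a boundary is split across buckets; a trailing
    partial bucket is dropped.
    """
    buckets: List[Tuple[float, float]] = []
    buy = sell = filled = 0.0
    for volume, side in trades:
        remaining = volume
        while remaining > 0:
            room = bucket_size - filled
            take = min(remaining, room)
            if side > 0:
                buy += take
            else:
                sell += take
            remaining -= take
            if take == room:  # bucket is full: record it and start a new one
                buckets.append((buy, sell))
                buy = sell = filled = 0.0
            else:
                filled += take
    return buckets


def vpin(trades: List[Trade], bucket_size: float, window: int) -> float:
    """Average |buy - sell| over the last `window` buckets, scaled to [0, 1]."""
    recent = volume_buckets(trades, bucket_size)[-window:]
    if len(recent) < window:
        raise ValueError("not enough volume to fill the window")
    return sum(abs(b - s) for b, s in recent) / (window * bucket_size)


trades = [(6, 1), (8, -1), (6, 1), (10, -1)]
print(volume_buckets(trades, 10))  # → [(6.0, 4.0), (6.0, 4.0), (0.0, 10.0)]
print(vpin(trades, 10, 3))         # 14 / 30 ≈ 0.467
```

Bucketing by volume rather than by clock time means VPIN updates faster when trading is heavy, which is when informed flow is most likely to matter.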

Microstructure Metrics

To understand the dynamics of price discovery, analysts focus on several primary indicators derived from the LOB. These metrics provide a quantitative basis for assessing the balance of power between buyers and sellers.

| Metric | Definition | Systemic Implication |
|---|---|---|
| Bid-Ask Spread | The difference between the lowest ask (sell) price and the highest bid (buy) price. | Indicates immediate transaction costs and liquidity tightness. |
| Order Imbalance | The balance of bid-side versus ask-side volume within a given depth, expressed as a ratio or as a normalized difference such as (bid - ask) / (bid + ask). | Signals short-term price pressure toward the thinner side of the book. |
| Book Depth | The cumulative volume resting at successive price levels. | Determines how large a trade the market can absorb before slippage becomes significant. |
| Tick Entropy | The randomness of price changes measured in minimum price increments (ticks). | Gauges the efficiency and unpredictability of short-term price formation. |
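The four metrics in the table can be computed from a book snapshot and a short price history, as sketched below. Two choices are assumptions rather than fixed definitions. First, imbalance uses the normalized-difference form over the top five levels. Second, tick entropy is read as the Shannon entropy of successive price changes counted in whole ticks, which is one reasonable interpretation of "randomness at the minimum increment." Function names and sample data are hypothetical.

```python
import math
from collections import Counter
from typing import List, Tuple

Level = Tuple[float, float]  # (price, size), best price first


def spread(bids: List[Level], asks: List[Level]) -> float:
    """Lowest ask minus highest bid."""
    return asks[0][0] - bids[0][0]


def imbalance(bids: List[Level], asks: List[Level], levels: int = 5) -> float:
    """(bid volume - ask volume) / (bid volume + ask volume) over the top `levels`.

    Ranges from -1 (all ask volume) to +1 (all bid volume).
    """
    bid_vol = sum(size for _, size in bids[:levels])
    ask_vol = sum(size for _, size in asks[:levels])
    return (bid_vol - ask_vol) / (bid_vol + ask_vol)


def cumulative_depth(side: List[Level]) -> List[float]:
    """Running total of resting size from the best price outward."""
    totals, running = [], 0.0
    for _, size in side:
        running += size
        totals.append(running)
    return totals


def tick_entropy(prices: List[float], tick: float) -> float:
    """Shannon entropy, in bits, of successive price changes counted in whole ticks."""
    moves = Counter(round((b - a) / tick) for a, b in zip(prices, prices[1:]))
    n = sum(moves.values())
    return -sum(c / n * math.log2(c / n) for c in moves.values())


bids = [(100.0, 6.0), (99.5, 4.0)]
asks = [(100.5, 3.0), (101.0, 2.0)]
print(spread(bids, asks))                        # → 0.5
print(round(imbalance(bids, asks), 3))           # → 0.333
print(cumulative_depth(bids))                    # → [6.0, 10.0]
print(tick_entropy([100.0, 100.5, 100.0], 0.5))  # → 1.0
```

A perfectly repetitive price path gives zero entropy. Equally likely up and down ticks give one bit per move.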

Adversarial Game Theory

In a decentralized environment, the order book is a battlefield of strategic interaction. Order Book Data Mining Tools analyze the behavior of automated agents to detect predatory algorithms. For instance, detecting iceberg orders (large orders that display only a small portion of their size and replenish the visible quantity as it fills) requires tracking the replenishment rate of liquidity at a specific price level.
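The replenishment tracking described above can be expressed as a heuristic for a single price level. The tracker flags an iceberg when the level keeps reloading after being hit and more volume trades there than was ever displayed at once. This is a hypothetical sketch: on aggregated L2 data, a reload cannot be distinguished from a new order joining the level, so the flag indicates a likely iceberg rather than a confirmed one.

```python
from dataclasses import dataclass


@dataclass
class LevelWatch:
    """Heuristic iceberg tracker for one price level (hypothetical helper)."""
    max_displayed: float = 0.0   # largest visible size seen at this level
    last_displayed: float = 0.0  # visible size at the previous book update
    executed: float = 0.0        # total traded volume at this level
    pending_fill: float = 0.0    # volume traded since the previous book update
    refills: int = 0             # times the level reloaded after being hit

    def on_trade(self, size: float) -> None:
        self.executed += size
        self.pending_fill += size

    def on_book_update(self, displayed: float) -> None:
        # The level was hit, yet visible size is at least as large as before: a reload.
        if self.pending_fill > 0 and displayed >= self.last_displayed:
            self.refills += 1
        self.max_displayed = max(self.max_displayed, displayed)
        self.last_displayed = displayed
        self.pending_fill = 0.0

    def looks_like_iceberg(self, min_refills: int = 3) -> bool:
        # Repeated reloads, and more traded than was ever shown at once.
        return self.refills >= min_refills and self.executed > self.max_displayed


watch = LevelWatch()
watch.on_book_update(5.0)
for _ in range(3):
    watch.on_trade(5.0)        # the visible 5 lots are taken...
    watch.on_book_update(5.0)  # ...and 5 lots reappear at the same price
print(watch.looks_like_iceberg())  # → True
```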

This analysis can reveal large institutional players attempting to minimize their market impact while accumulating or distributing significant positions. The study of order flow toxicity is a central component of this theoretical exploration. Toxic flow occurs when market makers provide liquidity to participants who possess superior information, leading to adverse selection.
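Adverse selection can be measured through markouts. Each of the market maker's fills is marked to the mid-price a fixed horizon later. If the average comes out negative, prices tend to move against the maker after it trades, which indicates toxic flow. Markouts are a common practitioner technique that the text does not name. The sketch assumes sorted mid-price timestamps and fills tagged with the maker's side, and all names are hypothetical.

```python
from bisect import bisect_right
from typing import List, Tuple

Fill = Tuple[float, float, float, int]  # (time, price, size, side): +1 maker bought, -1 maker sold


def mid_at(times: List[float], mids: List[float], t: float) -> float:
    """Latest mid-price observed at or before time t (times sorted ascending)."""
    i = bisect_right(times, t) - 1
    if i < 0:
        raise ValueError("no mid-price at or before t")
    return mids[i]


def average_markout(fills: List[Fill], times: List[float], mids: List[float], horizon: float) -> float:
    """Size-weighted P&L per unit of the maker's fills, marked to the mid `horizon` later.

    A persistently negative value means the price tends to move against the
    maker after it trades, which is the signature of adverse selection.
    """
    pnl = volume = 0.0
    for t, price, size, side in fills:
        pnl += side * (mid_at(times, mids, t + horizon) - price) * size
        volume += size
    return pnl / volume


times = [0.0, 1.0, 2.0, 3.0]
mids = [100.0, 100.0, 99.0, 98.0]
fills = [(0.0, 99.5, 1.0, 1), (1.0, 99.5, 1.0, 1)]  # maker bought on the bid, then price fell
print(average_markout(fills, times, mids, horizon=2.0))  # → -1.0
```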

By mining the order book, liquidity providers can adjust their spreads or withdraw during periods of high toxicity to protect their capital. This feedback loop is a defining characteristic of modern digital asset markets, where the speed of information processing is the primary competitive advantage.
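A spread-adjustment rule of this kind can be very simple. In the sketch below, quotes widen linearly with a toxicity score and are withdrawn entirely above a cutoff. The linear form, the `[0, 1]` score range (a VPIN reading fits), and the default parameters are illustrative assumptions rather than calibrated values. The function name is hypothetical.

```python
from typing import Optional, Tuple


def quotes_for_toxicity(mid: float, base_half_spread: float, toxicity: float,
                        widen: float = 2.0, withdraw_above: float = 0.8
                        ) -> Optional[Tuple[float, float]]:
    """Return (bid, ask) around `mid`, widened as toxicity rises, or None to pull quotes.

    `toxicity` is assumed to be a score in [0, 1]; `widen` and `withdraw_above`
    are illustrative tuning knobs, not calibrated values.
    """
    if not 0.0 <= toxicity <= 1.0:
        raise ValueError("toxicity must lie in [0, 1]")
    if toxicity > withdraw_above:
        return None  # flow looks too informed: stop providing liquidity
    half = base_half_spread * (1.0 + widen * toxicity)
    return mid - half, mid + half


print(quotes_for_toxicity(100.0, 0.5, 0.25))  # → (99.25, 100.75)
print(quotes_for_toxicity(100.0, 0.5, 0.9))   # → None
```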

Approach

The practical implementation of Order Book Data Mining Tools involves a multi-layered technical stack designed for high throughput and low latency. The process begins with data ingestion, where the system establishes concurrent connections to various exchange gateways.
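The concurrent-ingestion pattern can be illustrated with `asyncio`. Each venue connection runs as its own task and pushes tagged messages into one shared queue, which a single consumer drains. To keep the sketch self-contained, the venue sessions are simulated. A real system would replace `simulated_feed` with a WebSocket client, and the venue names and message format here are hypothetical.

```python
import asyncio
from typing import Callable, Dict, List, Tuple

Event = Tuple[str, int, str]  # (venue, sequence number, raw message)


async def simulated_feed(venue: str, messages: List[str], queue: asyncio.Queue) -> None:
    """Stand-in for one exchange WebSocket session."""
    for seq, msg in enumerate(messages):
        await queue.put((venue, seq, msg))
        await asyncio.sleep(0)  # yield so the feeds interleave
    await queue.put(None)  # sentinel: this feed has closed


async def consume(queue: asyncio.Queue, n_feeds: int, handle: Callable[[Event], None]) -> None:
    """Drain the shared queue until every feed has sent its sentinel."""
    closed = 0
    while closed < n_feeds:
        event = await queue.get()
        if event is None:
            closed += 1
        else:
            handle(event)


async def ingest(feeds: Dict[str, List[str]]) -> List[Event]:
    queue: asyncio.Queue = asyncio.Queue()
    received: List[Event] = []
    producers = [simulated_feed(v, msgs, queue) for v, msgs in feeds.items()]
    await asyncio.gather(consume(queue, len(feeds), received.append), *producers)
    return received


events = asyncio.run(ingest({"venue_a": ["a0", "a1"], "venue_b": ["b0"]}))
print(sorted(events))  # → [('venue_a', 0, 'a0'), ('venue_a', 1, 'a1'), ('venue_b', 0, 'b0')]
```

Tagging every message with its venue and per-venue sequence number at ingestion is what makes the later normalization and gap-detection steps possible.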

- Data Normalization involves converting disparate API responses into a unified schema, ensuring that a “limit order” on one exchange is treated identically to a “limit order” on another for cross-venue analysis.

- Timestamp Synchronization is a requisite step to account for network latency and clock drift between geographically distributed servers, allowing for a coherent global view of the market.

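- State Reconstruction requires the system to maintain a local copy of the order book, applying incremental updates (deltas) in real time and checking their sequence numbers, so that a dropped message is detected and the book is rebuilt from a fresh snapshot instead of silently drifting away from the exchange matching engine.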

- Feature Engineering transforms raw order events into mathematical inputs for machine learning models, such as calculating the decay rate of liquidity after a large execution.
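The state-reconstruction step can be sketched as a local price-level book seeded from a snapshot and then advanced by deltas. Any gap in sequence numbers raises an error so that the caller re-synchronizes. The sketch assumes a hypothetical feed that has already been normalized. In that feed, each delta carries the absolute new size at a price, a size of 0 deletes the level, and sequence numbers increase by exactly one. Real venues differ in these conventions.

```python
from typing import Dict, List, Tuple


class SequenceGap(Exception):
    """An update was missed; the caller should fetch a fresh snapshot and rebuild."""


class LocalBook:
    """Local price-level book rebuilt from one snapshot plus incremental deltas."""

    def __init__(self, bids: List[Tuple[float, float]], asks: List[Tuple[float, float]], seq: int):
        self.bids: Dict[float, float] = dict(bids)
        self.asks: Dict[float, float] = dict(asks)
        self.seq = seq

    def apply(self, seq: int, side: str, price: float, size: float) -> None:
        if seq <= self.seq:
            return  # already reflected in the snapshot or a previous delta
        if seq != self.seq + 1:
            raise SequenceGap(f"expected {self.seq + 1}, got {seq}")
        levels = self.bids if side == "bid" else self.asks
        if size == 0:
            levels.pop(price, None)  # level fully cancelled or filled
        else:
            levels[price] = size  # new absolute size at this price
        self.seq = seq

    def best_bid(self) -> float:
        return max(self.bids)

    def best_ask(self) -> float:
        return min(self.asks)


book = LocalBook(bids=[(100.0, 2.0)], asks=[(101.0, 1.0)], seq=10)
book.apply(11, "bid", 100.5, 3.0)
book.apply(12, "ask", 101.0, 0.0)
book.apply(13, "ask", 101.5, 2.0)
print(book.best_bid(), book.best_ask())  # → 100.5 101.5
```

Deltas that arrive before the snapshot's sequence number are ignored rather than rejected, because streams are usually opened before the snapshot is fetched and will replay updates the snapshot already contains.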

Data Granularity Levels

The depth of analysis is determined by the granularity of the data collected. Different strategies require different levels of detail, as outlined in the following structure.

| Level | Data Type | Primary Use Case |
|---|---|---|
| L1 Data | Best bid and offer (BBO) only. | Basic price tracking and simple retail indicators. |
| L2 Data | Aggregated volume at each price level, often limited to the top 20-50 levels. | Standard technical analysis and mid-frequency trading. |
| L3 Data | Every individual order, identified by order ID, with each addition, modification, and cancellation. | High-frequency trading and predatory algorithm detection. |
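The three levels are nested views of the same book. L2 is obtained by summing L3 orders at each price, and L1 is the top entry on each side of L2. The sketch below shows this with a hypothetical order map. It also makes clear what is lost at each step: L2 discards order count and queue position, and L1 discards everything behind the best price.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

# Hypothetical L3 view: order_id -> (side, price, size)
Orders = Dict[str, Tuple[str, float, float]]


def to_l2(orders: Orders, side: str, depth: int) -> List[Tuple[float, float]]:
    """Aggregate individual orders into (price, total size), best price first."""
    levels: Dict[float, float] = defaultdict(float)
    for order_side, price, size in orders.values():
        if order_side == side:
            levels[price] += size
    best_first = sorted(levels.items(), reverse=(side == "bid"))
    return best_first[:depth]


def to_l1(orders: Orders) -> Tuple[Tuple[float, float], Tuple[float, float]]:
    """Best bid and best offer as (price, size), read off the L2 view."""
    return to_l2(orders, "bid", 1)[0], to_l2(orders, "ask", 1)[0]


orders = {
    "o1": ("bid", 100.0, 1.0),
    "o2": ("bid", 100.0, 2.5),
    "o3": ("bid", 99.5, 4.0),
    "o4": ("ask", 100.5, 3.0),
    "o5": ("ask", 101.0, 1.0),
}
print(to_l2(orders, "bid", 2))  # → [(100.0, 3.5), (99.5, 4.0)]
print(to_l1(orders))            # → ((100.0, 3.5), (100.5, 3.0))
```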

The analysis of order flow represents the most advanced application of these tools. By tracking the sequence of trades and their impact on the book, analysts attempt to distinguish between “organic” retail flow and “informed” institutional flow. This distinction is vital for options traders who must hedge their Greeks in a market where the underlying asset’s volatility is often driven by concentrated order book events rather than external news.
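Order flow analysis usually starts by signing each trade as buyer- or seller-initiated. The text does not name a method, so the sketch below swaps in a simplified form of the Lee-Ready algorithm. Its quote rule signs trades above the mid as buys and trades below it as sells. Trades exactly at the mid fall back to the tick rule, which uses the direction of the most recent price change. The input layout and function name are assumptions.

```python
from typing import List, Optional


def classify_trades(prices: List[float], mids: List[float]) -> List[int]:
    """Sign each trade: +1 buyer-initiated, -1 seller-initiated, 0 if undetermined.

    mids[i] is the prevailing mid-quote when trade i printed. The quote rule is
    applied first; trades exactly at the mid fall back to the tick rule.
    """
    signs: List[int] = []
    tick_dir = 0  # direction of the most recent non-zero price change
    prev: Optional[float] = None
    for price, mid in zip(prices, mids):
        if prev is not None and price != prev:
            tick_dir = 1 if price > prev else -1
        prev = price
        if price > mid:
            signs.append(1)
        elif price < mid:
            signs.append(-1)
        else:
            signs.append(tick_dir)
    return signs


prices = [100.0, 100.2, 100.1, 100.1]
mids = [100.05, 100.05, 100.1, 100.1]
print(classify_trades(prices, mids))  # → [-1, 1, -1, -1]
```

Summing sign times size over a window gives net signed order flow, the raw input for the organic-versus-informed distinction described above.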

Evolution

The utility of Order Book Data Mining Tools has shifted from simple observation to active defense.

In the early stages of the crypto market, these tools were used primarily for backtesting simple momentum strategies. As the environment matured, the rise of Maximal Extractable Value (MEV) on decentralized exchanges introduced a new layer of complexity. Traders began using mining tools to identify pending transactions in the mempool, effectively treating the mempool as a pre-execution order book.

This shift has led to an arms race between liquidity providers and arbitrageurs. Market makers now use real-time book mining to detect when they are being “front-run” and adjust their quotes accordingly. The integration of artificial intelligence has further transformed the field, with neural networks trained to anticipate how order book imbalances will translate into price action over horizons of seconds.

This predictive capability has turned the order book into a probabilistic map of future states rather than a static record of current offers.

The physical constraints of network topology have also become a factor in the evolution of these tools. Proximity to the exchange’s matching engine, known as co-location, is now a standard requirement for high-frequency mining. This physical reality creates a tension with the decentralized ethos of crypto, as the most effective Order Book Data Mining Tools often require centralized infrastructure to function at peak efficiency.

This paradox defines the current state of the market, where decentralized assets are traded using highly centralized, high-performance systems.

Horizon

The future of order book analysis lies in the intersection of privacy and transparency. As decentralized finance protocols evolve, the introduction of Privacy-Preserving Order Books using Zero-Knowledge Proofs (ZKPs) will challenge the current paradigm of data mining. In such a system, the full depth of the book might be hidden, with only the proofs of liquidity being public.

This would fundamentally alter the way Order Book Data Mining Tools operate, shifting the focus from raw data extraction to the verification of cryptographic proofs.

Emergent Architectural Shifts

- Cross-Chain Liquidity Aggregation will require tools that can mine data across multiple Layer 1 and Layer 2 environments simultaneously, accounting for the unique finality times and consensus mechanisms of each chain.

- AI-Driven Liquidity Provision will see market makers using autonomous agents that mine the book to provide “just-in-time” liquidity, further reducing spreads but increasing the risk of flash crashes if the agents react simultaneously to a perceived threat.

- Regulatory Integration may lead to the mandatory use of these tools by compliance departments to detect market manipulation in real-time, effectively turning mining tools into a form of automated oversight.

The convergence of these trends suggests a future where the order book is no longer a simple list of prices, but a complex, multi-dimensional data structure. The ability to mine this data will remain the primary differentiator between sophisticated market participants and those who are merely providing exit liquidity. As the digital asset operating system continues to be redesigned, the tools we use to interpret its internal state will become the most vital component of our financial strategy, ensuring resilience in an increasingly adversarial and automated global market.

Glossary

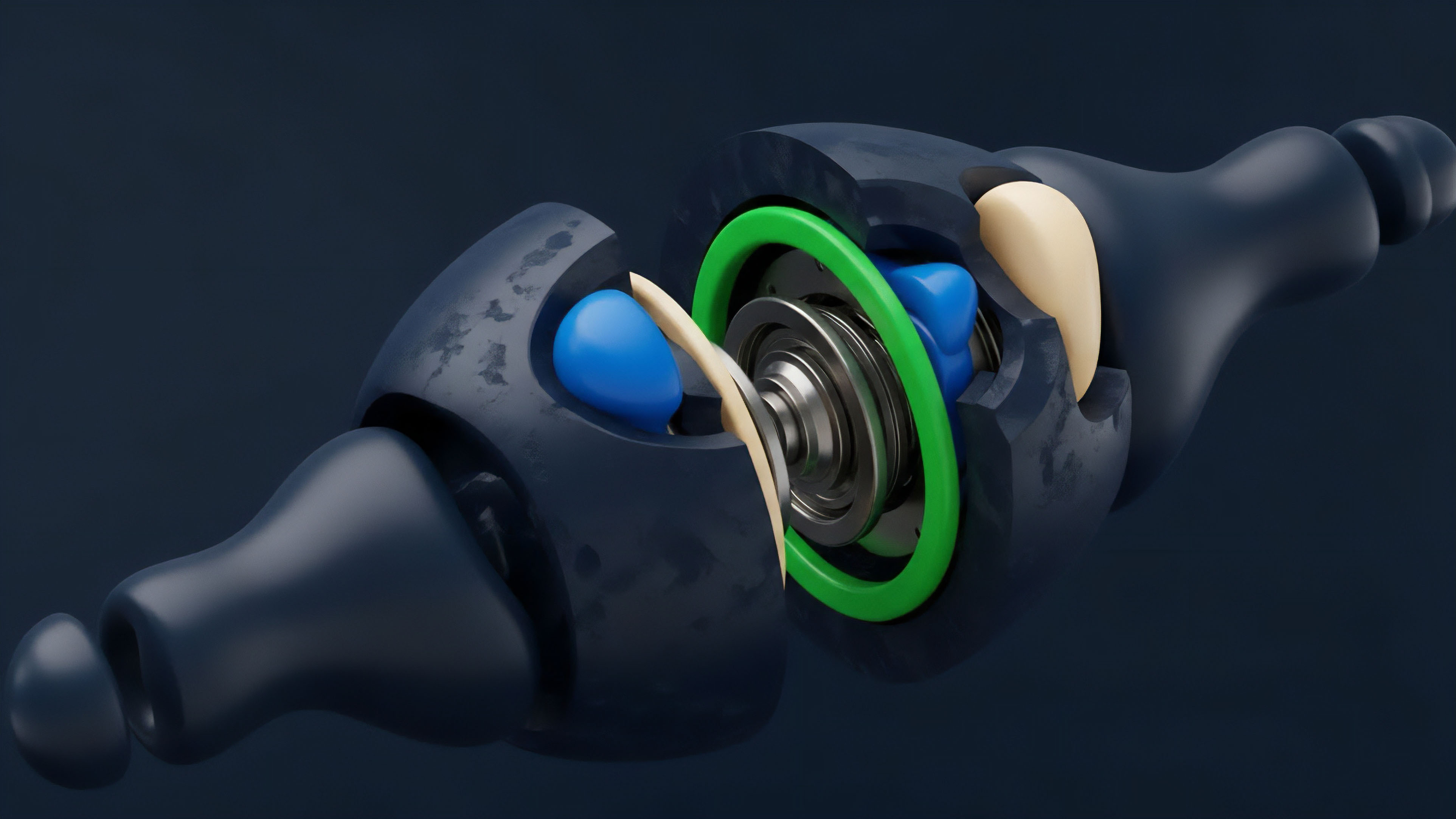

Liquidity Depth Metrics

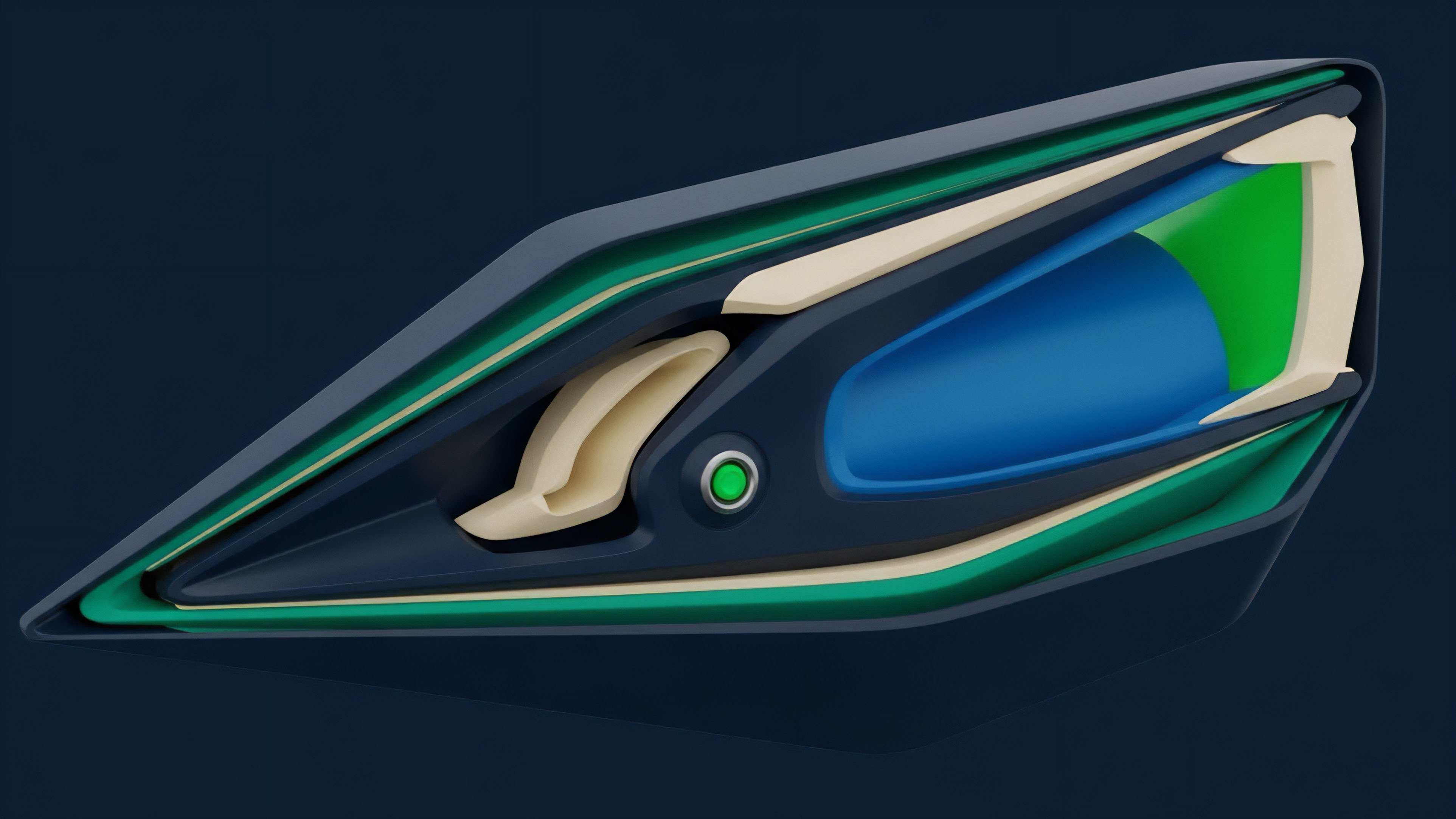

Risk Parameter Optimization

Order Book Imbalance

Delta Neutral Strategy

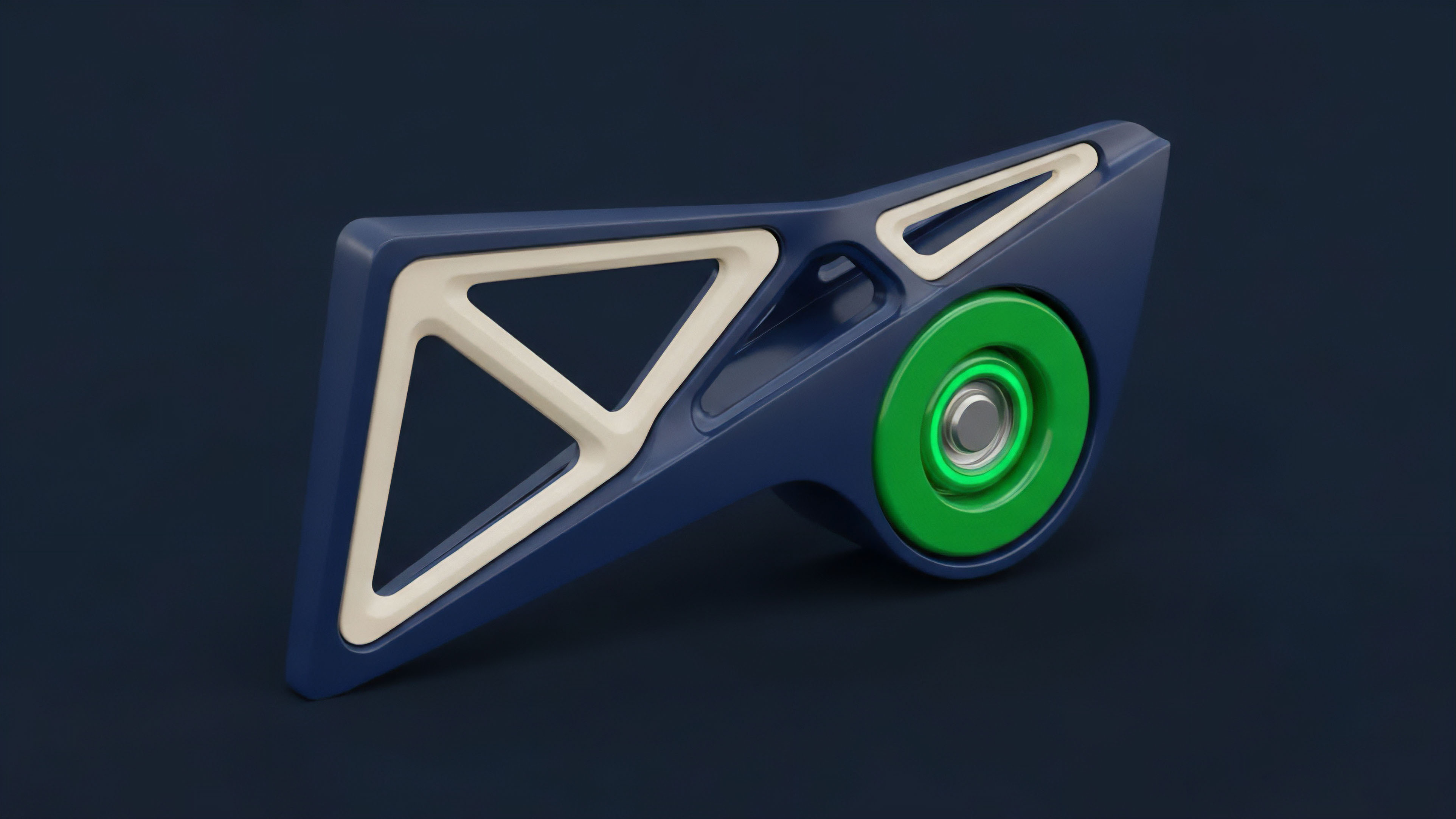

Hidden Liquidity Detection

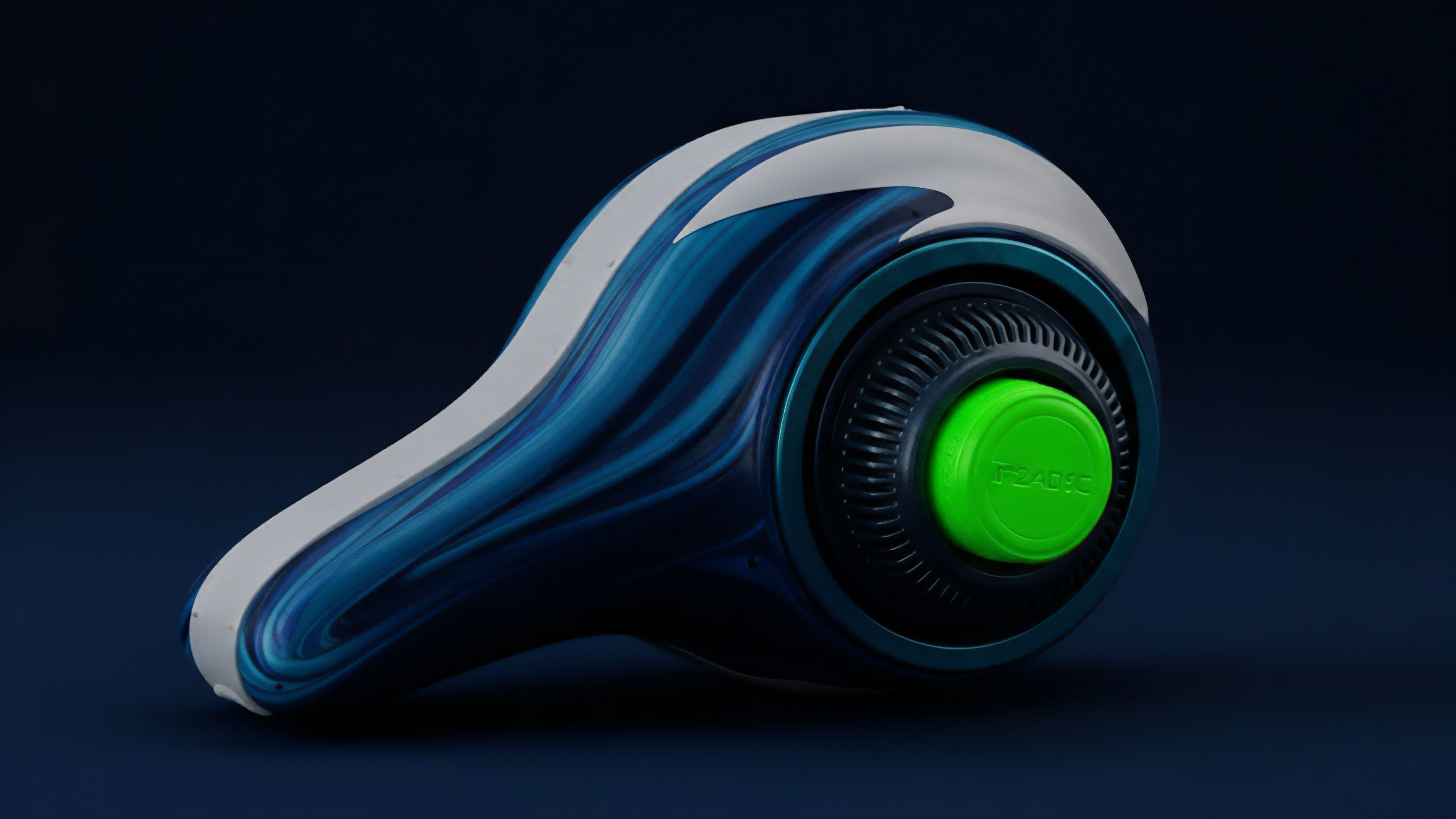

Market Participants

Order Flow Toxicity

Zero Knowledge Order Books

Market Maker Hedging