Essence

Order Book Data Analytics is the granular study of liquidity distribution and price discovery in decentralized exchange environments. It converts raw, time-stamped limit order updates into actionable intelligence about market depth, trader intent, and potential price impact.

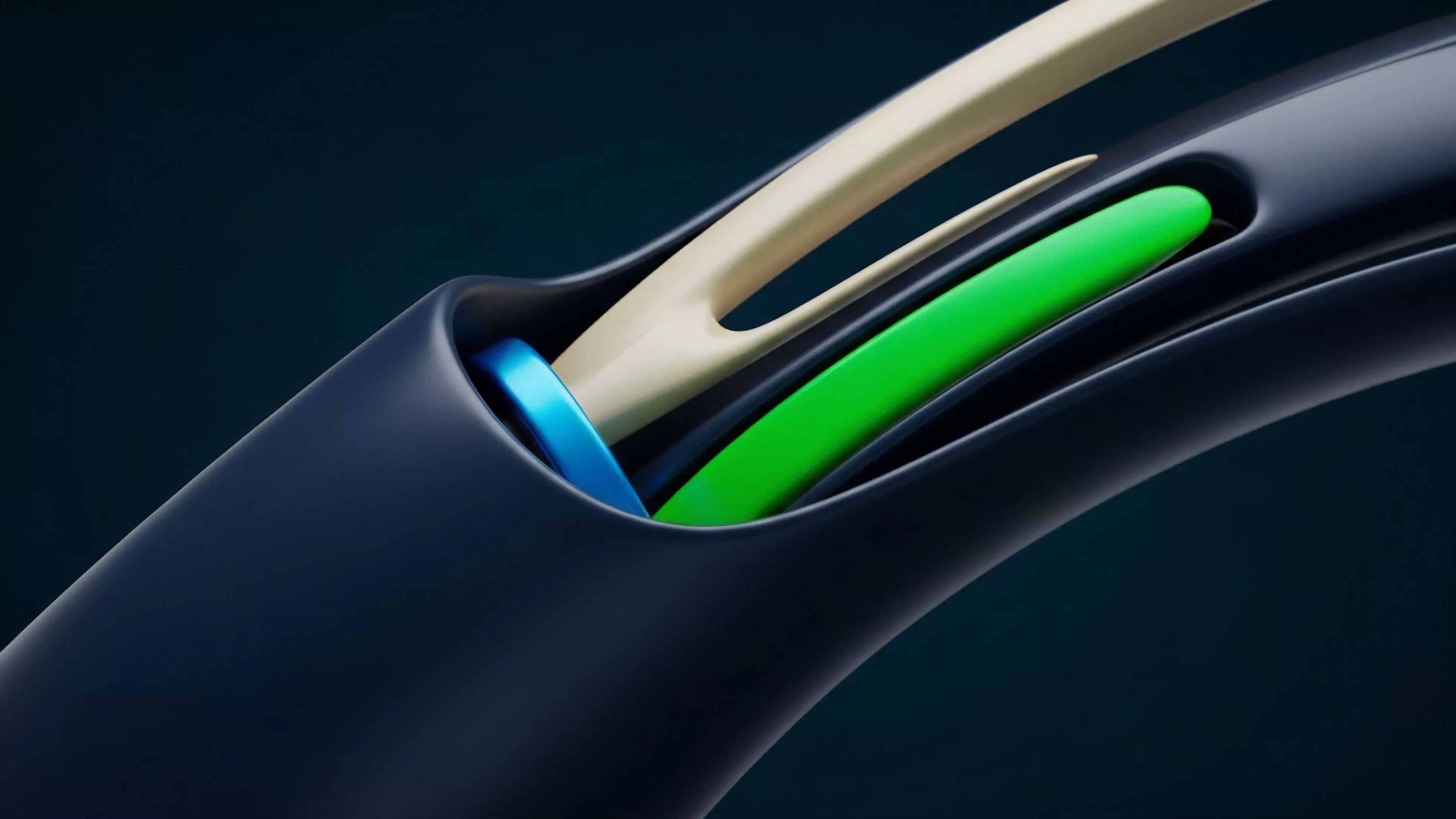

Order Book Data Analytics provides a high-fidelity reconstruction of supply and demand dynamics through the continuous processing of limit order updates.

By monitoring the bid-ask spread and the density of limit orders at varying price levels, participants quantify the friction associated with executing large-scale trades. This field moves beyond aggregate volume metrics to identify the specific behavioral patterns of market makers and liquidity providers, revealing the structural integrity of a trading venue.
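The friction of a large trade can be illustrated by walking the visible ask levels and comparing the average fill price with the best ask. The sketch below is a minimal illustration, not a venue-specific implementation: the `(price, size)` level format and the function names `average_fill_price` and `slippage_bps` are assumptions made for this example, and it ignores fees, hidden liquidity, and book changes during execution.

```python
from typing import List, Tuple

Level = Tuple[float, float]  # (price, size); hypothetical format for this sketch


def average_fill_price(asks: List[Level], quantity: float) -> float:
    """Volume-weighted price paid by a market buy that walks the ask side."""
    if quantity <= 0:
        raise ValueError("quantity must be positive")
    remaining = quantity
    cost = 0.0
    # Consume liquidity from the cheapest ask upward.
    for price, size in sorted(asks):
        take = min(remaining, size)
        cost += take * price
        remaining -= take
        if remaining <= 0:
            break
    if remaining > 0:
        raise ValueError("not enough visible liquidity to fill the order")
    return cost / quantity


def slippage_bps(asks: List[Level], quantity: float) -> float:
    """Average fill price relative to the best ask, in basis points."""
    best_ask = min(price for price, _ in asks)
    return (average_fill_price(asks, quantity) / best_ask - 1.0) * 10_000


if __name__ == "__main__":
    book_asks = [(100.0, 1.0), (101.0, 2.0), (102.0, 5.0)]
    print(average_fill_price(book_asks, 3.0))
    print(slippage_bps(book_asks, 3.0))
```

An order that fits inside the best level shows zero slippage under this measure; the figure grows as the order consumes deeper levels, which is the friction the paragraph above describes.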

Origin

This analytical discipline grew out of traditional equity market microstructure studies, adapted for the unique constraints of blockchain-based settlement. Early participants needed methods to quantify the slippage inherent in Automated Market Makers and to compare it with the transparency of Central Limit Order Books.

- Price Discovery: The mechanism through which market participants reach a consensus value for an asset based on available order flow.

- Liquidity Fragmentation: The distribution of trade volume across multiple protocols, necessitating cross-venue data aggregation.

- Latency Sensitivity: The impact of block confirmation times on the validity and execution of orders within a decentralized ledger.

These origins highlight the transition from simple price monitoring to the sophisticated tracking of order flow toxicity and liquidity provision strategies. The need to understand how decentralization affects execution quality drove the development of specialized tools to parse on-chain order data.

Theory

The theoretical framework treats the Order Book as a continuously updated record of collective market sentiment and risk appetite. Modeling these structures involves calculating Market Depth and Order Book Imbalance, which are then used to predict short-term price movements.

Mathematical modeling of order book depth allows participants to anticipate price movement based on the asymmetry of limit orders.

| Metric | Financial Significance |
| --- | --- |
| Bid-Ask Spread | Cost of immediate execution |
| Order Book Imbalance | Directional pressure indicator |
| Cumulative Depth | Resilience against large market orders |
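The three metrics in the table can be computed directly from a list of price levels. The sketch below shows one way to do so; the `(price, size)` level format, the function names, the `depth` parameter, and the fractional `band` around the mid price are assumptions introduced for illustration. The imbalance formula used here, (bid volume − ask volume) / (bid volume + ask volume), is one common normalization and not the only definition in use.

```python
from typing import List, Tuple

Level = Tuple[float, float]  # (price, size); hypothetical format for this sketch


def bid_ask_spread(bids: List[Level], asks: List[Level]) -> float:
    """Best ask minus best bid: the cost of crossing the book immediately."""
    best_bid = max(price for price, _ in bids)
    best_ask = min(price for price, _ in asks)
    return best_ask - best_bid


def order_book_imbalance(bids: List[Level], asks: List[Level], depth: int = 5) -> float:
    """Signed imbalance in [-1, 1] over the top `depth` levels on each side.

    Positive values mean more resting bid volume than ask volume.
    """
    top_bids = sorted(bids, reverse=True)[:depth]  # highest bids first
    top_asks = sorted(asks)[:depth]                # lowest asks first
    bid_vol = sum(size for _, size in top_bids)
    ask_vol = sum(size for _, size in top_asks)
    total = bid_vol + ask_vol
    return 0.0 if total == 0 else (bid_vol - ask_vol) / total


def cumulative_depth(levels: List[Level], mid: float, band: float) -> float:
    """Total size resting within `band` (a fraction, e.g. 0.01 for 1%) of `mid`."""
    return sum(size for price, size in levels if abs(price - mid) <= band * mid)


if __name__ == "__main__":
    bids = [(99.0, 3.0), (98.0, 2.0)]
    asks = [(101.0, 1.0), (102.0, 1.0)]
    mid = (99.0 + 101.0) / 2
    print(bid_ask_spread(bids, asks))
    print(order_book_imbalance(bids, asks))
    print(cumulative_depth(bids, mid, 0.015))
```

Restricting the imbalance to the top few levels is a design choice: orders far from the mid price are cheap to place and cancel, so including them tends to add noise to the directional signal.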

From the standpoint of Protocol Physics, the settlement engine’s speed directly determines how reliable the observed order book is. If the underlying chain is congested, the data reaching participants is stale; quantitative models that assume a current book lose accuracy, and systemic risk rises during periods of high volatility.
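A simple guard against stale data is to compare the timestamp of the last book update with the current time before trusting any derived metric. The sketch below assumes timestamps in seconds; the function name `is_stale` and the 2-second default threshold are hypothetical and would need tuning to the block time of the chain in question.

```python
import time
from typing import Optional


def is_stale(last_update_ts: float, max_age_s: float = 2.0,
             now: Optional[float] = None) -> bool:
    """Return True when the last book update is older than `max_age_s` seconds.

    `now` can be injected for testing; it defaults to the wall clock.
    """
    current = time.time() if now is None else now
    return (current - last_update_ts) > max_age_s


if __name__ == "__main__":
    last_seen = time.time() - 5.0  # pretend the feed went quiet 5 seconds ago
    if is_stale(last_seen):
        print("book is stale; pause quoting and resynchronize")
```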

Approach

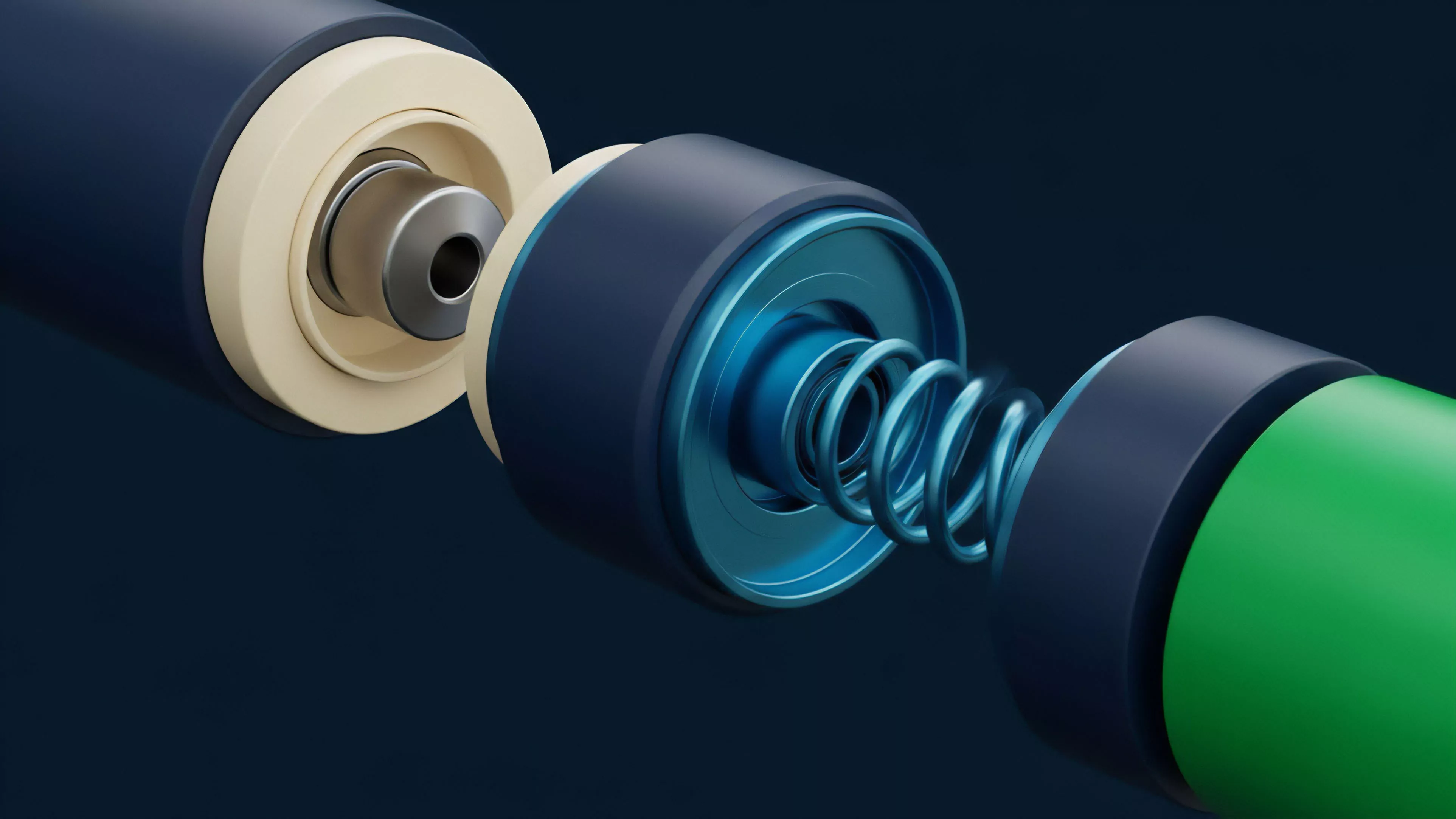

Current methodologies emphasize real-time ingestion of WebSocket feeds to construct a local, synthetic representation of the order book. This requires infrastructure that can sustain high message throughput without dropping or reordering updates, since either failure corrupts the reconstructed book.
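The local book is typically built from an initial snapshot followed by incremental updates. The sketch below shows that pattern under stated assumptions: each update carries a side, a price, a new absolute size (zero meaning the level is removed), and a strictly increasing sequence number. The class `LocalOrderBook` and this message shape are hypothetical; real feeds differ in field names and sequencing rules, so the venue's own documentation takes precedence.

```python
from typing import Dict, Iterable, Optional, Tuple

Level = Tuple[float, float]  # (price, size); hypothetical format for this sketch


class LocalOrderBook:
    """In-memory book rebuilt from a snapshot plus sequenced incremental updates."""

    def __init__(self) -> None:
        self.bids: Dict[float, float] = {}
        self.asks: Dict[float, float] = {}
        self.last_seq: Optional[int] = None

    def load_snapshot(self, bids: Iterable[Level], asks: Iterable[Level], seq: int) -> None:
        """Replace the whole book with a snapshot taken at sequence number `seq`."""
        self.bids = {price: size for price, size in bids if size > 0}
        self.asks = {price: size for price, size in asks if size > 0}
        self.last_seq = seq

    def apply_update(self, side: str, price: float, size: float, seq: int) -> bool:
        """Apply one update; return False if it was already applied or is older."""
        if self.last_seq is None:
            raise RuntimeError("load a snapshot before applying updates")
        if side not in ("bid", "ask"):
            raise ValueError("side must be 'bid' or 'ask'")
        if seq <= self.last_seq:
            return False  # duplicate or out-of-date message, safe to ignore
        if seq != self.last_seq + 1:
            # A gap means at least one message was lost; the book cannot be trusted.
            raise RuntimeError("sequence gap detected; reload a snapshot")
        levels = self.bids if side == "bid" else self.asks
        if size == 0:
            levels.pop(price, None)  # size zero removes the level
        else:
            levels[price] = size     # otherwise overwrite with the new size
        self.last_seq = seq
        return True

    def best_bid(self) -> Optional[Level]:
        return max(self.bids.items()) if self.bids else None

    def best_ask(self) -> Optional[Level]:
        return min(self.asks.items()) if self.asks else None


if __name__ == "__main__":
    book = LocalOrderBook()
    book.load_snapshot([(99.0, 1.0)], [(101.0, 2.0)], seq=10)
    book.apply_update("bid", 100.0, 0.5, seq=11)
    print(book.best_bid(), book.best_ask())
```

Raising on a sequence gap instead of silently continuing is deliberate: a book that has missed an update looks valid but misreports depth, which is the integrity failure the paragraph above warns about.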

- Quantitative Modeling: Utilizing stochastic calculus to estimate the probability of order execution at specific price points.

- Adversarial Analysis: Monitoring for predatory trading patterns such as order layering or spoofing intended to manipulate perceived liquidity.

- Execution Algorithms: Deploying smart contracts that automatically adjust limit orders based on real-time shifts in the order book.
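As a concrete instance of the quantitative modeling item, the probability that the price touches a given limit level within a time horizon has a closed form if the mid price is assumed to follow a driftless Brownian motion. By the reflection principle, the probability of touching a level at distance d within time T is 2(1 − Φ(d / (σ√T))), which equals erfc(d / (σ√(2T))). The sketch below implements that formula; the model choice and the function name `touch_probability` are assumptions for illustration. A touch is only a proxy for a fill, since queue position and cancellations are ignored.

```python
import math


def touch_probability(distance: float, sigma: float, horizon: float) -> float:
    """Probability that a driftless Brownian price touches a level within `horizon`.

    distance: absolute gap between the current price and the limit price
    sigma:    volatility in price units per square root of one time unit
    horizon:  time window, in the same time unit as sigma
    """
    if distance <= 0:
        return 1.0  # the level is already at or through the current price
    if sigma <= 0 or horizon <= 0:
        return 0.0  # no movement or no time means the level cannot be reached
    # Reflection principle: P = 2 * (1 - Phi(d / (sigma * sqrt(T))))
    #                         = erfc(d / (sigma * sqrt(2 * T)))
    return math.erfc(distance / (sigma * math.sqrt(2.0 * horizon)))


if __name__ == "__main__":
    # Limit order 0.50 away from the mid, volatility 1.0 per sqrt(minute).
    for minutes in (1, 5, 15):
        print(minutes, round(touch_probability(0.50, 1.0, minutes), 4))
```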

Market participants now use high-frequency data to optimize their entry and exit points, treating the order book as a strategic landscape rather than a passive list of prices. This proactive engagement shifts the focus from mere observation to active defense against market manipulation and to capital efficiency.

Evolution

The transition from centralized exchange dominance to decentralized, non-custodial trading architectures has fundamentally altered the requirements for Order Book Data Analytics. Early iterations relied on centralized APIs, whereas current systems must synthesize on-chain events with off-chain order matching data to achieve a complete picture.

Decentralized trading architectures require a synthesis of on-chain event data and off-chain matching records to achieve true visibility.

This evolution reflects a broader trend toward transparency, where the order flow is publicly verifiable, allowing for more rigorous audits of market maker performance. As protocols mature, the integration of Zero-Knowledge Proofs for order privacy presents a new challenge for analytics, as the visibility of the order book must be balanced against the need for user confidentiality.

Horizon

Future developments will likely focus on the application of predictive machine learning to identify latent signals within order flow before they manifest in price changes. This involves modeling the interaction between MEV bots and legitimate liquidity providers to understand the true cost of trade execution.

| Future Focus | Impact |
| --- | --- |
| Predictive Latency | Optimized order routing |
| Cross-Protocol Analytics | Unified liquidity assessment |
| AI-Driven Market Making | Dynamic spread management |

The trajectory points toward a fully autonomous, transparent market structure where Order Book Data Analytics becomes a foundational component of all decentralized financial strategies. Participants will increasingly rely on these analytical systems to survive in an environment where speed and data precision are the primary determinants of competitive advantage.