Essence

Oracle Node Consensus functions as the definitive mechanism for determining the canonical state of off-chain data within decentralized financial environments. It bridges the gap between fragmented data feeds and deterministic smart contract execution by requiring independent nodes to validate and aggregate external price or state information before updating on-chain parameters. This process transforms raw, disparate data into a single, authoritative truth required for the accurate pricing of complex derivatives.

Oracle Node Consensus provides the deterministic foundation required for decentralized protocols to ingest external data while maintaining trustless integrity.

The systemic relevance of this mechanism centers on its ability to mitigate manipulation risks inherent in price discovery. By distributing the responsibility of data reporting across a decentralized set of participants, the protocol ensures that no single point of failure dictates the settlement price of an option contract. The architecture relies on economic incentives to punish malicious actors and reward accurate reporting, aligning the interests of the node operators with the stability of the financial system.

Origin

The necessity for Oracle Node Consensus grew from the inherent limitations of blockchain architectures, which remain isolated from real-world events by design.

Early attempts to solve this problem involved centralized feeds, which created massive systemic vulnerabilities. A single compromised server could manipulate the entire price discovery mechanism, leading to catastrophic liquidations and protocol insolvency. The shift toward decentralized consensus was a direct response to these security failures.

| System Type | Data Source | Trust Assumption |
| --- | --- | --- |
| Centralized Feed | Single Provider | Total Trust in Operator |
| Decentralized Oracle Node Consensus | Aggregated Network | Trust in Cryptographic Game Theory |

The architectural evolution drew heavily from Byzantine Fault Tolerance research and game-theoretic models of distributed systems. Developers recognized that the problem of data integrity closely resembles the problem of block validation: in both cases, mutually distrusting nodes must converge on a single value. If a network can agree on the order of transactions, it can theoretically agree on the value of an underlying asset.

This realization spurred the creation of decentralized networks where node operators stake capital to guarantee the veracity of their data contributions.

Theory

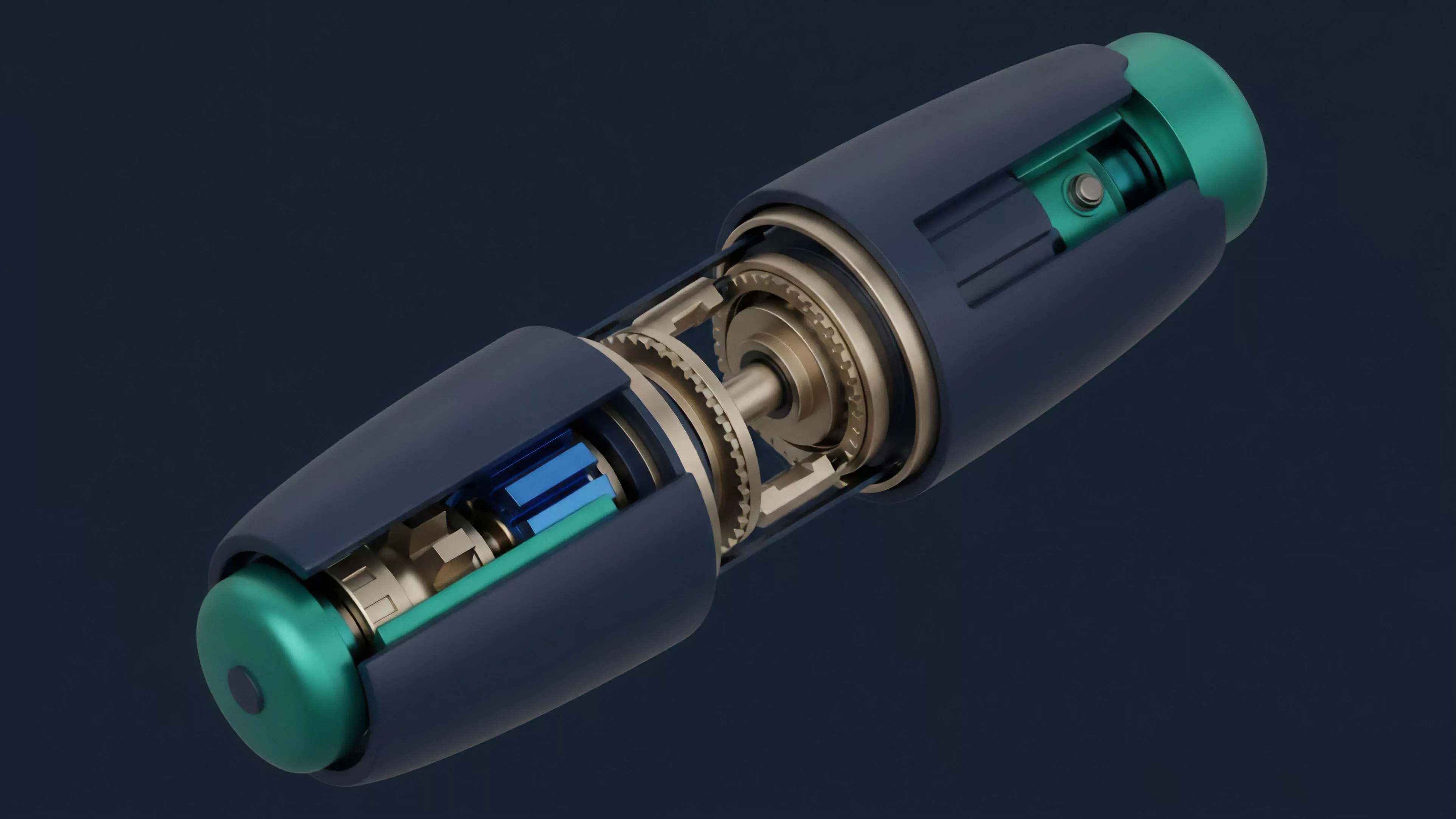

The mechanical operation of Oracle Node Consensus relies on a multi-stage aggregation process designed to filter noise and adversarial inputs. Each node in the network queries multiple independent data providers and calculates a local estimate. These estimates are then broadcast to the peer-to-peer network, where a statistical consensus algorithm, often a weighted median or a trimmed mean, is applied to produce the final, authoritative data point.
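The two stages described above can be sketched in a few lines. This is a minimal illustration, not any specific protocol's implementation: the function names `local_estimate` and `trimmed_mean`, the 20% trim fraction, and the sample quotes are all assumptions made for the example.

```python
from statistics import median


def local_estimate(provider_quotes):
    """Stage 1: one node reduces the quotes from its data providers to a median."""
    return median(provider_quotes)


def trimmed_mean(values, trim_fraction=0.2):
    """Stage 2: mean of the estimates after dropping the lowest and highest tails."""
    if not values:
        raise ValueError("no values to aggregate")
    if not 0 <= trim_fraction < 0.5:
        raise ValueError("trim_fraction must be in [0, 0.5)")
    ordered = sorted(values)
    k = int(len(ordered) * trim_fraction)  # number of values cut from each end
    kept = ordered[k:len(ordered) - k]
    return sum(kept) / len(kept)


# Hypothetical quotes gathered by five nodes; the last node reports manipulated data.
node_quotes = [
    [100.1, 100.0, 99.9],
    [100.2, 100.1, 100.0],
    [99.8, 100.0, 100.1],
    [100.0, 100.3, 100.1],
    [150.0, 151.0, 149.0],
]
estimates = [local_estimate(quotes) for quotes in node_quotes]
aggregate = trimmed_mean(estimates, trim_fraction=0.2)
print(estimates, round(aggregate, 4))
```

With these made-up numbers the manipulated estimate of 150.0 is trimmed away and the aggregate lands near 100.07, whereas a plain mean of the five estimates would be pulled above 110.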

Statistical Robustness

The mathematical modeling of consensus must account for varying degrees of data latency and potential manipulation attempts. By utilizing a weighted median, the protocol discards outliers that deviate significantly from the network norm: as long as malicious or malfunctioning nodes hold less than half of the total weight, the result cannot fall outside the range of values reported by honest nodes.

- Data Aggregation involves the mathematical synthesis of multiple raw inputs into a singular, representative value.

- Stake-Weighted Validation ensures that nodes with higher economic exposure exert greater influence over the final consensus value.

- Slashing Mechanisms impose direct financial penalties on nodes whose reports deviate from the consensus value by more than a protocol-defined threshold.
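Stake-weighted validation and slashing, as listed above, can be illustrated together. The sketch below is an assumption-laden toy: the `Report` type, the 2% relative deviation threshold, and the 10% slash fraction are hypothetical parameters chosen for the example, not values taken from any real protocol.

```python
from dataclasses import dataclass


@dataclass
class Report:
    node_id: str
    value: float  # reported price
    stake: float  # capital the node has bonded


def stake_weighted_median(reports):
    """Lowest reported value at which cumulative stake reaches half of total stake."""
    if not reports:
        raise ValueError("no reports")
    ordered = sorted(reports, key=lambda r: r.value)
    total = sum(r.stake for r in ordered)
    running = 0.0
    for r in ordered:
        running += r.stake
        if running >= total / 2:
            return r.value
    return ordered[-1].value  # only reached if rounding prevents the check above


def slashing_penalties(reports, consensus, max_deviation=0.02, slash_fraction=0.10):
    """Stake forfeited by each node whose report deviates too far from consensus."""
    penalties = {}
    for r in reports:
        if abs(r.value - consensus) / consensus > max_deviation:
            penalties[r.node_id] = r.stake * slash_fraction
    return penalties


# Hypothetical round: node "d" holds 10% of the stake and reports an outlier.
reports = [
    Report("a", 100.0, 40.0),
    Report("b", 100.2, 30.0),
    Report("c", 99.9, 20.0),
    Report("d", 130.0, 10.0),
]
consensus = stake_weighted_median(reports)
penalties = slashing_penalties(reports, consensus)
print(consensus, penalties)
```

In this example the consensus value is 100.0 and only node `d` is penalized. Because `d` controls a minority of the stake, its report has no effect on the median at all.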

This structure is highly sensitive to the volatility of the underlying asset. During periods of extreme market stress, the consensus mechanism must adapt to prevent stale data from triggering erroneous liquidations. Consensus speed and data accuracy trade off against each other: a longer aggregation window lets more nodes report and cross-check, which broadens consensus, while a shorter window increases the risk of incorporating unverified data into the settlement engine.
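One common defensive pattern on the consuming side is a staleness guard, which refuses to act on a price that is older than some tolerance. The sketch below assumes a hypothetical `PriceUpdate` record and a 60-second maximum age; both are illustrative choices rather than parameters from the source.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PriceUpdate:
    price: float
    timestamp: float  # seconds since some shared epoch


class StalePriceError(Exception):
    """Raised when the latest consensus value is too old to settle against."""


def fresh_price(update, now, max_age_seconds=60.0):
    """Return the price only if the update is recent enough; otherwise raise."""
    age = now - update.timestamp
    if age < 0:
        raise ValueError("update timestamp is in the future")
    if age > max_age_seconds:
        raise StalePriceError(f"price is {age:.0f}s old, limit is {max_age_seconds:.0f}s")
    return update.price


latest = PriceUpdate(price=100.0, timestamp=1_000.0)
print(fresh_price(latest, now=1_030.0))  # 30 seconds old, accepted
```

A liquidation engine built this way would pause rather than liquidate against an outdated value, trading availability for correctness during stress.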

Approach

Current implementations of Oracle Node Consensus prioritize capital efficiency and resilience against flash loan attacks.

Market makers and protocol architects now deploy advanced filtering algorithms that adjust for localized volatility spikes, ensuring that settlement prices reflect true market equilibrium rather than transient price deviations on a single exchange.

Modern oracle architectures utilize stake-weighted aggregation to insulate decentralized financial protocols from the influence of malicious data actors.

The practical deployment of these systems requires a rigorous assessment of the economic cost of manipulation versus the potential profit from triggering a liquidation. Protocols now incorporate dynamic update frequencies, where the reporting interval accelerates as the variance of the underlying asset increases. This adaptive behavior provides a more accurate reflection of market conditions during turbulent cycles.
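The dynamic update frequency described above can be sketched as a reporting interval that shrinks as realized volatility rises. Everything here is an assumption for illustration: the function names, the use of log-return standard deviation as the variance measure, the inverse scaling rule, and the base, minimum, and reference values.

```python
import math


def realized_volatility(prices):
    """Population standard deviation of log returns over a window of positive prices."""
    if len(prices) < 2:
        raise ValueError("need at least two prices")
    returns = [math.log(b / a) for a, b in zip(prices, prices[1:])]
    mean = sum(returns) / len(returns)
    variance = sum((r - mean) ** 2 for r in returns) / len(returns)
    return math.sqrt(variance)


def update_interval(volatility, base_interval=60.0, min_interval=1.0, reference_vol=0.001):
    """Seconds between reports: base rate in calm markets, faster as volatility grows."""
    if volatility <= reference_vol:
        return base_interval
    scaled = base_interval * reference_vol / volatility
    return max(min_interval, scaled)


calm = [100.00, 100.01, 100.00, 100.02, 100.01]
turbulent = [100.0, 103.0, 98.0, 104.0, 97.0]
print(update_interval(realized_volatility(calm)))
print(update_interval(realized_volatility(turbulent)))
```

The calm window keeps the base 60-second interval, while the turbulent window drives the interval down toward the floor. The floor matters in practice because each update has a cost, so an unbounded reporting rate would itself become an attack surface.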

| Mechanism | Function | Risk Mitigation |
| --- | --- | --- |
| Medianization | Outlier Removal | Prevents Price Manipulation |
| Slashing | Incentive Alignment | Discourages Dishonest Reporting |
| Dynamic Frequency | Volatility Response | Reduces Latency Risk |

Strategic participants in the options market must evaluate the oracle’s update latency relative to the expiration time of their positions. A systemic lag in price updates can lead to significant basis risk, where the reported settlement price deviates from the actual market price at the moment of expiration. Understanding the specific consensus parameters is a prerequisite for executing robust hedging strategies in decentralized markets.
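To make the basis risk concrete, the sketch below compares the payoff of a cash-settled call option under a lagging oracle price with the payoff under the actual market price at expiration. The function names and all prices are hypothetical, and the example ignores fees and premiums.

```python
def call_payoff(settlement_price, strike):
    """Cash payoff of one call option at expiration."""
    return max(settlement_price - strike, 0.0)


def settlement_basis(oracle_price, market_price, strike):
    """Payoff difference per contract caused by the oracle price lagging the market."""
    return call_payoff(oracle_price, strike) - call_payoff(market_price, strike)


# Hypothetical expiry: the oracle last updated at 101.5, but the market has fallen to 99.0.
strike = 100.0
basis = settlement_basis(oracle_price=101.5, market_price=99.0, strike=strike)
print(basis)  # 1.5 paid out on an option that expired out of the money
```

Here the option holder receives 1.5 per contract even though the option is out of the money at the true market price. A hedge sized against the market price would not offset that payout, which is why the update latency near expiration deserves explicit attention.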

Evolution

The transition from simple price feeds to complex state verification marks the current trajectory of Oracle Node Consensus.

Initial iterations focused solely on spot price reporting for basic lending protocols. The current generation supports the high-fidelity requirements of complex derivative platforms, providing not only price data but also volatility surfaces, open interest metrics, and implied probability distributions. The movement toward modular oracle design allows protocols to choose the level of decentralization and security that aligns with their specific risk profile.

Some protocols now leverage zero-knowledge proofs to verify the source and integrity of data without exposing the underlying raw data streams to the public. This shift enhances privacy while maintaining the verifiable nature of the consensus process.

- Protocol Modularization allows for the selection of specific data validation parameters based on the asset class.

- Zero-Knowledge Verification enables the cryptographically secure proof of data integrity without full transparency.

- Cross-Chain Consensus provides the capability to synchronize price state across disparate blockchain environments.

The integration of off-chain computation allows nodes to perform complex risk analysis before reporting, effectively turning the oracle into a decentralized risk engine. This advancement represents a significant shift from passive data reporting to active financial intelligence, providing a more robust foundation for the future of decentralized options trading.

Horizon

The future of Oracle Node Consensus resides in the convergence of high-frequency data streams and automated risk management. As protocols move toward sub-second settlement, the oracle mechanism must transition to a continuous, rather than interval-based, consensus model.

This will necessitate the development of low-latency peer-to-peer protocols that can handle the throughput required for real-time derivative settlement.

The integration of real-time state proofs will define the next generation of decentralized derivative market integrity.

Future architectures will likely incorporate machine learning-based anomaly detection directly into the consensus layer, allowing the network to identify and ignore suspicious data patterns before they reach the aggregation phase. This proactive defense mechanism will be vital for maintaining systemic stability as derivative complexity increases. The ultimate goal is the creation of a global, verifiable, and low-latency financial truth layer that operates independently of any single institutional provider.