Essence

Oracle Network Architecture functions as the foundational middleware layer bridging external real-world data with blockchain-based execution environments. It facilitates the ingestion, validation, and dissemination of off-chain information, such as asset prices, interest rates, or geopolitical event outcomes, into smart contracts. By establishing a reliable data feed, this architecture enables the existence of decentralized financial derivatives, prediction markets, and automated collateral management systems that require accurate, tamper-proof inputs to maintain operational integrity.

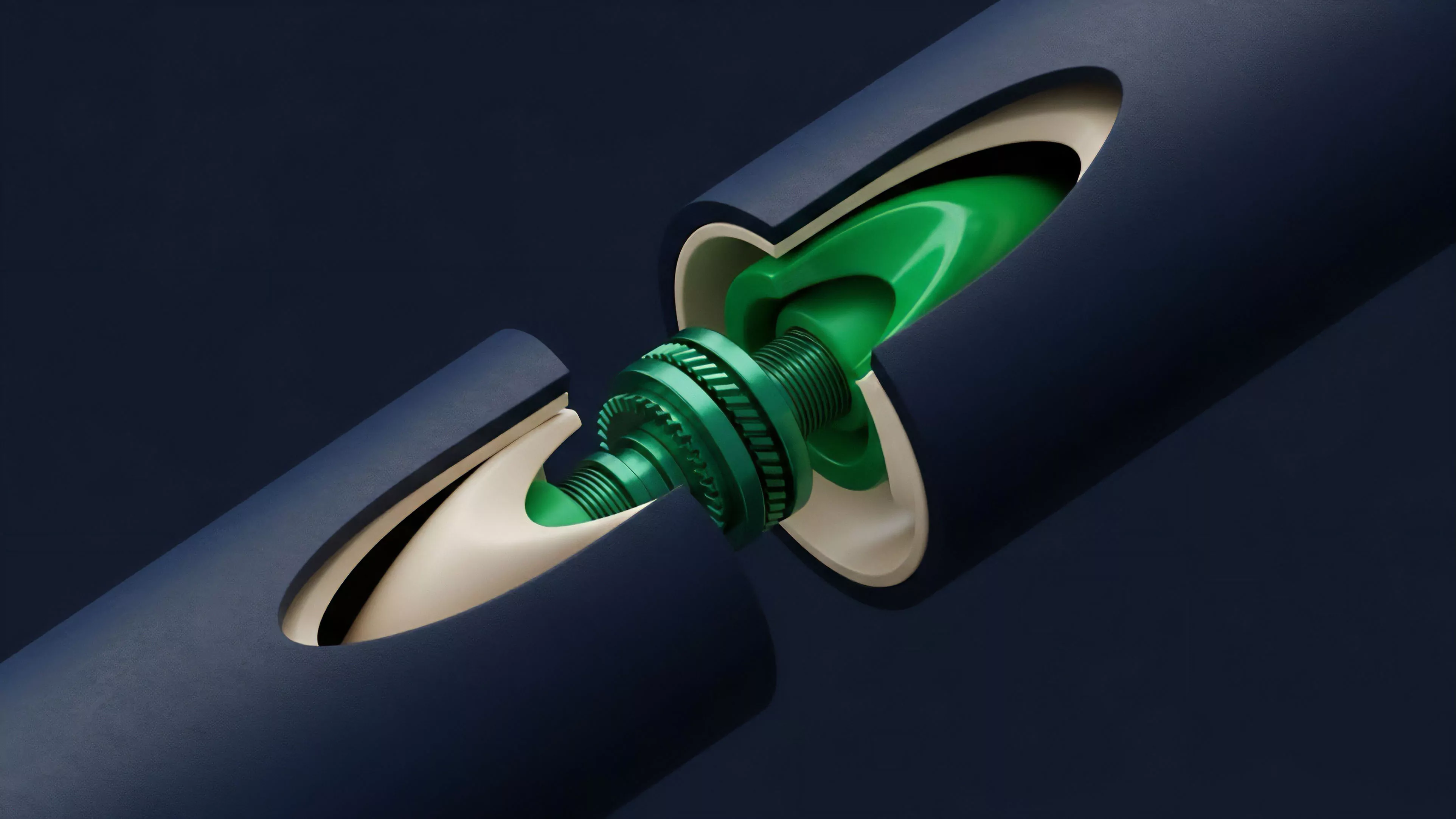

Oracle Network Architecture serves as the vital translation layer that converts external reality into verifiable on-chain state for decentralized applications.

The systemic relevance of these networks lies in their ability to mitigate the information asymmetry inherent in distributed ledgers. Without a mechanism to securely fetch and verify external data, smart contracts remain isolated, unable to interact with the broader financial ecosystem. This architecture solves the fundamental problem of trust in data acquisition, ensuring that automated financial instruments execute according to precise, pre-defined parameters rather than relying on centralized, opaque intermediaries.

Origin

Oracle Network Architecture emerged from the technical constraints of early blockchain platforms, which lacked native mechanisms to access data beyond their own distributed ledger state.

Developers identified a critical bottleneck: smart contracts were effectively blind to external events, rendering them useless for complex financial applications like insurance, derivatives, or lending protocols that depend on real-time market data.

- Data Availability: The initial drive focused on overcoming the inherent isolation of smart contracts by establishing decentralized channels for information flow.

- Verification Protocols: Early designs prioritized cryptographic proofs to validate data authenticity, ensuring that incoming feeds were not manipulated by malicious actors.

- Economic Incentive Models: The transition toward decentralized networks necessitated the development of game-theoretic structures to reward honest reporting and penalize data providers for malicious behavior.

This evolution was spurred by the rise of Decentralized Finance, where the demand for high-fidelity price feeds became a primary constraint for scaling capital-efficient protocols. The transition from simple, centralized data pushers to complex, decentralized networks reflects the broader maturation of the industry, shifting focus from pure ledger security toward robust, interoperable data infrastructure.

Theory

The architecture relies on a multi-layered approach to consensus and data aggregation. At its core, the system employs a network of independent Node Operators, each of which fetches data from multiple high-quality off-chain sources.

Each node performs a local aggregation (often a median calculation) before submitting its result to an on-chain smart contract, which then calculates a final, aggregated data point for use by consumer protocols.
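The two aggregation stages can be sketched as follows. This is a minimal illustration, assuming a median at both stages; the function names, the `min_reports` parameter, and the sample prices are hypothetical and not taken from any specific oracle network.

```python
from statistics import median


def node_observation(source_prices):
    """Local aggregation by one node: median of its off-chain source prices."""
    if not source_prices:
        raise ValueError("a node needs at least one source price")
    return median(source_prices)


def aggregate_on_chain(node_reports, min_reports=3):
    """Final aggregation: median of the values submitted by the nodes.

    min_reports is an assumed safety floor, not a documented parameter.
    """
    if len(node_reports) < min_reports:
        raise ValueError("not enough node reports to form an answer")
    return median(node_reports)


# Three hypothetical nodes, each reading three hypothetical sources.
sources_per_node = [
    [100.0, 100.2, 99.9],
    [100.1, 100.3, 100.0],
    [100.0, 250.0, 100.1],  # one faulty source reports an outlier
]
node_reports = [node_observation(prices) for prices in sources_per_node]
final_price = aggregate_on_chain(node_reports)
```

In this example the outlier of 250.0 is discarded by the third node's local median, so it never reaches the final aggregation.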

The integrity of decentralized price discovery relies on the statistical aggregation of independent node observations to minimize the impact of individual data source failures.

Consensus Mechanics

The protocol rules governing these networks must account for adversarial conditions. If a node submits data that deviates significantly from the network median, the protocol can trigger automated penalties, effectively removing the economic incentive for dishonesty. This mechanism forces nodes to prioritize accuracy over speed or cost, creating a reliable, high-fidelity data stream that acts as the source of truth for the entire application layer.
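A deviation penalty of this kind might look like the sketch below. The 2% tolerance, the 10% slash fraction, and the node identifiers are illustrative assumptions; real networks define their own thresholds and penalty schedules.

```python
from statistics import median


def apply_deviation_penalties(reports, stakes, max_deviation=0.02, slash_fraction=0.10):
    """Return (network_median, new_stakes) after penalising outlier reports.

    reports: node id -> submitted value
    stakes:  node id -> collateral currently locked
    max_deviation and slash_fraction are assumed values for illustration.
    """
    mid = median(reports.values())
    if mid == 0:
        raise ValueError("relative deviation is undefined for a zero median")
    new_stakes = dict(stakes)
    for node, value in reports.items():
        deviation = abs(value - mid) / abs(mid)
        if deviation > max_deviation:
            # Slash a fixed fraction of the offending node's stake.
            new_stakes[node] = stakes[node] * (1 - slash_fraction)
    return mid, new_stakes


reports = {"node-a": 100.0, "node-b": 100.5, "node-c": 120.0}
stakes = {"node-a": 1000.0, "node-b": 1000.0, "node-c": 1000.0}
mid, stakes_after = apply_deviation_penalties(reports, stakes)
```

Here only `node-c` deviates from the median by more than the tolerance, so only its stake is reduced.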

| Component | Functional Responsibility |
| --- | --- |
| Data Source | Originating off-chain market data providers |
| Node Operator | Fetching, signing, and submitting data to the chain |
| Aggregation Layer | On-chain logic to calculate final consensus value |
| Consumer Contract | Protocol utilizing the verified data for execution |

The security argument of this architecture rests on the distribution of node operators. By requiring a high degree of decentralization among providers, the network aims to ensure that the cost of attacking the system (the amount of capital required to control a majority of nodes) far exceeds the potential gains from manipulating a single price feed.
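The cost-of-attack comparison can be made concrete with a small sketch. It assumes, purely for illustration, that controlling a node costs exactly that node's stake and that a strict majority of nodes is enough to move the feed; the stake figures are hypothetical.

```python
def min_cost_to_control_majority(stakes):
    """Cheapest capital outlay to control a strict majority of nodes.

    Assumes (hypothetically) that the cost of controlling a node equals its stake,
    so the attacker targets the nodes with the smallest stakes first.
    """
    majority = len(stakes) // 2 + 1
    return sum(sorted(stakes)[:majority])


def attack_is_unprofitable(stakes, potential_gain):
    """True when the cheapest majority costs more than the attacker could gain."""
    return min_cost_to_control_majority(stakes) > potential_gain


stakes = [500_000, 750_000, 1_000_000, 1_250_000, 2_000_000]
cost = min_cost_to_control_majority(stakes)  # sum of the three smallest stakes
```

Under these assumptions the feed is only as secure as its cheapest majority, which is why an even distribution of stake across many operators matters more than the total amount staked.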

Approach

Current implementations focus on minimizing latency while maximizing the security of the data feed. The most advanced systems use Threshold Cryptography so that node submissions are aggregated off-chain before being committed to the ledger, which significantly reduces gas consumption and increases the throughput of the data feed.

This approach allows protocols to update their internal pricing models with millisecond-level frequency, a prerequisite for institutional-grade derivative trading.
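The off-chain aggregation step can be approximated with a quorum check. The sketch below is not threshold cryptography: it uses per-node HMAC tags from the Python standard library as a stand-in for signatures, and simply refuses to produce a report unless at least `threshold` tags verify. A real threshold signature scheme would instead yield one compact signature that a contract can verify on-chain. All names, keys, and values are hypothetical.

```python
import hashlib
import hmac
from statistics import median


def sign(key: bytes, round_id: int, value: float) -> bytes:
    """Stand-in for a node signature: an HMAC tag over the round and the value."""
    message = f"{round_id}:{value!r}".encode()
    return hmac.new(key, message, hashlib.sha256).digest()


def build_report(round_id, observations, node_keys, threshold):
    """Combine signed observations off-chain into a single report.

    observations: node id -> (value, tag)
    Returns None unless at least `threshold` tags verify.
    """
    valid = {}
    for node, (value, tag) in observations.items():
        key = node_keys.get(node)
        if key is None:
            continue  # unknown node, ignore its observation
        if hmac.compare_digest(tag, sign(key, round_id, value)):
            valid[node] = value
    if len(valid) < threshold:
        return None
    return {"round": round_id, "value": median(valid.values()), "signers": sorted(valid)}


node_keys = {"node-a": b"ka", "node-b": b"kb", "node-c": b"kc", "node-d": b"kd"}
observations = {
    "node-a": (100.0, sign(b"ka", 7, 100.0)),
    "node-b": (100.2, sign(b"kb", 7, 100.2)),
    "node-c": (100.1, sign(b"kc", 7, 100.1)),
    "node-d": (250.0, b"forged"),  # invalid tag, excluded from the report
}
report = build_report(7, observations, node_keys, threshold=3)
```

Only the single combined report would be committed to the ledger, which is where the gas savings described above come from.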

- Staking Mechanisms: Node operators must lock collateral within the network, creating an economic deterrent against malicious data reporting.

- Reputation Systems: Historical performance data tracks node accuracy, allowing consumer protocols to filter feeds based on reliability scores.

- Multi-Chain Compatibility: Modern architectures operate as middleware, providing standardized interfaces for multiple blockchain ecosystems to consume the same data feed.
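The staking and reputation criteria above can be combined into a simple eligibility filter. This is a sketch under stated assumptions: the `NodeRecord` fields, the definition of reliability as the share of accurate rounds, and the thresholds are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class NodeRecord:
    node_id: str
    stake: float          # collateral currently locked by the operator
    correct_rounds: int   # rounds in which the node's report was judged accurate
    total_rounds: int     # rounds in which the node reported at all

    @property
    def reliability(self) -> float:
        """Share of accurate rounds; nodes with no history score 0.0."""
        if self.total_rounds == 0:
            return 0.0
        return self.correct_rounds / self.total_rounds


def eligible_nodes(records, min_stake, min_reliability):
    """Node ids that satisfy both the stake floor and the reliability floor."""
    return [
        r.node_id
        for r in records
        if r.stake >= min_stake and r.reliability >= min_reliability
    ]


records = [
    NodeRecord("node-a", stake=1000.0, correct_rounds=990, total_rounds=1000),
    NodeRecord("node-b", stake=50.0, correct_rounds=1000, total_rounds=1000),
    NodeRecord("node-c", stake=2000.0, correct_rounds=800, total_rounds=1000),
    NodeRecord("node-d", stake=5000.0, correct_rounds=0, total_rounds=0),
]
selected = eligible_nodes(records, min_stake=500.0, min_reliability=0.95)
```

A consumer protocol could apply a filter like this before deciding which node reports to accept into its aggregation.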

The strategic implementation of these networks involves balancing the trade-off between decentralization and performance. Excessive decentralization can lead to latency issues that undermine the utility of high-frequency trading platforms, whereas centralized, low-latency feeds introduce systemic risk. The most successful protocols navigate this tension by dynamically adjusting the number of nodes required for consensus based on the volatility and liquidity of the underlying asset.

Evolution

The architecture has transitioned from static, request-response models to continuous, push-based data streams.

Initially, protocols were required to manually trigger data requests, which introduced significant overhead and timing vulnerabilities. The current state utilizes proactive streaming, where the oracle network pushes data to the smart contract whenever a significant price deviation occurs, ensuring that financial systems are always operating on the most current market information.
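A push trigger based on price deviation can be sketched as below. The 0.5% deviation threshold is an assumed value, and the heartbeat (a maximum interval between updates even when the price is quiet) is an additional assumption that the text above does not mention; it is included because a purely deviation-based trigger would never refresh a stable price.

```python
def should_push(last_value, last_time, new_value, now,
                deviation_threshold=0.005, heartbeat_seconds=3600):
    """Decide whether the oracle network should push a new on-chain update."""
    if last_value is None:
        return True  # nothing has been published yet
    if now - last_time >= heartbeat_seconds:
        return True  # assumed heartbeat: refresh even without price movement
    if last_value == 0:
        return new_value != 0
    return abs(new_value - last_value) / abs(last_value) >= deviation_threshold


def pushed_updates(stream):
    """Replay (timestamp, price) observations and return the ones pushed on-chain."""
    pushed = []
    last_value, last_time = None, None
    for now, value in stream:
        if should_push(last_value, last_time, value, now):
            pushed.append((now, value))
            last_value, last_time = value, now
    return pushed


# Hypothetical observations as (seconds, price) pairs.
stream = [(0, 100.0), (10, 100.2), (20, 100.6), (30, 100.7), (4000, 100.7)]
updates = pushed_updates(stream)
```

In this replay, the first observation initialises the feed, the move to 100.6 crosses the deviation threshold, and the final observation is pushed only because the assumed heartbeat interval has elapsed.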

Systemic stability in decentralized derivatives requires the continuous, autonomous updating of state variables through resilient, decentralized data pipelines.

The evolution also includes the integration of Zero-Knowledge Proofs to verify the integrity of data feeds without exposing the raw underlying source data. This advancement addresses concerns regarding data privacy and intellectual property, allowing proprietary data providers to monetize their feeds while maintaining the security requirements of decentralized applications.

| Evolutionary Stage | Primary Characteristic |
| --- | --- |
| First Generation | Centralized, single-source data feeds |
| Second Generation | Decentralized, request-response mechanisms |
| Third Generation | Proactive, streaming, and privacy-preserving networks |

The shift toward modular, cross-chain infrastructure indicates a future where data feeds are treated as a commodity, liquid and accessible across any execution environment. This trajectory mirrors the development of traditional financial data providers, yet it remains anchored in the trust-minimized, permissionless principles that define the current decentralized landscape.

Horizon

Future developments will likely focus on Computational Oracles, which move beyond simple price reporting to execute complex off-chain computations. By offloading heavy data processing to specialized nodes, smart contracts will gain the ability to perform risk assessment, portfolio optimization, and complex derivative pricing calculations that were previously impossible due to on-chain resource constraints. This transition will redefine the boundaries of what is possible within decentralized protocols, moving them closer to the capabilities of traditional high-frequency trading desks.

The integration of Hardware-Based Security, such as Trusted Execution Environments, will provide a secondary layer of verification, ensuring that the data ingestion process itself is shielded from tampering at the physical level.

As these systems become more deeply embedded in the financial infrastructure, the focus will shift toward managing systemic risk and contagion, ensuring that the failure of a single data feed does not trigger cascading liquidations across the broader market. The ultimate goal remains the creation of a seamless, global financial system where information moves as freely and securely as value itself.

What structural limits within the current cryptographic verification process will ultimately define the maximum speed at which decentralized markets can achieve price equilibrium?