Essence

Oracle Failure Mitigation is the architectural design layer tasked with maintaining protocol integrity when external data feeds deviate from reality or cease operation. Decentralized finance protocols rely on these feeds to bridge off-chain asset pricing with on-chain execution, and that dependency is a single point of systemic risk. When an oracle reports a corrupted, stale, or manipulated price, the derivative engine risks executing liquidations based on phantom valuations.

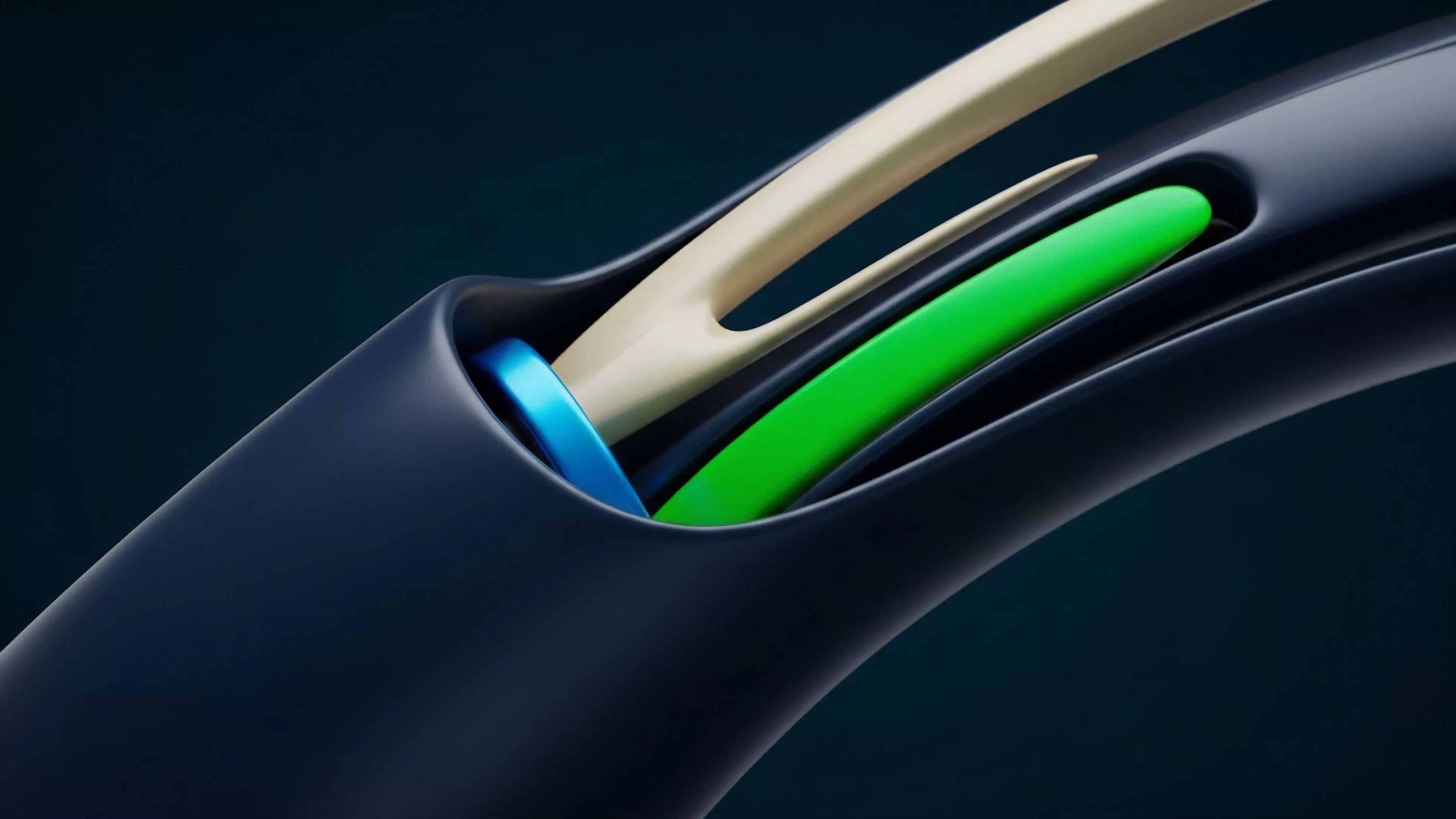

Oracle failure mitigation acts as the essential safety buffer preventing systemic insolvency caused by erroneous external price data inputs.

Effective mitigation strategies involve redundant data sourcing, cryptographic verification of feed integrity, and circuit-breaker mechanisms that halt trading when volatility thresholds are breached. These systems must function autonomously, assuming that any individual data source remains susceptible to compromise or technical failure.

Origin

The necessity for robust Oracle Failure Mitigation traces back to early decentralized lending and synthetic asset protocols that suffered catastrophic drain events due to price manipulation. Market actors exploited the latency between centralized exchange price movements and on-chain oracle updates, creating arbitrage windows that allowed for the draining of liquidity pools.

These early vulnerabilities exposed the fragility of simple, single-source price feeds.

- Manipulation events occurred when attackers artificially inflated asset prices on thin-liquidity exchanges, triggering erroneous collateral liquidations.

- Latency arbitrage exploited the delay between global market price changes and the periodic updates pushed to the blockchain.

- Smart contract exploits targeted the lack of validation logic within the price-fetching functions, allowing arbitrary data injection.

Developers responded by transitioning toward decentralized, aggregated oracle networks that use multi-node consensus to verify pricing. This shift marked the move from trust-based, centralized reporting to cryptographically secured, multi-source validation frameworks.

Theory

The quantitative framework for Oracle Failure Mitigation rests on minimizing the probability that an erroneous price corrupts protocol state. Mathematical models for these systems prioritize the detection of outliers through statistical variance analysis.

If a reported price deviates from the mean of a basket of verified sources by more than a pre-defined number of standard deviations, the protocol triggers an automated defensive posture.
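As a rough illustration of this check, the sketch below flags a reported price whose distance from the basket mean exceeds a configurable number of sample standard deviations. The function name `is_outlier`, the `max_sigmas` parameter, and the default of 3 are assumptions made for the example, not values taken from any particular protocol.

```python
from statistics import mean, stdev


def is_outlier(reported: float, basket: list[float], max_sigmas: float = 3.0) -> bool:
    """Return True if `reported` is more than `max_sigmas` sample standard
    deviations away from the mean of the reference basket."""
    if len(basket) < 2:
        raise ValueError("need at least two reference prices")
    mu = mean(basket)
    sigma = stdev(basket)
    if sigma == 0:
        # All references agree exactly, so any different price is an outlier.
        return reported != mu
    return abs(reported - mu) / sigma > max_sigmas


# Hypothetical basket of verified source prices.
basket = [100.0, 100.5, 99.5, 100.2, 99.8]
print(is_outlier(100.4, basket))  # close to the basket mean
print(is_outlier(105.0, basket))  # far from the basket mean
```

A production system would also have to decide how the basket itself is validated, since a standard deviation computed from compromised sources offers little protection.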

| Mitigation Mechanism | Technical Objective | Risk Sensitivity |
|---|---|---|
| Medianizer Aggregation | Reduce impact of single-node corruption | Low |
| Circuit Breakers | Prevent execution on stale data | High |
| Volume Weighted Averaging | Ensure price reflects liquidity depth | Medium |
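
Two of the mechanisms in the table are simple enough to sketch directly. The example below is a minimal illustration, assuming node reports arrive as a plain list of prices and trades as `(price, volume)` pairs; the names `median_price` and `vwap` are hypothetical.

```python
from statistics import median


def median_price(reports: list[float]) -> float:
    """Medianizer aggregation: one corrupted report cannot move the result
    as long as the majority of reports are honest."""
    if not reports:
        raise ValueError("no reports")
    return median(reports)


def vwap(trades: list[tuple[float, float]]) -> float:
    """Volume-weighted average price over (price, volume) pairs, so that
    thin trades influence the result less than deep ones."""
    total_volume = sum(volume for _, volume in trades)
    if total_volume <= 0:
        raise ValueError("total volume must be positive")
    return sum(price * volume for price, volume in trades) / total_volume


# One node reports a wildly wrong price; the median ignores it.
print(median_price([100.0, 101.0, 99.0, 100.5, 5000.0]))

# A small trade at 110 barely shifts the volume-weighted price.
print(vwap([(100.0, 10.0), (110.0, 1.0)]))
```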

Protocol stability depends on the mathematical rejection of outlier price data that deviates from established market consensus.

Adversarial game theory informs these designs, as participants have economic incentives to manipulate feeds for liquidation gains. Mitigation protocols therefore implement slashing conditions for malicious nodes, aligning the financial outcome of the oracle providers with the health of the protocol.

Approach

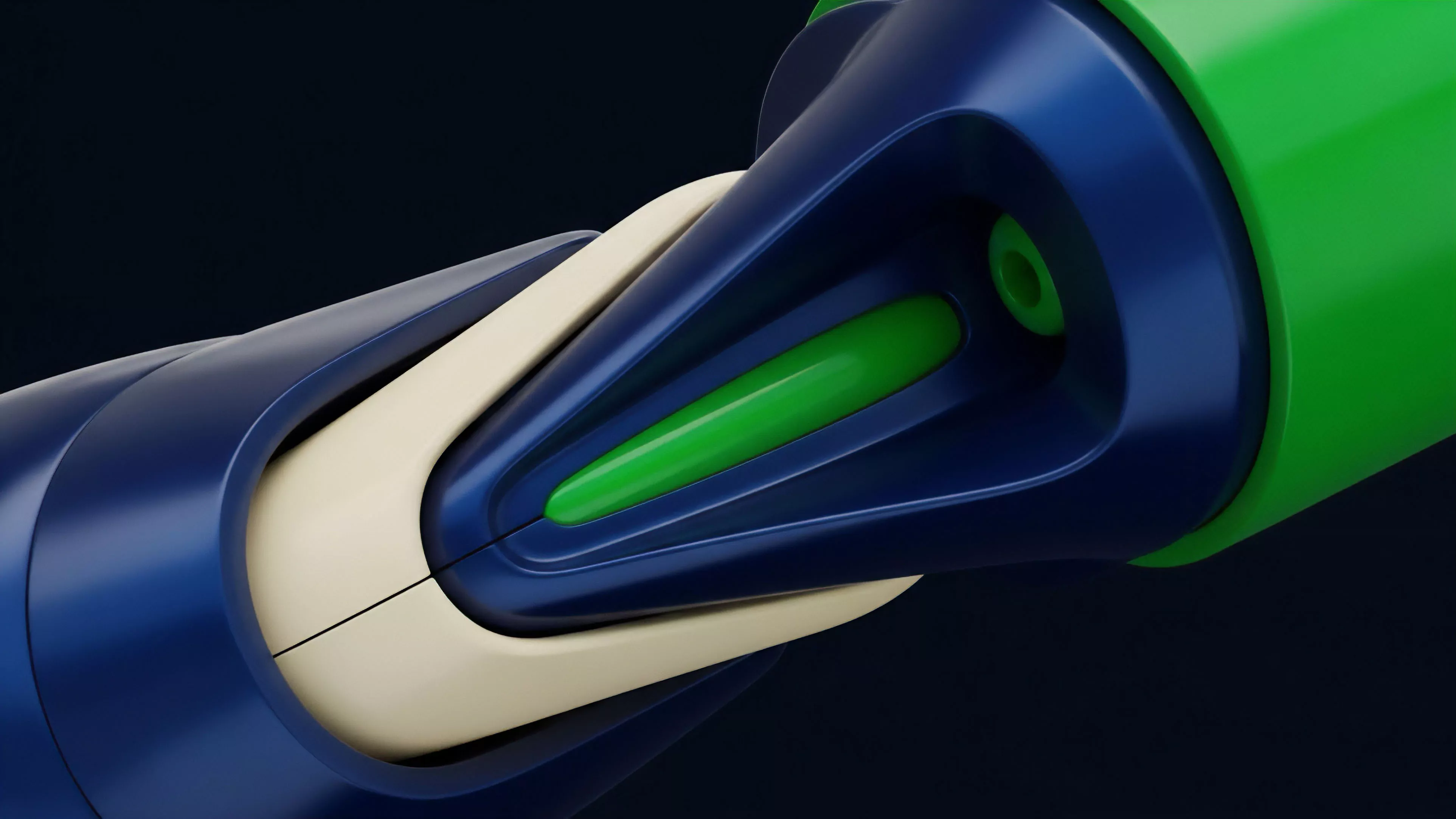

Current operational standards for Oracle Failure Mitigation employ multi-layered validation logic. Developers now implement time-weighted average prices to smooth out transient volatility and prevent short-term manipulation from triggering mass liquidations.
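
To make the smoothing effect concrete, here is a minimal time-weighted average price sketch. It assumes observations are `(timestamp, price)` pairs sorted by time and that each price holds until the next observation; the function name `twap` and this sampling model are assumptions for illustration.

```python
def twap(observations: list[tuple[float, float]], end_time: float) -> float:
    """Time-weighted average price over [first timestamp, end_time].

    Each observed price is weighted by how long it remained the latest price.
    """
    if not observations:
        raise ValueError("no observations")
    start = observations[0][0]
    if end_time <= start:
        raise ValueError("end_time must be after the first observation")
    # Pair each observation with the timestamp at which it was superseded.
    next_times = [t for t, _ in observations[1:]] + [end_time]
    weighted_sum = 0.0
    for (t, price), t_next in zip(observations, next_times):
        if t_next < t:
            raise ValueError("observations must be sorted and end before end_time")
        weighted_sum += price * (t_next - t)
    return weighted_sum / (end_time - start)


# A 5-second spike to 200 inside a 100-second window.
obs = [(0.0, 100.0), (50.0, 100.0), (55.0, 200.0), (60.0, 100.0)]
print(twap(obs, end_time=100.0))  # 105.0
```

In this example the spot price doubles for five seconds, yet the averaged price moves by only 5%, which is the property that makes short-lived manipulation expensive relative to its effect.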

Furthermore, modern architectures require dual-source verification, often combining decentralized oracle networks with internal, liquidity-pool-based price observations. The system operates under constant pressure from automated arbitrage bots designed to identify and exploit any divergence between the oracle price and the spot market. Security audits now focus heavily on the interaction between the oracle interface and the margin engine, ensuring that no single transaction can bypass the validation layer.
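
A dual-source check can be as small as a relative-deviation comparison. The sketch below assumes the two inputs are an external oracle price and a price observed from an internal liquidity pool; the name `prices_agree` and the 2% default tolerance are illustrative assumptions.

```python
def prices_agree(oracle_price: float, pool_price: float, max_deviation: float = 0.02) -> bool:
    """Return True if the pool-derived price is within `max_deviation`
    (as a fraction of the oracle price) of the oracle price."""
    if oracle_price <= 0 or pool_price <= 0:
        raise ValueError("prices must be positive")
    return abs(oracle_price - pool_price) / oracle_price <= max_deviation


print(prices_agree(100.0, 101.0))  # within tolerance
print(prices_agree(100.0, 110.0))  # sources diverge, reject the update
```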

- Stale data detection monitors the heartbeat of the oracle feed, freezing positions if the last update exceeds a specific temporal threshold.

- Volatility circuit breakers monitor the rate of price change, halting automated liquidation processes if the delta surpasses extreme bounds.

- Multi-signature verification ensures that updates originate from a quorum of independent nodes rather than a single source.
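
The three checks in the list above can be combined into a single gate in front of the liquidation logic. The following is a sketch under stated assumptions: `PriceUpdate`, the one-hour heartbeat, the 20% move limit, and the quorum threshold are all hypothetical, and the `signers` field is assumed to contain only node IDs whose cryptographic signatures were already verified elsewhere.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PriceUpdate:
    price: float
    timestamp: float         # seconds since epoch when the update was produced
    signers: frozenset[str]  # node IDs with already-verified signatures (assumed)


def is_stale(update: PriceUpdate, now: float, max_age_s: float = 3600.0) -> bool:
    """Stale data detection: the feed missed its heartbeat."""
    return now - update.timestamp > max_age_s


def breaker_tripped(prev_price: float, new_price: float, max_move: float = 0.20) -> bool:
    """Volatility circuit breaker: relative price change exceeds the bound."""
    if prev_price <= 0:
        raise ValueError("previous price must be positive")
    return abs(new_price - prev_price) / prev_price > max_move


def has_quorum(update: PriceUpdate, authorized: frozenset[str], threshold: int) -> bool:
    """Quorum check: enough distinct authorized nodes signed the update."""
    return len(update.signers & authorized) >= threshold


def may_liquidate(update: PriceUpdate, prev_price: float, now: float,
                  authorized: frozenset[str], threshold: int) -> bool:
    """Allow automated liquidations only if every check passes."""
    return (has_quorum(update, authorized, threshold)
            and not is_stale(update, now)
            and not breaker_tripped(prev_price, update.price))


authorized = frozenset({"n1", "n2", "n3"})
update = PriceUpdate(price=98.0, timestamp=1000.0, signers=frozenset({"n1", "n2"}))
print(may_liquidate(update, prev_price=100.0, now=1060.0, authorized=authorized, threshold=2))
```

Failing closed, that is, refusing to liquidate when any check fails, is one possible design choice; a protocol might instead fall back to a secondary feed.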

One might observe that the history of financial engineering, from the early days of exchange-traded funds to the complexities of high-frequency trading, mirrors this current struggle to synchronize fragmented data sources. We are essentially rebuilding the plumbing of global markets in an environment where trust is replaced by code.

Evolution

The trajectory of Oracle Failure Mitigation has shifted from reactive patching to proactive, system-wide resilience. Early models relied on simple, hard-coded price updates that failed during periods of high network congestion.

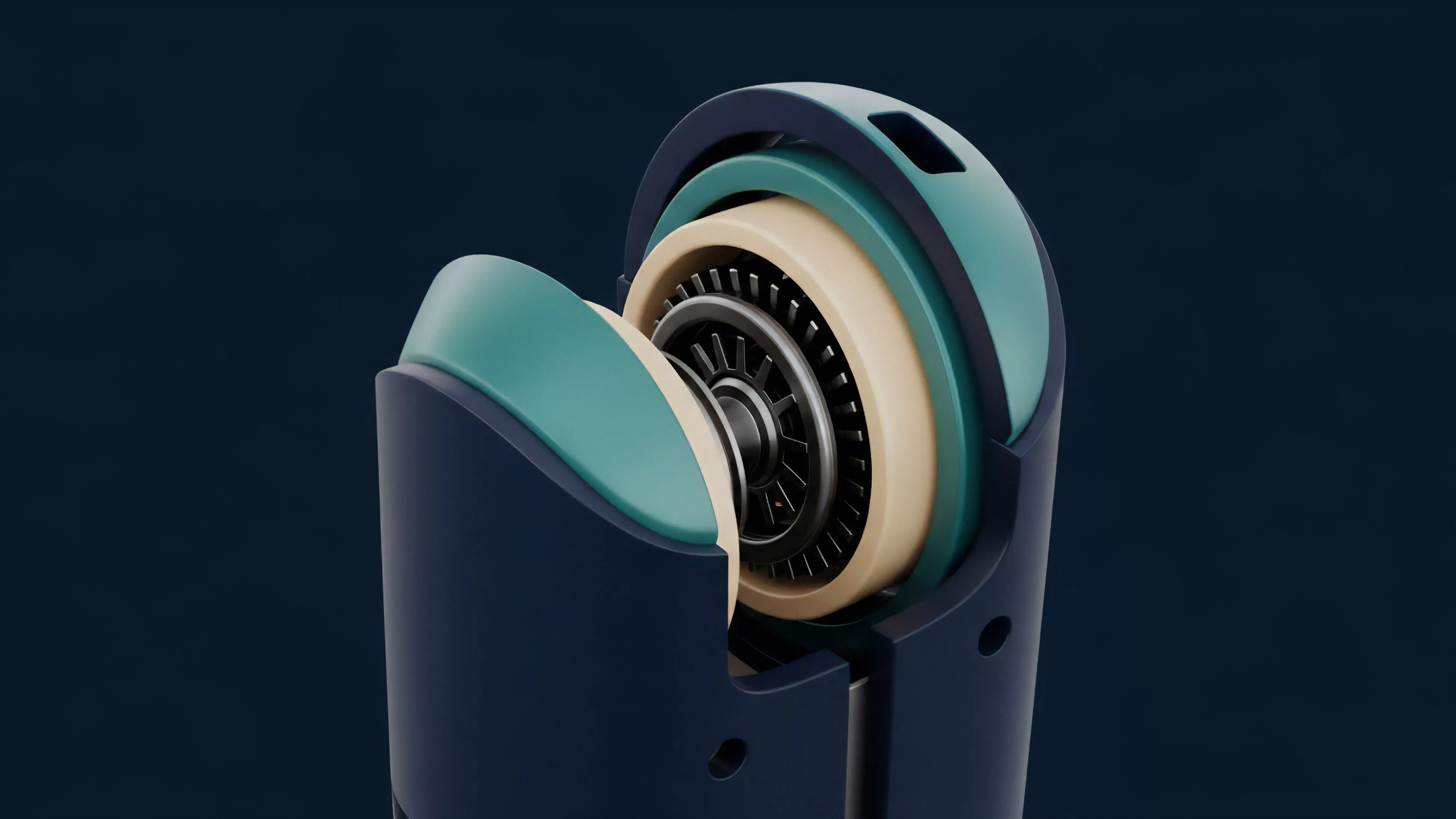

Evolution occurred through the integration of decentralized consensus layers, where oracle providers must stake collateral to guarantee the accuracy of their reporting. The focus has moved toward composable oracle architectures, where protocols can swap or update data sources without requiring full contract migrations. This flexibility allows protocols to adapt to changing market conditions or the emergence of new, more secure data-provisioning technologies.
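
One way to picture a composable oracle architecture is a thin router that holds a reference to a price source and lets that reference be replaced. This is only a sketch: `PriceSource`, `FixedSource`, and `OracleRouter` are hypothetical names, and a real deployment would restrict who may call `set_source`.

```python
from typing import Protocol


class PriceSource(Protocol):
    def latest_price(self) -> float: ...


class FixedSource:
    """Stand-in for a real feed adapter; returns a constant price."""

    def __init__(self, price: float) -> None:
        self._price = price

    def latest_price(self) -> float:
        return self._price


class OracleRouter:
    """Consumers read prices through the router, so the underlying
    source can be swapped without changing the consumers."""

    def __init__(self, source: PriceSource) -> None:
        self._source = source

    def set_source(self, source: PriceSource) -> None:
        # Access control (governance, timelock) is omitted in this sketch.
        self._source = source

    def price(self) -> float:
        return self._source.latest_price()


router = OracleRouter(FixedSource(100.0))
print(router.price())
router.set_source(FixedSource(101.0))  # swap the data source in place
print(router.price())
```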

| Generation | Mechanism | Limitation |
|---|---|---|
| First Gen | Single centralized feed | Single point of failure |
| Second Gen | Decentralized multi-node aggregation | Latency during high congestion |
| Third Gen | Proof-of-stake based reputation systems | Complexity of incentive alignment |

Advanced mitigation architectures utilize modular design to ensure that price feed failure does not compromise the underlying protocol solvency.

Horizon

Future developments in Oracle Failure Mitigation point toward zero-knowledge proofs and decentralized identity for oracle nodes. These technologies will allow the authenticity of price data to be verified without revealing the underlying source nodes, significantly reducing exposure to Sybil attacks. We expect the rise of self-healing protocols that dynamically adjust their reliance on specific oracle sources based on real-time performance metrics.

How the divergence between legacy financial data providers and on-chain oracle networks is reconciled will define the next cycle. My conjecture is that the most robust protocols will eventually move toward probabilistic pricing, where the protocol assigns a confidence score to every price input based on real-time market liquidity and network health.

- Zero-knowledge price proofs enable verifiable, private data transmission to ensure feed integrity.

- Dynamic weight adjustment allows protocols to automatically deprioritize oracle nodes exhibiting high variance or latency.

- Cross-chain oracle bridges standardize price discovery across fragmented blockchain environments to reduce arbitrage opportunities.
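
Dynamic weight adjustment, the second item above, could look roughly like the sketch below. It assumes each source is summarized by a recent error variance and a latency in seconds, and it scores sources by the inverse of a penalized sum of the two; the scoring formula, the `latency_penalty` parameter, and the function names are illustrative assumptions rather than an established scheme.

```python
def source_weights(metrics: dict[str, tuple[float, float]],
                   latency_penalty: float = 0.01) -> dict[str, float]:
    """Map source -> normalized weight, given source -> (variance, latency_s).

    Sources with higher variance or latency receive lower weight.
    """
    if not metrics:
        raise ValueError("no sources")
    raw = {
        name: 1.0 / (1e-9 + variance + latency_penalty * latency_s)
        for name, (variance, latency_s) in metrics.items()
    }
    total = sum(raw.values())
    return {name: score / total for name, score in raw.items()}


def weighted_price(prices: dict[str, float], weights: dict[str, float]) -> float:
    """Combine per-source prices using the normalized weights."""
    return sum(prices[name] * weights[name] for name in prices)


# Source "c" is noisy and slow, so it is heavily deprioritized.
metrics = {"a": (0.01, 1.0), "b": (0.01, 1.0), "c": (1.0, 30.0)}
weights = source_weights(metrics)
print(weights)
print(weighted_price({"a": 100.0, "b": 100.0, "c": 150.0}, weights))
```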

What remains unresolved is the fundamental paradox of decentralized data: how to maintain an objective, immutable truth when the sources of that truth are themselves subject to the inherent volatility and adversarial pressures of the digital asset market?