Essence

Oracle Data Transformation represents the technical bridge between off-chain probabilistic reality and on-chain deterministic execution. In decentralized derivative markets, financial contracts rely on accurate, tamper-proof, and timely external information to trigger liquidations, settle options, or adjust collateral requirements. This process involves capturing raw data from disparate sources, normalizing these inputs through aggregation algorithms, and delivering the refined output into the smart contract environment.

Oracle data transformation ensures that decentralized derivatives maintain parity with global financial reality by converting raw external signals into executable on-chain truth.

The core function addresses the latency and integrity challenges inherent in decentralized systems. Without a robust transformation layer, protocols remain vulnerable to price manipulation, stale data exploits, and fragmented liquidity. The transformation process itself acts as a critical filter, mitigating noise and identifying outliers that would otherwise trigger erroneous contract settlements or systemic liquidation cascades.

Origin

The genesis of Oracle Data Transformation lies in the fundamental design constraint of blockchain networks: their inability to natively access external information.

Early decentralized finance experiments relied on centralized feeds, which created significant counterparty risk and single points of failure. The subsequent shift toward decentralized oracle networks emerged to solve the dependency on trusted intermediaries, moving toward cryptographic verification of data.

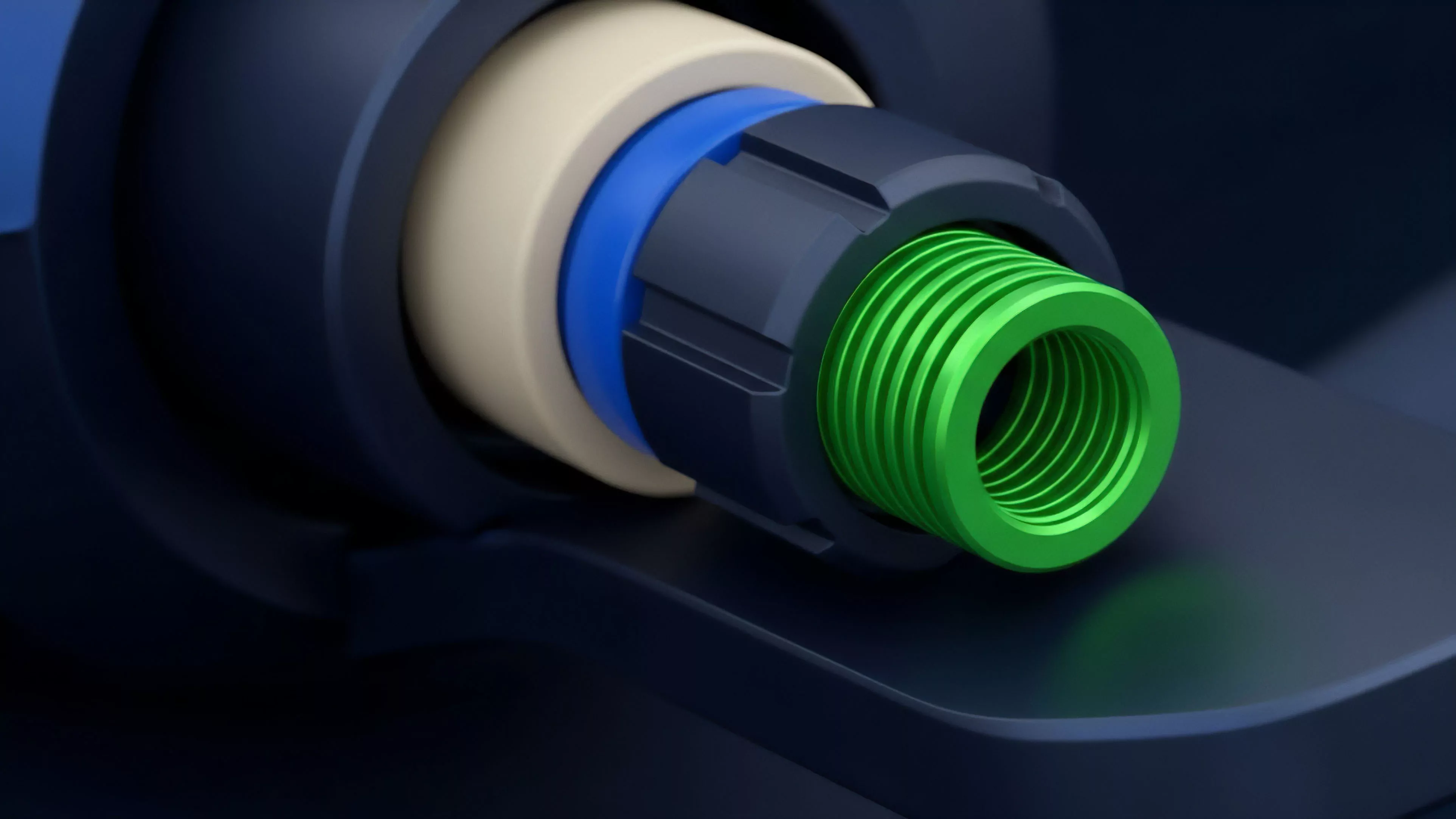

- Data Ingestion refers to the initial collection of price points or event triggers from centralized exchanges and decentralized liquidity pools.

- Aggregation Protocols employ medianizing, volume-weighted averages, or more complex statistical models to derive a single representative price from multiple sources.

- Cryptographic Proofs allow for the verification of data integrity, ensuring that the transformed output has not been altered during transmission.
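The two aggregation methods named in the list above can be sketched in a few lines. This is a minimal illustration rather than any specific oracle network's implementation; the function names and the `(price, volume)` quote format are assumptions made for the example.

```python
from statistics import median

# Each quote is a hypothetical (price, volume) pair reported by one source.

def median_price(quotes):
    """Median of reported prices; a minority of bad sources cannot move it far."""
    return median(price for price, _volume in quotes)

def vwap(quotes):
    """Volume-weighted average price across all sources."""
    total_volume = sum(volume for _price, volume in quotes)
    if total_volume <= 0:
        raise ValueError("total volume must be positive")
    return sum(price * volume for price, volume in quotes) / total_volume

# Illustrative data: three venues agree, one thin venue reports an outlier.
quotes = [(100.0, 5.0), (101.0, 3.0), (150.0, 0.1), (99.0, 2.0)]
median_result = median_price(quotes)  # 100.5
vwap_result = vwap(quotes)            # close to 100.6; the outlier has little volume
```

The median ignores the size of the outlier entirely, while the volume-weighted average discounts it only to the extent that the outlier venue is illiquid. Which behavior is preferable depends on how much the protocol trusts reported volumes.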

This evolution reflects a transition from simplistic data fetching to sophisticated, multi-stage processing architectures. As derivative protocols grew in complexity, the need for high-frequency, low-latency updates necessitated the creation of specialized transformation layers capable of handling massive throughput while maintaining rigorous security standards.

Theory

The mechanics of Oracle Data Transformation rest on the application of statistical rigor to volatile asset streams. In its most advanced form, this involves computing derived metrics such as implied volatility or option Greeks, which are then written directly into the smart contract’s state.

The objective is to minimize the tracking error between the protocol’s internal state and the broader market equilibrium.

| Transformation Stage | Mechanism | Risk Mitigated |
| --- | --- | --- |
| Filtering | Outlier detection | Flash loan manipulation |
| Smoothing | Moving averages | High-frequency noise |
| Normalization | Standardization | Asset class heterogeneity |
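The filtering and smoothing stages in the table can be sketched as follows. The source does not specify which outlier test is used, so the median-absolute-deviation (MAD) rule here is an assumption chosen for illustration, as are the function names and the default threshold.

```python
from statistics import median

def filter_outliers(prices, max_deviations=3.0):
    """Drop prices more than max_deviations MADs away from the median.

    The MAD rule is an illustrative choice, not a documented protocol rule.
    """
    center = median(prices)
    mad = median(abs(p - center) for p in prices)
    if mad == 0:
        # No usable dispersion estimate; keep every observation.
        return list(prices)
    return [p for p in prices if abs(p - center) / mad <= max_deviations]

def moving_average(values, window):
    """Simple trailing moving average; early points use a shorter window."""
    if window <= 0:
        raise ValueError("window must be positive")
    smoothed = []
    for i in range(len(values)):
        chunk = values[max(0, i - window + 1): i + 1]
        smoothed.append(sum(chunk) / len(chunk))
    return smoothed

raw = [100.0, 100.5, 99.5, 101.0, 140.0]
filtered = filter_outliers(raw)            # [100.0, 100.5, 99.5, 101.0]
smoothed = moving_average(filtered, 2)     # [100.0, 100.25, 100.0, 100.25]
```

A larger `window` suppresses more noise but makes the reported price lag the market further, which is the same trade-off between responsiveness and stability that governs the protocol's risk profile.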

The systemic implications of these transformations are profound. When a protocol uses an aggressive transformation algorithm, one that reacts quickly and smooths lightly, it increases capital efficiency but raises the risk of false-positive liquidations. Conversely, a conservative approach protects users from transient volatility but risks insolvency during rapid market movements, because the reported price lags the market.

The interplay between these parameters defines the protocol’s overall risk profile and its ability to withstand adversarial market conditions.

Mathematical rigor in oracle transformation acts as the primary defense against systemic insolvency by ensuring that derivative pricing remains tethered to actual market liquidity.

Consider the subtle tension between data freshness and decentralization. While faster updates reduce arbitrage opportunities for sophisticated actors, they also increase the computational burden on the network, potentially leading to congestion. This is where the pricing model becomes truly elegant, and dangerous if ignored.

Approach

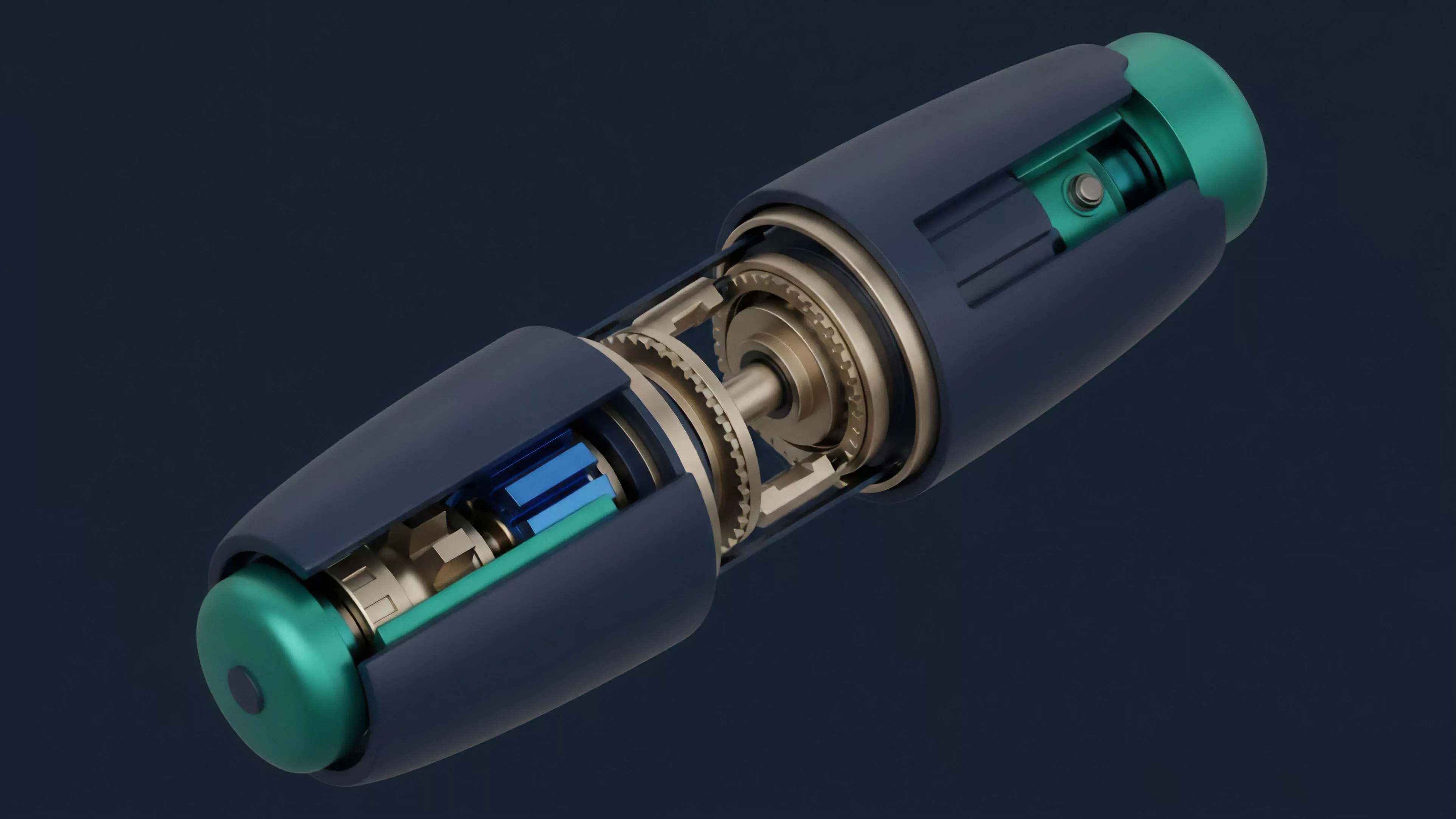

Modern implementations of Oracle Data Transformation leverage decentralized compute environments to process data before it ever touches the settlement layer.

This involves off-chain nodes performing heavy computations, such as calculating Black-Scholes Greeks or volatility surfaces, and submitting the result along with a proof of computation. This architecture shifts the computational load away from the mainnet while maintaining trustless guarantees.
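As a rough sketch of the kind of computation an off-chain node might perform, the following calculates three Black-Scholes Greeks for a European call on a non-dividend-paying asset. The function names and parameter layout are assumptions for illustration, and the proof-of-computation step is deliberately left out.

```python
from math import erf, exp, log, pi, sqrt

def norm_cdf(x):
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + erf(x / sqrt(2.0)))

def norm_pdf(x):
    """Standard normal probability density function."""
    return exp(-0.5 * x * x) / sqrt(2.0 * pi)

def call_greeks(spot, strike, rate, vol, years):
    """Delta, gamma and vega of a European call under Black-Scholes."""
    if min(spot, strike, vol, years) <= 0:
        raise ValueError("spot, strike, vol and years must be positive")
    d1 = (log(spot / strike) + (rate + 0.5 * vol ** 2) * years) / (vol * sqrt(years))
    return {
        "delta": norm_cdf(d1),
        "gamma": norm_pdf(d1) / (spot * vol * sqrt(years)),
        "vega": spot * norm_pdf(d1) * sqrt(years),  # per 1.00 change in vol
    }

# At-the-money call, 20% volatility, one year to expiry, zero rate.
greeks = call_greeks(spot=100.0, strike=100.0, rate=0.0, vol=0.2, years=1.0)
```

Only the resulting numbers, not the intermediate math, would need to reach the settlement layer, which is why this work is a natural candidate for off-chain execution.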

- Node Consensus ensures that a majority of data providers agree on the transformed output before committing to the chain.

- Latency Management prioritizes update frequency for highly volatile assets, narrowing the window in which stale prices can be front-run.

- Fallback Mechanisms trigger automated pauses or circuit breakers when data variance exceeds predefined safety thresholds.
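The consensus and fallback behaviors above can be combined in a single settlement check. The sketch below is one possible arrangement under stated assumptions: the `settle_round` name, the 1% default tolerance, and the strict-majority rule are illustrative choices, not parameters taken from any particular protocol.

```python
from statistics import median

def settle_round(reports, tolerance=0.01):
    """Accept a price only if a strict majority of nodes agree on it.

    reports   -- list of values submitted by individual nodes
    tolerance -- maximum relative distance from the median that still
                 counts as agreement (hypothetical default of 1%)
    """
    paused = {"status": "paused", "price": None}
    if not reports:
        return paused
    mid = median(reports)
    agreeing = [r for r in reports if abs(r - mid) <= tolerance * abs(mid)]
    # Circuit breaker: too much disagreement means no update is committed.
    if 2 * len(agreeing) <= len(reports):
        return paused
    return {"status": "ok", "price": median(agreeing)}

healthy = settle_round([100.0, 100.2, 99.9, 130.0])
dispersed = settle_round([100.0, 110.0, 120.0, 130.0])
```

In the first call three of four nodes fall within tolerance, so the round settles at the median of the agreeing reports. In the second call no majority exists and the function signals a pause instead of committing a price.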

The current landscape emphasizes modularity. Protocols now treat the oracle layer as a plug-and-play component, allowing for the rapid deployment of new derivative products. This modularity enables developers to optimize the transformation pipeline specifically for the asset type, whether it is a stablecoin, a volatile crypto asset, or a real-world asset.

Evolution

The trajectory of Oracle Data Transformation has moved from simple price feeds to complex, state-aware computation engines.

Early systems merely reported a spot price. Today, advanced transformation layers provide context-rich data, including order book depth, funding rates, and cross-venue volume, allowing protocols to make nuanced decisions regarding margin requirements and liquidation thresholds.

Evolution in oracle design demonstrates a shift from basic price reporting to the delivery of complex, context-aware financial state information.

The rise of Zero-Knowledge proofs has enabled a new generation of data transformation. Protocols can now prove that a transformation was performed correctly without revealing the underlying raw data sources, enhancing privacy and reducing the risk of data source exploitation. This evolution is driven by the necessity to maintain competitiveness in an environment where speed and security are the primary determinants of liquidity.

Horizon

The future of Oracle Data Transformation lies in the integration of machine learning for predictive data modeling.

Instead of reacting to past price movements, transformation engines will likely incorporate forward-looking metrics to anticipate volatility regimes. This will allow for dynamic, self-adjusting collateral requirements that automatically tighten during periods of projected instability.
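To make the idea of self-adjusting collateral concrete, the sketch below maps a volatility estimate to a collateral ratio. It substitutes a simple exponentially weighted moving average (EWMA) of squared returns for the machine-learning model the text anticipates, and every name and constant (`decay`, `base_ratio`, `multiplier`, `cap`) is a hypothetical value chosen for illustration.

```python
from math import log, sqrt

def ewma_volatility(prices, decay=0.94):
    """Per-period volatility from an EWMA of squared log returns.

    A stand-in for a forward-looking model, not a predictive one.
    """
    returns = [log(b / a) for a, b in zip(prices, prices[1:])]
    if not returns:
        raise ValueError("need at least two prices")
    variance = returns[0] ** 2
    for r in returns[1:]:
        variance = decay * variance + (1.0 - decay) * r ** 2
    return sqrt(variance)

def collateral_ratio(volatility, base_ratio=1.10, multiplier=5.0, cap=2.0):
    """Raise the required collateral ratio as volatility rises, up to a cap."""
    return min(cap, base_ratio + multiplier * volatility)

calm = [100.0, 100.1, 100.0, 100.1]
stressed = [100.0, 105.0, 97.0, 108.0]
calm_ratio = collateral_ratio(ewma_volatility(calm))
stressed_ratio = collateral_ratio(ewma_volatility(stressed))
```

With these illustrative inputs the stressed series produces a higher required ratio than the calm one, which is the tightening behavior described above. A genuinely predictive engine would replace `ewma_volatility` with a model that forecasts the next regime rather than summarizing the last one.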

| Trend | Implication |
| --- | --- |
| AI-Driven Filtering | Enhanced resilience against sophisticated price manipulation |
| ZK-Oracle Proofs | Increased privacy for high-volume institutional participants |
| Cross-Chain Interoperability | Unified liquidity across fragmented blockchain ecosystems |

The next frontier involves the decentralization of the transformation logic itself. By allowing governance to vote on the specific algorithms used for data normalization, protocols can ensure that the oracle layer remains aligned with the community’s risk appetite. The ultimate objective is to create an immutable, transparent, and self-correcting financial infrastructure that operates with the efficiency of centralized systems but retains the trustless guarantees of decentralized networks.