Essence

An Oracle Data Strategy defines how a decentralized option protocol connects off-chain asset prices to on-chain derivative execution. It governs the selection, aggregation, and verification of the price feeds that determine liquidation thresholds, margin requirements, and settlement values.

The integrity of an option contract relies entirely on the accuracy and availability of external price data during volatile market conditions.

These systems manage the inherent tension between decentralized transparency and the need for high-frequency, tamper-resistant market inputs. Protocols must reconcile the latency of blockchain finality with the rapid movement of underlying spot prices, so that collateralization ratios stay accurate even during extreme liquidity events.

Origin

Early decentralized finance experiments relied upon simplistic, single-source price feeds, which exposed protocols to immediate manipulation and systemic failure. Adversarial actors exploited these vulnerabilities by inducing localized price spikes on thin order books, triggering automated liquidations and draining protocol liquidity pools.

- Manipulation Resistance became the primary design constraint for early architects.

- Decentralized Aggregation models were developed to replace single-point failures with distributed node networks.

- Latency Mitigation emerged as a secondary requirement to align on-chain state with global spot market reality.

This evolution was driven by the necessity to maintain solvency in adversarial environments where profit-seeking agents actively target price-feed vulnerabilities. The transition from centralized reliance to decentralized verification represents the foundational shift in building robust, censorship-resistant derivative infrastructure.

Theory

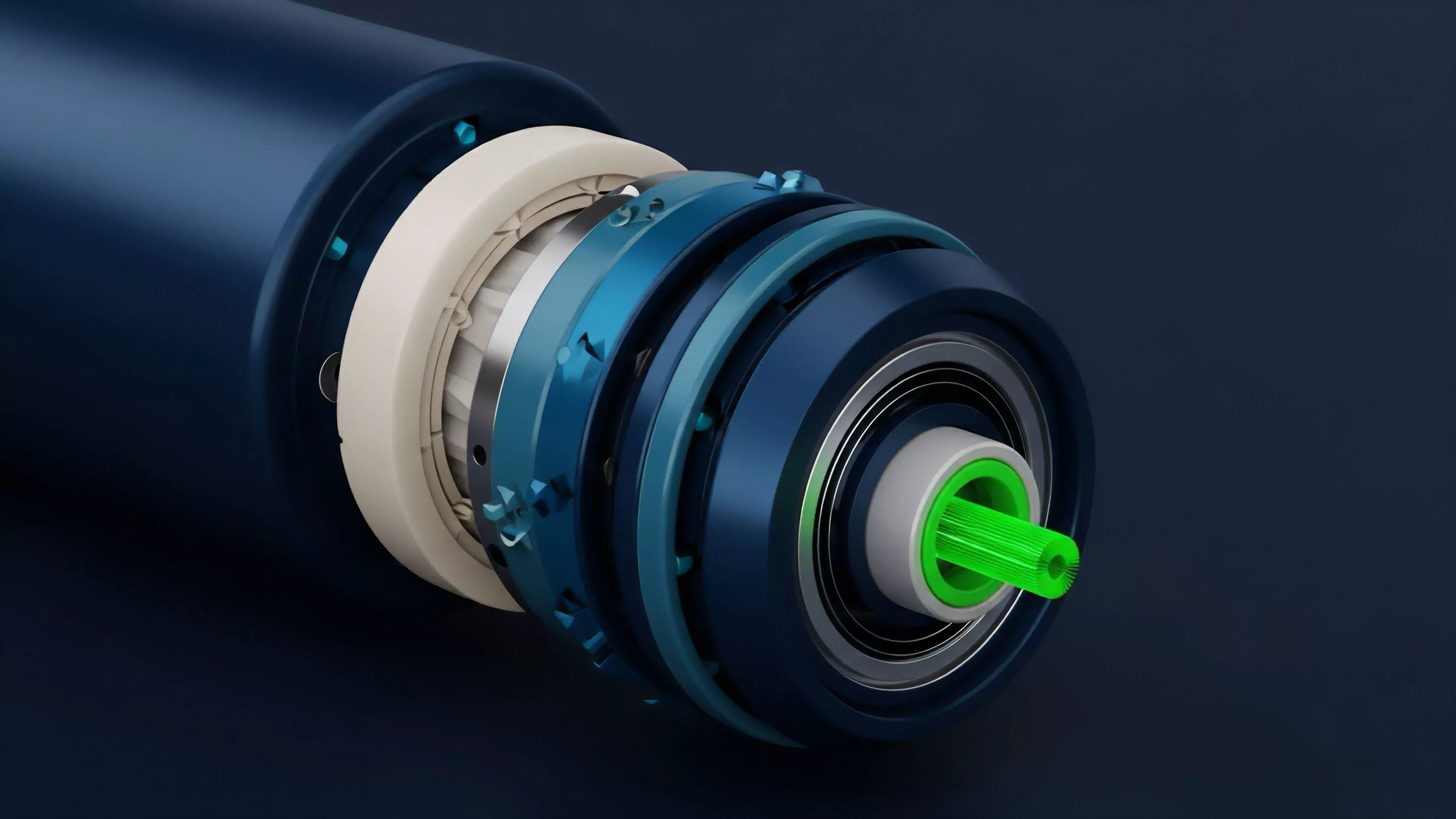

The mechanical structure of an Oracle Data Strategy involves multi-layered data ingestion and consensus-based validation. Algorithms must account for data source quality, historical volatility, and the statistical likelihood of stale or malicious updates.

Mathematical Modeling

Pricing engines use weighted averages and median-based consensus to filter outliers from the data stream. Because a median moves only when a majority of inputs move, this approach limits the influence of any single corrupted source while keeping the aggregated value representative of the broader market.

Effective data aggregation protocols minimize variance while maximizing the speed of state updates for margin-sensitive derivative contracts.
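The outlier filtering described above can be illustrated with a minimal sketch. The function names `aggregate_median` and `aggregate_weighted_median`, the sample prices, and the weights are all hypothetical and are not taken from any specific oracle network; real protocols differ in how they define weights and break ties.

```python
from statistics import median


def aggregate_median(prices):
    """Return the median of the reported prices (hypothetical helper)."""
    if not prices:
        raise ValueError("no price reports")
    return median(prices)


def aggregate_weighted_median(reports):
    """Return a weighted median from (price, weight) pairs.

    The result is the lowest reported price at which the cumulative
    weight reaches at least half of the total weight. Weights are an
    assumption here; they could stand for stake, volume, or reputation.
    """
    if not reports:
        raise ValueError("no price reports")
    ordered = sorted(reports)  # sort by price, ascending
    total = sum(weight for _, weight in ordered)
    if total <= 0:
        raise ValueError("total weight must be positive")
    running = 0
    for price, weight in ordered:
        running += weight
        if running >= total / 2:
            return price


# Four sources agree near 100; one source reports a manipulated 250.
prices = [100.0, 100.2, 99.9, 100.1, 250.0]
robust = aggregate_median(prices)    # 100.1, unaffected by the outlier
naive = sum(prices) / len(prices)    # roughly 130, pulled up by the outlier
```

In this example the single bad report shifts the plain average by about 30 percent but leaves the median unchanged, which is the property the aggregation layer relies on.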

Adversarial Dynamics

System designers treat data feeds as an active attack surface. Protocols implement challenge-response mechanisms in which market participants are incentivized to dispute incorrect data. This game-theoretic approach is designed so that the cost of manipulating the oracle exceeds the potential profit from triggering fraudulent liquidations.
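A simplified way to reason about this incentive condition is an expected-value check. The sketch below assumes a toy model that is not drawn from any particular protocol: a reporter posts a bond, a successful dispute (with probability `detection_probability`) forfeits the bond and cancels the attack, and an undetected attack yields `attack_profit`. All names and parameters are hypothetical.

```python
def attacker_expected_value(attack_profit, bond_at_risk,
                            detection_probability, execution_cost=0.0):
    """Expected payoff of a manipulation attempt under the toy model."""
    if not 0.0 <= detection_probability <= 1.0:
        raise ValueError("detection_probability must be in [0, 1]")
    expected_gain = (1.0 - detection_probability) * attack_profit
    expected_loss = detection_probability * bond_at_risk + execution_cost
    return expected_gain - expected_loss


def minimum_bond(attack_profit, detection_probability, execution_cost=0.0):
    """Smallest bond at which the expected payoff is no longer positive."""
    if not 0.0 < detection_probability <= 1.0:
        raise ValueError("detection_probability must be in (0, 1]")
    needed = ((1.0 - detection_probability) * attack_profit
              - execution_cost) / detection_probability
    return max(0.0, needed)


# With a 50% chance of a successful dispute, a 200,000 bond does not
# deter an attack worth 1,000,000, while a 1,500,000 bond does.
weak = attacker_expected_value(1_000_000, 200_000, 0.5)      # positive
strong = attacker_expected_value(1_000_000, 1_500_000, 0.5)  # negative
```

The model ignores factors such as dispute windows, disputer rewards, and correlated attacks, so it should be read as an illustration of the inequality rather than a sizing rule.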

| Oracle Model | Risk Profile | Latency |
| --- | --- | --- |
| Push-based | High | Low |
| Pull-based | Moderate | High |
| Hybrid-consensus | Low | Moderate |

Approach

Modern implementations utilize hybrid architectures to balance computational efficiency with security. Developers prioritize off-chain computation for heavy data processing, while using on-chain verification for the final, critical settlement inputs.

- Stale Data Protection mechanisms trigger circuit breakers if the most recent update is older than a predefined maximum age.

- Volatility Scaling adjusts the frequency of updates based on real-time market turbulence to maintain precision.

- Liquidity-Weighted Feeds prioritize inputs from high-volume exchanges to reflect true market depth.
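The three mechanisms in the list above can be sketched together. Everything here is an illustrative assumption: the `Quote` type, the `StaleFeedError` exception, the use of recent volume as the liquidity weight, and the inverse-proportional rule for shortening the update interval are hypothetical choices, not a description of a specific protocol.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Quote:
    venue: str
    price: float
    volume: float     # recent traded volume, used as the liquidity weight
    timestamp: float  # seconds since epoch


class StaleFeedError(Exception):
    """Raised when no quote is recent enough (the circuit breaker)."""


def fresh_quotes(quotes, now, max_age_seconds):
    """Drop quotes older than max_age_seconds; trip the breaker if none remain."""
    fresh = [q for q in quotes if now - q.timestamp <= max_age_seconds]
    if not fresh:
        raise StaleFeedError("all quotes exceed the maximum age")
    return fresh


def liquidity_weighted_price(quotes):
    """Volume-weighted average price across venues."""
    total_volume = sum(q.volume for q in quotes)
    if total_volume <= 0:
        raise ValueError("total volume must be positive")
    return sum(q.price * q.volume for q in quotes) / total_volume


def update_interval(base_interval, realized_vol, reference_vol, min_interval):
    """Shorten the update interval as volatility rises above a reference level."""
    if realized_vol <= reference_vol:
        return base_interval
    return max(min_interval, base_interval * reference_vol / realized_vol)


quotes = [
    Quote("venue_a", 100.0, 900.0, 1000.0),
    Quote("venue_b", 110.0, 100.0, 1000.0),
    Quote("venue_c", 50.0, 1000.0, 900.0),  # stale, will be filtered out
]
price = liquidity_weighted_price(fresh_quotes(quotes, now=1010.0, max_age_seconds=30.0))
```

Filtering for freshness before weighting matters in this sketch: the stale `venue_c` quote has the largest volume and would otherwise dominate the result.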

This approach acknowledges the constant stress exerted by automated agents seeking to exploit discrepancies between oracle inputs and actual market prices. The focus remains on maintaining a coherent view of value across disparate liquidity venues, ensuring that derivative instruments remain correctly priced relative to their underlying assets.

Evolution

The transition from simple data polling to complex, reputation-based validator networks marks the current phase of development. Protocols now incorporate real-time cross-chain messaging to verify prices across multiple networks, reducing reliance on single-chain liquidity.

Market participants now demand cryptographic proof of data origin to ensure complete transparency in derivative settlement processes.

The field has moved toward zero-knowledge proof verification, allowing protocols to confirm the accuracy of external data without requiring the full disclosure of all underlying source details. This development mitigates the risk of data leakage while enhancing the speed of secure settlement, reflecting a broader movement toward sophisticated, privacy-preserving financial infrastructure.

Horizon

Future designs will emphasize autonomous, self-healing oracle networks that adapt their own weighting algorithms in response to changing market microstructure. These systems will utilize machine learning to detect anomalies in data streams before they reach the protocol layer, effectively preempting potential exploits.

- Autonomous Weighting algorithms will dynamically adjust based on venue liquidity and historical reliability.

- Cross-Protocol Liquidity will be synthesized into a single, unified risk-adjusted price index.

- Predictive Latency Compensation will allow protocols to account for network congestion before settlement occurs.

The shift toward predictive modeling will redefine the standard for capital efficiency, enabling tighter margin requirements and reduced slippage for users. As these systems mature, reliance on human-governed parameters will diminish, leaving the infrastructure to operate according to strictly defined, mathematically verifiable rules designed to resist both systemic contagion and external manipulation.