Essence

Oracle Data Sources function as the essential bridges between external financial reality and the deterministic execution environment of blockchain protocols. These systems translate off-chain asset pricing, interest rates, and volatility indices into cryptographically verifiable formats. Without these mechanisms, decentralized derivative platforms would remain isolated from global liquidity and real-time market movements, rendering complex instruments such as options or perpetual swaps impossible to price accurately.

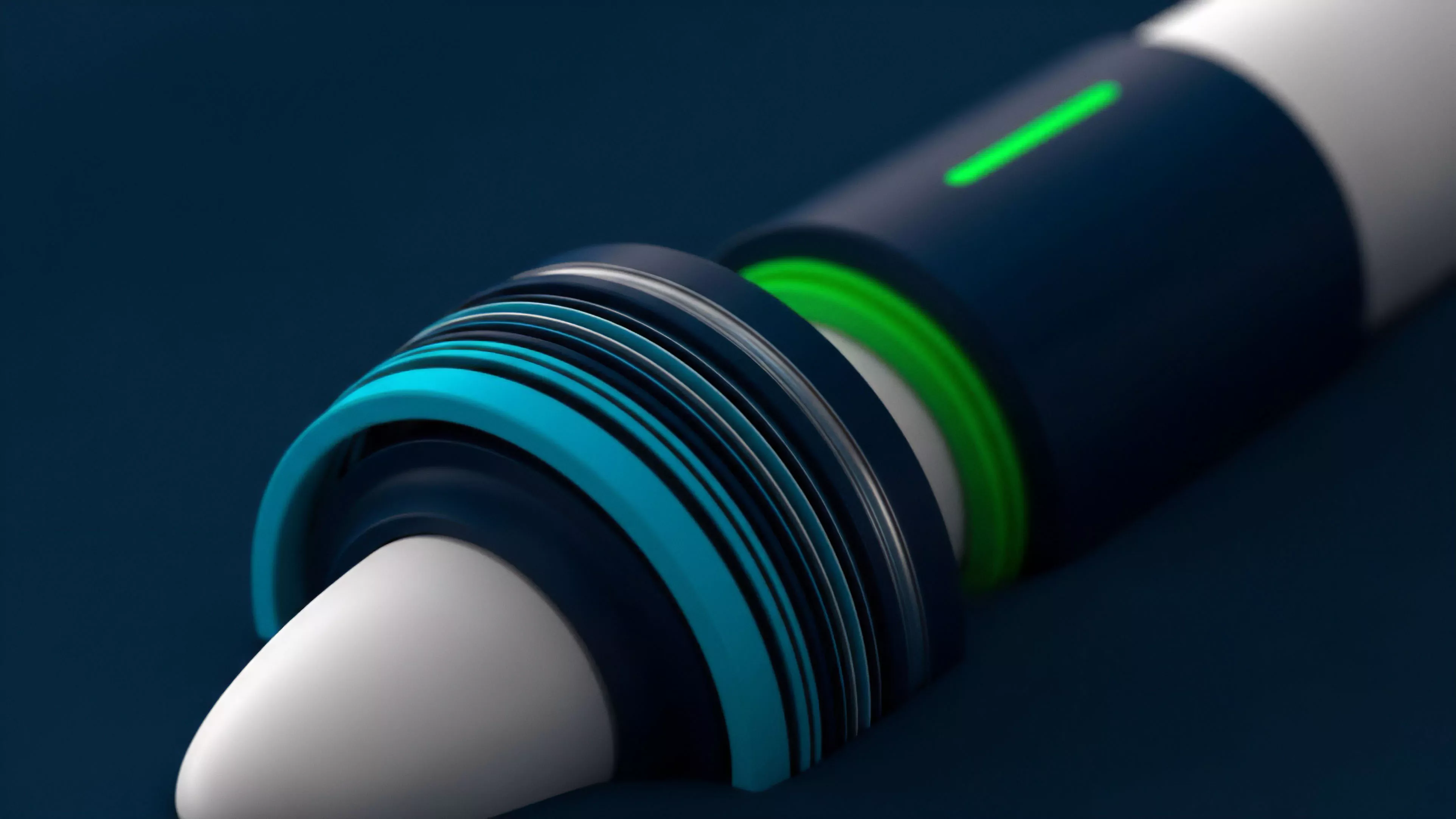

Oracle data sources act as the foundational translation layer that converts external financial reality into machine-readable blockchain inputs.

These providers operate through varied architectural models, ranging from decentralized networks of independent nodes to permissioned validator sets. The integrity of the entire decentralized finance stack rests on the assumption that the data provided is untampered and reflects true market conditions. When this assumption fails, the error propagates almost immediately through liquidation engines and automated margin calls, often leading to rapid insolvency across interconnected protocols.

Origin

The requirement for external data surfaced immediately upon the deployment of the first programmable collateralized debt positions.

Early implementations relied on centralized, single-source feeds, which proved highly susceptible to manipulation and single-point-of-failure vulnerabilities. As derivative complexity increased, the need for robust multi-source aggregation became clear: without it, malicious actors could exploit price discrepancies to trigger artificial liquidations.

| Generation | Data Mechanism | Risk Profile |
|---|---|---|
| First | Centralized API | Single Point of Failure |
| Second | Decentralized Aggregation | Node Collusion |
| Third | Cryptographic Proofs | Complexity Overhead |

The transition from basic price tickers to sophisticated, cryptographically signed data streams represents a shift in how protocols perceive truth. Developers realized that relying on a single off-chain endpoint created a toxic incentive structure for attackers to manipulate that specific data point. This recognition forced the development of consensus-based oracle designs that aggregate multiple data providers, thereby increasing the cost of an attack while ensuring a higher degree of statistical reliability for derivative settlement.

Theory

The mechanics of these data providers revolve around minimizing the latency between global market discovery and on-chain settlement.

Price discovery in crypto derivatives relies heavily on the accuracy of the underlying index, which is typically calculated as a volume-weighted average across major centralized exchanges. When the oracle input diverges from the true market spot price, the resulting basis risk creates opportunities for predatory arbitrage that drains liquidity from the protocol.
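As a rough illustration of the index calculation described above, the sketch below computes a volume-weighted average price from a set of trades and measures how far an oracle value sits from it in basis points. The `Trade` type, the venue labels, and the sample numbers are hypothetical; real index providers also apply venue filters and time windows that are omitted here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Trade:
    venue: str     # hypothetical exchange label
    price: float   # quote-currency price per unit
    volume: float  # base-asset volume traded at that price


def vwap_index(trades: list[Trade]) -> float:
    """Volume-weighted average price across all venues' trades."""
    total_volume = sum(t.volume for t in trades)
    if total_volume <= 0:
        raise ValueError("no volume to weight")
    return sum(t.price * t.volume for t in trades) / total_volume


def divergence_bps(oracle_price: float, reference_price: float) -> float:
    """Signed gap between an oracle value and a reference price, in basis points."""
    return (oracle_price - reference_price) / reference_price * 10_000


trades = [
    Trade("venue_a", 100.0, 50.0),
    Trade("venue_b", 101.0, 30.0),
    Trade("venue_c", 99.0, 20.0),
]
index = vwap_index(trades)
```

With these sample trades the index is 100.1; an oracle still reporting 100.6 would sit roughly 50 basis points above it, which is the kind of gap an arbitrageur can trade against.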

Accurate oracle inputs are the primary defense against systemic liquidation spirals caused by price manipulation.

Protocol design dictates that data is either pushed on-chain by the oracle or pulled on demand by the consuming contract, in both cases under strict validation rules. Pushing data keeps on-chain state current but incurs recurring gas costs, while pulling data on demand reduces congestion but introduces staleness risk if the consumer accepts an old report. The most resilient designs employ a hybrid approach in which an update is triggered when the price deviates from the last reported value by more than a predefined threshold, often combined with a maximum interval between updates (a heartbeat), balancing gas efficiency against data freshness.
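The deviation-threshold rule can be sketched as a single predicate that an off-chain updater evaluates before paying for an on-chain write. The function name and threshold values are hypothetical, and the heartbeat (a forced update after a maximum interval) is an assumption added here; many push-based deployments pair a deviation threshold with one so that a flat market cannot leave the feed unrefreshed indefinitely.

```python
def should_push_update(
    last_pushed_price: float,
    last_pushed_at: float,
    current_price: float,
    now: float,
    deviation_threshold: float = 0.005,  # hypothetical: a 0.5% move triggers an update
    heartbeat_seconds: float = 3600.0,   # hypothetical: force an update at least hourly
) -> bool:
    """Return True if a new on-chain write is justified."""
    # Relative move since the value currently stored on-chain.
    deviation = abs(current_price - last_pushed_price) / last_pushed_price
    if deviation >= deviation_threshold:
        return True
    # Quiet market: still refresh once the heartbeat interval has elapsed.
    return now - last_pushed_at >= heartbeat_seconds
```

A 0.2% move one minute after the last write is skipped, a 0.6% move is pushed immediately, and an unchanged price is still rewritten once an hour has passed.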

The volatility of digital assets demands that these systems operate with extremely low latency. Yet information propagation across a distributed network always takes finite time, creating an unavoidable gap during which the on-chain value reflects a past state of the world. Every derivative platform must manage this inherent latency problem through careful design of margin and liquidation buffers.
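To make the buffer reasoning concrete, the sketch below estimates how far the price can plausibly move while an update is in flight. It assumes log-returns scale with the square root of time (a random-walk model, which understates tail risk in crypto markets); the function name, the 3-standard-deviation default, and the sample volatility are hypothetical.

```python
import math

SECONDS_PER_YEAR = 365 * 24 * 3600


def latency_buffer(annual_vol: float, latency_seconds: float, z: float = 3.0) -> float:
    """Fractional price move a margin buffer should absorb during the oracle lag.

    Under the random-walk assumption, the standard deviation of the move over
    the lag is annual_vol * sqrt(lag / year); z is the number of standard
    deviations of cover (hypothetical default of 3).
    """
    sigma_lag = annual_vol * math.sqrt(latency_seconds / SECONDS_PER_YEAR)
    return z * sigma_lag


buffer = latency_buffer(annual_vol=0.80, latency_seconds=60.0)
```

At 80% annualized volatility and a 60-second lag, three standard deviations come to roughly 0.33% of the price; quadrupling the lag only doubles the buffer because of the square-root scaling.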

Approach

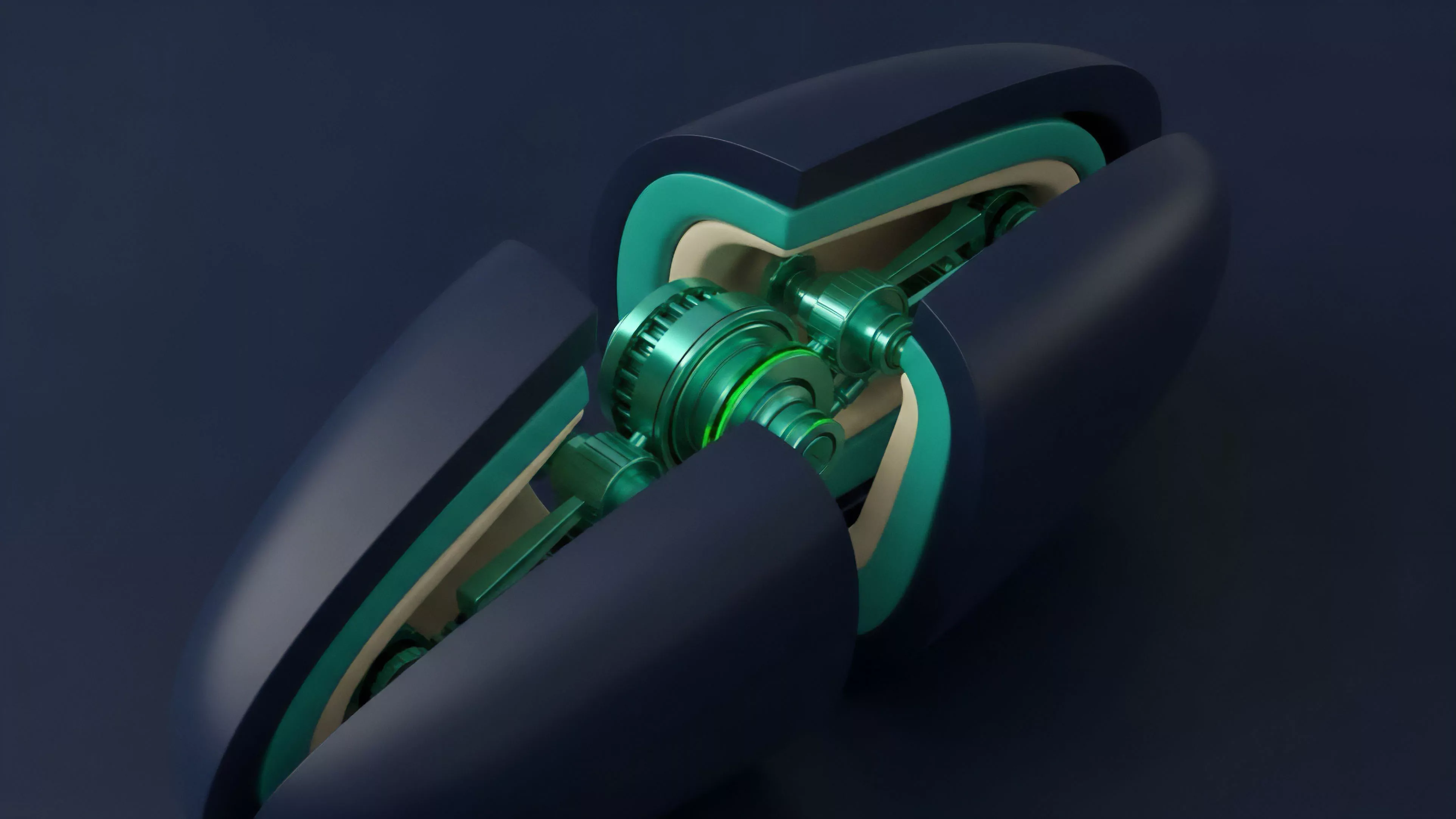

Modern systems utilize sophisticated aggregation logic to filter out noise and malicious outliers.

By employing median-based calculations across multiple nodes, these providers ensure that a single compromised source cannot skew the reported price beyond a predetermined tolerance. This statistical approach is bolstered by cryptographic signatures, which allow the protocol to verify the provenance of every data point before committing it to the smart contract state.
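A minimal sketch of "verify provenance, then take the median" follows. Production oracles use public-key signatures (for example, ECDSA verified inside the contract); because the Python standard library has no such primitive, this sketch substitutes HMAC-SHA256 with one shared key per provider, which authenticates the sender but is not a true signature. The report format, provider names, and keys are hypothetical.

```python
import hashlib
import hmac
import statistics
from dataclasses import dataclass


@dataclass(frozen=True)
class Report:
    provider: str
    price: float
    tag: bytes  # HMAC-SHA256 tag: a stand-in for a real digital signature


def payload(provider: str, price: float) -> bytes:
    # Hypothetical canonical encoding of what the provider attests to.
    return f"{provider}:{price!r}".encode()


def sign(key: bytes, provider: str, price: float) -> Report:
    tag = hmac.new(key, payload(provider, price), hashlib.sha256).digest()
    return Report(provider, price, tag)


def aggregate(reports: list[Report], keys: dict[str, bytes]) -> float:
    """Discard reports that fail authentication, then return the median of the rest."""
    verified = []
    for r in reports:
        key = keys.get(r.provider)
        if key is None:
            continue  # unknown provider: provenance cannot be established
        expected = hmac.new(key, payload(r.provider, r.price), hashlib.sha256).digest()
        if hmac.compare_digest(expected, r.tag):  # constant-time comparison
            verified.append(r.price)
    if not verified:
        raise ValueError("no authenticated reports")
    return statistics.median(verified)


keys = {p: f"key-{p}".encode() for p in "abcde"}  # hypothetical per-provider keys
reports = [
    sign(keys["a"], "a", 100.0),
    sign(keys["b"], "b", 100.2),
    sign(keys["c"], "c", 99.9),
    sign(keys["d"], "d", 150.0),      # compromised node: authentic key, bad price
    sign(keys["e"], "e", 100.1),
    sign(b"attacker-key", "b", 1.0),  # forgery claiming to come from "b"
]
price = aggregate(reports, keys)
```

The forged report never enters the calculation, and although the compromised node's 150.0 is authenticated, the median stays at 100.1, inside the range of the honest reports.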

- Aggregator nodes calculate the weighted median of submitted prices so that outlier submissions, such as a single node reporting an extreme spike, cannot move the result.

- Cryptographic signatures ensure that every piece of data is linked to a specific, verified provider.

- Deviation thresholds trigger updates only when price movements exceed a predefined percentage, reducing gas costs and on-chain write volume.
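The weighted median from the first bullet can be sketched as follows. Weights might come from stake or reputation, but how they are assigned is left open here, and the function name is hypothetical. This version returns the lower weighted median: the smallest value at which the cumulative weight reaches half of the total.

```python
def weighted_median(values: list[float], weights: list[float]) -> float:
    """Lower weighted median: smallest value whose cumulative weight reaches half the total."""
    if not values or len(values) != len(weights):
        raise ValueError("values and weights must be non-empty and of equal length")
    if any(w < 0 for w in weights):
        raise ValueError("weights must be non-negative")
    total = sum(weights)
    if total <= 0:
        raise ValueError("total weight must be positive")
    cumulative = 0.0
    pairs = sorted(zip(values, weights))  # order submissions by price
    for value, weight in pairs:
        cumulative += weight
        if cumulative >= total / 2:
            return value
    return pairs[-1][0]  # defensive fallback against floating-point rounding


# One outlier holding less than half the weight is ignored...
honest_case = weighted_median([99.9, 100.0, 100.2, 250.0], [1.0, 2.0, 1.0, 1.5])
# ...but a source holding a weight majority dictates the result.
captured_case = weighted_median([99.9, 100.0, 100.2, 250.0], [1.0, 1.0, 1.0, 4.0])
```

A source holding less than half of the total weight cannot pull the result outside the range of the other submissions, so the guarantee rests on no single party controlling a weight majority, as the second example shows.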

These architectures also incorporate rigorous monitoring of data freshness. If an oracle fails to update within a set timeframe, the protocol enters a circuit-breaker mode, halting trading to prevent exploitation. This defensive stance acknowledges that a stale price is often more dangerous than no price at all, as it invites traders to execute strategies against an outdated market state.
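A freshness check of this kind might look like the guard below, which refuses to return a price older than a configured limit so that the caller can halt trading instead of acting on it. The `OracleAnswer` type, the exception name, the `try_open_position` helper, and the 300-second limit are hypothetical.

```python
import time
from dataclasses import dataclass
from typing import Optional


class StalePriceError(RuntimeError):
    """Raised when the latest oracle answer is too old to trade against."""


@dataclass(frozen=True)
class OracleAnswer:
    price: float
    updated_at: float  # Unix timestamp (seconds) of the oracle's last update


def checked_price(answer: OracleAnswer, max_age_seconds: float,
                  now: Optional[float] = None) -> float:
    """Return the price only if it is fresh; otherwise trip the circuit breaker."""
    now = time.time() if now is None else now
    age = now - answer.updated_at
    if age > max_age_seconds:
        raise StalePriceError(f"oracle answer is {age:.0f}s old (limit {max_age_seconds:.0f}s)")
    return answer.price


def try_open_position(answer: OracleAnswer, now: float) -> str:
    """A market that halts rather than fill against a stale value."""
    try:
        price = checked_price(answer, max_age_seconds=300.0, now=now)
    except StalePriceError:
        return "halted"
    return f"filled at {price}"
```

Treating staleness as an error rather than a warning forces every caller to decide explicitly what "no price" means, which matches the view that a stale price is more dangerous than none.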

Evolution

Initial designs prioritized simplicity, often utilizing basic request-response patterns that struggled under high load.

As the volume of crypto derivatives grew, the industry shifted toward persistent, high-frequency data streams. This change enabled more complex instruments like binary options and volatility-linked tokens, which require constant, granular price updates to maintain accurate pricing models and risk parameters.

| Feature | Legacy Systems | Current Systems |
|---|---|---|
| Update Frequency | On-demand | Continuous/Threshold |
| Validation | Manual/Basic | Cryptographic Proof |
| Security Model | Trust-based | Adversarial/Game-Theoretic |

The current landscape favors protocols that leverage zero-knowledge proofs to verify the accuracy of the data aggregation process itself. By proving that the median was calculated correctly without revealing the underlying individual submissions, these systems provide a layer of privacy and integrity that was previously unattainable. This development significantly reduces the attack surface, moving the security guarantee from social trust to mathematical verification.

Horizon

Future developments will focus on the integration of decentralized identity and reputation systems for oracle nodes.

By tying the historical performance of a node to a verifiable identity, protocols can dynamically adjust the weight of data inputs based on the reliability of the source. This move toward reputation-weighted aggregation will create a more resilient network where high-performing providers are incentivized to maintain uptime, while malicious or incompetent nodes are automatically marginalized.
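One possible form of reputation weighting is sketched below: each node's relative error against a later settlement price is folded into an exponential moving average, and the node's weight is the inverse of that average. The smoothing factor, the error floor, the prior assigned to unseen nodes, and the class name are all hypothetical choices, not a description of any deployed system.

```python
from dataclasses import dataclass, field


@dataclass
class ReputationBook:
    alpha: float = 0.1          # hypothetical EMA smoothing factor
    prior_error: float = 0.01   # hypothetical error assumed for nodes with no history
    floor: float = 1e-4         # keeps weights finite for a node with zero observed error
    avg_error: dict[str, float] = field(default_factory=dict)

    def record(self, node: str, reported: float, settled: float) -> None:
        """Fold one report's relative error into the node's moving average."""
        err = abs(reported - settled) / settled
        prev = self.avg_error.get(node, err)  # first observation seeds the average
        self.avg_error[node] = (1 - self.alpha) * prev + self.alpha * err

    def weight(self, node: str) -> float:
        """Inverse-error weight: historically accurate nodes count for more."""
        return 1.0 / (self.floor + self.avg_error.get(node, self.prior_error))


book = ReputationBook()
for _ in range(20):
    book.record("accurate", reported=100.0, settled=100.0)
    book.record("sloppy", reported=110.0, settled=100.0)
```

The accurate node ends up with a far larger weight than one that was persistently 10% off, and a newcomer sits in between until it builds a record; weights like these could then feed a weighted-median aggregator.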

Reputation-weighted data aggregation will define the next generation of oracle security and reliability.

Another area of development involves the creation of domain-specific oracles that specialize in niche asset classes, such as real-world assets or complex derivative indices. These specialized providers will offer higher resolution data for specific sectors, reducing the basis risk that currently plagues cross-asset derivative products. As these systems mature, they will become the backbone of a fully automated, global financial layer that operates with the transparency and speed of decentralized networks, while maintaining the rigor required by institutional participants.