Essence

Price integrity represents the terminal layer of trust in any synthetic financial instrument. Oracle Data Security Standards constitute the architectural safeguards protecting the ingestion, transport, and delivery of off-chain data to on-chain settlement engines. These protocols ensure that the valuation of a derivative contract remains tethered to verifiable market reality, preventing the decoupling of price from value that characterizes systemic failure in decentralized markets.

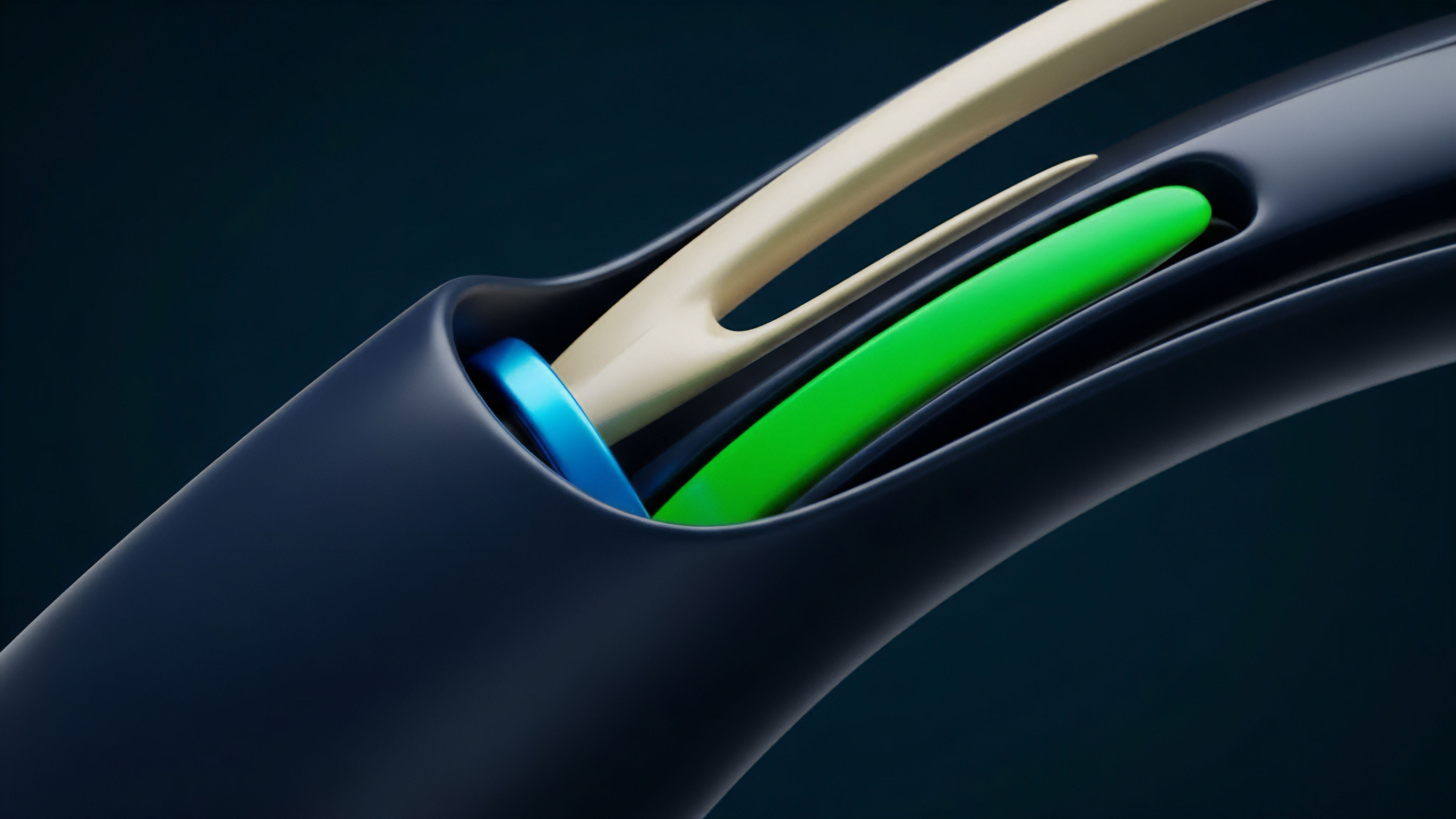

Oracle Data Security Standards define the cryptographic boundary between external market reality and on-chain settlement logic.

The nature of these standards involves a multi-layered verification process where data provenance is as vital as the numerical value itself. By enforcing strict cryptographic signatures and decentralized consensus at the data layer, the system mitigates the risk of arbitrary price manipulation. This architecture transforms a simple API call into a robust financial primitive capable of supporting billions in geared capital.

A secure oracle system is defined by its ability to withstand adversarial environments where market participants are incentivized to corrupt the feed. Oracle Data Security Standards provide the necessary friction against such corruption, ensuring that the cost of attacking the data feed exceeds the potential profit from the resulting market distortion. This economic security model is the baseline requirement for any resilient derivative protocol.

Origin

The early decentralized finance environment operated with a dangerous lack of data rigor.

Initial protocols relied on single-source price feeds or centralized API aggregators, creating obvious single points of failure. The necessity for Oracle Data Security Standards emerged from a series of high-profile exploits in which flash loans were used to manipulate illiquid on-chain price pools, leading to the catastrophic liquidation of healthy positions. As the complexity of instruments increased, the industry moved toward decentralized oracle networks.

This shift was driven by the realization that price discovery is a social and mathematical consensus problem rather than a simple data retrieval task. The birth of Oracle Data Security Standards can be traced to the integration of Byzantine Fault Tolerance into data delivery, where multiple independent nodes must agree on a price before it is accepted by the smart contract. The transition from “best effort” data delivery to formal security standards was accelerated by the demand for institutional-grade derivatives.

Market makers and liquidity providers required guarantees that the settlement price of an option would not be subject to the whims of a single exchange or a compromised server. This led to the formalization of deviation thresholds, heartbeat requirements, and multi-party computation as standard components of the data architecture.

Theory

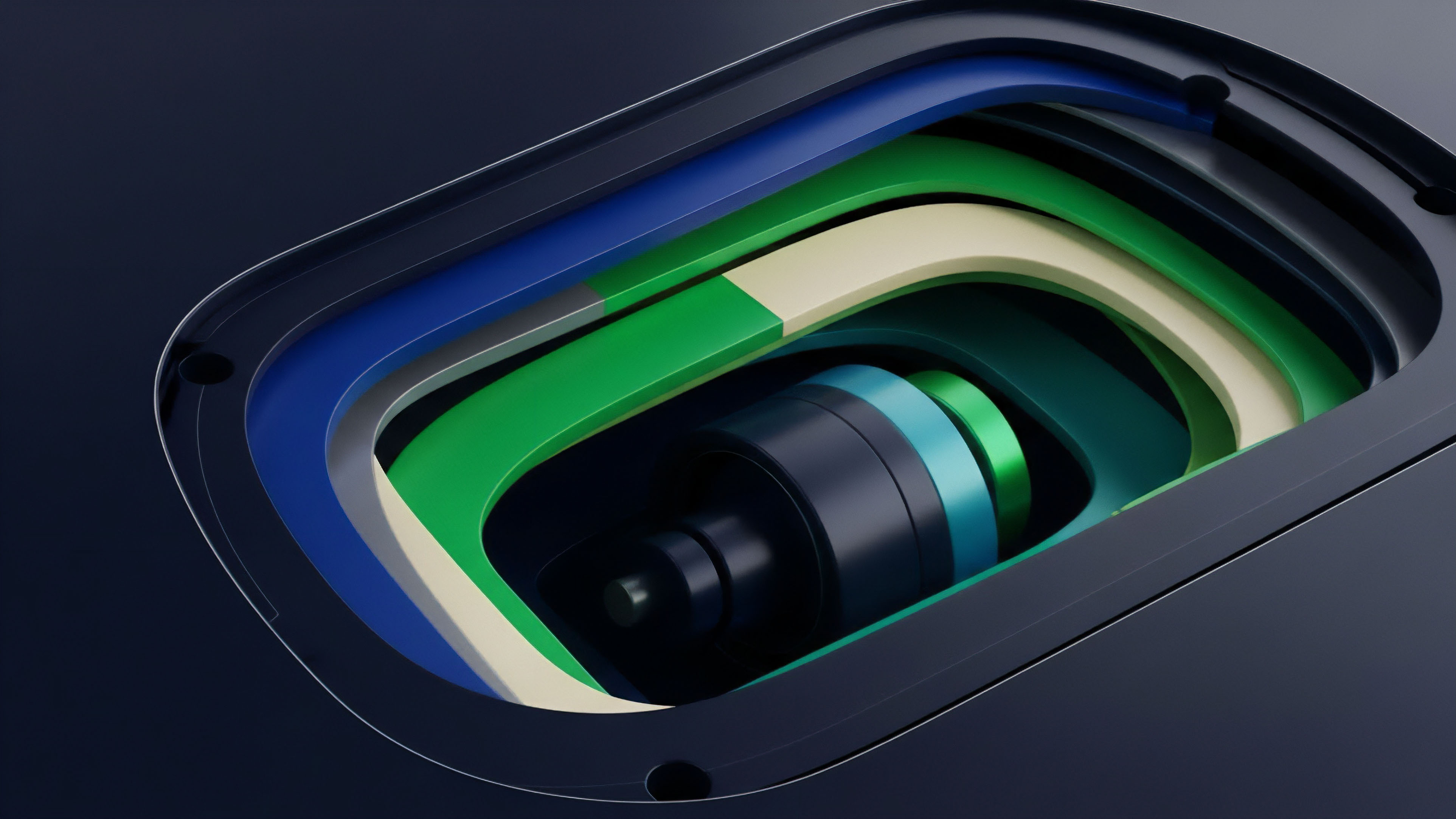

The mathematical foundation of Oracle Data Security Standards rests on the principle of medianization and outlier rejection. By aggregating data from a diverse set of independent sources and applying a median-based consensus, the system ensures that a minority of corrupted nodes cannot steer the final price.

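The median on its own stays bounded by honest values as long as fewer than half of the inputs are corrupted. The Byzantine Fault Tolerant agreement layered on top of it, which lets nodes agree on the set of reports to aggregate, is classically modeled to provide security as long as fewer than one-third of the participants are malicious.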
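A minimal sketch of medianization with outlier rejection, assuming positive prices and a simple relative-deviation filter around the raw median. The function name `aggregate_price` and the 2% tolerance are illustrative assumptions, not parameters of any specific oracle network.

```python
from statistics import median


def aggregate_price(reports, max_rel_dev=0.02):
    """Median of the reports after dropping values far from the raw median.

    `max_rel_dev` is a hypothetical tolerance (2% here), chosen for illustration.
    """
    if not reports:
        raise ValueError("no reports")
    raw = median(reports)
    # Outlier rejection: keep only reports within the tolerance of the raw median.
    kept = [p for p in reports if abs(p - raw) <= max_rel_dev * raw]
    if not kept:
        raise ValueError("reports too dispersed to aggregate")
    return median(kept)


honest = [100.0, 100.2, 99.9, 100.1, 100.05]
# Two of five reporters are corrupted and submit extreme values.
corrupted = honest[:3] + [1_000_000.0, 0.01]

print(aggregate_price(honest))
print(aggregate_price(corrupted))
```

With two of five reports corrupted, the result still lies within the range of honest values, which is the property the median is meant to provide.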

Security Models

| Mechanism | Security Property | Primary Trade-off |
| --- | --- | --- |
| Multi-Party Computation | Private data aggregation without exposure | High computational overhead |
| Threshold Signatures | Single valid signature from multiple nodes | Complex key management |
| Verifiable Random Functions | Unpredictable and verifiable node selection | Latency in selection process |

Price data loses accuracy over time unless it is actively re-verified, much as entropy increases in an unmaintained physical system. This is why feeds in high-frequency trading environments require constant heartbeat updates. The decay calls for a rigorous theoretical framework for “data freshness,” where the security of the standard is tied to the temporal relevance of the information provided and not only to who signed it.
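One way to make the freshness requirement concrete is a staleness guard on the consumer side. The sketch below is an assumption-laden illustration: `PricePoint`, `is_fresh`, `read_price`, and the 60-second heartbeat with a 15-second grace period are hypothetical names and values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PricePoint:
    price: float
    updated_at: float  # unix seconds when the feed was last written


def is_fresh(point, now, heartbeat_s=60.0, grace_s=15.0):
    """True if the point is no older than one heartbeat plus a grace period."""
    age = now - point.updated_at
    # A negative age means a timestamp from the future, which is also rejected.
    return 0 <= age <= heartbeat_s + grace_s


def read_price(point, now, **limits):
    """Return the price, refusing to settle against stale data."""
    if not is_fresh(point, now, **limits):
        raise RuntimeError("stale price: refusing to settle")
    return point.price


point = PricePoint(price=100.0, updated_at=1_000.0)
print(is_fresh(point, now=1_060.0))  # within heartbeat + grace
print(is_fresh(point, now=1_100.0))  # older than 75 seconds
```

Rejecting stale values outright, instead of settling on the last known price, is a design choice; some systems may prefer to pause the market.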

Mathematical consensus in data feeds mitigates the risk of single-point-of-failure price manipulation in high-geared derivative markets.

Adversarial game theory plays a central role in the design of these standards. Participants are subjected to cryptoeconomic incentives where honest reporting is rewarded and malicious behavior results in the slashing of staked collateral. This creates a self-reinforcing loop of integrity.

The theoretical limit of such a system is economic: it remains secure only while the value of the staked assets an attacker would forfeit exceeds the potential profit from a successful manipulation.
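The comparison between stake at risk and attack profit can be sketched as a simple check. This is a simplified model under stated assumptions: the attacker corrupts the cheapest nodes first, needs a strict majority to steer a median, and loses `slash_fraction` of the corrupted stake. All names and numbers are hypothetical.

```python
def cost_of_corruption(stakes, quorum_fraction=0.5, slash_fraction=1.0):
    """Cheapest stake an attacker must put at risk to control the needed nodes."""
    ordered = sorted(stakes)
    # Number of nodes needed to exceed the quorum fraction (strict majority by default).
    needed = int(len(ordered) * quorum_fraction) + 1
    return slash_fraction * sum(ordered[:needed])


def is_economically_secure(stakes, profit_from_manipulation, **model):
    """True while the stake forfeited by an attack exceeds the profit from it."""
    return cost_of_corruption(stakes, **model) > profit_from_manipulation


stakes = [10, 20, 30, 40, 50]  # illustrative stake per node
print(cost_of_corruption(stakes))          # three cheapest nodes: 10 + 20 + 30
print(is_economically_secure(stakes, 50))  # attack is unprofitable
print(is_economically_secure(stakes, 60))  # attack breaks even, so not secure
```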

Threat Vectors

- Sybil Attacks involve a single actor creating multiple identities to dominate the consensus mechanism and dictate the price feed.

- Data Source Corruption occurs when the underlying exchange or API provides false information, bypassing the security of the transport layer.

- Latency Arbitrage exploits the delay between off-chain price movements and on-chain updates to front-run the settlement engine.

- Oracle Extractable Value represents the profit an oracle node can capture by reordering or delaying price updates to favor its own trading positions.

Approach

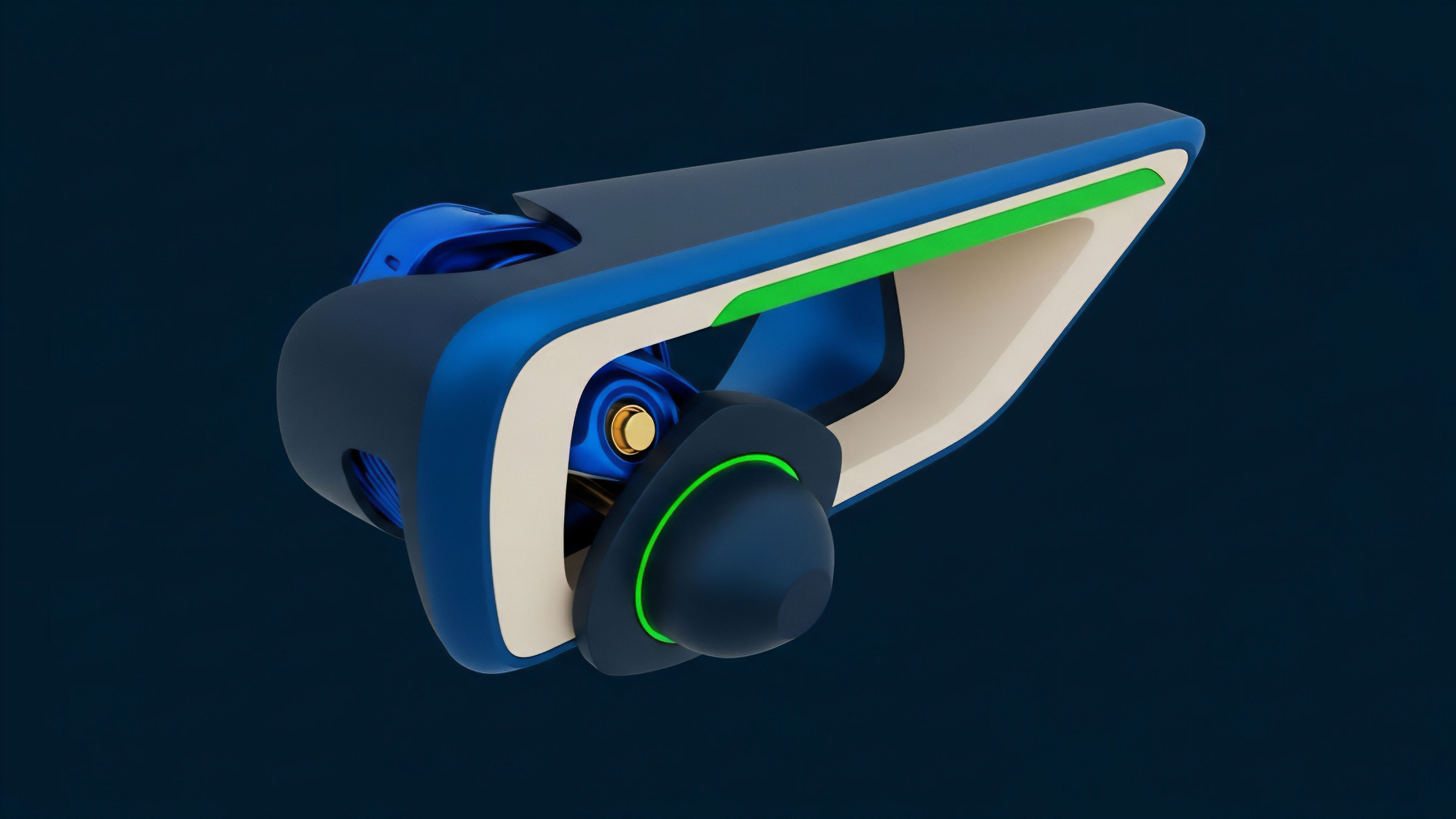

Current implementations of Oracle Data Security Standards focus on Trusted Execution Environments and Verifiable Random Functions to harden the data pipeline. Nodes operate within secure enclaves that are designed to prevent even the operator from tampering with the data being processed. This hardware-level security is paired with cryptographic proofs that the data was retrieved from the intended source without modification.

Validation Pipeline

- Data Acquisition involves pulling information from multiple premium APIs and decentralized exchanges simultaneously.

- Cryptographic Attestation requires each node to sign the retrieved data, creating a permanent record of provenance.

- Aggregation and Sanitization applies statistical filters to remove outliers and calculate the median price.

- On-Chain Verification checks the validity of the aggregate signature and checks the new price against the configured deviation threshold.

- Final Settlement triggers the execution of derivative contracts based on the verified price feed.
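The attestation, aggregation, and verification steps above can be sketched end to end. Two loud assumptions: HMAC with per-node shared keys stands in for real digital or threshold signatures (the Python standard library has no asymmetric signing), and the node registry, quorum of three, and function names are all hypothetical.

```python
import hashlib
import hmac
from statistics import median

# Hypothetical node registry. HMAC keys are a stand-in for real signing keys.
NODE_KEYS = {"node-a": b"key-a", "node-b": b"key-b", "node-c": b"key-c"}


def _message(node_id, price, timestamp):
    return f"{node_id}|{price!r}|{timestamp}".encode()


def sign_report(node_id, price, timestamp):
    """Cryptographic attestation: the node tags the value it observed."""
    msg = _message(node_id, price, timestamp)
    tag = hmac.new(NODE_KEYS[node_id], msg, hashlib.sha256).hexdigest()
    return {"node": node_id, "price": price, "ts": timestamp, "tag": tag}


def verify_report(report):
    """Recompute the tag and compare in constant time."""
    key = NODE_KEYS.get(report["node"])
    if key is None:
        return False
    msg = _message(report["node"], report["price"], report["ts"])
    expected = hmac.new(key, msg, hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, report["tag"])


def settle_price(reports, quorum=3):
    """Verify attestations, require a quorum of distinct nodes, take the median."""
    valid = {r["node"]: r["price"] for r in reports if verify_report(r)}
    if len(valid) < quorum:
        raise ValueError("not enough valid attestations")
    return median(list(valid.values()))


observed = [("node-a", 100.0), ("node-b", 100.2), ("node-c", 99.9)]
reports = [sign_report(node, price, 1_700_000_000) for node, price in observed]
forged = dict(reports[0], price=500.0)  # price altered after signing

print(settle_price(reports))
print(verify_report(forged))
```

A report whose price is altered after signing fails verification, so it cannot count toward the quorum.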

The use of Threshold Signatures allows for the aggregation of multiple node responses into a single, compact signature that is gas-efficient for on-chain verification. This approach reduces the cost of maintaining high-fidelity price feeds while preserving the security of a decentralized network. Protocols now prioritize “pull-based” oracles where the user or the contract initiates the price update, shifting the cost of data delivery to the transaction level and ensuring the most recent data is used for every execution.

Evolution

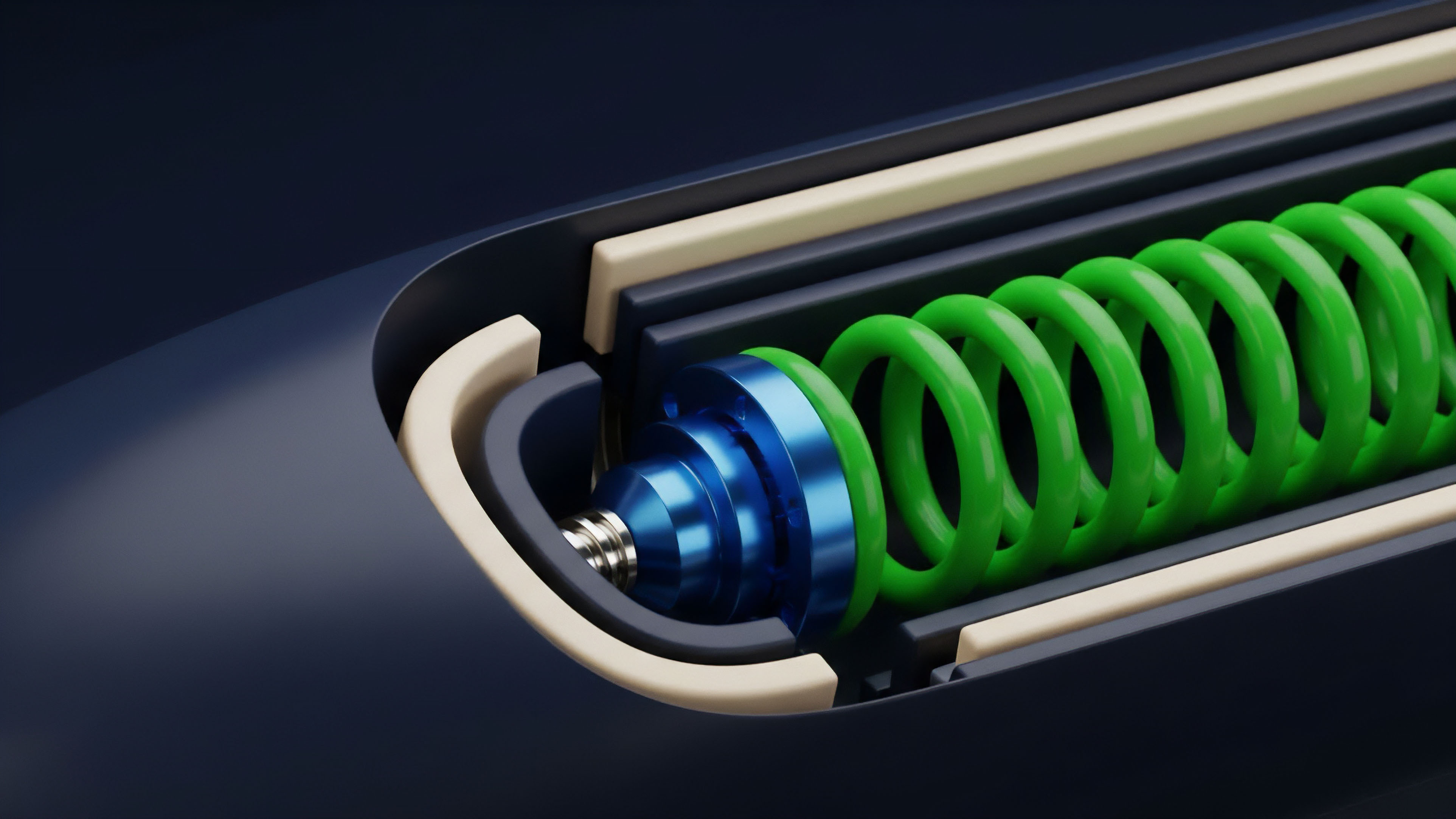

The transition from static, interval-based updates to high-frequency, streaming data has redefined the performance metrics of Oracle Data Security Standards.

Early systems updated at intervals of tens of minutes or upon a significant price deviation, which proved insufficient for the volatility of crypto markets. Modern standards demand sub-second latency and continuous data availability to support complex instruments like perpetual swaps and exotic options.

Performance Metrics

| Metric | Legacy Standard | Modern Standard |
| --- | --- | --- |
| Update Frequency | 10 – 60 Minutes | Sub-second / Real-time |
| Deviation Threshold | 0.5% – 1.0% | 0.05% – 0.1% |
| Node Diversity | 3 – 5 Nodes | 30+ Independent Nodes |
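The interaction between the deviation threshold and the update interval in the table above can be sketched as a push-update rule: write a new price when it has moved enough, or when the heartbeat has expired. The function name and defaults (0.5% deviation, 60-minute heartbeat, taken from the legacy column) are illustrative assumptions.

```python
def should_update(last_price, last_update_ts, new_price, now,
                  deviation=0.005, heartbeat_s=3600.0):
    """Decide whether a node should publish a new on-chain price."""
    # Relative move since the last published value.
    moved = abs(new_price - last_price) / last_price >= deviation
    # Heartbeat: force an update even when the price is flat.
    expired = now - last_update_ts >= heartbeat_s
    return moved or expired


print(should_update(100.0, 0.0, 100.4, now=10.0))    # 0.4% move, heartbeat not due
print(should_update(100.0, 0.0, 100.6, now=10.0))    # 0.6% move crosses the threshold
print(should_update(100.0, 0.0, 100.0, now=3600.0))  # flat price, heartbeat due
```

Tightening `deviation` toward the modern column (for example `0.0005`) makes the same rule fire far more often, which is where the cost pressure on push-style feeds comes from.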

The rise of MEV-aware oracles represents a significant shift in the evolution of these standards. Protocols now design their data feeds to be resistant to front-running by searchers and validators. This involves encrypting price updates until they are committed to a block or using specialized commit-reveal schemes to prevent pre-emptive liquidations based on pending oracle transactions.
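A commit-reveal scheme of the kind mentioned above can be sketched with a salted hash commitment. This is a simplified illustration, not a description of any particular protocol: the function names are hypothetical, and a real deployment would also bind the commitment to the sender and a reveal deadline.

```python
import hashlib
import secrets


def commit(price, salt):
    """Commitment published first: hides the price until the reveal."""
    return hashlib.sha256(salt + repr(price).encode()).hexdigest()


def reveal_is_valid(commitment, price, salt):
    """Check that the revealed price and salt match the earlier commitment."""
    return secrets.compare_digest(commitment, commit(price, salt))


salt = secrets.token_bytes(16)       # random salt prevents guessing the price
commitment = commit(101.25, salt)    # phase 1: only this hash is visible

print(reveal_is_valid(commitment, 101.25, salt))  # phase 2: honest reveal
print(reveal_is_valid(commitment, 99.00, salt))   # a different price is rejected
```

Because only the hash is visible while the update is pending, an observer cannot act on the price before it is committed to a block.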

Horizon

The future of Oracle Data Security Standards lies in the integration of Zero-Knowledge Proofs to verify off-chain state without revealing sensitive data.

This would allow private financial data, such as credit scores or dark pool liquidity, to be used in public derivative markets without disclosing the underlying records. ZK-oracles are expected to provide a level of attestation that makes the current multi-sig models appear primitive.

Future data architectures prioritize zero-knowledge proofs to verify off-chain state without exposing sensitive underlying data sets.

We are moving toward a state of “Oracle-less” design for certain primitives, where the protocol derives its own internal price through automated market maker logic, yet even these systems will require Oracle Data Security Standards as a circuit breaker.

The convergence of cross-chain state proofs and decentralized data networks will enable a unified liquidity layer where an option on one chain can be settled using the verified price of an asset on another, entirely without trust in a centralized intermediary.

The final stage of this evolution is the total automation of the security standard, where AI-driven agents monitor data feeds for anomalous patterns and automatically adjust security parameters in real time. This proactive defense mechanism is intended to safeguard against increasingly sophisticated algorithmic attacks on the financial infrastructure of the future.

Glossary

Implied Volatility Oracles

Threshold Signatures

Data Availability Proofs

Outlier Detection

Staking Incentives

Push-Based Oracles

State Root Verification

Verifiable Random Functions

Data Freshness Guarantees