Essence

Oracle Data Mining in decentralized finance is the systematic extraction of actionable intelligence from distributed ledger state transitions in order to price and manage derivative instruments. It transforms raw, asynchronous blockchain event logs into synchronized, low-latency inputs for automated market makers and risk management engines. By bridging fragmented on-chain activity and structured financial modeling, it supports the construction of synthetic assets that track real-world volatility more precisely than static, time-delayed price feeds.

Oracle data mining provides the foundational mechanism for converting decentralized transaction history into structured, actionable financial signals.

The primary function involves parsing, filtering, and aggregating heterogeneous data points (such as liquidation triggers, funding rate fluctuations, and order book depth) to inform option pricing models. Unlike traditional data warehouses, this process operates within an adversarial environment where information asymmetry dictates profitability. The architecture requires cryptographic verification of the data pipeline to ensure that the inputs driving automated execution remain tamper-proof and resistant to manipulation by malicious actors seeking to trigger artificial liquidations or price discrepancies.

Origin

The emergence of Oracle Data Mining stems from the limitations inherent in early decentralized exchange designs that relied exclusively on exogenous, centralized price feeds.

Developers realized that internalizing the data discovery process directly within the protocol layer allowed for more resilient, self-contained financial systems. This evolution mirrors the historical progression of quantitative finance, where the need for proprietary, high-speed data acquisition drove the development of specialized trading infrastructures.

- Blockchain State History: Serving as the immutable ledger for all trade executions, providing the raw substrate for retrospective analysis.

- Decentralized Liquidity Pools: Acting as the primary source for real-time order flow data and slippage metrics.

- Validator Consensus Mechanisms: Ensuring the integrity of the state updates that feed into the mining process.

Early implementations prioritized simplicity, often utilizing basic volume-weighted average price calculations to determine settlement values. As the complexity of derivative instruments increased, the demand for more sophisticated extraction techniques grew, necessitating a transition toward decentralized, multi-node data validation networks. This shift moved the industry away from reliance on single points of failure, grounding price discovery in the collective activity of the network itself rather than external, potentially compromised data providers.
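The volume-weighted average price mentioned above can be sketched in a few lines. This is a minimal illustration, not any protocol's actual settlement code; the `Trade` type and the sample values are assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Trade:
    price: float   # execution price in quote units (hypothetical field)
    volume: float  # traded size in base units (hypothetical field)


def vwap(trades):
    """Volume-weighted average price: sum(price * volume) / sum(volume)."""
    total_volume = sum(t.volume for t in trades)
    if total_volume <= 0:
        raise ValueError("VWAP is undefined without positive traded volume")
    return sum(t.price * t.volume for t in trades) / total_volume


# Illustrative trades inside one settlement window.
trades = [Trade(100.0, 2.0), Trade(102.0, 1.0), Trade(101.0, 1.0)]
settlement_price = vwap(trades)
print(settlement_price)  # 100.75
```

Because every trade is weighted only by its size, a single large wash trade can move this value, which is one reason the text describes a later move toward multi-node validation.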

Theory

The theoretical framework governing Oracle Data Mining rests upon the application of stochastic calculus to the high-frequency, granular data streams extracted from blockchain state transitions.

By modeling market participants as agents in a game-theoretic environment, analysts can derive the implied volatility surface of decentralized assets with significantly reduced latency. This quantitative approach allows for the dynamic adjustment of margin requirements and option premiums based on the current state of liquidity, rather than lagging, off-chain benchmarks.

Stochastic modeling of on-chain event streams allows for the dynamic calibration of risk parameters in decentralized derivative protocols.
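As a rough illustration of calibrating a risk parameter from an on-chain price stream, the sketch below estimates realized volatility from log returns and scales a margin requirement with it. It is a deliberately simple stand-in for the stochastic models the text refers to; the function names, the linear scaling rule, and every default value are assumptions.

```python
import math
import statistics


def realized_volatility(prices, periods_per_year):
    """Annualized sample standard deviation of log returns."""
    if len(prices) < 3:
        raise ValueError("need at least three prices to estimate volatility")
    returns = [math.log(b / a) for a, b in zip(prices, prices[1:])]
    return statistics.stdev(returns) * math.sqrt(periods_per_year)


def margin_requirement(vol, base_margin=0.05, reference_vol=0.5, cap=0.5):
    """Scale the base margin linearly with volatility above a reference level.

    The margin never drops below base_margin and never exceeds cap.
    All thresholds here are hypothetical.
    """
    scaled = base_margin * max(1.0, vol / reference_vol)
    return min(scaled, cap)


# Hourly prices (illustrative), annualized with 24 * 365 periods per year.
prices = [2000.0, 2010.0, 1995.0, 2030.0, 2005.0]
vol = realized_volatility(prices, periods_per_year=24 * 365)
print(vol, margin_requirement(vol))
```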

| Metric | Application |
| --- | --- |
| State Transition Velocity | Latency-sensitive volatility estimation |
| Liquidation Queue Depth | Tail-risk assessment for margin engines |
| Funding Rate Asymmetry | Arbitrage-driven price discovery modeling |

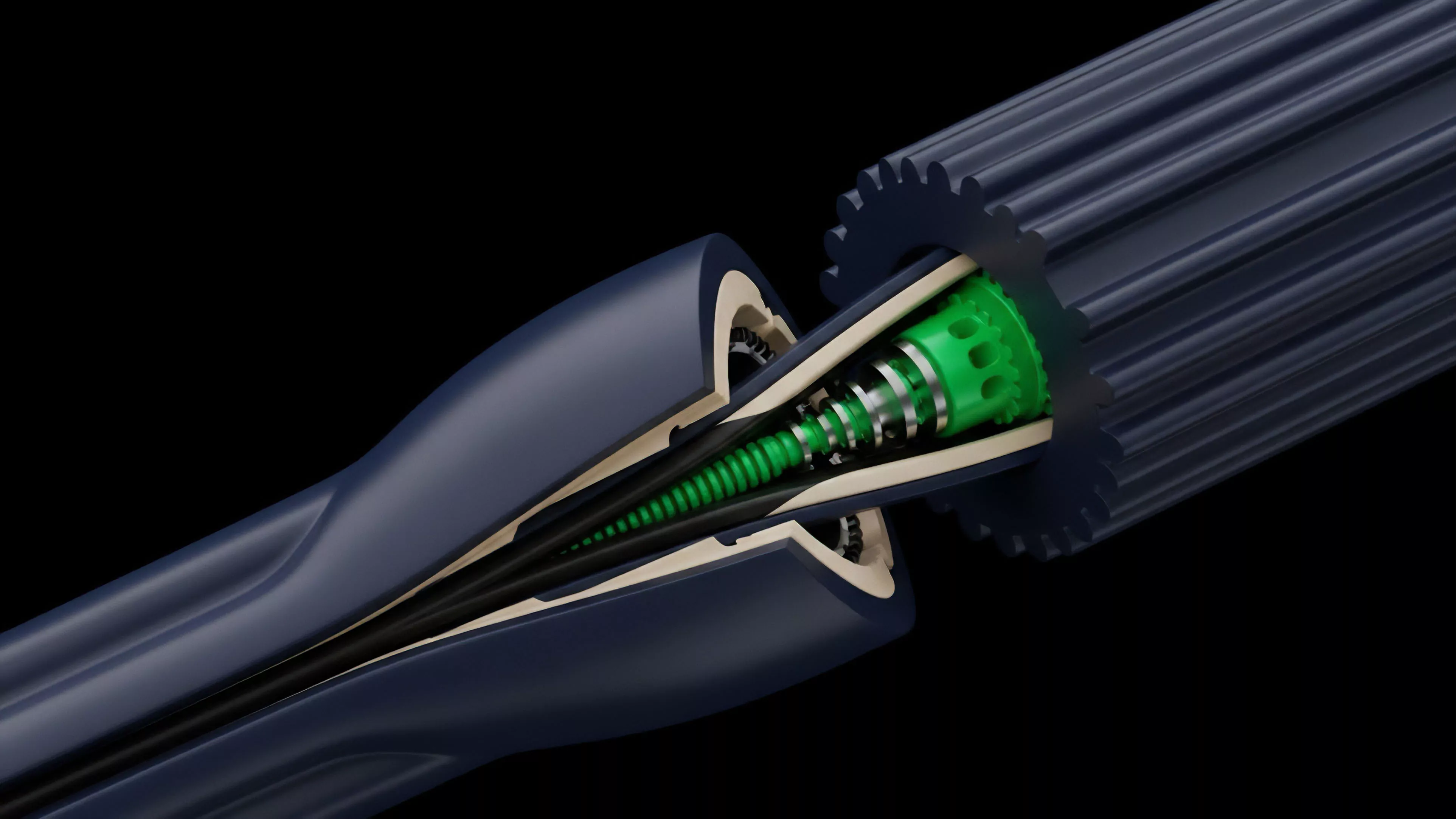

The internal structure of these mining operations typically follows a tiered architecture. First, a data-parsing layer decodes raw event logs and transaction data into structured, human-readable financial events. Second, a computational layer applies statistical filters to remove noise and identify significant market shifts. Finally, an execution layer translates these insights into updated protocol parameters. This pipeline must maintain strict synchronization with the underlying consensus mechanism to prevent stale data from distorting the pricing of derivative instruments.
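The three tiers can be sketched as three small functions. This is a hedged illustration only: the log layout (a `block` number and a hex-encoded, 8-decimal fixed-point `data` field), the median-based noise filter, and the block-age staleness rule are all assumptions chosen for the example.

```python
from dataclasses import dataclass
from statistics import median


@dataclass(frozen=True)
class PriceEvent:
    block: int
    price: float


def parse_layer(raw_logs):
    """Decode raw log records into typed events (hypothetical log layout)."""
    return [
        PriceEvent(block=log["block"], price=int(log["data"], 16) / 1e8)
        for log in raw_logs
    ]


def filter_layer(events, max_deviation=0.05):
    """Drop events priced more than max_deviation away from the median."""
    if not events:
        return []
    mid = median(e.price for e in events)
    return [e for e in events if abs(e.price - mid) <= max_deviation * mid]


def execution_layer(events, head_block, max_age_blocks=5):
    """Return an updated mark price, or None when every event is stale."""
    fresh = [e for e in events if head_block - e.block <= max_age_blocks]
    if not fresh:
        return None
    return median(e.price for e in fresh)


raw_logs = [
    {"block": 90, "data": hex(199_900_000_000)},   # stale by the time of block 100
    {"block": 98, "data": hex(200_000_000_000)},
    {"block": 99, "data": hex(200_100_000_000)},
    {"block": 100, "data": hex(260_000_000_000)},  # outlier
]
events = filter_layer(parse_layer(raw_logs))
mark = execution_layer(events, head_block=100)
print(mark)  # 2000.5
```

Returning `None` when all inputs are stale leaves the decision to the caller, which can then keep the previous parameters rather than price against outdated data.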

Approach

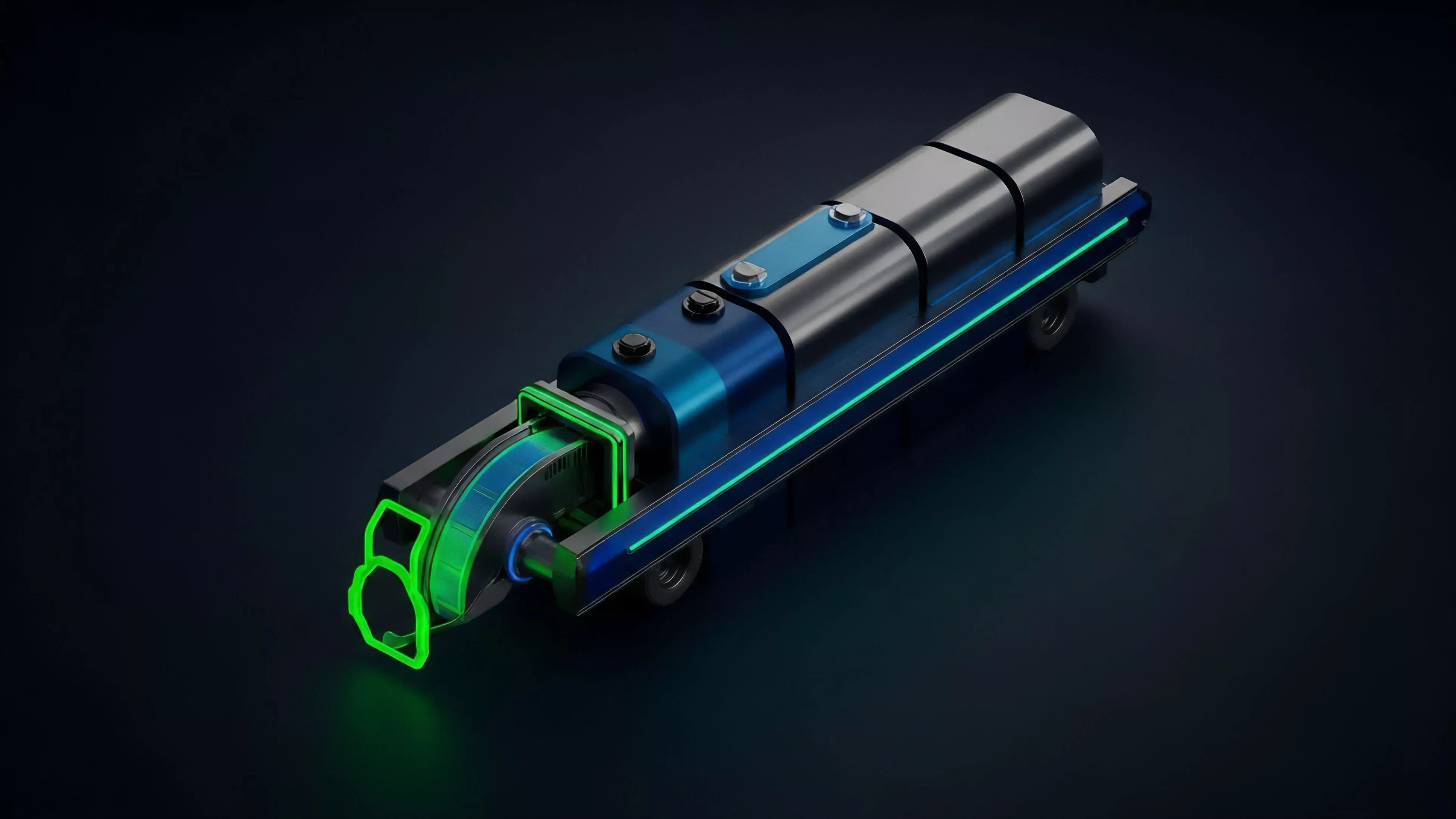

Current implementations of Oracle Data Mining leverage distributed computing networks to achieve consensus on the validity of extracted data. These networks incentivize independent nodes to index and process blockchain state data. This decentralized approach mitigates the single-point-of-failure risk associated with centralized data providers while enhancing the robustness of the financial instruments that rely on these inputs.

- Node Incentive Structures: Governance models designed to align the financial interests of data providers with the accuracy of the mined information.

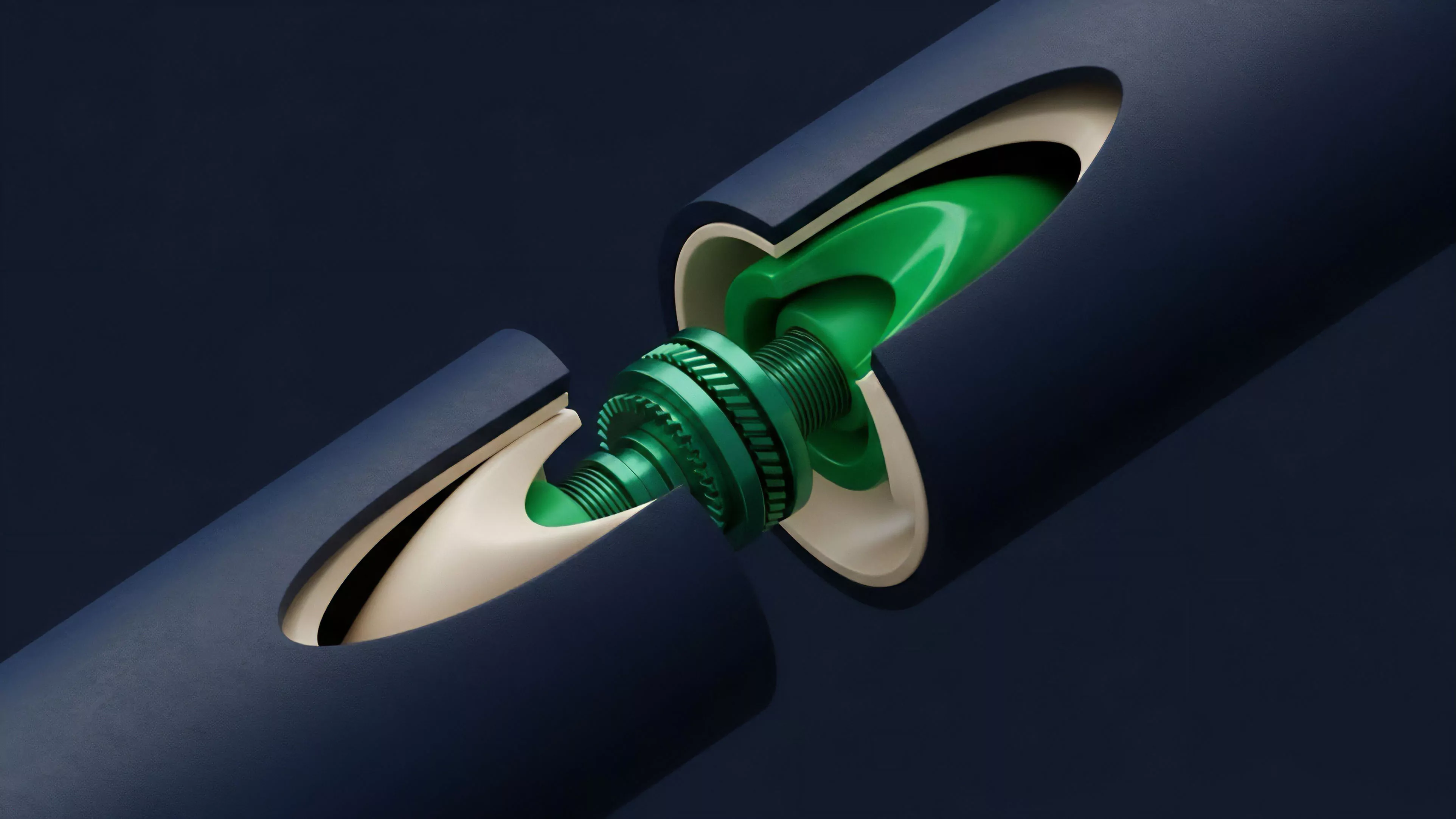

- Zero-Knowledge Proof Integration: Technical mechanisms that verify the correctness of processed data without revealing the underlying sensitive transaction history.

- Dynamic Weighting Algorithms: Adaptive logic that adjusts the influence of specific data sources based on their historical reliability and latency performance.
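One way to picture dynamic weighting is a weighted median in which each source's weight is its reliability score discounted by how old its report is. The sketch below is an assumption-laden illustration: the `SourceReport` fields, the exponential half-life discount, and the sample values are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SourceReport:
    price: float
    reliability: float  # historical accuracy score in [0, 1] (hypothetical)
    latency_s: float    # seconds since the source last updated


def source_weight(report, latency_half_life_s=10.0):
    """Reliability, halved for every latency_half_life_s seconds of delay."""
    return report.reliability * 0.5 ** (report.latency_s / latency_half_life_s)


def weighted_median(reports):
    """Smallest price at which the cumulative weight reaches half the total."""
    weighted = sorted((r.price, source_weight(r)) for r in reports)
    total = sum(w for _, w in weighted)
    if total <= 0:
        raise ValueError("no source carries positive weight")
    cumulative = 0.0
    for price, weight in weighted:
        cumulative += weight
        if cumulative >= total / 2:
            return price


reports = [
    SourceReport(price=100.0, reliability=0.9, latency_s=0.0),
    SourceReport(price=101.0, reliability=0.8, latency_s=0.0),
    SourceReport(price=150.0, reliability=0.2, latency_s=30.0),  # slow, unreliable
]
print(weighted_median(reports))  # 100.0
```

A median is used here instead of a weighted mean so that a single low-weight outlier cannot drag the result toward itself.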

A critical challenge involves managing the trade-offs between computational overhead and data precision. Excessive filtering can introduce unwanted lag, while insufficient processing exposes the protocol to market manipulation. Advanced strategies now incorporate machine learning models that predict periods of high volatility, allowing the system to pre-emptively tighten margin thresholds and increase the sampling frequency of the mining process.

This proactive stance is essential for maintaining systemic stability during periods of extreme market stress.
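The idea of tightening margins and sampling more often when volatility is expected to rise can be illustrated with a much simpler forecaster than a machine learning model. The sketch below swaps in an exponentially weighted moving average (EWMA) of squared returns; the function names, the decay factor, and every threshold are assumptions.

```python
import math


def ewma_variance(returns, decay=0.94):
    """EWMA variance forecast, seeded with the first squared return."""
    if not returns:
        raise ValueError("need at least one return")
    variance = returns[0] ** 2
    for r in returns[1:]:
        variance = decay * variance + (1.0 - decay) * r ** 2
    return variance


def risk_settings(returns, calm_vol=0.01, base_interval_s=12.0,
                  min_interval_s=1.0, base_margin=0.05, max_margin=0.25):
    """Shorten the sampling interval and raise margin as forecast vol grows."""
    vol = math.sqrt(ewma_variance(returns))
    stress = max(1.0, vol / calm_vol)  # 1.0 means calm conditions
    return {
        "sampling_interval_s": max(min_interval_s, base_interval_s / stress),
        "margin_ratio": min(max_margin, base_margin * stress),
    }


calm = risk_settings([0.001] * 10)
stressed = risk_settings([0.04] * 10)
print(calm)
print(stressed)
```

The floor on the sampling interval and the cap on the margin ratio reflect the trade-off described above: sampling faster costs computation, and margin cannot grow without bound.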

Evolution

The trajectory of Oracle Data Mining has moved from basic, reactive index calculation to sophisticated, predictive analytics. Initially, protocols treated on-chain data as a static repository, extracting information only when a settlement event occurred. This limited the ability of decentralized derivatives to respond effectively to rapid shifts in market conditions.

The transition toward real-time, streaming data analysis has redefined the capabilities of these financial instruments.

The evolution of data extraction techniques has enabled a transition from reactive settlement to predictive, high-frequency risk management.

| Stage | Data Methodology | Systemic Capability |
| --- | --- | --- |
| Foundational | Periodic batch extraction | Static settlement |
| Intermediate | Real-time event monitoring | Dynamic margin adjustment |
| Advanced | Predictive state modeling | Proactive systemic risk mitigation |

As the ecosystem matured, the integration of cross-chain data mining became a standard requirement. Protocols now aggregate state information from multiple, interoperable blockchains to construct a more comprehensive view of liquidity and volatility. This broader scope significantly improves the pricing accuracy of complex options and synthetic assets.

The shift also reflects a growing recognition that isolated, single-chain data sets are insufficient for modeling the interconnected nature of global digital asset markets.
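A minimal sketch of cross-chain aggregation, assuming each chain reports a single price and a liquidity figure for the same asset: prices are combined with liquidity weights, and the largest relative gap from the combined price is reported as a simple dispersion signal. The chain names, the snapshot layout, and the numbers are hypothetical.

```python
def aggregate_cross_chain(snapshots):
    """Combine per-chain (price, liquidity) pairs into one market view.

    Returns (liquidity-weighted price, total liquidity, max relative deviation).
    """
    total_liquidity = sum(liq for _, liq in snapshots.values())
    if total_liquidity <= 0:
        raise ValueError("no liquidity reported on any chain")
    price = sum(p * liq for p, liq in snapshots.values()) / total_liquidity
    dispersion = max(abs(p - price) / price for p, _ in snapshots.values())
    return price, total_liquidity, dispersion


snapshots = {
    "chain_a": (100.0, 300.0),  # (price, liquidity), illustrative values
    "chain_b": (102.0, 100.0),
}
price, liquidity, dispersion = aggregate_cross_chain(snapshots)
print(price, liquidity, dispersion)
```

A large dispersion value suggests the chains disagree, which a pricing engine could treat as a reason to widen spreads or wait for more data.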

Horizon

Future developments in Oracle Data Mining will likely focus on the integration of hardware-level acceleration and more resilient cryptographic verification. The demand for sub-millisecond price updates will drive the adoption of specialized execution environments that can process state transitions with minimal latency. This technical advancement will enable the creation of decentralized derivative products that rival the efficiency and sophistication of traditional, high-frequency trading platforms.

- Hardware-Accelerated Mining: Utilizing trusted execution environments to secure the data extraction pipeline at the processor level.

- Autonomous Risk Calibration: AI-driven models that automatically adjust protocol parameters in response to shifting global macro-crypto correlations.

- Privacy-Preserving Analytics: Advanced cryptographic techniques allowing for the extraction of market-wide signals without compromising individual user privacy or trade secrecy.

The ultimate goal remains the construction of a self-sustaining financial architecture where price discovery and risk management occur entirely on-chain, free from external dependencies. This vision necessitates a profound shift in how we conceive of data ownership and verification within decentralized systems. As these technologies mature, the distinction between on-chain and off-chain financial intelligence will continue to blur, leading to a more unified and efficient global market for digital assets.