Essence

Oracle Data Compliance functions as the structural verification layer for external information entering decentralized financial protocols. It encompasses the cryptographic and procedural methodologies required to ensure that off-chain data feeds (price indices, interest rate benchmarks, or collateral valuations) adhere to predefined standards of accuracy, latency, and source integrity. This framework mitigates the risk of manipulation within automated market makers and lending protocols that rely on exogenous variables for execution.

Oracle Data Compliance serves as the foundational mechanism for maintaining truth in decentralized financial environments by validating external data inputs.

The need for this discipline arises from the inherent friction between deterministic smart contract logic and the non-deterministic nature of real-world markets. When protocols use synthetic assets or leveraged derivatives, the data feed becomes the most exposed attack surface. Oracle Data Compliance transforms raw data streams into trusted inputs through:

- Data Sanitization, which removes outliers and anomalous noise from aggregated feed sources.

- Latency Threshold Monitoring, which ensures that stale data does not trigger erroneous liquidations or arbitrage opportunities.

- Source Provenance Auditing, which verifies the cryptographic signature of the primary data provider against a whitelist of registered identities.
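The three checks listed above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a reference implementation: the `Report` type, the function names, and the default thresholds are all hypothetical, the outlier rule (relative distance from the median) is just one simple choice, and an HMAC with a per-provider key stands in for the public-key signature a real oracle network would verify.

```python
import hashlib
import hmac
import statistics
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class Report:
    """One price report from a data provider (hypothetical structure)."""
    provider: str
    price: float
    timestamp: float   # seconds since epoch
    signature: str     # hex HMAC; a stand-in for a real digital signature


def sign(key: bytes, provider: str, price: float, timestamp: float) -> str:
    """Produce the stand-in signature over the report fields."""
    message = f"{provider}|{price}|{timestamp}".encode()
    return hmac.new(key, message, hashlib.sha256).hexdigest()


def has_valid_provenance(report: Report, whitelist: Dict[str, bytes]) -> bool:
    """Source provenance: provider must be whitelisted and the signature must match."""
    key = whitelist.get(report.provider)
    if key is None:
        return False
    expected = sign(key, report.provider, report.price, report.timestamp)
    return hmac.compare_digest(expected, report.signature)


def is_fresh(report: Report, now: float, max_age: float) -> bool:
    """Latency threshold: reject reports older than max_age seconds (or from the future)."""
    return 0 <= now - report.timestamp <= max_age


def drop_outliers(prices: List[float], max_rel_dev: float) -> List[float]:
    """Sanitization: keep prices within max_rel_dev (a fraction) of the median."""
    if not prices:
        return []
    mid = statistics.median(prices)
    return [p for p in prices if abs(p - mid) <= max_rel_dev * abs(mid)]


def compliant_price(reports: List[Report], whitelist: Dict[str, bytes], now: float,
                    max_age: float = 60.0, max_rel_dev: float = 0.05,
                    min_sources: int = 2) -> Optional[float]:
    """Return the median of the reports that pass all three checks, or None."""
    prices = [r.price for r in reports
              if has_valid_provenance(r, whitelist) and is_fresh(r, now, max_age)]
    kept = drop_outliers(prices, max_rel_dev)
    if len(kept) < min_sources:
        return None  # too few trustworthy sources to publish a value
    return statistics.median(kept)
```

Returning `None` when too few sources survive is a deliberate choice in this sketch: publishing no value is treated as safer than publishing a value backed by a single source.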

Origin

The emergence of Oracle Data Compliance traces back to the systemic failures observed in early decentralized lending protocols where simple, centralized price feeds were manipulated via rapid asset price swings on low-liquidity exchanges. Market participants recognized that the reliance on single-source APIs created a single point of failure, necessitating a transition toward decentralized oracle networks and more rigorous validation requirements.

The evolution of data verification protocols directly addresses the systemic vulnerability created by reliance on unverified external information sources.

Initial iterations relied on simple medianizer contracts, which proved insufficient against sophisticated adversarial agents who could influence localized exchange prices. As financial products became more complex, moving from basic spot lending to perpetual options and delta-neutral vaults, the requirement for Oracle Data Compliance shifted from simple value checking to comprehensive verification of the entire data lifecycle. The shift mirrors the historical development of auditing standards in traditional finance, adapted for the permissionless and high-frequency environment of digital asset markets.

Theory

The theoretical framework governing Oracle Data Compliance relies on the interaction between game theory and statistical signal processing.

Protocols must incentivize honest data reporting while penalizing malicious or negligent behavior through economic stakes. The core of this theory posits that data accuracy is a function of the cost of corruption versus the potential gain from manipulating the system.
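The trade-off between the cost of corruption and the gain from manipulation can be written as a simple inequality. The symbols here are illustrative assumptions, not notation from any specific protocol: $S$ is the stake bonded by each node, $k$ is the number of nodes an attacker must corrupt to move the aggregate, and $G$ is the profit available from a successful manipulation. Assuming every corrupted node loses its full stake, honest reporting is the economically rational strategy when

$$
C_{\text{corrupt}} = k \cdot S > G .
$$

For a median over $N$ nodes, an attacker needs to control about half of them, so $k = \lceil N/2 \rceil$. As a purely hypothetical example, with $N = 21$ nodes and $S = 50{,}000$ units of stake each, $k = 11$ and $C_{\text{corrupt}} = 550{,}000$; manipulation is only worthwhile if the exploitable gain $G$ exceeds that amount.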

| Compliance Metric | Technical Objective | Risk Mitigated |
| --- | --- | --- |
| Aggregation Consensus | Redundancy across N nodes | Single point of failure |
| Time-Weighted Average | Smoothing volatility impact | Flash crash exploitation |
| Cryptographic Attestation | Source identity verification | Sybil attack injection |

The mathematical modeling of Oracle Data Compliance uses the option Greeks to measure sensitivity, specifically how deviations in the oracle price affect the delta and gamma of option positions. If an oracle feed diverges beyond a statistical threshold, often modeled using standard deviation bands, the protocol triggers a circuit breaker to pause activity, preventing erroneous valuations from propagating through the system. Sometimes I contemplate how this resembles the early days of high-frequency trading, where the speed of information mattered more than its quality; now, we prioritize the integrity of that signal above all else.

This tension between speed and accuracy remains the central challenge for protocol architects.
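A standard deviation band with a circuit breaker can be sketched as follows. This is a minimal example under stated assumptions: the class and parameter names are hypothetical, the band is computed over a rolling window of recently accepted prices, and the check only starts once `min_samples` observations exist, since a band estimated from two or three points is not meaningful.

```python
import statistics
from collections import deque
from typing import Sequence


def breaches_band(history: Sequence[float], new_price: float, num_std: float = 3.0) -> bool:
    """True when new_price lies outside mean +/- num_std * sample stdev of history."""
    if len(history) < 2:
        raise ValueError("need at least two observations to estimate a band")
    mean = statistics.fmean(history)
    std = statistics.stdev(history)
    return abs(new_price - mean) > num_std * std


class CircuitBreaker:
    """Accepts oracle prices until one breaches the band, then stays paused."""

    def __init__(self, num_std: float = 3.0, window: int = 20, min_samples: int = 5):
        self.num_std = num_std
        self.min_samples = max(2, min_samples)
        self.history = deque(maxlen=window)  # rolling window of accepted prices
        self.paused = False

    def submit(self, price: float) -> bool:
        """Return True if the price is accepted; pause and return False on a breach."""
        if self.paused:
            return False
        enough_data = len(self.history) >= self.min_samples
        if enough_data and breaches_band(list(self.history), price, self.num_std):
            self.paused = True  # assumption: resuming requires an explicit reset
            return False
        self.history.append(price)
        return True

    def reset(self) -> None:
        """Resume activity, e.g. after a governance review (hypothetical policy)."""
        self.paused = False
```

A wider band (`num_std`) tolerates more volatility but reacts later; a narrower one pauses more often. That choice is one concrete form of the speed-versus-accuracy tension described above.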

Approach

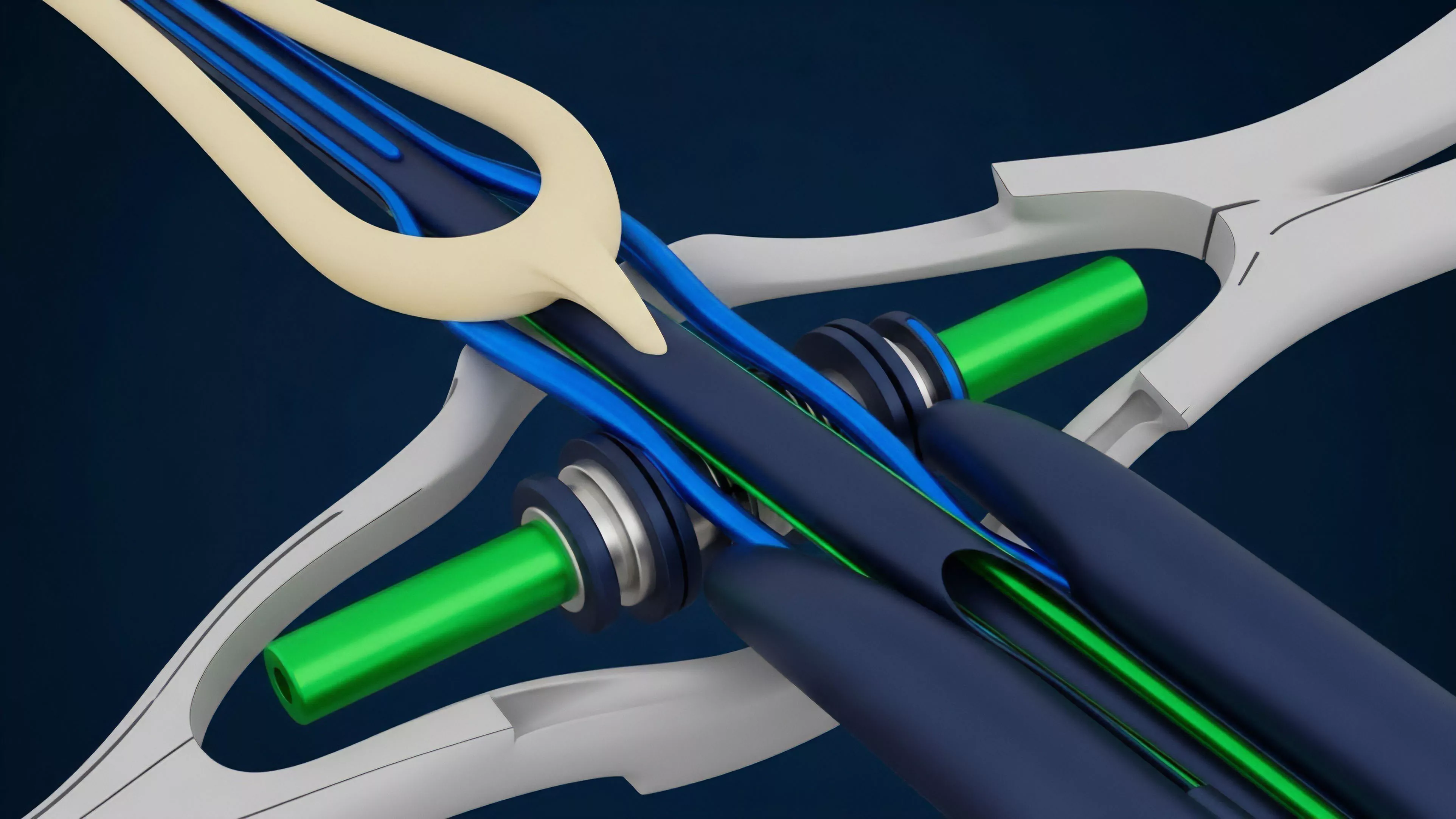

Current implementation strategies focus on multi-layer verification architectures. Systems now deploy decentralized oracle networks that require nodes to provide collateral, which is subject to slashing if the reported data deviates significantly from the median of the aggregate set. This economic bonding ensures that the cost of providing non-compliant data outweighs any potential benefit derived from market manipulation.
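The bonding-and-slashing rule described above can be illustrated with a short sketch. The function name, the 2% deviation tolerance, and the 10% slash fraction are hypothetical values chosen for the example; real networks define their own parameters and dispute processes.

```python
import statistics
from typing import Dict, List, Tuple


def settle_round(reports: Dict[str, float], stakes: Dict[str, float],
                 max_rel_dev: float = 0.02,
                 slash_fraction: float = 0.10) -> Tuple[float, Dict[str, float], List[str]]:
    """Aggregate one reporting round and slash nodes that stray from the median.

    reports maps node id -> reported price; stakes maps node id -> bonded collateral.
    Returns (median price, updated stakes, list of slashed node ids).
    """
    median_price = statistics.median(reports.values())
    new_stakes = dict(stakes)  # leave the caller's stake table untouched
    slashed = []
    for node, price in reports.items():
        # A report is non-compliant if it deviates from the median by more
        # than max_rel_dev, expressed as a fraction of the median.
        if abs(price - median_price) > max_rel_dev * median_price:
            new_stakes[node] = stakes[node] * (1 - slash_fraction)
            slashed.append(node)
    return median_price, new_stakes, slashed


# Example round: n4 reports a price far from the others and loses 10% of its bond.
price, stakes_after, slashed_nodes = settle_round(
    {"n1": 100.0, "n2": 100.5, "n3": 99.5, "n4": 120.0},
    {"n1": 1000.0, "n2": 1000.0, "n3": 1000.0, "n4": 1000.0},
)
```

Using the median as the reference point means a single dishonest node cannot shift the benchmark it is judged against.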

Economic bonding and multi-source consensus represent the current standard for achieving robust oracle data integrity in decentralized protocols.

Strategists emphasize the use of Proof of Authority combined with decentralized aggregation to ensure that the source of the data is reputable while the processing remains transparent. The approach involves:

- Dynamic Deviation Thresholds, where the allowable variance of a data point adjusts based on the underlying asset’s historical volatility.

- Cross-Chain Verification, which queries multiple independent chains to confirm the consistency of a price point before updating the local state.

- Circuit Breaker Integration, which halts contract execution if the oracle data fails to meet pre-established compliance parameters.
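The first item in the list above, a deviation threshold that scales with historical volatility, can be sketched as follows. This is one possible construction under stated assumptions: volatility is estimated as the sample standard deviation of log returns, and the `multiplier`, `floor`, and `cap` parameters are hypothetical tuning values, not figures from any particular protocol.

```python
import math
import statistics
from typing import List, Sequence


def log_returns(prices: Sequence[float]) -> List[float]:
    """Log return between each pair of consecutive prices."""
    return [math.log(later / earlier) for earlier, later in zip(prices, prices[1:])]


def dynamic_threshold(prices: Sequence[float], multiplier: float = 3.0,
                      floor: float = 0.005, cap: float = 0.20) -> float:
    """Allowed relative deviation for the next update, scaled by recent volatility.

    The result is multiplier * stdev(log returns), clamped to [floor, cap] so a
    very calm or very turbulent window cannot produce an unusable threshold.
    """
    if len(prices) < 3:
        raise ValueError("need at least three prices to estimate volatility")
    volatility = statistics.stdev(log_returns(prices))
    return min(max(multiplier * volatility, floor), cap)


def within_threshold(last_price: float, candidate: float, threshold: float) -> bool:
    """True if the candidate update moves no more than threshold (a fraction) from last_price."""
    return abs(candidate - last_price) / last_price <= threshold


calm_history = [100.0, 100.1, 100.0, 100.1, 100.0]
volatile_history = [100.0, 110.0, 95.0, 108.0, 92.0]

# The same 3% move is rejected for the calm asset but accepted for the volatile one.
calm_ok = within_threshold(100.0, 103.0, dynamic_threshold(calm_history))
volatile_ok = within_threshold(100.0, 103.0, dynamic_threshold(volatile_history))
```

A rejected update would then feed the circuit breaker integration described in the last list item, rather than being written to contract state.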

Evolution

The trajectory of Oracle Data Compliance has moved from centralized, single-source feeds to decentralized, multi-layered verification systems. Early protocols relied too heavily on single API endpoints, which led to frequent exploits. The sector responded by developing decentralized oracle networks that use distributed nodes to provide consensus-based data.

| Development Stage | Compliance Mechanism | Primary Limitation |
| --- | --- | --- |
| Centralized Feed | Direct API call | Single point of failure |
| Medianized Aggregate | Simple consensus logic | Outlier sensitivity |
| Staked Decentralized Network | Economic slashing/bonding | Latency/throughput trade-offs |

This evolution reflects a maturing understanding of the systems risk inherent in decentralized finance. As protocols have expanded to support exotic derivatives and other complex financial instruments, the requirements for Oracle Data Compliance have grown stricter, demanding faster, more resilient, and cryptographically secure data pipelines.

Horizon

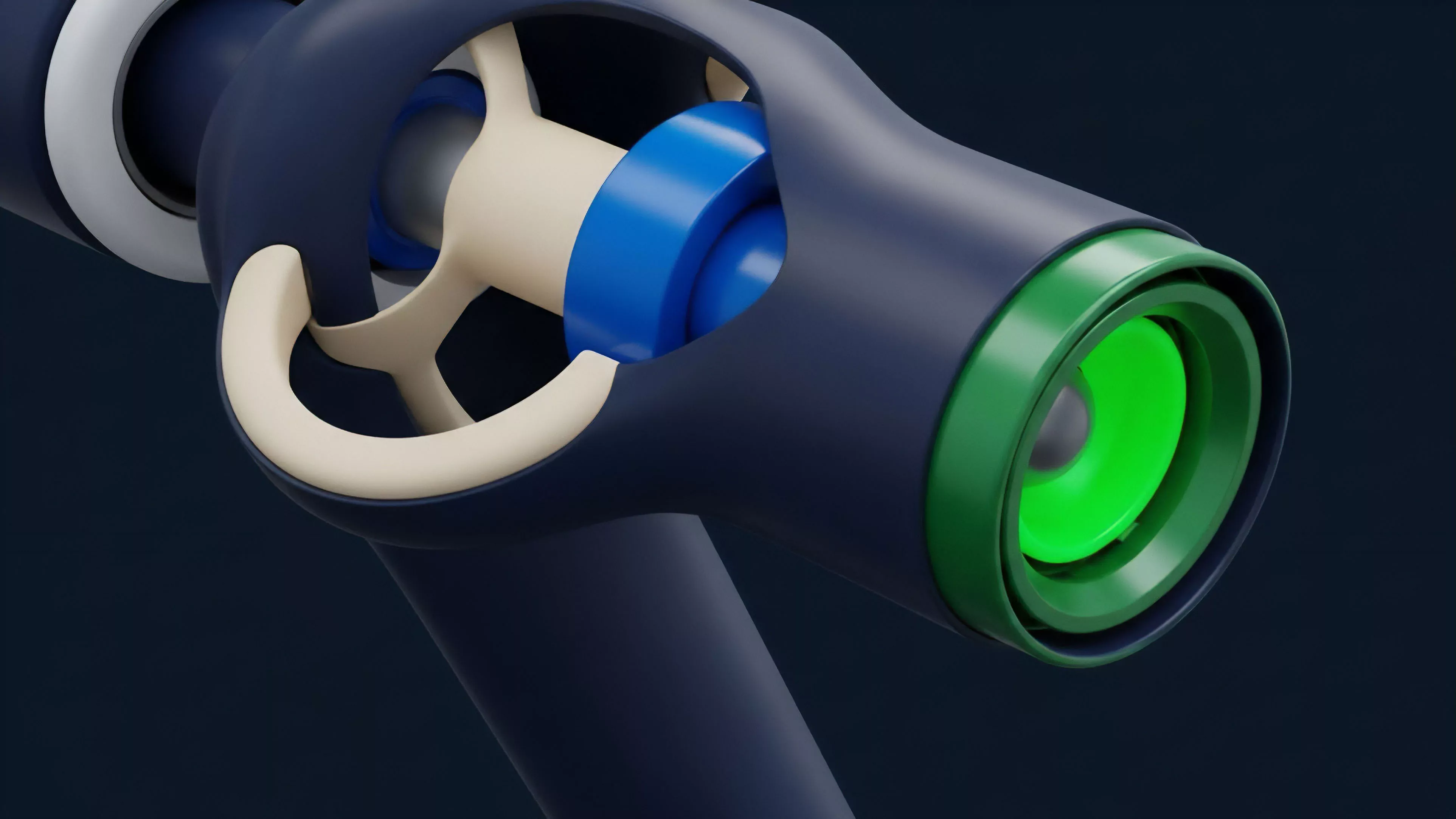

The future of Oracle Data Compliance lies in the integration of zero-knowledge proofs to verify the authenticity of off-chain data without revealing the underlying source infrastructure. This development would allow protocols to ingest high-fidelity data while maintaining strict privacy and security standards.

The goal is to create a trustless data environment where the compliance layer is embedded directly into the cryptographic proof of the data itself.

Zero-knowledge verification represents the next frontier for ensuring data integrity without compromising the privacy of information providers.

We anticipate a shift toward automated compliance monitoring agents that operate autonomously within the protocol, adjusting deviation thresholds in real-time based on live market conditions. This evolution will reduce the reliance on manual governance interventions, creating more resilient systems capable of handling extreme market volatility. The ultimate aim is a self-healing data architecture where the compliance layer automatically reconfigures to maintain systemic stability under any conditions.