Essence

Oracle Data Auditing constitutes the rigorous verification process applied to external data inputs feeding into decentralized financial protocols. These systems depend upon accurate price feeds, volatility indices, and collateral valuations to trigger smart contract executions. Without a robust validation layer, the entire derivative infrastructure risks collapse due to malicious or erroneous information injection.

Oracle Data Auditing ensures the integrity of external data inputs that drive decentralized financial contract settlements.

At the center of this mechanism lies the requirement for trustless consensus on off-chain state transitions. Financial derivatives operate on precise mathematical models, so even a marginal deviation in the underlying asset price can produce significant discrepancies in margin calls and liquidation events. This discipline demands that every data point, whether sourced from centralized exchanges or decentralized aggregators, undergo cryptographic scrutiny before integration into the protocol state.

Origin

The inception of Oracle Data Auditing traces back to the realization that blockchain networks remain isolated from real-world events.

Early decentralized applications suffered from single points of failure: one compromised data feed could drain liquidity pools. Developers observed that relying on a solitary source for asset pricing provided an irresistible target for adversarial actors seeking to manipulate contract outcomes.

- Protocol Vulnerability analysis identified the necessity for redundant, multi-source validation to mitigate oracle-based exploits.

- Cryptographic Verification introduced the requirement for signatures to confirm data provenance and prevent spoofing.

- Aggregation Models evolved to combine multiple price feeds into a single weighted average to reduce the impact of outliers.
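
The aggregation step in the last item can be illustrated with a minimal sketch. The function names, the equal weights, and the 2% filter around the median are assumptions chosen for illustration, not parameters of any particular protocol. The sketch contrasts a plain weighted average, which a single extreme report can still drag, with a variant that discards reports far from the median before averaging.

```python
from statistics import median


def weighted_mean(prices, weights):
    """Weighted average of reported prices."""
    total = sum(weights)
    if total <= 0:
        raise ValueError("weights must sum to a positive value")
    return sum(p * w for p, w in zip(prices, weights)) / total


def filtered_weighted_mean(prices, weights, max_rel_dev=0.02):
    """Drop reports further than max_rel_dev from the median, then average.

    max_rel_dev is a hypothetical tolerance (2% here). If every report is
    dropped, weighted_mean raises ValueError rather than inventing a price.
    """
    mid = median(prices)
    kept = [
        (p, w)
        for p, w in zip(prices, weights)
        if abs(p - mid) / mid <= max_rel_dev
    ]
    return weighted_mean([p for p, _ in kept], [w for _, w in kept])


# Three consistent sources and one manipulated or faulty source.
reports = [100.0, 100.2, 99.9, 150.0]
weights = [1.0, 1.0, 1.0, 1.0]

naive = weighted_mean(reports, weights)            # pulled upward by the 150.0 report
robust = filtered_weighted_mean(reports, weights)  # 150.0 report is excluded
```

With these inputs the plain average lands well above every honest report, while the filtered variant stays close to 100. That is why outlier handling is usually applied before, not instead of, the weighting.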

These historical failures forced a paradigm shift toward modular architectures. The community recognized that data accuracy determines the solvency of automated market makers and lending protocols. Consequently, the focus shifted from simple data transmission to complex auditing frameworks that monitor for anomalies, latency, and source reliability in real time.

Theory

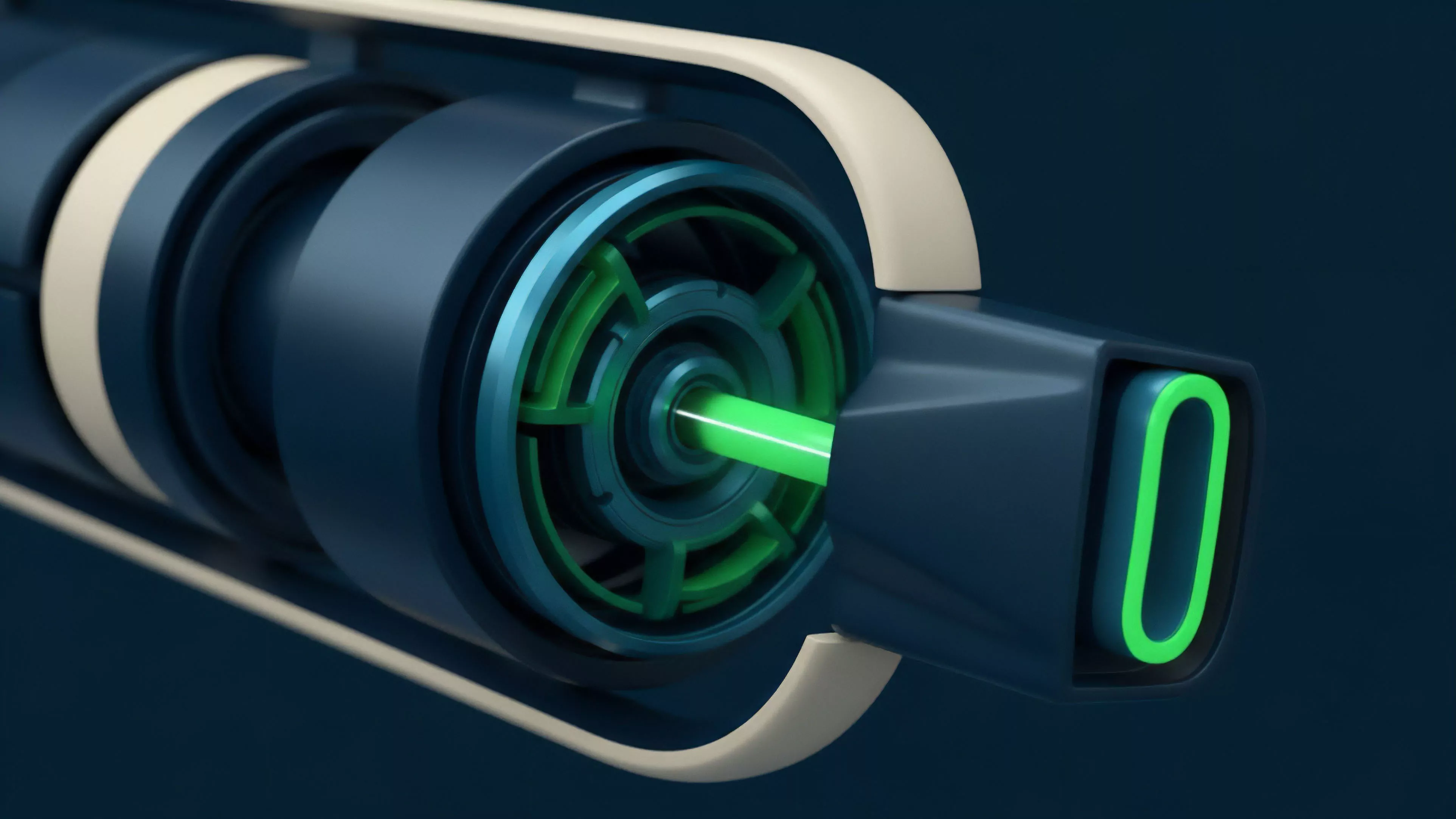

The architecture of Oracle Data Auditing relies on the interaction between game-theoretic incentives and statistical analysis.

Protocols must distinguish between legitimate market volatility and intentional price manipulation. This requires an understanding of how liquidity fragmentation across disparate trading venues impacts the global price discovery process.

| Validation Metric | Technical Function |
| --- | --- |
| Latency Analysis | Detects stale data points that fail to reflect current market conditions |
| Deviation Thresholds | Triggers halts when incoming data exceeds predefined volatility bands |
| Consensus Weighting | Assigns reputation scores to nodes based on historical data accuracy |
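
The first two metrics in the table can be sketched as simple gate checks. Everything named here (`PriceReport`, `audit`, the 60-second maximum age, the 10% band) is a hypothetical choice for illustration. A real protocol would tune these values per asset and would typically take the clock from block timestamps.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PriceReport:
    price: float
    timestamp: float  # seconds since epoch, as reported by the source


def is_stale(report, now, max_age_s=60.0):
    """Latency analysis: the report is older than the allowed age."""
    return now - report.timestamp > max_age_s


def exceeds_band(report, last_accepted_price, max_rel_move=0.10):
    """Deviation threshold: relative move versus the last accepted price."""
    move = abs(report.price - last_accepted_price) / last_accepted_price
    return move > max_rel_move


def audit(report, last_accepted_price, now):
    """Return an action label; staleness is checked before deviation."""
    if is_stale(report, now):
        return "reject_stale"
    if exceeds_band(report, last_accepted_price):
        return "halt"
    return "accept"


fresh = audit(PriceReport(101.0, 1000.0), last_accepted_price=100.0, now=1010.0)
stale = audit(PriceReport(101.0, 900.0), last_accepted_price=100.0, now=1010.0)
spike = audit(PriceReport(120.0, 1000.0), last_accepted_price=100.0, now=1010.0)
```

Checking staleness first is a design choice: an old report carries no information about current conditions, so there is little point in comparing it against the volatility band.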

The mathematical foundation rests on probability distributions of asset prices. When an oracle reports a value outside the expected confidence interval, the auditing layer initiates a secondary verification process. This often involves cross-referencing multiple decentralized nodes or querying historical order flow data to determine if the reported price aligns with broader market trends.
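
One way to make the confidence-interval test concrete is a z-score on log returns, sketched below. The normal-distribution assumption, the `z_limit` of 3, and the function names are simplifications introduced here. Real asset returns have heavier tails, so a production system would likely use a more robust estimator.

```python
import math
from statistics import mean, stdev


def log_returns(prices):
    """Log returns between consecutive prices."""
    return [math.log(b / a) for a, b in zip(prices, prices[1:])]


def needs_secondary_check(history, reported, z_limit=3.0):
    """True when the implied return lies outside mean +/- z_limit * stdev."""
    returns = log_returns(history)
    if len(returns) < 2:
        return True  # too little history to form an interval, so escalate
    mu, sigma = mean(returns), stdev(returns)
    candidate = math.log(reported / history[-1])
    if sigma == 0:
        return candidate != mu
    return abs(candidate - mu) / sigma > z_limit


history = [100.0, 100.5, 100.2, 100.8, 100.6]

ordinary = needs_secondary_check(history, reported=100.9)  # small move
suspect = needs_secondary_check(history, reported=110.0)   # roughly a 9% jump
```

A `True` result does not mean the report is wrong. It only routes the value to the secondary verification described above, where other nodes or order flow data can confirm or reject it.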

Sophisticated auditing frameworks employ statistical deviation thresholds to isolate malicious data inputs from genuine market movements.

The system must also account for the cost of corruption. If the incentive to manipulate an oracle outweighs the penalty for providing false data, the protocol will eventually fail. Effective designs therefore integrate staking mechanisms where validators lose capital upon submitting incorrect or manipulated data.
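
The cost-of-corruption argument reduces to a simple inequality: manipulation pays when the attacker's gain exceeds the expected penalty plus any direct attack cost. The sketch below encodes that comparison. The parameter names and the idea of a single detection probability are assumptions made for illustration; actual slashing rules vary by protocol.

```python
def manipulation_is_profitable(attack_gain, slashed_stake, detection_prob,
                               attack_cost=0.0):
    """Compare the attacker's gain with the expected loss from slashing."""
    expected_penalty = detection_prob * slashed_stake
    return attack_gain > expected_penalty + attack_cost


def min_stake_required(attack_gain, detection_prob, attack_cost=0.0):
    """Smallest stake at which the expected penalty covers the gain."""
    if not 0 < detection_prob <= 1:
        raise ValueError("detection_prob must be in (0, 1]")
    return max(0.0, (attack_gain - attack_cost) / detection_prob)


# Hypothetical figures: a 1,000,000 gain and a 90% chance of detection.
underbonded = manipulation_is_profitable(1_000_000, 500_000, 0.9)
well_bonded = manipulation_is_profitable(1_000_000, 2_000_000, 0.9)
required = min_stake_required(1_000_000, detection_prob=0.8)
```

The second function shows why imperfect detection raises the stake a protocol must demand: the lower the probability that false data is caught, the more capital must be at risk for the penalty to deter.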

Approach

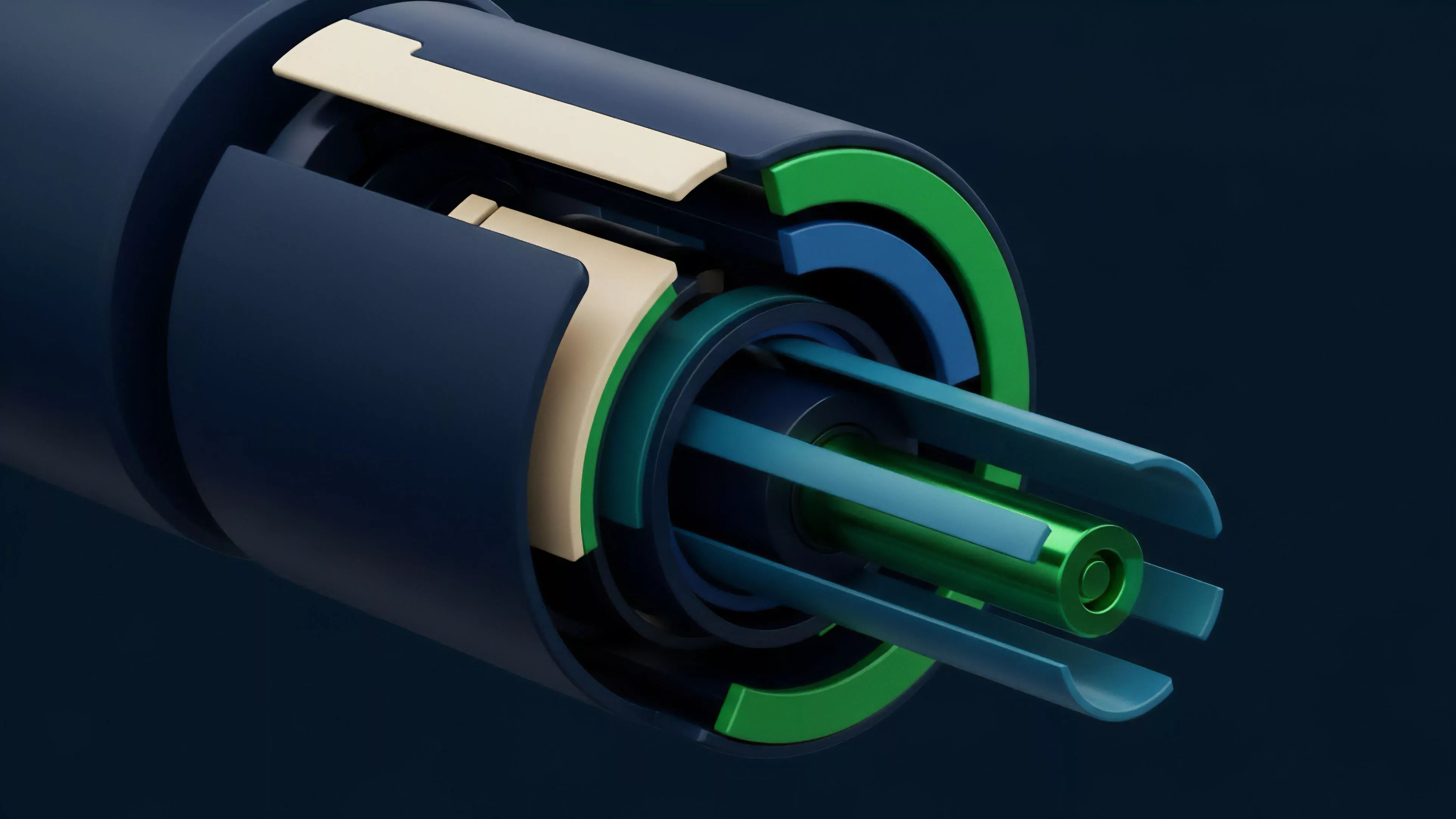

Modern implementations utilize automated agents that monitor the feed stream for discrepancies.

These agents operate as decentralized watchers, constantly comparing incoming oracle data against on-chain liquidity depth and external exchange volume. This continuous surveillance allows protocols to preemptively pause trading or adjust margin requirements before a faulty data point can trigger a systemic liquidation.

- Real-time Monitoring ensures that any deviation in reported price versus actual liquidity is flagged immediately.

- Cross-Chain Verification involves comparing asset prices across different blockchain networks to detect regional anomalies.

- Reputation Management dynamically adjusts the weight of individual data providers based on their uptime and accuracy performance.
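
The reputation adjustment in the last item could be implemented in many ways. A minimal sketch, assuming an exponential moving average over per-round outcomes, is shown below. The smoothing factor `alpha`, the binary scoring of each round, and the provider names are all hypothetical.

```python
def update_reputation(score, was_accurate, was_online, alpha=0.1):
    """Blend the latest round into the running score (both in [0, 1])."""
    observation = 1.0 if (was_accurate and was_online) else 0.0
    return (1 - alpha) * score + alpha * observation


def normalized_weights(scores):
    """Turn reputation scores into aggregation weights that sum to 1."""
    total = sum(scores.values())
    if total == 0:
        # No provider has any reputation yet, so fall back to equal weights.
        return {name: 1 / len(scores) for name in scores}
    return {name: s / total for name, s in scores.items()}


scores = {"provider_a": 0.5, "provider_b": 0.5}

# provider_a reports accurately; provider_b is online but inaccurate.
scores["provider_a"] = update_reputation(scores["provider_a"], True, True)
scores["provider_b"] = update_reputation(scores["provider_b"], False, True)

weights = normalized_weights(scores)
```

A small `alpha` makes weights change slowly, which resists a provider gaming one round but also delays the demotion of a source that has just started failing.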

This approach shifts the burden of security from reactive patches to proactive, code-enforced rules. The protocol assumes an adversarial environment in which every data point is treated as a potential vector for exploitation. By implementing these rigorous checks, architects reduce the reliance on human intervention and move toward fully autonomous, resilient financial systems.

Evolution

The trajectory of Oracle Data Auditing has moved from simple, centralized gateways to complex, multi-layered consensus networks.

Initial designs focused on raw data transmission, but the rising complexity of derivative instruments necessitated more granular validation. As liquidity cycles tighten, the speed and accuracy of these audits have become the primary determinant of protocol competitiveness.

Protocol resilience depends on the ability of auditing layers to adapt to rapid changes in market volatility and liquidity conditions.

Recent developments incorporate machine learning models to predict potential oracle failure before it occurs. These predictive systems analyze historical data patterns to identify when a specific source or validator node is exhibiting signs of instability. Such advancements reflect a broader transition toward systems that prioritize structural robustness over simple throughput.

| Stage | Key Characteristic |
| --- | --- |
| Generation 1 | Single-source data feeds |
| Generation 2 | Decentralized multi-source aggregation |
| Generation 3 | Automated, risk-aware auditing layers |

Horizon

The future of Oracle Data Auditing lies in the integration of zero-knowledge proofs to verify data integrity without revealing the underlying sources. This allows for privacy-preserving audits while maintaining high standards of transparency. As decentralized finance scales, the ability to perform these computations efficiently will determine which protocols dominate the market.

Further progress will likely involve the creation of decentralized reputation markets where data providers are ranked by cryptographic evidence of their performance. This incentive structure will force providers to maintain higher standards of data quality, reducing the overall systemic risk within the derivative landscape. The goal is a self-healing system that automatically excludes unreliable nodes and optimizes for precision in every transaction.

What mechanisms will emerge to reconcile data integrity with the increasing demand for near-instantaneous settlement in high-frequency decentralized markets?