Essence

On Chain Volatility Metrics serve as a primary gauge for risk assessment within decentralized financial architectures. These metrics derive directly from verifiable blockchain data, specifically state changes, transaction frequency, and liquidity distribution across automated market makers. Unlike traditional finance, where reporting latency often obscures true market conditions, these tools offer block-by-block visibility into the raw mechanics of price discovery.

On Chain Volatility Metrics provide a real-time, trustless assessment of market turbulence by directly observing transactional throughput and liquidity shifts on the blockchain.

The core utility rests in the ability to quantify the intensity of capital movement without relying on centralized exchange reporting. By analyzing Realized Volatility through the lens of block-by-block state updates, market participants gain a precise understanding of the speed at which value shifts across protocols. This transparency enables a more rigorous approach to hedging and capital allocation in adversarial environments.
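As a rough illustration of realized volatility computed from block-by-block observations, the sketch below takes a list of per-block prices and returns the square root of the sum of squared log returns. The function name, the `blocks_per_year` parameter, and the choice of log returns are assumptions made for illustration; the price series itself would have to be extracted from on-chain state by some other means.

```python
import math


def realized_volatility(prices, blocks_per_year=None):
    """Realized volatility over a window of consecutive per-block prices.

    prices: positive prices, one per block, in block order (assumed input).
    blocks_per_year: optional; if given, the result is annualized by
    scaling with sqrt(blocks_per_year / number_of_returns).
    """
    if len(prices) < 2:
        raise ValueError("need at least two prices")
    if any(p <= 0 for p in prices):
        raise ValueError("prices must be positive")

    # Log return between each pair of consecutive blocks.
    log_returns = [math.log(b / a) for a, b in zip(prices, prices[1:])]

    # Square root of the sum of squared returns over the window.
    rv = math.sqrt(sum(r * r for r in log_returns))

    if blocks_per_year is not None:
        rv *= math.sqrt(blocks_per_year / len(log_returns))
    return rv
```

A flat price series yields zero, and a single 10% move yields the absolute log return of that move. Annualization only makes sense if block times are roughly constant, which is itself an assumption.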

Origin

The genesis of these metrics traces back to the limitations inherent in legacy financial data feeds when applied to decentralized protocols.

Early participants realized that off-chain price data failed to account for the unique Protocol Physics: the specific rules governing how assets are locked, minted, or burned during high-stress events. The need to quantify risk within permissionless liquidity pools necessitated a move toward data extraction directly from the ledger.

- Transaction Entropy: Measuring the irregularity of block inclusion times to gauge network congestion and stress.

- Liquidity Concentration: Mapping the distribution of capital within concentrated liquidity pools to identify potential slippage vectors.

- Gas Price Correlation: Utilizing fee market volatility as a high-fidelity signal for upcoming price volatility.
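One possible reading of Transaction Entropy is the Shannon entropy of the gaps between consecutive block timestamps. The sketch below follows that reading; the function name, the binning by `bin_seconds`, and the use of Shannon entropy are all assumptions, since the text does not fix a formula.

```python
import math
from collections import Counter


def interval_entropy(timestamps, bin_seconds=1):
    """Shannon entropy (in bits) of inter-block time intervals.

    timestamps: block timestamps in seconds, in block order (assumed input).
    bin_seconds: width of the histogram bins used to group intervals.
    Perfectly regular block times give 0; irregular spacing gives more.
    """
    intervals = [b - a for a, b in zip(timestamps, timestamps[1:])]
    if not intervals:
        raise ValueError("need at least two timestamps")

    # Group intervals into bins and count how often each bin occurs.
    bins = Counter(int(dt // bin_seconds) for dt in intervals)
    n = len(intervals)

    # H = sum over bins of p * log2(1 / p).
    return sum((c / n) * math.log2(n / c) for c in bins.values())
```

Under this definition, a chain producing a block every 12 seconds scores zero, while a window mixing 12-second and 24-second gaps scores higher. Whether that increase reflects congestion or simply missed slots would need additional context.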

This shift toward on-chain observation allowed for the development of models that treat the blockchain as a living, breathing market microstructure. The move away from centralized proxies reflects the broader objective to build self-contained financial systems capable of autonomous risk management.

Theory

The quantitative foundation of these metrics relies on the assumption that market participant behavior is permanently etched into the ledger. By applying Stochastic Calculus to the sequence of state transitions, one can model the probability of extreme price deviations (tail risks), potentially with greater accuracy than models reliant on aggregated exchange data.

The protocol acts as the primary source of truth, where every trade, liquidation, and minting event is a data point in a continuous distribution.

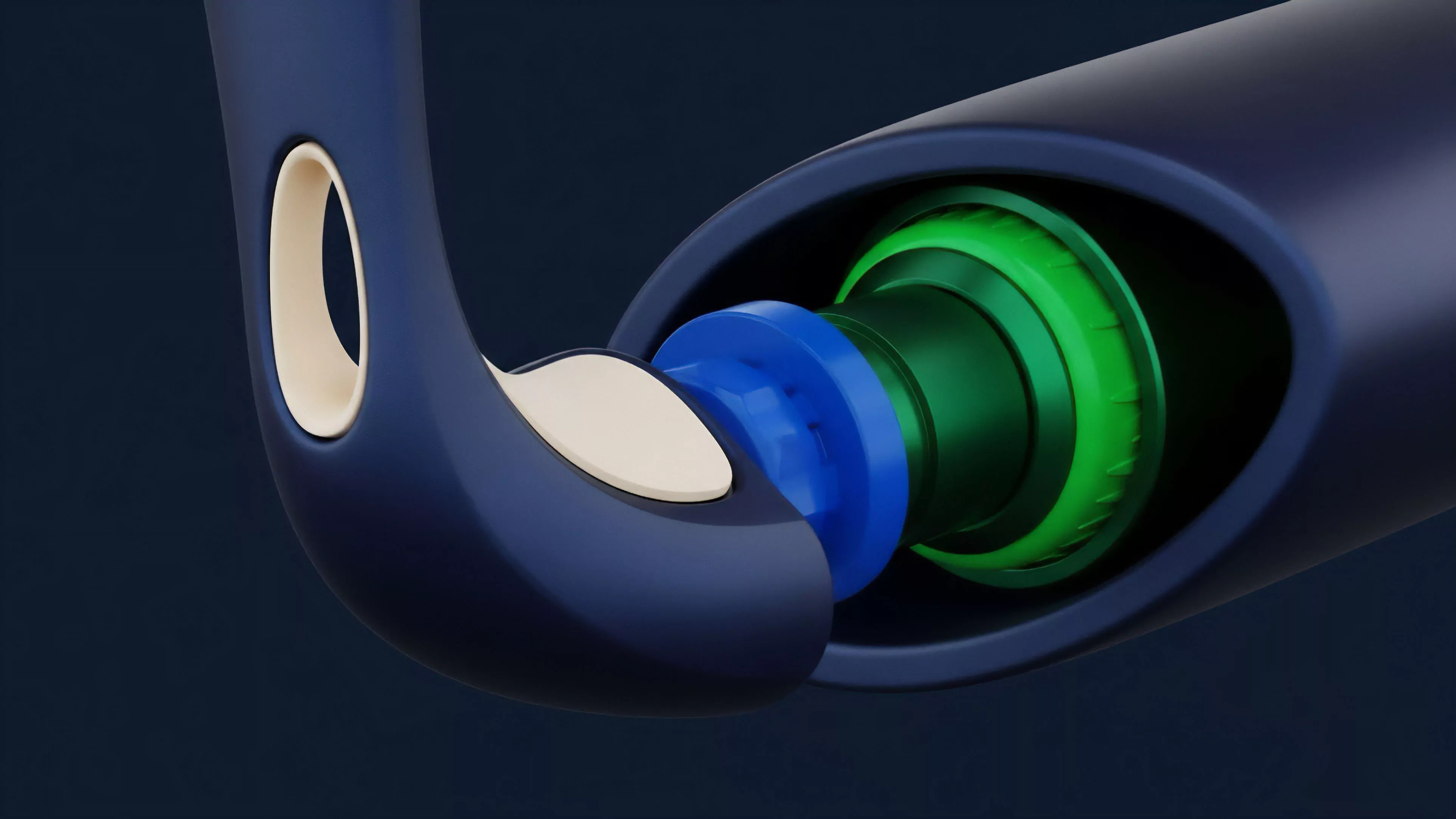

Quantifying on-chain volatility requires modeling the interplay between smart contract execution speed and the underlying liquidity depth available at any given block height.

The framework assumes an adversarial environment where participants continuously probe for inefficiencies. Liquidation Thresholds become the primary drivers of volatility, as automated agents trigger cascading sell-offs when collateral ratios reach critical levels. This creates feedback loops where volatility generates further volatility, a phenomenon that can be mapped using Order Flow Toxicity metrics.
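The feedback loop described above can be sketched with a toy simulation. It assumes a constant-product pool (x * y = k) as the only venue where liquidated collateral is sold, no fees, and full liquidation of any position whose collateral ratio falls below `min_ratio`. The `Position` class, the function name, and all parameters are hypothetical and not taken from any specific protocol.

```python
from dataclasses import dataclass


@dataclass
class Position:
    collateral: float  # units of the volatile asset
    debt: float        # units of the stable asset, assumed > 0


def simulate_cascade(positions, reserve_asset, reserve_stable, min_ratio=1.5):
    """Return (final_price, liquidated_count) after the cascade settles.

    Each round, every position with collateral * price / debt < min_ratio
    is liquidated and its collateral is sold into the pool, which lowers
    the price and may push further positions below the threshold.
    """
    open_positions = list(positions)
    liquidated = 0
    while True:
        price = reserve_stable / reserve_asset
        safe, unsafe = [], []
        for p in open_positions:
            ratio = p.collateral * price / p.debt
            (unsafe if ratio < min_ratio else safe).append(p)
        if not unsafe:
            return price, liquidated
        for p in unsafe:
            # Selling collateral into x * y = k moves the price down.
            k = reserve_asset * reserve_stable
            reserve_asset += p.collateral
            reserve_stable = k / reserve_asset
            liquidated += 1
        open_positions = safe
```

With a pool of 1,000 asset units against 1,000,000 stable units, a position at a 1.43 ratio is liquidated first; the resulting price drop then pushes a second position, initially at 1.67, below the threshold as well. This is the sense in which volatility generates further volatility.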

| Metric | Technical Focus | Risk Implication |
| --- | --- | --- |
| Block Delta | Time-weighted price changes | Short-term liquidity crunch |
| Pool Depth | Concentrated liquidity range | Slippage and execution cost |
| Gas Sensitivity | Fee market pressure | Congestion-driven price spikes |
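To make the link between pool depth and slippage concrete, the sketch below computes the price impact of a single swap. It assumes a plain constant-product pool with no fees, which is a simplification of the concentrated liquidity ranges named in the table; the function name and parameters are illustrative.

```python
def price_impact(reserve_in, reserve_out, amount_in):
    """Fractional shortfall of the execution price versus the spot price.

    Assumes a fee-less constant-product pool (x * y = k). For such a pool
    the result equals amount_in / (reserve_in + amount_in).
    """
    if reserve_in <= 0 or reserve_out <= 0 or amount_in <= 0:
        raise ValueError("reserves and amount_in must be positive")

    spot = reserve_out / reserve_in
    amount_out = reserve_out * amount_in / (reserve_in + amount_in)
    execution = amount_out / amount_in
    return 1 - execution / spot
```

The same trade against a pool ten times deeper incurs roughly a tenth of the impact, which is why thinning depth is treated as a risk signal in its own right.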

The mathematical rigor here is not about predicting future prices but about mapping the structural integrity of the liquidity surface. The human tendency to panic during liquidation cycles introduces a behavioral component that is statistically observable through increased transaction volume and higher gas bids.

Approach

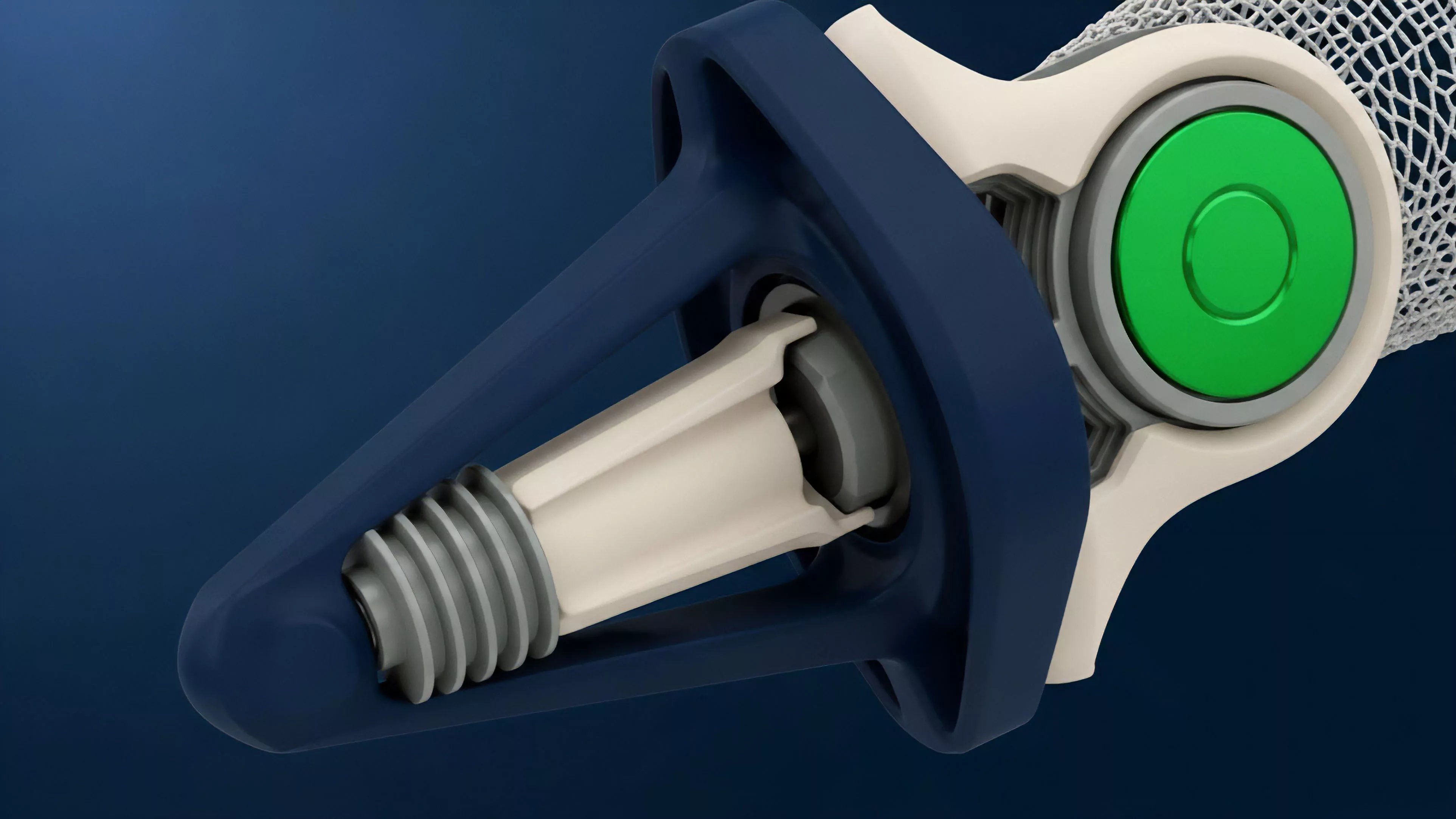

Current methodologies prioritize the ingestion of raw event logs to reconstruct the order book state in real time. This involves running dedicated nodes to capture Mempool Dynamics, allowing analysts to anticipate volatility before the underlying transactions are finalized on-chain.

Analysts focus on the delta between the spot price and the synthetic price derived from protocol-specific parameters.

- Order Flow Analysis: Identifying the presence of sophisticated arbitrageurs or automated liquidators within the mempool.

- Implied Volatility Mapping: Extracting volatility signals from decentralized option protocols by analyzing the premium distribution across strike prices.

- Systemic Contagion Tracking: Monitoring the cross-protocol collateralization of assets to identify potential points of failure.
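Systemic Contagion Tracking can be approximated as a graph traversal. The sketch below assumes each protocol is described by the assets it accepts as collateral and the tokens it issues against them; a shock to one asset propagates to every protocol that accepts it, and from there to the tokens that protocol issues. The data layout and the protocol and token names in the usage note are hypothetical.

```python
from collections import deque


def exposed_protocols(protocols, shocked_asset):
    """List protocols reachable from a shocked asset via collateral links.

    protocols: dict mapping a protocol name to a dict with two sets,
    "accepts" (collateral assets) and "issues" (tokens minted against
    that collateral). Returns names in order of discovery.
    """
    impaired_assets = {shocked_asset}
    exposed = []
    queue = deque([shocked_asset])

    while queue:
        asset = queue.popleft()
        for name, spec in protocols.items():
            if name in exposed or asset not in spec["accepts"]:
                continue
            exposed.append(name)
            # Tokens issued by an exposed protocol are treated as impaired too.
            for token in spec["issues"]:
                if token not in impaired_assets:
                    impaired_assets.add(token)
                    queue.append(token)
    return exposed
```

For example, if a hypothetical `LendA` accepts `ETH` and issues `aETH`, and `VaultB` accepts `aETH`, then a shock to `ETH` marks both as exposed even though `VaultB` never holds `ETH` directly. The traversal ignores exposure size, so it identifies candidate points of failure rather than ranking them.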

This approach demands significant technical infrastructure. The ability to parse logs and execute complex queries against the state trie provides a competitive advantage. It is a game of speed and analytical depth, where the objective is to remain one step ahead of the automated liquidation engines.

Evolution

Initial implementations focused on simple moving averages of price deviations, a rudimentary method that failed to capture the non-linear nature of decentralized market shocks.

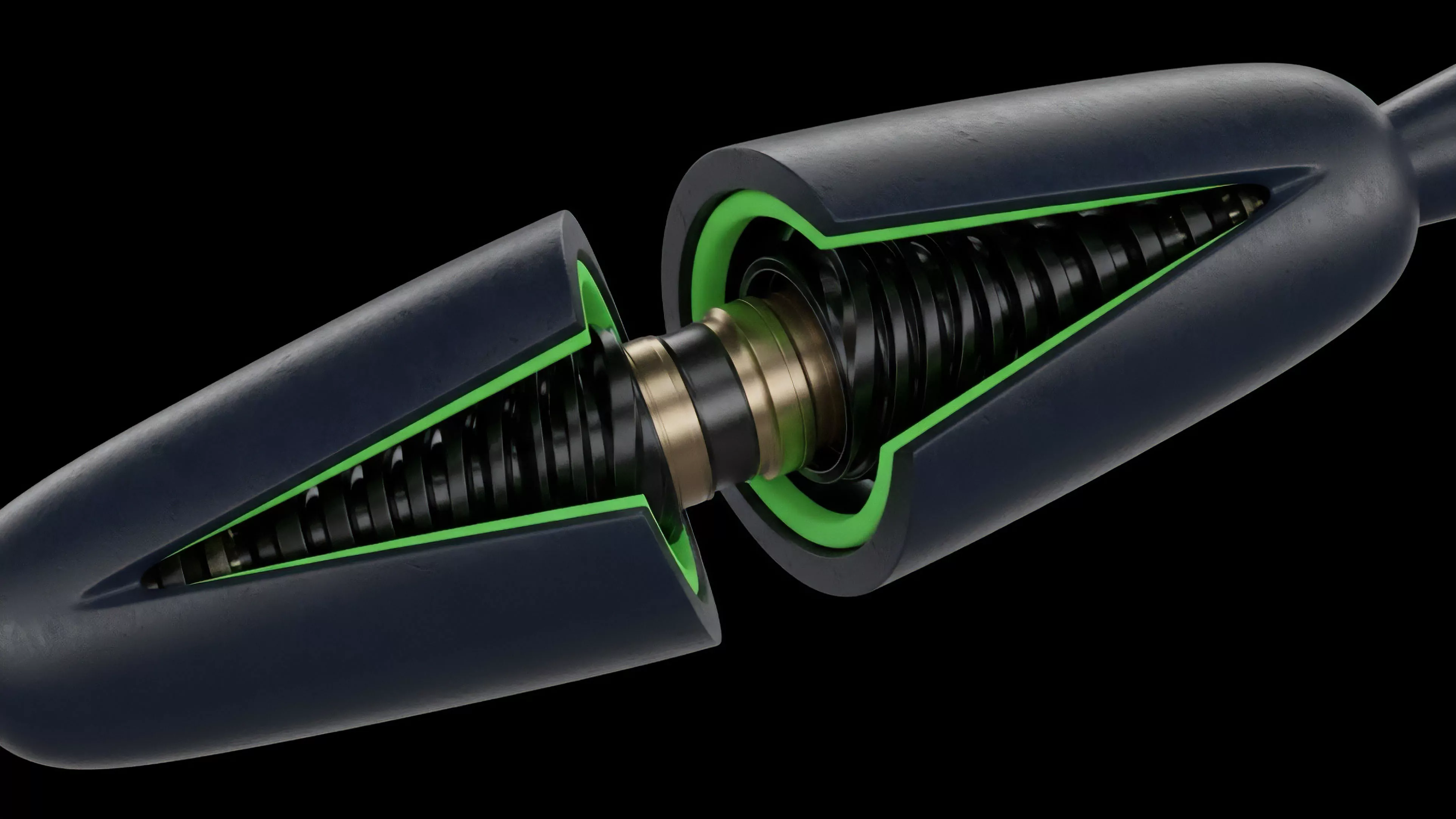

The field has since matured into sophisticated Systemic Risk modeling, where the focus has shifted toward understanding how inter-protocol dependencies amplify volatility. The development of cross-chain bridges and modular architectures has forced a reassessment of what constitutes a single market, leading to more granular, multi-dimensional metrics.

Systemic risk within decentralized finance stems from the tight coupling of collateral assets across multiple independent protocols.

This evolution mirrors the maturation of quantitative finance in traditional markets, yet it remains distinct due to the transparent nature of the underlying data. The shift from reactive to proactive modeling signifies a move toward Autonomous Risk Management, where protocols adjust their own parameters in response to observed volatility without human intervention.

Horizon

The future of these metrics lies in the integration of Machine Learning models that can process massive datasets of historical state changes to identify subtle patterns in market microstructure. We are moving toward a state where volatility metrics are not just passive observations but active components of smart contract governance.

This will allow for dynamic adjustment of collateral requirements and interest rates based on the real-time volatility of the underlying assets.
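A minimal sketch of such an adjustment rule, assuming a linear relationship between observed volatility and the required collateral ratio, capped at a maximum. The function name, the linear form, and the default values are illustrative assumptions, not parameters of any existing protocol.

```python
def collateral_ratio(realized_vol, base_ratio=1.5, sensitivity=2.0, max_ratio=3.0):
    """Required collateral ratio as a capped linear function of volatility.

    realized_vol: non-negative volatility estimate for the collateral asset.
    base_ratio: requirement when volatility is zero.
    sensitivity: extra collateral demanded per unit of volatility.
    max_ratio: upper bound, so the requirement cannot grow without limit.
    """
    if realized_vol < 0:
        raise ValueError("realized_vol must be non-negative")
    return min(max_ratio, base_ratio + sensitivity * realized_vol)
```

With these defaults, a volatility reading of 0.25 raises the requirement from 1.5 to 2.0. A production rule would likely need smoothing or rate limits, since a requirement that jumps with every volatility spike could itself trigger the liquidations it is meant to prevent.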

| Development Stage | Focus Area | Anticipated Outcome |
| --- | --- | --- |
| Phase One | Mempool Analytics | Predictive liquidation modeling |
| Phase Two | Cross-Protocol Integration | Systemic risk containment |
| Phase Three | Autonomous Protocol Adjustment | Self-stabilizing financial systems |

The ultimate goal is the creation of a truly resilient decentralized financial infrastructure that can withstand extreme market conditions without relying on centralized intervention. This trajectory suggests a shift from human-centric risk management to algorithmic, protocol-native resilience, where volatility is managed as a fundamental property of the system rather than an external threat.