Essence

On-Chain Data Integration is the systematic ingestion, normalization, and contextualization of distributed ledger activity for use in derivative pricing engines. It bridges raw, immutable blockchain events and the low-latency demands of modern financial risk management. By converting decentralized network state into quantitative inputs, it lets market participants calibrate volatility surfaces, delta exposure, and liquidation thresholds against near-real-time data.

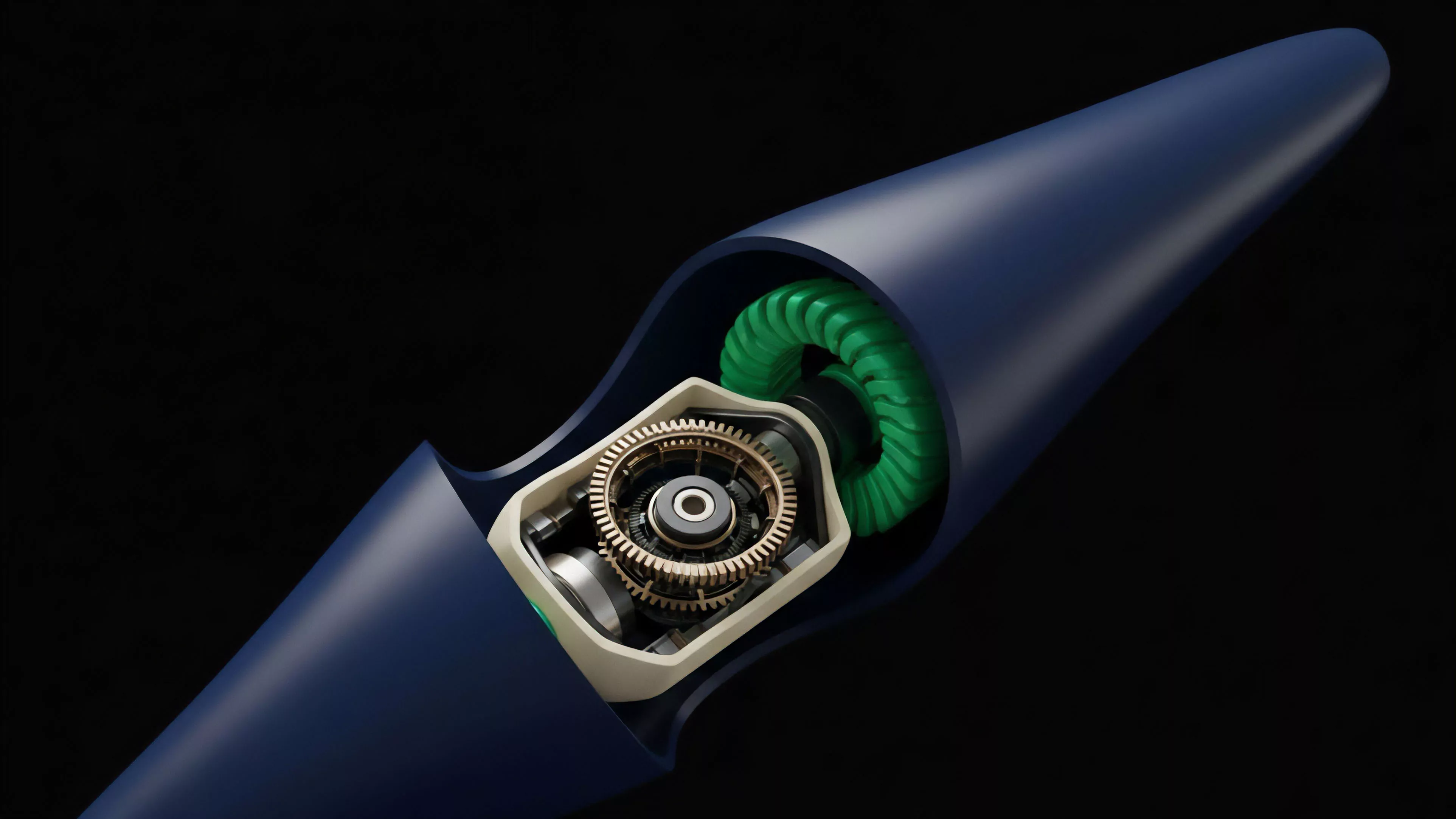

On-Chain Data Integration transforms static ledger states into dynamic inputs for derivative pricing and risk management frameworks.

At its core, this architecture replaces traditional, siloed market data feeds with transparent, verifiable streams. It feeds asset collateralization levels, open interest fluctuations, and smart contract execution latency directly into the valuation models that govern crypto options. The result is a tighter coupling between the health of the underlying protocol and the value of the derivative contract, which reduces information asymmetry in decentralized market venues.

Origin

The genesis of On-Chain Data Integration traces back to the early limitations of decentralized exchanges, where fragmented liquidity and delayed price discovery hindered sophisticated hedging strategies.

Initial efforts relied on centralized oracles, which introduced single points of failure and latency, creating discrepancies between the intended derivative payoff and the realized protocol state.

- Decentralized Oracle Networks provided the first mechanisms to verify external data, yet they often lacked the granular depth required for complex option pricing.

- Automated Market Maker designs exposed the need for more sophisticated telemetry, specifically regarding impermanent loss and liquidity provider behavior.

- High-Frequency Trading requirements necessitated a shift from batch-processed data to streaming, event-driven architectures capable of processing state changes with millisecond-level latency.

These pressures drove a transition from passive data indexing to active, protocol-native integration. Architects recognized that scaling decentralized derivatives requires pricing engines to sit closer to the consensus layer, so that every data point fed into a Black-Scholes or binomial model reflects the actual state of the network.

Theory

The theoretical framework for On-Chain Data Integration rests on the principle of information symmetry within adversarial environments. By treating the blockchain as a deterministic state machine, quantitative analysts construct pricing models that are inherently responsive to protocol-level shocks.

This requires a rigorous mapping of on-chain events to derivative risk sensitivities, commonly called the Greeks.

| Data Metric | Derivative Impact |
| --- | --- |
| Gas Price Volatility | Option Execution Cost |
| Collateral Utilization | Liquidation Threshold Sensitivity |
| Smart Contract TVL | Systemic Risk Premium |

Rigorous integration of on-chain telemetry allows derivative models to account for protocol-specific risks often overlooked in traditional finance.

The technical architecture involves feedback loops. As market participants adjust their delta hedging strategies based on integrated data, their actions generate new on-chain transactions, which in turn update the data feed. This recursive process requires damping mechanisms, such as smoothing or rate-limiting parameter updates, so that feedback-driven volatility spikes do not destabilize the margin engines of derivative protocols.
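One simple way to picture such a damping mechanism is exponential smoothing combined with a cap on how far a parameter may move per update. The sketch below is illustrative only: the function name `damped_update`, the smoothing weight `alpha`, and the cap `max_rel_step` are assumptions made for this example, not parameters of any particular protocol.

```python
def damped_update(current: float, observed: float,
                  alpha: float = 0.2, max_rel_step: float = 0.05) -> float:
    """Move a risk parameter toward a new observation without overshooting."""
    if not 0.0 < alpha <= 1.0:
        raise ValueError("alpha must be in (0, 1]")
    # Exponential smoothing: only a fraction `alpha` of the gap is closed per update.
    proposed = current + alpha * (observed - current)
    # Hard cap on the relative move per update (assumes a positive `current`).
    cap = abs(current) * max_rel_step
    step = max(-cap, min(cap, proposed - current))
    return current + step


# Hypothetical example: a margin engine's volatility input reacting to a spike.
vol = 0.60
for observed in [0.60, 1.20, 1.20, 1.20]:
    vol = damped_update(vol, observed)
```

With these settings the input drifts toward the spiked observation over several updates instead of jumping to it at once, which gives hedgers time to react before margin requirements change sharply. The trade-off is slower response to genuine regime shifts.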

Approach

Current methodologies emphasize the construction of low-latency indexing pipelines that bypass traditional bottlenecked APIs.

Systems architects now deploy specialized nodes that decode smart contract logs in real time, transforming opaque, encoded event data into structured quantitative variables. This approach prioritizes data integrity over sheer volume, so that the inputs for option pricing remain robust against front-running and oracle manipulation.

- Log Decoding involves parsing transaction receipt data to extract precise execution metrics without reliance on third-party aggregators.

- State Trie Analysis allows account balances and collateral ratios to be verified with Merkle proofs against the state root of the current block.

- Event Streaming utilizes asynchronous messaging queues to broadcast state changes to off-chain pricing engines, minimizing the latency between network consensus and trade execution.
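As a rough illustration of the log decoding step, the sketch below turns a raw ERC-20 `Transfer` log into a typed record. It assumes the log arrives as a dictionary with hex-string fields `topics`, `data`, and `blockNumber`, as returned by an Ethereum JSON-RPC node; the `Transfer` dataclass, the `decode_transfer` helper, and the default of 18 decimals are hypothetical choices for this example.

```python
from dataclasses import dataclass
from typing import Optional

# keccak256("Transfer(address,address,uint256)"), the standard ERC-20 event topic.
TRANSFER_TOPIC = "0xddf252ad1be2c89b69c2b068fc378daa952ba7f163c4a11628f55a4df523b3ef"


@dataclass(frozen=True)
class Transfer:
    block_number: int
    sender: str
    recipient: str
    amount: float  # token units after applying decimals


def _topic_to_address(topic: str) -> str:
    # Indexed addresses are left-padded to 32 bytes; keep the last 20 bytes.
    return "0x" + topic[-40:]


def decode_transfer(log: dict, decimals: int = 18) -> Optional[Transfer]:
    """Decode one raw log; return None if it is not an ERC-20 Transfer."""
    topics = log["topics"]
    if len(topics) != 3 or topics[0].lower() != TRANSFER_TOPIC:
        return None
    # The non-indexed uint256 amount is the single 32-byte word in `data`.
    raw_amount = int(log["data"], 16)
    return Transfer(
        block_number=int(log["blockNumber"], 16),
        sender=_topic_to_address(topics[1]),
        recipient=_topic_to_address(topics[2]),
        amount=raw_amount / 10**decimals,
    )
```

Decoding directly from topics and data keeps the pipeline independent of third-party aggregators. A production decoder would typically be driven by the contract ABI rather than a hard-coded event layout.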

These methods demand a deep understanding of protocol physics. One must treat the block production interval as the fundamental time-step of the derivative model, acknowledging that on-chain state advances in discrete steps rather than continuously. This reality dictates the choice of discretization method within the pricing formulas, since block intervals can stretch during periods of extreme network congestion and cause model drift.
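To make the block-as-time-step idea concrete, here is a minimal sketch of a Cox-Ross-Rubinstein binomial tree in which each tree step corresponds to one block. The 12-second default interval, the function name `crr_european_call`, and the choice of a European call are assumptions for illustration; the text does not prescribe a specific model.

```python
import math

SECONDS_PER_YEAR = 365 * 24 * 3600


def crr_european_call(spot: float, strike: float, rate: float, sigma: float,
                      blocks_to_expiry: int, block_interval_s: float = 12.0) -> float:
    """Price a European call on a binomial tree with one step per block."""
    dt = block_interval_s / SECONDS_PER_YEAR  # one block, in years
    n = blocks_to_expiry
    u = math.exp(sigma * math.sqrt(dt))       # up factor per block
    d = 1.0 / u                               # down factor per block
    growth = math.exp(rate * dt)
    p = (growth - d) / (u - d)                # risk-neutral up probability
    disc = 1.0 / growth
    # Payoffs at expiry for j up-moves out of n blocks.
    values = [max(spot * u**j * d**(n - j) - strike, 0.0) for j in range(n + 1)]
    # Roll back one block at a time to the present.
    for _ in range(n):
        values = [disc * (p * values[j + 1] + (1.0 - p) * values[j])
                  for j in range(len(values) - 1)]
    return values[0]


# Hypothetical usage: an at-the-money call expiring 300 blocks (about an hour) out.
price = crr_european_call(spot=100.0, strike=100.0, rate=0.0, sigma=0.8,
                          blocks_to_expiry=300)
```

The rollback is quadratic in the number of blocks, so this form suits short-dated contracts or coarse grids. If the observed block interval drifts under congestion, `block_interval_s` would need to be re-estimated rather than held fixed.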

Evolution

The path toward current implementation began with simple price feeds and has progressed toward full-stack state integration.

Early models functioned on crude approximations, often lagging behind actual market movements by several blocks. The shift occurred when developers realized that the derivative contract itself could hold the logic for data verification, effectively turning the contract into a self-contained pricing machine.

The evolution of data integration reflects a shift from external reliance toward protocol-native, trust-minimized pricing mechanisms.

Today, we observe the rise of modular data availability layers that provide verifiable proofs of state, allowing derivative protocols to scale without sacrificing security. This transition mirrors the broader maturation of decentralized finance, moving from experimental, high-risk constructs to sophisticated, institutional-grade instruments that require verifiable, real-time data to function under stress.

Horizon

The future of On-Chain Data Integration lies in the development of zero-knowledge proof systems that allow for the verification of off-chain pricing computations on-chain. This will enable the deployment of highly complex, exotic option structures that were previously restricted by the computational limits of the base layer.

We are moving toward a reality where the derivative contract is not just a reflection of the market, but an active participant in the governance and stability of the underlying asset.

| Development Phase | Primary Focus |
| --- | --- |
| Zero Knowledge Proofs | Verifiable Off-Chain Computation |
| Cross-Chain Interoperability | Unified Liquidity Surfaces |
| Autonomous Margin Engines | Self-Correcting Risk Parameters |

The ultimate goal remains the total alignment of derivative payoff structures with the fundamental physics of the blockchain. As these systems become more autonomous, the reliance on human-curated data will diminish, replaced by mathematical proofs that ensure the integrity of the entire decentralized derivative stack.