Essence

On-chain data aggregation transforms raw blockchain event logs into coherent, structured financial metrics for decentralized derivatives. The process is foundational for pricing, risk management, and market efficiency in decentralized finance (DeFi) options protocols. Raw data on a blockchain is often fragmented and difficult to interpret directly; it consists of transaction logs, smart contract events, and state changes.

Aggregation provides the necessary processing layer to convert these inputs into actionable information, such as open interest, trading volume, and implied volatility surfaces. This structured data allows protocols to calculate risk parameters, manage collateralization ratios, and execute automated liquidation processes.

On-chain data aggregation is the process of converting fragmented blockchain events into coherent financial metrics necessary for decentralized risk management and pricing models.

Without this layer, decentralized options markets would operate with high information asymmetry and significant systemic risk. The aggregation process must account for the characteristics of each options protocol, including its collateral mechanism and pricing curve. It also requires near-real-time processing, because time-sensitive operations such as liquidations depend on current data.

Data Integrity and Systemic Risk

The integrity of aggregated data directly impacts the stability of the entire options protocol. If the data feed is manipulated or delayed, the protocol’s risk engine can make incorrect decisions regarding collateral requirements. This vulnerability creates opportunities for arbitrageurs and increases the likelihood of cascading liquidations during periods of high volatility.

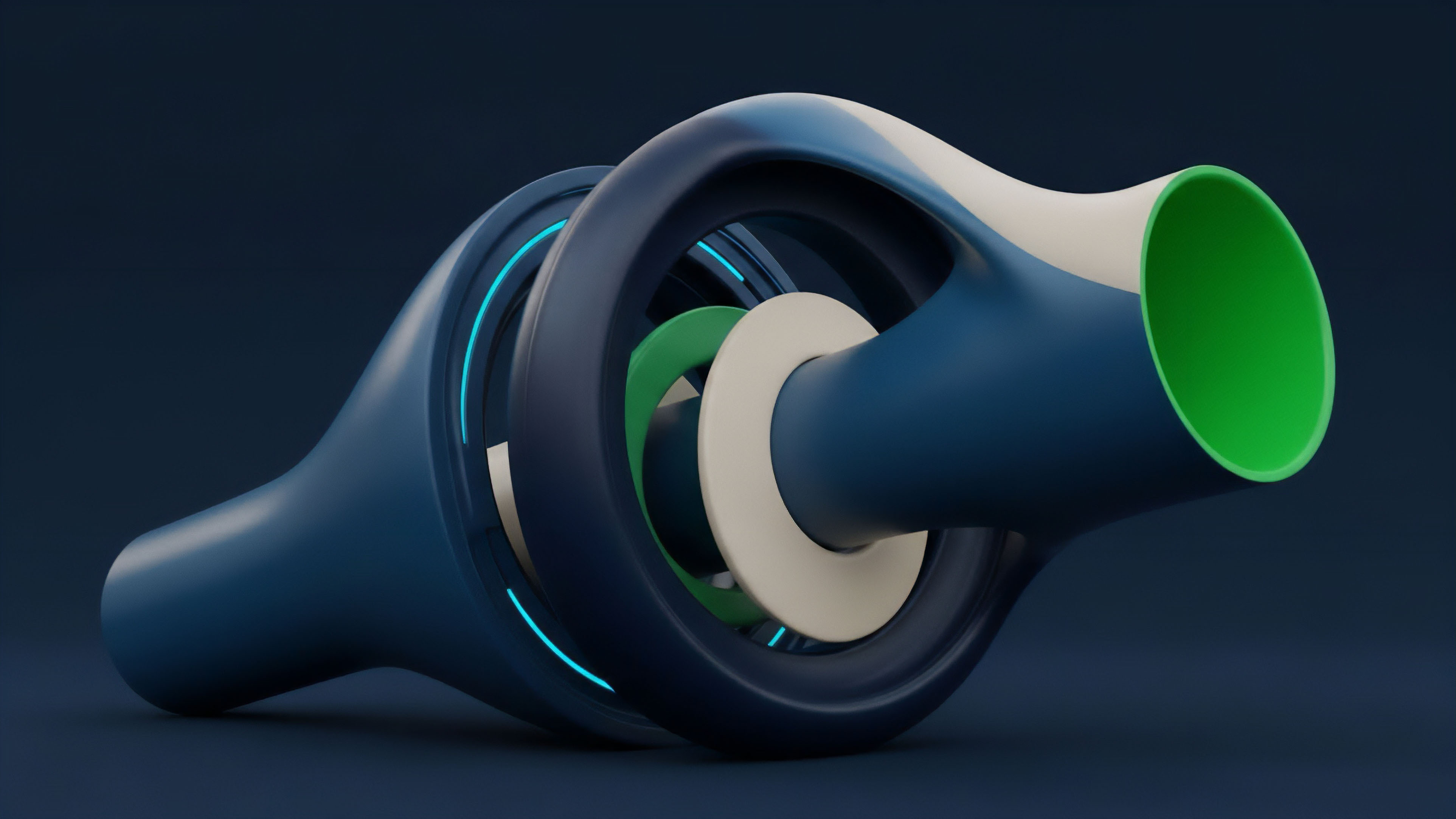

The design of the aggregation process must prioritize data security and censorship resistance to protect against these systemic risks. The aggregation process essentially acts as the market’s nervous system, translating external stimuli into internal operational decisions.

Origin

The necessity for on-chain data aggregation emerged from the fundamental architectural shift from centralized exchanges (CEX) to decentralized protocols.

In traditional finance and CEX environments, market data is proprietary and centralized. A single entity controls the order book, transaction history, and risk calculations, providing a clean, single source of truth for all participants. When options markets began to form on decentralized blockchains, this centralized data model was no longer viable.

The early challenge for DeFi options protocols was the lack of standardized data feeds. Unlike CEXs, where data is readily available via APIs, decentralized applications (dApps) must extract data directly from the blockchain’s state. Early attempts at data analysis involved manually scraping transaction logs, which was inefficient and prone to errors.

The proliferation of different options protocols, each with unique smart contract architectures and collateral models, exacerbated the problem. The market needed a mechanism to unify this fragmented data into a single, reliable source for risk calculations.

The Shift from Centralized to Decentralized Data

The transition from centralized to decentralized derivatives required a new data infrastructure. The first generation of DeFi protocols often relied on simple metrics like total value locked (TVL) and basic liquidity pool data. As options protocols grew in complexity, a more sophisticated data layer became essential.

This led to the development of dedicated data indexing solutions that could parse complex smart contract events and calculate financial primitives. The goal was to replicate the data integrity and accessibility of traditional financial markets in a trustless environment.

Theory

On-chain data aggregation for options relies on a synthesis of quantitative finance principles and protocol physics.

The primary theoretical objective is to accurately calculate key risk parameters, particularly implied volatility (IV), a required input for pricing models like Black-Scholes or binomial trees, and open interest (OI), the number of outstanding contracts a protocol must keep collateralized. In a decentralized environment, IV cannot simply be quoted from a central source; it must be derived from the market’s activity on-chain.

Reconstructing Volatility Surfaces

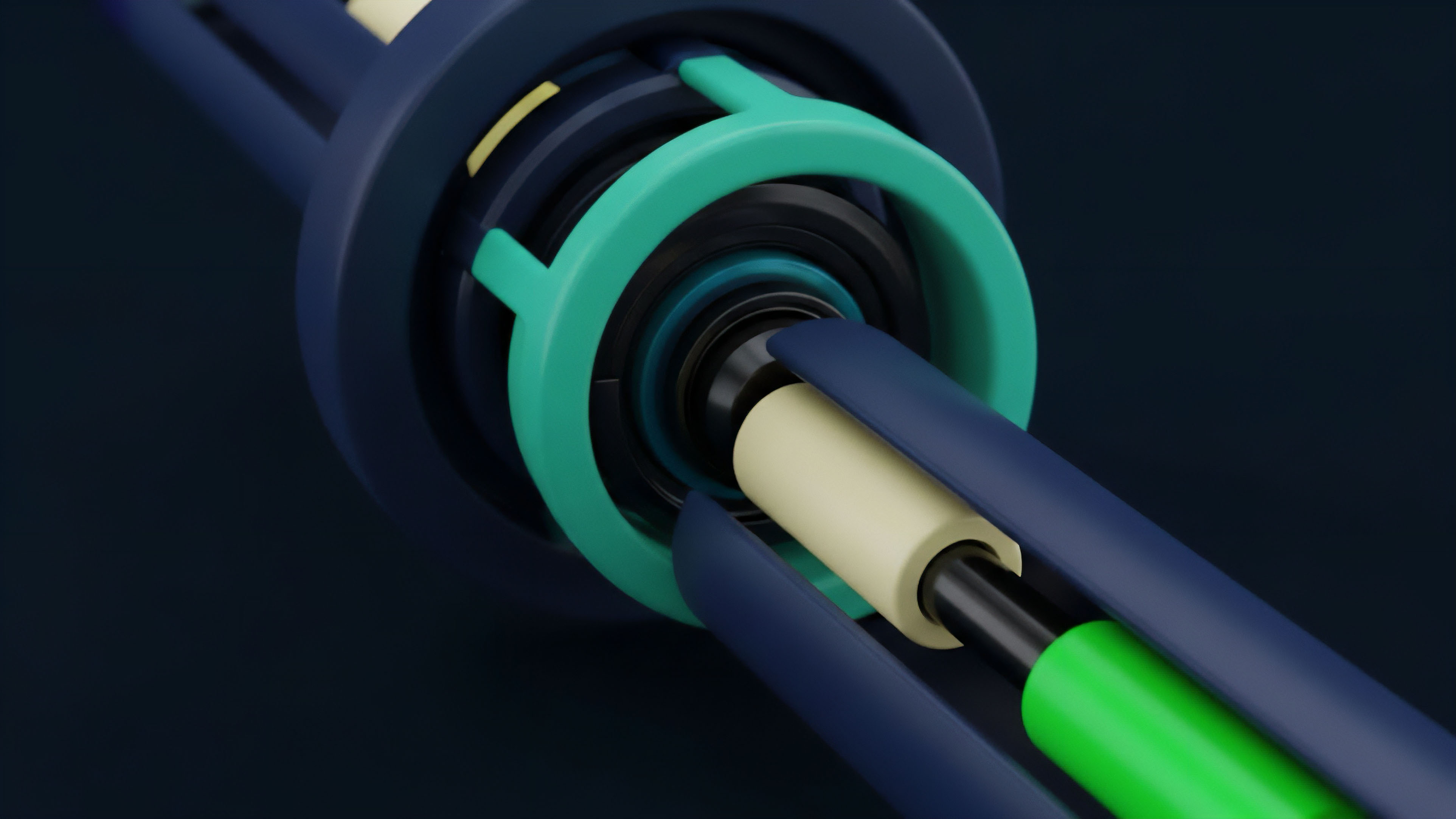

The process begins with extracting transaction data from options protocols. Every time an option is minted, traded, or exercised, a corresponding event log is generated on the blockchain. Aggregation involves collecting these logs and calculating the option’s current price.
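The collection step can be sketched as a fold over decoded events. This is a minimal Python sketch under stated assumptions: logs have already been ABI-decoded into dicts, and the event kinds (`mint`, `trade`, `exercise`, `burn`) and field names (`series`, `size`, `price`) are hypothetical placeholders, since every protocol defines its own events.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Optional


@dataclass
class SeriesStats:
    open_interest: float = 0.0          # contracts currently outstanding
    volume: float = 0.0                 # contracts that changed hands in trades
    last_price: Optional[float] = None  # premium of the most recent trade


def aggregate_events(events):
    """Fold decoded option events into per-series open interest and volume.

    Hypothetical event kinds: 'mint' opens contracts, 'trade' moves them
    between holders, 'exercise' and 'burn' close them.
    """
    stats = defaultdict(SeriesStats)
    for ev in events:
        s = stats[ev["series"]]
        kind = ev["kind"]
        if kind == "mint":
            s.open_interest += ev["size"]
        elif kind in ("exercise", "burn"):
            s.open_interest -= ev["size"]
        elif kind == "trade":
            s.volume += ev["size"]
            s.last_price = ev["price"]
        # unknown kinds are ignored rather than guessed at
    return dict(stats)
```

Ignoring unrecognized event kinds, instead of guessing their effect, keeps a new or changed contract event from silently corrupting open interest.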

This price data is then used to back-solve for the implied volatility, a key input in option pricing models. The resulting data set must be structured to create a volatility surface, which maps implied volatility across different strike prices and expiration dates. This surface provides a visual representation of market expectations regarding future price movements.
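Back-solving for IV works because, for a European call, the Black-Scholes price increases monotonically with volatility, so a bracketing root finder such as bisection converges whenever the observed price lies inside the bracket. The sketch below assumes European calls with no dividends, prices already expressed in the quote currency, and time to expiry in years; `implied_vol` and `build_surface` are illustrative names, not any protocol’s API.

```python
import math


def norm_cdf(x):
    """Standard normal CDF via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_call(spot, strike, t, rate, vol):
    """Black-Scholes price of a European call with no dividends."""
    sqrt_t = math.sqrt(t)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol**2) * t) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    return spot * norm_cdf(d1) - strike * math.exp(-rate * t) * norm_cdf(d2)


def implied_vol(price, spot, strike, t, rate, lo=1e-4, hi=5.0, tol=1e-8, max_iter=200):
    """Back-solve IV by bisection on [lo, hi]; the call price rises with vol."""
    if not bs_call(spot, strike, t, rate, lo) <= price <= bs_call(spot, strike, t, rate, hi):
        raise ValueError("price is outside the range spanned by [lo, hi]")
    for _ in range(max_iter):
        mid = 0.5 * (lo + hi)
        if bs_call(spot, strike, t, rate, mid) < price:
            lo = mid  # model price too low: volatility must be higher
        else:
            hi = mid
        if hi - lo < tol:
            break
    return 0.5 * (lo + hi)


def build_surface(call_prices, spot, rate):
    """Map {(strike, years_to_expiry): price} to {(strike, years_to_expiry): IV}."""
    return {
        (strike, t): implied_vol(price, spot, strike, t, rate)
        for (strike, t), price in call_prices.items()
    }
```

Bisection is slower than Newton’s method but needs no vega and cannot diverge on sparse or noisy quotes, which is a reasonable trade-off when on-chain prices are thin.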

A high-quality aggregation process must account for:

- Liquidity Depth: The amount of capital available at different strike prices and expirations. Low liquidity can lead to significant price discrepancies and make volatility calculations unreliable.

- Greeks Calculations: The aggregated data serves as the basis for calculating risk sensitivities like Delta, Gamma, Theta, and Vega. These calculations are essential for market makers to hedge their positions and manage portfolio risk.

- Protocol-Specific Parameters: Different protocols have different settlement mechanisms and collateral requirements. The aggregation process must adapt to these specific parameters to accurately reflect the true risk profile of the protocol.
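For the Greeks item, a minimal sketch of the closed-form Black-Scholes sensitivities of a European call (no dividends), plus a signed sum across positions. The position tuple layout `(quantity, strike, years_to_expiry, implied_vol)` is an assumption for illustration.

```python
import math


def norm_pdf(x):
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def norm_cdf(x):
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def call_greeks(spot, strike, t, rate, vol):
    """Black-Scholes Greeks of one European call (no dividends).

    theta is per year (divide by 365 for per day); vega is per 1.00 change
    in volatility (divide by 100 for per vol point).
    """
    sqrt_t = math.sqrt(t)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol**2) * t) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    theta = (-spot * norm_pdf(d1) * vol / (2.0 * sqrt_t)
             - rate * strike * math.exp(-rate * t) * norm_cdf(d2))
    return {
        "delta": norm_cdf(d1),
        "gamma": norm_pdf(d1) / (spot * vol * sqrt_t),
        "theta": theta,
        "vega": spot * norm_pdf(d1) * sqrt_t,
    }


def portfolio_greeks(positions, spot, rate):
    """Sum quantity-weighted Greeks; a negative quantity is a short call."""
    totals = {"delta": 0.0, "gamma": 0.0, "theta": 0.0, "vega": 0.0}
    for qty, strike, t, vol in positions:
        for name, value in call_greeks(spot, strike, t, rate, vol).items():
            totals[name] += qty * value
    return totals
```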

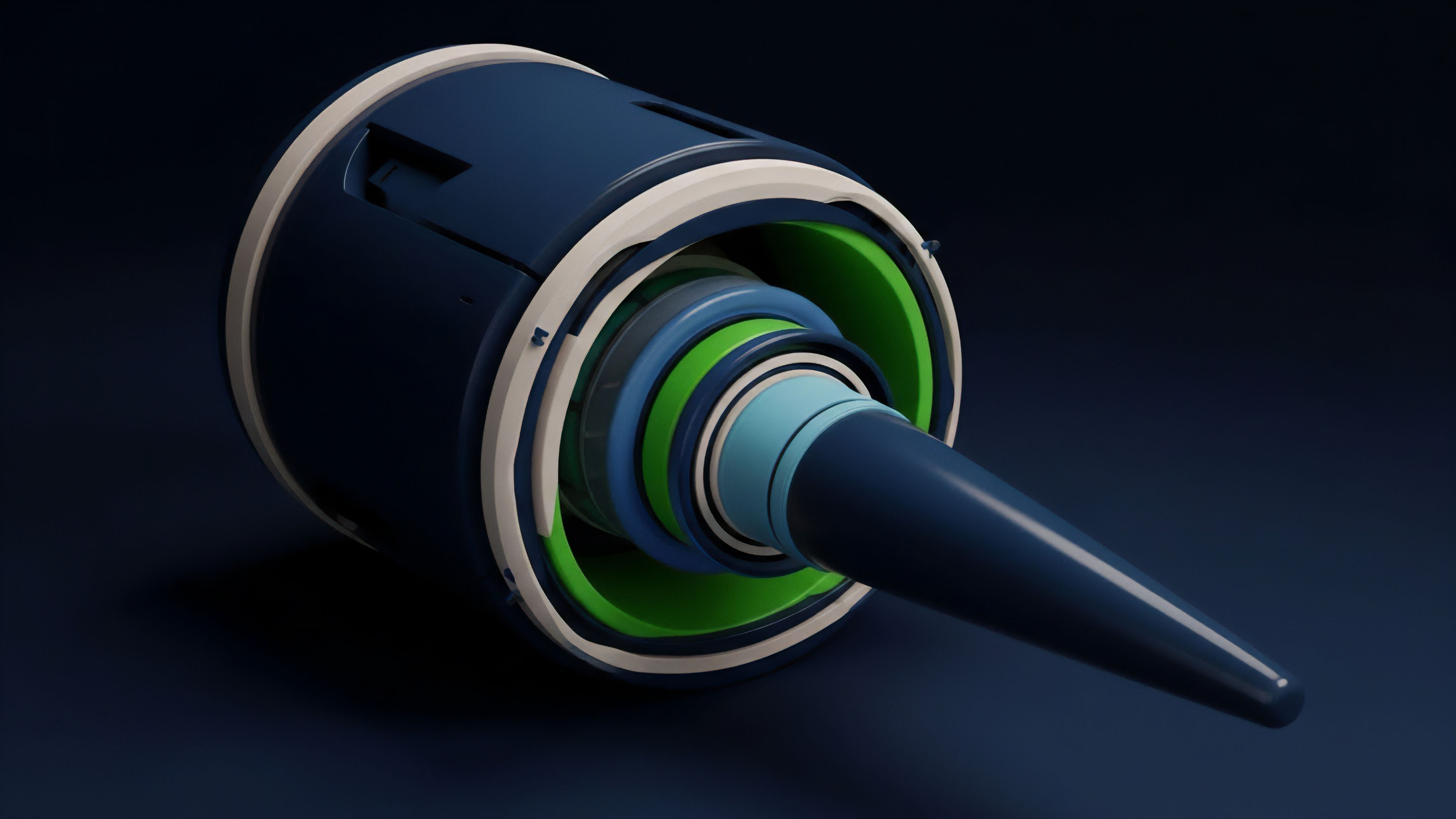

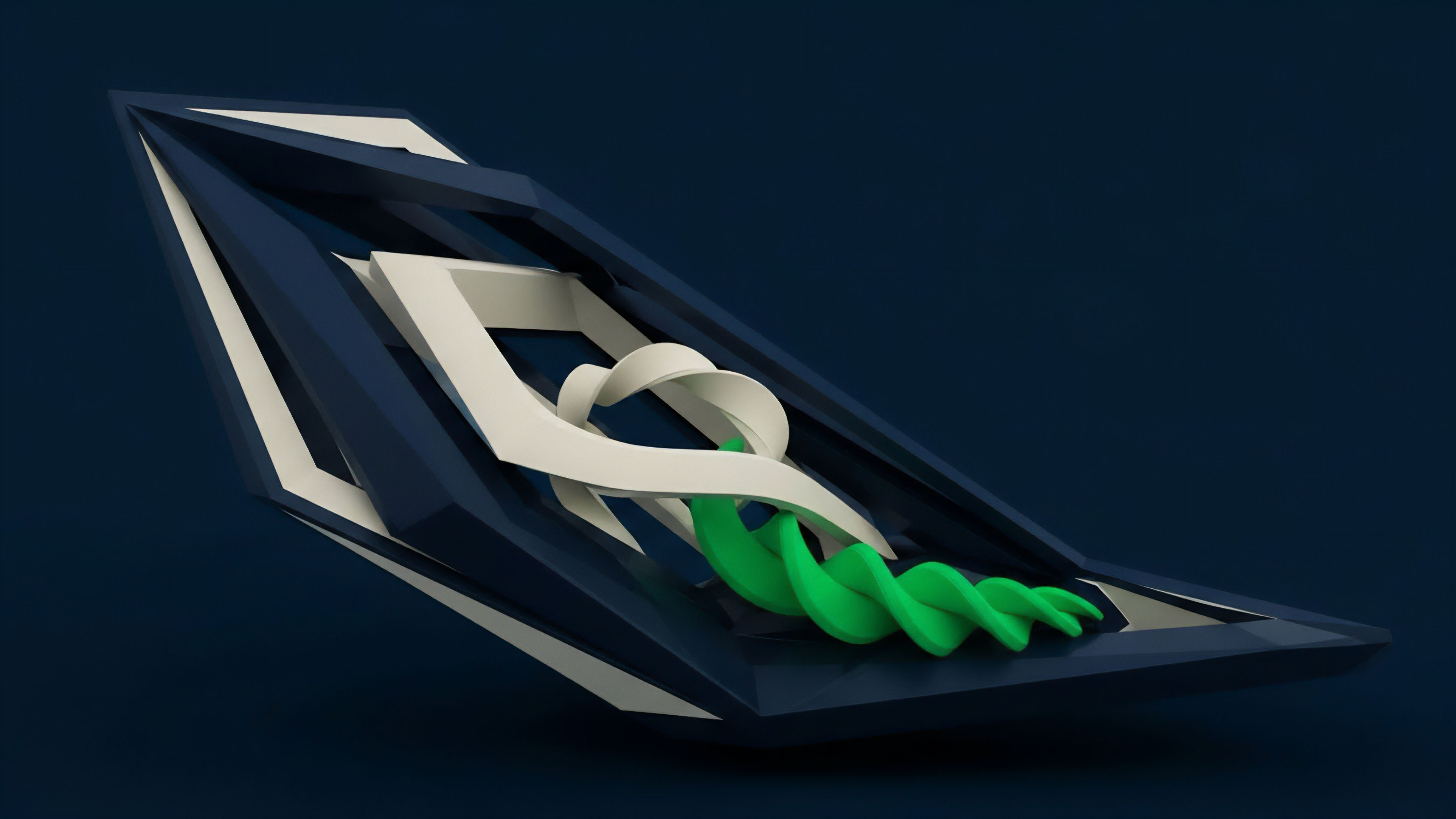

Data Pipeline Architecture

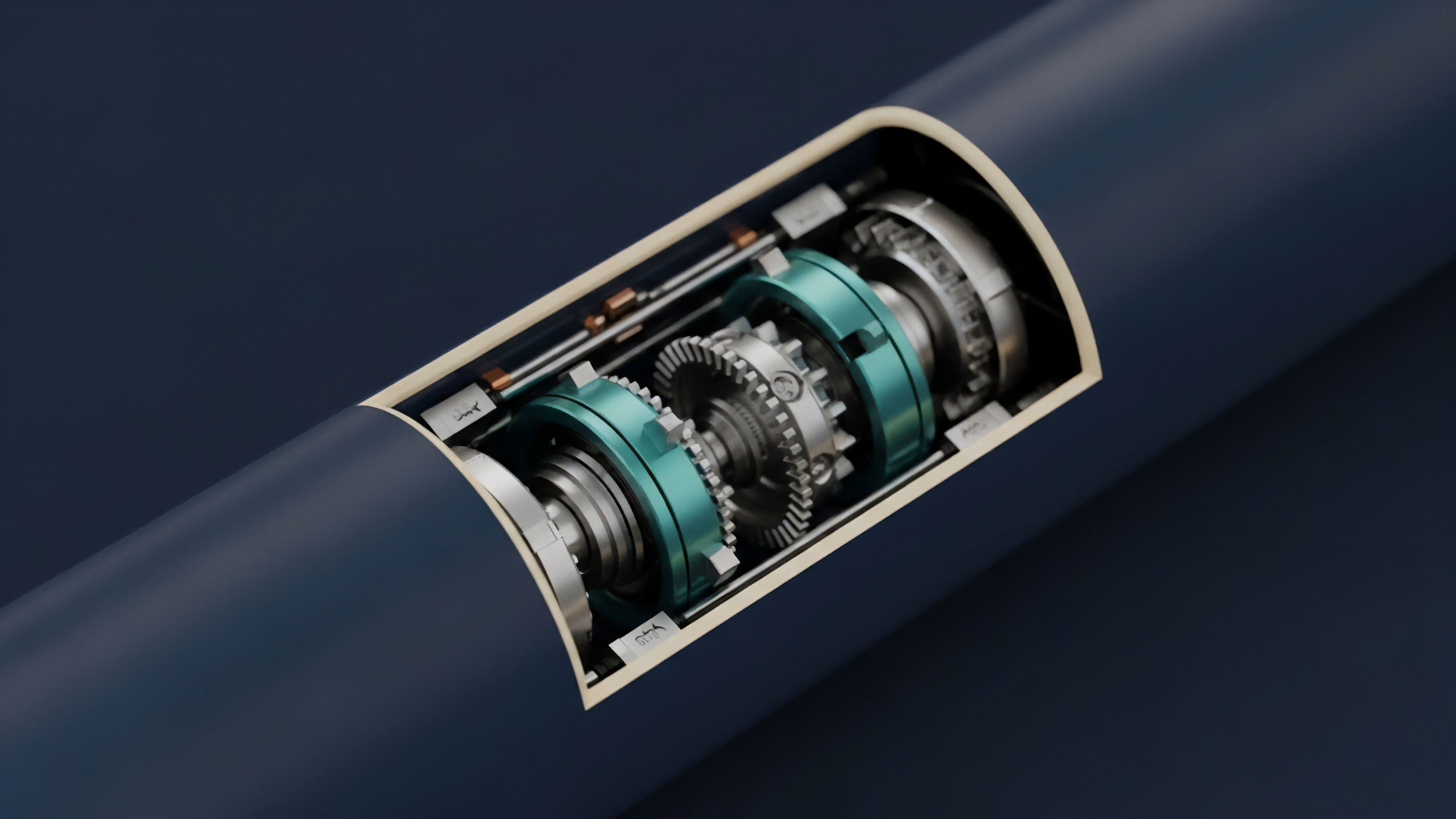

The technical implementation of aggregation typically follows a pipeline model. Raw event data from the blockchain is first extracted, then transformed into a structured format, and finally loaded into a database or data warehouse for querying. This process requires significant computational resources to keep up with the real-time stream of transactions, especially on high-throughput blockchains.
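The extract, transform, and load stages can be illustrated with Python’s built-in `sqlite3` module standing in for a data warehouse. The log dict layout, the `Trade` topic name, and the `trades` table schema are hypothetical; a production indexer would read logs from a node (for example via the `eth_getLogs` JSON-RPC method) and decode them against the contract ABI.

```python
import sqlite3


def extract(raw_logs, contract_address):
    """Extract: keep only logs emitted by the indexed options contract."""
    return [log for log in raw_logs if log["address"] == contract_address]


def transform(logs):
    """Transform: turn hypothetical 'Trade' logs into typed rows."""
    rows = []
    for log in logs:
        if log["topic"] != "Trade":
            continue  # other event types would get their own tables
        d = log["data"]
        rows.append((log["block"], d["series"], float(d["size"]), float(d["price"])))
    return rows


def load(conn, rows):
    """Load: append rows to a queryable table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS trades (block INTEGER, series TEXT, size REAL, price REAL)"
    )
    conn.executemany("INSERT INTO trades VALUES (?, ?, ?, ?)", rows)
    conn.commit()


def volume_by_series(conn):
    """Query the loaded data: total traded size per option series."""
    return dict(conn.execute("SELECT series, SUM(size) FROM trades GROUP BY series"))
```

Keeping the three stages as separate functions lets an indexer re-run `transform` and `load` over stored raw logs after a schema change without re-fetching from the chain.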

Approach

The current approach to on-chain data aggregation involves a variety of architectural solutions, each presenting different trade-offs in terms of latency, cost, and data integrity. The choice of aggregation method significantly impacts a protocol’s performance and risk profile.

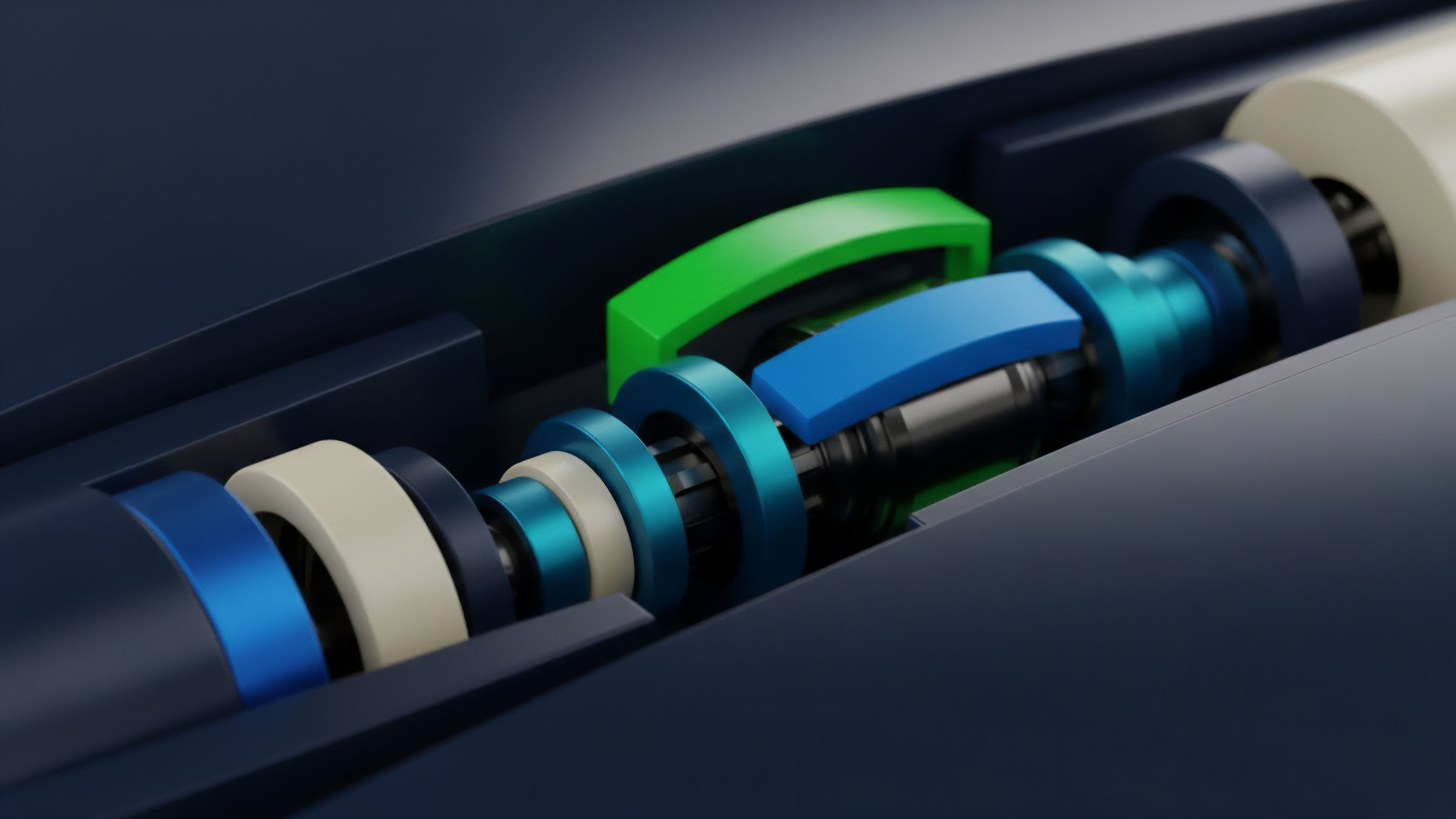

Centralized Indexers versus Decentralized Oracles

A common approach utilizes centralized data providers or indexers that listen to blockchain events and provide structured data feeds via an API. While efficient, this approach introduces a single point of failure and reintroduces centralization risks. The alternative involves decentralized oracles or data feeds, where multiple nodes contribute data and reach consensus on its accuracy.
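One common way for multiple nodes to agree on a numeric value is to take the median of their reports and discard outliers. A minimal sketch, assuming each node reports a single number (for instance an at-the-money IV) and that `quorum` and `max_deviation` are protocol-chosen parameters; this is a generic median-with-outlier-filter scheme, not the method of any particular oracle network.

```python
import statistics


def aggregate_reports(reports, quorum, max_deviation):
    """Combine independent node reports into one published value.

    reports: {node_id: value}. A report is discarded when its relative
    distance from the median of all reports exceeds max_deviation.
    Returns (published_value, sorted list of rejected node ids).
    """
    if len(reports) < quorum:
        raise ValueError("not enough reports to reach quorum")
    med = statistics.median(reports.values())
    accepted = {
        node: v for node, v in reports.items() if abs(v - med) <= max_deviation * abs(med)
    }
    if len(accepted) < quorum:
        raise ValueError("too many outliers to reach quorum")
    rejected = sorted(set(reports) - set(accepted))
    return statistics.median(accepted.values()), rejected
```

The median is used because a single manipulated report cannot move it far, unlike a mean; the quorum check trades some latency for that robustness, which matches the trade-off described for decentralized oracles.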

The challenge in options markets is that a simple price feed (like for spot assets) is insufficient. Options require a real-time volatility surface. This has led to the development of specialized “volatility oracles” that perform complex calculations on-chain or off-chain to provide this specific data point.

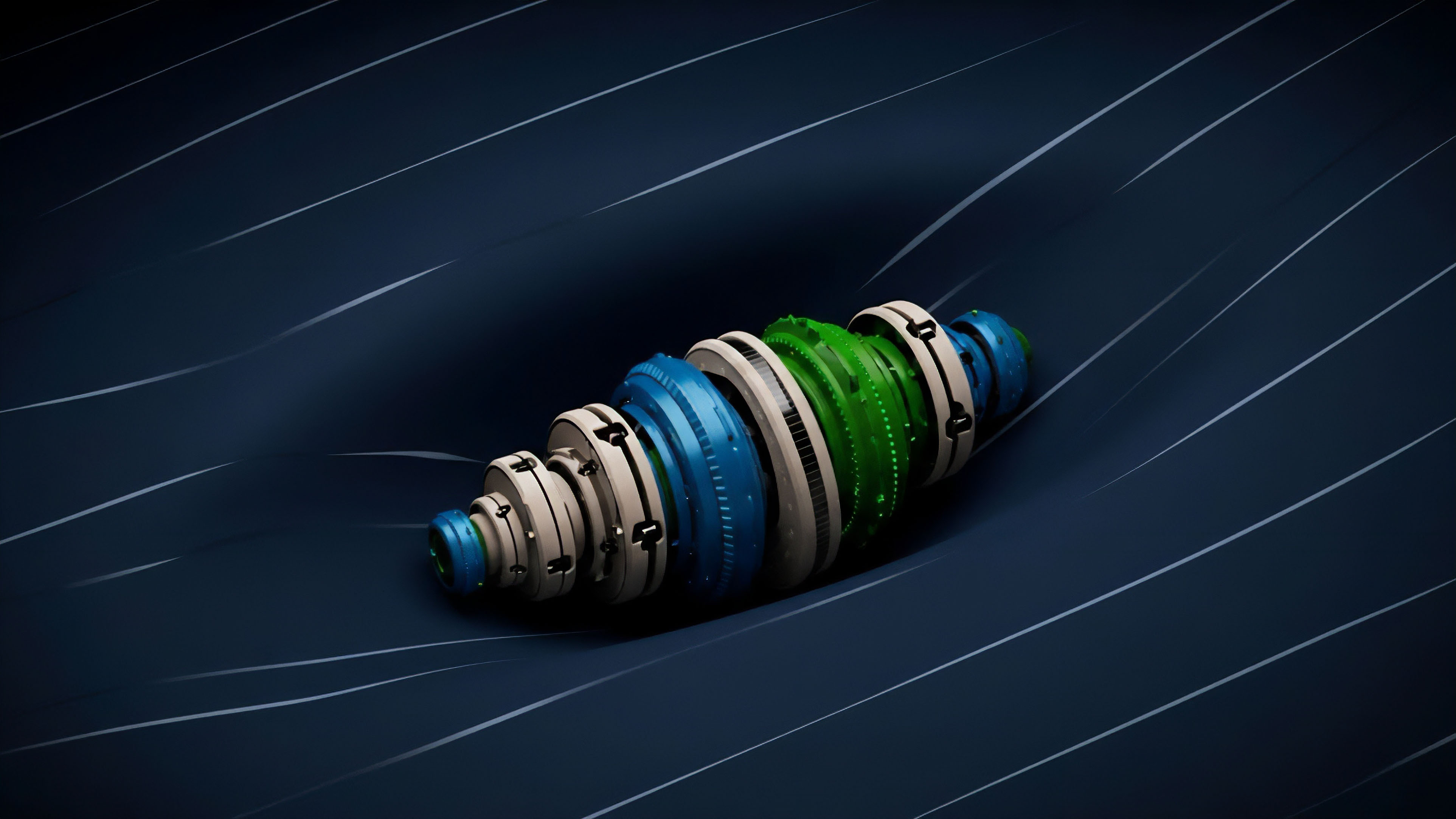

| Aggregation Method | Description | Latency Trade-off | Trust Model |
|---|---|---|---|
| Centralized Indexing | Single entity processes raw data and provides an API. | Low latency, high speed. | Requires trust in the indexer’s integrity. |
| Decentralized Oracles | Multiple nodes reach consensus on data before publishing. | Higher latency due to consensus mechanism. | Trustless, censorship-resistant. |
| In-Protocol Calculation | Protocol’s smart contracts calculate data internally. | Highest latency and gas cost. | Highest security and trustlessness. |

Risk Management Applications

Market makers rely heavily on aggregated data to manage their risk exposures. The aggregated data provides a real-time view of their portfolio’s Greek sensitivities. For example, if aggregated data shows a sudden spike in implied volatility, a market maker can adjust their hedge positions to maintain a neutral risk profile.

This requires data to be not only accurate but also delivered with minimal delay.
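Treating “neutral” as zero net delta and zero net vega, the adjustment can be sketched in two steps: the underlying has no vega, so vega must be offset with an option first, and the delta that option adds is then offset with the underlying. The function name and the use of a single hedge option are simplifying assumptions.

```python
def neutralizing_trades(port_delta, port_vega, hedge_delta, hedge_vega):
    """Return (option_qty, underlying_qty) that bring net delta and vega to zero.

    hedge_delta / hedge_vega are the per-contract Greeks of the hedge option;
    one unit of the underlying contributes delta 1 and vega 0.
    """
    if hedge_vega == 0:
        raise ValueError("hedge option must have non-zero vega")
    option_qty = -port_vega / hedge_vega                    # step 1: cancel vega
    residual_delta = port_delta + option_qty * hedge_delta  # delta left after step 1
    underlying_qty = -residual_delta                        # step 2: cancel delta
    return option_qty, underlying_qty
```

For example, with portfolio delta 50 and vega 200, and a hedge option with delta 0.5 and vega 40, the sketch sells 5 options and then sells 47.5 units of the underlying.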

Evolution

The evolution of on-chain data aggregation reflects a move toward higher precision and greater efficiency in calculating complex financial metrics. Initially, aggregation focused on simple metrics like token balances and transaction counts.

The next stage involved building custom indexing solutions to track specific smart contract events, allowing for the calculation of basic open interest and trading volume for options protocols.

From Simple Metrics to Volatility Surfaces

The critical leap in data aggregation was the transition from simple metrics to the calculation of real-time volatility surfaces. Early protocols struggled to accurately price options due to the lack of reliable volatility data. The evolution of aggregation introduced sophisticated methods for calculating implied volatility from on-chain transactions, often requiring significant computational resources.

This data allows for the creation of a volatility surface, which is essential for advanced risk management and pricing strategies.

The development of on-chain data aggregation has progressed from simple transaction monitoring to sophisticated, real-time calculation engines capable of generating dynamic volatility surfaces.

The challenge in this evolution has been maintaining data integrity while increasing calculation speed. The data aggregation layer has become increasingly complex, and some systems incorporate machine learning to forecast volatility from historical on-chain activity. This allows protocols to manage risk proactively rather than only reacting to market changes.
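Forecasting volatility from historical activity can be illustrated with a far simpler statistical baseline than machine learning: an exponentially weighted moving average (EWMA) of squared log returns, the recursion popularized by RiskMetrics. The decay `lam=0.94` and the 365-period annualization (crypto markets trade every day) are assumptions, and the input could be any on-chain price series, such as trade prices sampled once per day.

```python
import math


def ewma_volatility(prices, lam=0.94, periods_per_year=365):
    """EWMA forecast of annualized volatility from a price series.

    Variance recursion: var_t = lam * var_{t-1} + (1 - lam) * r_t**2,
    where r_t is the log return between consecutive prices.
    """
    if len(prices) < 3:
        raise ValueError("need at least three prices")
    returns = [math.log(b / a) for a, b in zip(prices, prices[1:])]
    var = returns[0] ** 2  # seed the recursion with the first squared return
    for r in returns[1:]:
        var = lam * var + (1.0 - lam) * r * r
    return math.sqrt(var * periods_per_year)
```

A learned model would replace the fixed recursion, but a baseline like this is still useful for checking whether a more complex forecaster actually adds information.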

Interoperability and Standardization

The current state of aggregation emphasizes interoperability between different protocols. Standardization of data formats allows for a more cohesive view of the entire options market. This reduces fragmentation and enables cross-protocol strategies.
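Standardization in practice often means mapping each protocol’s event format onto one shared record. A minimal sketch with two hypothetical protocols: `from_protocol_a` assumes fixed-point integers (strike scaled by 1e8, size by 1e18, premium by 1e6) and `from_protocol_b` assumes plain decimal strings; both field sets are invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NormalizedTrade:
    protocol: str
    underlying: str
    strike: float   # quote currency
    expiry: int     # unix timestamp, seconds
    is_call: bool
    size: float     # whole contracts
    premium: float  # per contract, quote currency


def from_protocol_a(ev):
    # hypothetical protocol A: fixed-point integers and an option-type string
    return NormalizedTrade(
        "A", ev["asset"], ev["strike"] / 1e8, ev["expiry"],
        ev["type"] == "CALL", ev["amount"] / 1e18, ev["premium"] / 1e6,
    )


def from_protocol_b(ev):
    # hypothetical protocol B: decimal strings and a boolean put flag
    return NormalizedTrade(
        "B", ev["underlying"], float(ev["strikePrice"]), ev["expiryTs"],
        not ev["isPut"], float(ev["contracts"]), float(ev["price"]),
    )
```

Once both adapters emit the same record, downstream open-interest, volume, and surface calculations can run over a merged stream without protocol-specific branches.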

The future evolution points toward fully decentralized data layers where data providers are incentivized to provide accurate information through token-based rewards and penalties.
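A token-based incentive scheme of this kind can be sketched as a per-round settlement: reporters close to the consensus value earn a reward, and those outside a tolerance lose a fraction of their stake. The reward size, slash fraction, and relative tolerance are hypothetical parameters, not any live protocol’s values.

```python
def settle_round(stakes, reports, consensus, tolerance, reward, slash_fraction):
    """Return updated stakes after one oracle round.

    stakes:  {node_id: staked tokens}; every reporter must have an entry.
    reports: {node_id: reported value}.
    A report within `tolerance` (relative) of `consensus` earns `reward`;
    otherwise the reporter loses `slash_fraction` of its stake.
    """
    updated = dict(stakes)
    for node, value in reports.items():
        if abs(value - consensus) <= tolerance * abs(consensus):
            updated[node] += reward
        else:
            updated[node] -= slash_fraction * updated[node]
    return updated
```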

Horizon

The future of on-chain data aggregation will center on creating fully automated risk engines and new forms of structured products. The aggregated data will move beyond simple monitoring and become an active component of protocol logic.

We are moving toward a system where collateral requirements dynamically adjust based on real-time volatility data derived from on-chain aggregation.

Dynamic Collateral Management

The next generation of options protocols will use aggregated data to dynamically adjust collateral requirements based on market conditions. If the aggregated data shows a sudden increase in implied volatility, the protocol will automatically increase collateral requirements for short option positions. This reduces systemic risk by preventing undercollateralization during periods of market stress.

This level of automation allows for more efficient capital utilization by reducing unnecessary collateral buffers during stable periods.
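As an illustration of volatility-dependent collateral, the sketch below sizes a short-call requirement as the cost to buy the option back after an assumed adverse scenario: spot up 15% and implied volatility up 50% in relative terms, with a floor of 10% of spot. All three parameters, and the use of Black-Scholes repricing, are assumptions rather than a documented protocol rule.

```python
import math


def norm_cdf(x):
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_call(spot, strike, t, rate, vol):
    """Black-Scholes price of a European call with no dividends."""
    sqrt_t = math.sqrt(t)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol**2) * t) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    return spot * norm_cdf(d1) - strike * math.exp(-rate * t) * norm_cdf(d2)


def short_call_collateral(spot, strike, t, rate, implied_vol,
                          spot_shock=0.15, vol_shock=0.5, floor_fraction=0.10):
    """Hypothetical rule: collateral = buy-back cost of the call after spot
    rises by spot_shock and IV rises by vol_shock (relative), floored at
    floor_fraction of spot."""
    stressed_price = bs_call(spot * (1 + spot_shock), strike, t, rate,
                             implied_vol * (1 + vol_shock))
    return max(stressed_price, floor_fraction * spot)
```

Because the stressed price scales with the current implied volatility, a jump in aggregated IV raises the requirement automatically, and a calm market lowers it toward the floor.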

The Automated Risk Engine

The ultimate goal is to create fully autonomous risk engines that operate without human intervention. These engines will continuously monitor aggregated data, identify potential risks, and execute automated responses. The engine will use aggregated data for:

- Automated Hedging: Market makers can program their strategies to automatically hedge their positions based on real-time changes in aggregated Greek sensitivities.

- Dynamic Pricing: Options prices will dynamically adjust based on real-time implied volatility calculations, reducing opportunities for arbitrage.

- Systemic Contagion Monitoring: The engine will monitor aggregated data across different protocols to identify potential contagion risks and proactively mitigate them.
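For contagion monitoring, one simple cross-protocol signal is an account that is under-collateralized on one protocol while holding positions on another, since a liquidation on the first can force unwinds on the second. A minimal sketch, assuming per-protocol collateral and requirement figures are already aggregated; the tuple layout and the `min_ratio` threshold are hypothetical.

```python
from collections import defaultdict


def contagion_candidates(positions, min_ratio=1.0):
    """Flag accounts under-collateralized on at least one protocol while
    also holding positions on another protocol.

    positions: iterable of (account, protocol, collateral, requirement).
    Returns {account: sorted protocols where collateral < min_ratio * requirement}.
    """
    totals = defaultdict(lambda: [0.0, 0.0])  # (account, protocol) -> [collateral, requirement]
    for account, protocol, coll, req in positions:
        totals[(account, protocol)][0] += coll
        totals[(account, protocol)][1] += req
    venues = defaultdict(set)
    shortfalls = defaultdict(list)
    for (account, protocol), (coll, req) in totals.items():
        venues[account].add(protocol)
        if req > 0 and coll < min_ratio * req:
            shortfalls[account].append(protocol)
    return {a: sorted(ps) for a, ps in shortfalls.items() if len(venues[a]) > 1}
```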

This automated system requires a robust and reliable data aggregation layer. The development of these automated risk engines will define the next phase of decentralized options markets, moving toward greater capital efficiency and stability.
