Essence

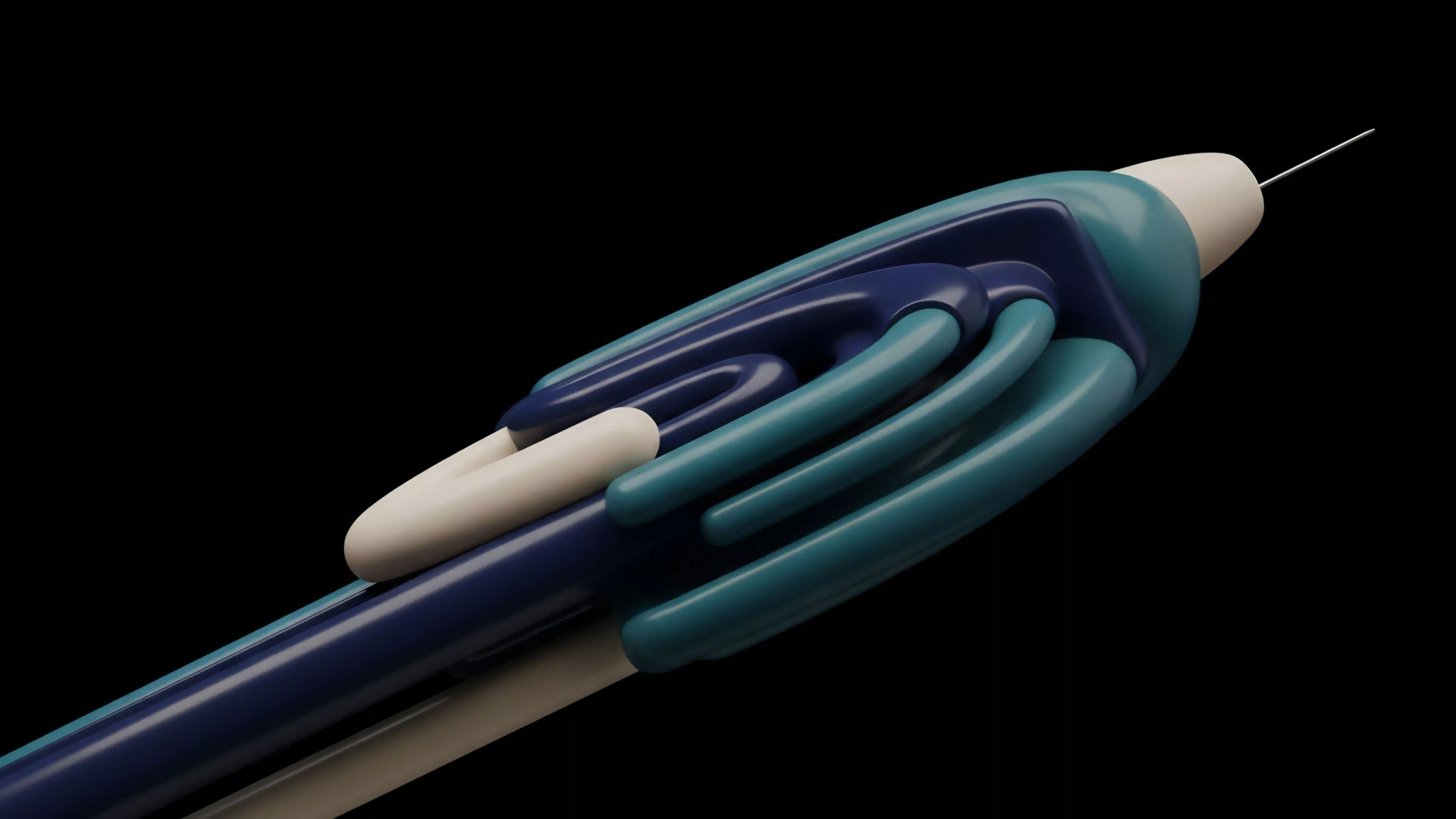

An Off Chain Computation Layer is an architectural framework that moves intensive financial calculations, such as option pricing, volatility surface modeling, and margin risk assessment, off the resource-constrained main blockchain. This separation permits complex mathematical operations that would exceed the gas limits and computational throughput of base-layer consensus protocols. By moving these processes to a verifiable external environment, protocols can deliver real-time responsiveness and high-frequency updates without compromising the integrity of on-chain settlement.

Off Chain Computation Layer enables the execution of complex derivative pricing models by decoupling intensive mathematical processes from blockchain consensus constraints.

The core utility resides in the ability to process large datasets and run compute-heavy pricing routines, such as Black-Scholes variants or iterative Monte Carlo simulations, that are prohibitively expensive to execute directly on-chain. This structural choice transforms the blockchain from a general-purpose processor into a secure, final settlement ledger, while the computational layer functions as a high-performance engine for derivatives market activity. Participants rely on cryptographic proofs to ensure that the results produced off-chain remain consistent with the state transitions authorized by the underlying smart contracts.
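To make the cost gap concrete, the sketch below prices a European call two ways in plain Python: with the Black-Scholes closed form and with a Monte Carlo simulation under geometric Brownian motion. The function names, parameter values, path count, and seed are illustrative assumptions and are not taken from any particular protocol.

```python
import math
import random


def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_call(spot: float, strike: float, rate: float, vol: float, expiry: float) -> float:
    """Black-Scholes price of a European call (closed form)."""
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * expiry) / (vol * math.sqrt(expiry))
    d2 = d1 - vol * math.sqrt(expiry)
    return spot * norm_cdf(d1) - strike * math.exp(-rate * expiry) * norm_cdf(d2)


def mc_call(spot: float, strike: float, rate: float, vol: float, expiry: float,
            paths: int = 20000, seed: int = 7) -> float:
    """Monte Carlo estimate of the same call under geometric Brownian motion."""
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    drift = (rate - 0.5 * vol ** 2) * expiry
    diffusion = vol * math.sqrt(expiry)
    payoff_sum = 0.0
    for _ in range(paths):
        terminal = spot * math.exp(drift + diffusion * rng.gauss(0.0, 1.0))
        payoff_sum += max(terminal - strike, 0.0)
    # Discount the average payoff back to today.
    return math.exp(-rate * expiry) * payoff_sum / paths


if __name__ == "__main__":
    print("closed form:", bs_call(100.0, 100.0, 0.05, 0.2, 1.0))
    print("monte carlo:", mc_call(100.0, 100.0, 0.05, 0.2, 1.0))
```

The closed form is a handful of arithmetic operations, while the simulation loops over tens of thousands of paths. Workloads of the second kind are the ones that become impractical inside a smart contract.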

Origin

The necessity for an Off Chain Computation Layer arose from the fundamental performance limitations of early decentralized finance protocols, which struggled to support the latency and throughput requirements of professional-grade options trading.

Initial attempts to implement automated market makers or order books directly on-chain encountered severe bottlenecks, as every price update or margin adjustment required a costly consensus transaction. Developers recognized that the bottleneck was not merely the speed of the underlying network, but the inherent incompatibility between sequential, consensus-heavy validation and the parallel, high-speed nature of derivative pricing engines.

- Computational Overhead refers to the excessive gas consumption required when executing complex derivative math directly within smart contracts.

- Latency Sensitivity dictates the requirement for sub-second updates in volatility surfaces to maintain accurate pricing in competitive markets.

- State Bloat describes the long-term accumulation of unnecessary data on-chain that degrades network performance over time.

This realization drove the industry toward hybrid architectures where the blockchain serves as the arbiter of truth and collateral custody, while dedicated off-chain servers handle the heavy lifting of order matching, risk management, and pricing. Early iterations relied on trusted centralized operators, but the evolution of zero-knowledge proofs and optimistic verification methods allowed for a shift toward decentralized, trust-minimized computational environments. This transition marks the maturation of the sector, moving from simplistic on-chain vaults to sophisticated derivative exchanges capable of mimicking the efficiency of traditional financial institutions.

Theory

The theoretical framework of Off Chain Computation Layer relies on the principle of verifiable outsourcing.

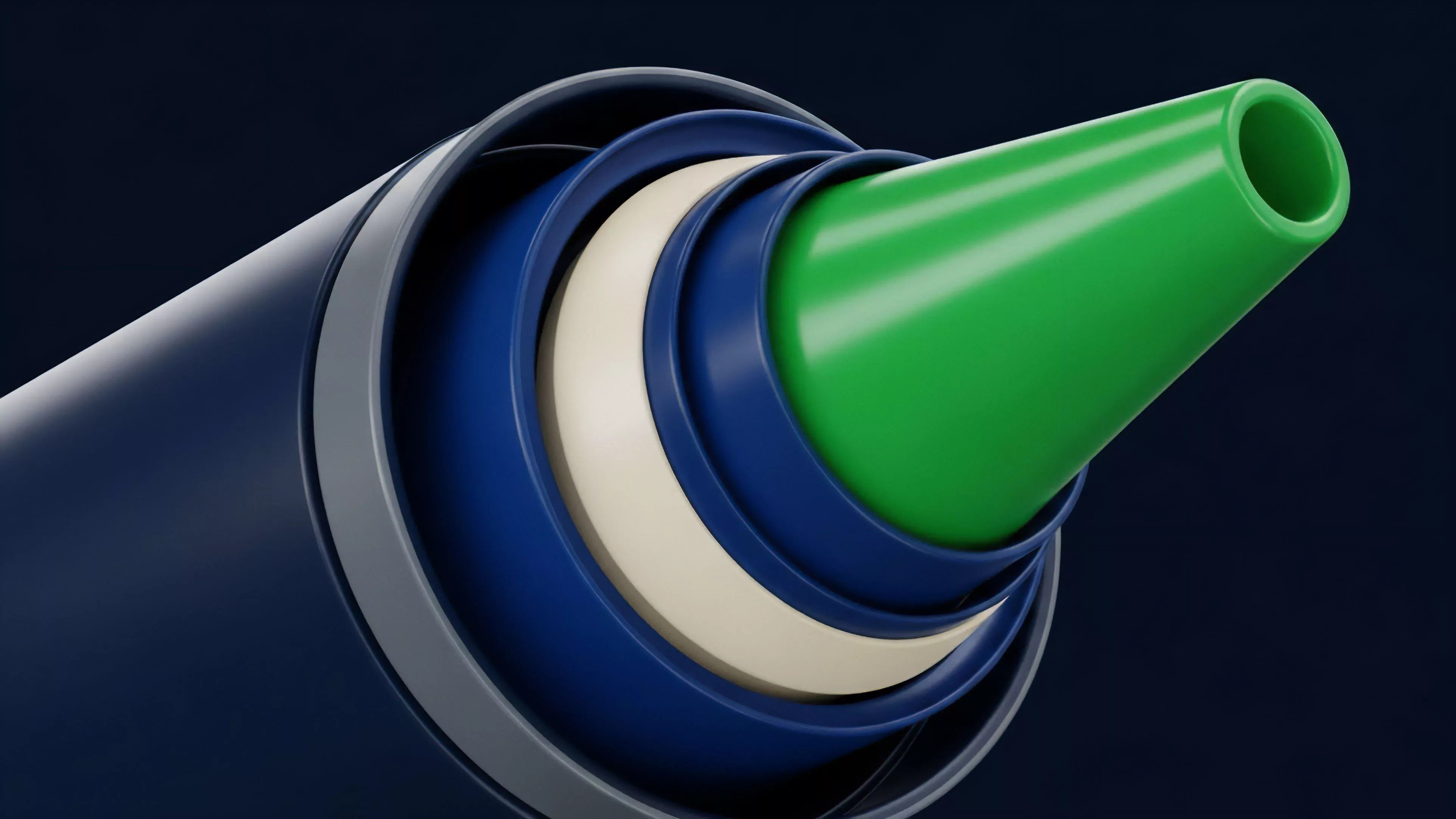

The system splits functionality into two distinct domains: the settlement layer and the computation layer. The settlement layer remains on-chain, enforcing the rules of collateralization, liquidation, and final asset transfer. The computation layer operates in an environment optimized for speed, performing the intensive mathematical tasks necessary to calculate the Greeks (Delta, Gamma, Vega, Theta, and Rho) and updating order books in real time.

| Component | Function | Execution Environment |
| --- | --- | --- |
| Settlement Layer | Collateral Custody | On-Chain |
| Computation Layer | Pricing Models | Off-Chain |
| Verification Mechanism | State Consistency | Hybrid |
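As an illustration of the work assigned to the computation layer, the following sketch evaluates the five Greeks of a European call using the standard Black-Scholes formulas. It assumes a non-dividend-paying underlying and annualized inputs; the function name `call_greeks` and the sample values are hypothetical.

```python
import math


def norm_pdf(x: float) -> float:
    """Standard normal density."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def call_greeks(spot: float, strike: float, rate: float, vol: float, expiry: float) -> dict:
    """Black-Scholes Greeks for a European call (theta is per year, vega and rho per unit change)."""
    sqrt_t = math.sqrt(expiry)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * expiry) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    discount = math.exp(-rate * expiry)
    return {
        "delta": norm_cdf(d1),
        "gamma": norm_pdf(d1) / (spot * vol * sqrt_t),
        "vega": spot * norm_pdf(d1) * sqrt_t,
        "theta": -spot * norm_pdf(d1) * vol / (2.0 * sqrt_t)
                 - rate * strike * discount * norm_cdf(d2),
        "rho": strike * expiry * discount * norm_cdf(d2),
    }


if __name__ == "__main__":
    for name, value in call_greeks(100.0, 100.0, 0.05, 0.2, 1.0).items():
        print(f"{name}: {value:.4f}")
```

A production engine would recompute these values for every strike and expiry on each surface update, which is why the calculation sits off-chain while only the resulting margin and liquidation decisions are enforced on-chain.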

The verification mechanism ensures that off-chain calculations align with on-chain collateral states, providing cryptographic assurance of market integrity.

This structure necessitates a robust verification mechanism, such as a zero-knowledge proof or a fraud-proof challenge window, to ensure the computation layer does not deviate from the protocol rules. Without these cryptographic safeguards, the computation layer becomes a potential point of failure where an operator could manipulate pricing data to favor specific participants. By anchoring the off-chain outputs to the on-chain state, the architecture forces the computation layer to behave as an honest agent, even when the underlying operators have an incentive to deviate.
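The anchoring idea can be sketched as a commitment plus a re-execution check, loosely in the spirit of a fraud-proof challenge. This is a deliberately simplified toy: the margin rule, the field names, and the `commit` and `challenge` helpers are all hypothetical, and a real system would run the dispute on-chain or replace re-execution with a zero-knowledge proof. Integer arithmetic is used so that every party computes bit-identical results.

```python
import hashlib
import json


def compute_margin(position: dict) -> int:
    """Hypothetical deterministic rule: margin = notional * margin_bps / 10_000, in integer units."""
    return position["notional"] * position["margin_bps"] // 10_000


def commit(inputs: dict, output: int) -> str:
    """Hash the canonical JSON encoding of inputs and output; this digest is what gets posted."""
    payload = json.dumps({"inputs": inputs, "output": output},
                         sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def challenge(inputs: dict, claimed_output: int, posted_commitment: str) -> bool:
    """Return True when the operator's claim should be rejected."""
    # The revealed data must match what was committed.
    if commit(inputs, claimed_output) != posted_commitment:
        return True
    # Re-execute the rule and compare with the claimed result.
    return compute_margin(inputs) != claimed_output


if __name__ == "__main__":
    position = {"notional": 250_000, "margin_bps": 800}
    honest = compute_margin(position)
    print("honest claim rejected:", challenge(position, honest, commit(position, honest)))
    inflated = honest + 1
    print("bad claim rejected:", challenge(position, inflated, commit(position, inflated)))
```

The design choice worth noting is determinism: a challenger can only prove deviation if the off-chain rule yields the same output for the same inputs on every machine, which is one reason fixed-point or integer math is often preferred over floating point in verifiable computation.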

It is a classic trade-off in distributed systems: the market requires speed, yet the protocol demands verifiable correctness.

Approach

Current implementations of Off Chain Computation Layer utilize a combination of trusted execution environments, decentralized sequencers, and cryptographic verification protocols to manage risk and pricing. Market makers now push volatility surface updates to these layers, which then propagate those prices to the user interface and the settlement engine. This approach allows for the dynamic adjustment of margin requirements based on real-time market conditions, rather than relying on stale data or overly conservative, static collateralization parameters.
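A minimal sketch of volatility-sensitive margining, under stated assumptions, might look like the following. The scaling rule (annualized volatility scaled to a risk horizon by the square root of time, multiplied by a confidence factor, with a static floor) is a common textbook heuristic and is not claimed to be the formula used by any specific protocol; all parameter names and defaults are hypothetical.

```python
import math


def margin_requirement(notional: float, annual_vol: float, horizon_days: float = 1.0,
                       confidence_mult: float = 2.33, floor_rate: float = 0.02) -> float:
    """Margin as a fraction of notional that grows with current volatility.

    annual_vol      : annualized volatility taken from the latest surface update
    horizon_days    : assumed time needed to liquidate the position
    confidence_mult : assumed multiplier (2.33 approximates a 99% one-sided normal quantile)
    floor_rate      : static minimum rate applied when volatility is low
    """
    horizon_vol = annual_vol * math.sqrt(horizon_days / 365.0)
    rate = max(confidence_mult * horizon_vol, floor_rate)
    return notional * rate


if __name__ == "__main__":
    print("calm market  :", margin_requirement(100_000, 0.10))
    print("stressed mkt :", margin_requirement(100_000, 0.80))
```

With these assumed defaults, a calm market falls back to the static floor, while a stressed market raises the requirement in proportion to the observed volatility.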

- Zero Knowledge Proofs allow for the compact verification of massive off-chain computations without exposing sensitive inputs to the pricing logic.

- Optimistic Rollups enable the batching of derivative transactions into single on-chain submissions that are presumed valid unless contested with a fraud proof during the challenge window, significantly reducing the cost per trade.

- Trusted Execution Environments provide hardware-level isolation for running proprietary pricing algorithms while maintaining confidentiality.

This design shift allows for the introduction of complex derivative products like exotic options and volatility-linked instruments that require continuous, multi-factor calculations. The challenge remains in minimizing the delay between the off-chain calculation and the on-chain enforcement of liquidations. If the computation layer fails to communicate a sudden volatility spike to the settlement layer in time, the protocol risks under-collateralization.

Consequently, sophisticated risk managers are building multi-tiered redundancy into these layers to ensure that even during network congestion, the system maintains accurate risk assessment and protects the solvency of the liquidity pools.
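One way to picture such redundancy is a tiered selection rule: use the freshest volatility update from any source, and fall back to a conservative static value when every source is stale. The sketch below is an assumption-laden illustration; the `VolUpdate` type, the five-second staleness limit, and the fallback volatility are hypothetical.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class VolUpdate:
    source: str         # hypothetical feed identifier
    implied_vol: float  # annualized implied volatility
    timestamp: float    # seconds since an arbitrary epoch


def select_vol(updates: Sequence[VolUpdate], now: float,
               max_age_s: float = 5.0, fallback_vol: float = 1.5) -> float:
    """Pick the most recent non-stale update, else a conservative fallback."""
    fresh = [u for u in updates if 0.0 <= now - u.timestamp <= max_age_s]
    if not fresh:
        # Every tier is stale: assume high volatility so margins err on the safe side.
        return fallback_vol
    return max(fresh, key=lambda u: u.timestamp).implied_vol


if __name__ == "__main__":
    feeds = [VolUpdate("primary", 0.62, 100.0), VolUpdate("backup", 0.70, 103.0)]
    print(select_vol(feeds, now=104.0))  # both fresh, newest wins
    print(select_vol(feeds, now=120.0))  # all stale, fallback applies
```

Falling back to a deliberately high volatility trades capital efficiency for solvency during outages, which matches the under-collateralization concern described above.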

Evolution

The progression of Off Chain Computation Layer has moved from simple off-chain order books to fully decentralized, verifiable computational fabrics. Initial designs utilized centralized sequencers to manage the order flow, creating a dependency on the integrity of the exchange operator. The industry recognized this vulnerability and transitioned toward architectures that distribute the computational burden across a network of nodes.

This decentralization of the computation layer reduces the systemic risk of a single point of failure and increases the resistance to censorship or manipulation.

The evolution of these layers reflects a transition from centralized operator models to trust-minimized, decentralized computational fabrics.

These systems now incorporate advanced game-theoretic incentives, where nodes are financially penalized for providing inaccurate pricing data or failing to update the state in a timely manner. This evolution has also seen the adoption of modular blockchain stacks, where the Off Chain Computation Layer can be optimized for specific derivative types, such as perpetuals, binary options, or complex multi-leg spreads. This modularity allows developers to iterate on pricing models independently of the base-layer consensus, leading to faster innovation and more competitive market dynamics.
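The penalty mechanism mentioned above can be illustrated with a toy settlement round: nodes report a price, the median serves as the reference, and any node whose report deviates beyond a tolerance loses part of its stake. The tolerance, the slash fraction, and the function name `settle_round` are hypothetical, and real protocols layer dispute periods and sybil resistance on top of anything this simple.

```python
from statistics import median
from typing import Dict, Tuple


def settle_round(reports: Dict[str, float], stakes: Dict[str, float],
                 tolerance: float = 0.02, slash_fraction: float = 0.10
                 ) -> Tuple[float, Dict[str, float]]:
    """Return the reference price and the stakes after slashing outliers."""
    reference = median(reports.values())
    new_stakes = dict(stakes)  # leave the caller's mapping untouched
    for node, price in reports.items():
        deviation = abs(price - reference) / reference
        if deviation > tolerance:
            new_stakes[node] = stakes[node] * (1.0 - slash_fraction)
    return reference, new_stakes


if __name__ == "__main__":
    reports = {"node_a": 100.0, "node_b": 101.0, "node_c": 130.0}
    stakes = {"node_a": 100.0, "node_b": 100.0, "node_c": 100.0}
    print(settle_round(reports, stakes))
```

Using the median rather than the mean keeps a single extreme report from dragging the reference price toward itself, so an outlier cannot reduce its own measured deviation.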

The infrastructure is now capable of handling the volatility of high-leverage environments with increasing reliability.

Horizon

The future of Off Chain Computation Layer lies in the integration of cross-chain liquidity and the standardization of cryptographic verification protocols. As these layers become more sophisticated, they are expected to support unified order books that span multiple blockchain networks, substantially reducing liquidity fragmentation. This expansion would allow derivatives markets to approach the depth and efficiency of traditional exchanges while maintaining the non-custodial, permissionless properties of the decentralized ecosystem.

| Future Trend | Anticipated Impact |
| --- | --- |
| Cross-Chain Settlement | Unified global liquidity |
| Hardware Acceleration | Microsecond latency pricing |
| Modular Proof Systems | Lower verification costs |

The next stage of development will involve the integration of artificial intelligence into the computation layer to dynamically optimize pricing and risk parameters based on historical data and real-time order flow. This will likely lead to the emergence of autonomous market-making agents that can adjust strategies faster than any human-led team. These systems will fundamentally change how liquidity is provided, reducing the cost of hedging and increasing the accessibility of sophisticated derivative strategies to a broader range of participants. The convergence of these technologies is positioning the computation layer as the critical backbone for the next cycle of institutional-grade decentralized finance.