Essence

Non Cooperative Game Theory serves as the mathematical framework for modeling strategic interactions in which participants act independently to maximize personal utility and cannot make binding agreements. In decentralized finance, this translates to an adversarial environment where protocols must assume that every actor, whether liquidity provider, arbitrageur, or governance participant, will prioritize self-interest above systemic stability.

Non Cooperative Game Theory defines the strategic equilibrium where individual utility maximization dictates market participant behavior.

The core utility lies in predicting outcomes when agents operate under asymmetric information and limited trust. By identifying Nash Equilibria, architects can design incentive structures that align individual profit motives with protocol health. This approach acknowledges that decentralized systems cannot rely on external enforcement, forcing developers to bake resilience directly into the mechanism design.
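To make the idea of identifying Nash Equilibria concrete, here is a minimal sketch that enumerates the pure-strategy equilibria of a two-player game. The payoff numbers, the action names `stay` and `withdraw`, and the helper `pure_nash_equilibria` are illustrative assumptions, not values taken from any real protocol.

```python
from itertools import product

def pure_nash_equilibria(payoffs):
    """Return all pure-strategy Nash equilibria of a two-player game.

    payoffs[(a, b)] = (u1, u2) gives both players' utilities when
    player 1 plays a and player 2 plays b.
    """
    actions_1 = sorted({a for a, _ in payoffs})
    actions_2 = sorted({b for _, b in payoffs})
    equilibria = []
    for a, b in product(actions_1, actions_2):
        u1, u2 = payoffs[(a, b)]
        # Player 1 cannot gain by deviating while player 2 keeps playing b.
        best_1 = all(payoffs[(alt, b)][0] <= u1 for alt in actions_1)
        # Player 2 cannot gain by deviating while player 1 keeps playing a.
        best_2 = all(payoffs[(a, alt)][1] <= u2 for alt in actions_2)
        if best_1 and best_2:
            equilibria.append((a, b))
    return equilibria

# Hypothetical payoffs: two liquidity providers each decide whether to
# stay in a pool or withdraw during market stress.
lp_game = {
    ("stay", "stay"): (3, 3),
    ("stay", "withdraw"): (0, 4),
    ("withdraw", "stay"): (4, 0),
    ("withdraw", "withdraw"): (1, 1),
}

print(pure_nash_equilibria(lp_game))
```

With these assumed payoffs the only equilibrium is mutual withdrawal, even though both providers would be better off staying. An incentive designer would try to change the payoffs, for example through rewards for staying, so that the equilibrium coincides with the outcome that keeps the pool healthy.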

Origin

The intellectual foundations trace back to John von Neumann and Oskar Morgenstern, whose Theory of Games and Economic Behavior appeared in 1944, and were extended by John Nash, who formalized the Nash Equilibrium for non-cooperative games in 1950 and 1951.

These early developments moved beyond the rigid assumptions of classical economic models, which often presupposed perfect competition or cooperative behavior among participants.

- Strategic Independence: The foundational shift toward modeling agents as autonomous decision makers.

- Equilibrium Analysis: The mathematical identification of states where no player gains by unilaterally changing their strategy.

- Adversarial Modeling: The conceptual leap that allowed for the analysis of conflict rather than just cooperation.

This history provides the bedrock for modern crypto finance, where the absence of a central clearinghouse necessitates that the protocol itself functions as the arbiter of interaction. The transition from game theory in social sciences to its application in programmable money represents a fundamental change in how financial systems are constructed and maintained.

Theory

The mechanics of Non Cooperative Game Theory in derivatives focus on the interplay between risk-neutral pricing and the strategic actions of liquidity providers. In an options market, the Greeks (delta, gamma, vega, theta) are not static variables but dynamic outputs of agent behavior.

When an arbitrageur observes a mispriced option, their decision to trade alters the underlying market microstructure, effectively forcing the system toward a new state of equilibrium.

| Component | Mechanism |
| --- | --- |
| Liquidity Provision | Incentive-driven capital allocation |
| Arbitrage Action | Correction of pricing discrepancies |
| Protocol Settlement | Enforcement of payoff functions |

A rigorous treatment models the liquidation threshold as the barrier of a barrier option. Once a borrower’s collateral falls below that value, the game shifts from a cooperative-like lending state to an aggressive, non-cooperative liquidation race.

Systemic resilience relies on the alignment of individual liquidation incentives with the protocol solvency requirements.
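The alignment between liquidator incentives and protocol solvency can be sketched as a simple profitability check. Everything here is an assumption for illustration: the `Position` fields, the health-factor formula, the liquidation bonus, and the flat `gas_cost` are hypothetical and do not describe any specific protocol.

```python
from dataclasses import dataclass

@dataclass
class Position:
    collateral_value: float       # current market value of the collateral
    debt_value: float             # current value of the borrowed assets
    liquidation_threshold: float  # e.g. 0.8: debt may reach 80% of collateral

def health_factor(p: Position) -> float:
    """Below 1.0 the position has crossed the liquidation barrier."""
    return p.collateral_value * p.liquidation_threshold / p.debt_value

def liquidator_profit(p: Position, repay_fraction: float,
                      bonus: float, gas_cost: float) -> float:
    """Profit of a liquidator who repays part of the debt and seizes
    collateral worth the repaid amount plus a bonus."""
    repaid = p.debt_value * repay_fraction
    seized = min(repaid * (1 + bonus), p.collateral_value)
    return seized - repaid - gas_cost

def will_liquidate(p: Position, repay_fraction: float,
                   bonus: float, gas_cost: float) -> bool:
    """A rational liquidator acts only if the position is eligible
    and the trade is profitable after costs."""
    eligible = health_factor(p) < 1.0
    return eligible and liquidator_profit(p, repay_fraction, bonus, gas_cost) > 0

# Hypothetical position that has just crossed the threshold.
position = Position(collateral_value=1200.0, debt_value=1000.0,
                    liquidation_threshold=0.8)

print(will_liquidate(position, repay_fraction=0.5, bonus=0.05, gas_cost=10.0))
print(will_liquidate(position, repay_fraction=0.5, bonus=0.05, gas_cost=40.0))
```

In this sketch the same unhealthy position is liquidated when transaction costs are low and ignored when they exceed the bonus. That second case is the misalignment a designer needs to rule out: the protocol needs the liquidation to happen, but no self-interested agent is paid enough to perform it.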

Market participants operate under constant threat from automated agents that exploit small latency differences and the timing of oracle updates. This is where the pricing model becomes elegant, and dangerous if ignored. The physics of the protocol, such as the latency between block confirmation and order matching, dictates the boundaries of what is possible for a rational agent.

Approach

Current strategies prioritize Capital Efficiency and Risk Mitigation by embedding game-theoretic constraints directly into smart contracts.

Market makers utilize advanced quantitative models to adjust spreads dynamically based on the observed behavior of other participants.

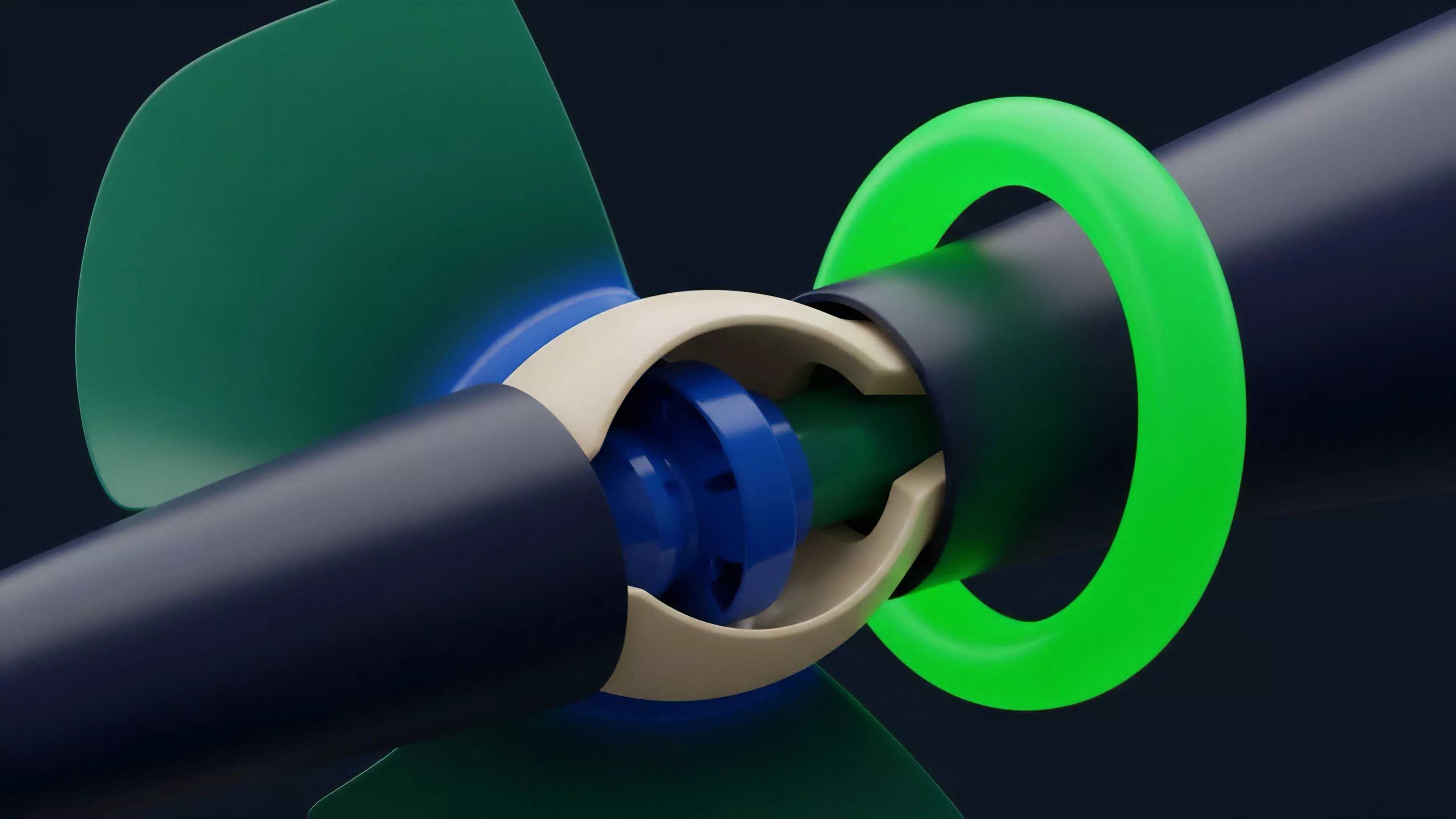

- Automated Market Makers: Protocols utilizing invariant functions to price trades against pooled liquidity without an order book.

- Governance Staking: Economic penalties designed to disincentivize malicious voting patterns.

- Margin Engines: Algorithmic assessment of risk that triggers liquidation upon breach.
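As an illustration of how an invariant function interacts with a self-interested arbitrageur, the sketch below assumes a constant-product invariant (x * y = k) with no fees. The invariant choice, the reserve sizes, and the external price are all assumptions; real Automated Market Makers differ in fees and curve shape.

```python
import math

def swap_x_for_y(x_reserve, y_reserve, dx):
    """Amount of Y paid out when dx of X is sold into an x * y = k pool (no fees)."""
    k = x_reserve * y_reserve
    return y_reserve - k / (x_reserve + dx)

def reserves_at_price(x_reserve, y_reserve, price):
    """Reserves at which the pool's marginal price (Y per X) equals `price`."""
    k = x_reserve * y_reserve
    return math.sqrt(k / price), math.sqrt(k * price)

# Hypothetical pool quoting 100 Y per X while an external venue quotes 81.
x0, y0, external_price = 100.0, 10_000.0, 81.0

x1, y1 = reserves_at_price(x0, y0, external_price)
dx = x1 - x0                            # X the arbitrageur sells into the pool
dy = swap_x_for_y(x0, y0, dx)           # Y the arbitrageur receives
profit_in_y = dy - dx * external_price  # net of buying dx of X externally

print(f"sell {dx:.2f} X, receive {dy:.2f} Y, profit {profit_in_y:.2f} Y")
print(f"pool price after trade: {y1 / x1:.2f}")
```

Under these assumptions the arbitrageur earns roughly 100 Y and, in doing so, moves the pool price to the external price. The protocol never asks anyone to correct its quote; the invariant makes the correction profitable, which is the game-theoretic constraint at work.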

This approach replaces reliance on human trust with explicit, verifiable rules. By simulating thousands of market scenarios, architects can test whether the incentive structure survives extreme volatility. The goal is to ensure that even if every participant acts to maximize their own gain, the system remains solvent and functional.
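A scenario simulation of this kind can be sketched in a few lines. The model below is deliberately crude and entirely assumed: a single-period Gaussian price shock, one loan with unit debt, and no liquidation step. The function name `insolvency_rate` and its parameters are hypothetical.

```python
import random

def insolvency_rate(collateral_ratio, volatility, n_scenarios=10_000, seed=0):
    """Fraction of simulated price shocks that leave a loan under-collateralized.

    Assumes one Gaussian return per scenario and no intervening liquidation,
    so it is an upper-bound style stress test rather than a market model.
    """
    rng = random.Random(seed)  # fixed seed keeps runs reproducible
    debt = 1.0
    bad_debt_scenarios = 0
    for _ in range(n_scenarios):
        shock = rng.gauss(0.0, volatility)
        collateral = collateral_ratio * debt * max(0.0, 1.0 + shock)
        if collateral < debt:
            bad_debt_scenarios += 1
    return bad_debt_scenarios / n_scenarios

# Compare hypothetical collateral requirements under a 20% volatility shock.
for ratio in (1.2, 1.5, 2.0):
    print(ratio, insolvency_rate(ratio, volatility=0.2))
```

Because every ratio is evaluated against the same seeded shocks, the comparison isolates the effect of the collateral requirement. A fuller test would add strategic agents, such as liquidators who act only when profitable, to check that the incentive structure and not just the buffer keeps the system solvent.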

Evolution

The transition from centralized exchanges to decentralized protocols necessitated a radical redesign of Incentive Structures.

Early iterations relied on simple, static models that frequently failed during periods of high market stress. Current designs incorporate multi-stage games where reputation, time-weighted voting, and dynamic collateralization ratios provide layers of defense against strategic exploitation.

Market evolution forces protocols to adapt incentive designs against increasingly sophisticated and automated adversarial agents.

We have observed a shift from basic order books to complex Automated Market Maker designs, where liquidity is concentrated to maximize efficiency. This change reflects a deeper understanding of how capital flows behave in a permissionless environment. The maturation of these systems demonstrates that decentralized finance is moving away from experimental code toward robust, game-theoretically sound financial infrastructure.

Horizon

Future developments will focus on Cross-Protocol Liquidity and the mitigation of systemic contagion through modular, game-theoretic risk assessment.

As systems become more interconnected, the strategies employed by agents will span multiple chains and protocols simultaneously, creating new, complex forms of arbitrage and risk.

| Focus Area | Strategic Impact |
| --- | --- |
| Interoperability | Increased complexity in multi-chain games |
| Privacy | Shift toward hidden information strategies |
| AI Agents | Acceleration of equilibrium discovery |

The integration of artificial intelligence into these markets will drastically reduce the time required to reach equilibrium, potentially leading to hyper-efficient but highly fragile systems. The challenge lies in building protocols that remain stable even when confronted with near-instantaneous, perfectly rational, and non-cooperative machine agents.