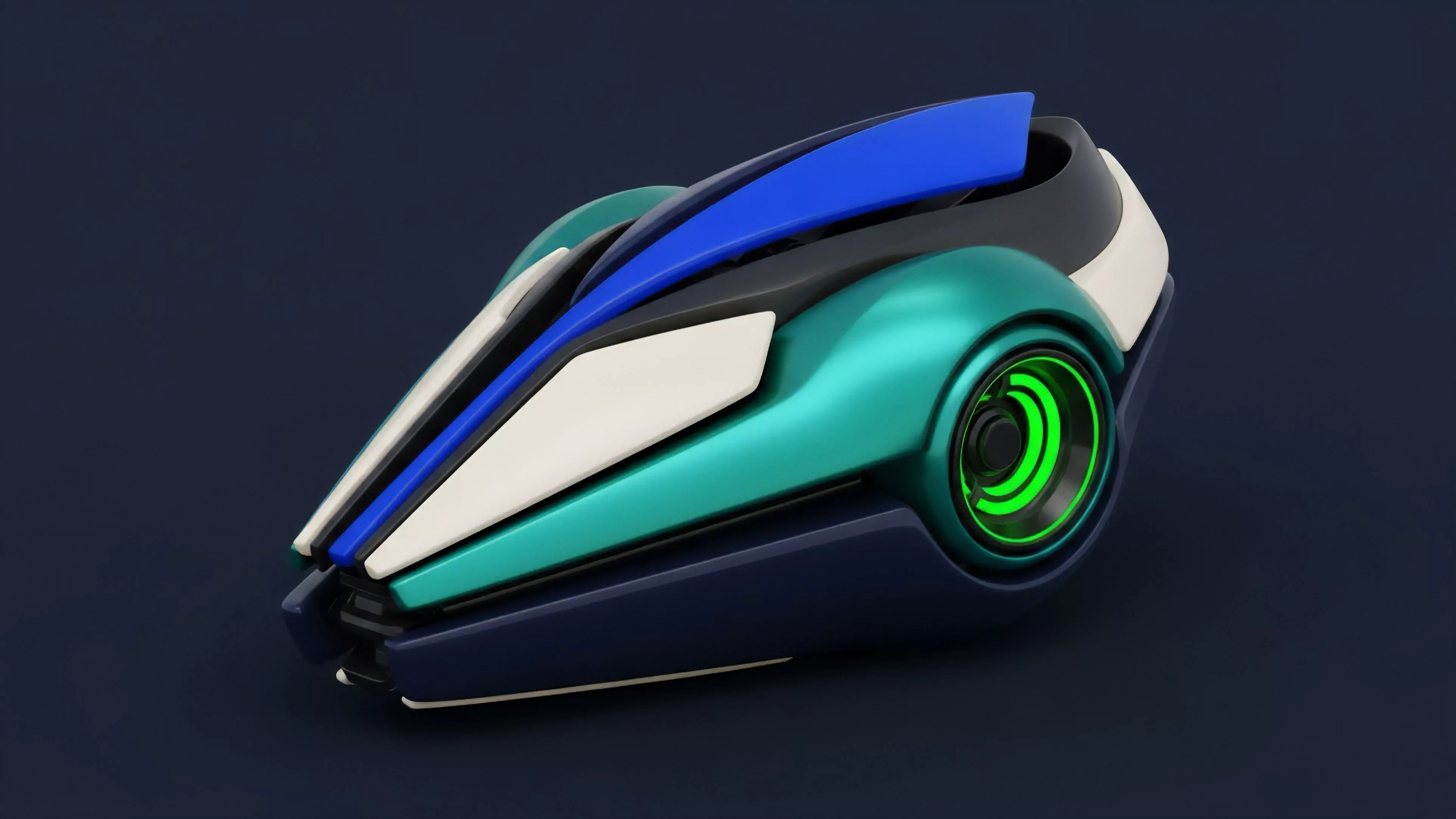

Essence

Neural Network Models within decentralized finance function as non-linear computational frameworks designed to approximate complex functions mapping market inputs to predictive outputs. These architectures leverage interconnected layers of artificial neurons to identify patterns in high-dimensional datasets that traditional linear econometric models fail to detect. By processing vast streams of order flow, volatility surfaces, and on-chain liquidity metrics, these models facilitate autonomous price discovery and risk assessment.

Neural Network Models serve as adaptive computational engines that transform high-dimensional market data into predictive signals for derivative pricing and risk management.

The systemic relevance of these models lies in their ability to handle the non-stationarity inherent in digital asset markets. Unlike static formulas that assume constant volatility or normally distributed returns, these models can be retrained or updated incrementally as new data arrives. This capacity allows hedge ratios and margin requirements to adjust dynamically when the market regime shifts suddenly, so the models effectively serve as the nervous system for automated market makers and algorithmic trading strategies.

Origin

The lineage of these computational tools traces back to foundational research in connectionism and statistical learning theory.

Initial implementations in financial markets utilized simple perceptrons to forecast asset returns, yet their adoption remained constrained by limited computational power and sparse historical data. The shift toward modern deep learning architectures occurred as decentralized venues began generating granular, immutable transaction logs, providing the necessary high-fidelity training data for sophisticated models.

- Backpropagation algorithms enable the iterative refinement of weight parameters across multiple hidden layers to minimize prediction error.

- Recurrent architectures allow models to maintain internal state representations, facilitating the analysis of time-series dependencies in option Greeks.

- Attention mechanisms prioritize specific features within massive order books, enhancing the accuracy of volatility forecasting.
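To make the first bullet concrete, here is a minimal sketch of backpropagation on a toy problem. It is not taken from any protocol: the function name `train_tiny_network`, the single tanh hidden layer, the learning rate, and the toy dataset are all assumptions chosen for illustration.

```python
import math
import random


def train_tiny_network(samples, hidden=3, lr=0.1, epochs=500, seed=0):
    """Fit a 1-input, 1-output network with one tanh hidden layer.

    Hypothetical illustration: returns the mean squared-error loss per epoch
    so the caller can see the error shrink as weights are refined.
    """
    rng = random.Random(seed)
    w1 = [rng.uniform(-0.5, 0.5) for _ in range(hidden)]  # input -> hidden
    b1 = [0.0] * hidden
    w2 = [rng.uniform(-0.5, 0.5) for _ in range(hidden)]  # hidden -> output
    b2 = 0.0
    history = []
    for _ in range(epochs):
        total = 0.0
        for x, y in samples:
            # Forward pass: compute hidden activations and the prediction.
            h = [math.tanh(w1[j] * x + b1[j]) for j in range(hidden)]
            pred = sum(w2[j] * h[j] for j in range(hidden)) + b2
            err = pred - y
            total += 0.5 * err * err
            # Backward pass: apply the chain rule from the loss to each weight.
            for j in range(hidden):
                grad_h = err * w2[j] * (1.0 - h[j] ** 2)  # uses w2 before update
                w2[j] -= lr * err * h[j]
                w1[j] -= lr * grad_h * x
                b1[j] -= lr * grad_h
            b2 -= lr * err
        history.append(total / len(samples))
    return history


# Toy target y = x^2 on [-1, 1]; real inputs would be market features.
toy_samples = [(x / 10, (x / 10) ** 2) for x in range(-10, 11)]
loss_history = train_tiny_network(toy_samples)
```

Comparing `loss_history[0]` with `loss_history[-1]` shows the prediction error falling as the weights are iteratively refined, which is the behaviour the bullet describes.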

This transition from static statistical estimation to adaptive learning mirrors the broader evolution of decentralized protocols. As liquidity moved on-chain, the requirement for automated, trustless pricing mechanisms became acute. Developers synthesized these machine learning foundations with smart contract logic, creating the current generation of autonomous derivative protocols capable of self-correcting their pricing models without centralized oversight.

Theory

The structural integrity of Neural Network Models rests on their ability to optimize objective functions within an adversarial environment.

In the context of crypto options, the model typically aims to minimize the discrepancy between the theoretical fair value and the realized market price. This involves a continuous cycle of forward propagation, where input features (such as implied volatility skew, time-to-expiry, and underlying asset velocity) are passed through non-linear activation functions.

| Component | Functional Role |
| --- | --- |
| Input Layer | Ingests raw order book and blockchain telemetry |
| Hidden Layers | Extract non-linear features and latent market patterns |
| Output Layer | Generates probability distributions for asset pricing |
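The three components in the table can be sketched as a single forward pass. This is only an illustration under stated assumptions: the weights in `EXAMPLE_PARAMS` are made up rather than trained, the ReLU activation and softmax output are one common choice among many, and the three output buckets (down, flat, up) are hypothetical.

```python
import math


def relu(values):
    """Non-linear activation applied in the hidden layer."""
    return [max(0.0, v) for v in values]


def softmax(values):
    """Turn raw scores into a probability distribution."""
    peak = max(values)  # subtract the max for numerical stability
    exps = [math.exp(v - peak) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def dense(inputs, weights, biases):
    """One fully connected layer: a weighted sum plus bias per neuron."""
    return [
        sum(w * x for w, x in zip(row, inputs)) + b
        for row, b in zip(weights, biases)
    ]


def forward(features, params):
    """Input layer -> hidden layer -> output distribution."""
    hidden = relu(dense(features, params["w1"], params["b1"]))
    logits = dense(hidden, params["w2"], params["b2"])
    return softmax(logits)


# Made-up parameters: 3 input features, 2 hidden neurons, 3 output buckets.
EXAMPLE_PARAMS = {
    "w1": [[0.4, -0.2, 0.1], [-0.3, 0.5, 0.2]],
    "b1": [0.0, 0.1],
    "w2": [[0.6, -0.1], [0.0, 0.3], [-0.4, 0.2]],
    "b2": [0.0, 0.0, 0.0],
}

# Hypothetical features: [implied vol skew, time-to-expiry in years, velocity].
probabilities = forward([0.1, 0.5, -0.2], EXAMPLE_PARAMS)
```

The result is a list of three non-negative numbers that sum to one, which is what the output layer row means by a probability distribution.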

These models demand strict attention to overfitting. When a model captures noise rather than signal, its predictive utility collapses under market stress. Practitioners mitigate this through regularization techniques, such as weight decay and dropout, and through cross-validation against historical liquidity crunches.
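As a rough sketch of both mitigations, the snippet below pairs an L2 (weight decay) penalty with walk-forward validation splits. Walk-forward splitting is an assumption here: the text only says cross-validation, but for time-ordered market data a scheme that never trains on the future is a common choice. The function names are hypothetical.

```python
def l2_penalized_loss(errors, weights, lam):
    """Mean squared error plus an L2 penalty that discourages large weights."""
    mse = sum(e * e for e in errors) / len(errors)
    return mse + lam * sum(w * w for w in weights)


def walk_forward_splits(n_samples, n_folds, min_train):
    """Yield (train, test) index ranges where training always precedes testing."""
    if n_folds < 1 or min_train < 1 or min_train >= n_samples:
        raise ValueError("need n_folds >= 1 and 1 <= min_train < n_samples")
    fold_size = (n_samples - min_train) // n_folds
    if fold_size < 1:
        raise ValueError("not enough samples for the requested folds")
    for k in range(n_folds):
        train_end = min_train + k * fold_size
        test_end = train_end + fold_size
        yield range(0, train_end), range(train_end, test_end)


# Ten time-ordered observations, three folds, at least four for training.
splits = list(walk_forward_splits(n_samples=10, n_folds=3, min_train=4))
penalized = l2_penalized_loss(errors=[1.0, -1.0], weights=[2.0], lam=0.5)
```

To validate against a specific historical liquidity crunch, one could arrange the folds so that a test window covers that period while the training window ends before it.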

The interplay between these models and the underlying protocol physics remains delicate; if a model miscalculates tail risk, the resulting liquidation cascades can propagate rapidly through interconnected lending and derivative venues.

The efficacy of a model is defined by its ability to generalize across diverse market regimes while maintaining computational efficiency within the constraints of on-chain execution.

One might consider the parallel between these digital architectures and biological nervous systems, where synaptic plasticity allows for survival in unpredictable environments. Just as a biological entity must filter sensory input to react to immediate threats, a protocol must parse massive data streams to maintain solvency. The model is not merely a tool for profit, but a mechanism for system survival.

Approach

Current implementation strategies focus on integrating these models directly into the margin engines of decentralized exchanges.

Instead of relying on off-chain oracles that may introduce latency, architects deploy lightweight neural structures that execute on-chain or via decentralized compute layers. This reduces reliance on centralized data providers and enhances the resilience of the protocol against external manipulation.

- Data Preprocessing involves cleaning raw trade execution data to remove anomalous spikes that could skew model training.

- Feature Engineering selects variables with high predictive power, such as funding rate divergence and open interest concentration.

- Model Deployment utilizes optimized smart contract interfaces to update pricing parameters in real time based on the model output.
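The first two steps above can be sketched as follows. Both functions are illustrative assumptions rather than a documented pipeline: `remove_spikes` uses a median absolute deviation filter as one possible way to drop anomalous prints, and `open_interest_concentration` uses a Herfindahl-style sum of squared shares as one possible definition of concentration.

```python
from statistics import median


def remove_spikes(prices, threshold=5.0):
    """Drop prices whose distance from the median exceeds threshold * MAD."""
    center = median(prices)
    mad = median(abs(p - center) for p in prices)
    if mad == 0:
        return list(prices)  # no dispersion, so nothing can be flagged
    return [p for p in prices if abs(p - center) / mad <= threshold]


def open_interest_concentration(open_interest):
    """Sum of squared shares: 1/n when evenly spread, 1.0 when in one bucket."""
    total = sum(open_interest)
    if total <= 0:
        raise ValueError("open interest must sum to a positive value")
    return sum((oi / total) ** 2 for oi in open_interest)


# Hypothetical trade prints with one anomalous spike at 250.0.
raw_prices = [100.0, 100.5, 99.8, 100.2, 250.0, 100.1, 99.9]
clean_prices = remove_spikes(raw_prices)

# Hypothetical open interest across three strikes.
concentration = open_interest_concentration([50.0, 30.0, 20.0])
```

A median-based filter is used here because a single extreme print distorts the mean and standard deviation far more than it distorts the median.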

Risk management remains the primary constraint. Quantitative analysts employ these models to calculate dynamic Greeks, focusing specifically on Delta and Gamma hedging in volatile conditions. By simulating thousands of market scenarios, the models estimate the collateral required for the protocol to remain over-collateralized even during extreme market moves.
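A minimal sketch of scenario-based collateral sizing, under loudly stated assumptions: price shocks are drawn from a Gaussian purely for brevity (a production model would likely use fat-tailed or learned distributions), profit and loss uses the second-order Delta-Gamma approximation, and the function name and parameters are hypothetical.

```python
import random


def collateral_requirement(delta, gamma, spot, vol,
                           n_scenarios=10_000, confidence=0.99, seed=42):
    """Estimate collateral as a high quantile of simulated position losses."""
    rng = random.Random(seed)
    losses = []
    for _ in range(n_scenarios):
        # Assumption: one-period Gaussian shock to the underlying price.
        ds = spot * rng.gauss(0.0, vol)
        # Delta-Gamma approximation of the position's profit and loss.
        pnl = delta * ds + 0.5 * gamma * ds * ds
        losses.append(-pnl)
    losses.sort()
    index = min(int(confidence * n_scenarios), n_scenarios - 1)
    return max(0.0, losses[index])


# Hypothetical short-gamma position on an asset trading at 2000.
calm = collateral_requirement(delta=-0.5, gamma=-0.002, spot=2000.0, vol=0.05)
stressed = collateral_requirement(delta=-0.5, gamma=-0.002, spot=2000.0, vol=0.10)
```

With the same seed, doubling the assumed volatility raises the requirement, which is the qualitative behaviour a margin engine needs when the market regime shifts.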

The transition toward these autonomous models represents a shift from reactive to proactive financial engineering.

Evolution

The trajectory of these models has moved from simple, centralized price predictors to distributed, protocol-native agents. Early attempts suffered from latency and high gas costs, preventing real-time integration with high-frequency trading venues. Today, advancements in zero-knowledge proofs and layer-two scalability solutions allow for more complex models to operate within the constraints of decentralized environments.

| Development Phase | Technical Focus |
| --- | --- |
| First Generation | Linear regression and basic statistical models |
| Second Generation | Deep learning with centralized off-chain processing |
| Third Generation | Protocol-native models with decentralized compute |

This progression highlights the increasing demand for trust-minimized financial infrastructure. The reliance on external data feeds created a systemic vulnerability, leading to the development of self-contained pricing models that derive their validity from on-chain liquidity alone. This shift reduces the attack surface for bad actors and increases the overall stability of the derivative market, as the pricing logic becomes as transparent and immutable as the ledger itself.

Horizon

Future developments will likely center on the synthesis of reinforcement learning with decentralized governance.

Models will not only price assets but also autonomously adjust protocol parameters such as interest rates and liquidation thresholds in response to changing systemic risk profiles. This transition toward autonomous protocol management poses significant challenges for regulatory compliance and auditability.

Autonomous models represent the next frontier in decentralized finance, shifting protocol management from human governance to algorithmic, real-time adaptation.

As these systems grow in complexity, the risk of emergent behaviors, where independent models interact in unforeseen ways, will become the primary focus for system architects. Designing protocols that can withstand the compounding effects of multiple autonomous agents requires a new framework for testing and validation. The path forward involves creating transparent, interpretable models that allow participants to understand the logic behind pricing and risk decisions, so that the decentralized financial system remains robust and equitable. What mechanisms will define the boundary between autonomous model efficiency and the requirement for human-supervised oversight in the event of systemic liquidity collapse?