Essence

Network Performance Metrics define the operational throughput, latency, and reliability parameters governing decentralized financial infrastructure. These indicators provide the raw data required to assess whether a blockchain or derivative protocol can sustain the high-frequency demands of institutional-grade trading without succumbing to congestion or settlement failures.

Network performance metrics quantify the technical capacity of a protocol to process financial transactions reliably under varying levels of market stress.

At the architectural level, these metrics function as the health monitors for the underlying settlement layer. Without precise tracking of block finality times, transaction propagation speeds, and validator uptime, derivative instruments lose their predictable relationship with underlying spot assets. Market participants rely on these data points to calibrate risk models and execute complex hedging strategies in environments where small timing advantages can determine solvency.

Origin

The genesis of these metrics traces back to the fundamental trade-offs identified in early distributed systems research.

Early practitioners sought to balance decentralization, security, and scalability, the core components of the blockchain trilemma. As trading venues migrated on-chain, the focus shifted from simple transaction speed to the specific requirements of financial order flow.

- Throughput emerged as the primary metric for measuring the capacity of a ledger to handle concurrent orders during high volatility.

- Latency became the critical variable for market makers needing to adjust quotes in response to external price movements.

- Finality became the definitive indicator of when a trade is irreversibly settled within the protocol state.
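As a rough illustration, the three metrics above can be derived from a window of block headers. The sketch below is not tied to any particular chain: the `Block` type, the sample values, and the fixed confirmation count used as a stand-in for finality are all assumptions made for the example. Protocols with an explicit finality mechanism would measure finality differently.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Block:
    timestamp: float  # seconds at which the block was produced
    tx_count: int     # number of transactions included in the block


def throughput_tps(blocks):
    """Transactions per second over the sampled window."""
    span = blocks[-1].timestamp - blocks[0].timestamp
    # Transactions in the first block were collected before the window opened.
    txs = sum(b.tx_count for b in blocks[1:])
    return txs / span


def mean_block_interval(blocks):
    """Average spacing between consecutive blocks, a simple latency proxy."""
    return mean(b.timestamp - a.timestamp for a, b in zip(blocks, blocks[1:]))


def time_to_finality(blocks, index, confirmations):
    """Seconds from block `index` until `confirmations` later blocks exist.

    Assumes finality is approximated by a fixed confirmation depth.
    """
    return blocks[index + confirmations].timestamp - blocks[index].timestamp


# Hypothetical sample window; the values are illustrative only.
sample = [Block(0.0, 100), Block(12.0, 150), Block(24.0, 90), Block(36.0, 120)]
print(throughput_tps(sample))
print(mean_block_interval(sample))
print(time_to_finality(sample, 0, 2))
```

In practice the block list would come from a node or indexer rather than a hard-coded sample.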

These metrics were adapted from traditional high-frequency trading infrastructure, where hardware-level performance dictates success. In decentralized finance, however, these variables are governed by consensus mechanisms rather than centralized hardware stacks. This transition necessitated a shift in perspective, viewing network performance as an emergent property of validator incentives and protocol design rather than a static hardware specification.

Theory

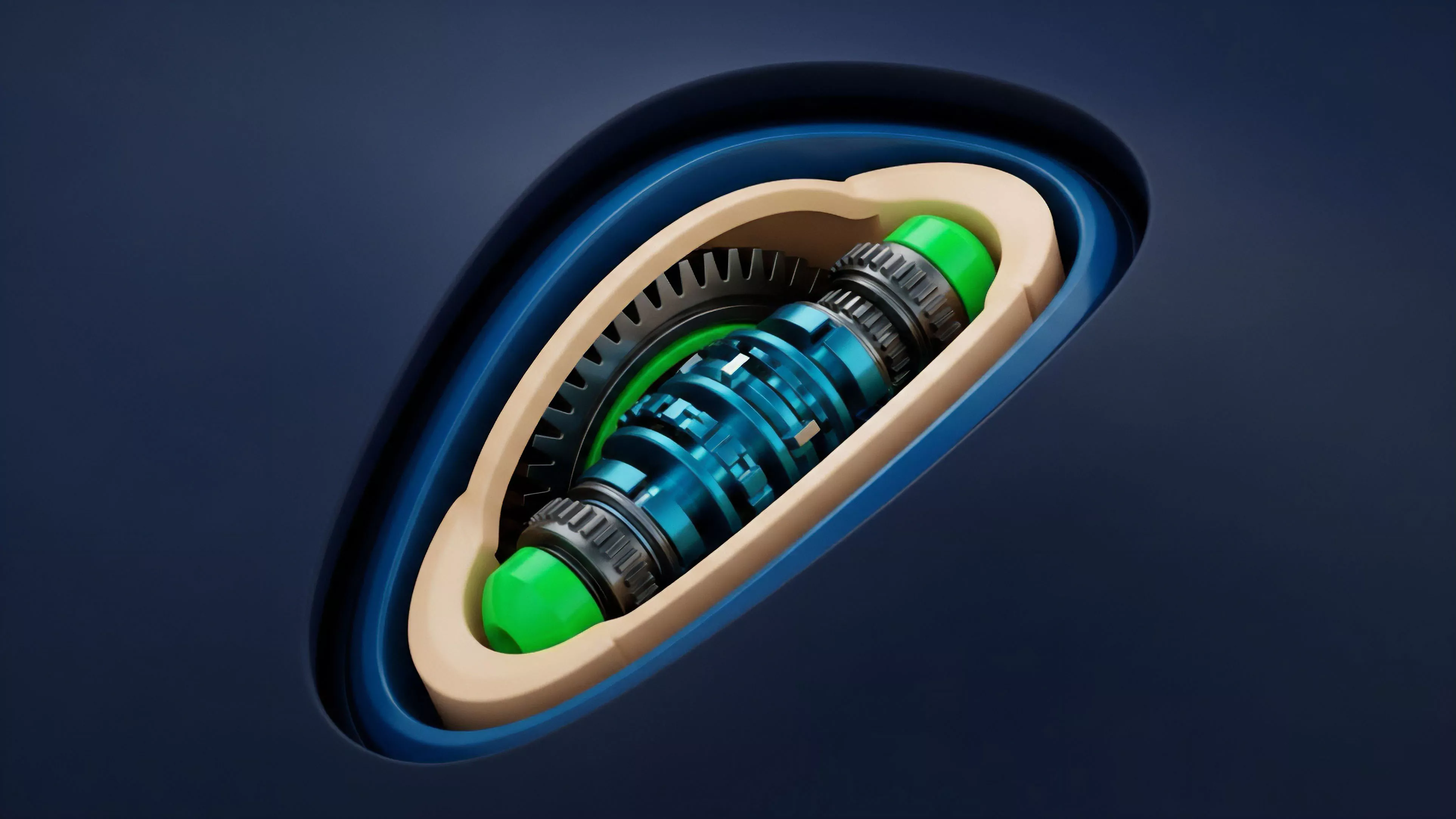

The quantitative analysis of Network Performance Metrics centers on the interaction between consensus physics and market microstructure.

When transaction demand spikes, the relationship between gas prices, block space utilization, and queue depth becomes non-linear. This creates feedback loops where delays in transaction inclusion lead to slippage, which in turn triggers further liquidations and heightened network activity.
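The non-linearity can be made concrete with a deliberately simple queueing model. The sketch below treats block space as a single-server M/M/1 queue. This is an assumption made for illustration, since real block production is batched and fee-prioritized. The function names and the capacity figure are hypothetical.

```python
def mean_time_in_system(arrival_rate, service_rate):
    """Mean time a transaction spends waiting plus being processed (M/M/1)."""
    if arrival_rate >= service_rate:
        raise ValueError("queue is unstable: demand meets or exceeds capacity")
    return 1.0 / (service_rate - arrival_rate)


def mean_pending_count(arrival_rate, service_rate):
    """Mean number of transactions in the system, via Little's law (L = lambda * W)."""
    return arrival_rate * mean_time_in_system(arrival_rate, service_rate)


capacity = 100.0  # hypothetical capacity in transactions per second
for utilization in (0.5, 0.9, 0.99):
    demand = utilization * capacity
    print(
        utilization,
        mean_time_in_system(demand, capacity),
        mean_pending_count(demand, capacity),
    )
```

Under this model, moving from 50% to 90% utilization multiplies the mean delay by five, and the delay grows without bound as utilization approaches 100%. That is the shape of the feedback loop described above, even though the exact numbers depend on the protocol.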

| Metric | Financial Impact |
| --- | --- |
| Block Latency | Execution risk for time-sensitive orders |
| Gas Volatility | Unpredictable transaction costs for margin calls |
| Finality Time | Duration of counterparty risk exposure |

The mathematical modeling of these metrics involves stochastic processes to predict how transaction queues behave under adversarial conditions. If the network experiences a surge in message volume, the resulting propagation delay acts as a hidden tax on liquidity providers. My own research into these dynamics suggests that most pricing models fail to account for the variance in finality times, which leads to mispriced options during periods of extreme market stress.

Understanding the statistical distribution of block times is the prerequisite for calculating true counterparty risk in decentralized derivative contracts.
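One way to see why the distribution matters more than the mean is to compute the tail probability of a slow settlement. The sketch below assumes block intervals are independent and exponentially distributed, which is a common approximation for proof-of-work style block production and does not hold for chains with fixed slot times. It also assumes finality is reached after a fixed number of confirmations. Under those assumptions the time to finality follows an Erlang distribution.

```python
import math


def prob_finality_exceeds(t, confirmations, mean_block_time):
    """P(time to reach `confirmations` blocks > t), exponential block intervals.

    Equivalent to the probability that fewer than `confirmations` blocks
    arrive within t seconds, i.e. a Poisson count below that threshold.
    """
    expected_blocks = t / mean_block_time
    return sum(
        math.exp(-expected_blocks) * expected_blocks ** n / math.factorial(n)
        for n in range(confirmations)
    )


# Hypothetical parameters: 12-second mean block time, 6 confirmations.
mean_finality = 6 * 12.0
for multiple in (1.0, 1.5, 2.0):
    print(multiple, prob_finality_exceeds(multiple * mean_finality, 6, 12.0))
```

A risk model that prices exposure using only `mean_finality` ignores the probability mass printed for the larger multiples, which is the window during which counterparty risk stays open longer than expected.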

One might consider how the physical constraints of light speed and node synchronization mimic the limitations faced by classical telecommunications engineers in the mid-twentieth century. Just as signal degradation plagued early long-distance telephony, network congestion forces a re-evaluation of how we structure trustless financial agreements. The system architecture dictates the financial outcome; ignore the protocol physics, and the market will eventually enforce the error.

Approach

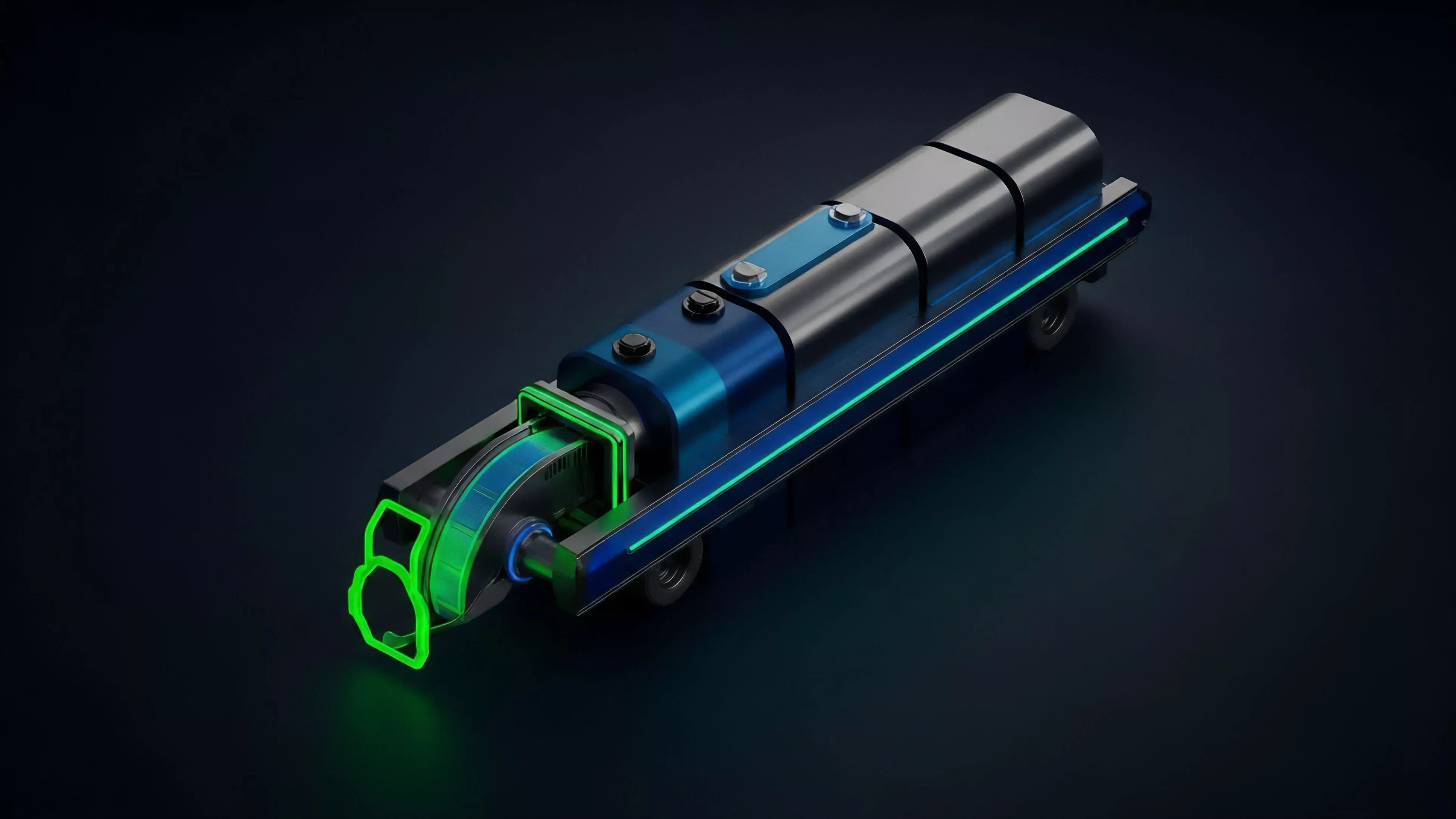

Current strategies for monitoring these metrics rely on multi-layered data ingestion from both public nodes and private indexing services.

Sophisticated participants employ custom infrastructure to observe the mempool, identifying order flow patterns before they are committed to the ledger. This proactive stance allows for the estimation of expected inclusion times, which is vital for maintaining delta-neutral positions.
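A minimal sketch of such an inclusion estimate follows. It assumes block producers fill blocks greedily by fee, that no new transactions arrive while waiting, and that gas can be packed into blocks without fragmentation. None of these holds exactly in practice. The function name and the `(fee_per_gas, gas)` tuple layout are hypothetical.

```python
import math


def estimate_blocks_to_inclusion(pending, my_fee, my_gas, block_gas_limit):
    """Estimate how many blocks pass before a transaction is included.

    pending: iterable of (fee_per_gas, gas) pairs observed in the mempool.
    Transactions paying strictly more than `my_fee` are assumed to go first.
    """
    gas_ahead = sum(gas for fee, gas in pending if fee > my_fee)
    return math.ceil((gas_ahead + my_gas) / block_gas_limit)


# Hypothetical mempool snapshot; the values are illustrative only.
snapshot = [(50, 400), (30, 400), (10, 400)]
print(estimate_blocks_to_inclusion(snapshot, my_fee=20, my_gas=100, block_gas_limit=500))
print(estimate_blocks_to_inclusion(snapshot, my_fee=60, my_gas=100, block_gas_limit=500))
```

Multiplying the result by an observed mean block interval gives a rough expected inclusion time. Because new, higher-paying transactions keep arriving, the estimate is better read as a lower bound.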

- Real-time observability involves running validator nodes to bypass the latency of public API providers.

- Mempool analysis allows traders to anticipate pending liquidations or large-scale order cancellations.

- Historical backtesting correlates past network congestion events with slippage observed in derivative order books.
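The backtesting step in the last item can be sketched as a plain correlation between a congestion measure and realized slippage. The series below are synthetic placeholders chosen only to exercise the function, not observed market data, and the variable names are hypothetical.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length series."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("series must have equal length of at least 2")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / math.sqrt(var_x * var_y)


# Synthetic placeholders: block utilization per interval and slippage in basis points.
utilization = [0.40, 0.55, 0.70, 0.90, 0.98]
slippage_bps = [1.0, 1.5, 2.5, 6.0, 15.0]
print(pearson(utilization, slippage_bps))
```

A linear coefficient understates a relationship that steepens near full utilization, so a rank correlation or a fit on log-slippage may be more informative on real data.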

This technical rigor is a primary defense against systemic failure. When a protocol experiences performance degradation, the most informed participants are the first to adjust their margin requirements. The ability to translate these technical metrics into actionable financial signals represents the current frontier of competitive advantage in decentralized markets.

Evolution

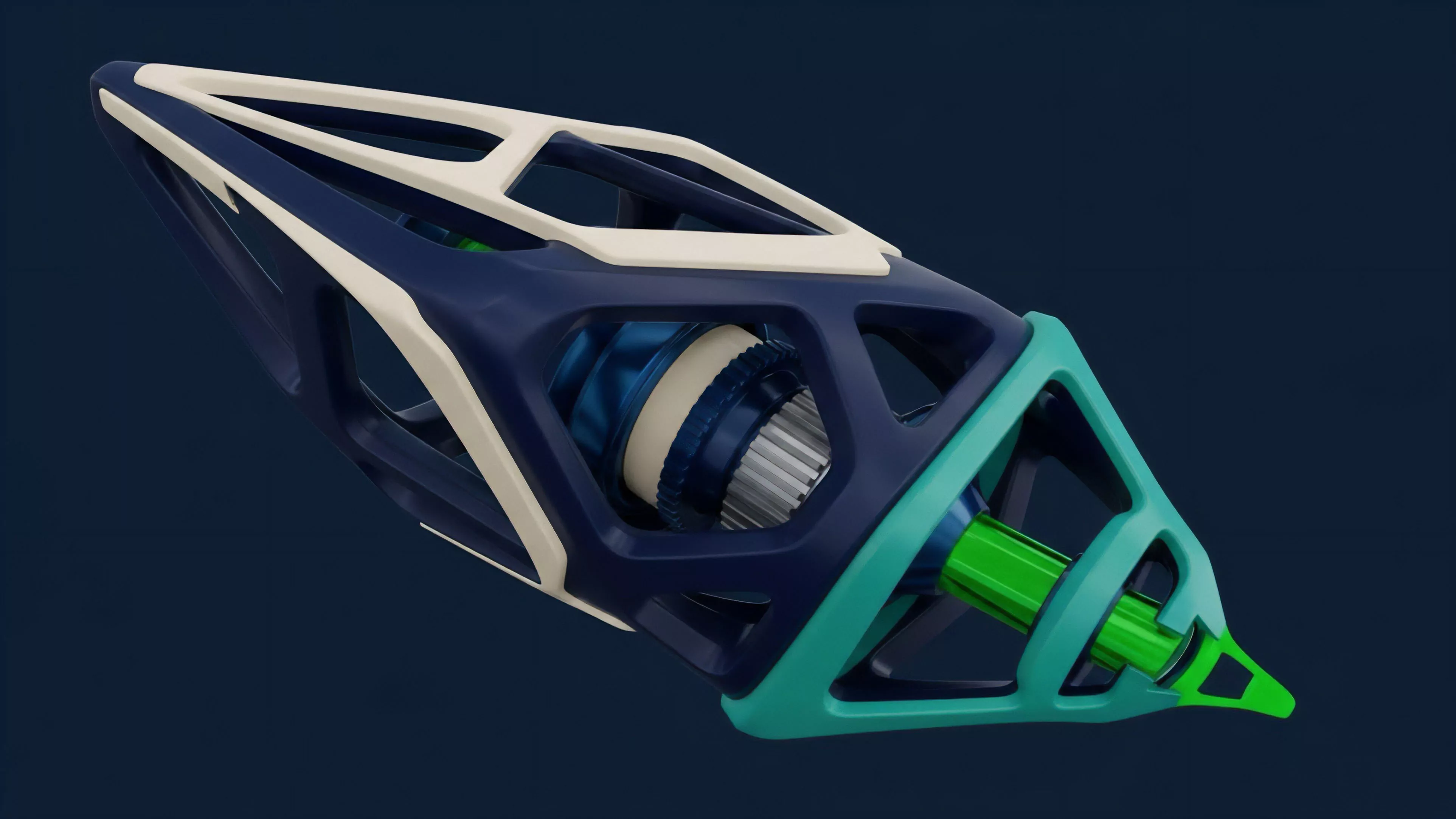

The transition from simple monolithic chains to modular architectures has fundamentally altered the interpretation of performance data.

In earlier cycles, metrics focused on single-chain throughput, ignoring the impact of cross-chain bridges and asynchronous settlement. Today, the focus has shifted toward the performance of execution layers and the cost of state proofs.

| Era | Primary Focus | Constraint |
| --- | --- | --- |
| Early | Transactions per second | Monolithic block space |
| Intermediate | Gas price stability | Network congestion |
| Current | Modular finality | Cross-chain latency |

This progression highlights a move toward specialized infrastructure designed specifically for financial applications. Developers now optimize consensus protocols to minimize the duration of state uncertainty, directly benefiting derivative platforms that require rapid settlement. The industry has learned that raw speed is useless without deterministic finality, a lesson paid for by the loss of liquidity during past market disruptions.

Horizon

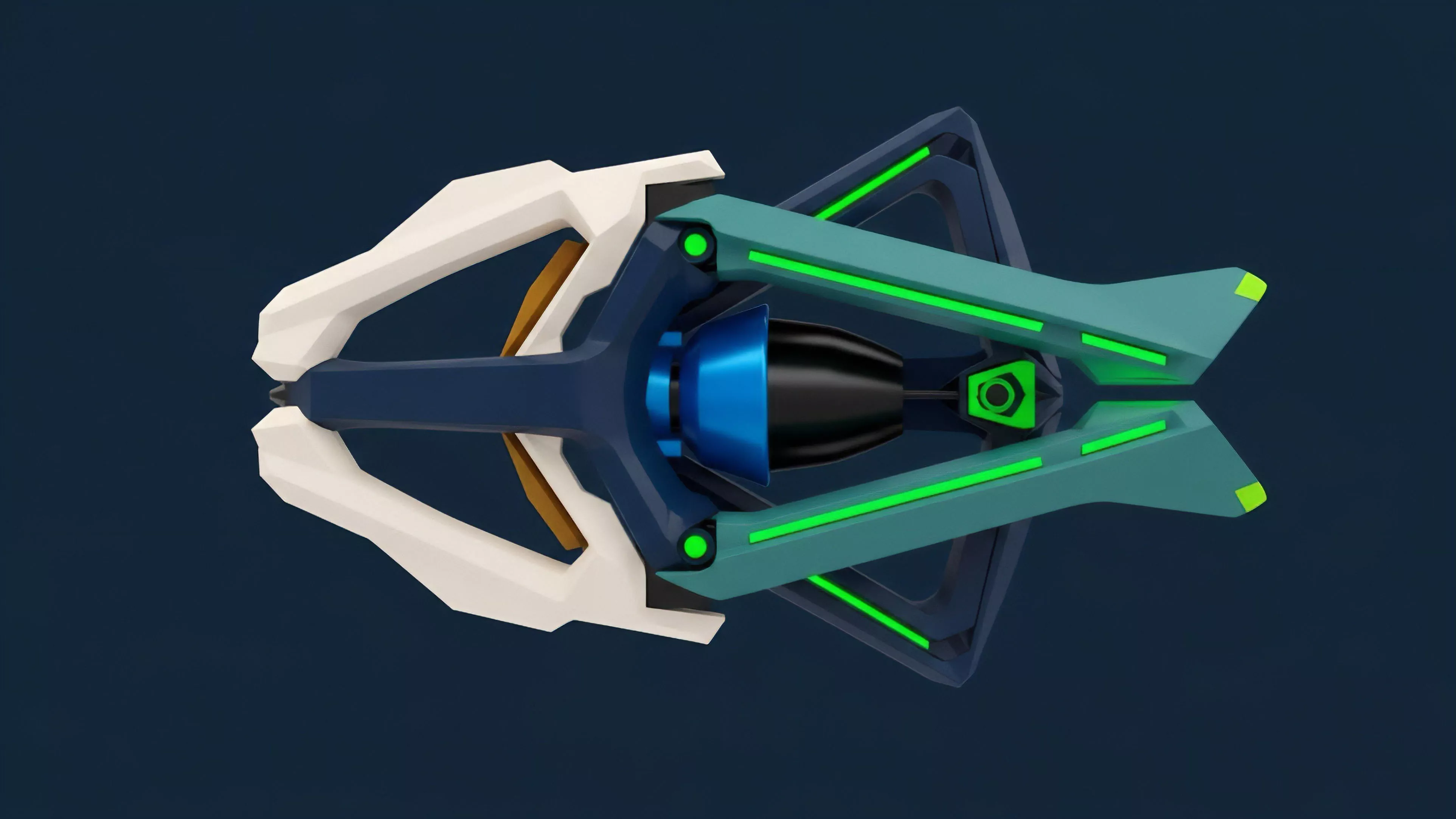

Future developments will likely center on the integration of hardware-accelerated consensus and zero-knowledge proofs to decouple performance from validator decentralization.

These advancements aim to provide sub-second finality while maintaining the integrity of the ledger, effectively removing the performance-related risks that currently plague derivative protocols.

The future of decentralized derivatives depends on protocols that provide deterministic settlement regardless of network congestion levels.

We are moving toward an environment where the infrastructure layer becomes invisible, and performance metrics are no longer a concern for the average participant. However, for those building the next generation of financial systems, the focus will remain on the underlying physics of the network. The challenge will be to design systems that remain robust even when the underlying network experiences unprecedented levels of activity, ensuring that the promise of open finance is not undermined by technical bottlenecks.