Essence

Network Economic Modeling functions as the architectural blueprint for understanding how decentralized protocols distribute value, incentivize participation, and maintain equilibrium under adversarial conditions. It transcends simple token velocity metrics by mapping the causal links between consensus mechanics, liquidity provision, and participant behavior within a programmable financial environment. This analytical framework treats every protocol as a closed system where code dictates the rules of engagement and economic incentives steer user activity toward or away from systemic stability.

Network Economic Modeling serves as the foundational framework for analyzing how protocol architecture influences participant behavior and system equilibrium.

At its core, this discipline requires evaluating the feedback loops inherent in decentralized systems. When a protocol adjusts its fee structure or collateral requirements, it triggers a cascade of responses from market participants, liquidity providers, and arbitrageurs. Network Economic Modeling provides the tools to quantify these responses, identifying where the system remains resilient and where it risks collapse due to misaligned incentives or unforeseen dependencies.

Origin

The genesis of this field lies in the convergence of distributed systems engineering and classical game theory.

Early blockchain protocols introduced programmable scarcity, but the maturation of decentralized finance necessitated a shift toward rigorous analysis of how these systems handle capital flows. The transition from simple value transfer to complex financial primitives, such as automated market makers and collateralized debt positions, forced architects to adopt methods from traditional market microstructure to ensure the longevity of their designs.

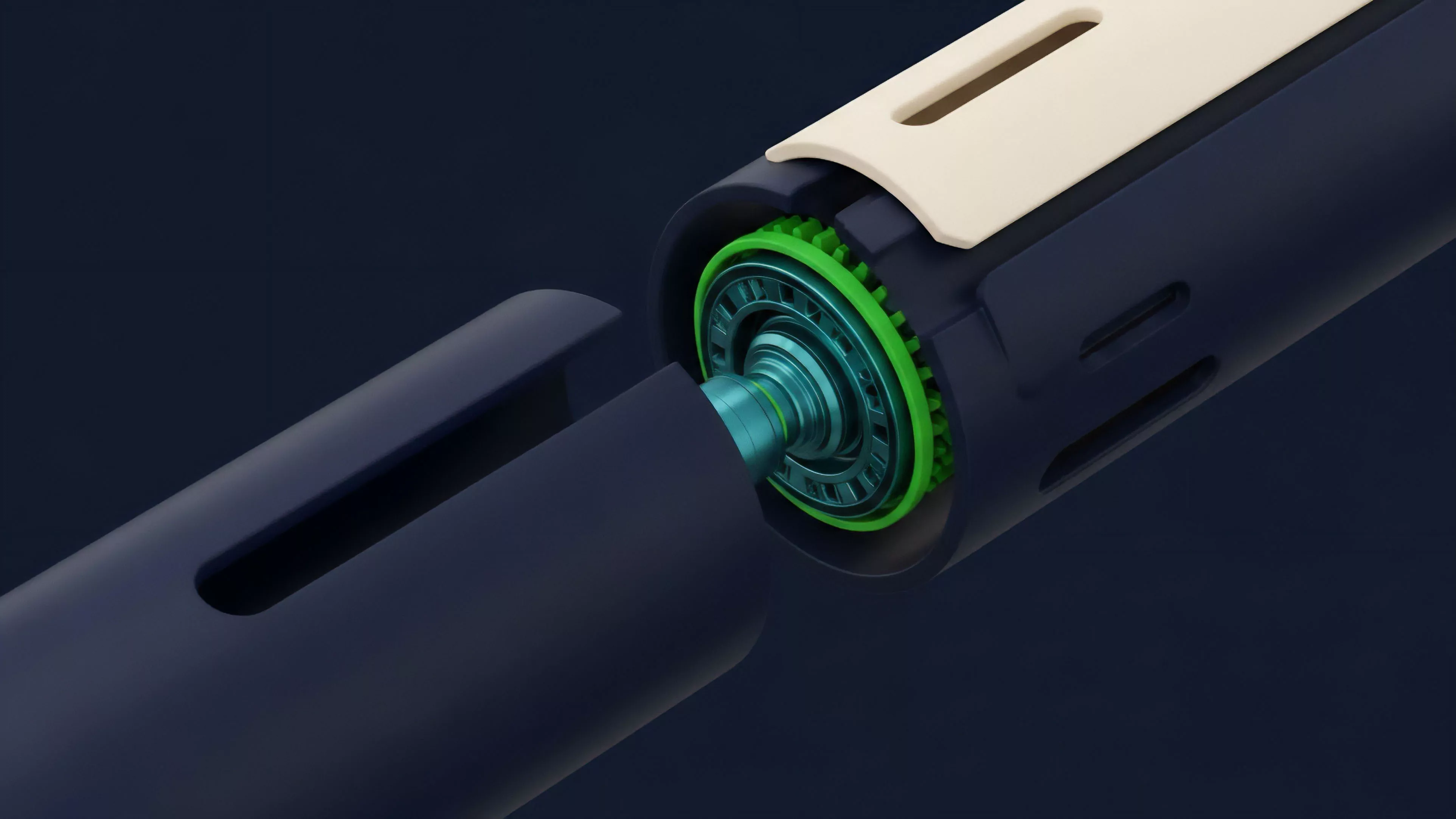

- Protocol Physics defines the underlying rules of asset movement and validation.

- Behavioral Game Theory explains how participants respond to programmed incentive structures.

- Market Microstructure models the mechanics of price discovery and liquidity depth within decentralized venues.

This evolution was driven by the realization that code security is insufficient if the economic logic governing the protocol is flawed. Early failures in liquidity mining and algorithmic stablecoins exposed the fragility of systems that ignored the long-term impact of emission schedules and reflexive leverage. Consequently, researchers began applying quantitative finance principles to predict how network-level variables interact with broader crypto market volatility.

Theory

The structure of Network Economic Modeling relies on three distinct pillars that quantify systemic health.

These pillars provide a standardized approach to evaluating the viability of any decentralized protocol, ensuring that incentive design aligns with long-term network growth rather than short-term extraction.

| Model Component | Primary Function | Risk Factor |
|---|---|---|
| Tokenomics Architecture | Defining supply emission and utility | Inflationary pressure and dilution |
| Liquidity Mechanics | Facilitating efficient asset exchange | Fragmentation and slippage |
| Governance Design | Adjusting parameters to market shifts | Centralization and voter apathy |

The mathematical rigor required here involves modeling state changes as probabilistic events. When analyzing a protocol, one must account for the system's analogue of the option Greeks, specifically how sensitive the solvency of collateralized positions is to changes in underlying asset prices. Smart Contract Security serves as the hard constraint, while the economic model acts as the software-defined engine that either absorbs or amplifies external shocks.
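As a rough illustration of this price sensitivity, the sketch below computes a collateral ratio, its first-order sensitivity to price (a delta-like quantity), and the price at which a position becomes liquidatable. The `Position` type, its field names, and the example numbers are hypothetical and are not taken from any specific protocol.

```python
from dataclasses import dataclass


@dataclass
class Position:
    """Hypothetical collateralized debt position (illustrative only)."""
    collateral_units: float   # amount of the collateral asset held
    debt: float               # debt, denominated in a stable unit of account
    liquidation_ratio: float  # minimum collateral value / debt before liquidation


def collateral_ratio(pos: Position, price: float) -> float:
    """Collateral value divided by debt at the given asset price."""
    return pos.collateral_units * price / pos.debt


def ratio_delta(pos: Position) -> float:
    """Change in collateral ratio per unit change in price."""
    return pos.collateral_units / pos.debt


def liquidation_price(pos: Position) -> float:
    """Price at which the collateral ratio equals the liquidation ratio."""
    return pos.liquidation_ratio * pos.debt / pos.collateral_units


pos = Position(collateral_units=10.0, debt=10_000.0, liquidation_ratio=1.5)
price = 2_000.0

print(collateral_ratio(pos, price))          # 2.0
print(liquidation_price(pos))                # 1500.0
print(1.0 - liquidation_price(pos) / price)  # 0.25, the price drop the position can absorb
```

Under these assumed numbers the position survives a price decline of up to 25 percent. A fuller model would treat the price path as a random process and estimate the probability of crossing the liquidation price.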

Systemic stability in decentralized protocols depends on the precise calibration of incentive feedback loops and collateral sensitivity.

These digital systems parallel the biological regulation of complex ecosystems, where small shifts in environmental inputs trigger significant structural adaptations. A decentralized exchange behaves similarly: automated agents and human traders constantly rebalance the system in response to price signals and protocol parameters.

Approach

Current methodologies focus on stress-testing protocol architecture against extreme market scenarios. Instead of relying on historical backtesting, which often fails to capture the unique risks of decentralized environments, analysts now employ agent-based simulations.

These simulations replicate thousands of market participants with varying risk profiles to observe how the protocol handles high volatility, network congestion, or liquidity crunches.

- Data Collection involves aggregating on-chain transaction logs and governance voting patterns to map participant behavior.

- Simulation Modeling utilizes agent-based frameworks to test how specific changes in parameters affect system-wide outcomes.

- Sensitivity Analysis identifies the critical thresholds where collateralization ratios or liquidity depth become insufficient to prevent cascading liquidations.
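The simulation and sensitivity steps above can be sketched as a toy agent-based model. This is a minimal sketch under stated assumptions, not a production framework: the function `simulate_cascade`, the uniform distribution of safety buffers, and the linear price-impact rule are all illustrative choices.

```python
import random


def simulate_cascade(liq_ratio: float, shock: float, price_impact: float,
                     n_agents: int = 1000, seed: int = 0) -> float:
    """Return the fraction of agents liquidated after an initial price shock.

    Assumptions (illustrative only):
    - each agent starts at liq_ratio plus a random safety buffer in [0, 1];
    - forced sales in each round lower the price in proportion to the
      share of agents liquidated in that round.
    """
    rng = random.Random(seed)
    ratios = [liq_ratio + rng.uniform(0.0, 1.0) for _ in range(n_agents)]
    price = 1.0 - shock          # price relative to its pre-shock level
    alive = [True] * n_agents
    total_liquidated = 0

    while True:
        # Agents whose post-shock collateral ratio fell below the threshold
        newly = [i for i in range(n_agents)
                 if alive[i] and ratios[i] * price < liq_ratio]
        if not newly:
            break
        for i in newly:
            alive[i] = False
        total_liquidated += len(newly)
        # Feedback loop: liquidation sales push the price down further
        price *= 1.0 - price_impact * len(newly) / n_agents

    return total_liquidated / n_agents


# Sensitivity sweep: how the liquidated share responds to shock size
for shock in (0.1, 0.2, 0.3, 0.4):
    share = simulate_cascade(liq_ratio=1.5, shock=shock, price_impact=0.5)
    print(f"shock={shock:.1f} liquidated_share={share:.3f}")
```

Sweeping `shock` or `price_impact` and watching where the liquidated share jumps gives a crude view of the critical thresholds described above. A realistic study would draw agent behavior from on-chain data rather than from a uniform distribution.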

This quantitative rigor is balanced by an understanding of Macro-Crypto Correlation, recognizing that no protocol exists in a vacuum. The liquidity cycles of global finance exert direct pressure on the internal mechanisms of decentralized platforms. Professionals in this space now prioritize the analysis of cross-protocol contagion, where a failure in one venue ripples through interconnected lending markets and derivative exchanges.

Evolution

The field has moved from simplistic static models to dynamic, reflexive frameworks that account for the non-linear nature of decentralized markets.

Initially, developers focused on maximizing total value locked (TVL) as the primary indicator of success. This metric proved insufficient, leading to the current emphasis on sustainable revenue generation and protocol-owned liquidity. The shift reflects a growing maturity in the sector, where capital efficiency and risk management have replaced growth at any cost as the primary objectives.

Sustainable protocol design requires shifting from vanity metrics like total value locked toward metrics prioritizing capital efficiency and organic revenue.

Regulatory pressure has also forced a change in how these models are constructed. Protocols now integrate jurisdictional awareness into their architecture, using permissioned pools or modular designs to comply with local requirements without sacrificing the core promise of decentralization. This adjustment demonstrates the ongoing tension between the pursuit of open financial systems and the reality of global legal constraints.

Horizon

Future developments will center on automated governance and real-time risk adjustment. As protocols gain the capacity to programmatically update their own parameters based on market data, the role of the Network Economic Modeling analyst will shift from manual parameter setting to designing the meta-rules that govern these autonomous adjustments. This transition will require a deeper integration of machine learning to predict volatility regimes and adjust risk parameters before a crisis manifests. The ultimate objective is the creation of self-healing financial systems that maintain stability through algorithmic response rather than manual intervention. As the industry advances, the intersection of cryptography and economics will produce instruments capable of managing risk at a scale and speed that traditional finance cannot match. This progression will likely redefine the role of central liquidity providers, moving toward a world where decentralized markets operate with unprecedented transparency and efficiency.
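One way to picture such a meta-rule is a bounded, rate-limited controller that nudges a collateral requirement toward a volatility-dependent target. The sketch below uses a simple hand-written rule rather than machine learning, and every name and default value in it (`next_collateral_ratio`, `base`, `sensitivity`, `floor`, `cap`, `max_step`) is a hypothetical choice made for illustration.

```python
import math


def realized_volatility(prices: list[float]) -> float:
    """Sample standard deviation of log returns (needs at least 3 prices)."""
    returns = [math.log(b / a) for a, b in zip(prices, prices[1:])]
    mean = sum(returns) / len(returns)
    variance = sum((r - mean) ** 2 for r in returns) / (len(returns) - 1)
    return math.sqrt(variance)


def next_collateral_ratio(current: float, vol: float, *,
                          base: float = 1.5, sensitivity: float = 10.0,
                          floor: float = 1.2, cap: float = 3.0,
                          max_step: float = 0.05) -> float:
    """Move the required collateral ratio one step toward its target.

    The meta-rules are the bounds (floor, cap) and the rate limit
    (max_step): they constrain what the automated adjustment may do,
    whatever the market data says.
    """
    target = base + sensitivity * vol
    target = min(max(target, floor), cap)                    # hard bounds
    step = min(max(target - current, -max_step), max_step)   # rate limit
    return current + step


prices = [100.0, 102.0, 99.0, 104.0, 97.0]  # made-up price history
vol = realized_volatility(prices)
print(next_collateral_ratio(1.5, vol))
```

The rate limit is the key design choice in this sketch: it prevents a single noisy volatility reading from abruptly changing requirements, at the cost of reacting more slowly to a genuine regime shift.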