Essence

Network Data Metrics constitute the quantitative pulse of decentralized financial architectures. These indicators translate raw ledger activity (transaction volume, address clustering, gas consumption, and validator participation) into actionable financial intelligence. They bypass intermediary reporting to provide a direct, immutable view of protocol health and participant behavior.

Network Data Metrics represent the real-time conversion of blockchain transaction logs into verifiable indicators of protocol utility and economic activity.

The primary utility of these metrics lies in their capacity to reveal the structural integrity of a network. By monitoring on-chain liquidity depth and validator decentralization scores, architects gain a high-fidelity view of the system’s resilience against adversarial conditions. This data acts as the foundational layer for pricing risk in derivative markets, where the underlying asset’s volatility is inextricably linked to its network-level throughput and security parameters.

Origin

The genesis of Network Data Metrics tracks the transition from speculative asset tracking to fundamental protocol analysis. Early observers relied upon rudimentary block explorers to visualize simple transfer activity. As decentralized finance expanded, the requirement for sophisticated tooling to assess Total Value Locked and protocol revenue generation necessitated a shift toward structured, indexable datasets.

- Transaction throughput provided the initial baseline for assessing network congestion and user demand.

- Address activity patterns emerged as a proxy for identifying user retention and whale distribution.

- Validator consensus stability became a critical metric following the migration of major networks to proof-of-stake mechanisms.

This evolution mirrors the maturation of traditional market microstructure analysis. Just as exchange order flow data informs price discovery in equities, on-chain metrics now dictate the risk parameters for decentralized option vaults and perpetual futures. The shift from anecdotal observation to rigorous, automated data extraction marks the current state of professionalized crypto finance.

Theory

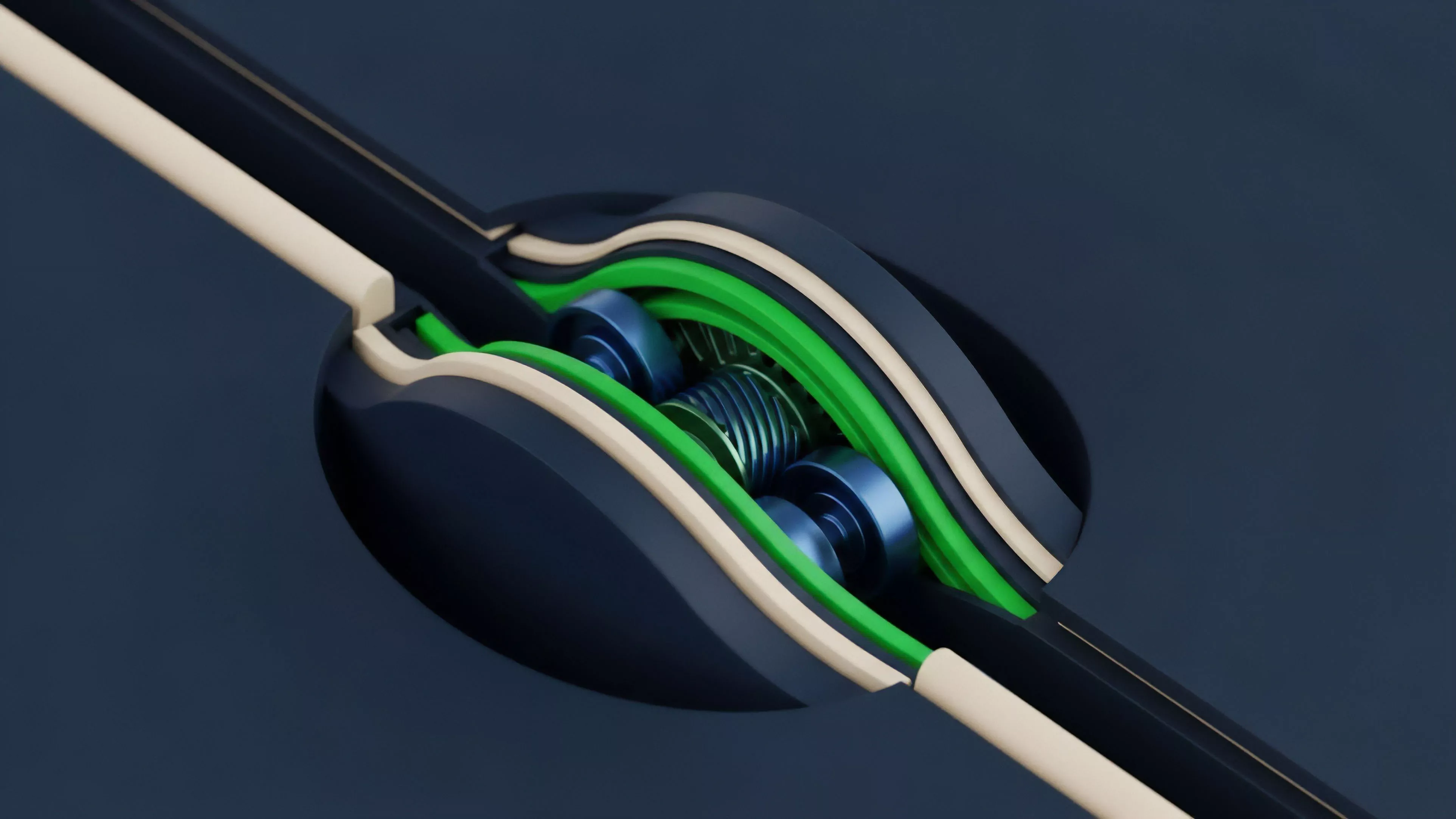

The theoretical framework for Network Data Metrics rests upon the principle of total transparency. Unlike legacy financial systems, where order books and clearing data remain siloed, decentralized protocols publish their entire state history. Analysts apply quantitative models to this state to extract the velocity of circulation, the impact of miner extractable value (MEV), and token concentration ratios.
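As an illustration of one such model, velocity of circulation is commonly approximated as on-chain transfer volume over a period divided by the average circulating supply over the same period. The sketch below assumes that definition; the function name `velocity` and its inputs are hypothetical, not taken from any particular analytics library.

```python
def velocity(transfer_volume, supply_samples):
    """Approximate velocity of circulation for one period.

    transfer_volume: total tokens transferred on-chain during the period.
    supply_samples:  circulating-supply readings taken during the period
                     (assumed to be in the same token units).
    """
    if not supply_samples:
        raise ValueError("at least one supply sample is required")
    average_supply = sum(supply_samples) / len(supply_samples)
    if average_supply <= 0:
        raise ValueError("average supply must be positive")
    # How many times the average token changed hands during the period.
    return transfer_volume / average_supply


if __name__ == "__main__":
    # Illustrative numbers only, not real network data.
    print(velocity(500.0, [100.0, 100.0]))
```

Raw transfer volume includes self-transfers and exchange-internal movements, so a figure computed this way is best read as an upper bound on economically meaningful turnover.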

Market Microstructure and Protocol Physics

Understanding these metrics requires an appreciation of the interaction between consensus rules and market participants. Protocol Physics dictates the latency and cost of transaction settlement, which directly influences arbitrage efficiency within derivative venues. High network latency often results in wider bid-ask spreads for options, as market makers must account for the risk of stale pricing during periods of high chain congestion.

Systemic risk propagates through networks when low liquidity metrics align with high leverage ratios, creating feedback loops that accelerate liquidations.

| Metric Category | Analytical Focus | Financial Implication |
| --- | --- | --- |
| Throughput | TPS and Latency | Execution slippage and margin risk |
| Concentration | Gini coefficients | Governance risk and sell-side pressure |
| Participation | Active validator count | Consensus security and network stability |
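To make the concentration measure concrete, the following is a minimal sketch of a Gini coefficient computed over a list of address balances. It uses the standard sorted-rank formula; the function name `gini` and the sample balances are illustrative assumptions, and a real analysis would first need to decide which addresses (exchanges, contracts, burn addresses) to exclude.

```python
def gini(balances):
    """Gini coefficient of non-negative balances: 0 = equal, near 1 = concentrated."""
    values = sorted(b for b in balances if b >= 0)
    n = len(values)
    total = sum(values)
    if n == 0 or total == 0:
        return 0.0
    # Sorted-rank form: G = 2 * sum(i * x_i) / (n * sum(x)) - (n + 1) / n,
    # where i runs from 1 to n over balances in ascending order.
    weighted = sum(rank * x for rank, x in enumerate(values, start=1))
    return 2 * weighted / (n * total) - (n + 1) / n


if __name__ == "__main__":
    # Illustrative balances only.
    print(gini([1, 1, 1, 1]))    # evenly distributed holdings
    print(gini([0, 0, 0, 10]))   # one address holds everything
```

With a finite sample the maximum value is (n - 1) / n rather than 1, which matters when comparing tokens with very different holder counts.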

Market participants often overlook the subtle interplay between gas market volatility and option pricing. When base fees spike, the cost of rebalancing automated market makers increases, causing the realized volatility of the underlying asset to decouple from implied volatility models. This discrepancy represents a tangible edge for those capable of monitoring network-level data in real time.

Approach

Modern practitioners employ a tiered approach to synthesize Network Data Metrics into coherent strategies. This process begins with data ingestion from full nodes, followed by normalization and indexing, and concludes with the application of quantitative finance models to assess risk sensitivities.

- Data extraction involves querying indexed ledger states to capture precise transaction metadata.

- Pattern identification relies on clustering algorithms to distinguish between exchange hot wallets, smart contract interactions, and retail users.

- Risk modeling incorporates these findings into Greeks calculations, adjusting for the specific liquidity characteristics of the protocol.
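The pattern-identification step can be sketched with one well-known clustering heuristic, common-input ownership, which assumes that addresses spent together as inputs of the same transaction share an owner. This heuristic applies to UTXO-style chains and is only one of several possible approaches; the text above does not specify an algorithm, and the names `DisjointSet` and `cluster_addresses` are hypothetical.

```python
class DisjointSet:
    """Minimal union-find structure keyed by address string."""

    def __init__(self):
        self.parent = {}

    def find(self, x):
        self.parent.setdefault(x, x)
        while self.parent[x] != x:
            # Path halving keeps lookups fast on long chains.
            self.parent[x] = self.parent[self.parent[x]]
            x = self.parent[x]
        return x

    def union(self, a, b):
        root_a, root_b = self.find(a), self.find(b)
        if root_a != root_b:
            self.parent[root_b] = root_a


def cluster_addresses(transactions):
    """Group addresses that appear together as inputs of any transaction.

    transactions: iterable of lists, each holding one transaction's input addresses.
    Returns a sorted list of sorted address lists, one per cluster.
    """
    sets = DisjointSet()
    for inputs in transactions:
        if not inputs:
            continue
        first = inputs[0]
        sets.find(first)  # register single-input transactions too
        for address in inputs[1:]:
            sets.union(first, address)
    clusters = {}
    for address in list(sets.parent):
        clusters.setdefault(sets.find(address), set()).add(address)
    return sorted(sorted(members) for members in clusters.values())


if __name__ == "__main__":
    # Illustrative input addresses only.
    print(cluster_addresses([["a", "b"], ["b", "c"], ["d"]]))
```

Distinguishing exchange hot wallets from retail users would require further signals layered on top of these clusters, such as transaction frequency or known address labels.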

The current landscape demands an adversarial perspective. Every metric is a potential target for manipulation. Sophisticated actors may artificially inflate daily active addresses or transaction counts to create an illusion of protocol growth.

Consequently, the approach necessitates a critical eye, prioritizing economic activity metrics, such as actual fee generation or collateral utilization, over vanity metrics like raw transaction volume.
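One simple way to apply this preference is to compare growth in transaction count against fees paid per transaction: activity that multiplies while fee generation per transaction collapses deserves a closer look. The sketch below is a hypothetical rule of thumb, not an established detection method; the `DailyActivity` type, the function names, and both thresholds are assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class DailyActivity:
    tx_count: int      # raw transaction count (the vanity metric)
    fees_paid: float   # total fees paid that day (the economic metric)


def fee_per_transaction(day):
    return day.fees_paid / day.tx_count if day.tx_count else 0.0


def flag_suspicious_growth(prev, curr, growth_threshold=1.5, fee_drop_threshold=0.5):
    """Return True when transaction count jumps while fee per transaction collapses.

    Both thresholds are illustrative defaults, not calibrated values.
    """
    prev_fee = fee_per_transaction(prev)
    if prev.tx_count == 0 or prev_fee == 0:
        return False  # no baseline to compare against
    count_growth = curr.tx_count / prev.tx_count
    fee_ratio = fee_per_transaction(curr) / prev_fee
    return count_growth >= growth_threshold and fee_ratio <= fee_drop_threshold


if __name__ == "__main__":
    # Illustrative figures only.
    yesterday = DailyActivity(tx_count=1000, fees_paid=100.0)
    today = DailyActivity(tx_count=3000, fees_paid=90.0)
    print(flag_suspicious_growth(yesterday, today))
```

A flag from a rule like this is a prompt for investigation rather than proof of manipulation, since fee markets can also cheapen for legitimate reasons such as a protocol upgrade.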

Evolution

The trajectory of these metrics has shifted from retrospective reporting to predictive modeling. Initial efforts focused on describing historical usage. Current frameworks attempt to anticipate shifts in market sentiment and liquidity cycles by identifying anomalies in on-chain flow before they manifest in price action.
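As a rough illustration of anomaly identification in on-chain flow, the sketch below flags observations that deviate sharply from a trailing window using a z-score. This is a generic statistical technique swapped in for illustration; the function name `flow_anomalies`, the window length, and the threshold are assumptions, and production models are likely to be considerably more elaborate.

```python
from statistics import mean, pstdev


def flow_anomalies(flows, window=7, threshold=3.0):
    """Return (index, z_score) pairs where a flow deviates from its trailing window.

    flows:     sequence of periodic net flow readings (for example, daily exchange inflow).
    window:    number of preceding observations used as the baseline.
    threshold: absolute z-score at or above which an observation is flagged.
    """
    anomalies = []
    for i in range(window, len(flows)):
        history = flows[i - window:i]
        baseline = mean(history)
        spread = pstdev(history)
        if spread == 0:
            continue  # a flat baseline gives no scale for comparison
        z_score = (flows[i] - baseline) / spread
        if abs(z_score) >= threshold:
            anomalies.append((i, z_score))
    return anomalies


if __name__ == "__main__":
    # Illustrative series with one obvious spike at index 7.
    series = [10, 12, 11, 9, 10, 12, 11, 50, 10]
    print([index for index, _ in flow_anomalies(series)])
```

Note that a spike inflates the baseline spread for the following window, so subsequent observations are judged against a noisier history until the spike rolls out.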

Advanced quantitative models now integrate real-time network congestion data to refine the pricing of short-dated crypto options.

The rise of modular blockchain architectures has complicated the data landscape. Metrics are no longer confined to a single chain; they must now account for cross-chain bridging activity and liquidity fragmentation. This expansion requires a more robust understanding of systemic risk, as the failure of a single bridge or liquidity aggregator can trigger cascading liquidations across multiple protocols.

One might compare this to the interconnected nature of global shipping lanes, where a disruption in one chokepoint ripples through the entire supply chain, regardless of the individual health of each vessel.

Horizon

The future of Network Data Metrics lies in the integration of zero-knowledge proofs and decentralized oracle networks to provide verifiable, privacy-preserving data streams. As protocols increase in complexity, the ability to monitor smart contract security health in real time will become the primary determinant of institutional adoption. We are moving toward a state where network metrics will be consumed by autonomous agents to dynamically adjust collateral requirements and interest rates without human intervention.

| Future Focus | Technological Driver | Strategic Outcome |
| --- | --- | --- |
| Privacy-Preserving Data | Zero-Knowledge Proofs | Institutional access to on-chain insights |
| Automated Risk Mitigation | Decentralized Oracles | Real-time collateral adjustment |
| Cross-Chain Intelligence | Interoperability Protocols | Unified liquidity risk assessment |

Success in this evolving environment will depend on the ability to synthesize disparate data points into a unified view of systemic health. Those who master the translation of raw protocol state into predictive financial models will define the next generation of decentralized market participants.