Essence

Network congestion solutions are the architectural responses to the inherent throughput limits of decentralized ledgers. When transaction volume exceeds what a consensus mechanism can process, the resulting backlog raises latency and fees. These solutions optimize how data propagates and settles, so that financial activity remains viable under heavy load.

Network congestion solutions function as capacity expansion mechanisms designed to maintain transaction throughput and predictable costs during periods of peak demand.

At the systemic level, these mechanisms prevent the degradation of user experience and preserve the utility of decentralized markets. By managing state growth and block space utilization, protocols avoid the catastrophic failure modes associated with transaction stagnation, such as the freezing of margin accounts or the failure of liquidation engines.

Origin

The genesis of these solutions traces back to the fundamental trade-offs identified in the early development of distributed ledger technology. Developers recognized that increasing block size or frequency directly impacts decentralization by raising the hardware requirements for node operators.

- Transaction Mempool: The initial staging area for pending operations, where congestion first manifests as price discovery for block space.

- Block Size Constraints: The rigid technical parameters that define the maximum data capacity per interval, necessitating secondary layers.

- Gas Price Auctions: The economic mechanism that naturally emerges to prioritize transactions, effectively rationing space through price discovery.

These early constraints forced the industry to move beyond monolithic architecture. The shift toward modular design allowed for the separation of execution, settlement, and data availability, forming the modern foundation for handling throughput spikes.

Theory

Modeling network capacity mathematically draws on queueing theory and on the limits of state synchronization across nodes. The objective is to maximize throughput while minimizing disagreement within the consensus set.
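As a rough illustration of the queueing view, the sketch below treats the network as a single M/M/1 queue: transactions arrive at a constant average rate and are processed at a constant average rate. This is a simplifying assumption (real chains serve transactions in block-sized batches), and the function name `mm1_metrics` and its parameters are hypothetical.

```python
def mm1_metrics(arrival_rate: float, service_rate: float) -> dict:
    """Steady-state metrics for an M/M/1 queue (rates in transactions per second)."""
    if arrival_rate <= 0 or service_rate <= 0:
        raise ValueError("rates must be positive")

    utilization = arrival_rate / service_rate
    if utilization >= 1:
        # Demand meets or exceeds capacity: no steady state, the backlog grows without bound.
        return {
            "utilization": utilization,
            "mean_time_in_system_s": float("inf"),
            "mean_tx_in_system": float("inf"),
        }

    return {
        "utilization": utilization,
        # Mean time a transaction spends waiting plus being processed: W = 1 / (mu - lambda).
        "mean_time_in_system_s": 1.0 / (service_rate - arrival_rate),
        # Mean number of transactions pending or in service: L = rho / (1 - rho).
        "mean_tx_in_system": utilization / (1.0 - utilization),
    }
```

Under this model, a network that processes 15 transactions per second takes about 0.33 s per transaction at 12 arrivals per second and about 3.3 s at 14.7. Latency grows sharply as utilization approaches one, which is the backlog behavior described above.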

When participants compete for finite block space, the system behaves as a competitive market where the clearing price is determined by the marginal utility of the transaction.
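The market framing can be sketched as a greedy block builder that fills a block with the highest bidders first. The `PendingTx` type, the `build_block` function, and the greedy policy are illustrative assumptions and not the behavior of any particular client; the clearing price here is simply the lowest bid that still made it into the block.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class PendingTx:
    tx_id: str
    gas: int          # block space the transaction consumes
    bid_per_gas: int  # price the sender offers per unit of gas


def build_block(mempool: List[PendingTx], gas_limit: int) -> Tuple[List[PendingTx], Optional[int]]:
    """Greedily include the highest bids that still fit under the gas limit."""
    included: List[PendingTx] = []
    gas_used = 0
    for tx in sorted(mempool, key=lambda t: t.bid_per_gas, reverse=True):
        if gas_used + tx.gas <= gas_limit:
            included.append(tx)
            gas_used += tx.gas
    # Clearing price: the lowest bid among included transactions (None for an empty block).
    clearing_price = min((tx.bid_per_gas for tx in included), default=None)
    return included, clearing_price
```

When demand is below the gas limit, every transaction is included and the clearing price falls to the lowest bid in the mempool. When demand exceeds the limit, low bidders are left pending and the clearing price rises.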

| Mechanism | Function | Risk Profile |
| --- | --- | --- |
| Rollup Compression | Batched state updates | Sequencer centralization |
| Sharding | Parallel state processing | Cross-shard atomicity |
| State Pruning | Database footprint reduction | Node sync complexity |

Effective congestion management requires balancing the cost of computation against the systemic requirement for global state consensus and verifiability.

The strategic interaction between validators and users resembles a game-theoretic equilibrium where incentives must be aligned to prevent malicious spam. If the cost of submitting a transaction remains below the expected value of the state change, the network becomes vulnerable to deliberate congestion attacks.
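The attack condition can be made concrete with a back-of-the-envelope check. The sketch below assumes the attacker fills whole blocks at a constant gas price, which understates the real cost because the attacker's own bids push the price up. Both function names are hypothetical, and all amounts are in the same arbitrary fee unit.

```python
def congestion_attack_cost(blocks: int, gas_limit: int, price_per_gas: int) -> int:
    """Fee outlay needed to fill `blocks` consecutive blocks at a constant gas price."""
    if blocks < 0 or gas_limit < 0 or price_per_gas < 0:
        raise ValueError("inputs must be non-negative")
    return blocks * gas_limit * price_per_gas


def attack_is_rational(expected_payoff: int, blocks: int, gas_limit: int, price_per_gas: int) -> bool:
    """True when the attacker's expected payoff exceeds the fees required to congest the chain."""
    return expected_payoff > congestion_attack_cost(blocks, gas_limit, price_per_gas)
```

For example, filling 10 blocks of 30,000,000 gas at a price of 20 costs 6,000,000,000 fee units, so an attacker expecting a payoff of 5,000,000,000 is deterred. At a price of 10, the cost halves and the same attack pays off.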

Approach

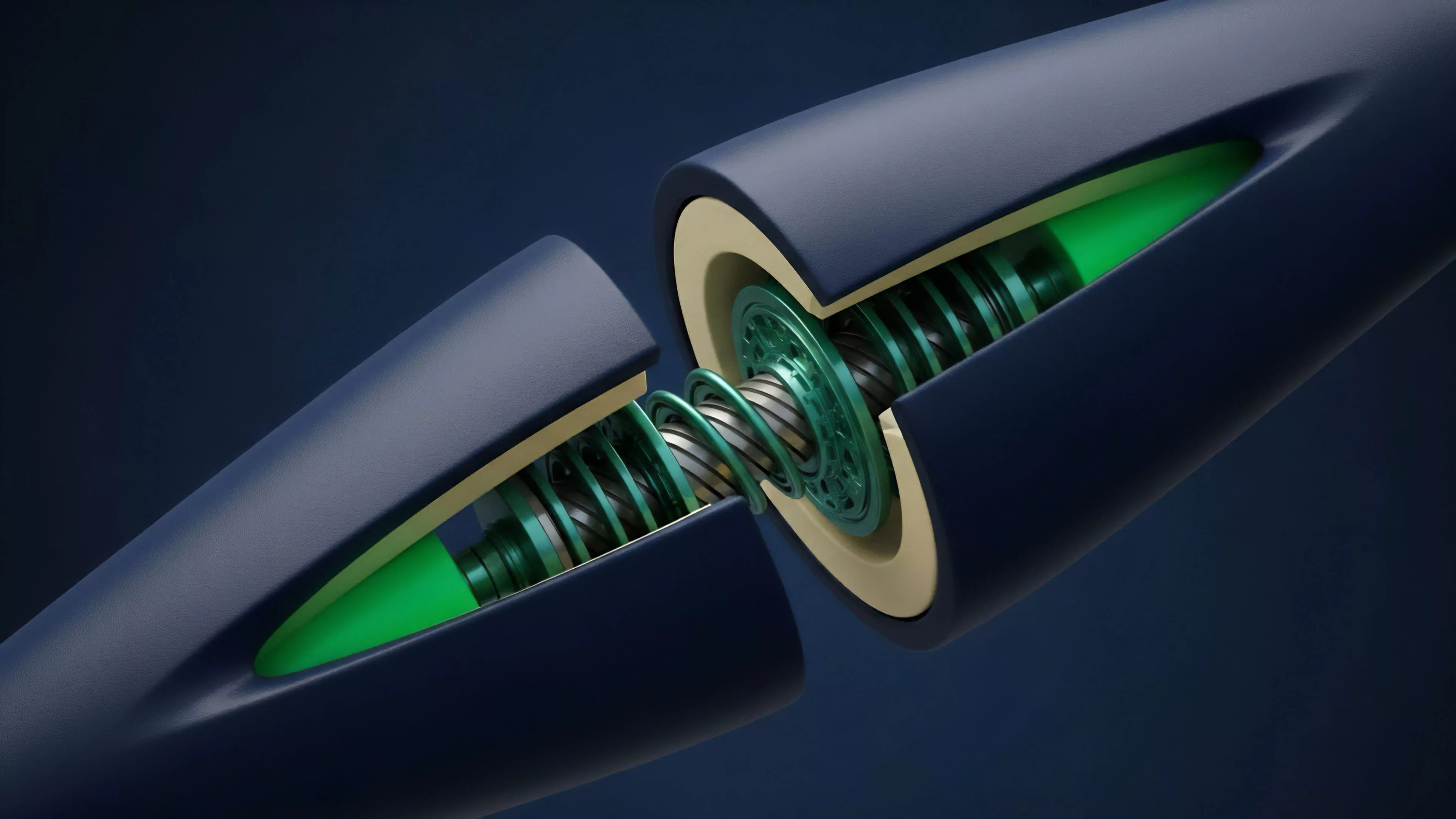

Modern implementation focuses on moving intensive computation off-chain while maintaining cryptographic proof of validity on the primary settlement layer. This shift represents a transition from global execution to verifiable off-chain batching.

- Optimistic Rollups: Assume state validity by default and rely on fraud proofs to revert invalid batches, providing a low-latency pathway for execution unless a challenge is raised during the dispute window.

- Zero-Knowledge Proofs: Enable cryptographic verification of batch validity, ensuring correctness without requiring the full re-execution of transactions.

- Parallel Execution Environments: Allow distinct transactions to process simultaneously when they do not touch the same state, significantly increasing throughput.
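The parallel execution item above can be sketched as a scheduler that groups transactions into batches by their declared read and write sets. This is a minimal sketch, assuming every transaction declares its state accesses up front; the `Tx` type and the `conflicts` and `schedule` functions are hypothetical names. Transactions in the same batch can run concurrently, and batches run one after another.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class Tx:
    tx_id: str
    reads: FrozenSet[str]   # state keys the transaction reads
    writes: FrozenSet[str]  # state keys the transaction writes


def conflicts(a: Tx, b: Tx) -> bool:
    """Two transactions conflict if either writes a key the other reads or writes."""
    return bool(a.writes & (b.reads | b.writes) or b.writes & a.reads)


def schedule(txs: List[Tx]) -> List[List[Tx]]:
    """Assign each transaction to the first batch after its last conflicting predecessor."""
    batches: List[List[Tx]] = []
    for tx in txs:
        target = 0
        for i, batch in enumerate(batches):
            if any(conflicts(tx, other) for other in batch):
                target = i + 1  # must run after every batch that holds a conflict
        if target == len(batches):
            batches.append([])
        batches[target].append(tx)
    return batches
```

Because a transaction is always placed after the last batch that conflicts with it, conflicting transactions keep their original relative order, while independent ones share a batch.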

One might observe that the current reliance on centralized sequencers introduces a new class of systemic risk: the potential for censorship or state withholding. The move toward decentralized sequencing protocols remains the critical requirement for achieving truly robust, congestion-resistant financial infrastructure.

Evolution

The transition from simple block size adjustments to complex Layer 2 architectures marks a significant maturity phase for decentralized finance. Early systems relied on manual adjustment of parameters, whereas contemporary protocols use dynamic fee markets and automated scaling factors to respond to demand volatility in real time.
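One way to picture a dynamic fee market is a base fee that moves with how full the previous block was. The sketch below follows the general shape of Ethereum's EIP-1559 update rule, chosen here purely as an illustration since the text does not name a specific protocol. The function name and the default denominator of 8 are assumptions of this sketch.

```python
def next_base_fee(base_fee: int, gas_used: int, gas_target: int,
                  max_change_denominator: int = 8) -> int:
    """Adjust the base fee toward demand: up when blocks run over target, down when under."""
    if gas_target <= 0 or max_change_denominator <= 0:
        raise ValueError("gas_target and max_change_denominator must be positive")
    if gas_used == gas_target:
        return base_fee

    # Change is proportional to the distance from target, capped at
    # 1/max_change_denominator of the fee when that distance equals the target.
    delta = base_fee * abs(gas_used - gas_target) // gas_target // max_change_denominator
    if gas_used > gas_target:
        return base_fee + max(delta, 1)  # always move by at least one unit when over target
    return max(base_fee - delta, 0)
```

With a target of 15,000,000 gas, a completely full block of 30,000,000 gas raises a base fee of 100 to 112, and an empty block lowers it to 88. Sustained demand therefore raises the fee multiplicatively, block after block.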

Protocol evolution moves from static capacity limits toward adaptive systems that scale transaction throughput in response to real-time market demand.

The industry has progressed through three distinct phases: the monolithic era of fixed constraints, the transition to modular multi-chain architectures, and the current focus on inter-chain interoperability. This trajectory highlights a move toward specialized environments where financial applications operate in high-performance execution zones while leveraging the security of a global settlement layer.

Horizon

Future development aims to achieve seamless execution across heterogeneous environments without sacrificing the integrity of the underlying asset. The next frontier involves the integration of advanced cryptographic primitives that enable private, high-speed transaction validation.

- Asynchronous State Channels: Allow instantaneous value transfer without immediate block inclusion.

- Data Availability Sampling: Ensures that large datasets remain accessible to light clients, preventing the centralization of data storage.

- Predictive Fee Markets: Use machine learning to optimize transaction timing and cost, reducing the impact of sudden network spikes.
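The intuition behind data availability sampling in the list above can be sketched with a simple probability estimate. The sketch assumes a light client draws chunks independently and uniformly at random (with replacement), and that some fraction of chunks has been withheld. Both function names are hypothetical.

```python
import math


def detection_probability(withheld_fraction: float, samples: int) -> float:
    """Probability that at least one of `samples` random draws hits a withheld chunk."""
    if not 0.0 <= withheld_fraction <= 1.0 or samples < 0:
        raise ValueError("withheld_fraction must be in [0, 1] and samples non-negative")
    return 1.0 - (1.0 - withheld_fraction) ** samples


def samples_needed(withheld_fraction: float, confidence: float) -> int:
    """Smallest number of samples that detects withholding with at least `confidence`."""
    if not 0.0 < withheld_fraction < 1.0 or not 0.0 < confidence < 1.0:
        raise ValueError("both arguments must be strictly between 0 and 1")
    return math.ceil(math.log(1.0 - confidence) / math.log(1.0 - withheld_fraction))
```

If half of the chunks are withheld, 10 samples detect the problem with probability 1 - 0.5^10, roughly 99.9%. This is why a light client can gain strong assurance from a small number of samples without downloading the full dataset.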

The critical pivot point for the future lies in how protocols manage cross-chain liquidity fragmentation. My hypothesis suggests that the winners will be those who successfully unify state across disparate layers without re-introducing the trust-based vulnerabilities of traditional financial intermediaries. The challenge remains the synthesis of speed and security within an adversarial, permissionless landscape.