Essence

Network Congestion Monitoring functions as the real-time telemetry of decentralized settlement layers. It exposes mempool saturation, gas price volatility, and block inclusion latency as observable data. Market participants use these metrics to adjust their execution strategies for derivative positions, so that margin top-ups or hedging trades are included in a block before liquidation thresholds are breached.

Network Congestion Monitoring serves as the critical diagnostic tool for assessing the temporal viability of executing time-sensitive financial transactions on decentralized ledgers.

The operational reality of crypto options trading demands high-frequency interactions with smart contracts. When network demand spikes, transaction fees rise, and inclusion times extend. This creates a direct risk to traders who rely on rapid collateral movement or position adjustment.

Understanding these dynamics transforms raw blockchain throughput data into actionable intelligence for capital allocation.

Origin

The requirement for Network Congestion Monitoring emerged from the inherent limitations of proof-of-work and early proof-of-stake architectures. As transaction volume grew, the first-price auction models for block space caused unpredictable spikes in settlement costs. Traders realized that relying on base-layer throughput without visibility into pending transaction volume left them exposed to execution failures during market volatility.

- Mempool Analysis provides the initial signal of impending congestion by measuring the count and fee distribution of unconfirmed transactions.

- Gas Fee Tracking offers a granular view of the competitive landscape for block space at specific points in time.

- Block Latency Metrics reveal the structural delays between transaction submission and finality within the consensus engine.

Early market participants relied on basic block explorers to gauge activity. These manual methods proved insufficient for the precision required in derivatives trading. The industry transitioned toward automated monitoring systems that ingest node-level data to calculate optimal gas prices and estimate confirmation probabilities for time-critical financial operations.

Theory

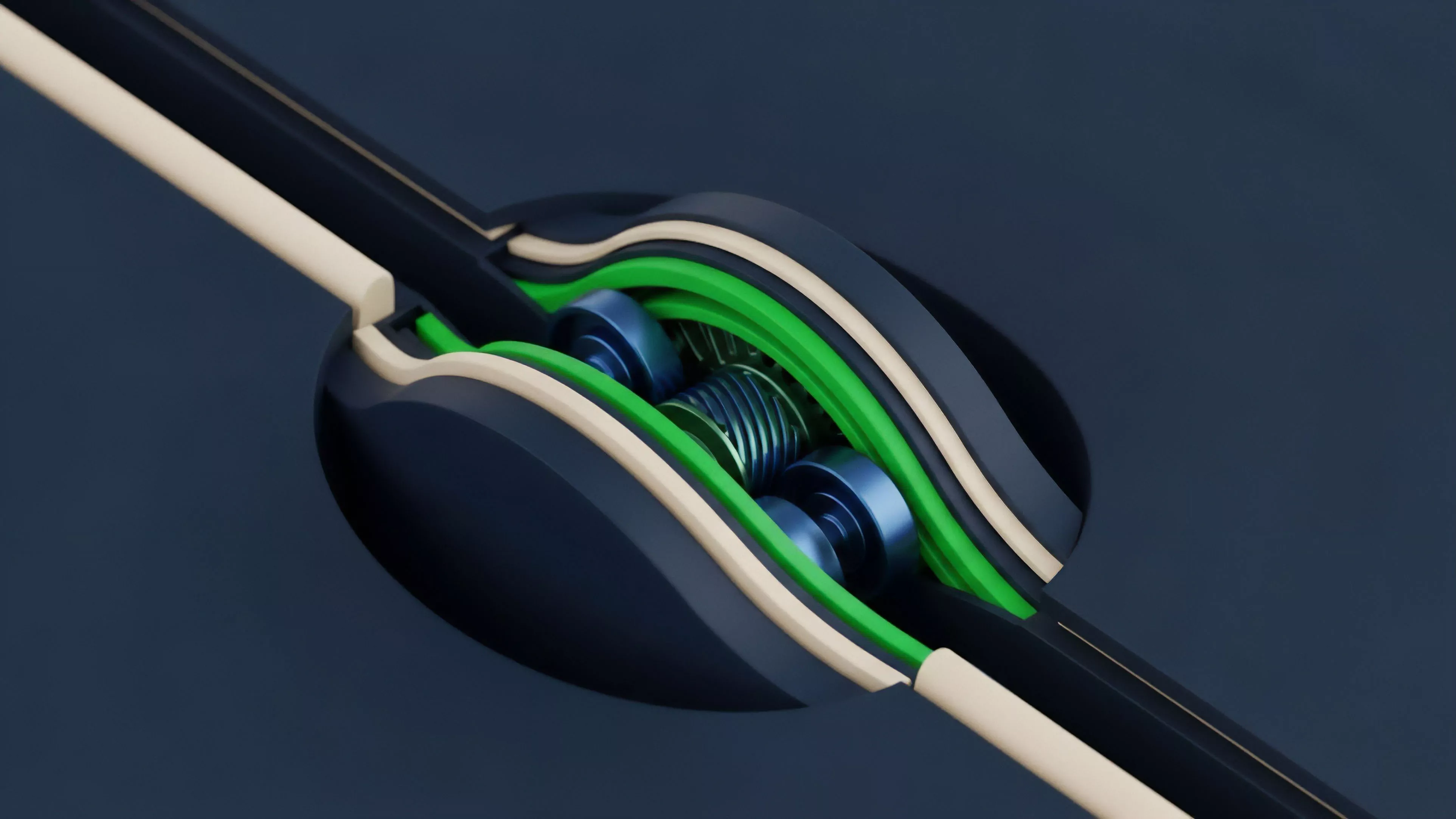

Network Congestion Monitoring relies on the application of queuing theory to blockchain transaction propagation. The mempool acts as a buffer where transactions await validation. When the arrival rate of transactions exceeds the service rate of the validator set, the queue grows, increasing both cost and latency for all participants.

This creates a non-linear relationship between network demand and transaction settlement time.
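The non-linearity can be illustrated with a textbook M/M/1 queue, where the mean time in the system is W = 1 / (μ − λ) for arrival rate λ and service rate μ. This is a simplified sketch: real block production serves transactions in batches and orders them by fee, so the function names and the per-block backlog model below are illustrative assumptions, not a description of any specific chain.

```python
import math
from typing import Iterable, List


def mm1_mean_wait(arrival_rate: float, service_rate: float) -> float:
    """Mean time in system for an M/M/1 queue: W = 1 / (mu - lambda).

    Returns math.inf when arrivals meet or exceed the service rate,
    because the queue then grows without bound.
    """
    if arrival_rate < 0 or service_rate <= 0:
        raise ValueError("arrival_rate must be >= 0 and service_rate > 0")
    if arrival_rate >= service_rate:
        return math.inf
    return 1.0 / (service_rate - arrival_rate)


def backlog_path(arrivals_per_block: Iterable[int],
                 capacity_per_block: int,
                 start: int = 0) -> List[int]:
    """Track the pending-transaction backlog after each block.

    Each block removes up to capacity_per_block transactions; anything
    beyond that carries over to the next block.
    """
    backlog = start
    path = []
    for arrivals in arrivals_per_block:
        backlog = max(0, backlog + arrivals - capacity_per_block)
        path.append(backlog)
    return path


if __name__ == "__main__":
    # Raising load from 50% to 90% of capacity multiplies the wait by five.
    print(mm1_mean_wait(5, 10), mm1_mean_wait(9, 10))
    # A two-block demand burst leaves a backlog that outlasts the burst.
    print(backlog_path([100, 300, 300, 50], capacity_per_block=150))
```

Under these assumptions, the wait grows slowly at low utilization and very quickly as the arrival rate approaches capacity, which is the behavior the queue description above implies.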

| Metric | Financial Impact | Systemic Risk |
|---|---|---|
| Mempool Depth | Execution delay | Liquidation failure |
| Fee Variance | Margin erosion | Capital inefficiency |
| Block Inclusion Time | Hedging slippage | Protocol instability |

From a quantitative perspective, gas price volatility tends to rise with the volatility of the underlying asset. During market crashes, demand for liquidations causes a surge in competition for block space. Sophisticated actors model this as a dynamic game in which participants bid against each other for immediate settlement, at times producing fee spikes that exceed the value of the transaction being processed.

Network Congestion Monitoring translates the probabilistic nature of block inclusion into a quantifiable risk parameter for managing leveraged positions.

The physics of the protocol, specifically the block time and gas limit, dictates the maximum capacity of the system. Demand that persistently exceeds this capacity produces a congestion event. These events are not random; they are often the direct consequence of systemic liquidation cascades, in which hundreds of automated liquidators simultaneously submit transactions to close positions.
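The capacity ceiling follows directly from those two parameters. The sketch below works through the arithmetic; the function names are hypothetical, and the example inputs (a 30,000,000 gas limit, 12-second blocks, 21,000 gas per simple transfer) are illustrative values rather than the current parameters of any particular network.

```python
def max_gas_per_second(block_gas_limit: int, block_time_s: float) -> float:
    """Upper bound on gas the chain can process per second."""
    if block_gas_limit <= 0 or block_time_s <= 0:
        raise ValueError("block_gas_limit and block_time_s must be positive")
    return block_gas_limit / block_time_s


def utilization(demand_gas_per_second: float,
                block_gas_limit: int,
                block_time_s: float) -> float:
    """Demand as a fraction of capacity; values above 1.0 mean a growing backlog."""
    return demand_gas_per_second / max_gas_per_second(block_gas_limit, block_time_s)


if __name__ == "__main__":
    capacity = max_gas_per_second(30_000_000, 12.0)  # illustrative inputs
    print(f"capacity: {capacity:,.0f} gas/s")
    print(f"simple transfers per second: {capacity / 21_000:.1f}")
    print(f"utilization at 3,000,000 gas/s demand: "
          f"{utilization(3_000_000, 30_000_000, 12.0):.2f}")
```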

Approach

Modern Network Congestion Monitoring involves deploying dedicated nodes to observe the mempool directly. By analyzing the fee distribution of pending transactions, systems can predict the probability of inclusion in the next block. This allows traders to calibrate their gas bids dynamically, balancing the cost of execution against the risk of delay.
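One simple way to turn a mempool snapshot into a bid is to estimate the fee of the cheapest transaction that would still fit in the next block. The sketch below assumes block producers pack transactions strictly by fee per gas, which ignores base-fee mechanics, private order flow, and MEV-driven ordering; `PendingTx`, `next_block_cutoff_fee`, and `suggest_bid` are hypothetical names introduced for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class PendingTx:
    gas_limit: int      # gas units the transaction may consume
    fee_per_gas: float  # bid per gas unit, e.g. in gwei


def next_block_cutoff_fee(pending: List[PendingTx], block_gas_limit: int) -> float:
    """Lowest fee packed into the next block under greedy fee-ordered packing.

    Returns 0.0 when every pending transaction fits, meaning there is
    no competition for space in this snapshot.
    """
    # Transactions larger than a whole block can never be included.
    candidates = [tx for tx in pending if tx.gas_limit <= block_gas_limit]
    used = 0
    cutoff = 0.0
    for tx in sorted(candidates, key=lambda t: t.fee_per_gas, reverse=True):
        if used + tx.gas_limit > block_gas_limit:
            return cutoff  # block is full; cutoff is the cheapest packed bid
        used += tx.gas_limit
        cutoff = tx.fee_per_gas
    return 0.0


def suggest_bid(pending: List[PendingTx], block_gas_limit: int,
                margin: float = 0.25, floor_fee: float = 1.0) -> float:
    """Bid a safety margin above the estimated cutoff, never below floor_fee."""
    cutoff = next_block_cutoff_fee(pending, block_gas_limit)
    return max(floor_fee, cutoff * (1.0 + margin))


if __name__ == "__main__":
    snapshot = [PendingTx(100, 50.0), PendingTx(100, 30.0),
                PendingTx(100, 20.0), PendingTx(100, 10.0)]
    print(next_block_cutoff_fee(snapshot, block_gas_limit=250))
    print(suggest_bid(snapshot, block_gas_limit=250))
```

The `margin` parameter is where the trade-off between execution cost and delay risk is expressed: a larger margin raises the chance of inclusion at the price of overpaying when congestion eases.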

- Node Infrastructure provides the raw data stream for monitoring real-time mempool activity and fee trends.

- Algorithmic Bidding uses predictive models to set gas prices that ensure timely inclusion without excessive overpayment.

- Latency Benchmarking tracks the performance of different RPC providers to ensure the most current view of the network state.
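Latency benchmarking can be sketched without committing to any particular client library by treating each RPC provider as a callable that returns its latest block height. Everything here is an assumption for illustration: the `probe` and `freshest_provider` names are hypothetical, and a real system would wrap actual RPC calls, handle timeouts and errors, and average over repeated probes.

```python
import time
from typing import Callable, Dict, NamedTuple


class ProbeResult(NamedTuple):
    name: str
    block_height: int
    latency_s: float


def probe(name: str, get_block_height: Callable[[], int]) -> ProbeResult:
    """Call one provider and record its reported height and response time."""
    start = time.perf_counter()
    height = get_block_height()
    return ProbeResult(name, height, time.perf_counter() - start)


def freshest_provider(providers: Dict[str, Callable[[], int]]) -> ProbeResult:
    """Pick the provider with the most current view of the chain.

    The highest reported block height wins; ties are broken by the
    lowest measured latency.
    """
    if not providers:
        raise ValueError("at least one provider is required")
    results = [probe(name, fn) for name, fn in providers.items()]
    return max(results, key=lambda r: (r.block_height, -r.latency_s))


if __name__ == "__main__":
    # Stand-in callables; real ones would query a node over RPC.
    fakes = {"provider_a": lambda: 100, "provider_b": lambda: 101}
    print(freshest_provider(fakes).name)
```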

The current landscape requires deep integration between the trading engine and the monitoring layer. A system that detects a sudden rise in congestion metrics can automatically trigger defensive measures, such as pausing new order submissions or increasing collateral buffers to account for the heightened risk of settlement failure. It is an exercise in managing technical risk alongside financial exposure.
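A defensive trigger of this kind might look like the following sketch. The thresholds, buffer multipliers, and type names are hypothetical placeholders; in practice they would be calibrated against a venue's liquidation mechanics and historical congestion data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CongestionSnapshot:
    pending_gas: int        # total gas requested by pending transactions
    block_gas_limit: int    # gas capacity of a single block
    median_fee_gwei: float  # median bid currently in the mempool


@dataclass(frozen=True)
class DefensiveAction:
    pause_new_orders: bool
    collateral_buffer_multiplier: float


def decide(snapshot: CongestionSnapshot,
           baseline_fee_gwei: float,
           backlog_blocks_limit: float = 5.0,
           fee_spike_ratio: float = 3.0) -> DefensiveAction:
    """Map congestion metrics to a defensive posture.

    Two alarms are checked: the backlog measured in blocks of pending
    gas, and the median fee relative to a calm-market baseline.
    """
    if snapshot.block_gas_limit <= 0 or baseline_fee_gwei <= 0:
        raise ValueError("block_gas_limit and baseline_fee_gwei must be positive")
    backlog_alarm = (snapshot.pending_gas / snapshot.block_gas_limit
                     >= backlog_blocks_limit)
    fee_alarm = snapshot.median_fee_gwei / baseline_fee_gwei >= fee_spike_ratio
    if backlog_alarm and fee_alarm:
        # Both signals agree: stop adding exposure and widen buffers.
        return DefensiveAction(pause_new_orders=True,
                               collateral_buffer_multiplier=1.5)
    if backlog_alarm or fee_alarm:
        # One signal only: keep trading but hold more collateral.
        return DefensiveAction(pause_new_orders=False,
                               collateral_buffer_multiplier=1.25)
    return DefensiveAction(pause_new_orders=False,
                           collateral_buffer_multiplier=1.0)
```

Requiring both signals before pausing order flow is a deliberate choice in this sketch: a fee spike alone may be brief, while a deep backlog combined with high fees suggests that settlement delays will persist.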

Evolution

The transition from manual observation to autonomous monitoring systems marks a shift toward protocol-aware trading. Early strategies focused on simple fee estimation. Contemporary systems now incorporate multi-chain monitoring and cross-layer analysis, accounting for the unique congestion profiles of various layer-two scaling solutions.

The complexity of these systems has grown to match the sophistication of the derivatives they support.

The evolution of monitoring systems reflects the shift from reactive manual adjustment to predictive, machine-driven execution within decentralized derivatives markets.

This technical maturation is also a response to the increasing frequency of systemic stress tests. As decentralized protocols handle higher notional value, the cost of congestion increases. We have moved from treating gas fees as a negligible expense to treating them as a core component of trading strategy and risk management.

This evolution is driven by the necessity of survival in an adversarial, high-stakes environment where every millisecond of latency carries a financial penalty.

Horizon

The future of Network Congestion Monitoring lies in the integration of intent-based architectures and off-chain settlement batching. As protocols move toward more efficient state transitions, the definition of congestion will shift from simple block space scarcity to the efficiency of cross-domain liquidity routing. Future systems will prioritize predictive modeling that anticipates network load based on broader market volatility cycles.

| Future Metric | Application |
|---|---|
| Cross-Chain Latency | Arbitrage efficiency |
| State Bloat Impact | Long-term protocol health |
| MEV Extraction Rate | Execution cost modeling |

The next iteration will likely see the rise of decentralized oracle networks providing real-time, consensus-verified congestion data. This will allow smart contracts to adjust their own operational parameters, such as liquidation thresholds or collateral requirements, in response to network conditions. The ultimate goal is to build a financial system that remains robust regardless of the underlying ledger performance, effectively decoupling economic settlement from technical congestion.