Essence

Network Capacity Planning represents the systematic assessment and management of throughput, latency, and settlement finality thresholds within decentralized ledger architectures to ensure stable operation of financial derivatives. It defines the physical and logical boundaries under which complex option contracts can execute, clear, and settle without triggering systemic failure or prohibitive cost escalation during periods of peak market volatility.

Network Capacity Planning functions as the foundational risk management layer that ensures blockchain throughput can accommodate the rapid, high-frequency state changes required by sophisticated derivative instruments.

The primary challenge lies in the inherent tension between decentralization, security, and the raw performance required for order flow execution. As derivative complexity increases, the underlying network must maintain sufficient headroom to prevent congestion-driven liquidation cascades or arbitrage failures that arise when transaction inclusion latency exceeds the delta-neutral rebalancing requirements of market participants.

Origin

The necessity for rigorous Network Capacity Planning emerged from the transition of decentralized finance from simple token transfers to complex, state-dependent financial protocols. Early iterations of smart contract platforms operated under assumptions of static demand, failing to account for the non-linear relationship between network load and gas-based transaction costs.

- Transaction Serialization: The move from simple asset swaps to multi-leg option strategies necessitated a shift toward understanding how sequential block space competition impacts execution pricing.

- Latency Sensitivity: Financial markets operate on time-priority mechanisms, making the unpredictability of mempool inclusion a critical risk factor for delta-hedging strategies.

- Throughput Constraints: Protocol-level bottlenecks directly influence the liquidity profile of decentralized option pools and automated market makers, a dependency that early designs did not account for.

This evolution reflects the maturation of decentralized markets from experimental environments to sophisticated financial venues. Architects now treat network bandwidth and computational cycles as finite, priced resources rather than infinite public goods, mirroring the infrastructure planning seen in traditional high-frequency trading environments.

Theory

The theoretical framework governing Network Capacity Planning centers on the interplay between block space scarcity, transaction priority, and the cost of state propagation. Quantitative modeling of these systems requires analyzing the Poisson distribution of transaction arrivals against the fixed interval of block production.
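As a rough illustration of that arrival-versus-interval analysis, the sketch below estimates how often a block would be oversubscribed if transactions arrive as a Poisson process. The function name, the parameters, and the simplification that each block holds a fixed number of equally sized transactions are assumptions made for this example, not properties of any particular chain.

```python
import math


def overflow_probability(arrival_rate_per_s: float,
                         block_interval_s: float,
                         block_capacity: int) -> float:
    """Probability that more than `block_capacity` transactions arrive
    during one block interval, assuming Poisson arrivals (illustrative)."""
    mean = arrival_rate_per_s * block_interval_s
    if mean <= 0:
        return 0.0
    log_mean = math.log(mean)
    # Sum P(N = k) for k = 0..capacity in log space to avoid overflow
    # in mean**k and k! for larger inputs.
    cdf = sum(
        math.exp(-mean + k * log_mean - math.lgamma(k + 1))
        for k in range(block_capacity + 1)
    )
    return min(1.0, max(0.0, 1.0 - cdf))


if __name__ == "__main__":
    # Hypothetical load: 10 tx/s, 1 s blocks, room for 10 tx per block.
    print(overflow_probability(10.0, 1.0, 10))
```

With a mean arrival count equal to the block capacity, as in the hypothetical load above, the overflow probability comes out at roughly 0.42, which suggests why headroom well above average demand matters.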

Mathematical Determinants

The structural integrity of a derivative protocol depends on its ability to maintain a predictable relationship between the Greeks of an option, specifically gamma and theta, and the transaction costs required to manage them. If network latency increases, the effective cost of delta-neutrality rises, potentially leading to a widening of bid-ask spreads and reduced liquidity provision.
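One way to make the latency effect concrete is the standard second-order approximation for an unhedged gamma position: over a short delay, the expected hedging error is about one half of gamma times the expected squared price move. The sketch below applies it under the assumptions of driftless lognormal prices and a small delay; the function name and the example figures are hypothetical.

```python
import math

SECONDS_PER_YEAR = 365 * 24 * 3600


def expected_gamma_slippage(gamma: float,
                            spot: float,
                            annual_vol: float,
                            latency_s: float) -> float:
    """Approximate expected hedging error accumulated while a rebalance
    waits `latency_s` seconds for inclusion.

    Uses 0.5 * gamma * E[dS^2] with E[dS^2] ~= spot^2 * vol^2 * dt,
    which holds only for small dt and ignores drift (assumption).
    """
    dt_years = latency_s / SECONDS_PER_YEAR
    return 0.5 * gamma * spot ** 2 * annual_vol ** 2 * dt_years


if __name__ == "__main__":
    # Hypothetical position: gamma 0.002, spot 2000, 80% annualized vol.
    for latency in (12, 60, 600):
        cost = expected_gamma_slippage(0.002, 2000.0, 0.8, latency)
        print(f"{latency:>4}s latency -> expected slippage {cost:.6f}")
```

Under this approximation the expected error grows linearly with inclusion latency and quadratically with volatility, so a delay that is harmless in calm markets becomes costly exactly when volatility spikes.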

| Metric | Financial Implication |
| --- | --- |
| Block Gas Limit | Maximum concurrent trade volume per interval |
| Mempool Latency | Execution risk for time-sensitive delta hedging |
| Finality Time | Settlement risk and capital efficiency duration |

Effective capacity management requires balancing the cost of immediate transaction inclusion against the risk-adjusted returns of the underlying derivative strategies.

A significant challenge involves the adversarial nature of block space auctions. Participants act to maximize their own execution speed, often at the expense of network-wide stability. This creates a feedback loop where periods of high volatility, which necessitate the most frequent rebalancing, also coincide with the highest transaction costs, thereby creating a systemic risk of gridlock during market stress.

Approach

Current operational strategies for Network Capacity Planning rely on a combination of off-chain computation, layer-two scaling solutions, and sophisticated fee-market mechanisms.

Protocols now implement batching, state compression, and off-chain order books to decouple the execution of trades from the finality requirements of the underlying settlement layer.
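The effect of batching on ledger footprint can be sketched with simple amortization arithmetic: a fixed per-transaction overhead is shared across every order in the batch. The function name and the gas figures below are purely illustrative assumptions, not measurements from any protocol.

```python
def gas_per_order(n_orders: int,
                  fixed_overhead_gas: int,
                  marginal_gas_per_order: int) -> float:
    """Average gas attributable to each order when `n_orders` are
    settled in one batched transaction (illustrative cost model)."""
    if n_orders < 1:
        raise ValueError("a batch needs at least one order")
    total = fixed_overhead_gas + n_orders * marginal_gas_per_order
    return total / n_orders


if __name__ == "__main__":
    # Hypothetical costs: 100k gas fixed overhead, 30k gas per order.
    for n in (1, 10, 100):
        print(n, gas_per_order(n, 100_000, 30_000))
```

In this model the per-order cost approaches the marginal cost as the batch grows, so most of the saving is captured by moderately sized batches.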

Operational Frameworks

- Layer Two Offloading: Moving execution to secondary chains significantly increases throughput, allowing for high-frequency adjustments to option positions without competing for congested layer-one block space.

- Batch Auctioning: Aggregating multiple orders into a single transaction minimizes the footprint on the ledger, effectively smoothing out demand spikes and reducing the variance in execution costs.

- Predictive Gas Modeling: Advanced algorithms now estimate optimal gas bids based on historical congestion patterns and current volatility, providing a more stable environment for automated market makers.
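A very simple form of the predictive gas modeling described above might take a percentile of recently observed fees and scale it by how far current volatility sits above a baseline. The sketch below is one such heuristic; the function name, the percentile choice, and the capped multiplier are assumptions for illustration, and production estimators are likely to be considerably more elaborate.

```python
from typing import Sequence


def estimate_gas_bid(recent_fees: Sequence[float],
                     realized_vol: float,
                     baseline_vol: float,
                     percentile: float = 0.75,
                     max_multiplier: float = 3.0) -> float:
    """Heuristic bid: a percentile of recent fees, scaled up when
    realized volatility exceeds its baseline (hypothetical rule)."""
    if not recent_fees:
        raise ValueError("need at least one historical fee observation")
    if baseline_vol <= 0:
        raise ValueError("baseline_vol must be positive")
    ordered = sorted(recent_fees)
    # Nearest-rank style index, clamped to the last element.
    index = min(len(ordered) - 1, int(percentile * len(ordered)))
    base_bid = ordered[index]
    # Never bid below the historical percentile; cap the upside.
    multiplier = min(max_multiplier, max(1.0, realized_vol / baseline_vol))
    return base_bid * multiplier


if __name__ == "__main__":
    fees = [10.0, 20.0, 30.0, 40.0]  # hypothetical recent fees
    print(estimate_gas_bid(fees, realized_vol=0.8, baseline_vol=0.4))
```

Capping the multiplier is a deliberate choice in this sketch: without it, the estimator would amplify the same volatility-driven fee spiral it is meant to navigate.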

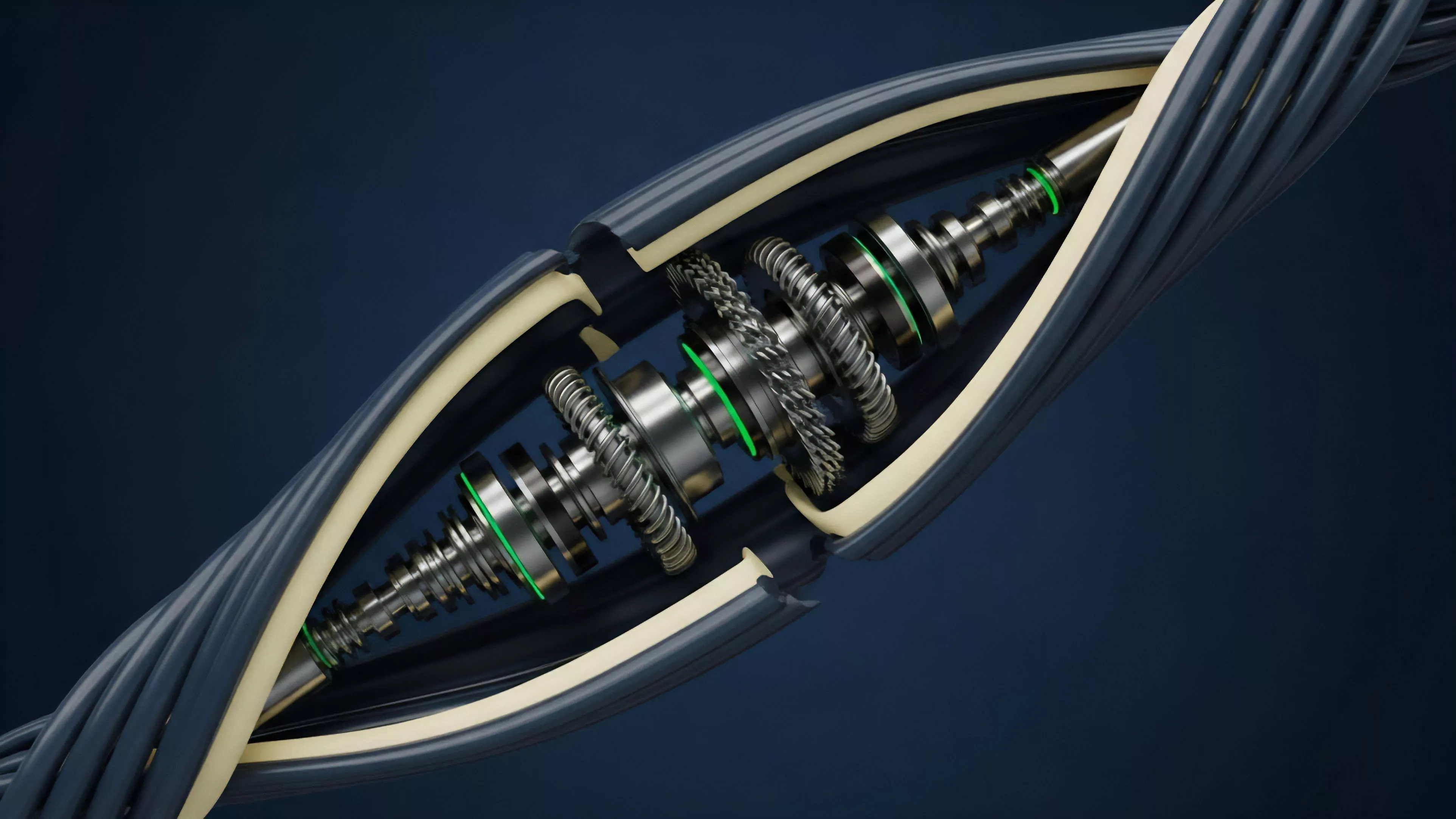

The transition toward modular architecture allows protocols to specialize, separating the high-throughput execution layer from the high-security settlement layer.

The strategic goal is to minimize the execution decay that occurs when network congestion prevents the timely adjustment of derivative hedges. By implementing robust off-chain sequencing, architects reduce the reliance on immediate on-chain inclusion, providing a buffer that preserves liquidity during market turbulence.

Evolution

The trajectory of Network Capacity Planning has moved from naive capacity estimates toward dynamic, protocol-level resource management. Initially, developers viewed throughput as a static parameter defined by the consensus rules.

Modern approaches treat it as a variable that must be dynamically adjusted to meet the shifting demands of institutional-grade financial instruments. The industry sometimes forgets that the physics of computation is just as unforgiving as the laws of supply and demand in a traditional exchange. Recognizing this has forced a pivot toward modularity, where the network itself is re-architected to handle the distinct requirements of execution and settlement.

- Protocol-Specific Sequencing: The development of custom sequencers ensures that high-priority derivative trades receive deterministic inclusion times, independent of general-purpose network traffic.

- State Growth Management: Advanced data structures minimize the computational burden of maintaining open derivative positions, ensuring long-term scalability without sacrificing historical auditability.

- Cross-Chain Settlement: Future-oriented designs allow liquidity to be spread across multiple environments while maintaining a unified risk management framework.
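The protocol-specific sequencing idea in the list above can be illustrated with a toy strict-priority queue that orders derivative trades ahead of general traffic while preserving arrival order within each class. The class and method names are hypothetical, and a real sequencer would also need to address fairness, censorship resistance, and failure handling, none of which this sketch attempts.

```python
import heapq
import itertools
from typing import List, Tuple


class PrioritySequencer:
    """Toy sequencer: derivative trades first, FIFO within each class."""

    def __init__(self) -> None:
        self._heap: List[Tuple[int, int, str]] = []
        # Monotonic counter breaks ties so equal-priority items stay FIFO.
        self._arrival = itertools.count()

    def submit(self, tx_id: str, is_derivative: bool) -> None:
        priority = 0 if is_derivative else 1  # lower value pops first
        heapq.heappush(self._heap, (priority, next(self._arrival), tx_id))

    def next_batch(self, max_size: int) -> List[str]:
        """Pop up to `max_size` transactions in sequencing order."""
        batch: List[str] = []
        while self._heap and len(batch) < max_size:
            batch.append(heapq.heappop(self._heap)[2])
        return batch


if __name__ == "__main__":
    seq = PrioritySequencer()
    seq.submit("transfer-1", is_derivative=False)
    seq.submit("hedge-1", is_derivative=True)
    seq.submit("transfer-2", is_derivative=False)
    seq.submit("hedge-2", is_derivative=True)
    print(seq.next_batch(3))
```

Strict priority keeps inclusion latency for the favored class bounded by that class's own queue depth, at the cost of potentially starving general traffic under sustained load.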

This progression indicates a shift toward specialized financial infrastructure. The goal is no longer just increasing raw transactions per second, but ensuring that the network can provide the deterministic performance required for complex financial settlement under any market condition.

Horizon

The future of Network Capacity Planning lies in the integration of artificial intelligence for real-time resource allocation and the adoption of zero-knowledge proofs for verifiable, low-latency settlement. As decentralized derivatives reach greater scale, the network will need to anticipate demand shifts before they occur, dynamically adjusting protocol parameters to maintain stability.

Systemic Trajectory

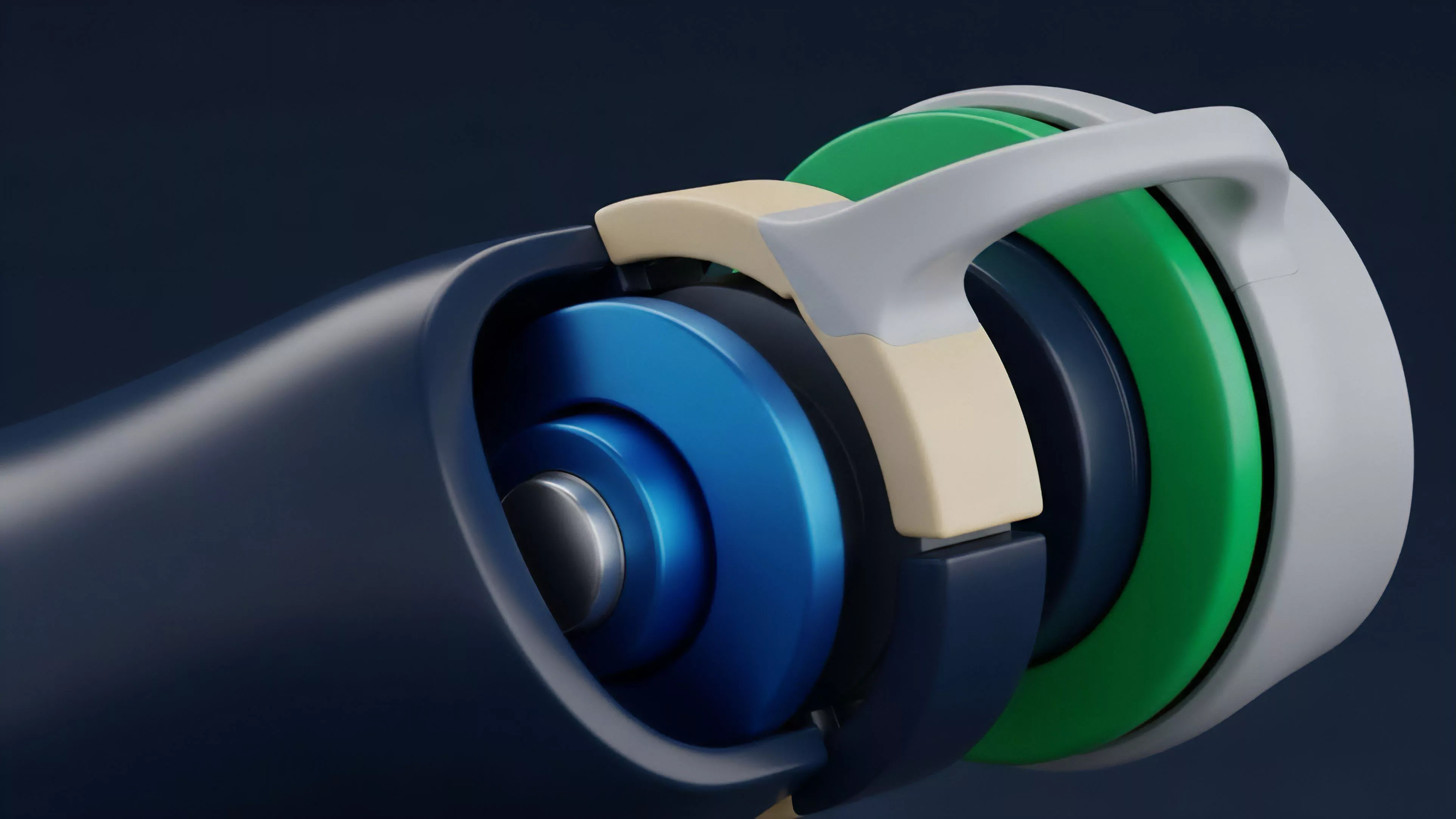

The next phase involves the implementation of asynchronous settlement architectures that allow for near-instantaneous execution of complex option structures while deferring finality to a later, less congested block. This approach effectively decouples the user experience from the physical limitations of the base-layer ledger.

| Innovation | Impact on Capacity |
| --- | --- |
| Zero-Knowledge Batching | Reduces proof-of-validity overhead per trade |
| Dynamic Sharding | Scales throughput linearly with network demand |
| AI-Driven Sequencing | Anticipates and mitigates congestion before peak load |

Ultimately, the goal is to create a financial environment where the underlying network capacity is abstracted away entirely, leaving participants with a seamless, high-performance interface. The success of this endeavor depends on the ability to maintain decentralization while achieving the performance metrics of traditional, centralized exchanges.