Essence

In decentralized derivatives markets, Network Capacity Management is the active regulation of throughput, latency, and block space allocation so that complex option strategies execute reliably. It functions as the infrastructure layer governing how order flow interacts with validator constraints during periods of extreme volatility.

Network Capacity Management defines the operational limits governing derivative settlement throughput and systemic liquidity resilience.

This practice involves the deliberate calibration of gas mechanisms, fee markets, and sequencer throughput to prevent congestion-induced arbitrage failures. Market participants must account for these technical bottlenecks, as they directly dictate the reliability of hedging activities when blockchain demand surges.

Origin

The necessity for this discipline arose from the inherent limitations of early blockchain architectures during high-volatility events. As trading volumes increased, fixed block size constraints and competitive fee auctions frequently created significant execution delays for complex derivative strategies.

- Congestion bottlenecks occurred when transaction demand exceeded available throughput capacity.

- Arbitrage failures resulted from stale price feeds during periods of extreme network latency.

- Priority gas auctions forced participants to overpay for transaction inclusion, eroding profit margins.

These initial systemic challenges compelled developers to architect more sophisticated mechanisms, shifting the focus from simple transaction processing toward structured throughput optimization.

Theory

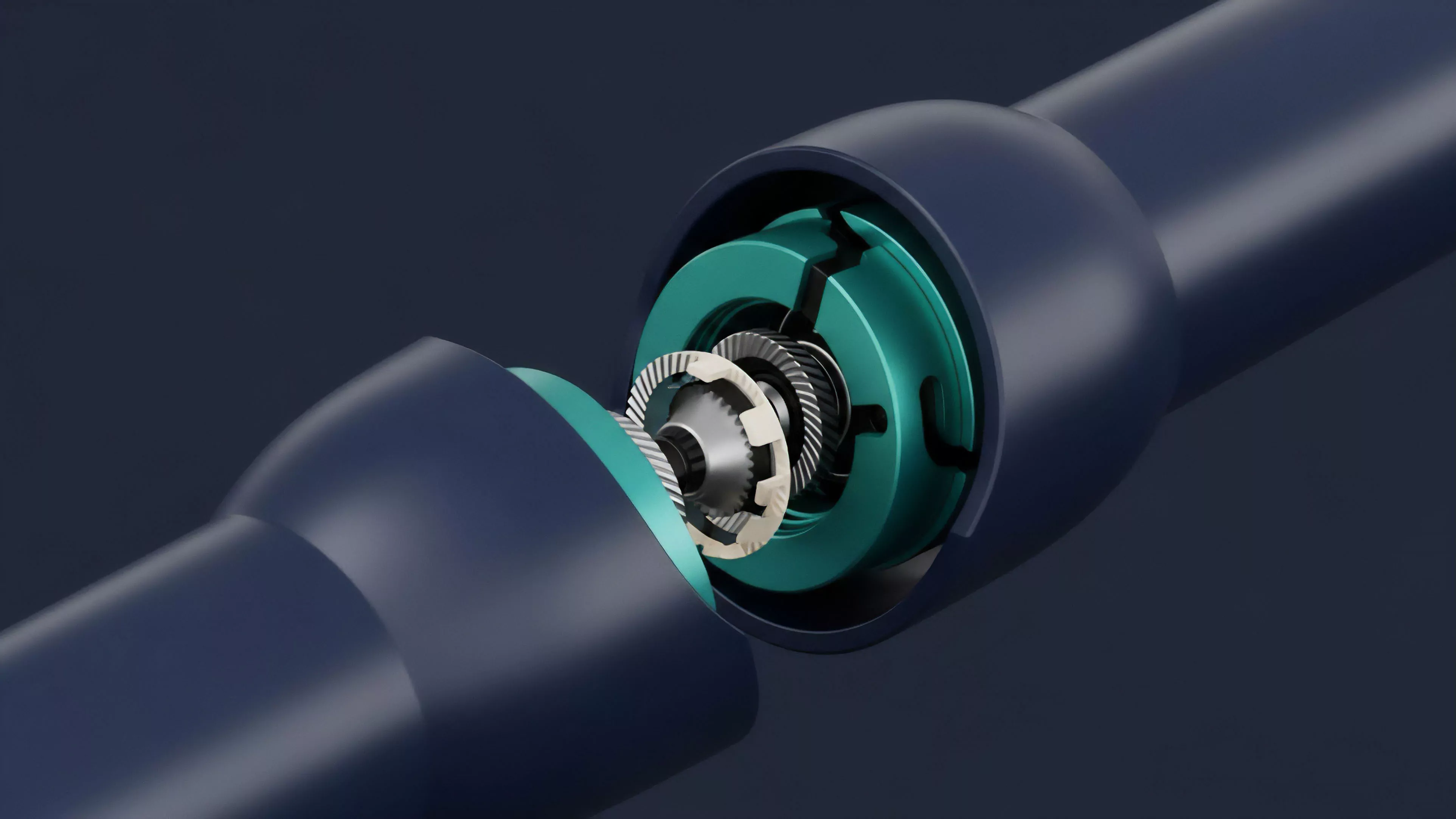

The architecture relies on balancing protocol physics with financial settlement requirements. Effective management requires precise modeling of how block space demand correlates with option delta-hedging activity.

Protocol Physics

Validators prioritize transactions based on the fees they offer, so execution cost fluctuates with network demand. Because volatility spikes trigger bursts of hedging transactions that compete for the same limited block space, transaction cost rises non-linearly with volatility.

| Variable | Impact on Capacity |
| --- | --- |
| Block Gas Limit | Sets the maximum computational throughput per block. |
| Fee Market Dynamics | Determines transaction inclusion probability under load. |
| Sequencer Throughput | Dictates the speed of order matching in rollups. |

The interaction between validator consensus speed and derivative settlement requirements determines the practical liquidity depth of any decentralized venue.
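The non-linear link between demand and execution cost can be illustrated with a small simulation. The sketch below assumes an Ethereum-style fee market in which the base fee moves by at most 12.5% per block depending on how far gas usage sits from a target (the EIP-1559 rule, simplified to floating point). The article does not name a specific fee mechanism, so the rule, the function names, and the 15,000,000 gas target are illustrative assumptions.

```python
def next_base_fee(base_fee: float, gas_used: int, gas_target: int,
                  max_change: float = 0.125) -> float:
    """Simplified EIP-1559-style update (assumed mechanism, float arithmetic).

    The fee rises when a block uses more gas than the target and falls
    when it uses less, by at most `max_change` per block.
    """
    delta = (gas_used - gas_target) / gas_target
    return base_fee * (1 + max_change * delta)


def simulate(base_fee: float, utilizations: list[float],
             gas_target: int = 15_000_000) -> list[float]:
    """Apply the update over a sequence of block utilizations.

    A utilization of 1.0 means the block hit the target exactly;
    2.0 means the block was completely full (gas limit = 2 x target).
    """
    fees = [base_fee]
    for u in utilizations:
        gas_used = int(u * gas_target)
        fees.append(next_base_fee(fees[-1], gas_used, gas_target))
    return fees


if __name__ == "__main__":
    # Ten consecutive full blocks, e.g. during a volatility spike.
    path = simulate(10.0, [2.0] * 10)
    print([round(f, 2) for f in path])
```

Under these assumptions the increase compounds: ten consecutive full blocks multiply the base fee by 1.125^10, roughly 3.25 times, which is why the cost of re-hedging can escalate much faster than the underlying demand.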

Quantitative Risk Modeling

Quantitative models incorporate network latency as a distinct variable in option pricing. If updating a position takes longer than one block time, the position remains exposed to price moves across additional blocks, so exposure risk grows and larger margin requirements are needed.
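One way to make the latency term concrete is to scale margin with the square root of the exposure window. The sketch below is a toy model, not a method taken from any specific protocol: it assumes price moves follow a driftless random walk with annualized volatility `annual_vol`, rounds the update time up to whole blocks, and uses a hypothetical confidence multiplier `z`. All function and parameter names are illustrative.

```python
import math

SECONDS_PER_YEAR = 365 * 24 * 3600


def exposure_window(update_time_s: float, block_time_s: float) -> float:
    """Seconds a position stays stale, rounded up to whole blocks.

    An update cannot land faster than the next block, so the window
    is at least one block time.
    """
    blocks = max(1, math.ceil(update_time_s / block_time_s))
    return blocks * block_time_s


def latency_margin(delta_notional: float, annual_vol: float,
                   update_time_s: float, block_time_s: float,
                   z: float = 2.33) -> float:
    """Margin buffer for price moves during the exposure window.

    Assumes volatility scales with sqrt(time); z = 2.33 is roughly the
    99% one-sided normal quantile (an illustrative choice).
    """
    window = exposure_window(update_time_s, block_time_s)
    return z * abs(delta_notional) * annual_vol * math.sqrt(window / SECONDS_PER_YEAR)


if __name__ == "__main__":
    fast = latency_margin(1_000_000, 0.80, update_time_s=5, block_time_s=12)
    slow = latency_margin(1_000_000, 0.80, update_time_s=45, block_time_s=12)
    print(f"1-block window: {fast:,.0f}  4-block window: {slow:,.0f}")
```

In this model, quadrupling the exposure window doubles the required buffer, so a venue whose updates slip from one block to four needs twice the latency margin for the same position.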

Approach

Current strategies rely on Layer 2 scaling solutions and off-chain order matching to relieve congestion on the base chain. These approaches decouple order discovery from final settlement, allowing high-frequency interaction without immediate reliance on mainnet capacity.

- Sequencer decentralization minimizes the risk of single-point failures in transaction ordering.

- Batch processing optimizes throughput by aggregating multiple derivative executions into single settlement transactions.

- Dynamic fee estimation algorithms adjust bid prices in real-time to ensure timely inclusion.
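The dynamic fee estimation item above can be sketched as a percentile rule over recently included fees. This is a minimal illustration under stated assumptions: `recent_fees` stands for priority fees observed in recent blocks (how they are collected is left out), `urgency` is a hypothetical 0 to 1 knob that moves the bid from the median toward the 95th percentile, and `max_fee` is a cost ceiling chosen by the trader. None of these names come from a real library.

```python
import math
from typing import Sequence


def estimate_bid(recent_fees: Sequence[float], urgency: float,
                 max_fee: float) -> float:
    """Pick a fee bid from recent inclusion data (nearest-rank percentile).

    urgency = 0.0 bids the median of recent fees; urgency = 1.0 bids the
    95th percentile. The result is capped at `max_fee` so a congested
    network cannot push the bid past the strategy's cost limit.
    """
    if not recent_fees:
        raise ValueError("need at least one observed fee")
    if not 0.0 <= urgency <= 1.0:
        raise ValueError("urgency must be between 0 and 1")

    ordered = sorted(recent_fees)
    percentile = 50 + 45 * urgency
    # Nearest-rank method: smallest value with at least `percentile`%
    # of observations at or below it.
    rank = max(1, math.ceil(percentile * len(ordered) / 100))
    return min(ordered[rank - 1], max_fee)


if __name__ == "__main__":
    observed = [2.0, 2.5, 3.0, 3.0, 4.0, 6.0, 9.0, 15.0, 22.0, 40.0]
    print(estimate_bid(observed, urgency=0.0, max_fee=30.0))  # patient order
    print(estimate_bid(observed, urgency=1.0, max_fee=30.0))  # urgent hedge, capped
```

A production estimator would also account for the base fee trend and pending-pool depth; the percentile rule is used here only because it is easy to reason about.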

This technical shift requires sophisticated software agents capable of monitoring network load and adjusting trading behavior accordingly. The objective is to maintain execution parity with centralized venues while operating within the constraints of decentralized consensus.

Evolution

The transition from basic transaction inclusion to advanced throughput orchestration marks a shift in market maturity. Early protocols treated capacity as an external constraint, whereas modern architectures internalize these limitations through custom fee models and dedicated application-specific chains.

Systemic stability requires aligning protocol throughput design with the mathematical requirements of complex derivative pricing and risk management.

Technological advancements now allow for dedicated block space for financial primitives, reducing competition with unrelated network activity. This evolution reflects a broader movement toward institutional-grade infrastructure where execution reliability is a fundamental design requirement rather than an afterthought.

Horizon

Future developments will likely center on predictive throughput allocation, where protocols anticipate volatility-driven demand and scale capacity proactively. Integration with hardware-accelerated consensus mechanisms will further reduce latency, enabling complex, cross-chain option strategies to function with minimal friction.

| Innovation | Anticipated Outcome |
| --- | --- |
| Predictive Scaling | Reduced transaction failure rates during volatility. |
| Hardware Acceleration | Microsecond-level latency for order execution. |
| Cross-Chain Interoperability | Unified liquidity across fragmented network environments. |

The ultimate goal remains the total elimination of network-induced execution risk, transforming decentralized markets into seamless engines for capital allocation.