Essence

Network Bandwidth functions as the fundamental throughput capacity for decentralized protocols, acting as the primary constraint on transaction finality, state propagation, and the execution velocity of automated market makers. Within crypto derivative systems, this capacity defines the upper bound of order flow density that a validator set can process without incurring significant latency or congestion-based slippage. When decentralized exchanges operate near their maximum throughput, the cost of securing a position escalates, creating a direct correlation between protocol utility and the scarcity of available data transmission channels.

Network bandwidth represents the physical throughput limit governing the speed and cost of decentralized financial settlement.

This resource sits beneath every trade, determining whether an arbitrage opportunity is captured or lost to high-frequency actors with superior infrastructure. Traders often treat protocol speed as a fixed constant and fail to account for the competitive nature of block space acquisition. In environments where demand exceeds available capacity, Network Bandwidth becomes a priced commodity, auctioned through gas mechanisms that shift the risk profile of every option strategy.

Origin

The genesis of this constraint resides in the foundational design of distributed consensus mechanisms, where security and decentralization were prioritized over high-frequency data throughput.

Early network architectures required every validator to process every transaction, creating an unavoidable bottleneck as adoption scaled. This design choice, while robust against censorship, introduced the reality of finite Network Bandwidth as a hard cap on global financial activity.

| System Era | Constraint Focus | Bandwidth Utilization |
| --- | --- | --- |
| Monolithic Chains | Security | High contention per transaction |
| Modular Architectures | Throughput | Distributed data availability |

Developers recognized that increasing block sizes to accommodate more data risked centralizing the network, as only entities with massive infrastructure could maintain participation. This inherent tension between security and performance birthed the current landscape of layer-two scaling and sharding. The industry transitioned from viewing bandwidth as a static environmental variable to treating it as a dynamic resource that can be optimized through sophisticated state compression and off-chain execution proofs.

Theory

The quantitative modeling of Network Bandwidth requires analyzing the relationship between transaction density and the probability of inclusion within a specific block timeframe.

As order flow increases, the mempool experiences queuing delays that grow nonlinearly as utilization approaches capacity, which complicates hedging the delta and gamma of short-dated options. A delay in execution, even one measured in milliseconds, shifts the underlying price at which a trade actually executes and therefore the instrument's effective moneyness, rendering standard Black-Scholes assumptions of continuous, frictionless trading incomplete in congested market states.

Execution latency stemming from bandwidth saturation creates an unavoidable drag on derivative pricing models.
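The way delay grows with load can be illustrated with a minimal sketch. It assumes the mempool behaves like a single-server M/M/1 queue, which is a textbook simplification and not a model taken from any specific protocol; the function name and the capacity figure are hypothetical.

```python
def mean_time_in_system(arrival_rate: float, service_rate: float) -> float:
    """Mean time a transaction spends waiting plus being processed in an M/M/1 queue.

    arrival_rate: transactions arriving per second (lambda)
    service_rate: transactions the network can include per second (mu)
    """
    if arrival_rate < 0 or service_rate <= 0:
        raise ValueError("arrival_rate must be >= 0 and service_rate must be > 0")
    if arrival_rate >= service_rate:
        # Demand meets or exceeds capacity: the queue grows without bound.
        return float("inf")
    return 1.0 / (service_rate - arrival_rate)


if __name__ == "__main__":
    capacity = 100.0  # hypothetical throughput in transactions per second
    for utilization in (0.5, 0.9, 0.99):
        delay = mean_time_in_system(utilization * capacity, capacity)
        print(f"utilization {utilization:.0%}: mean delay {delay * 1000:.0f} ms")
```

Under these assumptions the delay is modest at half load and rises sharply as utilization nears 100%, which is the regime where execution timing matters most for short-dated instruments.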

Game theory suggests that validators will prioritize transactions with higher economic incentives, effectively creating a tiered access structure for Network Bandwidth. This leads to the following systemic behaviors:

- Transaction Prioritization: Traders employ automated agents to outbid competitors for block space, turning bandwidth access into a secondary market for priority.

- Latency Arbitrage: Sophisticated participants exploit the physical distance between nodes to secure earlier access to the broadcast of state changes.

- Congestion Premiums: Option premiums adjust to reflect the expected cost of gas required to execute liquidations during periods of high volatility.
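The prioritization behavior described above can be sketched as a greedy block builder. This is an illustrative simplification, assuming validators rank pending transactions purely by fee per unit of gas; real block builders use more elaborate strategies. `PendingTx`, `build_block`, and all figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PendingTx:
    tx_id: str
    gas_used: int        # block space the transaction consumes
    fee_per_gas: float   # bid paid per unit of gas


def build_block(mempool: list[PendingTx], gas_limit: int) -> list[PendingTx]:
    """Greedily fill a block with the highest fee-per-gas bids that still fit."""
    included = []
    gas_left = gas_limit
    for tx in sorted(mempool, key=lambda t: t.fee_per_gas, reverse=True):
        if tx.gas_used <= gas_left:
            included.append(tx)
            gas_left -= tx.gas_used
    return included


if __name__ == "__main__":
    mempool = [
        PendingTx("liquidation", 300_000, 90.0),
        PendingTx("arbitrage", 200_000, 120.0),
        PendingTx("retail_swap", 150_000, 30.0),
        PendingTx("option_roll", 400_000, 45.0),
    ]
    block = build_block(mempool, gas_limit=600_000)
    print([tx.tx_id for tx in block])
```

With a 600,000 gas limit, only the two highest bidders fit; the lower bids wait for a later block, which is the tiered access the game-theoretic argument predicts.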

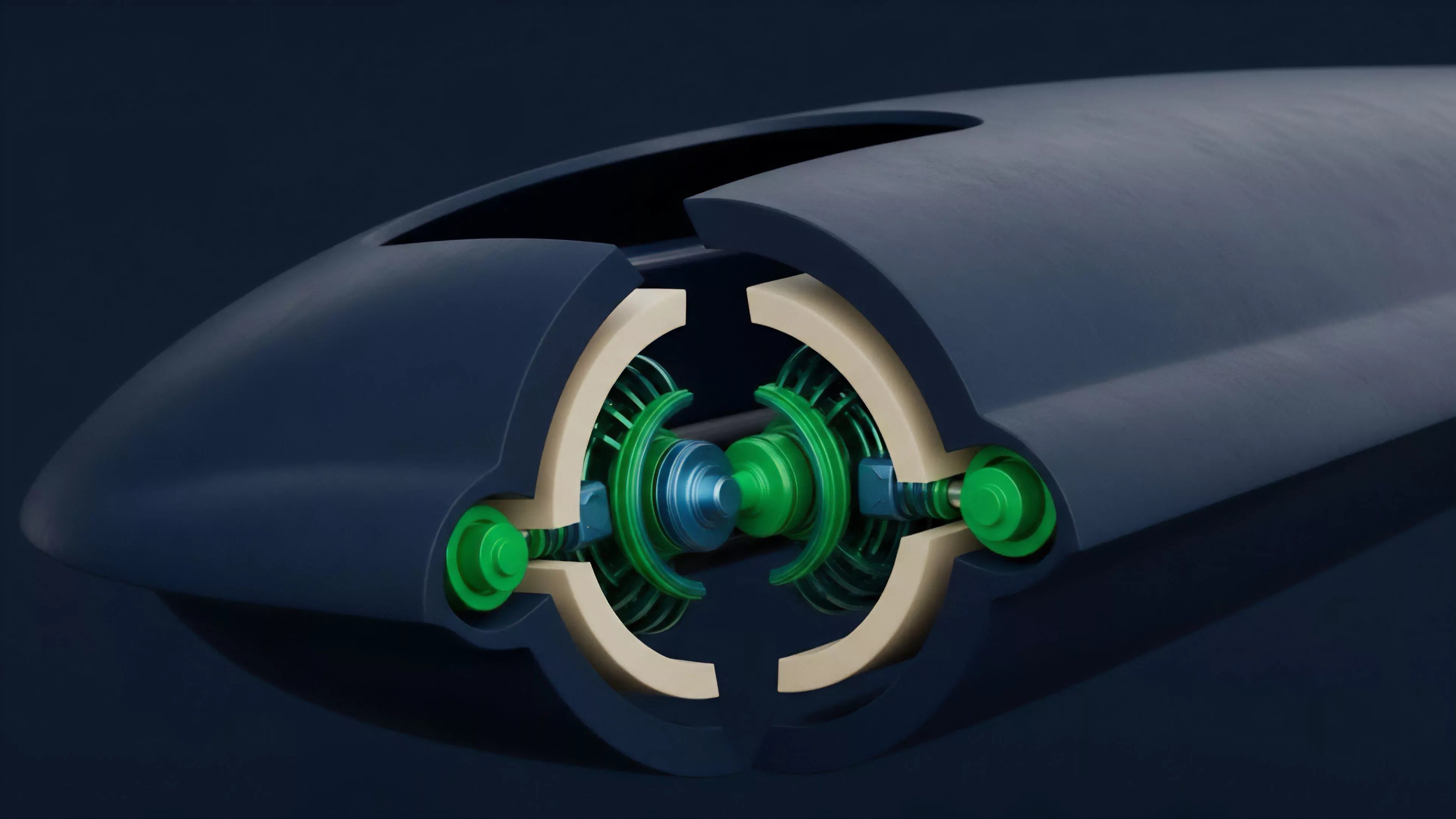

Consider the physics of light speed and node synchronization: the physical limit of information transfer across a global network ensures that no system can ever be perfectly synchronized. This creates a perpetual edge for those who minimize the distance between their infrastructure and the protocol's sequencer. It also forces a departure from the idealized, frictionless markets assumed in classical finance, replacing them with markets where throughput is a competitive advantage.
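A rough lower bound on this physical delay can be computed directly. The sketch below assumes signals travel through optical fiber with a refractive index of about 1.47 (a typical value, used here as an assumption) along a straight path; real routes are longer and add switching and processing time. The function name is hypothetical.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458   # speed of light in vacuum
FIBER_REFRACTIVE_INDEX = 1.47       # assumed typical value for optical fiber


def min_one_way_latency_ms(distance_km: float) -> float:
    """Lower bound on one-way propagation delay over fiber, in milliseconds."""
    if distance_km < 0:
        raise ValueError("distance must be non-negative")
    speed_in_fiber = SPEED_OF_LIGHT_KM_S / FIBER_REFRACTIVE_INDEX
    return distance_km / speed_in_fiber * 1000.0


if __name__ == "__main__":
    for label, km in (("same metro area", 50), ("cross-continent", 4_000), ("intercontinental", 10_000)):
        print(f"{label}: at least {min_one_way_latency_ms(km):.2f} ms one way")
```

Under these assumptions a node 10,000 km from a sequencer sees state changes tens of milliseconds later than a co-located one, regardless of software optimization.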

Approach

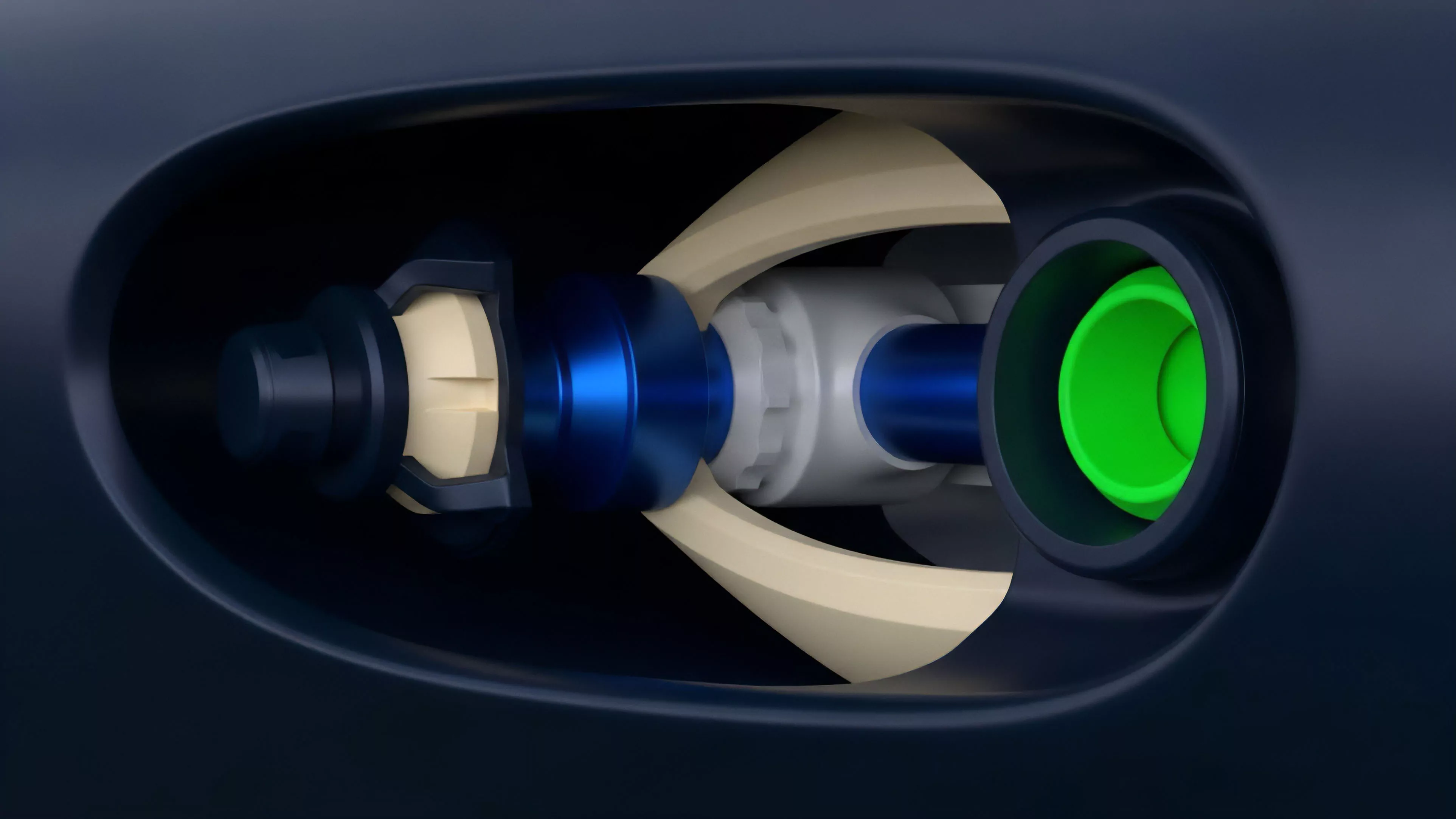

Current market strategies focus on maximizing capital efficiency by minimizing the footprint of trades on the base layer. Market makers now utilize batching protocols and specialized sequencing engines to aggregate order flow, effectively smoothing out the volatility of Network Bandwidth demand.

By moving execution off-chain and only settling the final state, these systems reduce the contention for block space, allowing for a more predictable cost structure.
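The cost effect of batching can be illustrated with simple amortization arithmetic. This sketch assumes each on-chain submission pays a fixed overhead plus a marginal cost per trade it carries; the 21,000 figure is Ethereum's base transaction gas cost, while the 5,000 marginal figure and the function name are hypothetical.

```python
def gas_per_trade(batch_size: int, fixed_overhead_gas: int, marginal_gas_per_trade: int) -> float:
    """Average gas attributed to each trade when batch_size trades share one submission."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    total_gas = fixed_overhead_gas + batch_size * marginal_gas_per_trade
    return total_gas / batch_size


if __name__ == "__main__":
    overhead = 21_000   # fixed cost per on-chain transaction
    marginal = 5_000    # hypothetical incremental cost per batched trade
    for n in (1, 10, 100):
        print(f"batch of {n}: {gas_per_trade(n, overhead, marginal):.0f} gas per trade")
```

As the batch grows, the per-trade cost approaches the marginal cost, since the fixed overhead is spread across more trades.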

Strategic management of execution footprint is the primary mechanism for maintaining profitability in high-demand periods.

Professional desks employ rigorous monitoring of mempool activity to adjust their exposure during expected congestion. The following table outlines the current methods for managing this resource:

| Strategy | Mechanism | Impact |
| --- | --- | --- |
| Batching | Aggregate trades | Reduced gas cost |
| Sequencing | Pre-order flow | Minimized slippage |
| Proof Aggregation | State compression | Higher throughput |

The reliance on these secondary layers introduces a shift in systemic risk, as the security of the derivative contract becomes dependent on the liveness and data availability of the scaling solution. Traders must now account for both the volatility of the underlying asset and the potential for bandwidth-related outages in the execution environment.

Evolution

The trajectory of this domain moves toward complete decoupling of execution and settlement, where Network Bandwidth is no longer a shared pool but a specialized resource for specific protocol domains. We are witnessing the rise of application-specific chains that optimize their internal consensus for the unique data requirements of derivatives, such as high-frequency updates for price feeds.

This shift signifies a transition from general-purpose blockchains to highly tuned financial machines. The historical struggle with congestion has forced a refinement in how state is managed, with current efforts focusing on parallel execution models that allow multiple transactions to utilize bandwidth concurrently without blocking the entire network. This evolution reduces the systemic fragility of early decentralized systems, moving toward an architecture where throughput scales linearly with the addition of computational resources.
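One way to picture parallel execution is a scheduler that groups transactions touching disjoint state. The following is a minimal sketch, not the scheduler of any particular chain: it assumes each transaction declares the state keys it touches up front and conservatively treats every touch as a write. `Tx`, `schedule_parallel_batches`, and the market names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tx:
    tx_id: str
    touched: frozenset   # state keys (accounts, markets) the transaction reads or writes


def schedule_parallel_batches(txs: list[Tx]) -> list[list[Tx]]:
    """Place each transaction in the first batch after every earlier transaction it conflicts with."""
    batches: list[list[Tx]] = []
    batch_keys: list[set] = []   # union of state keys touched by each batch
    for tx in txs:
        target = 0
        for i, keys in enumerate(batch_keys):
            if keys & tx.touched:
                target = i + 1   # must run after the last conflicting batch
        if target == len(batches):
            batches.append([])
            batch_keys.append(set())
        batches[target].append(tx)
        batch_keys[target].update(tx.touched)
    return batches


if __name__ == "__main__":
    txs = [
        Tx("a", frozenset({"ETH-PERP"})),
        Tx("b", frozenset({"BTC-PERP"})),
        Tx("c", frozenset({"ETH-PERP", "oracle"})),
        Tx("d", frozenset({"SOL-PERP"})),
    ]
    for i, batch in enumerate(schedule_parallel_batches(txs)):
        print(f"batch {i}: {[tx.tx_id for tx in batch]}")
```

Transactions within a batch can run concurrently, while batches run in sequence, so conflicting transactions keep their original relative order. The achievable speedup depends on how often transactions contend for the same state.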

Horizon

Future developments will focus on the integration of hardware-level optimizations and decentralized sequencing networks that treat Network Bandwidth as a fully liquid, programmable asset.

We expect to see the rise of bandwidth derivatives, allowing participants to hedge against the volatility of transaction costs themselves. As these markets mature, the ability to secure predictable throughput will become as critical as liquidity management for the stability of global decentralized finance.

- Bandwidth Futures: Financial instruments that provide protection against spikes in transaction fees during high volatility events.

- Adaptive Throughput: Protocol designs that automatically reallocate bandwidth based on real-time demand and market urgency.

- Hardware Acceleration: Integration of specialized zero-knowledge hardware to minimize the data footprint of complex derivative settlements.
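To make the hedging idea behind bandwidth futures concrete, here is a minimal payoff sketch. It assumes a hypothetical cash-settled contract on a fee index, with the trader's realized fee equal to the settlement index (no basis risk); no such standardized instrument is implied to exist, and all names and figures are illustrative.

```python
def futures_payoff(locked_fee: float, settled_fee: float, gas_units: float) -> float:
    """Payoff to the long side of a hypothetical cash-settled fee future."""
    return (settled_fee - locked_fee) * gas_units


def hedged_cost(locked_fee: float, settled_fee: float, gas_units: float) -> float:
    """Net execution cost after paying the spot fee and collecting the futures payoff."""
    spot_cost = settled_fee * gas_units
    return spot_cost - futures_payoff(locked_fee, settled_fee, gas_units)


if __name__ == "__main__":
    locked = 40.0         # fee per gas unit locked in by the future (hypothetical)
    gas = 1_000_000.0     # gas the desk expects to need for liquidations
    for settled in (20.0, 40.0, 250.0):
        net = hedged_cost(locked, settled, gas)
        print(f"settled fee {settled}: net cost {net:,.0f}")
```

Under these assumptions the net cost equals the locked fee times the gas used, whatever the settled fee turns out to be: the long futures position gains during fee spikes and gives back the savings when fees fall.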

The ultimate goal remains the creation of a system where the physical limitations of the network are abstracted away, providing a seamless experience for participants while maintaining the integrity of the underlying ledger. The success of this transition determines the viability of decentralized markets as a replacement for traditional financial infrastructure.