Essence

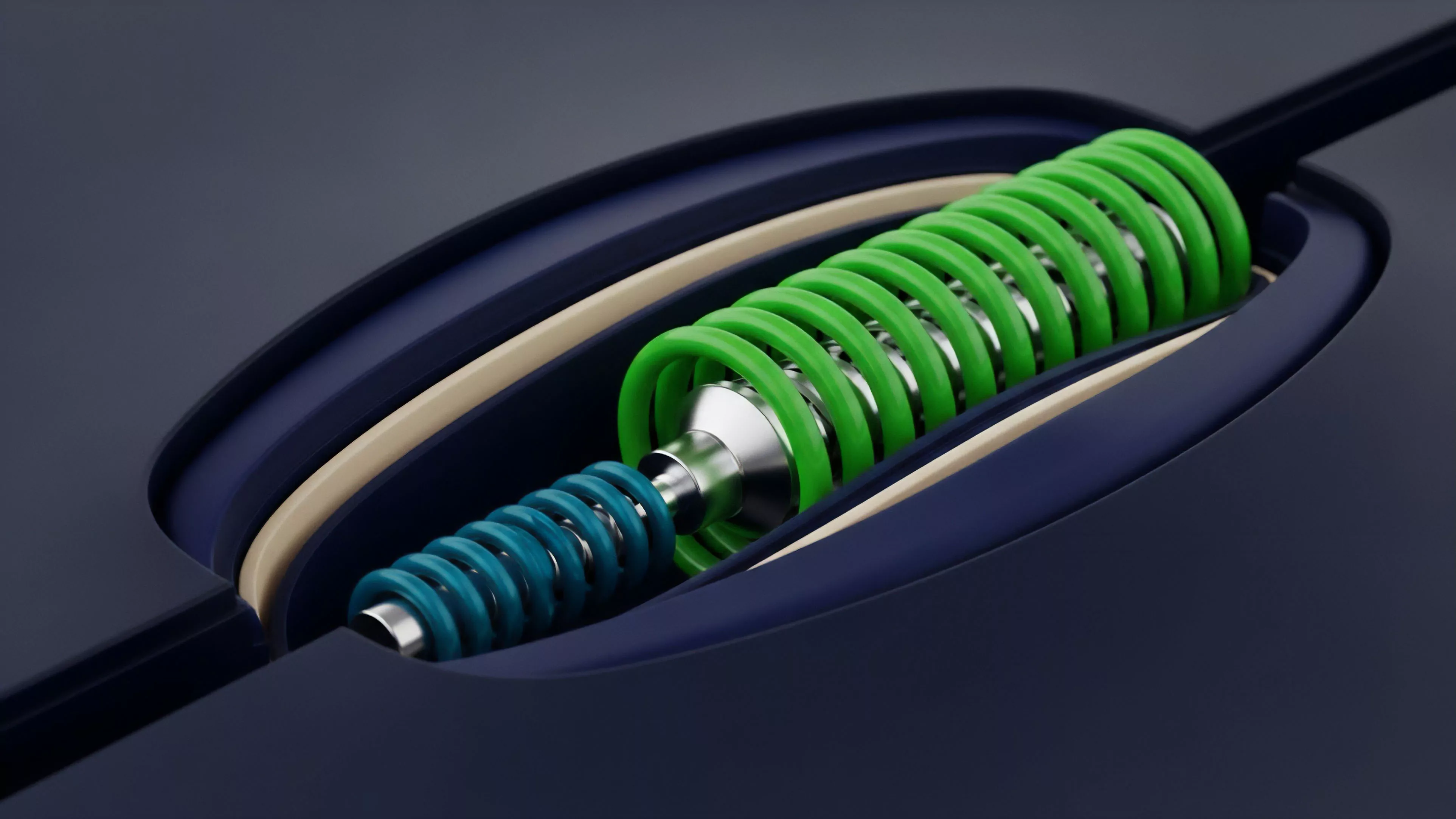

Mathematical Modeling in crypto derivatives serves as the foundational architecture for risk quantification, price discovery, and liquidity management. It translates stochastic market behaviors into computable structures, allowing participants to value complex instruments like options and perpetual futures. This modeling framework creates the bridge between abstract volatility and actionable financial exposure.

Mathematical modeling transforms volatile market inputs into structured risk metrics for pricing and hedging decentralized derivative products.

These models function as the operational logic for smart contracts, determining liquidation thresholds, margin requirements, and funding rate mechanisms. Without precise quantitative representations, decentralized exchanges face catastrophic insolvency risks from sudden price dislocations. The efficacy of these systems rests on their ability to account for non-linear payoffs and rapid regime shifts inherent in digital asset markets.
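The liquidation logic described above can be sketched as a simple margin check. This is a minimal illustration, not any specific protocol's engine: the `Position` type, the function names, and the 5% maintenance margin are all assumptions chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class Position:
    size: float         # signed quantity: positive = long, negative = short
    entry_price: float  # average entry price
    collateral: float   # margin posted, in quote currency


def unrealized_pnl(pos: Position, mark_price: float) -> float:
    """Profit or loss of the position at the current mark price."""
    return pos.size * (mark_price - pos.entry_price)


def margin_ratio(pos: Position, mark_price: float) -> float:
    """Equity (collateral plus PnL) divided by current notional exposure."""
    equity = pos.collateral + unrealized_pnl(pos, mark_price)
    notional = abs(pos.size) * mark_price
    return equity / notional


def is_liquidatable(pos: Position, mark_price: float,
                    maintenance_margin: float = 0.05) -> bool:
    """True when the margin ratio falls below the (assumed) maintenance level."""
    return margin_ratio(pos, mark_price) < maintenance_margin


def liquidation_price(pos: Position, maintenance_margin: float = 0.05) -> float:
    """Mark price at which equity equals maintenance_margin * notional.

    Solves collateral + size * (p - entry) = maintenance_margin * |size| * p.
    """
    s = pos.size
    return (s * pos.entry_price - pos.collateral) / (s - maintenance_margin * abs(s))


if __name__ == "__main__":
    long_10x = Position(size=1.0, entry_price=100.0, collateral=10.0)
    print(round(liquidation_price(long_10x), 4))
    print(is_liquidatable(long_10x, mark_price=94.0))
```

A production margin engine would also have to handle fees, funding payments, and oracle staleness, none of which are modeled here.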

Origin

The genesis of Mathematical Modeling for digital assets traces back to the adaptation of classical quantitative finance frameworks to the unique constraints of blockchain technology.

Early pioneers utilized the Black-Scholes-Merton paradigm, adjusting for high-frequency volatility and the absence of traditional clearing houses. This transition necessitated a shift from centralized, trusted counterparty clearing to decentralized, algorithmic margin engines.

- Black-Scholes-Merton provided the initial framework for pricing European-style options by assuming geometric Brownian motion of underlying assets.

- Binomial Option Pricing introduced discrete-time modeling to account for early exercise features common in American-style derivative structures.

- Automated Market Makers replaced traditional limit order books in early decentralized protocols, requiring new models for constant product liquidity.
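The three frameworks listed above can each be written in a few lines. The sketch below uses the standard closed-form Black-Scholes formula, a Cox-Ross-Rubinstein binomial tree (one common binomial construction), and the constant product rule x * y = k. Function names, default parameters, and the 0.3% swap fee are illustrative assumptions.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_price(spot, strike, rate, vol, t, kind="call"):
    """Black-Scholes price of a European option under geometric Brownian motion."""
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * t) / (vol * math.sqrt(t))
    d2 = d1 - vol * math.sqrt(t)
    if kind == "call":
        return spot * norm_cdf(d1) - strike * math.exp(-rate * t) * norm_cdf(d2)
    return strike * math.exp(-rate * t) * norm_cdf(-d2) - spot * norm_cdf(-d1)


def crr_price(spot, strike, rate, vol, t, steps=200, kind="put", american=True):
    """Cox-Ross-Rubinstein binomial price, with optional early exercise."""
    dt = t / steps
    u = math.exp(vol * math.sqrt(dt))        # up factor
    d = 1.0 / u                              # down factor
    p = (math.exp(rate * dt) - d) / (u - d)  # risk-neutral up probability
    disc = math.exp(-rate * dt)
    sign = 1.0 if kind == "call" else -1.0
    # Payoffs at expiry, indexed by the number of up moves j.
    values = [max(sign * (spot * u ** j * d ** (steps - j) - strike), 0.0)
              for j in range(steps + 1)]
    # Roll back through the tree, checking early exercise at each node.
    for i in range(steps - 1, -1, -1):
        for j in range(i + 1):
            value = disc * (p * values[j + 1] + (1.0 - p) * values[j])
            if american:
                exercise = max(sign * (spot * u ** j * d ** (i - j) - strike), 0.0)
                value = max(value, exercise)
            values[j] = value
    return values[0]


def amm_swap_out(reserve_in, reserve_out, amount_in, fee=0.003):
    """Output amount of a constant product pool (x * y = k); the fee is an assumption."""
    effective_in = amount_in * (1.0 - fee)
    return reserve_out * effective_in / (reserve_in + effective_in)


if __name__ == "__main__":
    print(bs_price(100, 100, 0.05, 0.2, 1.0, "put"))
    print(crr_price(100, 100, 0.05, 0.2, 1.0, american=True))
    print(amm_swap_out(1000.0, 1000.0, 100.0))
```

With early exercise enabled, the binomial put is worth more than its European counterpart, which is the feature the closed-form formula cannot capture.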

These adaptations occurred under intense adversarial pressure, as early protocols struggled with oracle latency and flash loan attacks. The evolution required moving beyond Gaussian assumptions to incorporate fat-tailed distributions and reflexive market dynamics, because digital asset returns show large moves far more often than a normal distribution predicts.

Theory

The theoretical rigor of Mathematical Modeling centers on the precise calibration of Greeks and volatility surfaces. Delta, Gamma, Theta, and Vega provide the sensitivity analysis required to maintain market-neutral positions.

In a decentralized context, these variables must be calculated on-chain or via decentralized oracle networks to maintain transparency and trustless execution.

| Metric | Financial Significance |
| --- | --- |
| Delta | Sensitivity to underlying asset price change |
| Gamma | Rate of change in Delta relative to price |
| Vega | Sensitivity to implied volatility shifts |
| Theta | Rate of time decay for option contracts |
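For a European call under Black-Scholes assumptions, the four metrics in the table have standard closed forms. The sketch below computes them with the Python standard library. The function names and the units (Vega per 1.00 change in volatility, Theta per year) are choices made for this example. An on-chain implementation would need fixed-point arithmetic, which is not shown.

```python
import math


def norm_pdf(x: float) -> float:
    """Standard normal density."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def _d1_d2(spot, strike, rate, vol, t):
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * t) / (vol * math.sqrt(t))
    return d1, d1 - vol * math.sqrt(t)


def call_price(spot, strike, rate, vol, t):
    """Black-Scholes price of a European call."""
    d1, d2 = _d1_d2(spot, strike, rate, vol, t)
    return spot * norm_cdf(d1) - strike * math.exp(-rate * t) * norm_cdf(d2)


def call_greeks(spot, strike, rate, vol, t):
    """Closed-form Delta, Gamma, Vega, and Theta of a European call."""
    d1, d2 = _d1_d2(spot, strike, rate, vol, t)
    sqrt_t = math.sqrt(t)
    return {
        "delta": norm_cdf(d1),
        "gamma": norm_pdf(d1) / (spot * vol * sqrt_t),
        "vega": spot * norm_pdf(d1) * sqrt_t,  # per 1.00 change in volatility
        "theta": (-spot * norm_pdf(d1) * vol / (2.0 * sqrt_t)
                  - rate * strike * math.exp(-rate * t) * norm_cdf(d2)),  # per year
    }


if __name__ == "__main__":
    for name, value in call_greeks(100.0, 100.0, 0.05, 0.2, 1.0).items():
        print(f"{name}: {value:.4f}")
```

A quick sanity check is to compare each Greek against a finite difference of `call_price`, for example bumping `spot` by a small amount to approximate Delta.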

The structural integrity of these models depends on managing Systems Risk and Contagion. If a margin engine utilizes a flawed volatility model, the resulting liquidation cascade can drain liquidity pools, creating a feedback loop of price slippage and protocol insolvency. Effective modeling incorporates rigorous stress testing against extreme liquidity crunches and high-correlation events.

Greeks quantify the multi-dimensional sensitivity of derivative positions to market variables, enabling precise risk management in decentralized protocols.

Consider the intersection of game theory and quantitative finance. While a model may be mathematically sound, it must also remain incentive-compatible within an adversarial environment where participants exploit any mispricing or latency in the model’s update frequency. This creates a permanent tension between model accuracy and computational cost: updating parameters more often narrows the window for exploitation but makes every on-chain recalculation more expensive.

Approach

Modern practitioners utilize Stochastic Calculus and Monte Carlo Simulations to refine pricing models for exotic crypto derivatives.
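As a rough illustration of the Monte Carlo approach, the sketch below prices an arithmetic-average Asian call, a payoff with no simple closed form. It assumes geometric Brownian motion with constant volatility, which is a simplification: it ignores the fat tails and skew discussed elsewhere in this article. All parameter values and the function name are hypothetical.

```python
import math
import random


def mc_asian_call(spot, strike, rate, vol, t, steps=50, paths=20000, seed=7):
    """Monte Carlo price and standard error of an arithmetic-average Asian call.

    Simulates risk-neutral GBM paths and averages the price over `steps`
    equally spaced monitoring dates (the initial spot is excluded).
    """
    rng = random.Random(seed)  # seeded for reproducibility
    dt = t / steps
    drift = (rate - 0.5 * vol ** 2) * dt
    diffusion = vol * math.sqrt(dt)
    total = 0.0
    total_sq = 0.0
    for _ in range(paths):
        log_s = math.log(spot)
        running_sum = 0.0
        for _ in range(steps):
            log_s += drift + diffusion * rng.gauss(0.0, 1.0)
            running_sum += math.exp(log_s)
        payoff = max(running_sum / steps - strike, 0.0)
        total += payoff
        total_sq += payoff * payoff
    discount = math.exp(-rate * t)
    mean = total / paths
    variance = max(total_sq / paths - mean * mean, 0.0)
    std_err = discount * math.sqrt(variance / paths)
    return discount * mean, std_err


if __name__ == "__main__":
    price, std_err = mc_asian_call(100.0, 100.0, 0.05, 0.2, 1.0)
    print(f"price ~ {price:.3f} +/- {std_err:.3f}")
```

The standard error shrinks with the square root of the path count, so halving it requires four times as many paths. That cost is one reason such simulations are usually run off-chain.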

The approach involves calibrating volatility surfaces that reflect the skew frequently observed in crypto markets, where downside protection tends to be priced at a premium.

- Volatility Skew Calibration adjusts models to reflect the higher demand for out-of-the-money puts.

- Dynamic Hedging Strategies utilize algorithmic agents to rebalance portfolios based on real-time delta and gamma exposure.

- Margin Engine Design implements tiered liquidation logic based on historical volatility and collateral quality.
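The tiered margin logic in the last item can be sketched as a lookup driven by realized volatility, with a haircut applied for collateral quality. The tier boundaries, margin rates, and haircut treatment below are hypothetical values chosen for illustration, not parameters from any live protocol.

```python
import math


def realized_vol(prices, periods_per_year=365):
    """Annualized volatility from the sample standard deviation of log returns."""
    returns = [math.log(b / a) for a, b in zip(prices, prices[1:])]
    mean = sum(returns) / len(returns)
    variance = sum((r - mean) ** 2 for r in returns) / (len(returns) - 1)
    return math.sqrt(variance * periods_per_year)


# (upper bound on annualized volatility, maintenance margin rate); assumed tiers
MARGIN_TIERS = [
    (0.50, 0.03),
    (1.00, 0.05),
    (float("inf"), 0.10),
]


def maintenance_margin(vol: float) -> float:
    """Return the margin rate of the first tier whose bound covers `vol`."""
    for upper_bound, rate in MARGIN_TIERS:
        if vol <= upper_bound:
            return rate
    raise ValueError("volatility must be a real number")


def required_collateral(notional: float, vol: float, haircut: float) -> float:
    """Collateral needed once its value is discounted by `haircut` (0 to 1)."""
    return notional * maintenance_margin(vol) / (1.0 - haircut)


if __name__ == "__main__":
    daily_closes = [100.0, 104.0, 99.0, 103.0, 97.0, 101.0]
    vol = realized_vol(daily_closes)
    print(f"realized vol: {vol:.2f}")
    print(f"required collateral: {required_collateral(10_000.0, vol, haircut=0.2):.2f}")
```

Stepped tiers keep the rule easy to audit. A continuous function of volatility would avoid sudden jumps in requirements at tier boundaries, at the cost of more complex parameter governance.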

These models now incorporate Macro-Crypto Correlation, acknowledging that digital asset volatility often spikes in alignment with broader liquidity cycles and interest rate shifts. Quantitative teams focus on building models that survive regime shifts, rather than merely optimizing for stable, low-volatility environments.

Evolution

The trajectory of Mathematical Modeling has moved from static, centralized pricing formulas to dynamic, protocol-integrated risk engines. Early attempts to mirror traditional finance (TradFi) models failed to account for the unique 24/7 liquidity and structural leverage prevalent in decentralized markets.

The industry has since pivoted toward models that prioritize Smart Contract Security and modular risk parameters.

The evolution of derivative modeling reflects a shift from rigid TradFi adaptations toward adaptive, on-chain risk engines capable of surviving extreme market stress.

| Era | Modeling Focus | Systemic Outcome |
| --- | --- | --- |
| Foundational | Standard Black-Scholes | High liquidation vulnerability |
| Adaptive | Dynamic Volatility Skew | Improved capital efficiency |
| Integrated | Protocol-native Risk Engines | Enhanced systemic resilience |

The current state prioritizes Tokenomics and value accrual, where the modeling of derivative liquidity directly influences the governance and economic sustainability of the underlying protocol. This transition acknowledges that the model is not merely a tool for pricing but a core component of the protocol’s long-term viability and defense against adversarial behavior.

Horizon

The future of Mathematical Modeling lies in the integration of Machine Learning for predictive volatility modeling and the implementation of Zero-Knowledge Proofs for private, verifiable derivative settlement. As decentralized markets mature, models will increasingly account for cross-chain liquidity fragmentation and the complex interplay between decentralized identity and margin access. The shift toward autonomous, AI-driven risk management will likely replace static parameters with real-time, adaptive thresholds. This will reduce the reliance on manual governance interventions, creating a more robust and responsive financial infrastructure. The ultimate objective is the creation of a self-correcting derivative system that maintains stability through algorithmic rigor rather than discretionary human intervention.